OWL 2 adds several new features to OWL 1, including:

1) A cleaner language design, with an axiom-centered structural specification and a functional-style syntax.

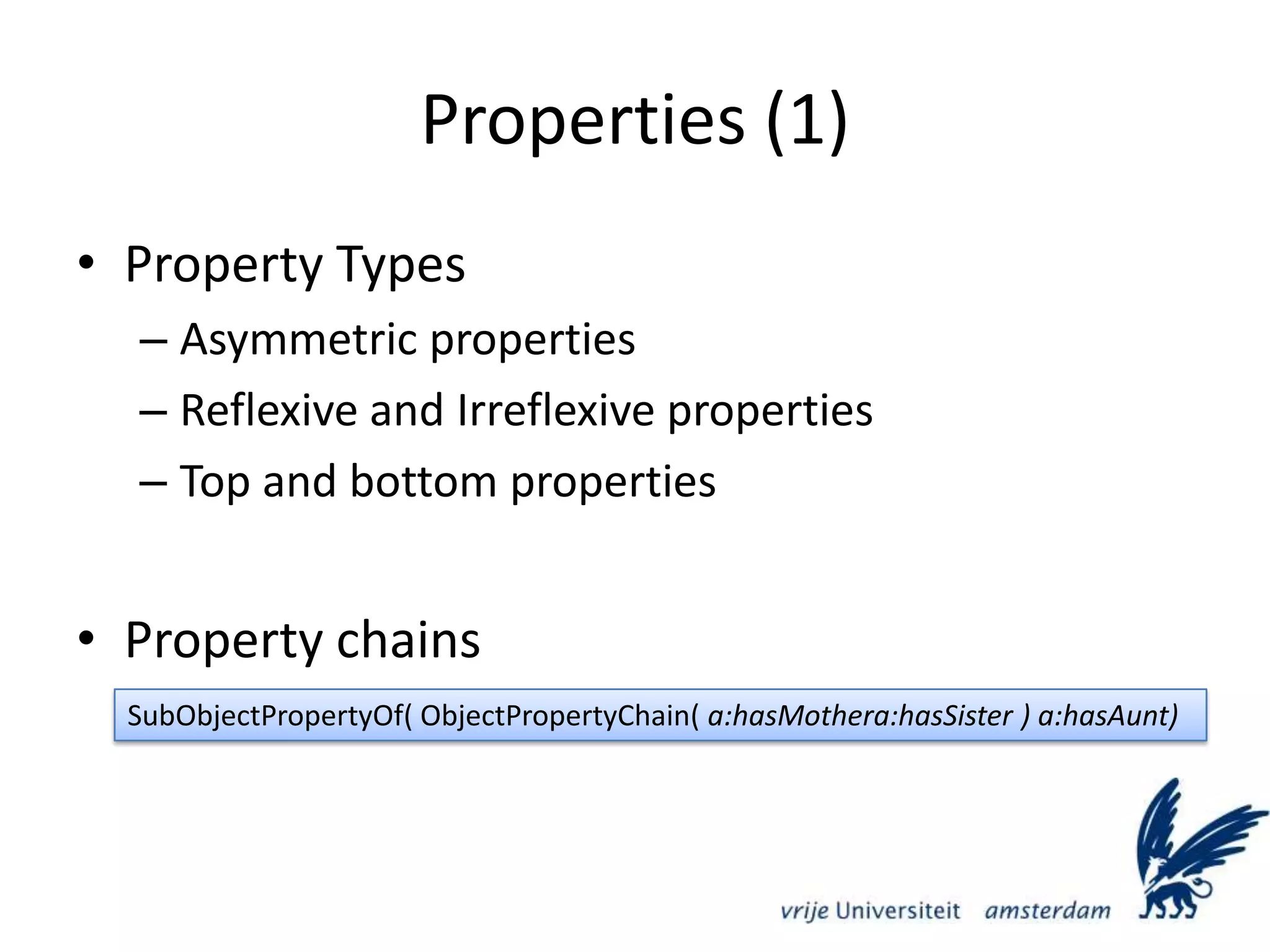

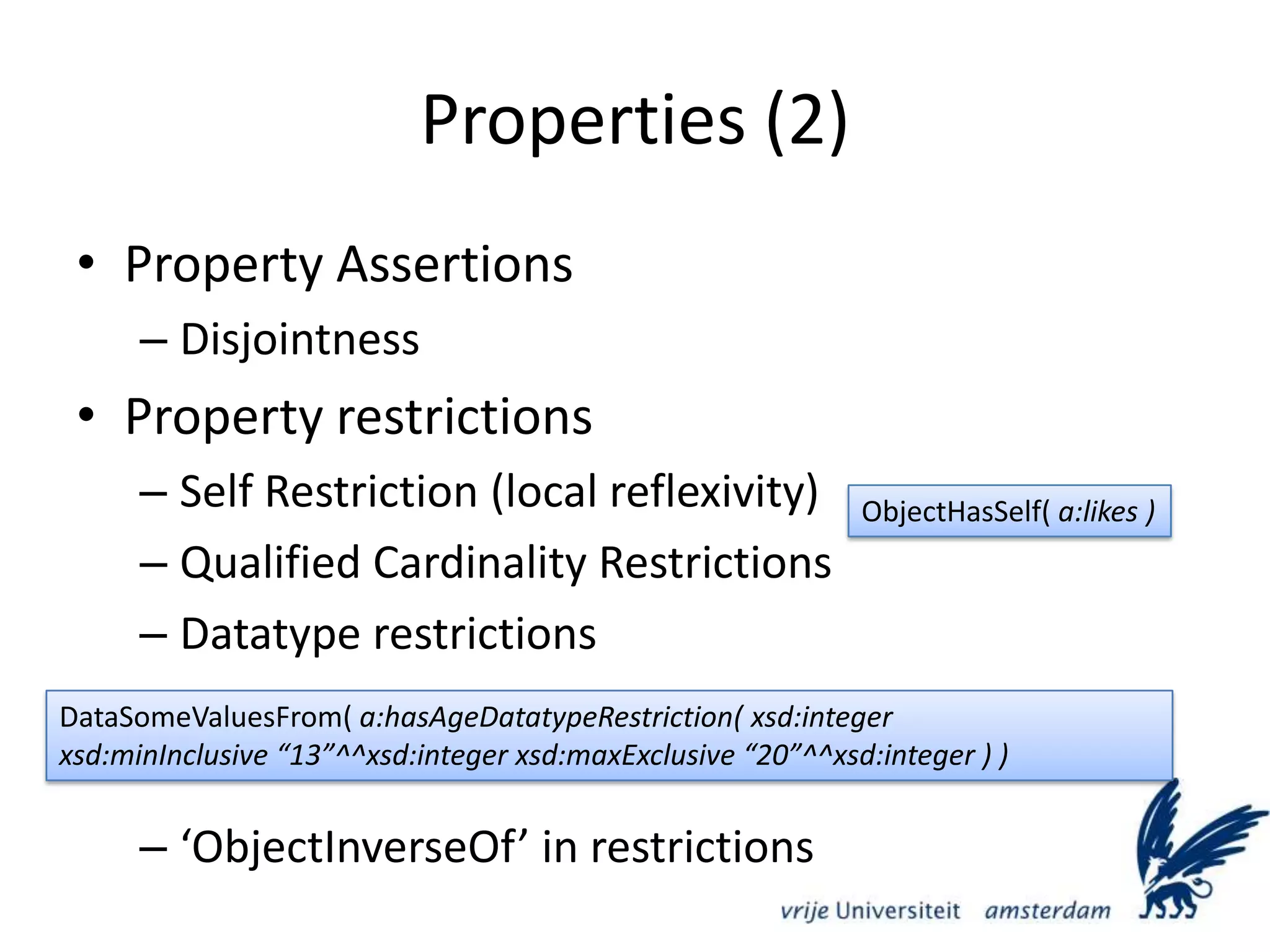

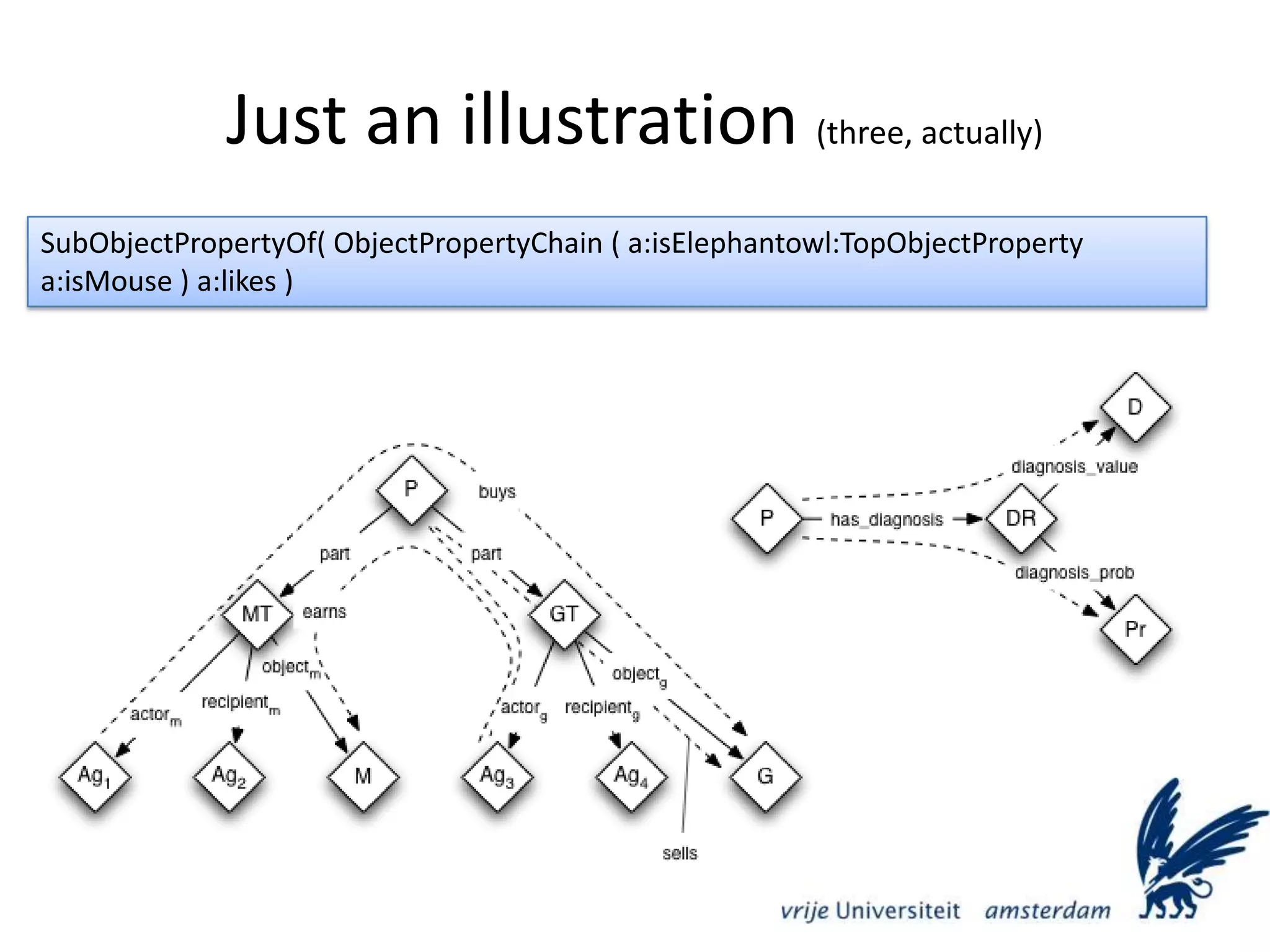

2) Increased expressiveness through new constructs such as property chains, qualified cardinality restrictions, and datatype restrictions on properties.

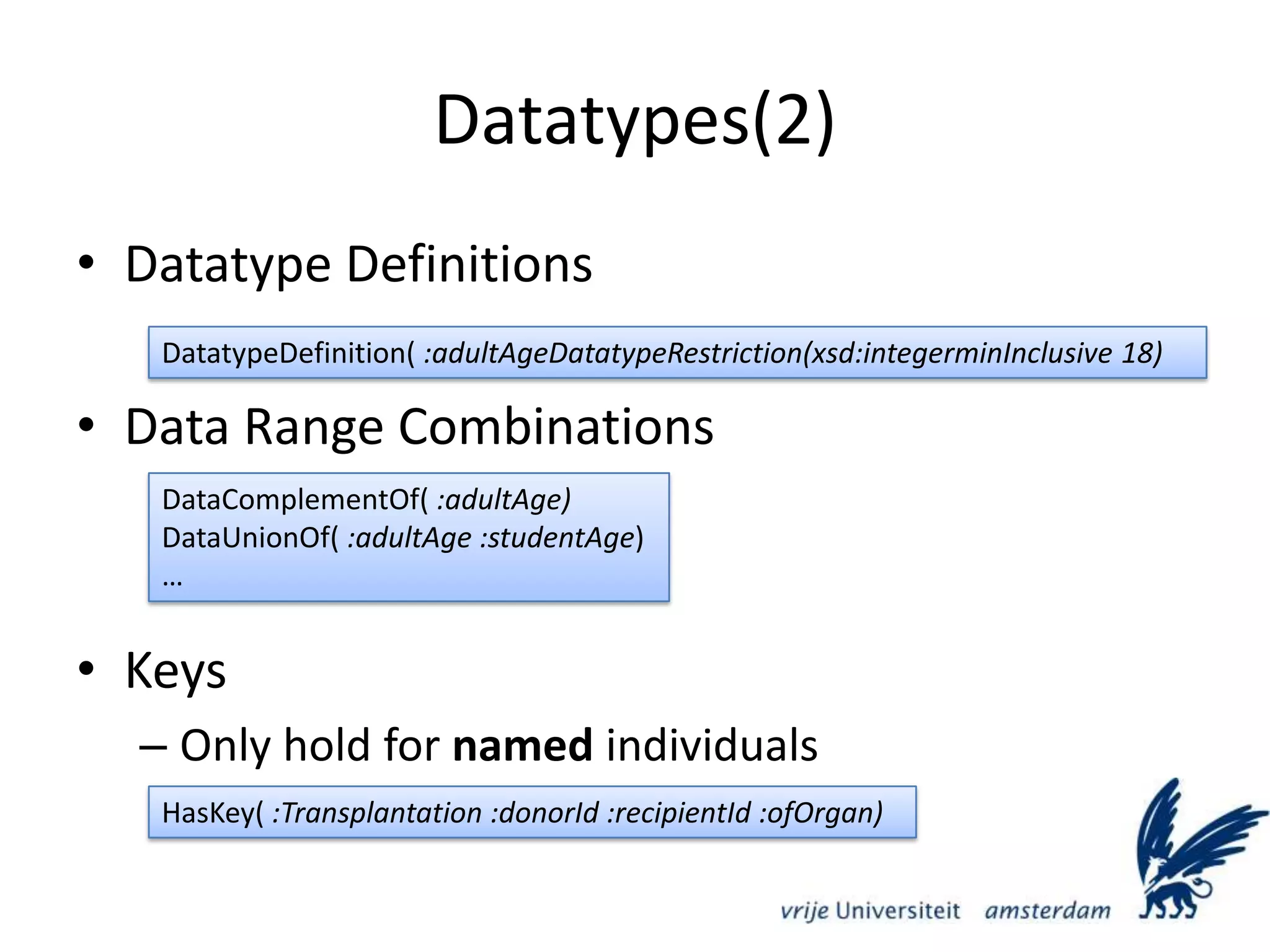

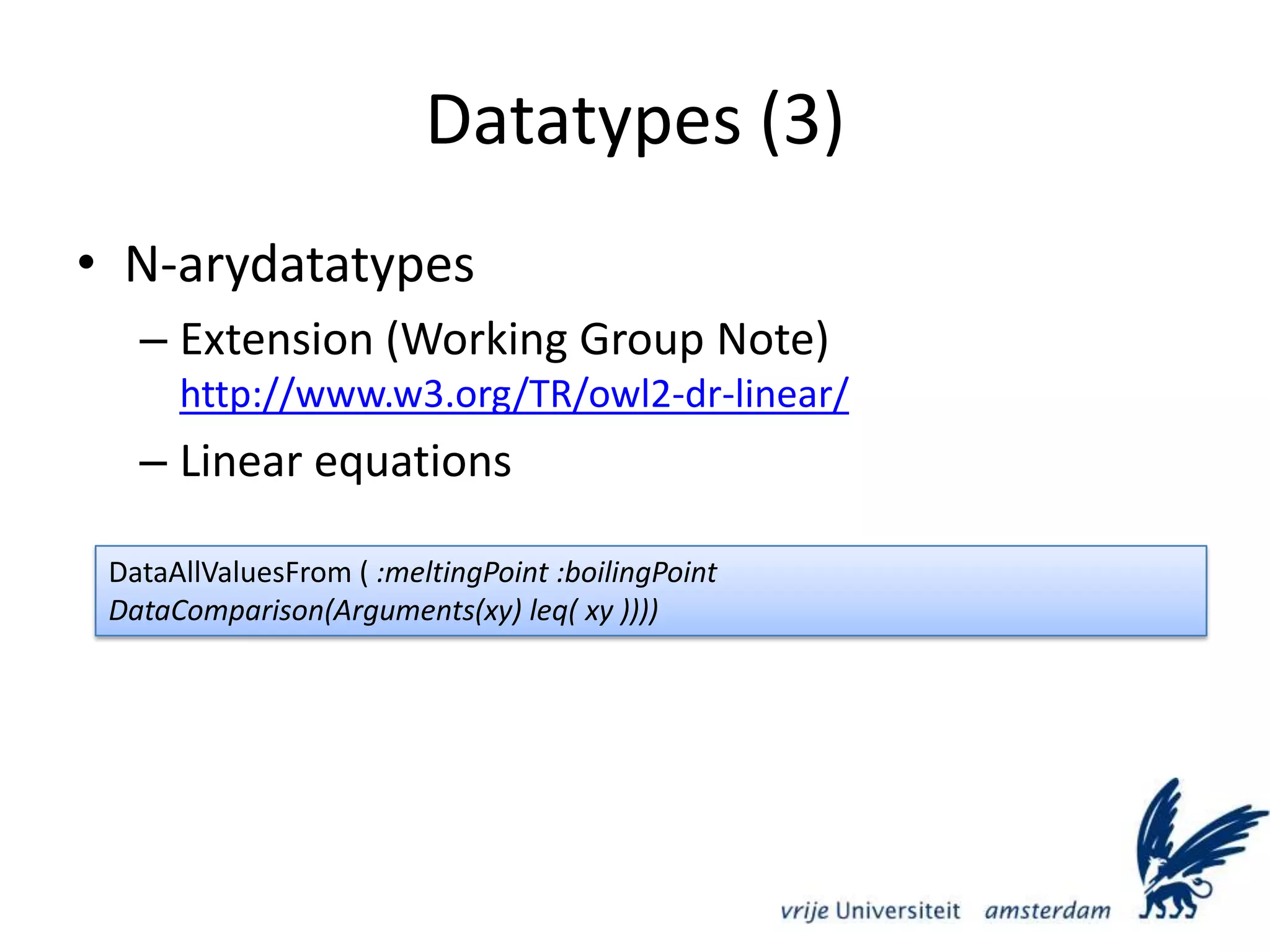
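As a rough illustration of what a property chain axiom such as `SubObjectPropertyOf( ObjectPropertyChain( :hasParent :hasBrother ) :hasUncle )` entails, the Python sketch below forward-chains chain axioms over an explicit set of triples until no new facts appear. The function name `apply_property_chains`, the tuple encoding, and the example properties are hypothetical. A real OWL 2 reasoner handles chains together with all other axioms (and OWL 2 DL imposes regularity restrictions on chains), none of which this toy attempts.

```python
from typing import Iterable

Triple = tuple[str, str, str]
# ([P1, ..., Pn], P) stands for SubObjectPropertyOf(ObjectPropertyChain(P1 ... Pn) P).
# A one-element list behaves like a plain SubObjectPropertyOf(P1 P).
Chain = tuple[list[str], str]


def apply_property_chains(triples: Iterable[Triple], chains: list[Chain]) -> set[Triple]:
    """Return the input triples plus everything the chain axioms entail, to a fixpoint."""
    facts = set(triples)
    changed = True
    while changed:
        changed = False
        for chain, super_prop in chains:
            # Pairs (x, y) linked by the first property of the chain.
            pairs = {(s, o) for (s, p, o) in facts if p == chain[0]}
            # Follow each remaining property in order: x ... y --prop--> z.
            for prop in chain[1:]:
                pairs = {(x, o) for (x, y) in pairs
                         for (s, p, o) in facts if p == prop and s == y}
            # Every end-to-end path implies a super_prop link from start to end.
            for x, z in pairs:
                if (x, super_prop, z) not in facts:
                    facts.add((x, super_prop, z))
                    changed = True
    return facts


# Hypothetical data: hasParent followed by hasBrother implies hasUncle.
family = {("alice", "hasParent", "bob"), ("bob", "hasBrother", "carl")}
uncles = apply_property_chains(family, [(["hasParent", "hasBrother"], "hasUncle")])
print(("alice", "hasUncle", "carl") in uncles)  # True

# Transitivity is a chain of a property with itself.
lineage = {("a", "hasParent", "b"), ("b", "hasParent", "c")}
ancestors = apply_property_chains(lineage, [
    (["hasParent"], "hasAncestor"),
    (["hasAncestor", "hasAncestor"], "hasAncestor"),
])
print(sorted(t for t in ancestors if t[1] == "hasAncestor"))
# [('a', 'hasAncestor', 'b'), ('a', 'hasAncestor', 'c'), ('b', 'hasAncestor', 'c')]
```

The loop repeats until a full pass adds nothing, so chains whose conclusions feed other chains (including the recursive transitivity case) are closed off; in OWL 2, `TransitiveObjectProperty( :P )` is likewise equivalent to a chain of `:P` with itself.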

3) Enhanced datatype support, including new datatypes, datatype definitions, and data range combinations.

4) Profiles such as OWL 2 EL, QL, and RL, which offer different trade-offs between expressiveness and reasoning complexity.

![Datatypes (1): extended XML Schema compatibility. New datatypes not in XML Schema: owl:real, owl:rational. Datatype definitions using the facets xsd:minInclusive, xsd:maxInclusive, xsd:minExclusive, xsd:maxExclusive, xsd:pattern (regular expressions), and xsd:length. rdf:PlainLiteral (together with the RIF WG): all RDF plain literals; not to be used in syntaxes that already deal with RDF plain literals. Example: DatatypeDefinition( a:SSN DatatypeRestriction( xsd:string xsd:pattern "[0-9]{3}-[0-9]{2}-[0-9]{4}" ) )](https://image.slidesharecdn.com/vu-semantic-web-meeting-20091123-091216072032-phpapp01/75/Vu-Semantic-Web-Meeting-20091123-17-2048.jpg)
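The `a:SSN` definition on the Datatypes slide restricts `xsd:string` with an `xsd:pattern` facet. The hedged Python sketch below models each data range as a simple membership test, loosely mirroring `DatatypeRestriction` and `DataUnionOf`; it is not how any particular OWL tool implements datatypes. The helper names (`pattern_restriction`, `inclusive_int_range`, `data_union`), the hypothetical 0 to 150 integer range, and the use of Python `str`/`int` values in place of typed literals are all assumptions for illustration. XML Schema patterns are implicitly anchored to the whole lexical form, which is why the sketch uses `re.fullmatch` rather than `re.search`.

```python
import re
from typing import Callable

# Hypothetical encoding: a data range is a predicate over Python values.
DataRange = Callable[[object], bool]


def pattern_restriction(pattern: str) -> DataRange:
    # xsd:string restricted by xsd:pattern: the entire string must match.
    return lambda v: isinstance(v, str) and re.fullmatch(pattern, v) is not None


def inclusive_int_range(lo: int, hi: int) -> DataRange:
    # xsd:integer restricted by xsd:minInclusive lo and xsd:maxInclusive hi.
    # bool is excluded because Python treats True/False as ints.
    return lambda v: isinstance(v, int) and not isinstance(v, bool) and lo <= v <= hi


def data_union(*ranges: DataRange) -> DataRange:
    # Analogue of DataUnionOf: a value belongs if any member range accepts it.
    return lambda v: any(r(v) for r in ranges)


# DatatypeDefinition( a:SSN DatatypeRestriction( xsd:string xsd:pattern "..." ) )
ssn = pattern_restriction("[0-9]{3}-[0-9]{2}-[0-9]{4}")
# Hypothetical second range: integers from 0 to 150 inclusive.
age = inclusive_int_range(0, 150)
ssn_or_age = data_union(ssn, age)

print(ssn("123-45-6789"))   # True
print(ssn("123456789"))     # False
print(ssn("x123-45-6789"))  # False: the pattern must cover the whole string
print(ssn_or_age(42))       # True
print(ssn_or_age("42"))     # False: a string is not an integer
```

The pattern `[0-9]{3}-[0-9]{2}-[0-9]{4}` happens to mean the same thing in XML Schema and Python regex syntax; other XSD patterns may need translation, since the two dialects differ in places.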