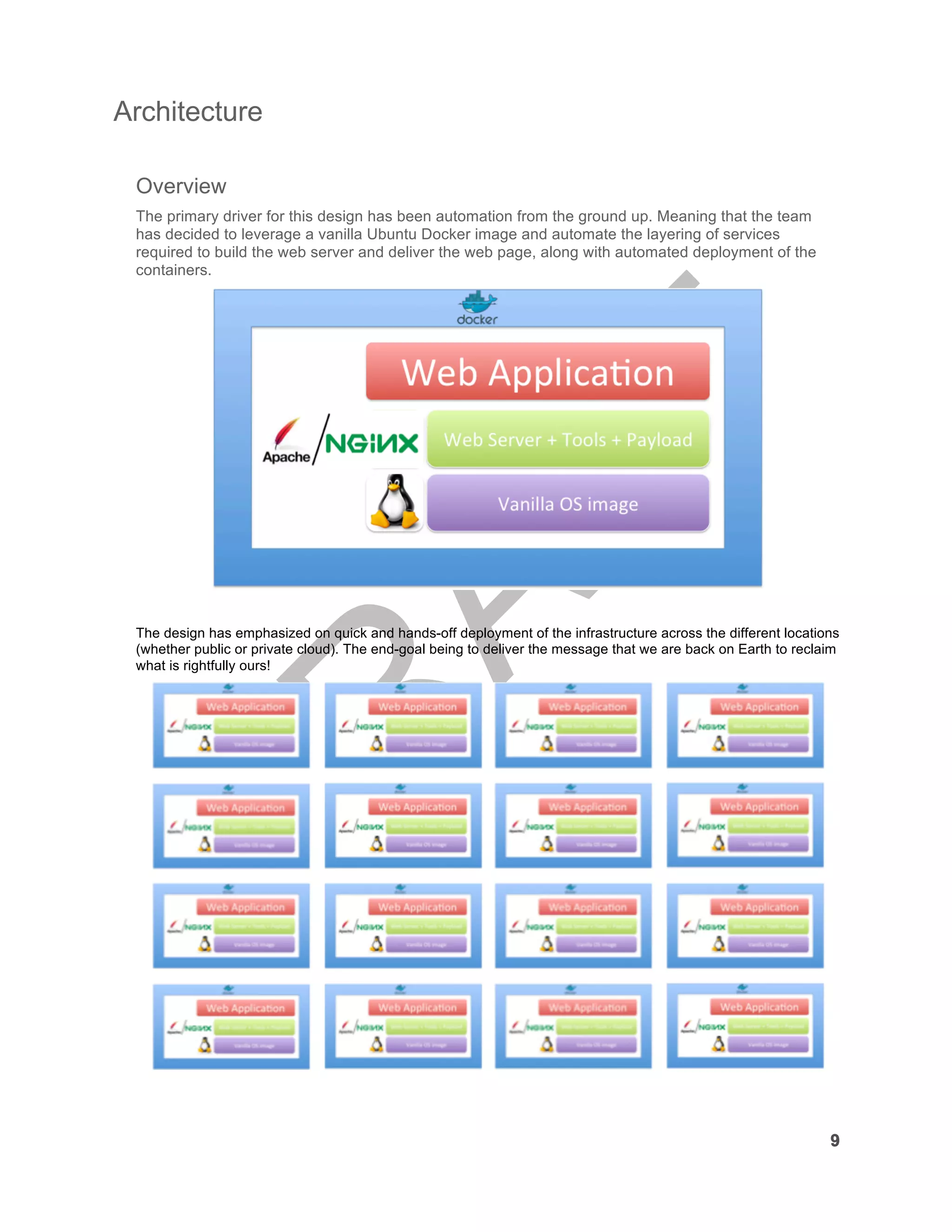

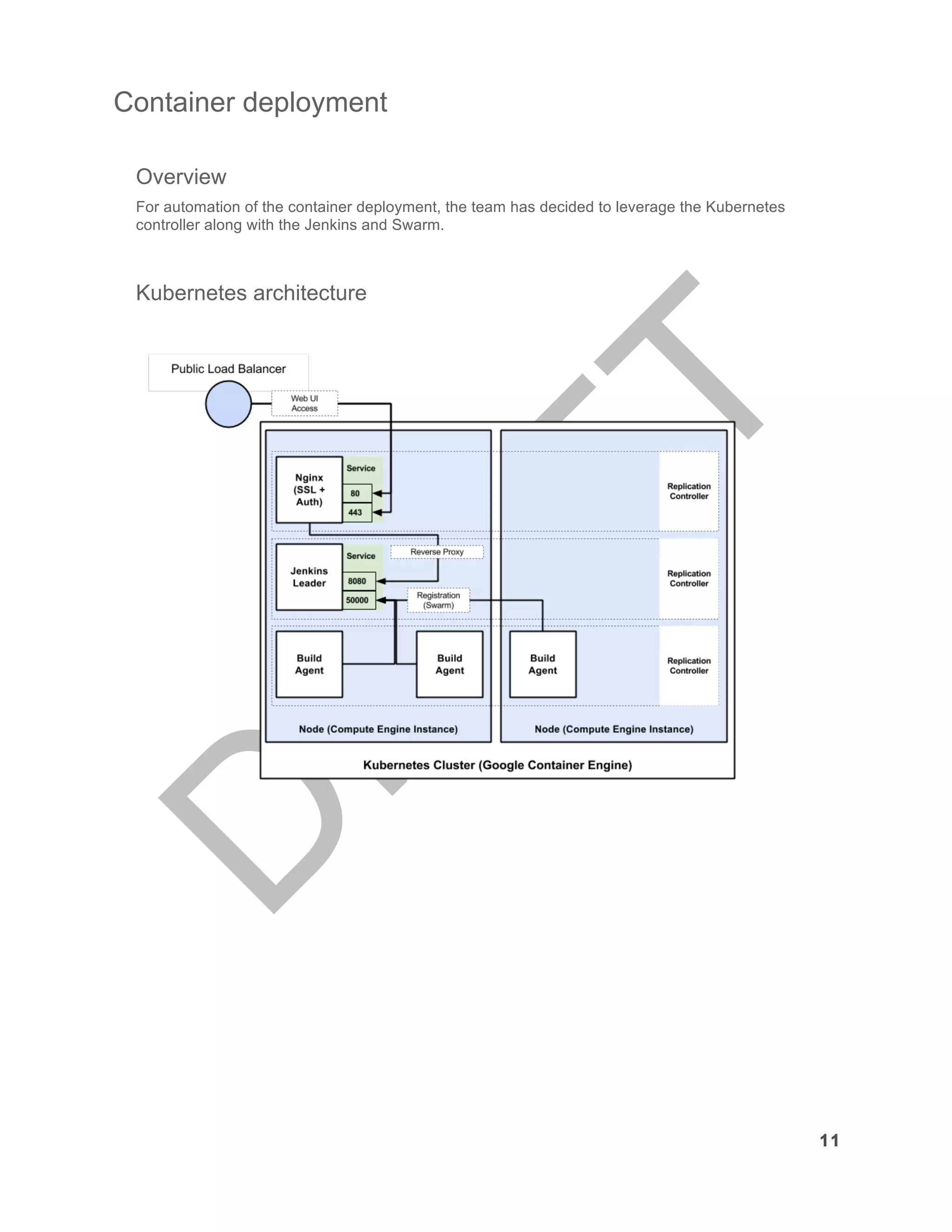

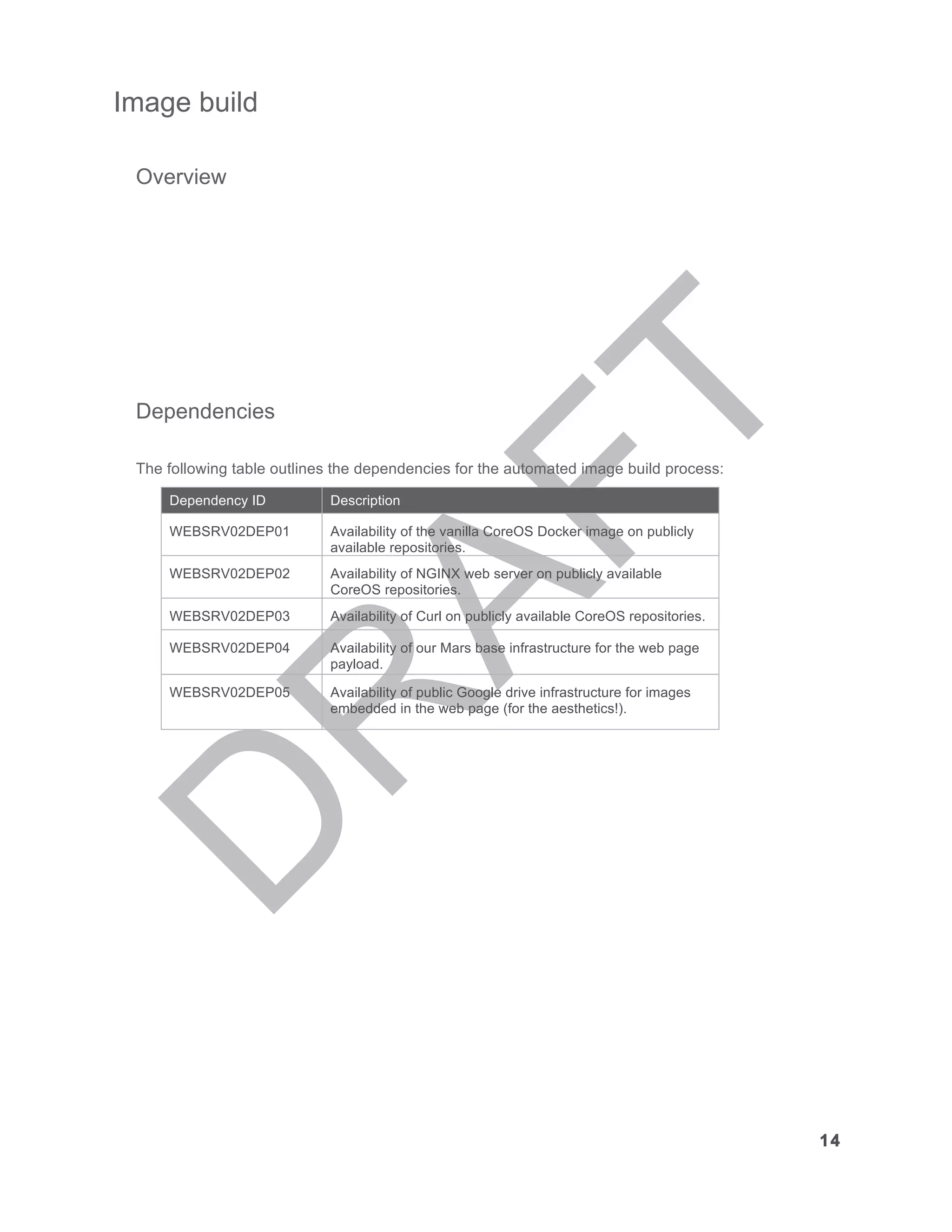

The document outlines the fourth challenge of the Virtual Design Master competition. In this challenge, teams must design the infrastructure for reclaiming Earth from zombies, focusing on the deployment of a simple web application. The project emphasizes automation and the use of container technology. It also requires two different orchestration tools, two different operating systems, and two different web servers. Key goals include building the infrastructure in a fully automated way and sharing comprehensive instructions so that others can reproduce the deployment.