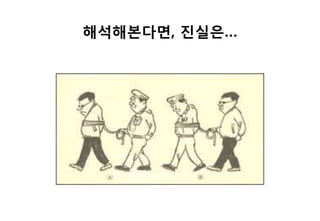

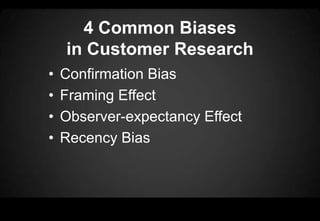

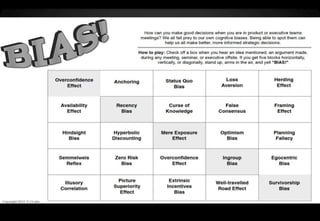

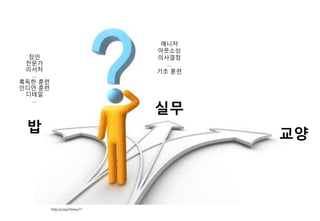

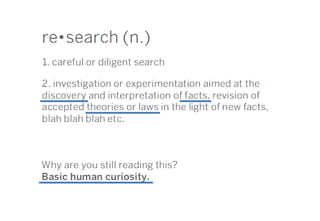

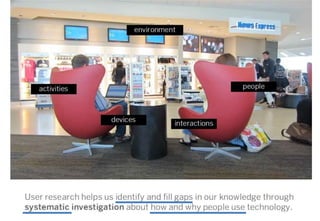

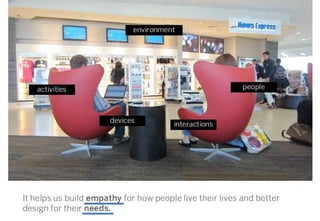

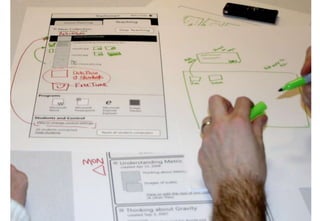

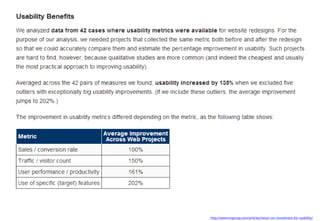

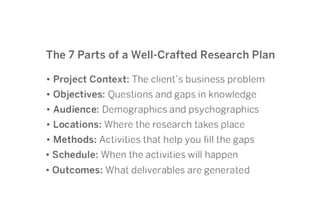

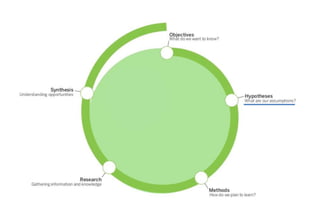

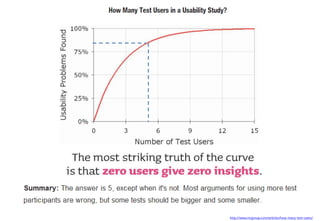

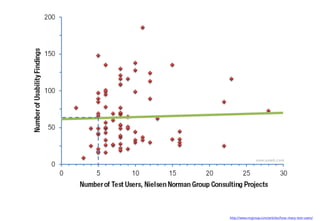

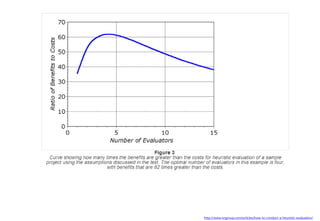

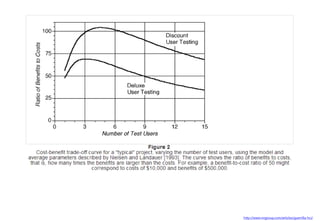

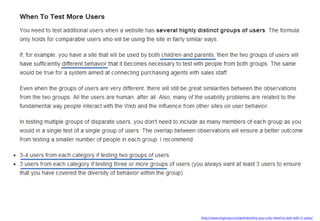

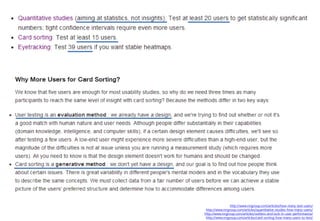

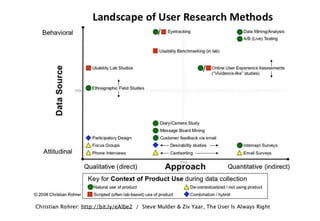

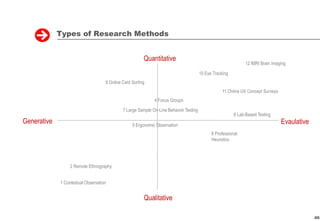

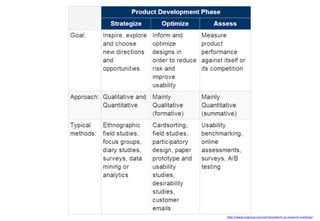

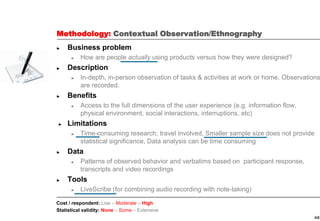

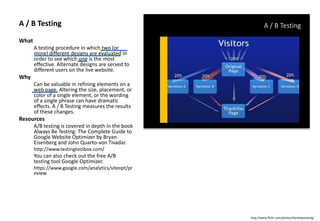

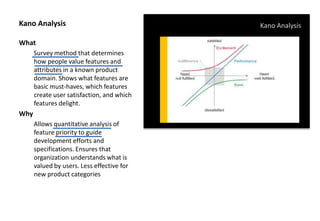

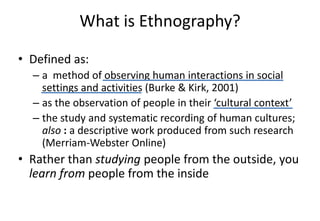

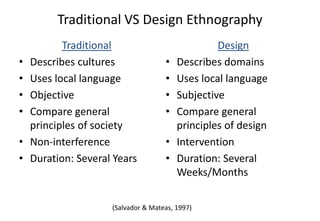

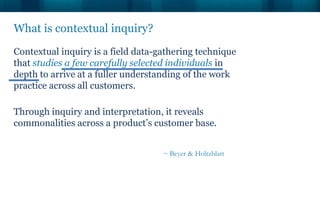

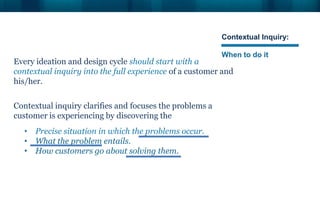

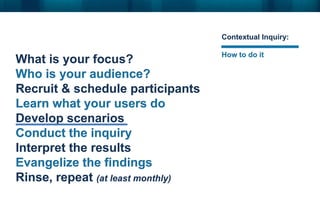

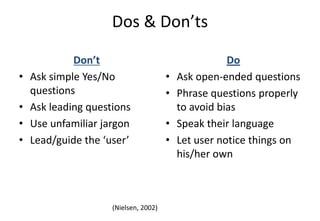

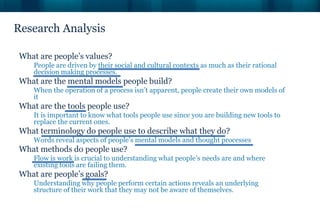

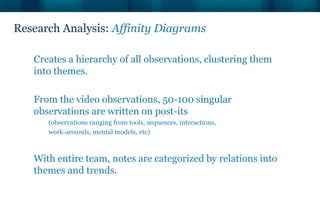

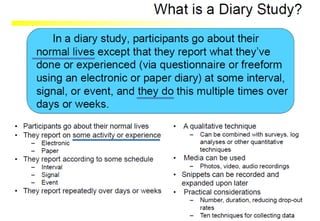

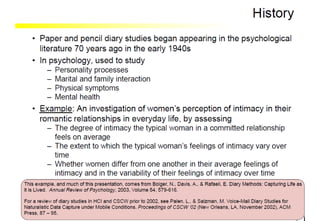

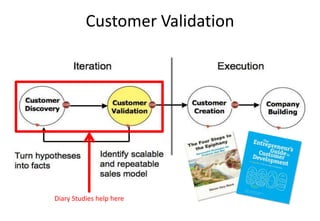

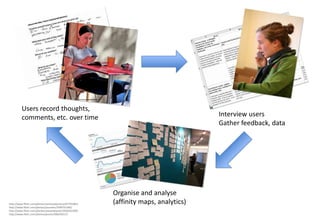

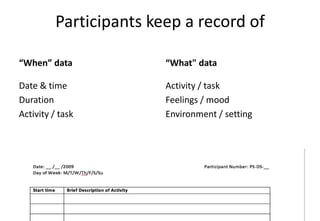

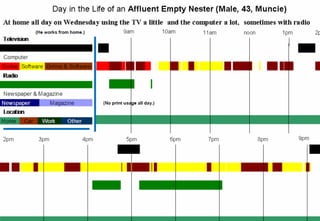

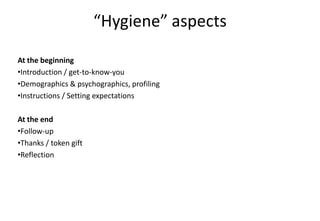

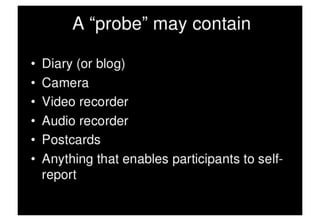

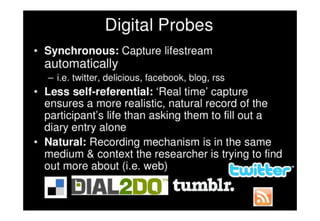

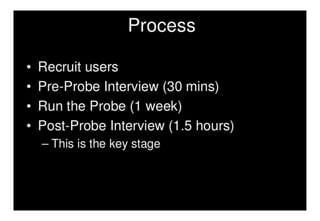

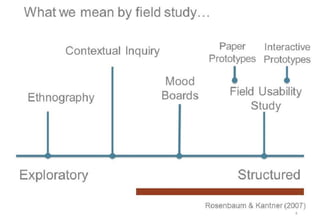

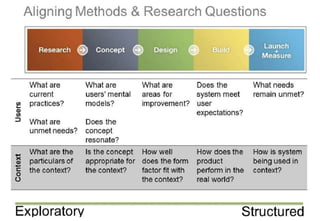

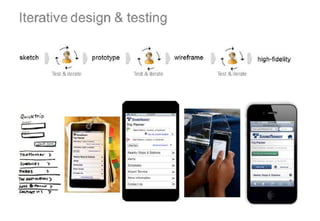

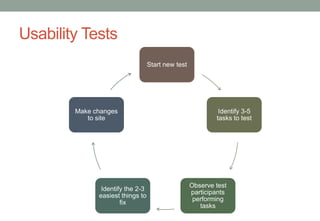

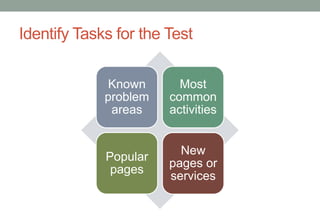

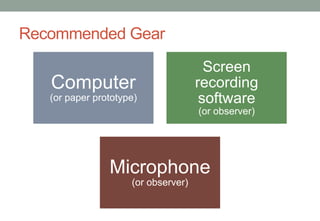

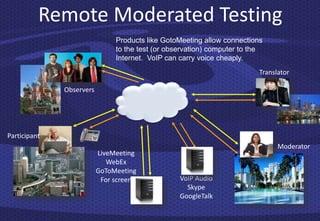

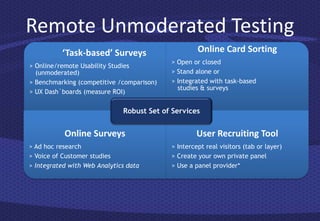

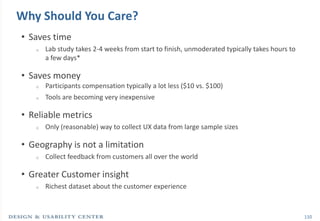

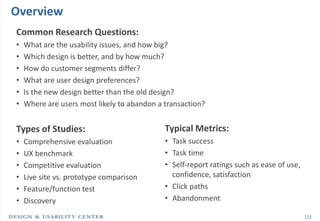

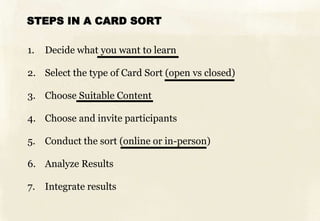

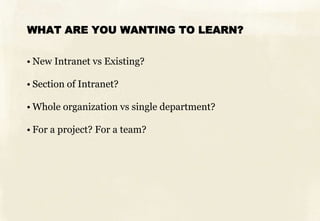

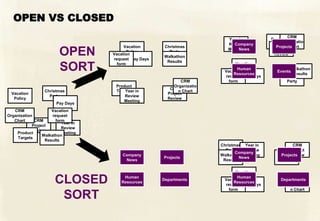

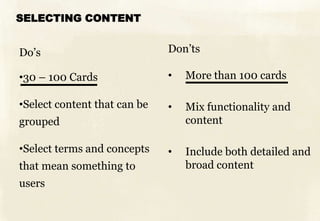

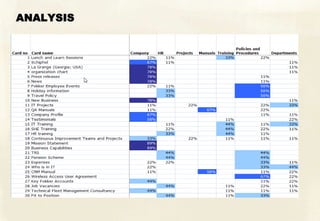

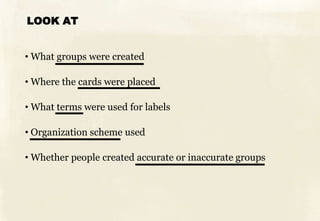

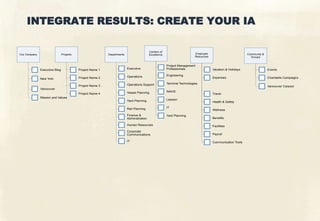

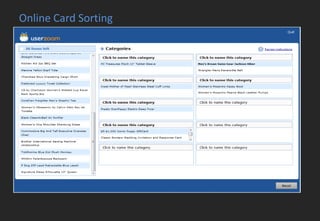

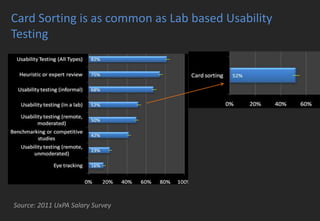

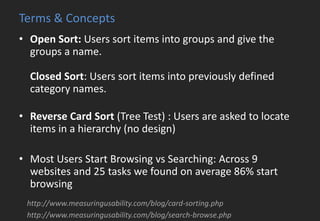

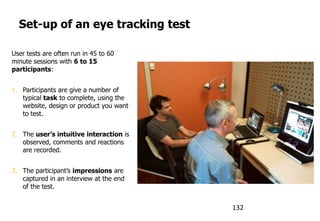

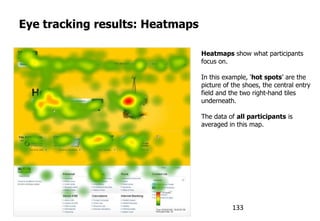

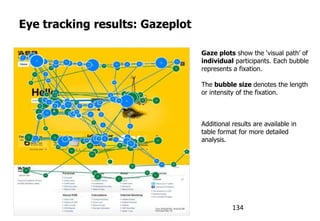

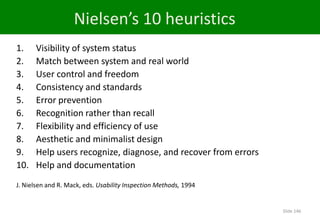

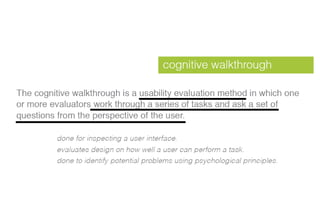

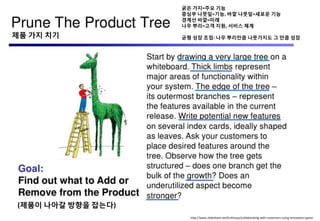

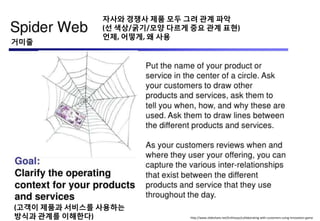

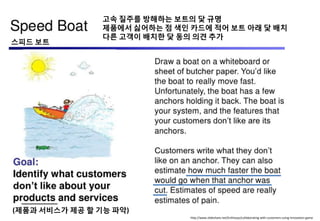

This document provides an overview of UX research methods. It opens with an introduction to big thinking in UX and discusses common biases in customer research, such as confirmation bias and the framing effect. It then defines terms such as market research, user research, and UX research, presents example case studies, and describes methodologies for conducting UX research, including contextual inquiry, diary studies, card sorting, and usability testing. For each methodology, it covers when to use it, how to conduct it, the types of data collected, and example tools. The document concludes with a section on innovation game techniques.