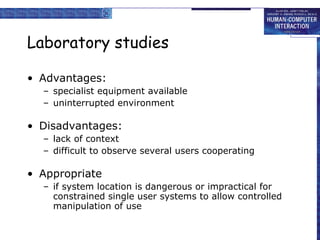

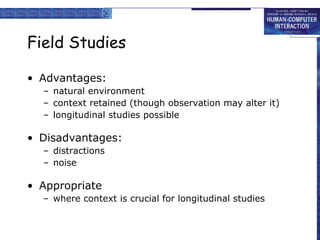

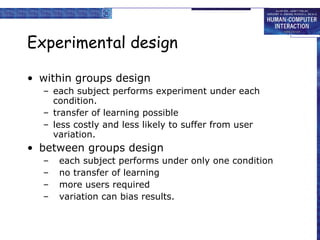

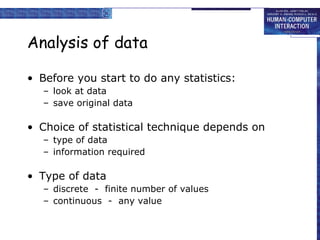

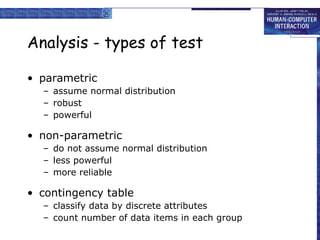

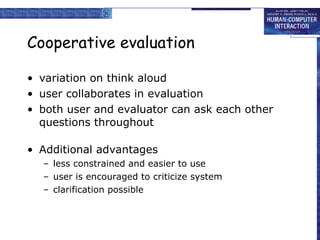

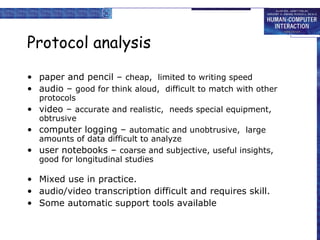

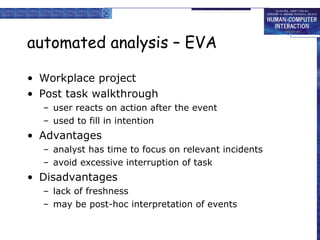

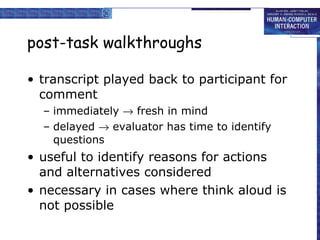

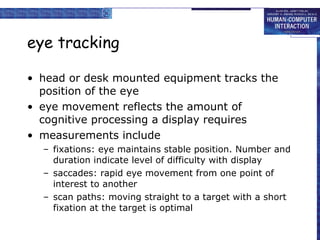

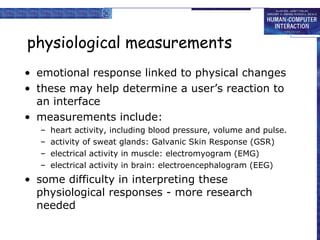

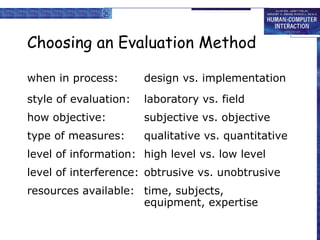

The document surveys techniques for evaluating the usability and functionality of systems, including cognitive walkthroughs, heuristic evaluation, and evaluation through user participation. It outlines the advantages and disadvantages of laboratory and field studies, approaches to experimental evaluation, and considerations for data analysis. It also covers interviews, questionnaires, and physiological measurement as ways to gather user feedback and assess interaction performance.