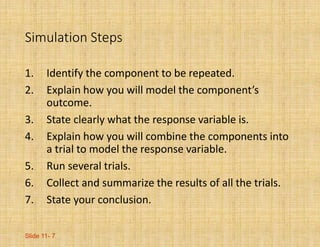

Computers are often used to generate random numbers, but the values they produce are not truly random: they are pseudorandom, produced by a deterministic program. There are better ways to generate values that are truly random and equally likely. Simulations are used to model and investigate random processes, events, and questions when collecting real data is difficult. A successful simulation involves identifying the components, modeling their outcomes, defining the response variable, running multiple trials, analyzing the results, and drawing conclusions. Care must be taken not to overstate the findings or confuse simulation results with reality.
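As a rough illustration of those steps, here is a minimal Python sketch. The question it investigates (how many rolls of a fair die it takes to see the first six) is a hypothetical example chosen for this sketch, not one taken from the text, and the names `run_trial` and `simulate` are likewise made up for illustration. It uses Python's standard `random` module, which is itself a pseudorandom generator: seeding it with the same value reproduces exactly the same "random" rolls.

```python
import random
import statistics


def run_trial(rng):
    """One trial: roll a die until a six appears and return the roll count.

    Component: a single die roll.
    Outcome model: integers 1 to 6, each equally likely.
    Response variable: number of rolls needed to see the first six.
    """
    rolls = 0
    while True:
        rolls += 1
        if rng.randint(1, 6) == 6:
            return rolls


def simulate(num_trials, seed):
    """Run many independent trials with a seeded pseudorandom generator."""
    rng = random.Random(seed)  # same seed, same sequence of rolls
    return [run_trial(rng) for _ in range(num_trials)]


# Run multiple trials, then analyze the response variable.
results = simulate(10_000, seed=1)
mean_rolls = statistics.mean(results)
print(f"Mean rolls until first six over {len(results)} trials: {mean_rolls:.2f}")

# Pseudorandomness in action: identical seeds give identical results.
print(simulate(5, seed=42) == simulate(5, seed=42))
```

For this particular model the theoretical mean is 6 rolls, so the simulated average should land close to that. It remains an estimate from a model: it will vary with the seed and the number of trials, and it says nothing about whether a real die is actually fair.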