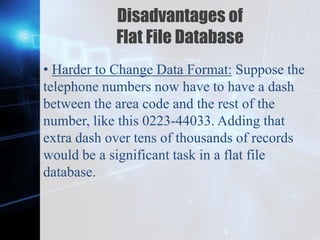

This document discusses trends in database management. It describes the key characteristics and purposes of several types of databases: operational databases, analytical databases, data warehouses, distributed databases, columnar databases, data warehouse appliances, in-memory databases, embedded databases, document-oriented databases, graph databases, hypermedia databases, and flat-file databases. The learning outcomes are to define and explain embedded databases, and to identify, compare, and describe document-oriented, graph, hypermedia, and flat-file databases.