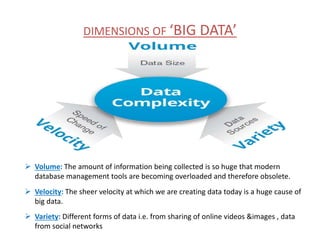

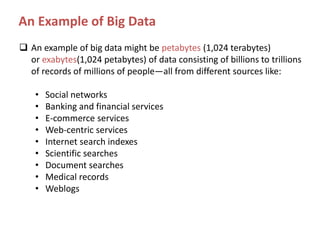

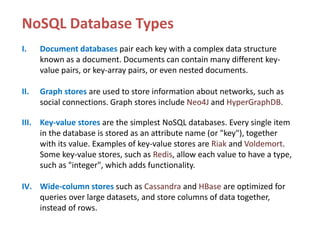

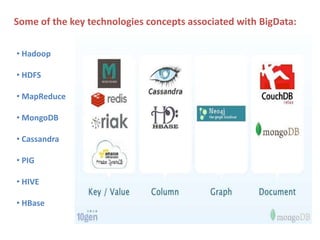

Big data refers to massive sets of structured and unstructured data that are difficult to process with traditional relational databases because of their volume, velocity, and variety. NoSQL databases offer an alternative for storing and analyzing such data: they support flexible, schema-less data models and scale horizontally across multiple machines. These benefits come with trade-offs, including less maturity than SQL databases and difficulties with analytics, administration, and available expertise.
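
To make the two NoSQL properties mentioned above more concrete, here is a minimal Python sketch of a schema-less store whose records are spread across several shards by hashing the key. It is an in-memory toy and not the API of any real NoSQL product: the class `ShardedDocumentStore`, its methods, and the sample keys and fields are all hypothetical names chosen for illustration.

```python
import hashlib


class ShardedDocumentStore:
    """Toy in-memory document store (hypothetical, for illustration only)."""

    def __init__(self, num_shards=3):
        # Each shard stands in for a separate machine in a real cluster.
        self.shards = [dict() for _ in range(num_shards)]

    def _shard_for(self, key):
        # Hash the key so documents spread roughly evenly across shards.
        digest = hashlib.sha256(key.encode("utf-8")).hexdigest()
        return int(digest, 16) % len(self.shards)

    def put(self, key, document):
        # No schema check: any dict is accepted, whatever its fields.
        self.shards[self._shard_for(key)][key] = document

    def get(self, key):
        # Only the shard that owns the key is consulted.
        return self.shards[self._shard_for(key)].get(key)

    def find(self, field, value):
        # A query on a non-key field has to scan every shard.
        return [
            doc
            for shard in self.shards
            for doc in shard.values()
            if doc.get(field) == value
        ]


store = ShardedDocumentStore(num_shards=3)
# Documents with different shapes live side by side.
store.put("user:1", {"name": "Ada", "email": "ada@example.com"})
store.put("user:2", {"name": "Lin", "tags": ["admin"], "last_login": "2024-01-01"})
store.put("event:9", {"type": "click", "user": "user:1"})

print(store.get("user:2"))
print(store.find("type", "click"))
```

The sketch also hints at one of the analytics difficulties: `get` touches a single shard, but `find` must visit all of them. Note that simple modulo hashing remaps most keys when the number of shards changes, so production systems generally rely on schemes such as consistent hashing or range partitioning instead.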