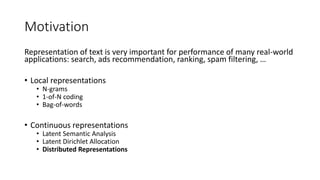

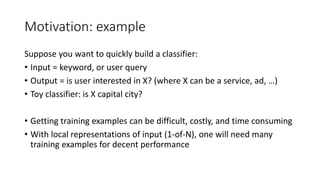

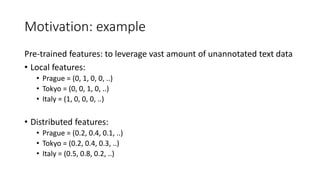

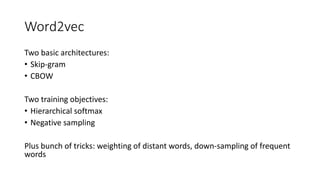

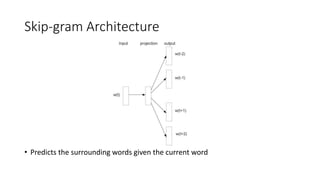

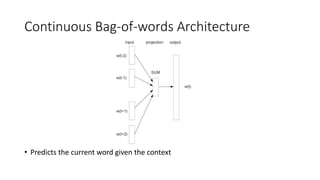

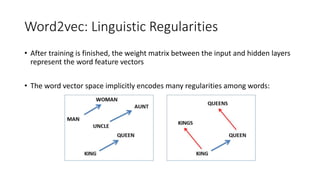

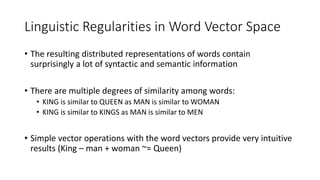

The document discusses word embedding techniques, focusing on Word2vec. It introduces the motivation for distributed word representations and describes the Skip-gram and CBOW (continuous bag-of-words) architectures. Word2vec produces word vectors that encode linguistic regularities: simple examples show that word pairs sharing the same relationship have similar vector offsets. Evaluation shows that Word2vec outperforms previous methods, and its word vectors are now widely used in NLP applications.
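
To make the two architectures and the vector-offset idea concrete, here is a minimal sketch in plain Python. It is not the Word2vec implementation: the function names (`skipgram_pairs`, `cbow_pairs`, `analogy`), the window size, and the 2-D `toy` vectors are all assumptions made for illustration, and the vectors are hand-made rather than trained. The sketch shows only how training examples are framed (Skip-gram predicts each context word from the center word, CBOW predicts the center word from its context) and how an analogy can be answered by adding a vector offset.

```python
import math


def skipgram_pairs(tokens, window=2):
    """Skip-gram framing: each center word predicts each word in its window."""
    pairs = []
    for i, center in enumerate(tokens):
        lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i:
                pairs.append((center, tokens[j]))
    return pairs


def cbow_pairs(tokens, window=2):
    """CBOW framing: the context words together predict the center word."""
    pairs = []
    for i, center in enumerate(tokens):
        lo, hi = max(0, i - window), min(len(tokens), i + window + 1)
        context = [tokens[j] for j in range(lo, hi) if j != i]
        pairs.append((context, center))
    return pairs


def cosine(u, v):
    """Cosine similarity between two equal-length, non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def analogy(vectors, a, b, c):
    """Answer 'a is to b as c is to ?' via the offset vec(b) - vec(a) + vec(c)."""
    target = [vb - va + vc
              for va, vb, vc in zip(vectors[a], vectors[b], vectors[c])]
    # Exclude the three query words, as is commonly done in analogy tests.
    candidates = [w for w in vectors if w not in (a, b, c)]
    return max(candidates, key=lambda w: cosine(vectors[w], target))


# Hand-made 2-D toy vectors, NOT trained embeddings (illustrative assumption):
# axis 0 loosely stands for "royalty", axis 1 for "gender".
toy = {
    "king": [1.0, 1.0],
    "queen": [1.0, -1.0],
    "man": [0.0, 1.0],
    "woman": [0.0, -1.0],
    "apple": [-1.0, 0.1],
}

if __name__ == "__main__":
    sentence = ["the", "quick", "fox"]
    print(skipgram_pairs(sentence, window=1))
    print(cbow_pairs(sentence, window=1))
    print(analogy(toy, "man", "king", "woman"))
```

In this toy setup the offset from `man` to `king` equals the offset from `woman` to `queen`, so the analogy query lands on `queen`. Real embeddings are learned from large corpora and have far more dimensions, so offsets match only approximately.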