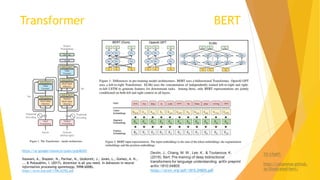

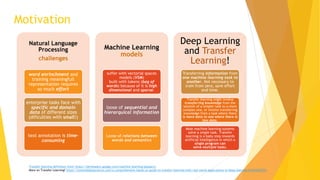

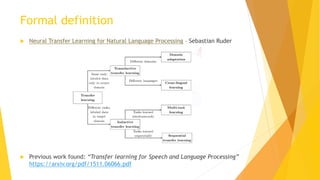

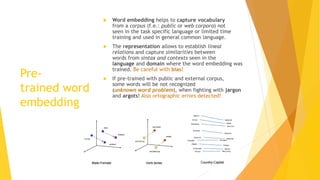

The document outlines the challenges and advances in transfer learning for natural language processing (NLP), emphasizing the role of word embeddings and pre-trained models. It discusses techniques for generating word embeddings and their limitations, and the growing role of transformer-based architectures such as BERT in improving NLP tasks. It also highlights future directions, including the handling of out-of-vocabulary words and biases in language models.

![Conceptual architecture (common to offline/training and online/predict/execution time)](https://image.slidesharecdn.com/challengesintransferlearninginnlp-190531073944/85/Challenges-in-transfer-learning-in-nlp-11-320.jpg)

Slide text: Conceptual architecture, common to offline/training and online/predict/execution time.

- Text entry, processed (sentence, short text, long text).
- Mapping / look-up table from vocabulary to word vectors.
- Representation obtained with word embedding, for example client: 0.29, 0.53, -1.1, 0.23, …, 0.43 and branch: 0.39, 0.49, -1.2, 0.42, …, 0.98.
- Concatenation as input (for example): [ [ 0.29, 0.53, -1.1, 0.23, …, 0.43], …, [0.39, 0.49, -1.2, 0.42, …, 0.98] ]
- Models: neural network model, unsupervised model, classifiers (…).
- Response for specific task.
- Evaluation.
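The look-up and concatenation steps named on the conceptual architecture slide can be illustrated with a minimal Python sketch. Everything here is an assumption made for illustration: the 4-dimensional vectors are toy values (the slide elides most components with "…"), the names `EMBEDDINGS`, `embed`, and `concatenate` are hypothetical, and mapping out-of-vocabulary words to a zero vector is only one of several possible choices.

```python
from typing import Dict, List

# Hypothetical toy look-up table: vocabulary word -> word vector.
# Real embeddings have many more dimensions; these 4 values are illustrative only.
EMBEDDINGS: Dict[str, List[float]] = {
    "client": [0.29, 0.53, -1.1, 0.23],
    "branch": [0.39, 0.49, -1.2, 0.42],
}
DIM = 4


def embed(tokens: List[str]) -> List[List[float]]:
    """Map each token to its vector via the look-up table.

    Assumption: out-of-vocabulary tokens get a zero vector.
    """
    return [list(EMBEDDINGS.get(tok.lower(), [0.0] * DIM)) for tok in tokens]


def concatenate(vectors: List[List[float]]) -> List[float]:
    """Flatten the per-word vectors into one input vector for a downstream model."""
    flat: List[float] = []
    for vec in vectors:
        flat.extend(vec)
    return flat


tokens = "client branch".split()
matrix = embed(tokens)          # one row per word
features = concatenate(matrix)  # single vector of length len(tokens) * DIM
print(features)
```

The resulting `features` vector is what a classifier or neural network would receive as input in this sketch. Concatenation keeps word order but makes the input length depend on the number of tokens, which is why the slide presents it only as one example of how to combine word vectors.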