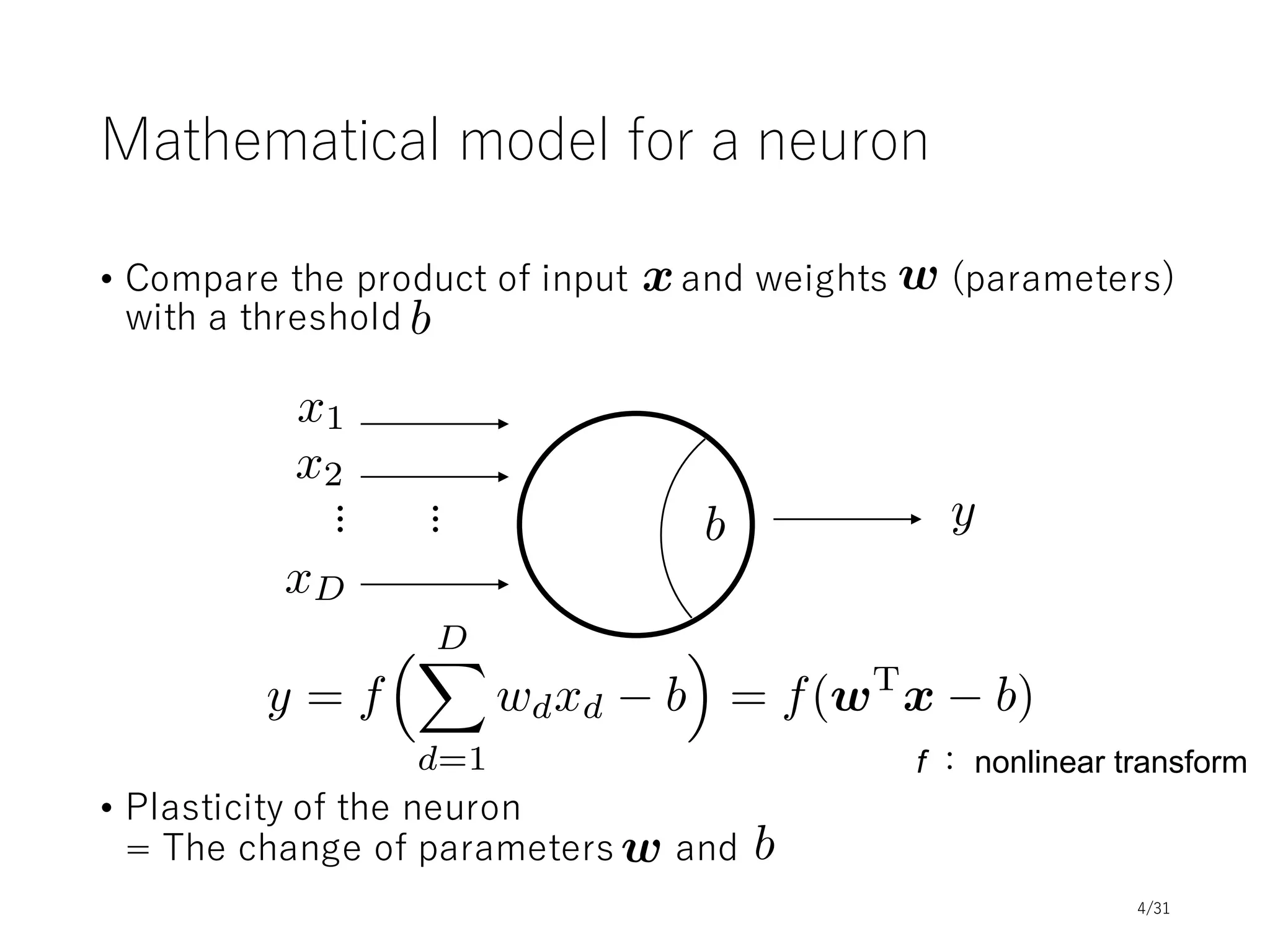

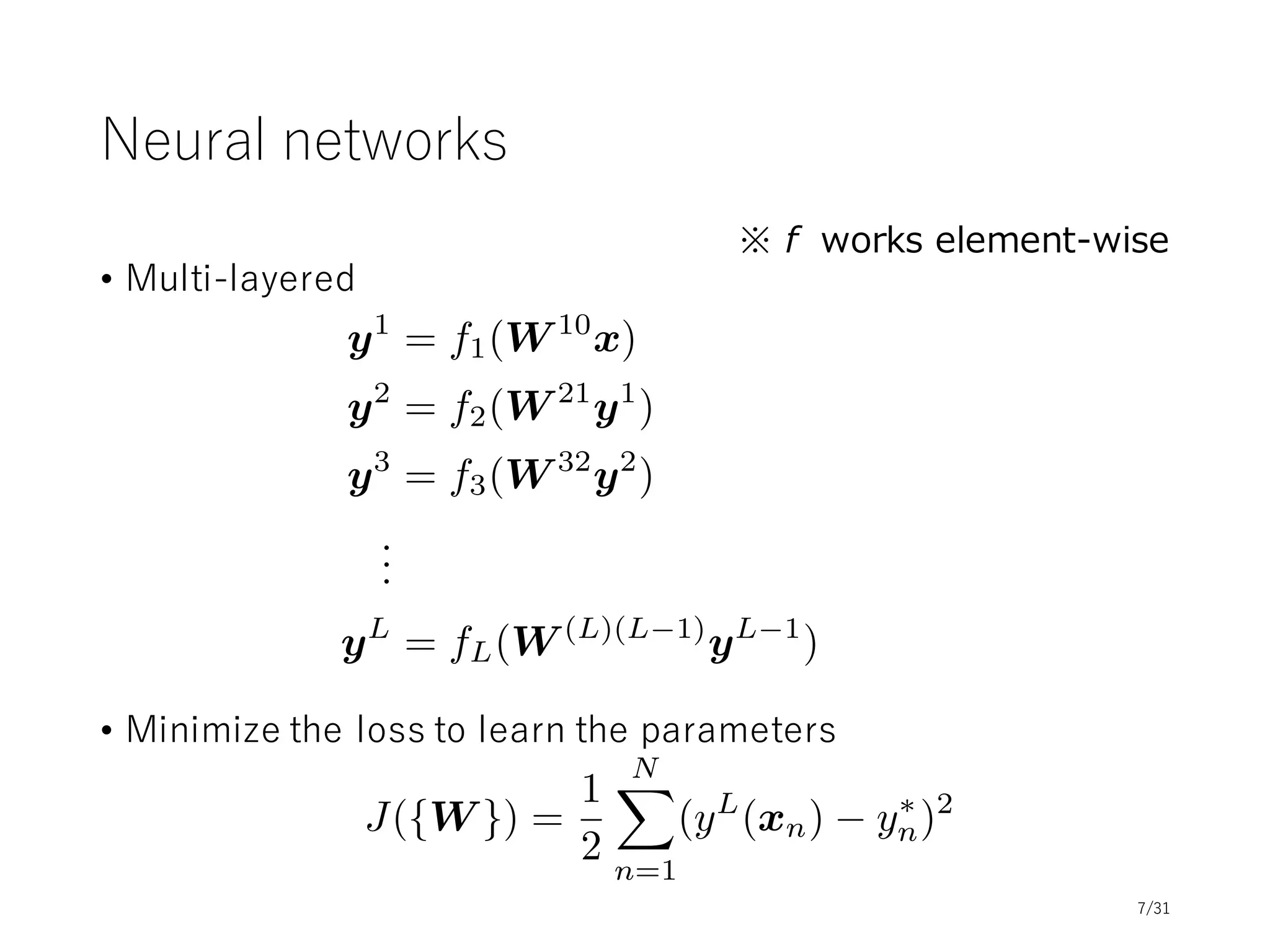

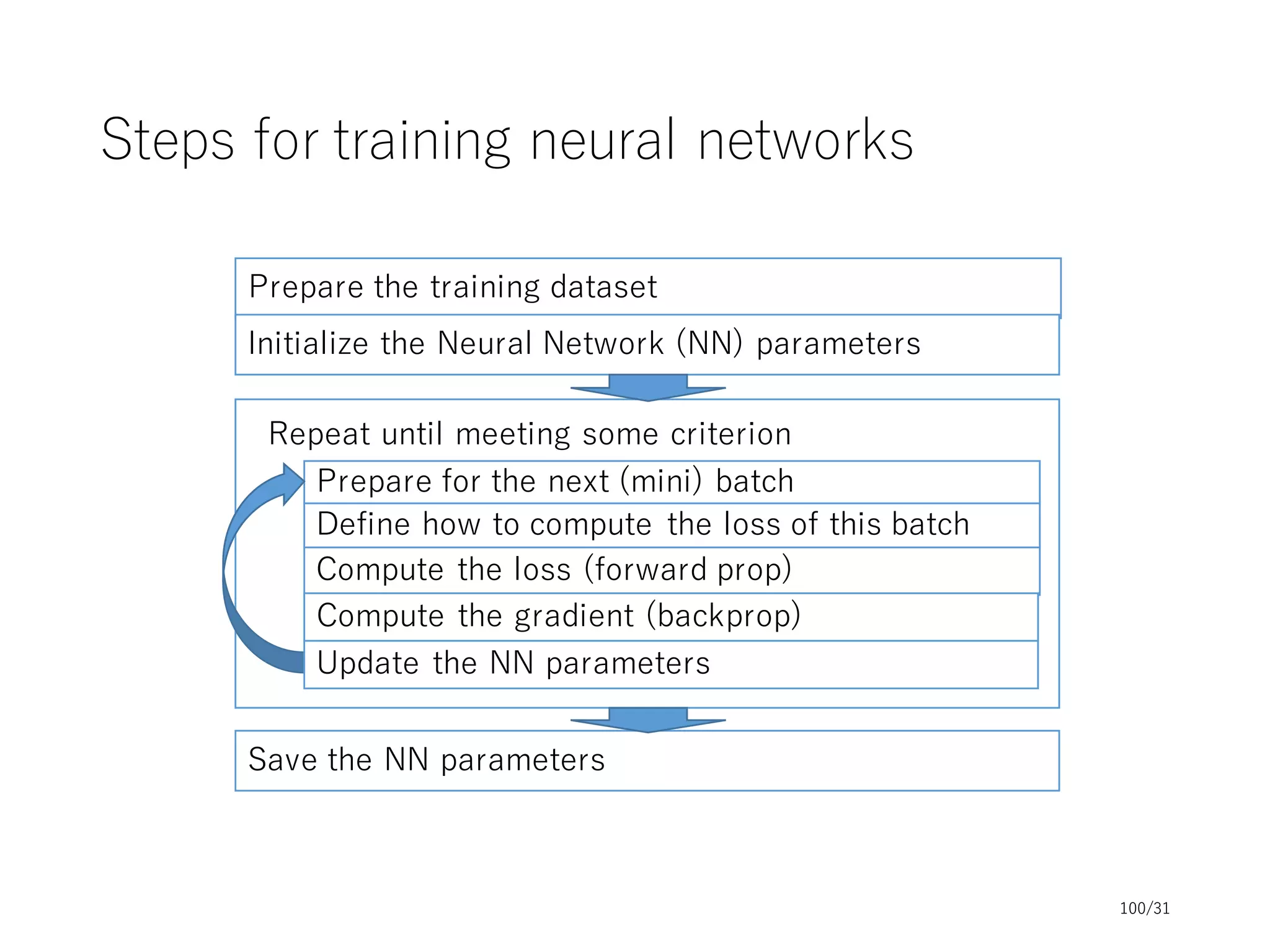

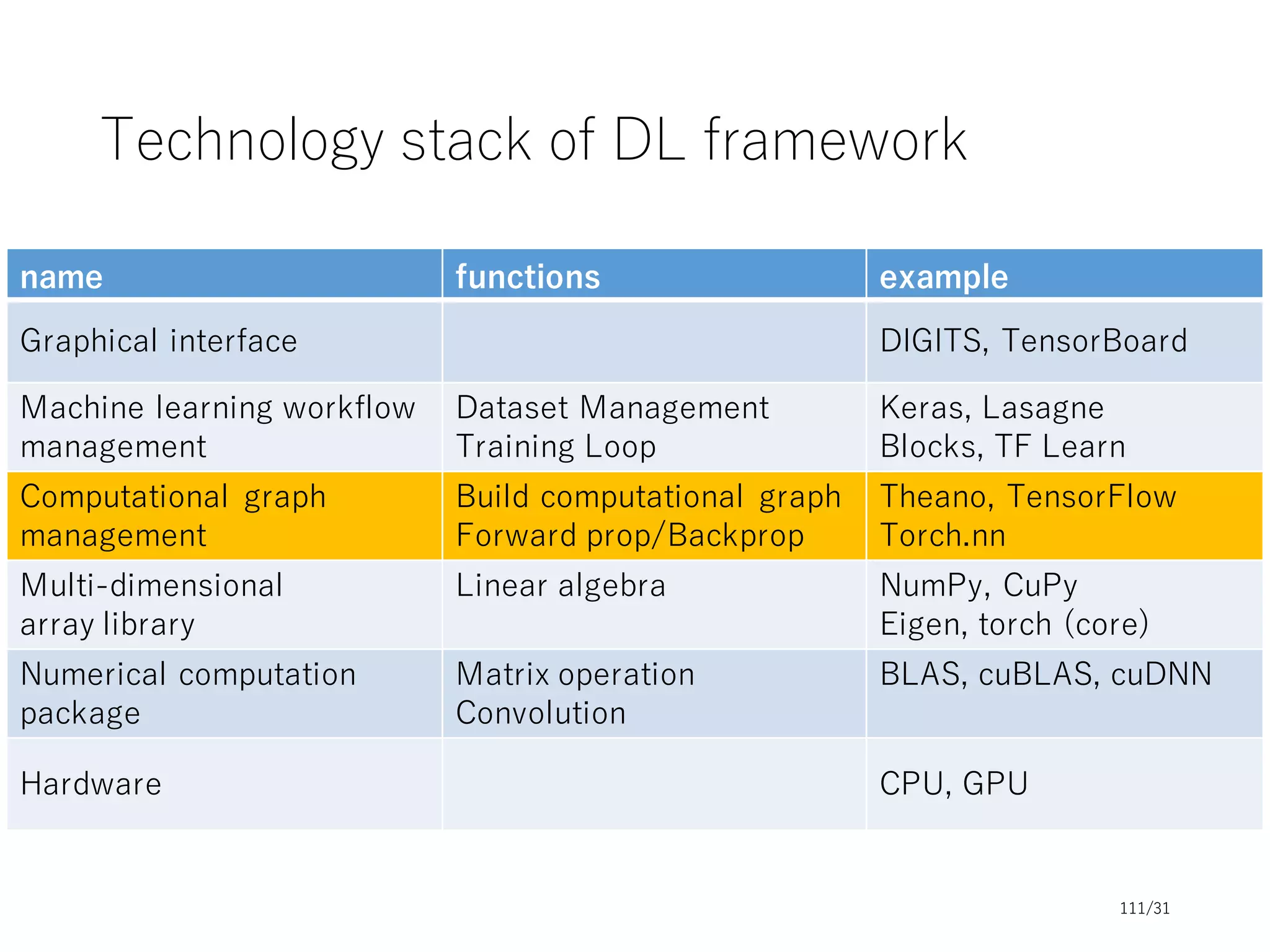

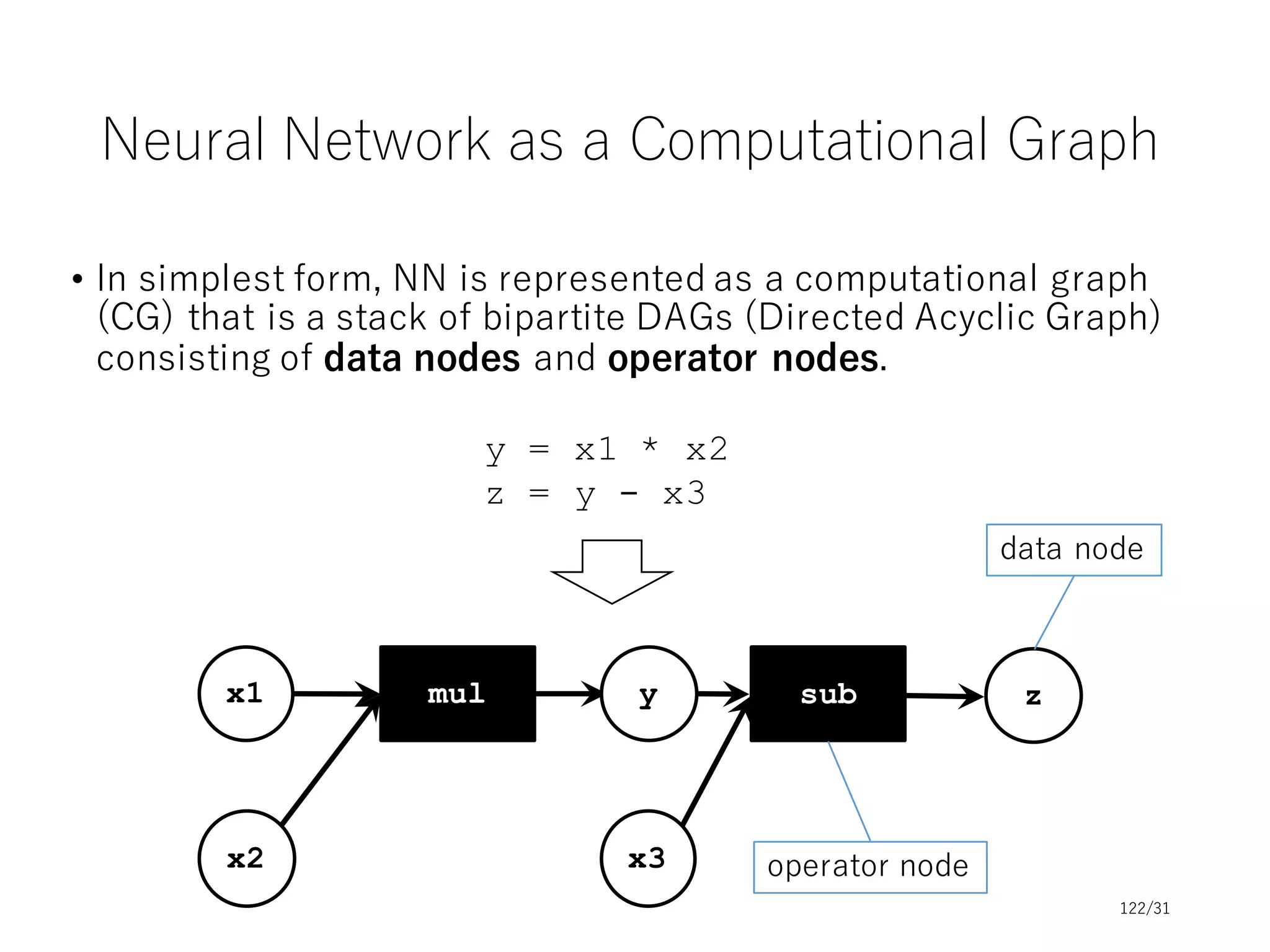

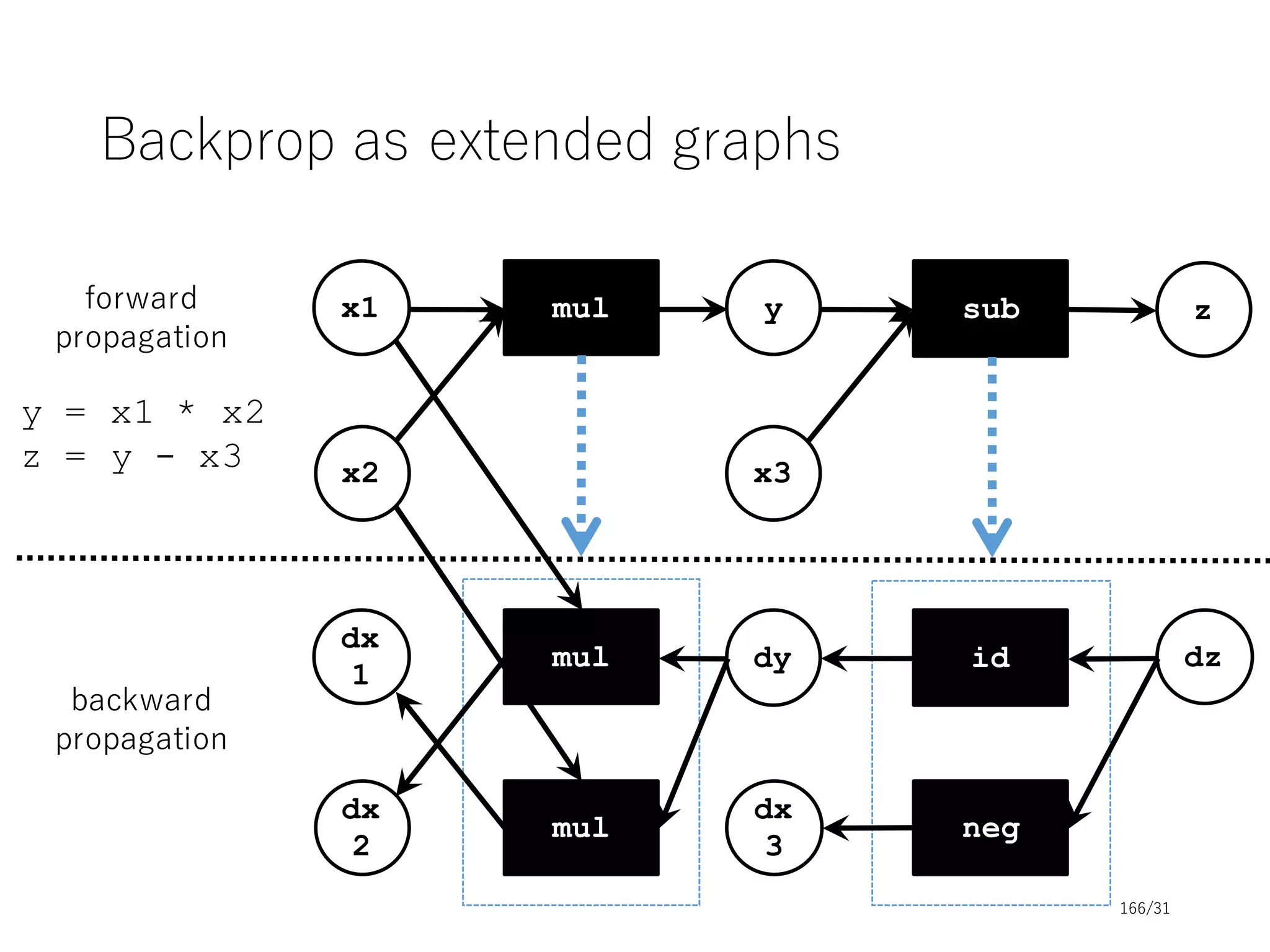

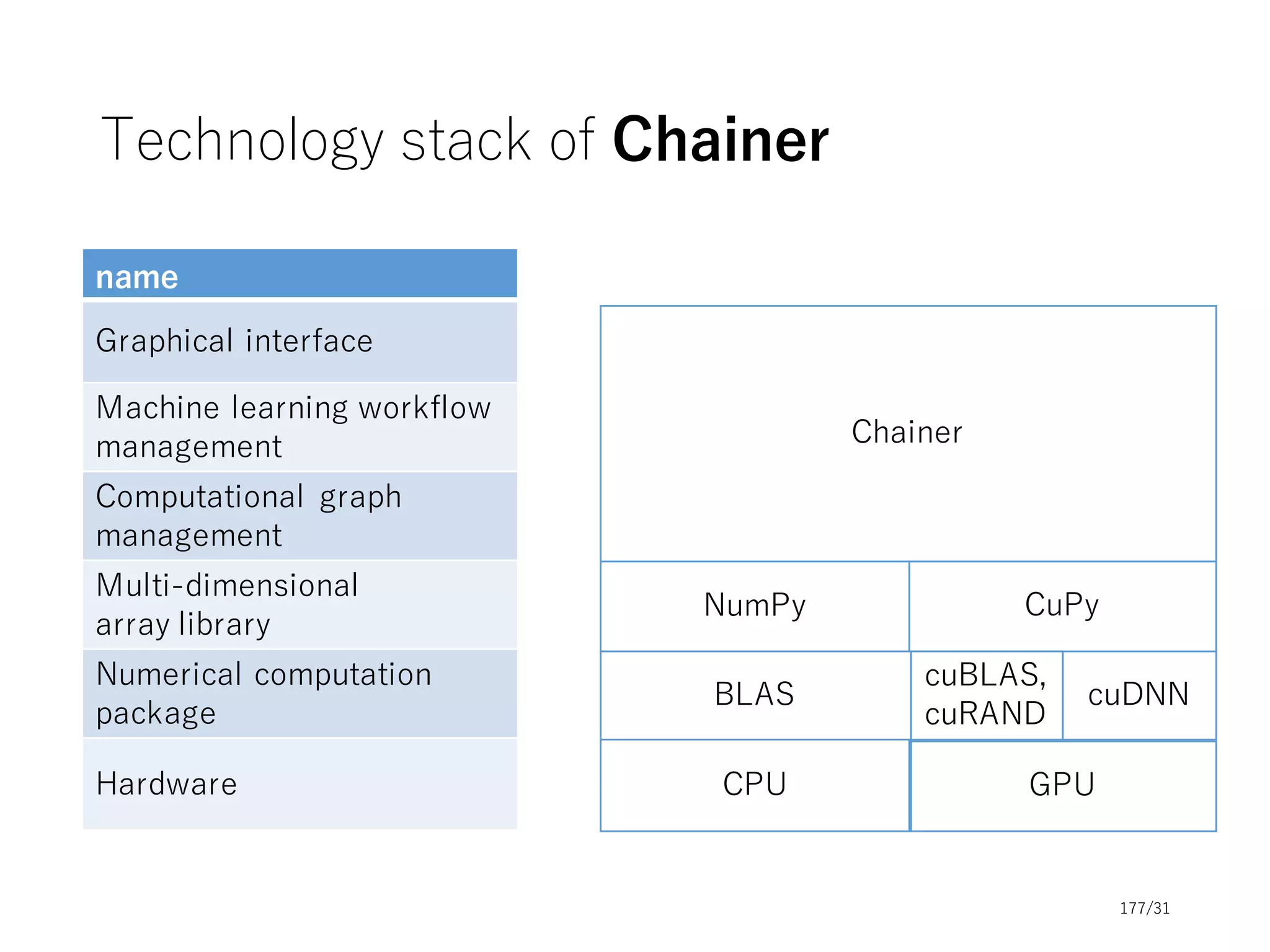

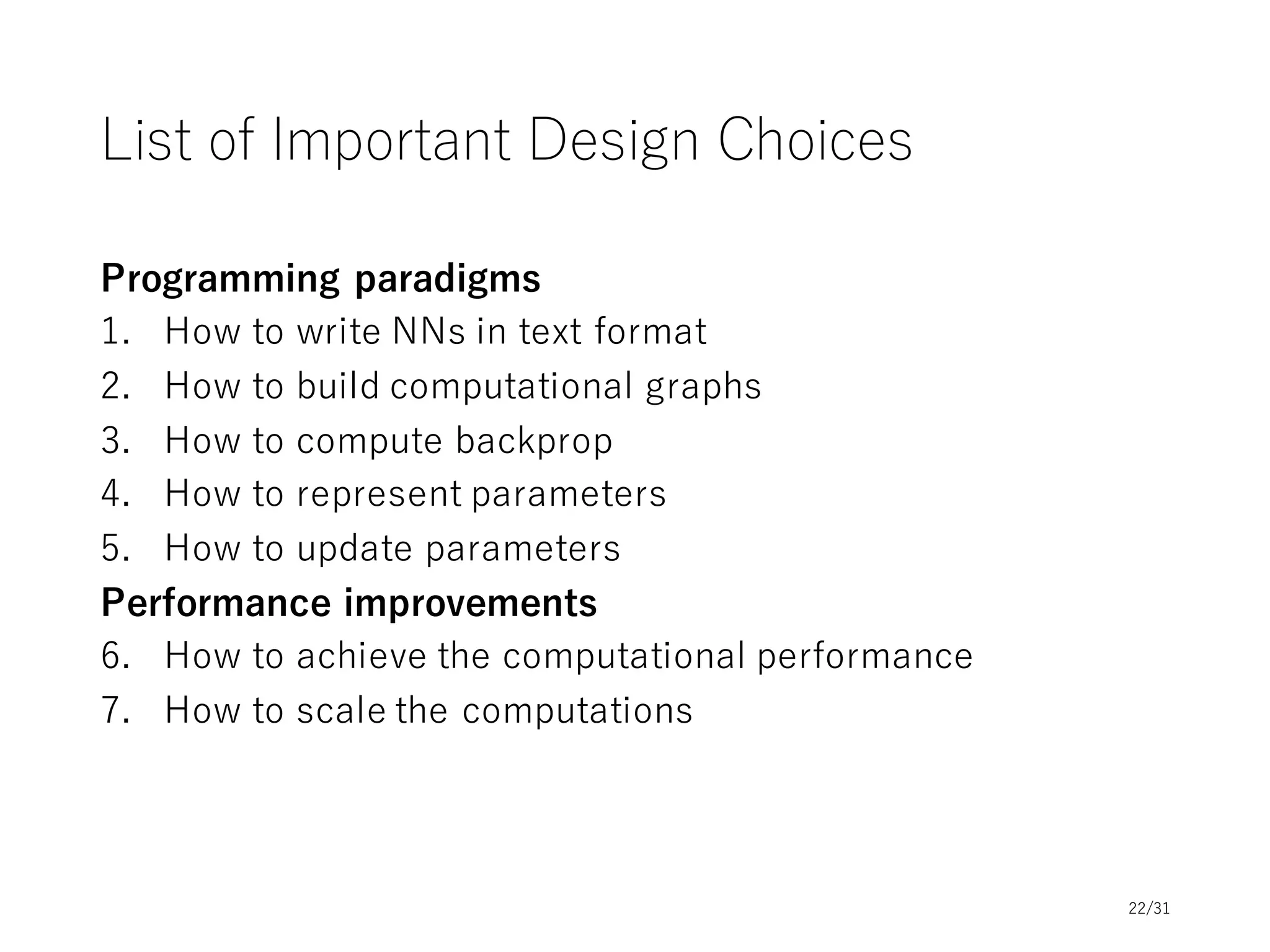

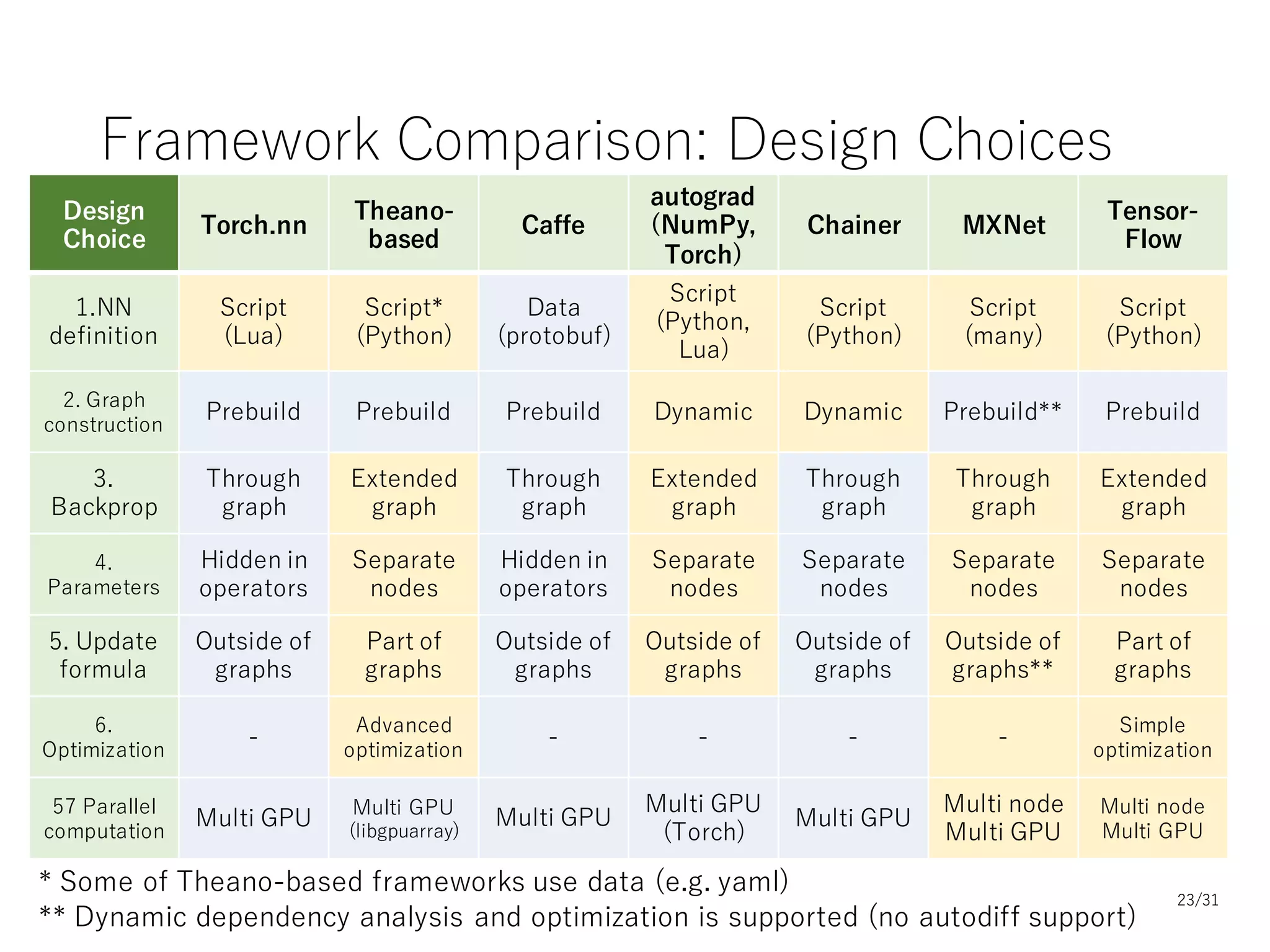

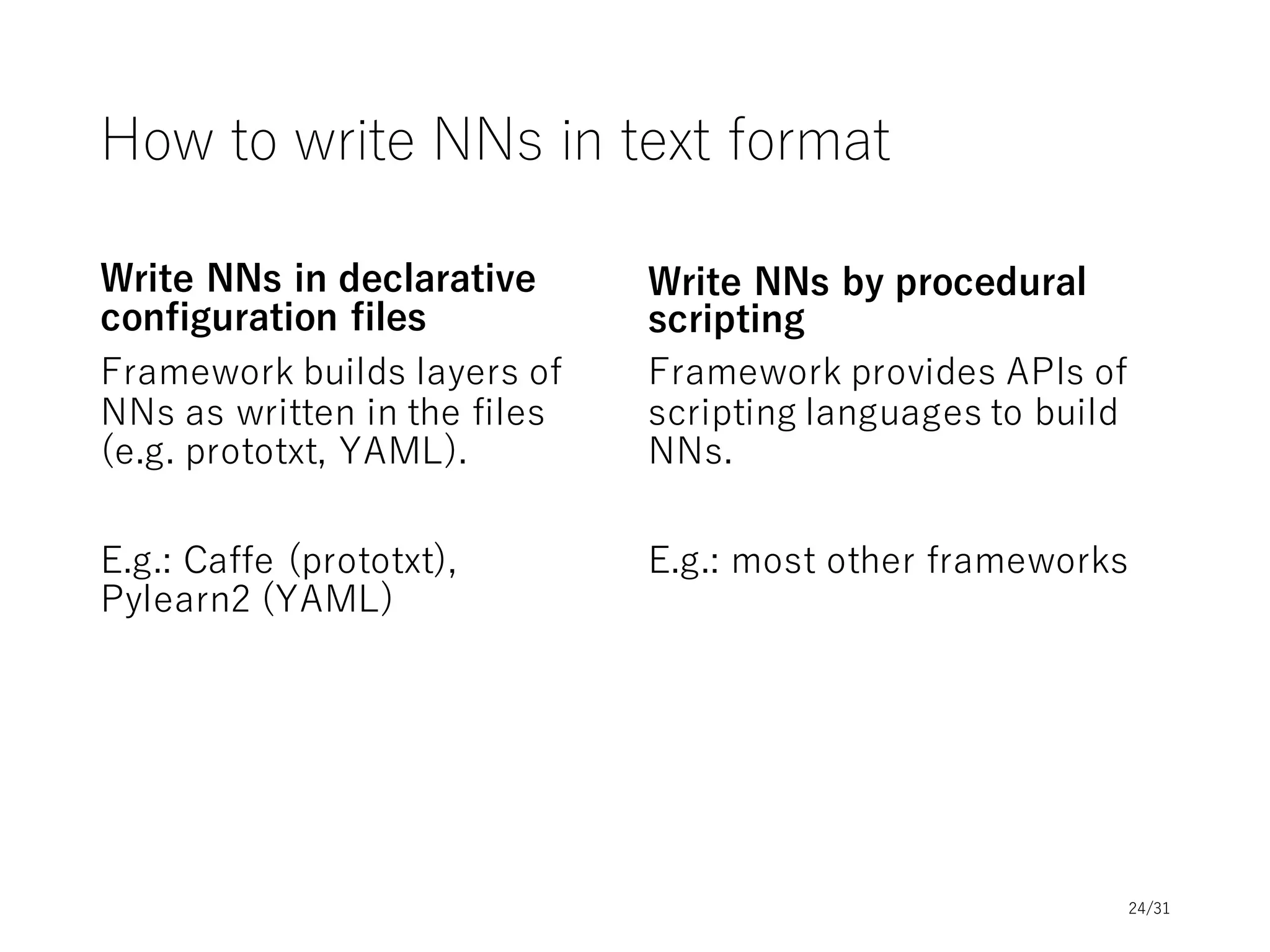

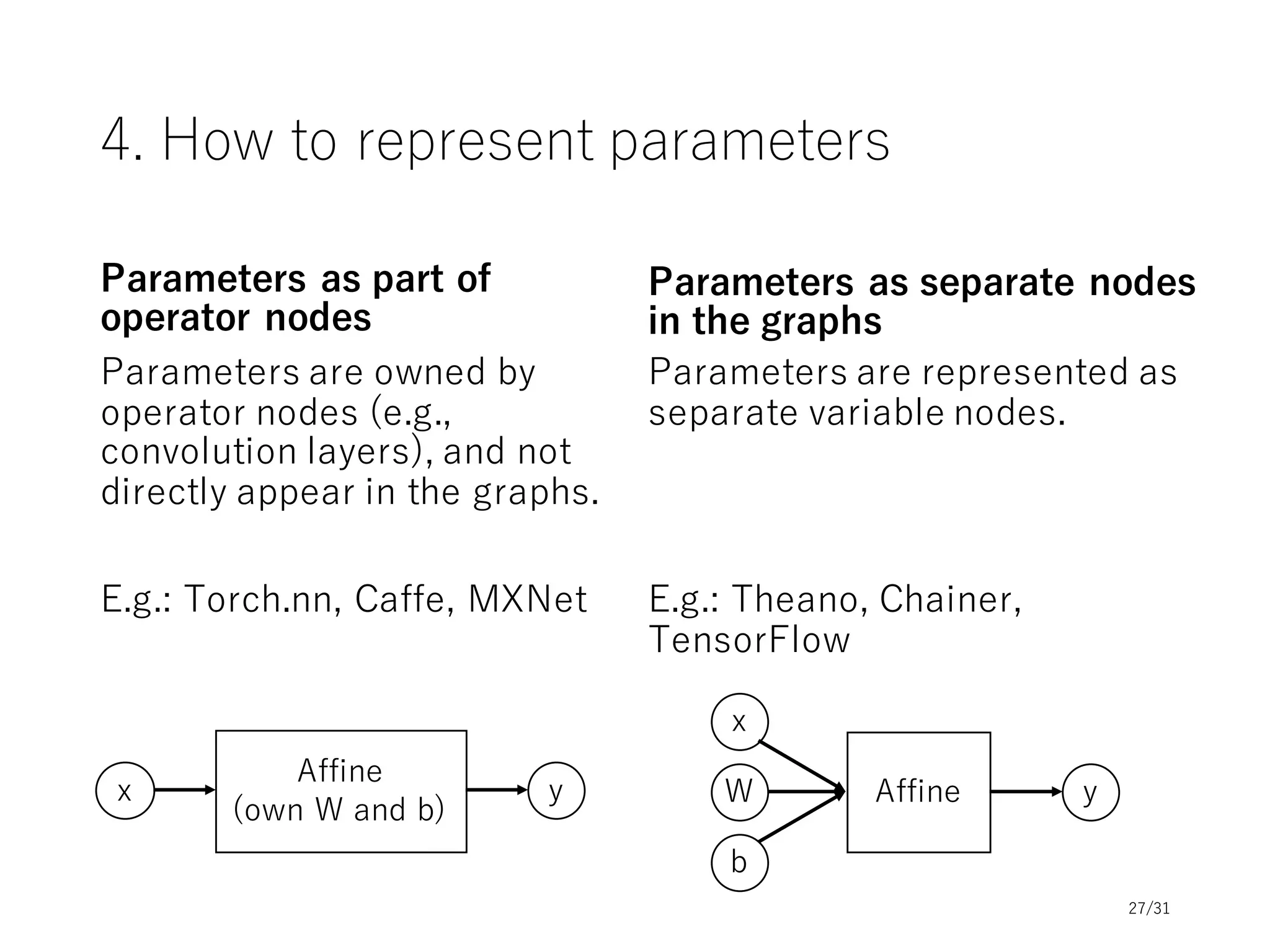

This document gives the overview and agenda for a tutorial on deep learning implementations and frameworks, which aims to introduce the fundamentals of deep learning and compare popular frameworks. The tutorial is split into two sessions. The first session covers the basics of neural networks, the design aspects common to neural network implementations, and the differences between deep learning frameworks. The second session presents coding examples in different frameworks, followed by a conclusion. Slide decks and resources are provided for each of the three first-session topics.