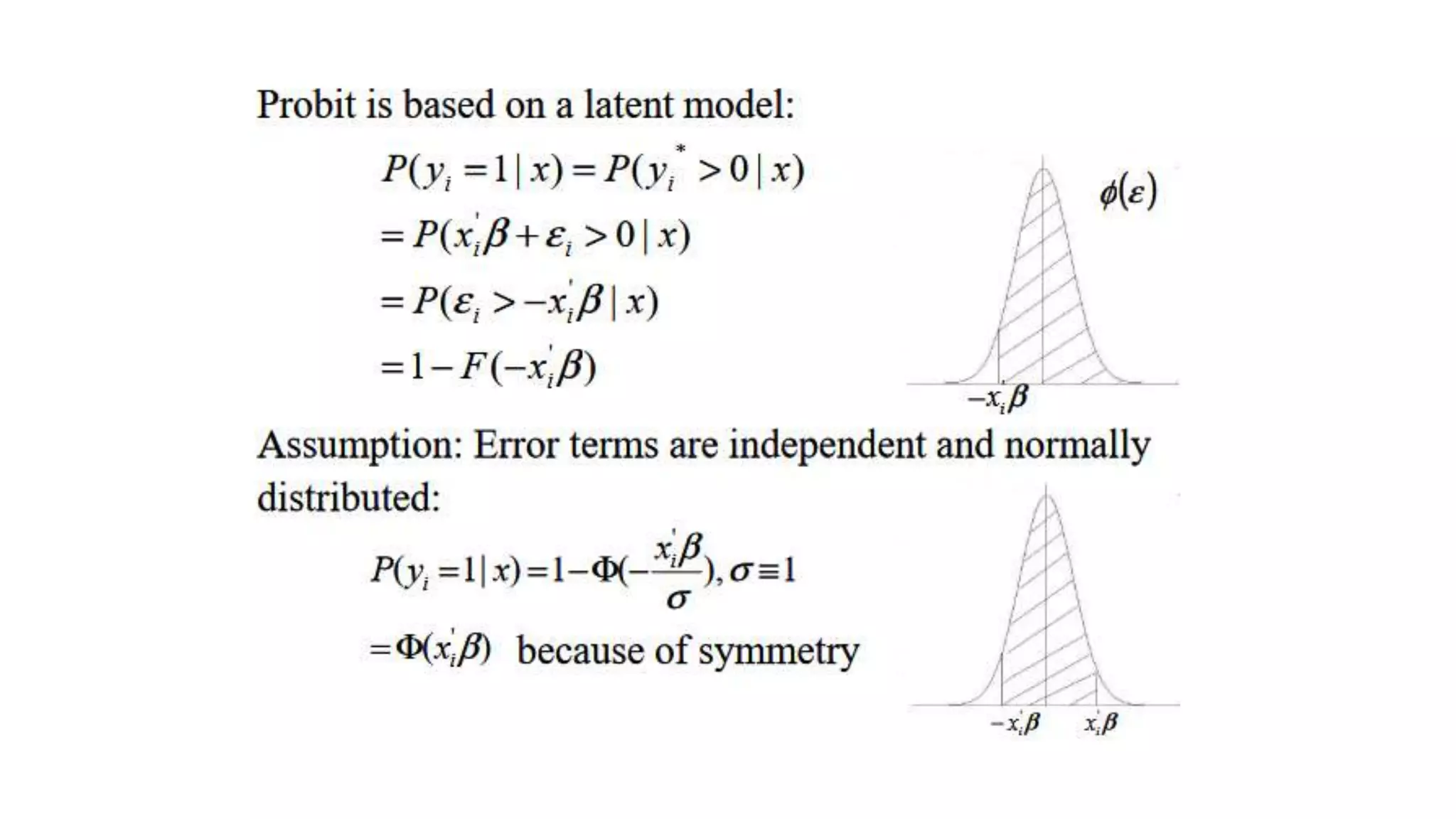

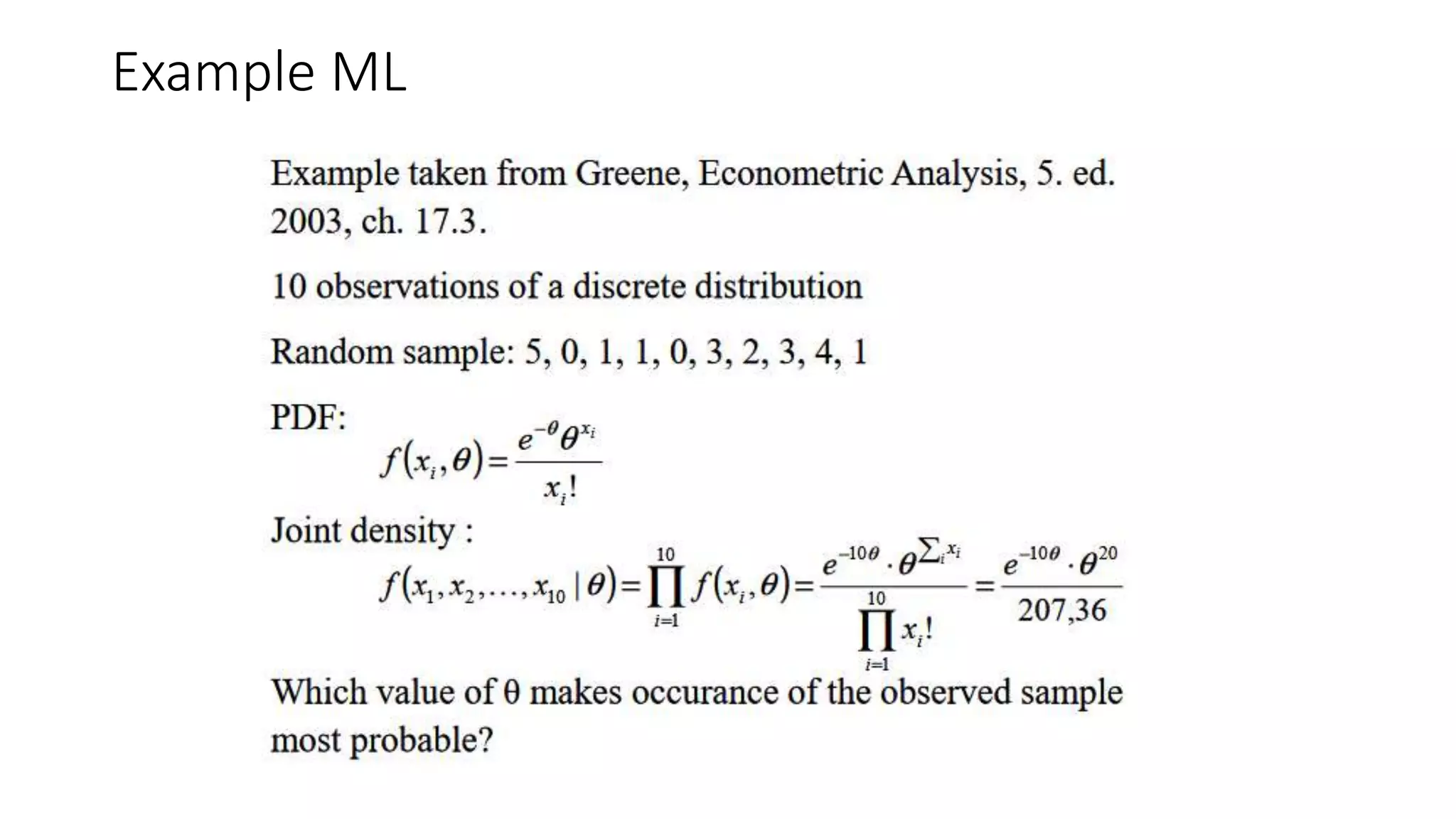

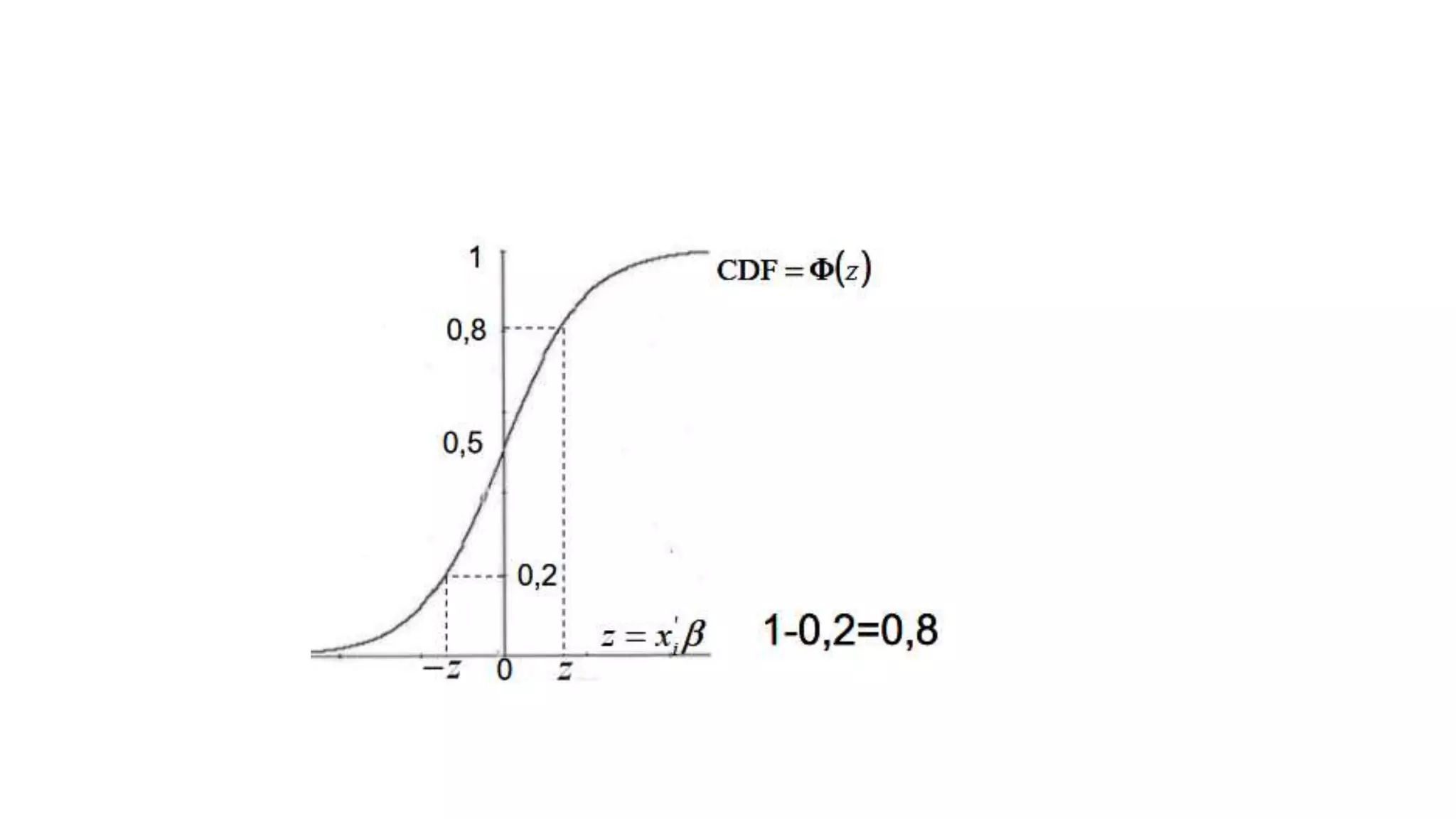

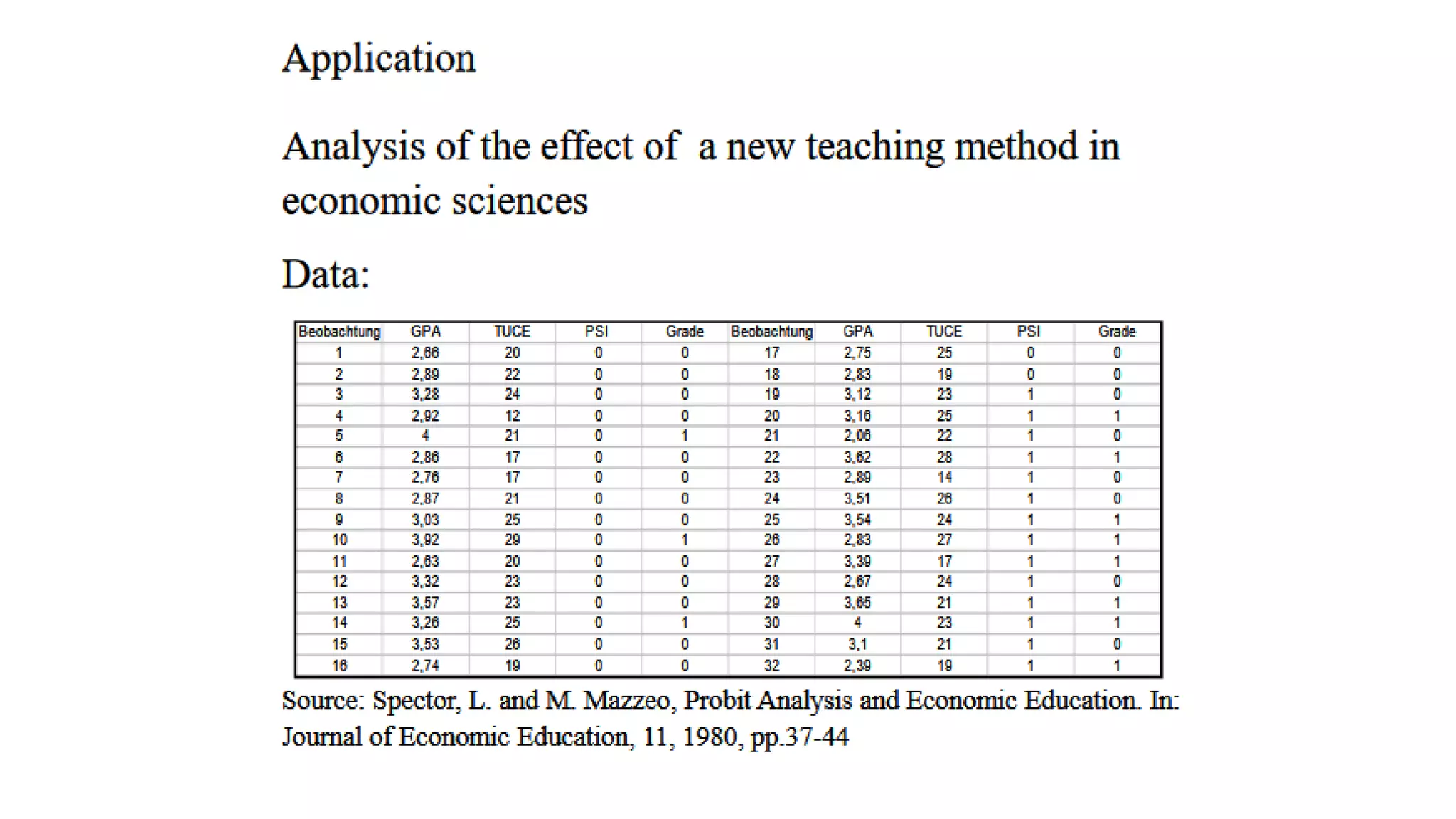

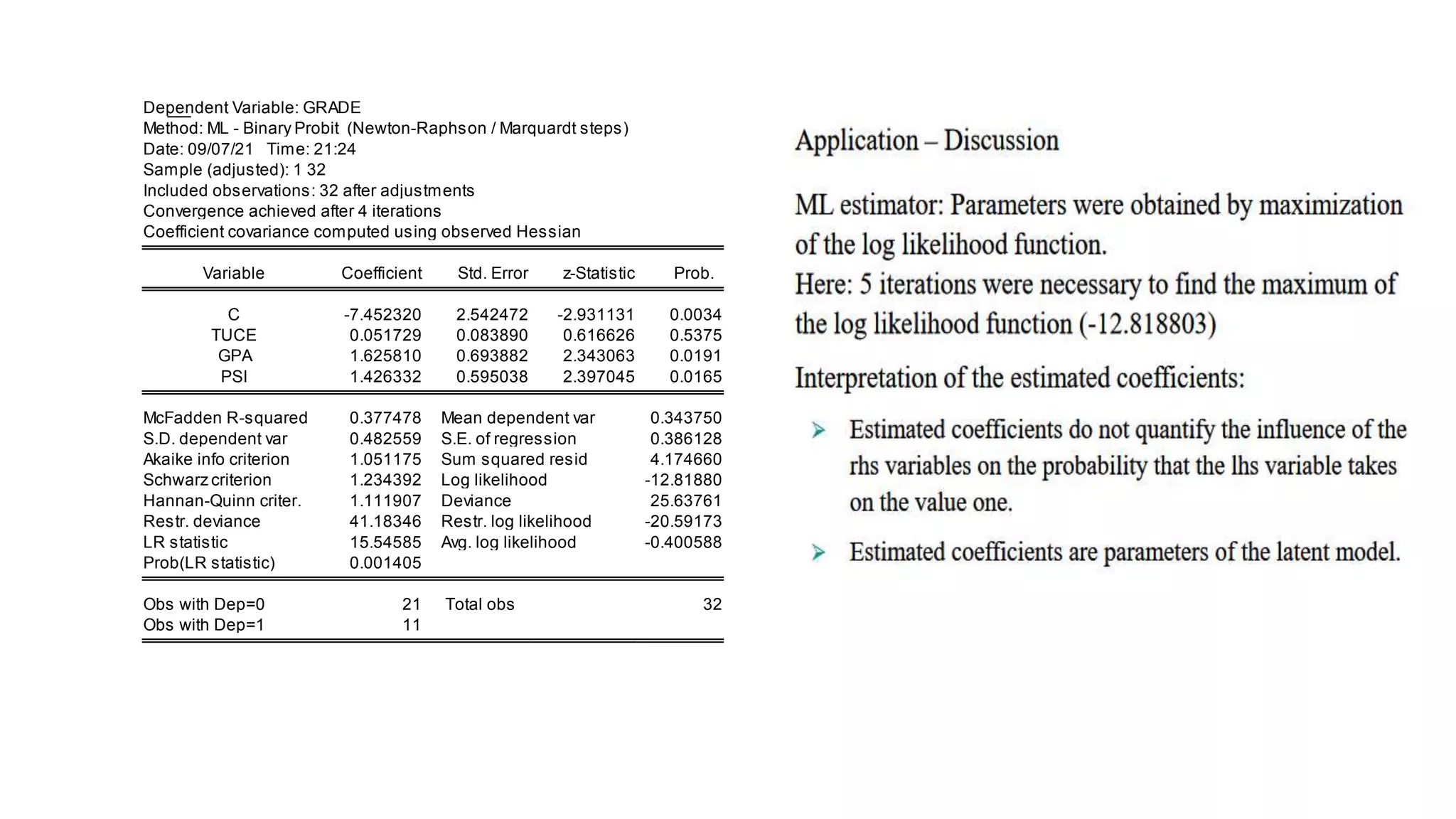

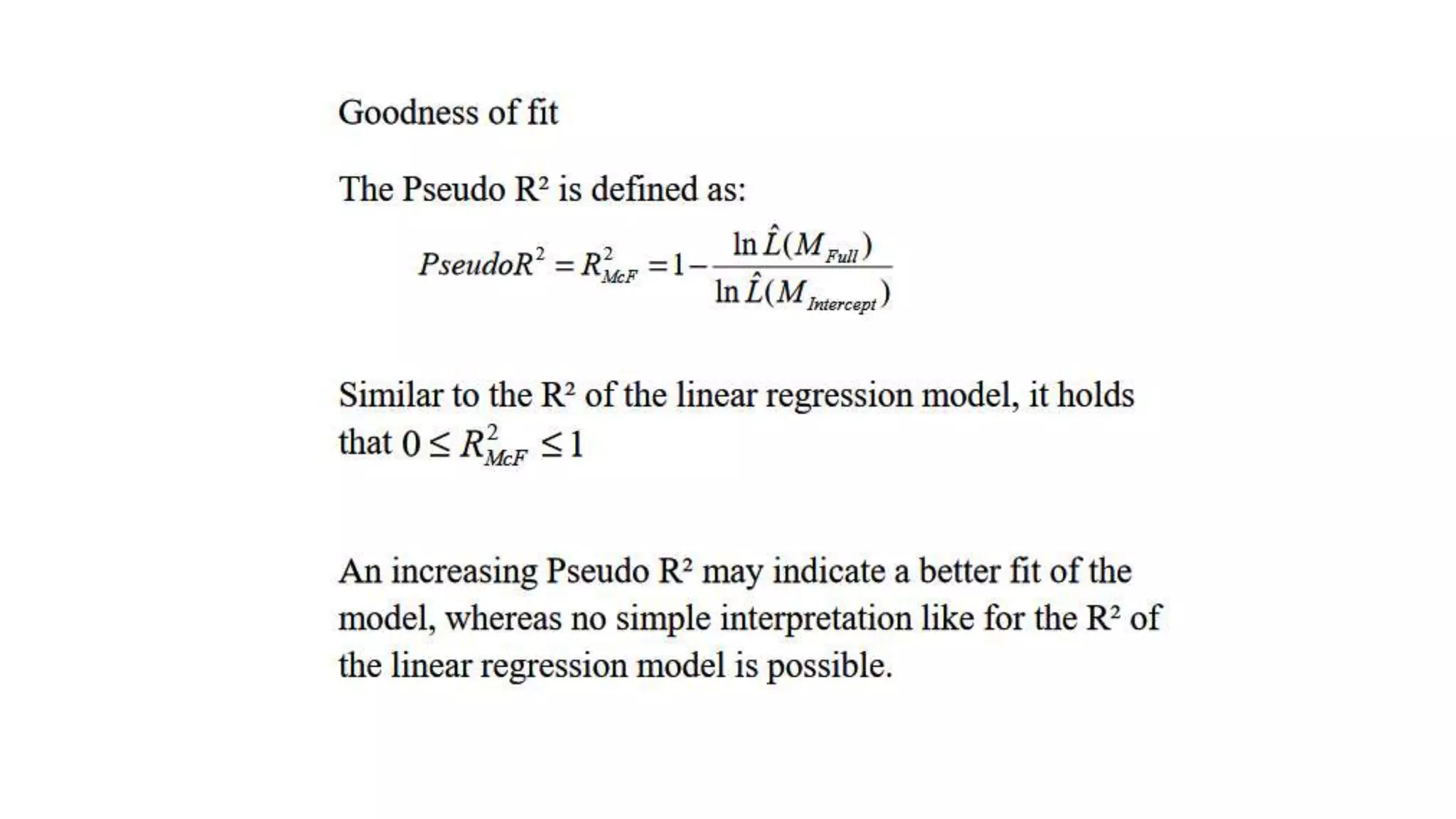

The probit model is appropriate for estimating the effects of independent variables on a binary (binomial) dependent variable, such as the outcome of a dose-response experiment. It links the probability of a response to the predictors through the standard normal cumulative distribution function. The document provides an example that uses a probit model to analyze the relationship between promotion size (the dose) and customer purchase probability (the response) across different retail channels. The document also gives an example application in which probit analysis estimates the effects of GPA, entrance exam score, and teaching method on student final grade.
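
As a rough illustration of the dose-response setup described above, the sketch below computes the probit response probability P(y = 1 | x) = Φ(intercept + β·x), where Φ is the standard normal CDF. The function names and the coefficient values are assumptions made up for this illustration; they are not estimates taken from the document.

```python
import math


def normal_cdf(z: float) -> float:
    """Standard normal CDF, Phi(z), computed from the error function."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def probit_probability(x, beta, intercept=0.0):
    """Return P(y = 1 | x) = Phi(intercept + sum_j beta_j * x_j)."""
    index = intercept + sum(b * xj for b, xj in zip(beta, x))
    return normal_cdf(index)


# Hypothetical coefficients, chosen only for illustration
# (NOT estimates from the document's promotion example).
INTERCEPT = -1.0
BETA_DOSE = [0.05]  # assumed effect of promotion size on the probit index

for dose in (0, 10, 20, 40):
    p = probit_probability([dose], BETA_DOSE, INTERCEPT)
    print(f"promotion size {dose:>2}: purchase probability {p:.3f}")
```

In practice the coefficients would be estimated from data by maximum likelihood; this sketch only shows how a fitted probit index maps to a probability, and that a positive coefficient makes the probability rise with the dose.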