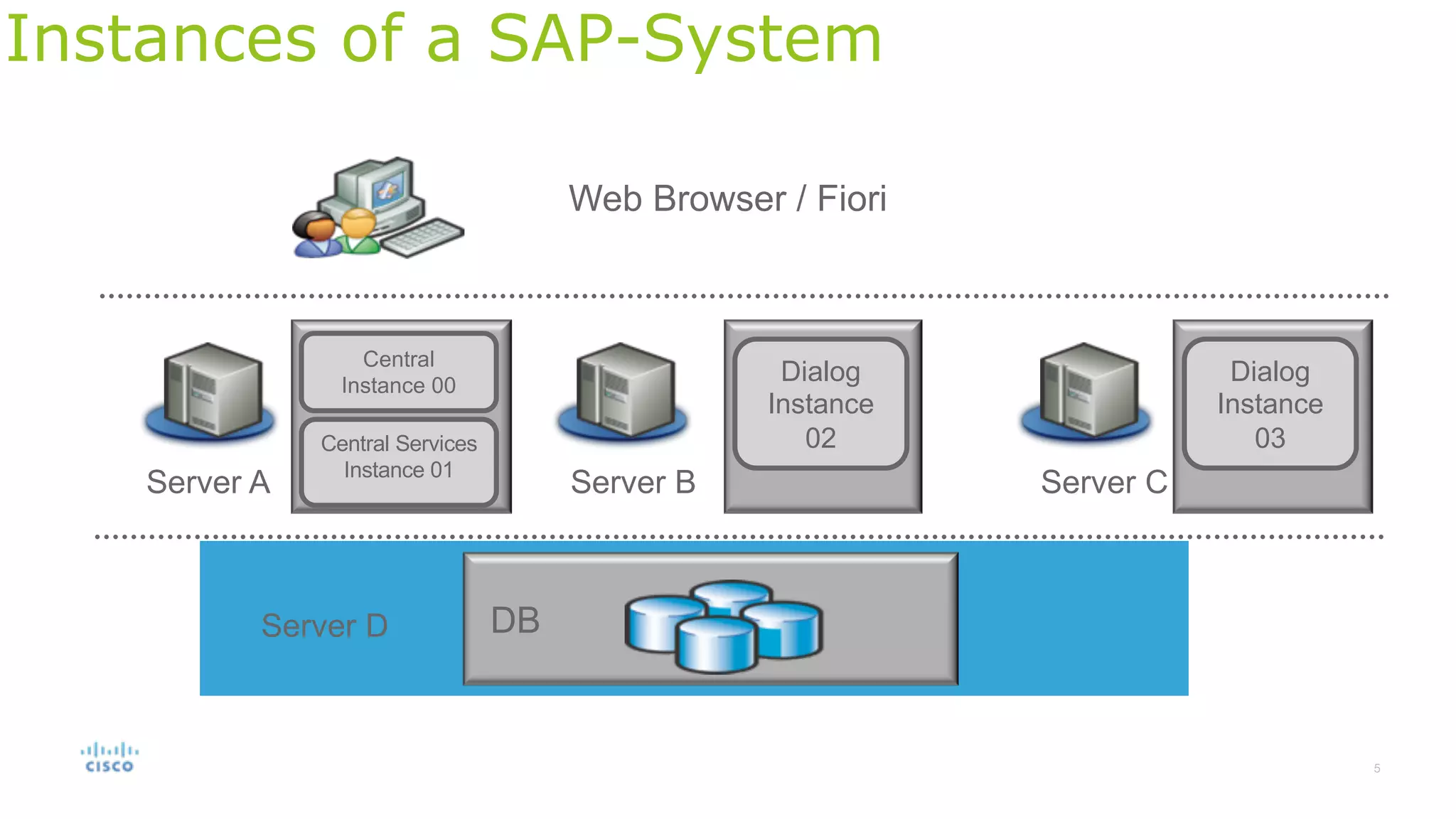

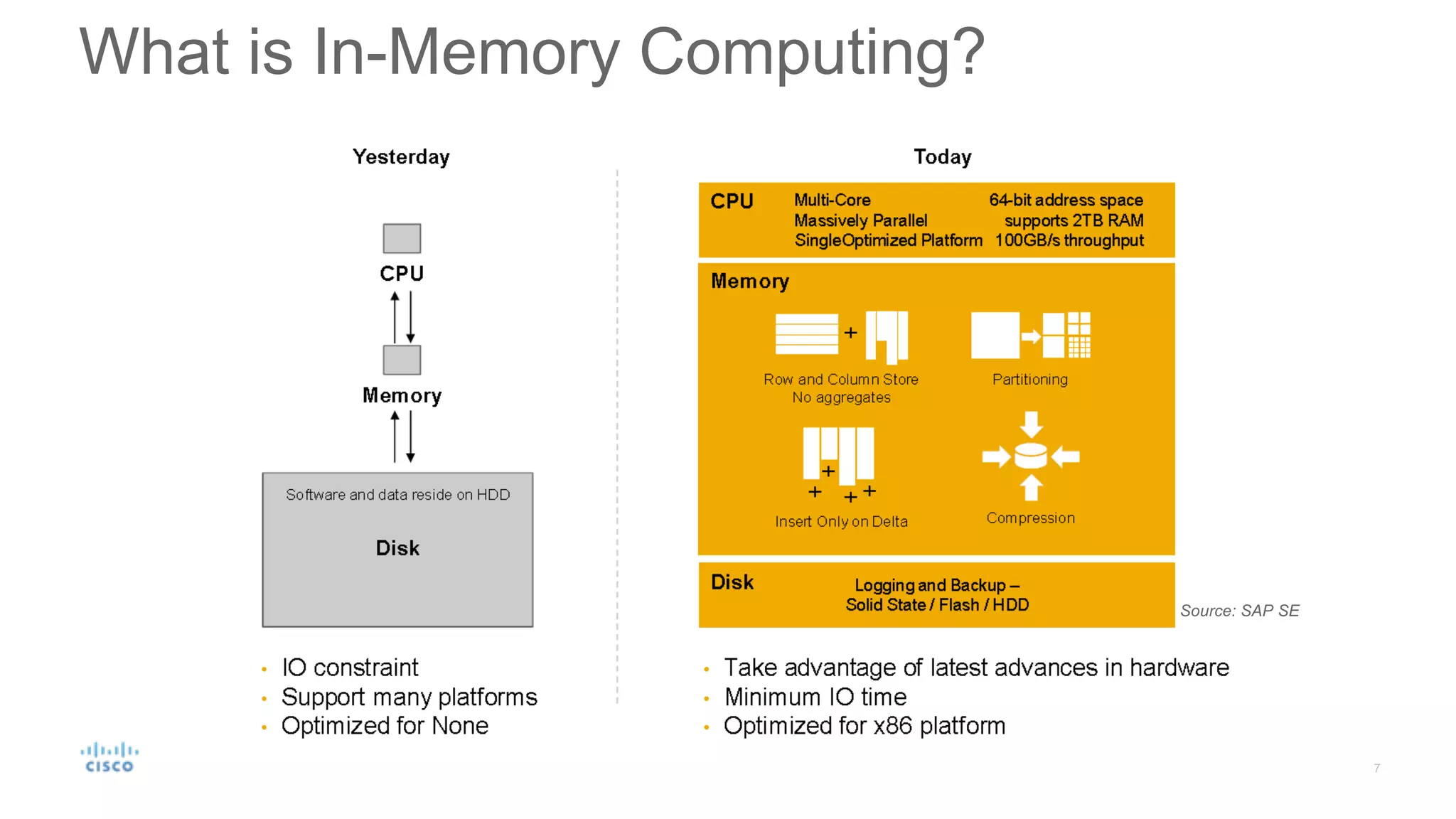

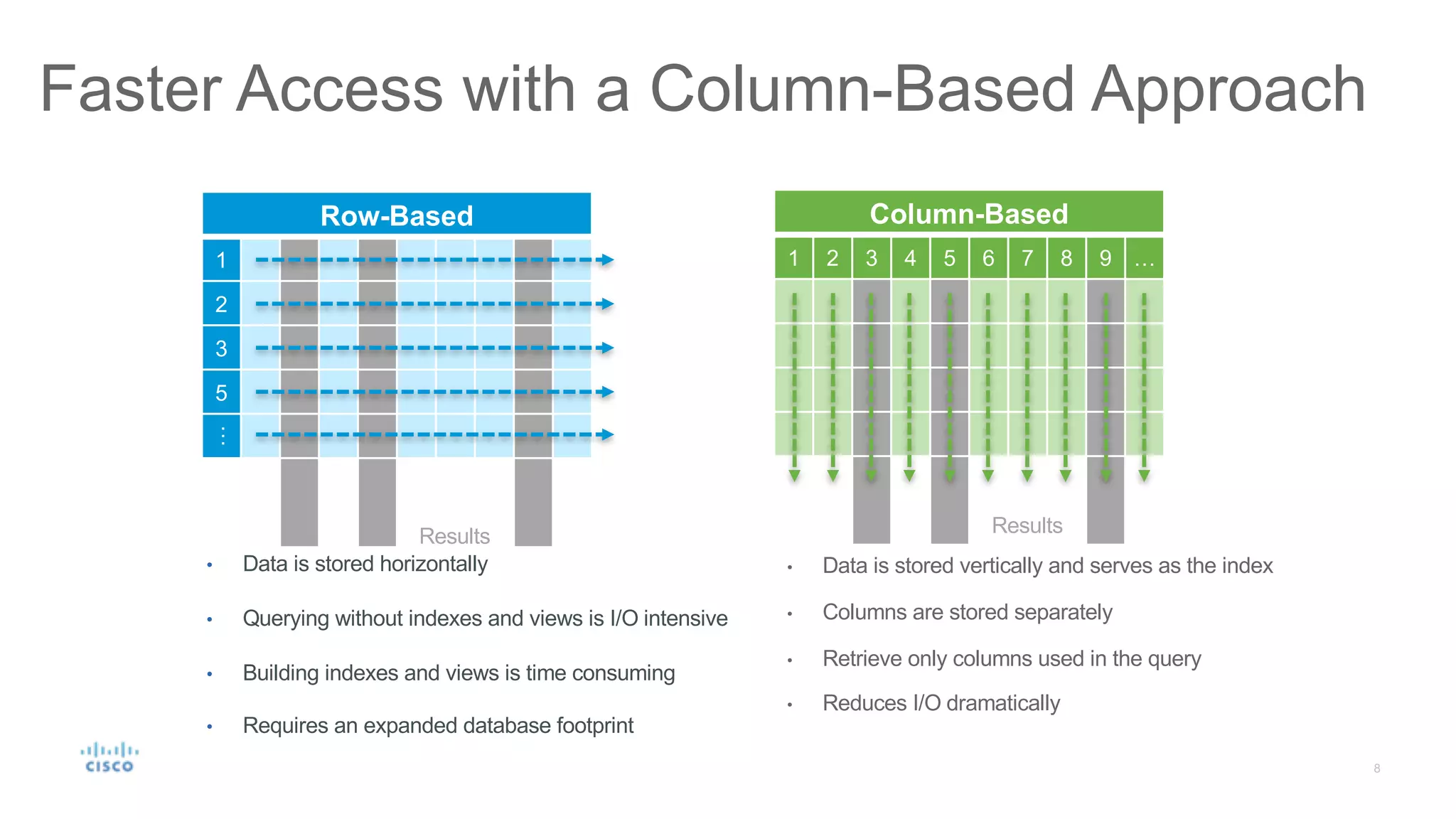

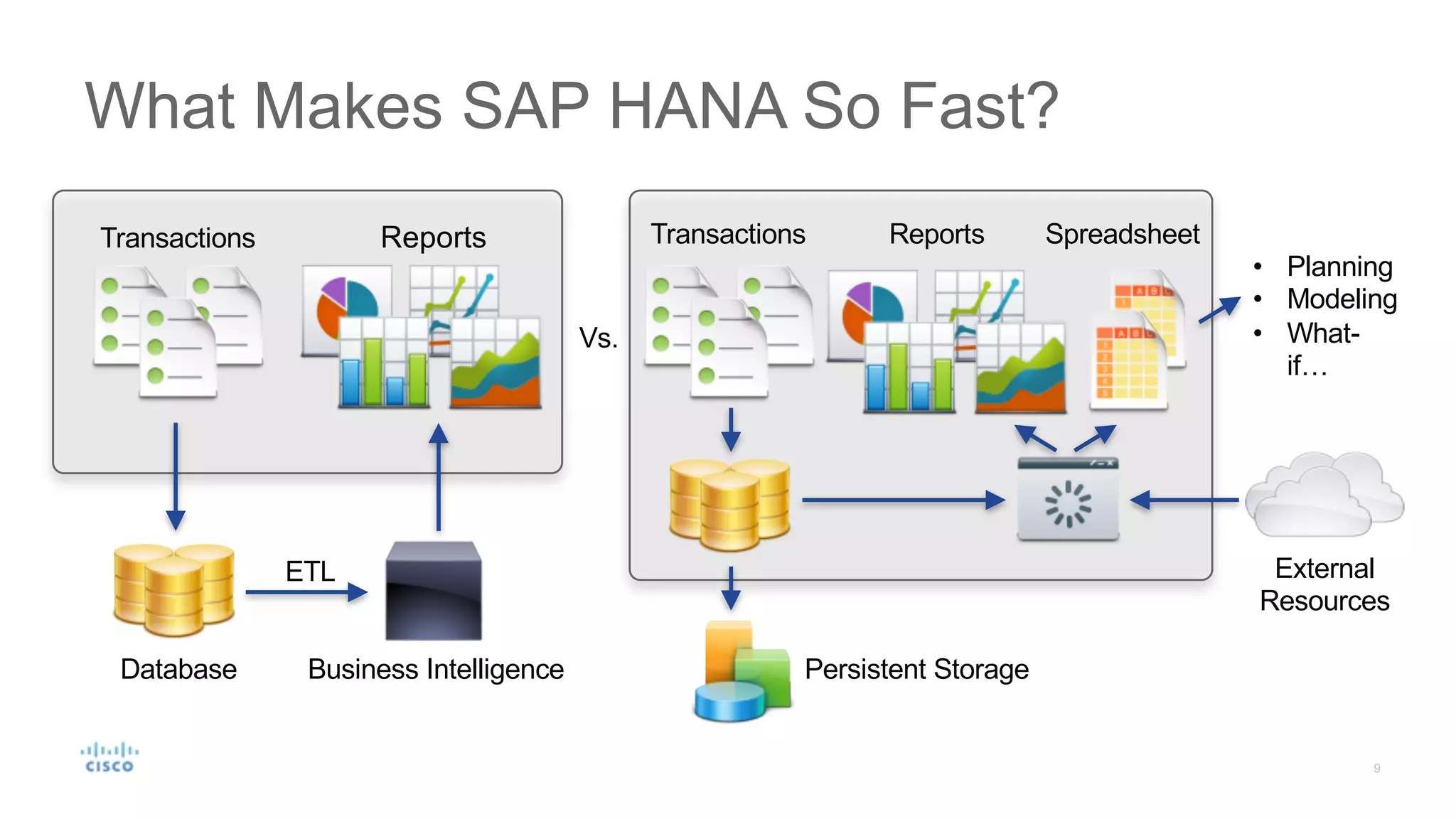

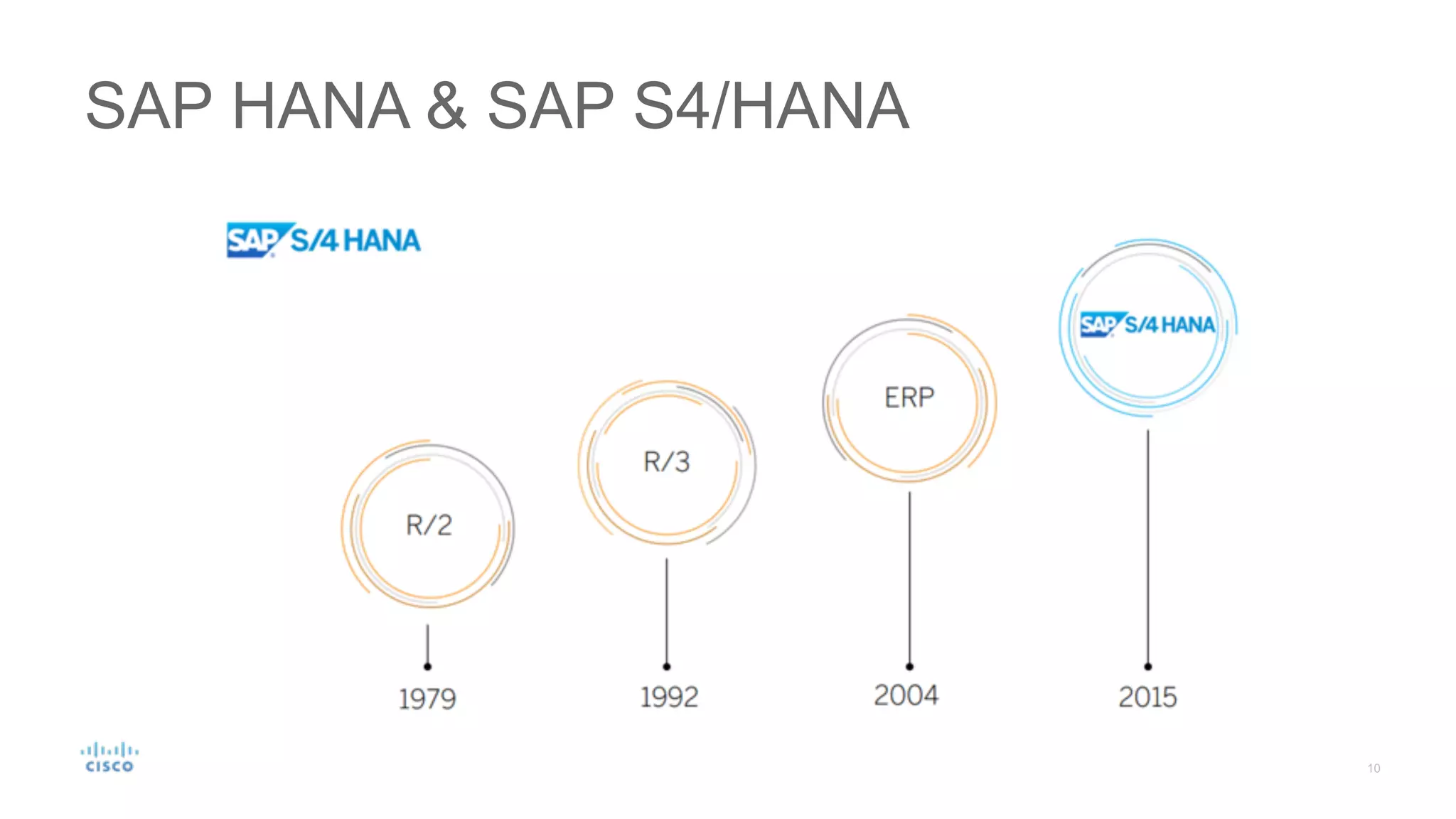

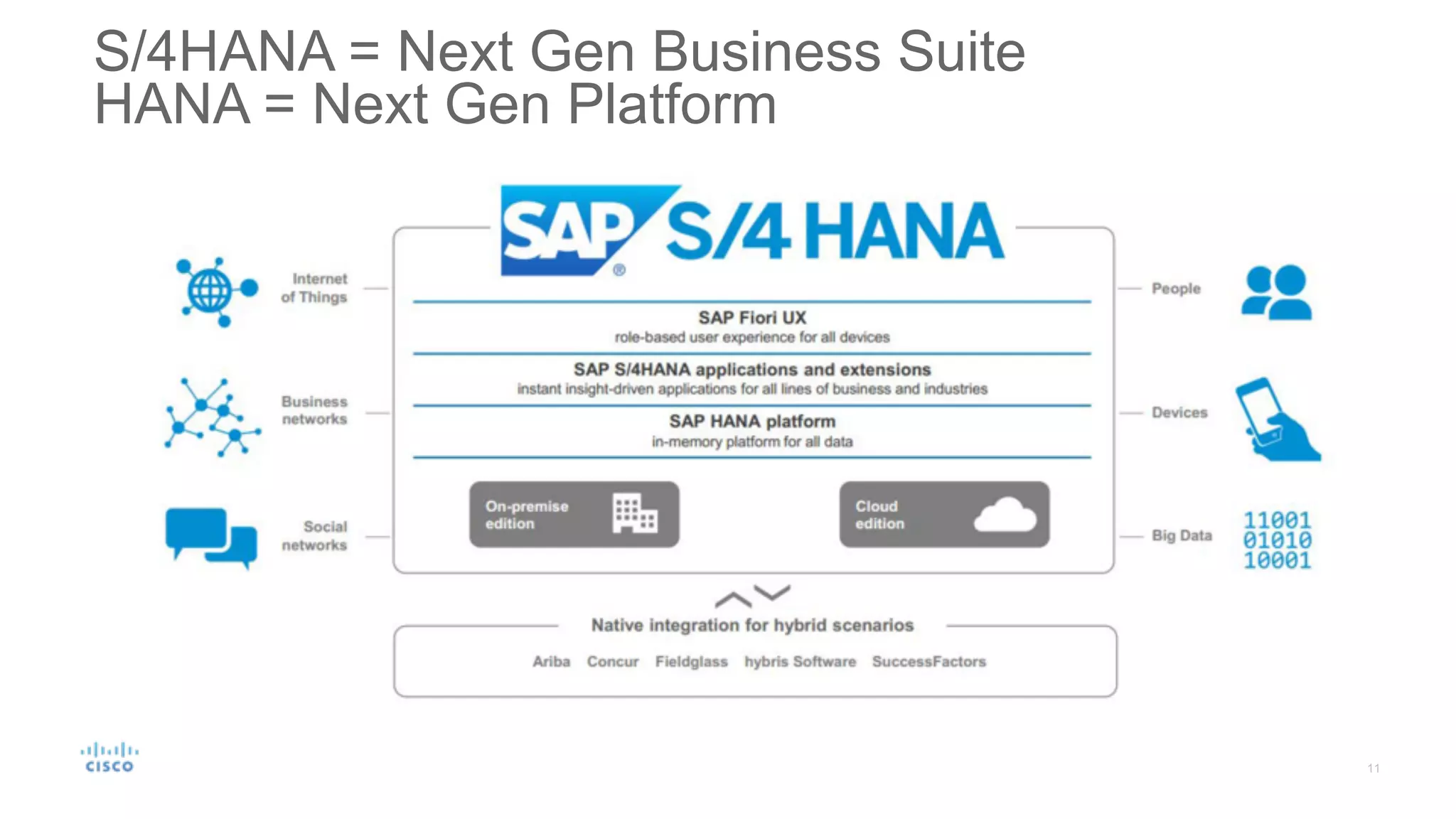

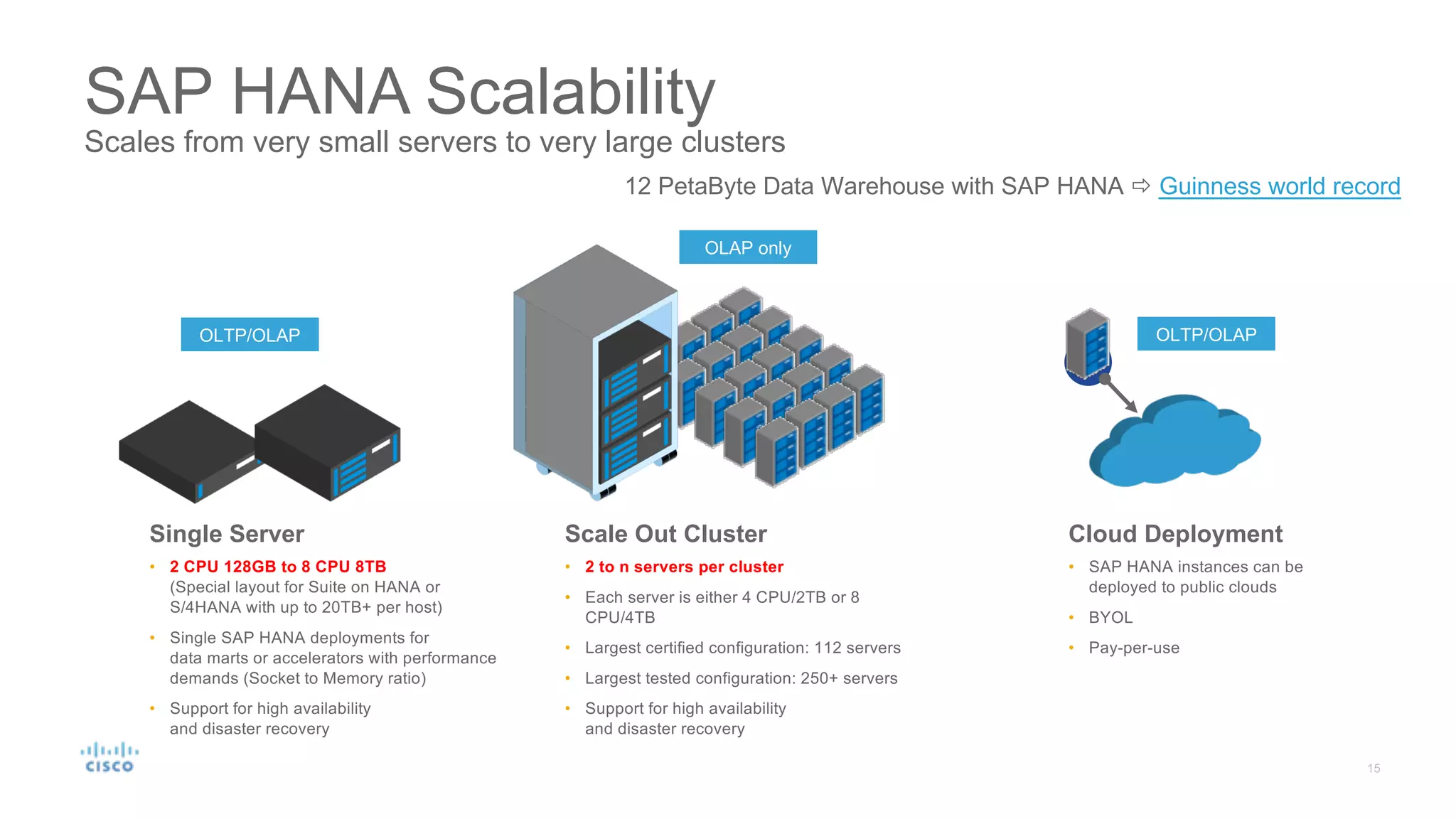

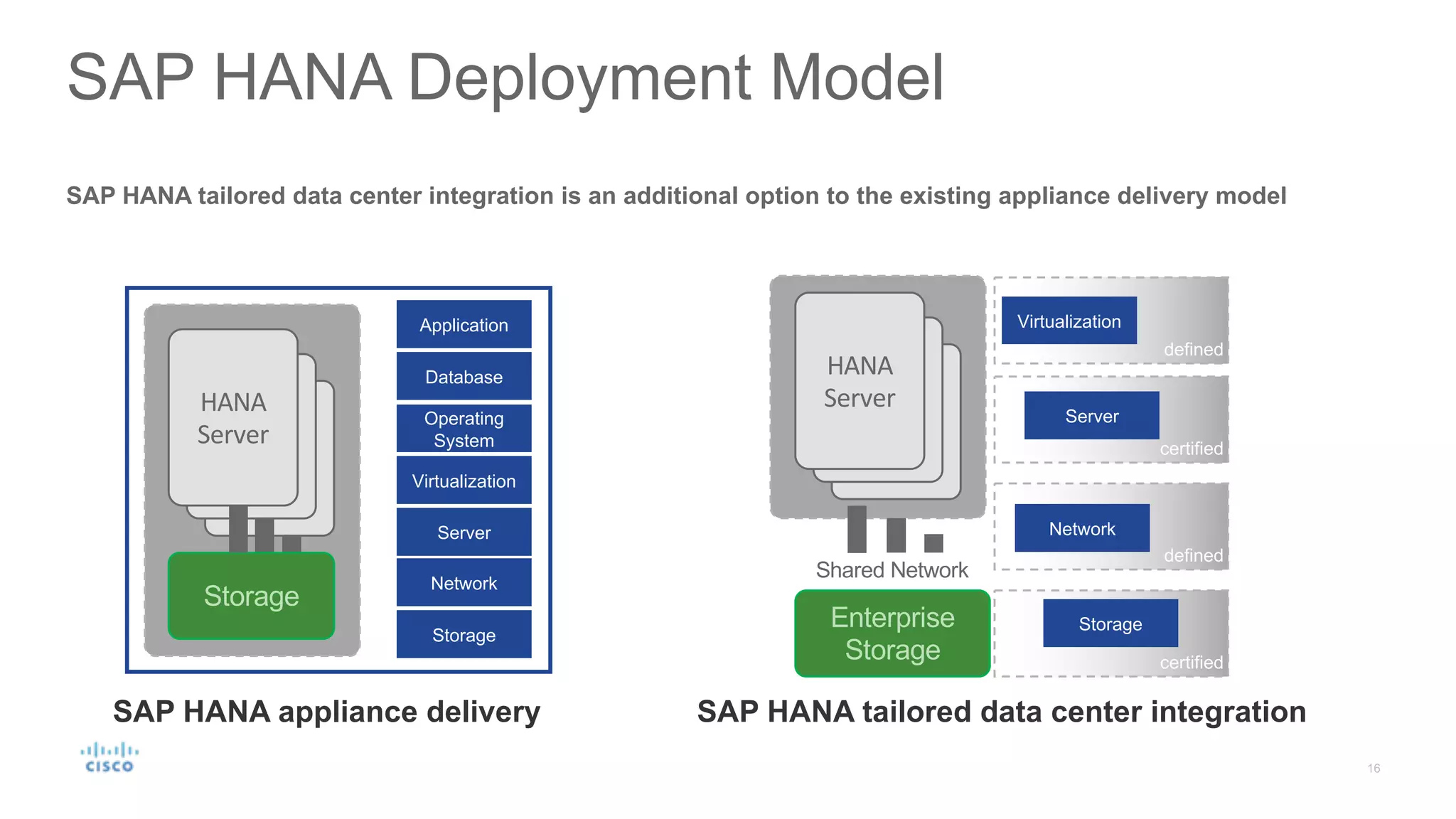

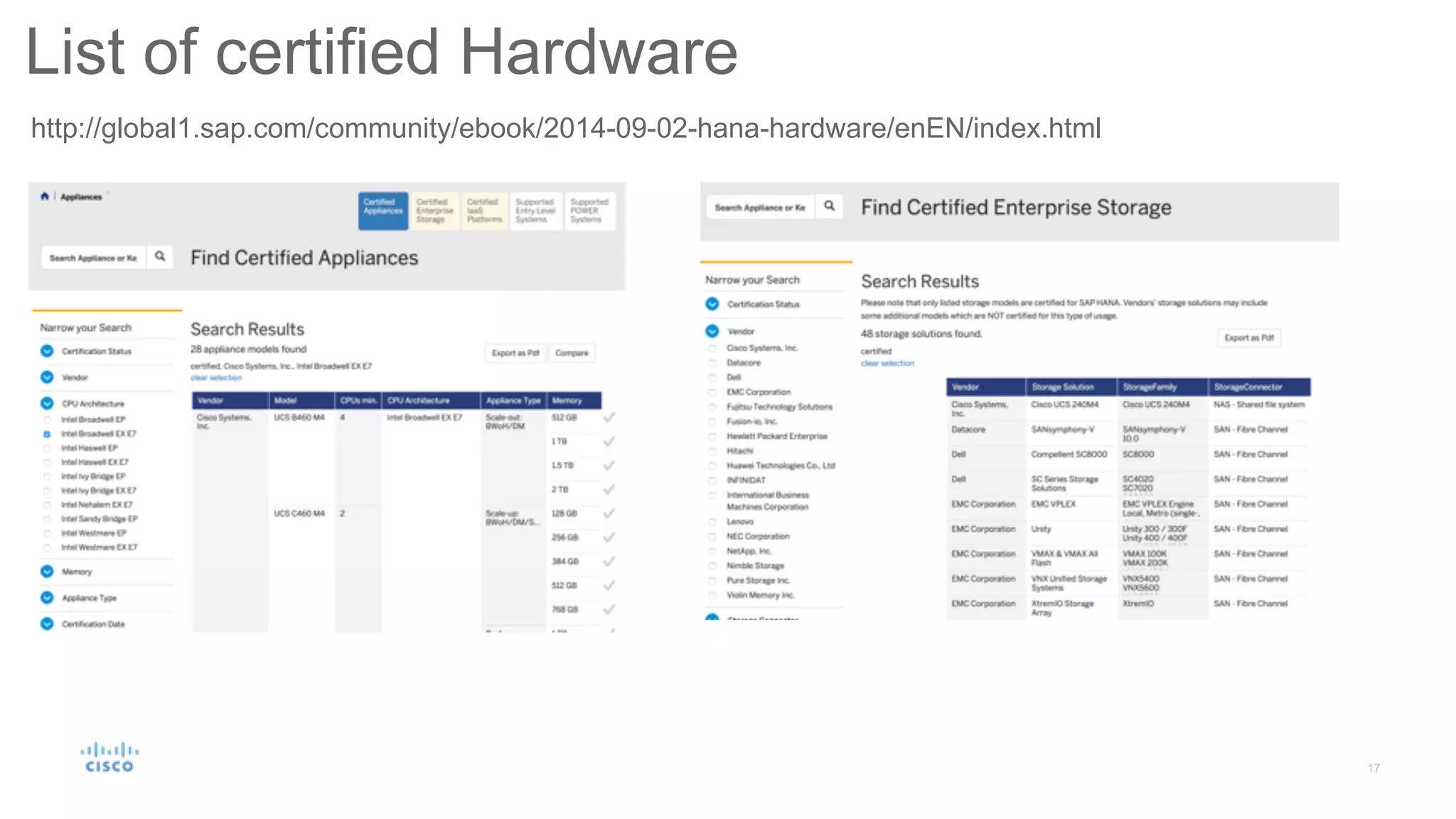

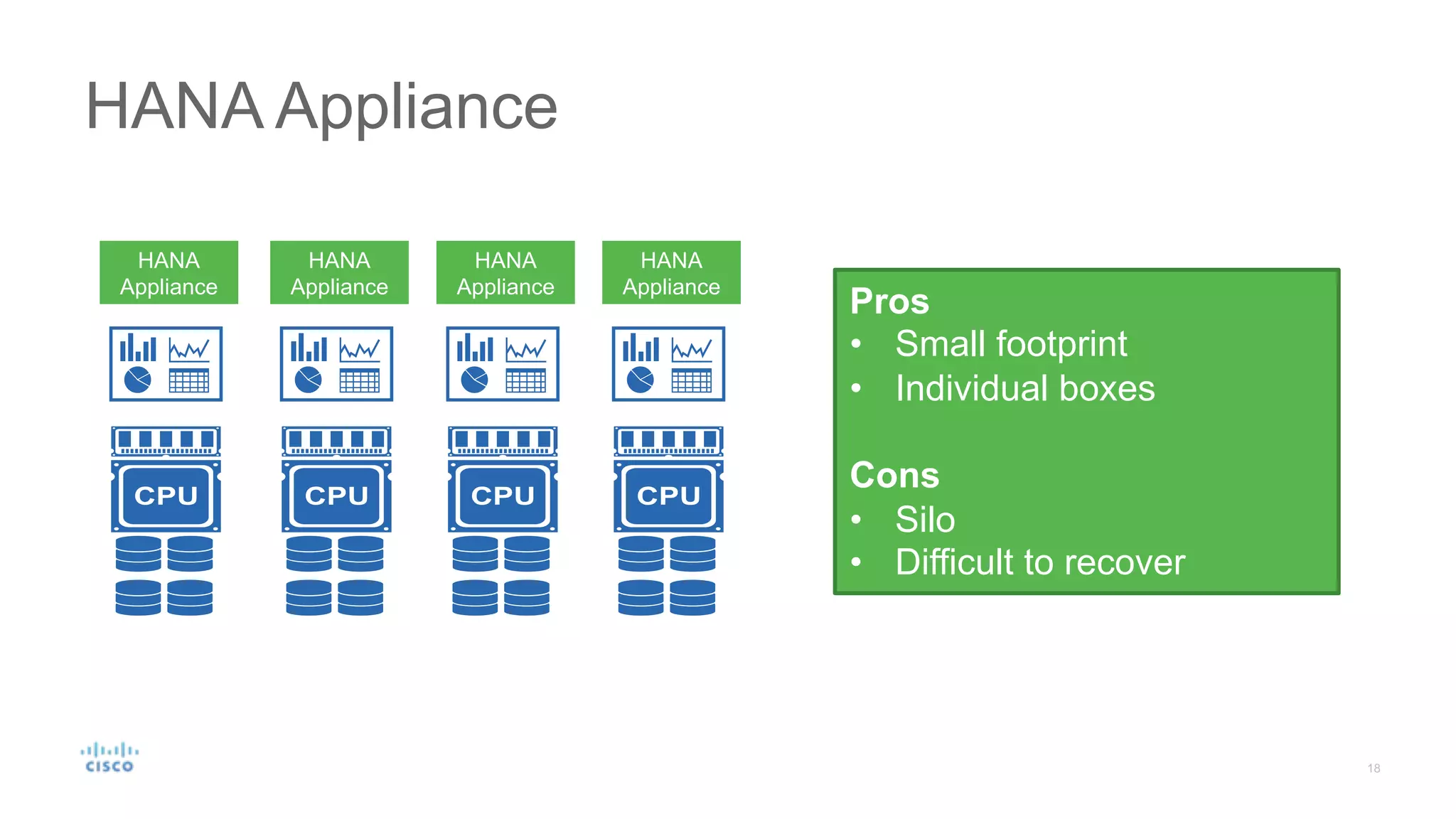

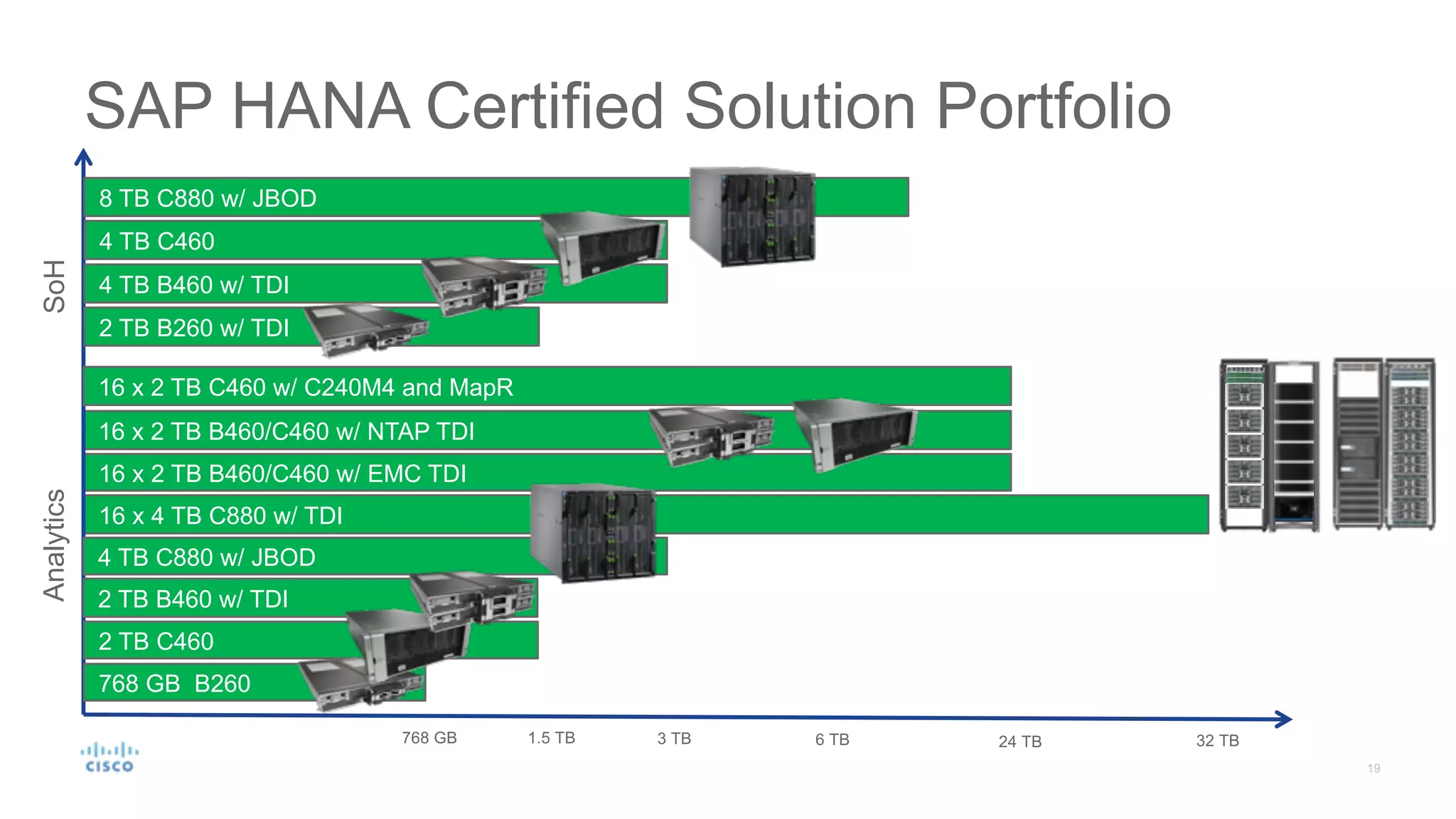

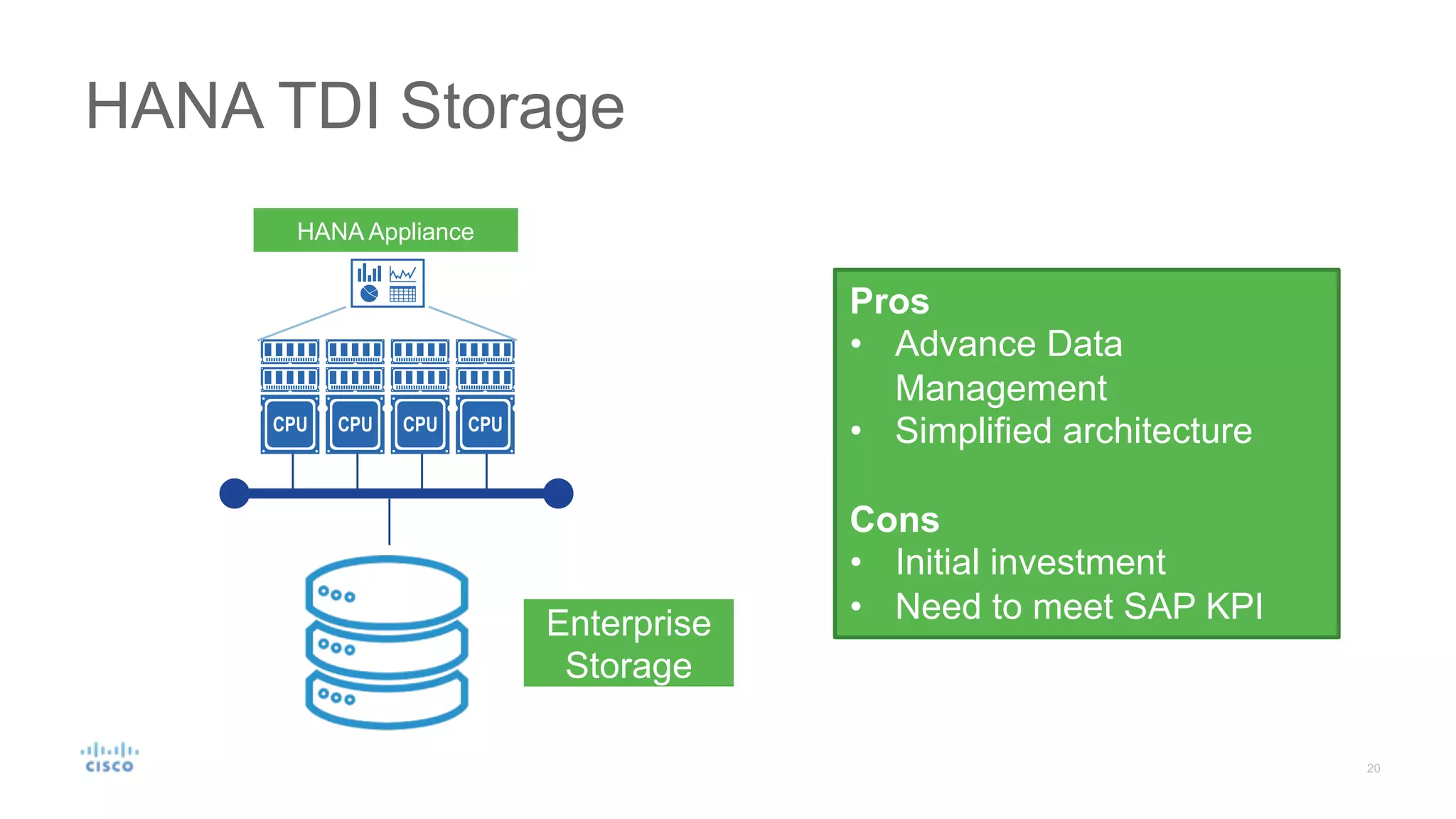

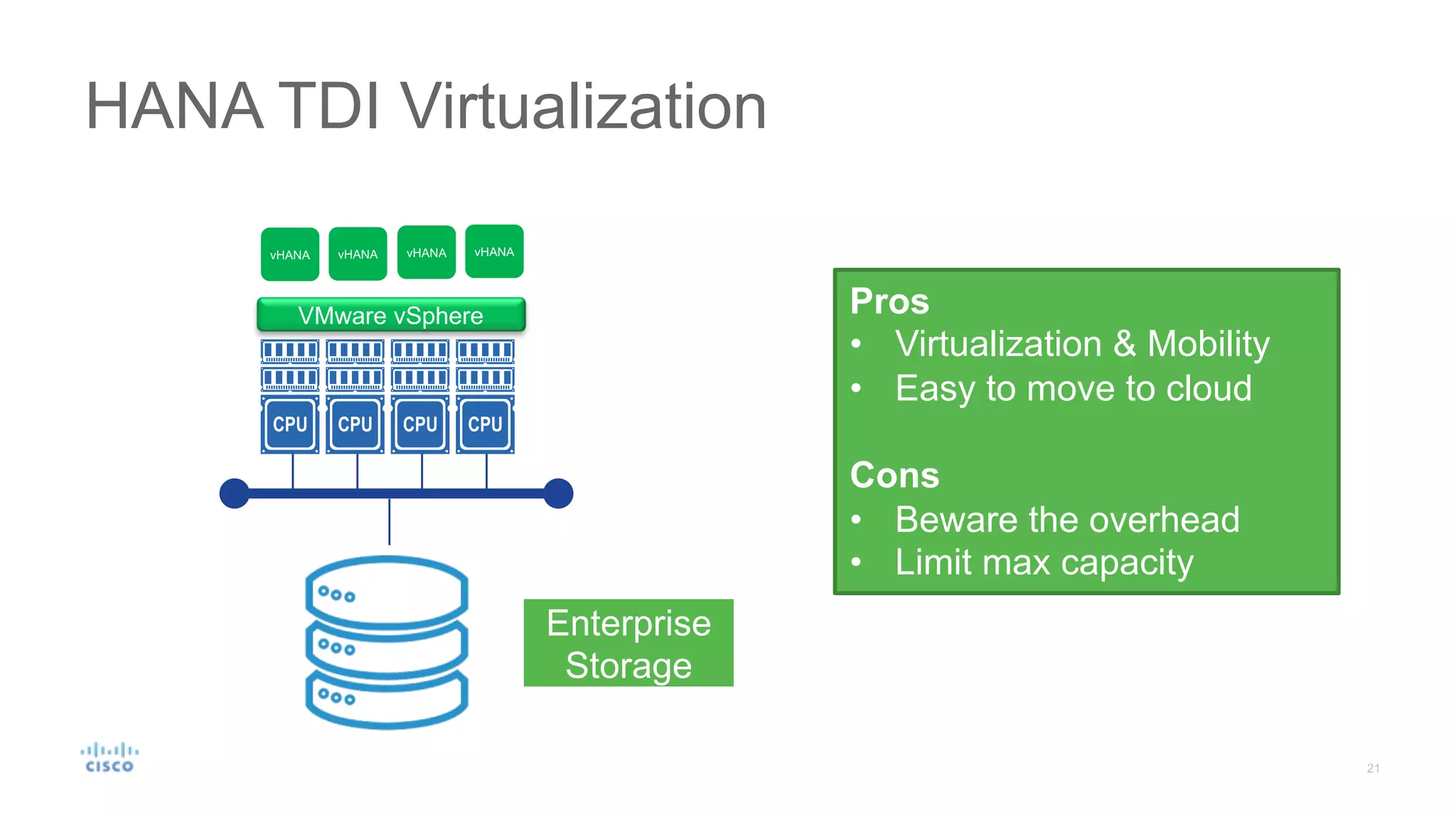

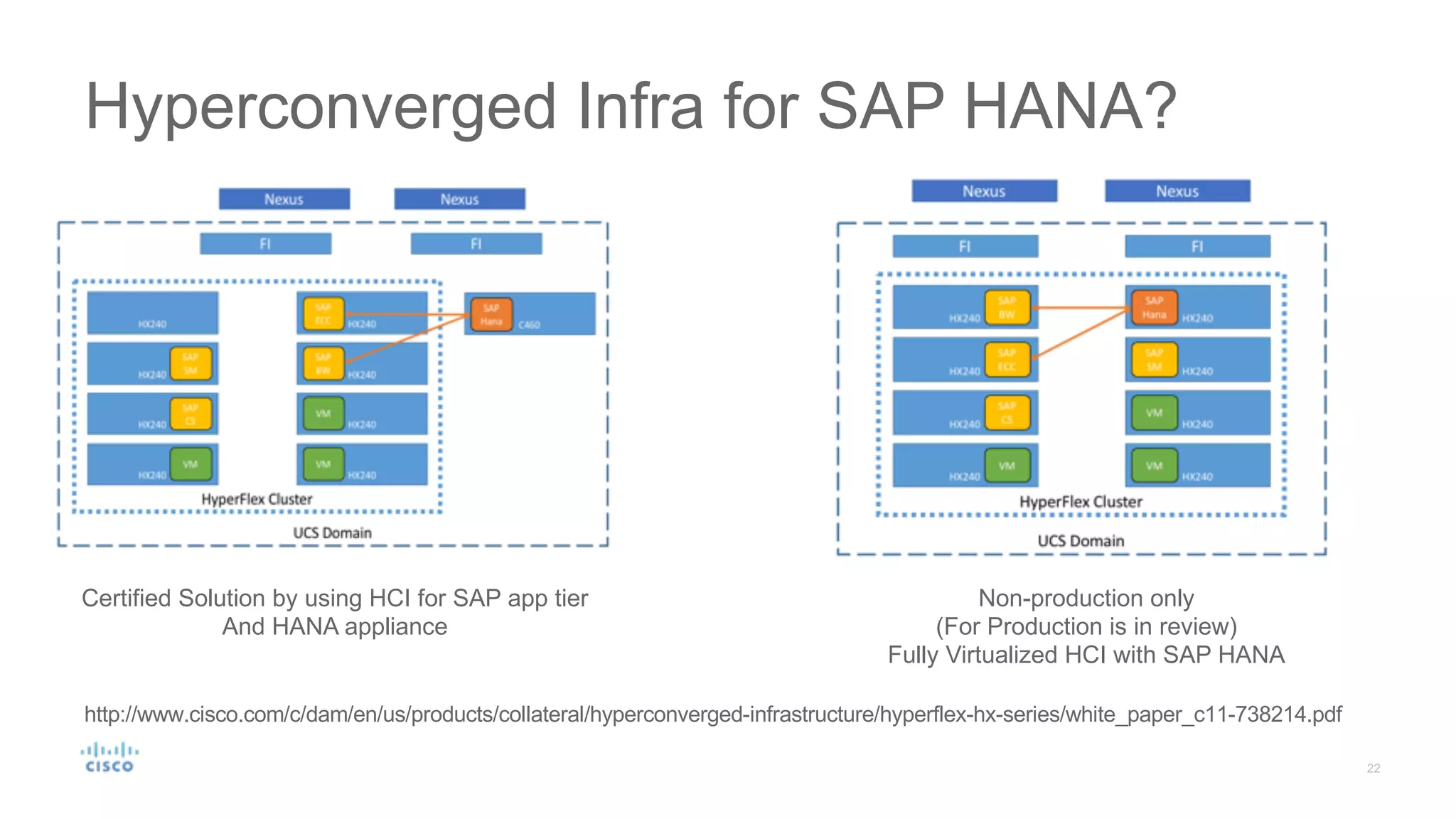

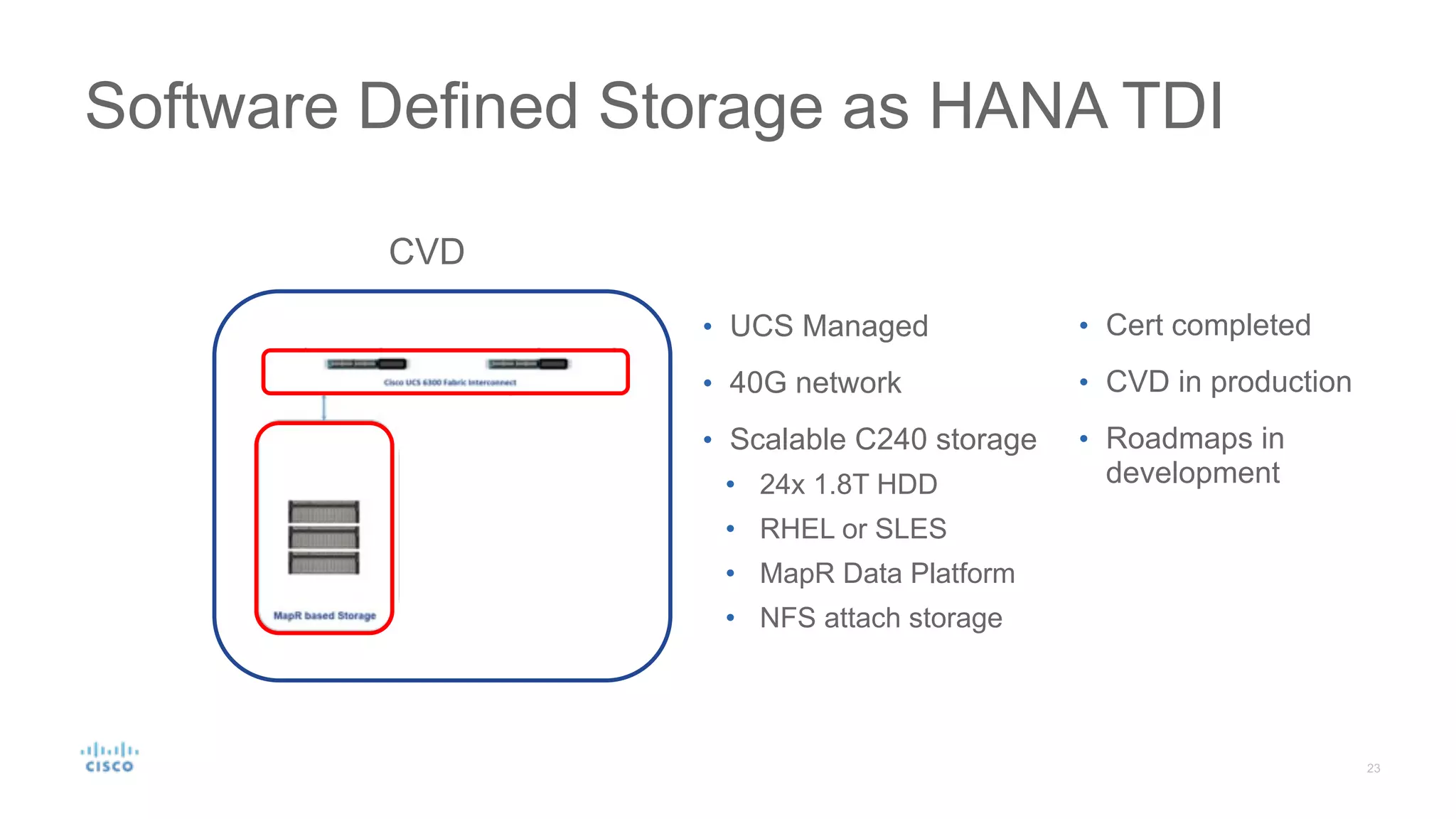

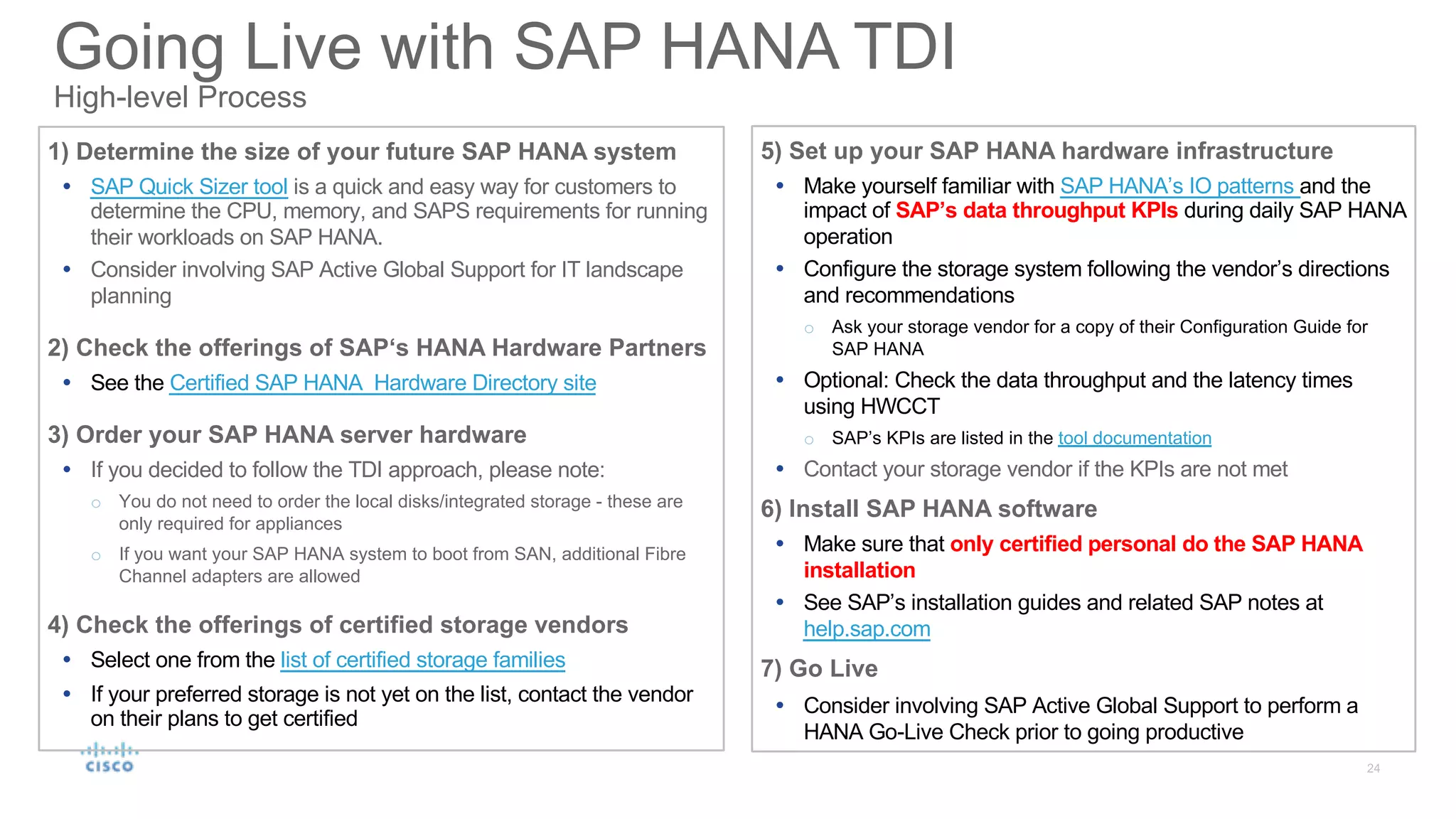

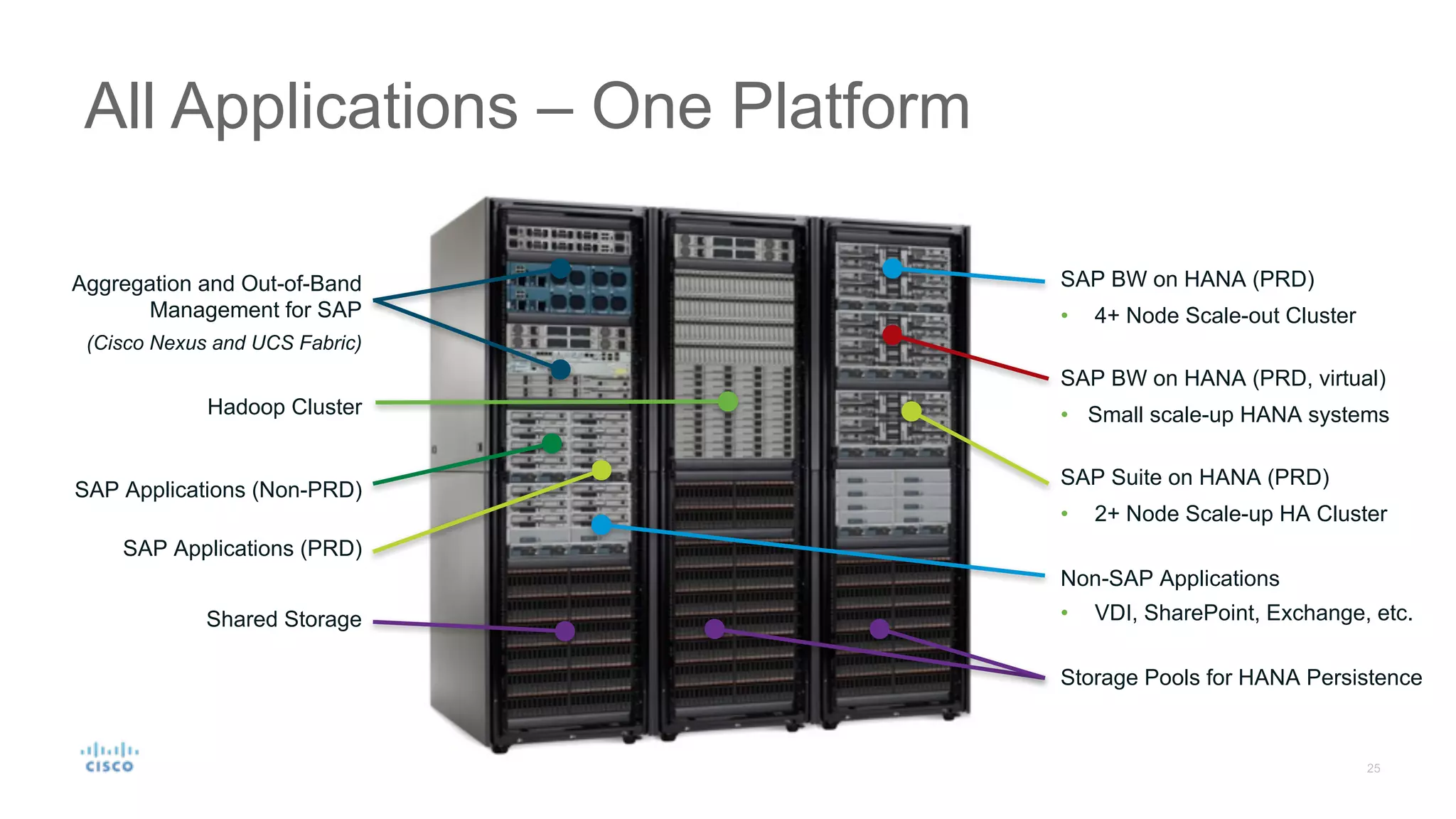

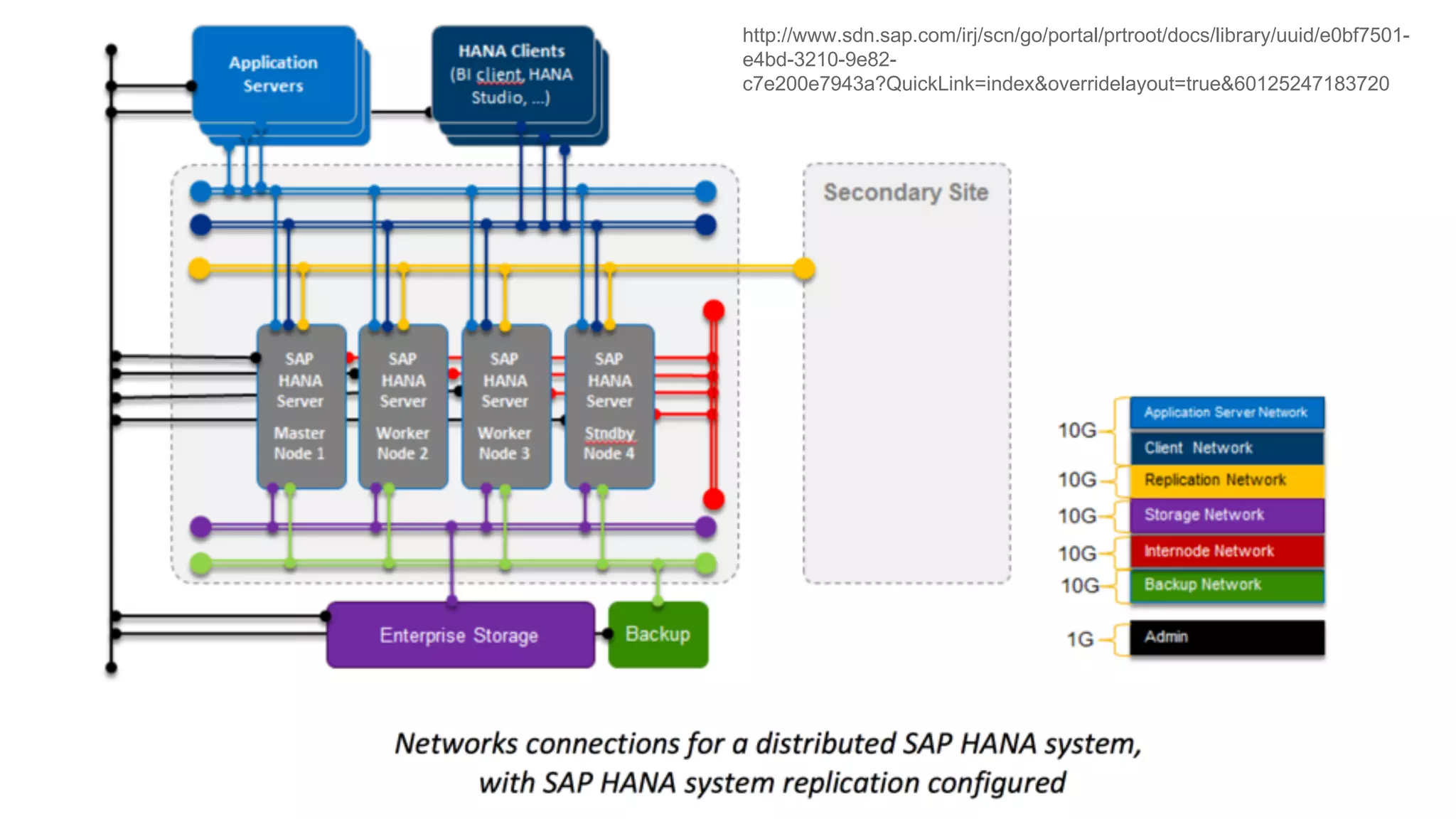

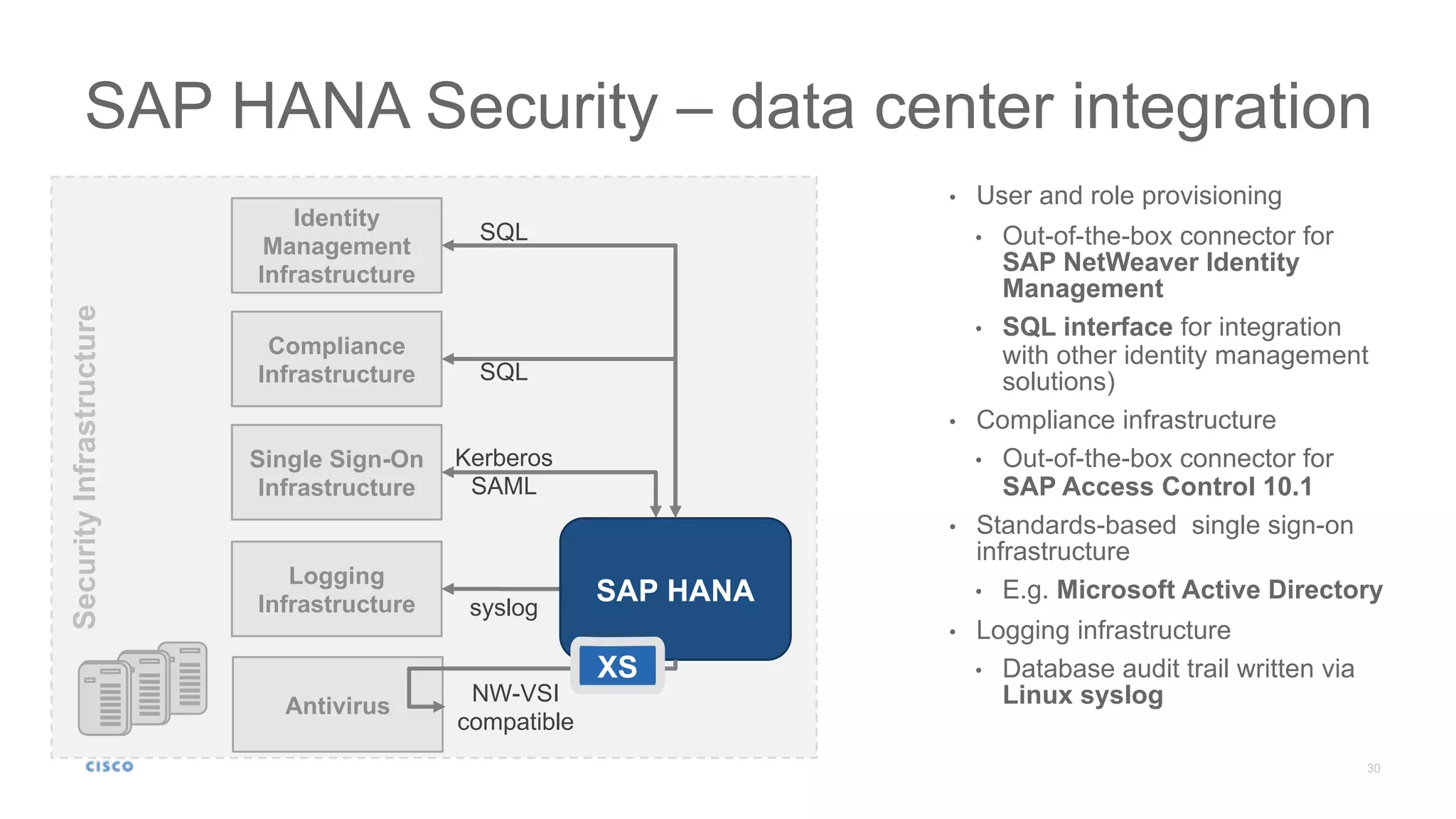

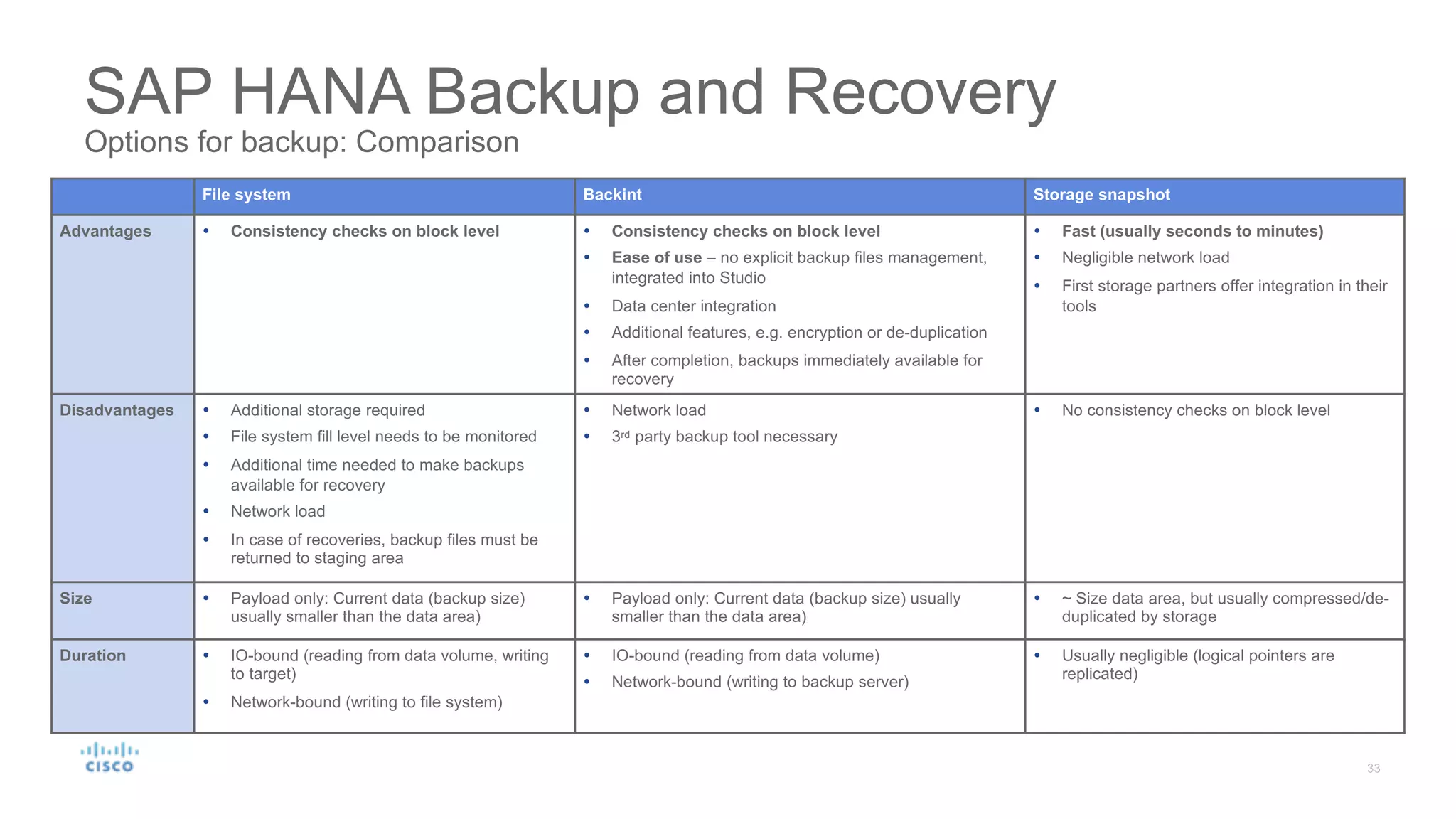

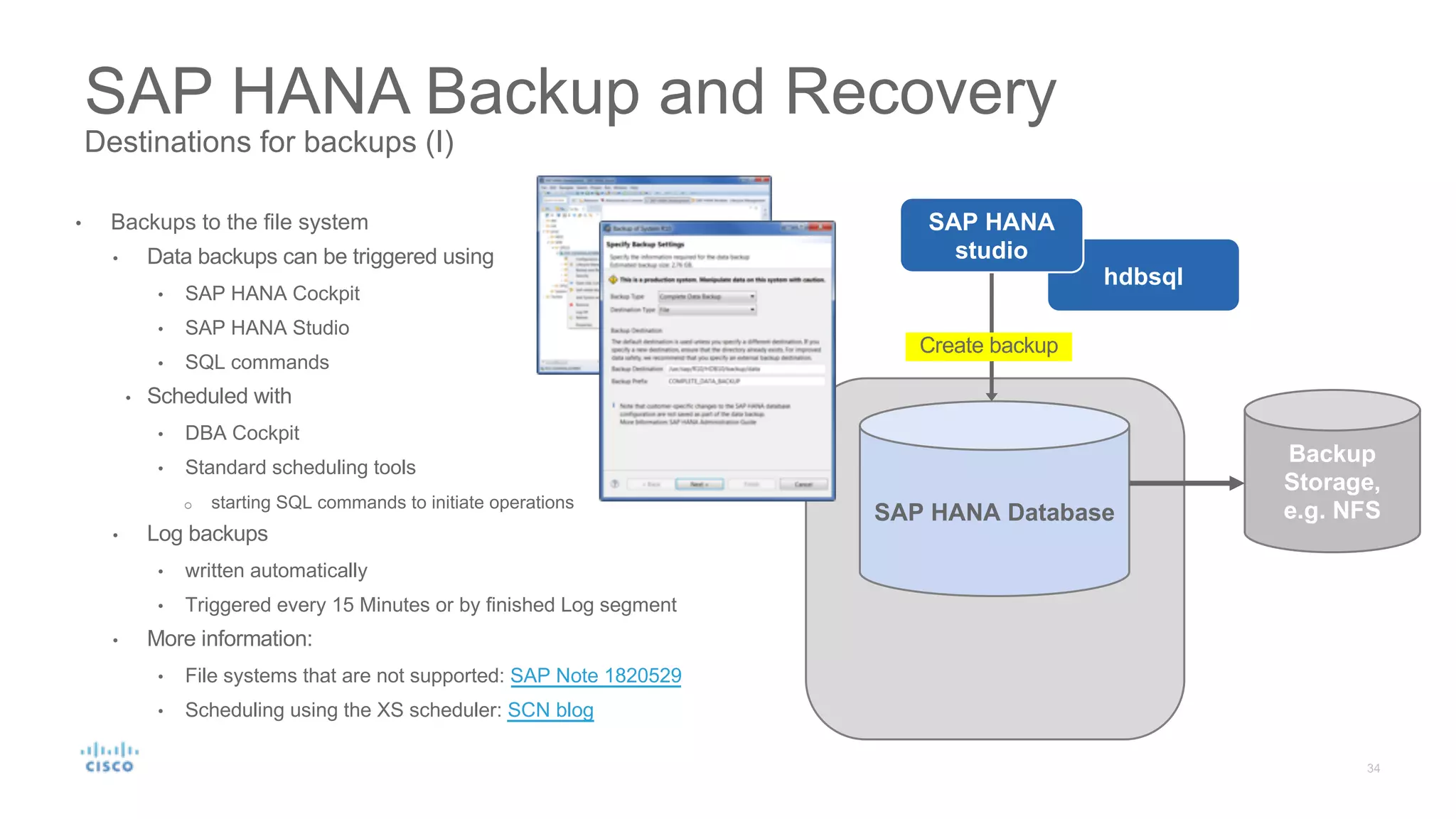

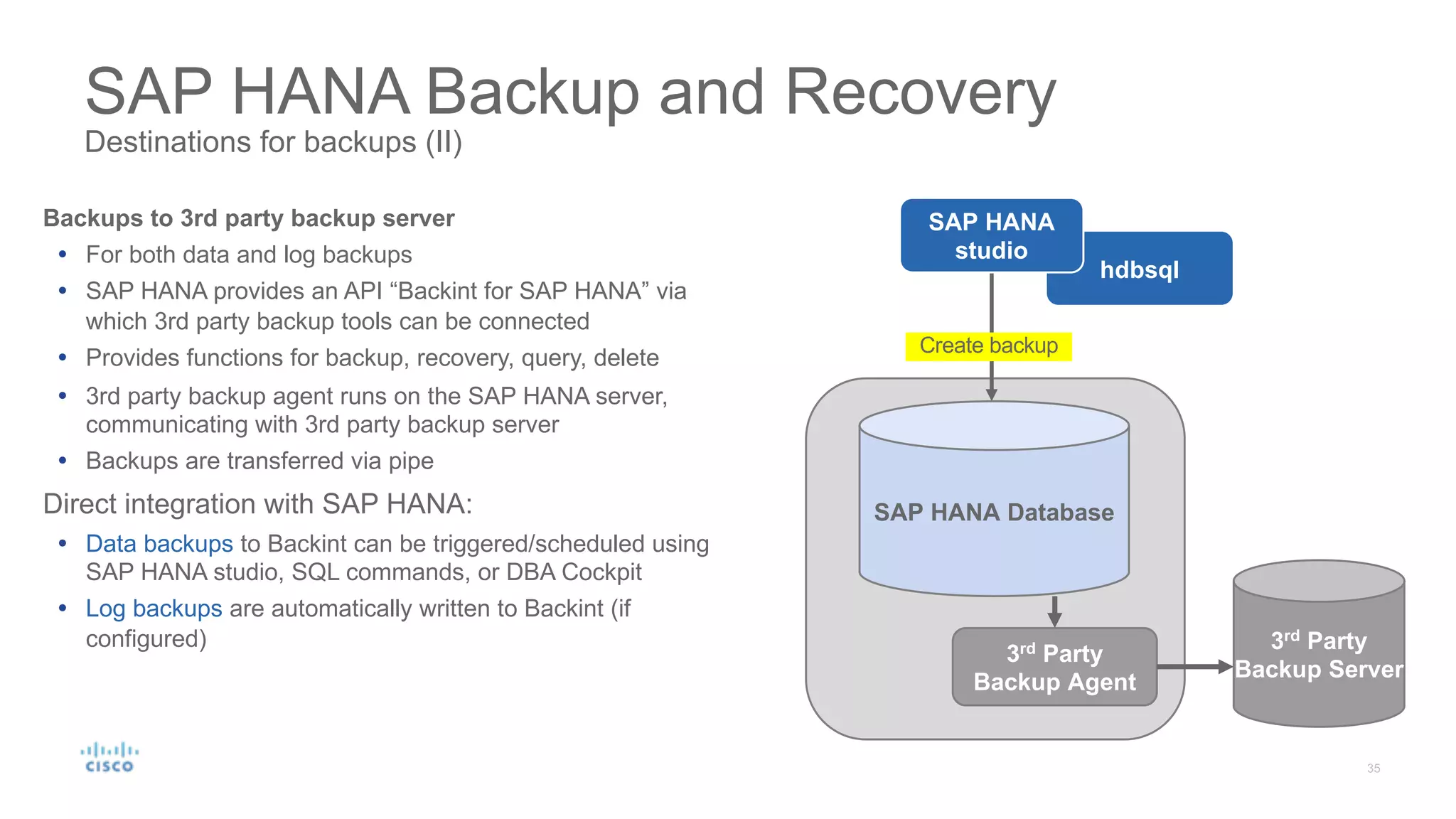

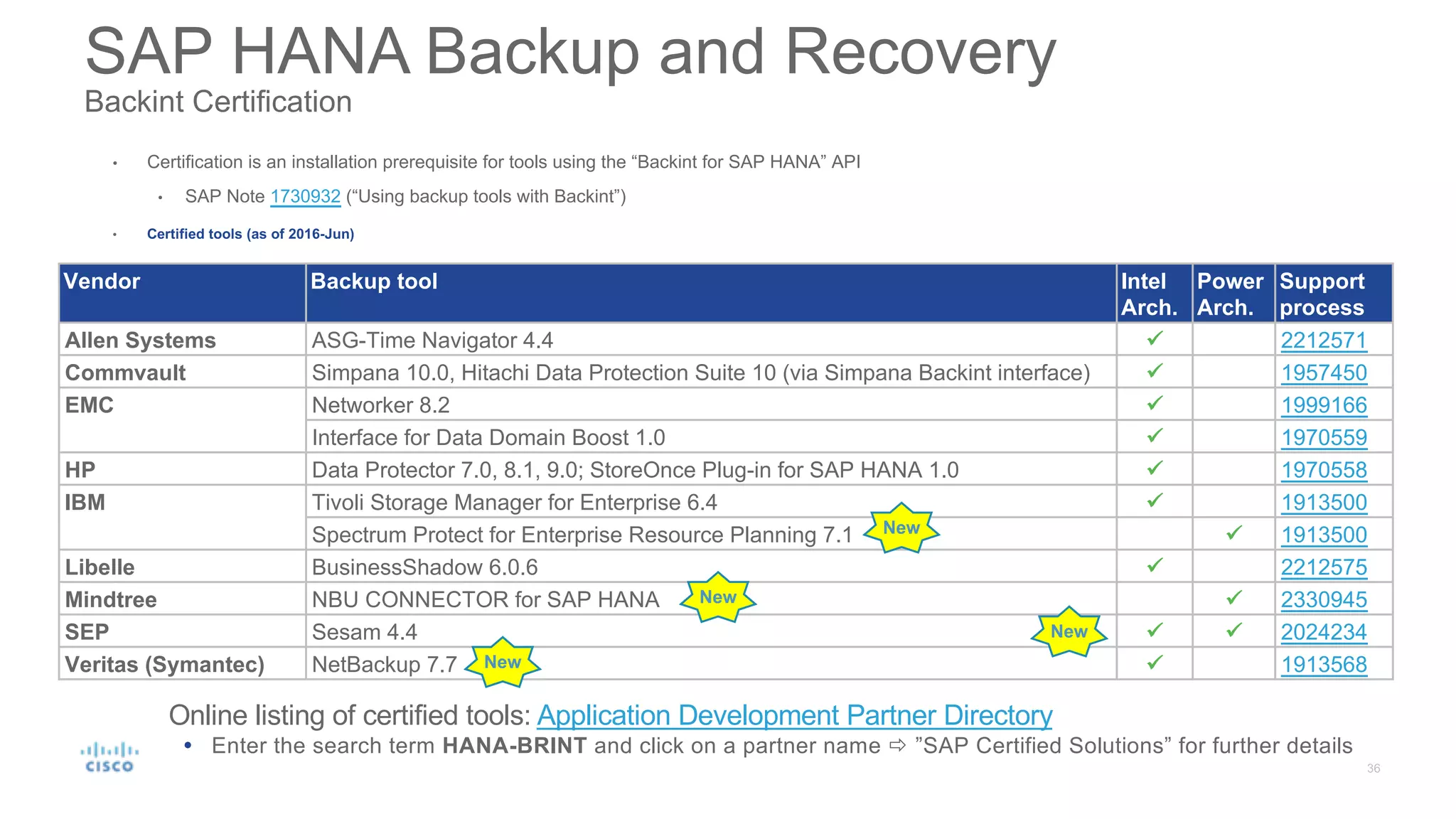

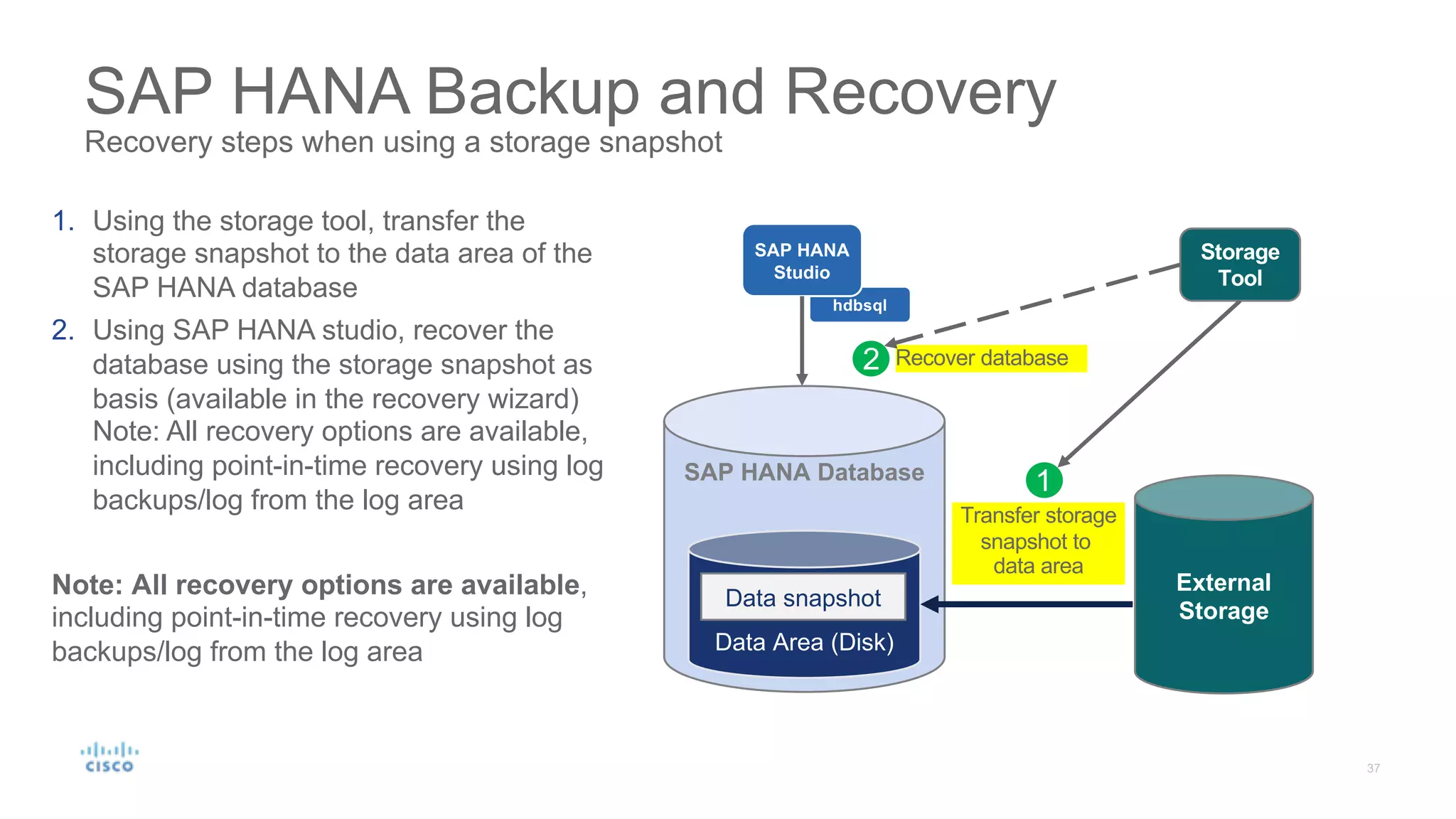

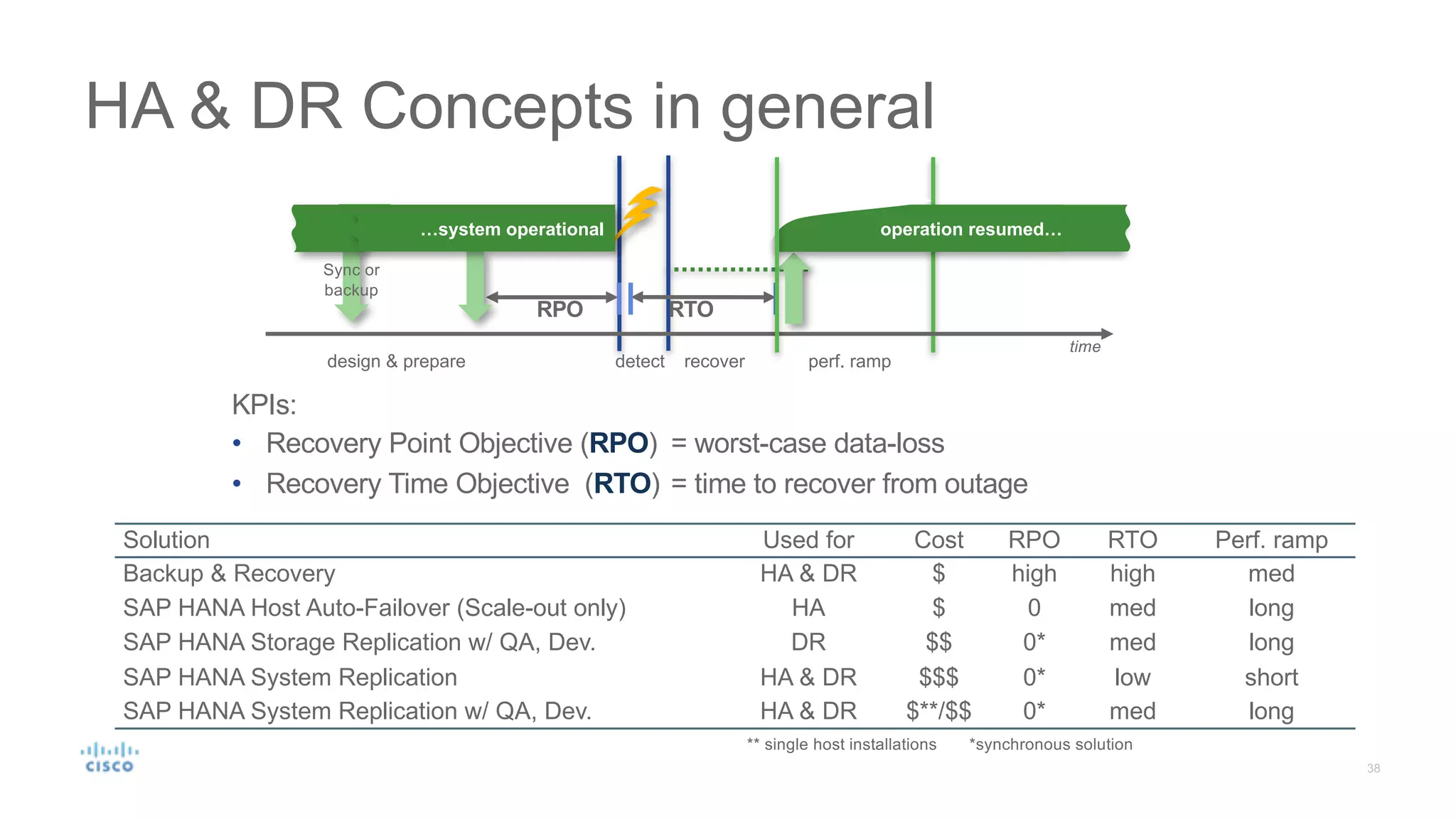

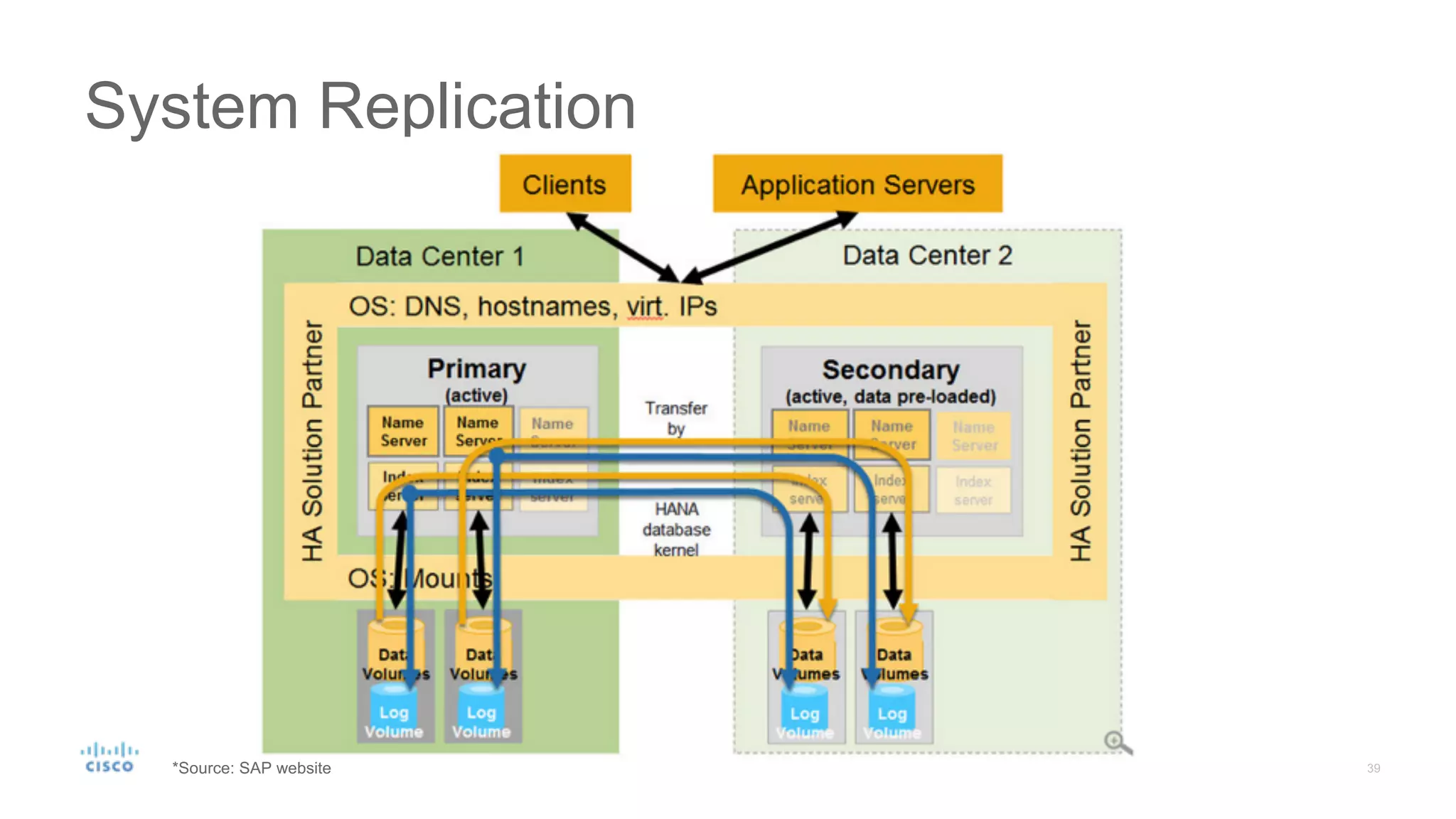

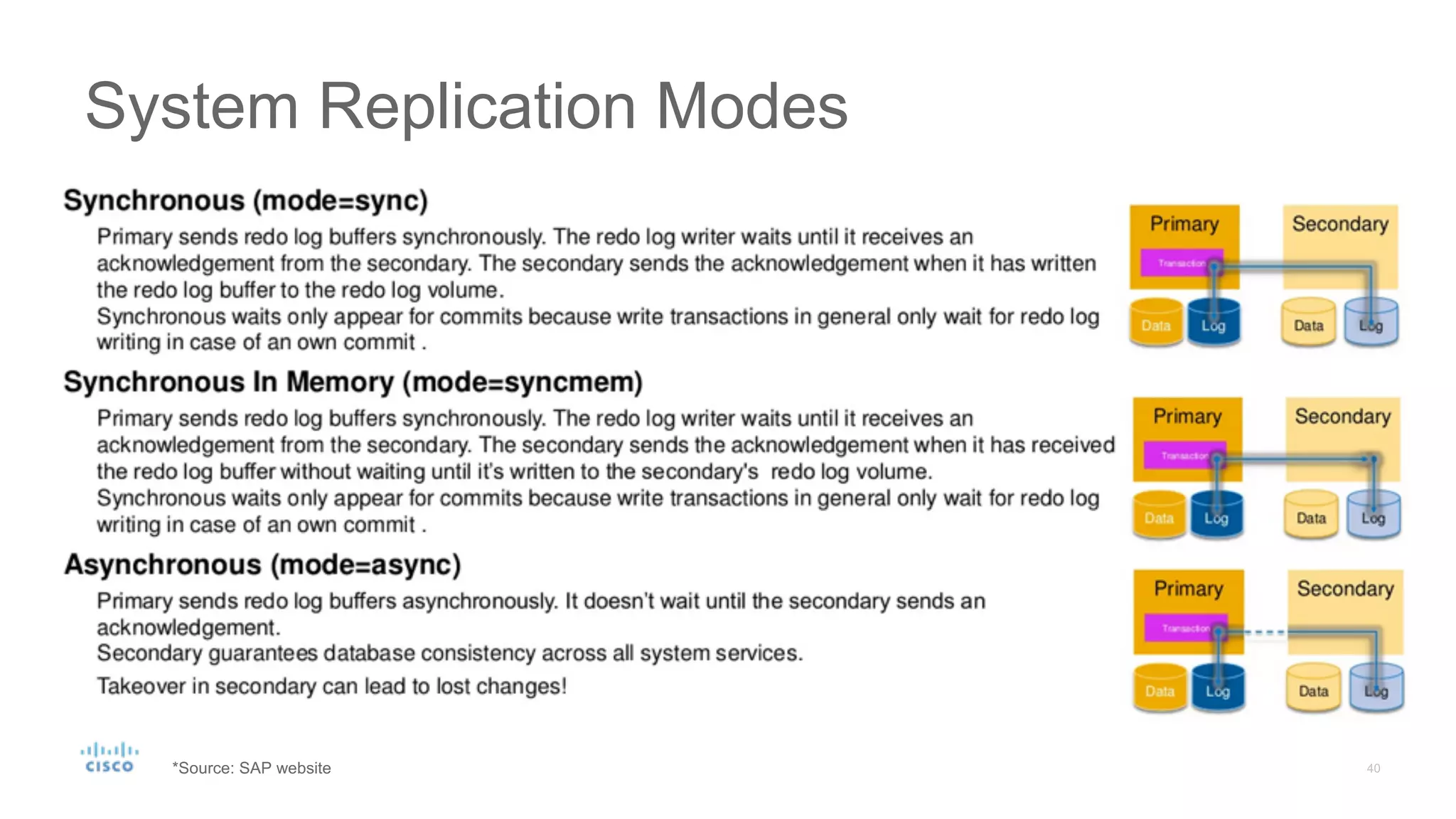

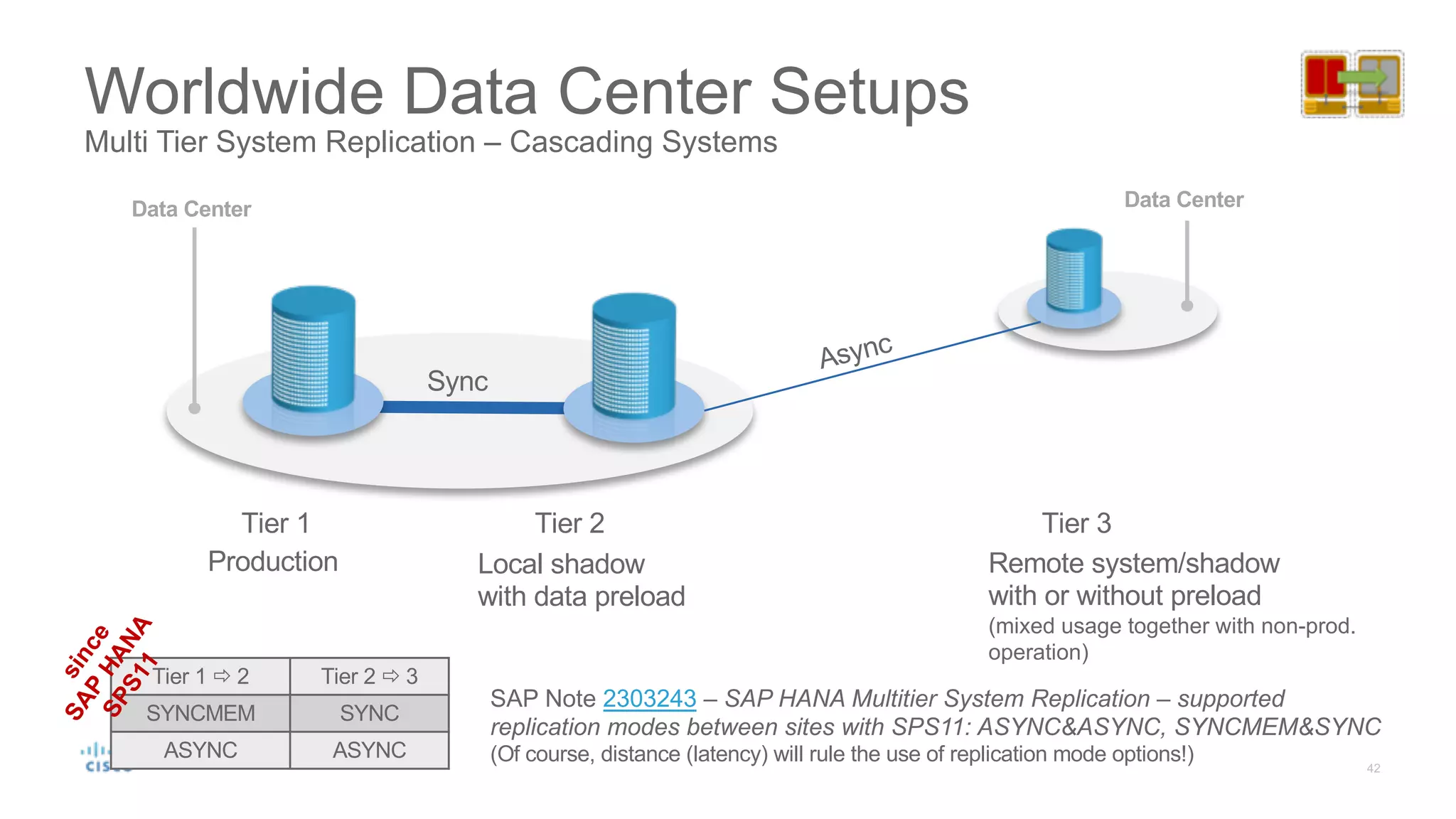

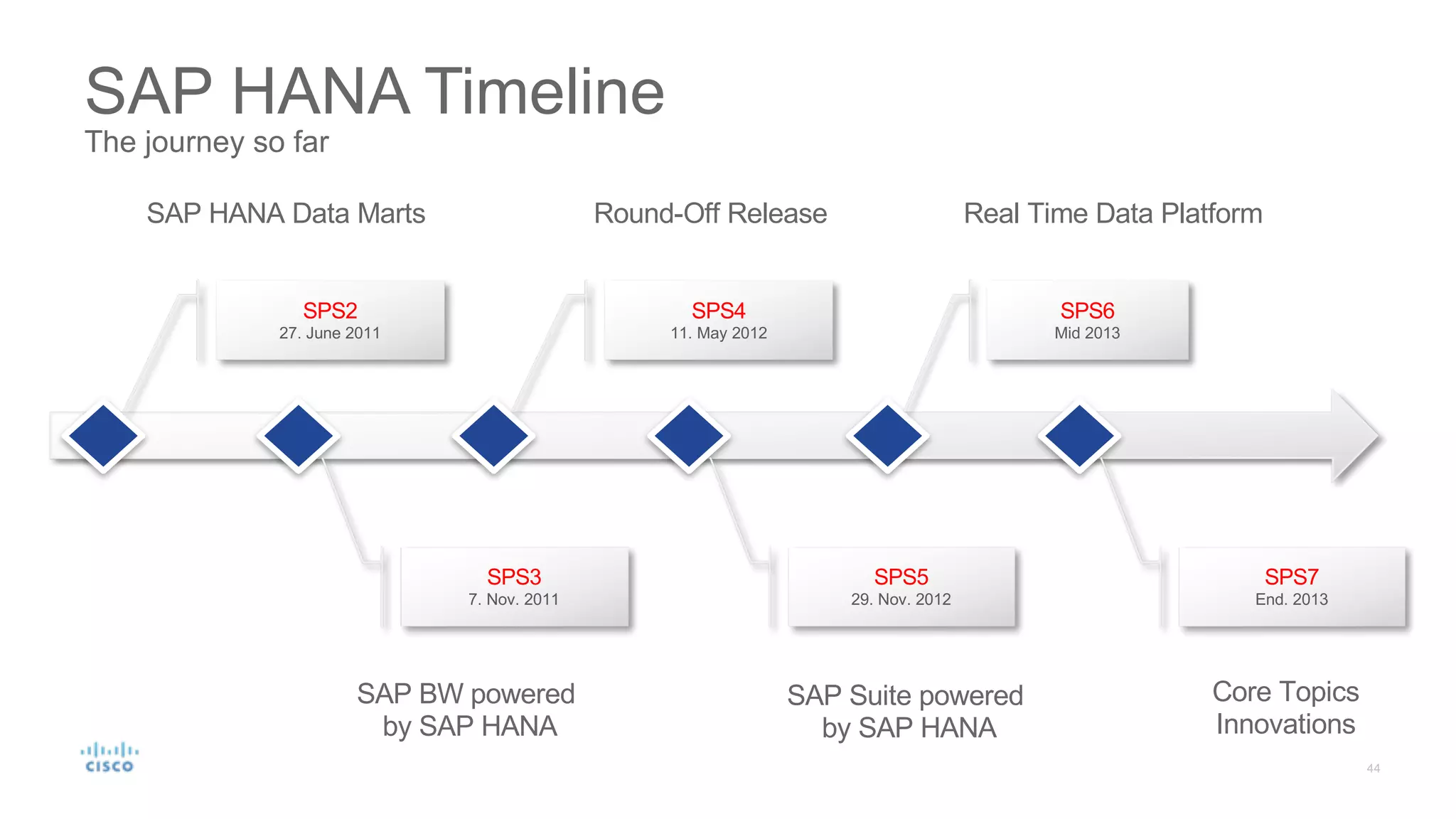

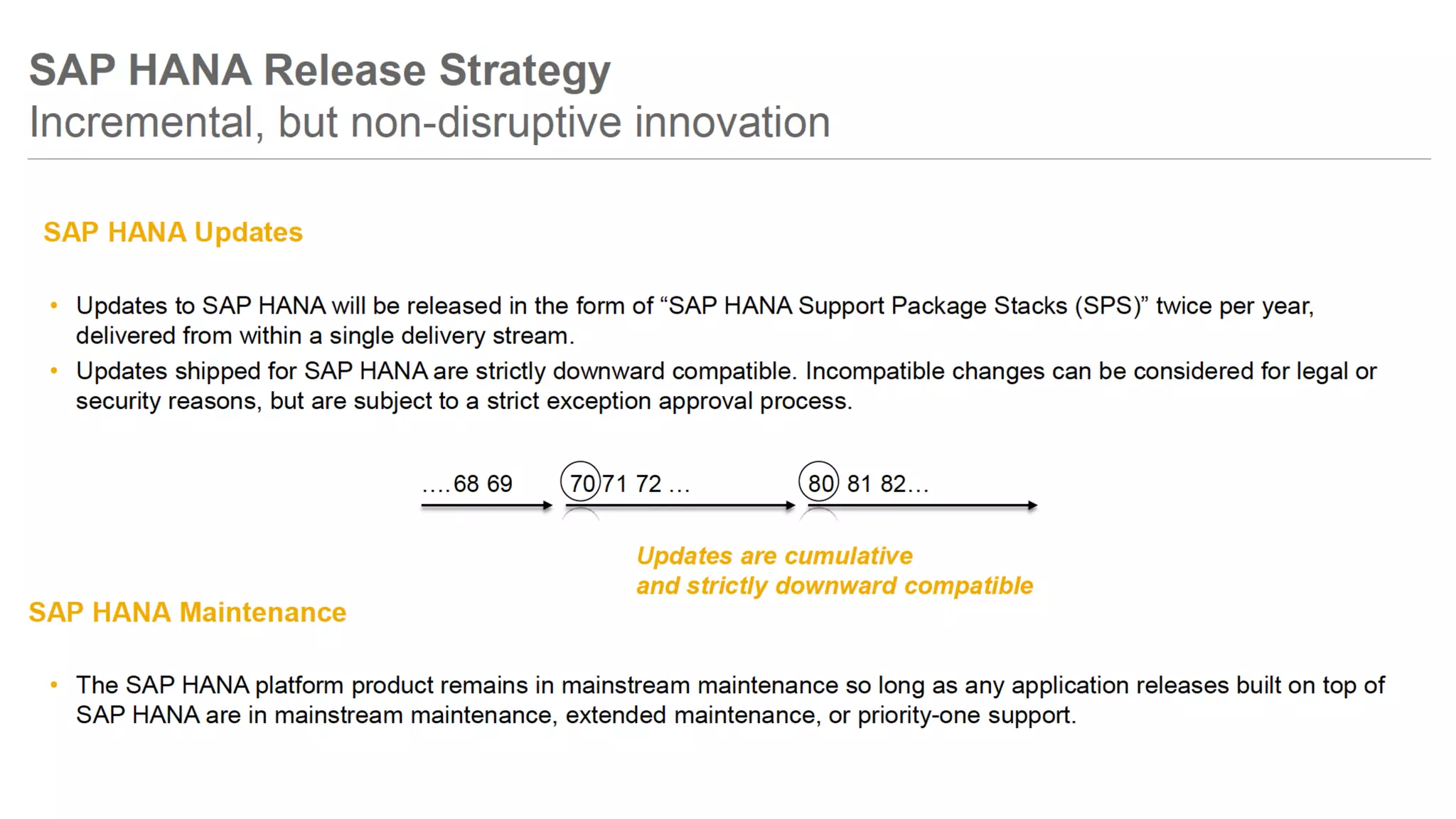

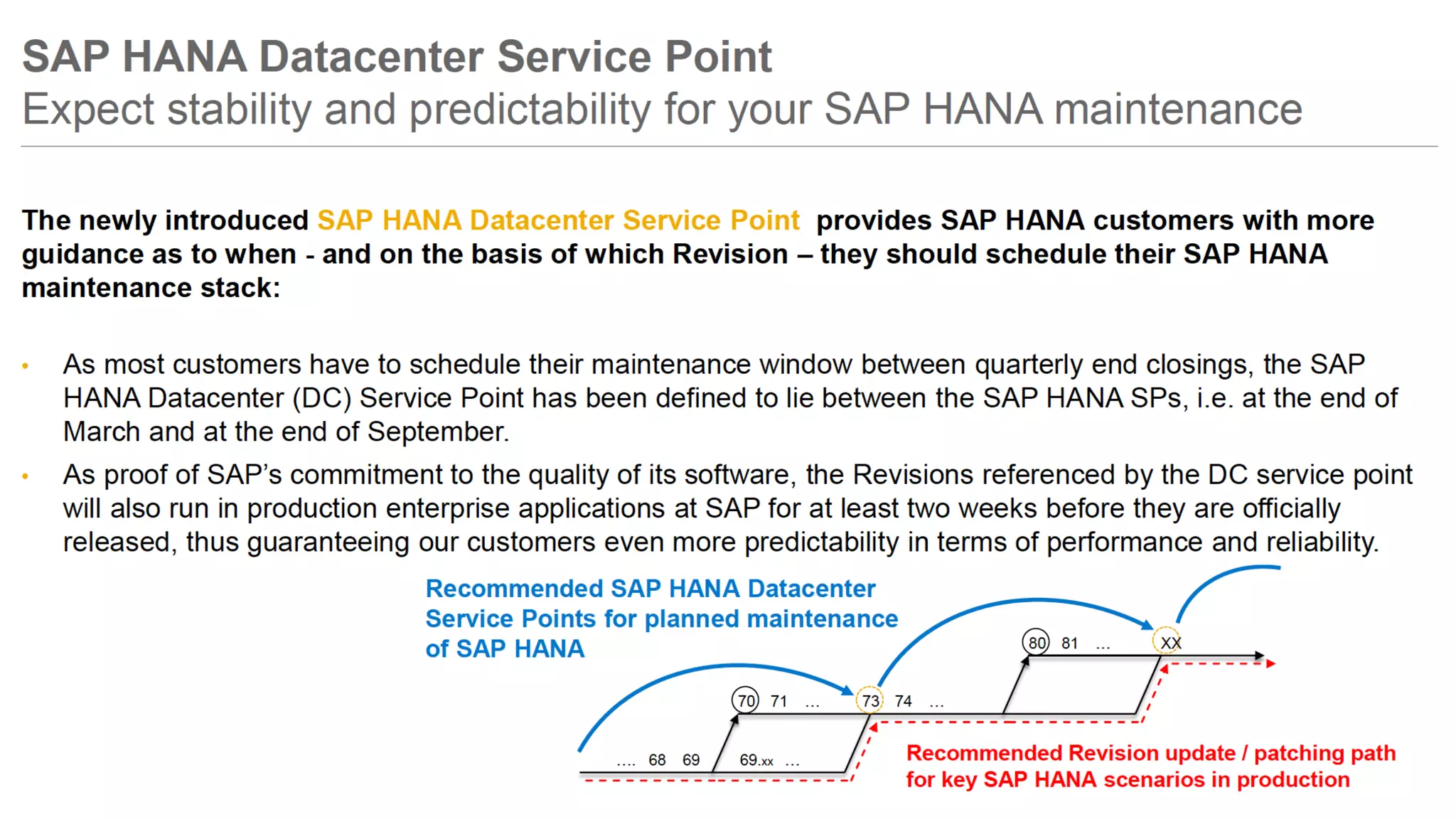

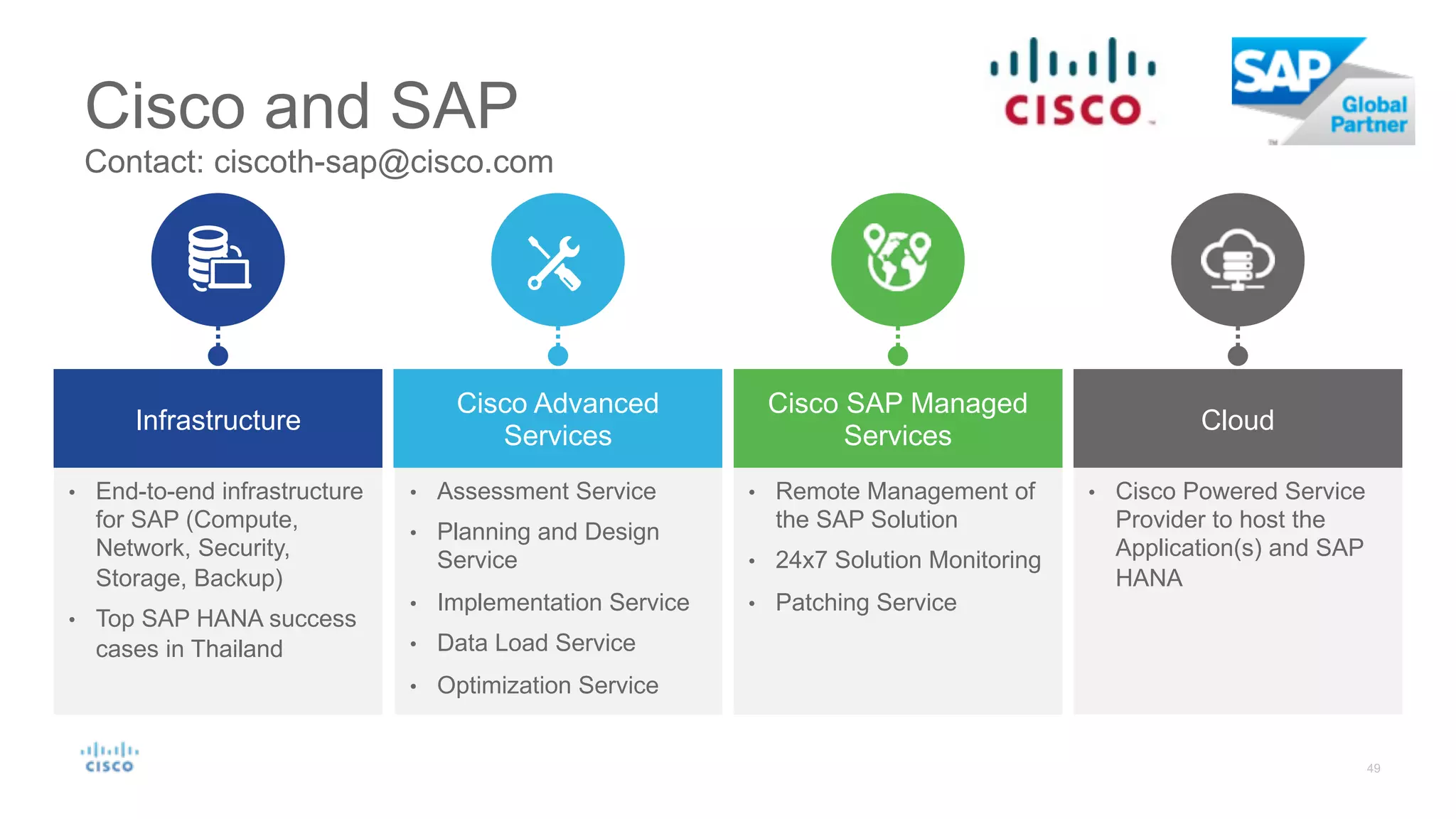

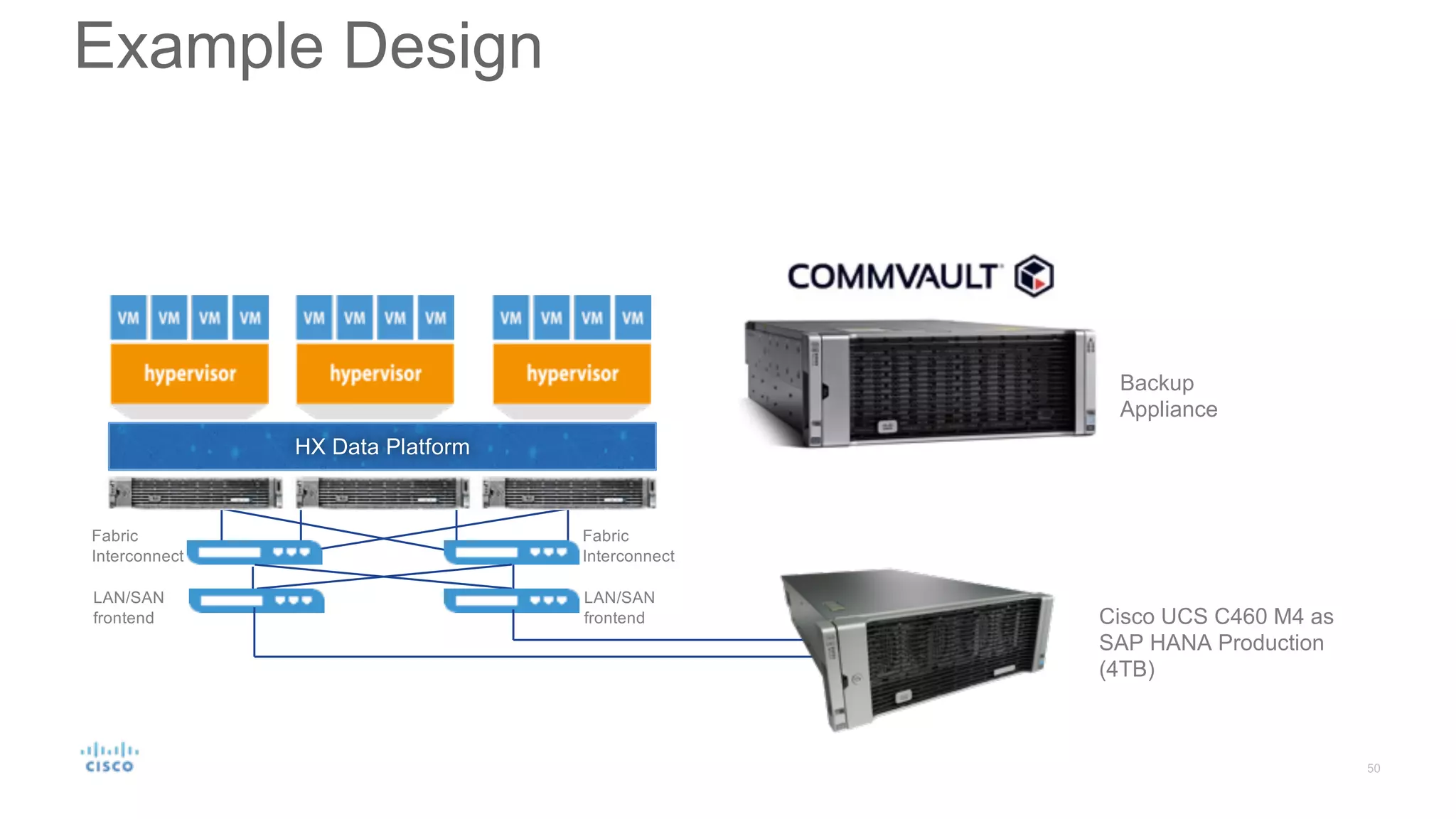

This presentation covers the design and implementation of an SAP HANA infrastructure, including the hardware and software components required for the SAP HANA server, storage, network, backup, and disaster recovery systems. It addresses sizing of SAP HANA systems, certified hardware partners, storage options such as TDI (SAP HANA Tailored Data Center Integration), network requirements, security best practices, backup methods, and high availability and disaster recovery strategies. Its aim is to help with planning and designing each element of an SAP HANA infrastructure.