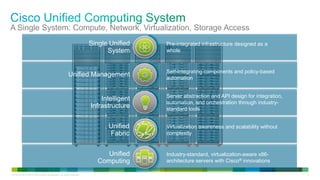

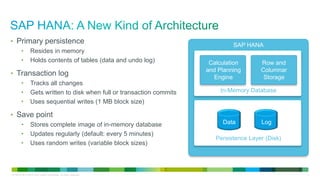

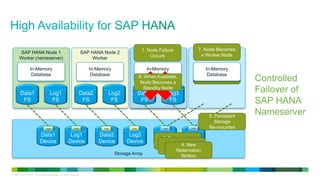

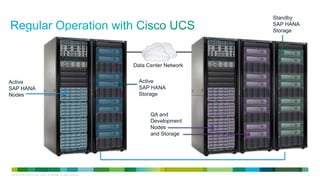

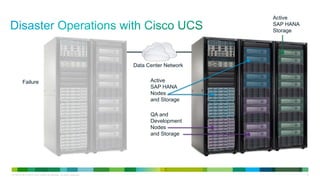

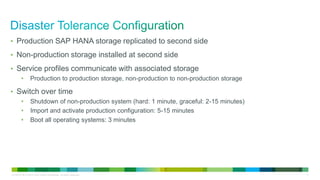

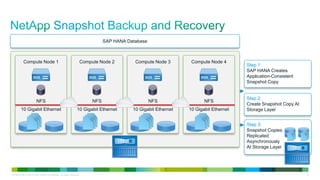

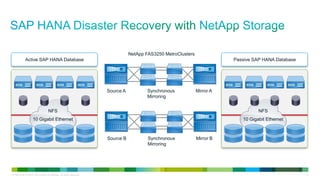

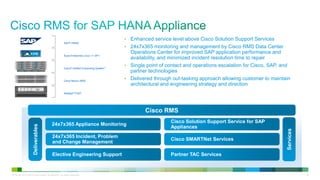

The document discusses Cisco UCS with NetApp storage for SAP HANA solutions. It gives an overview of Cisco UCS and how it unifies compute, networking, virtualization, and storage access in a single system. It also covers NetApp storage solutions and how Cisco and NetApp together support SAP HANA deployments, including high availability, disaster recovery, and data migration services.