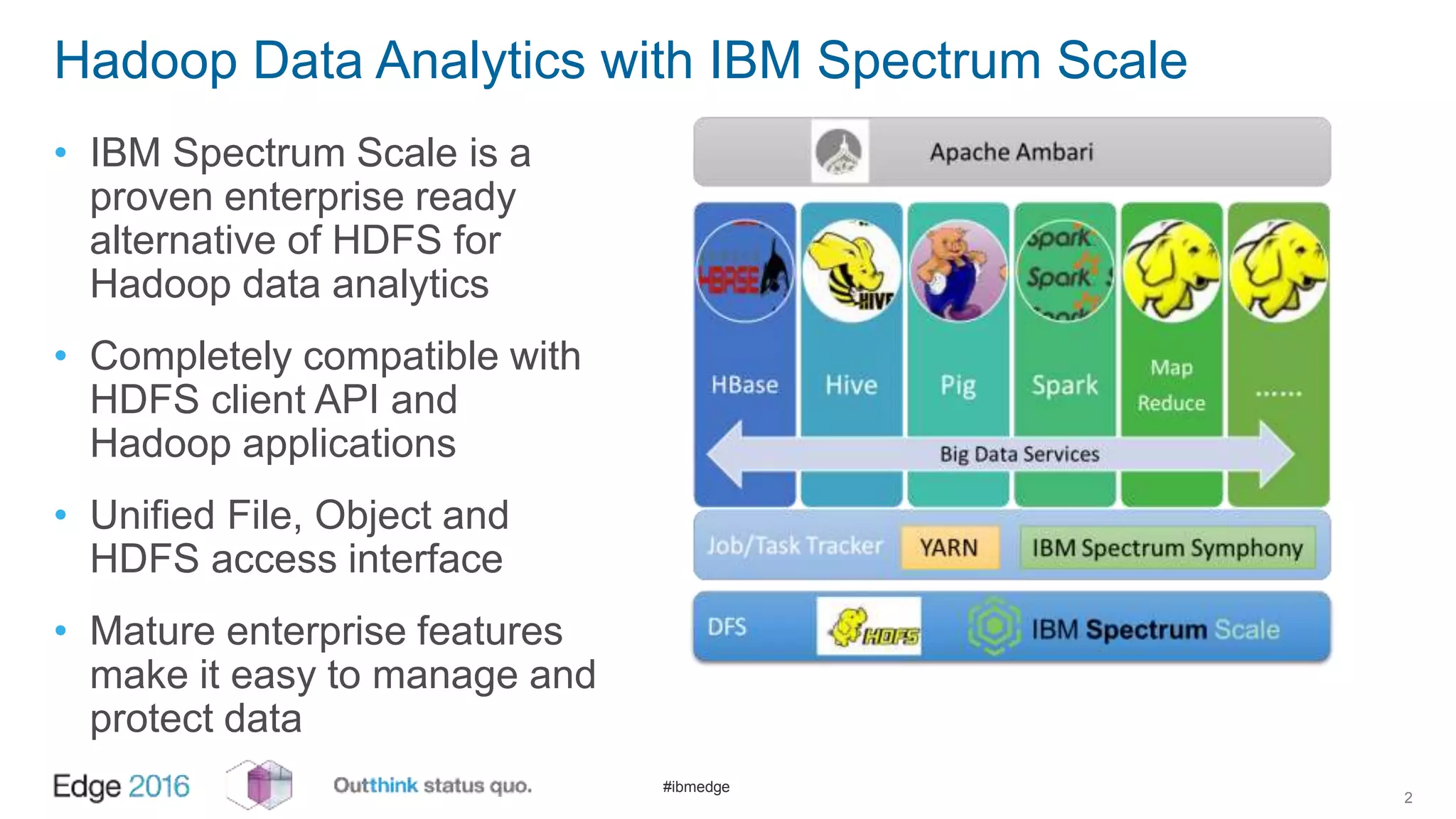

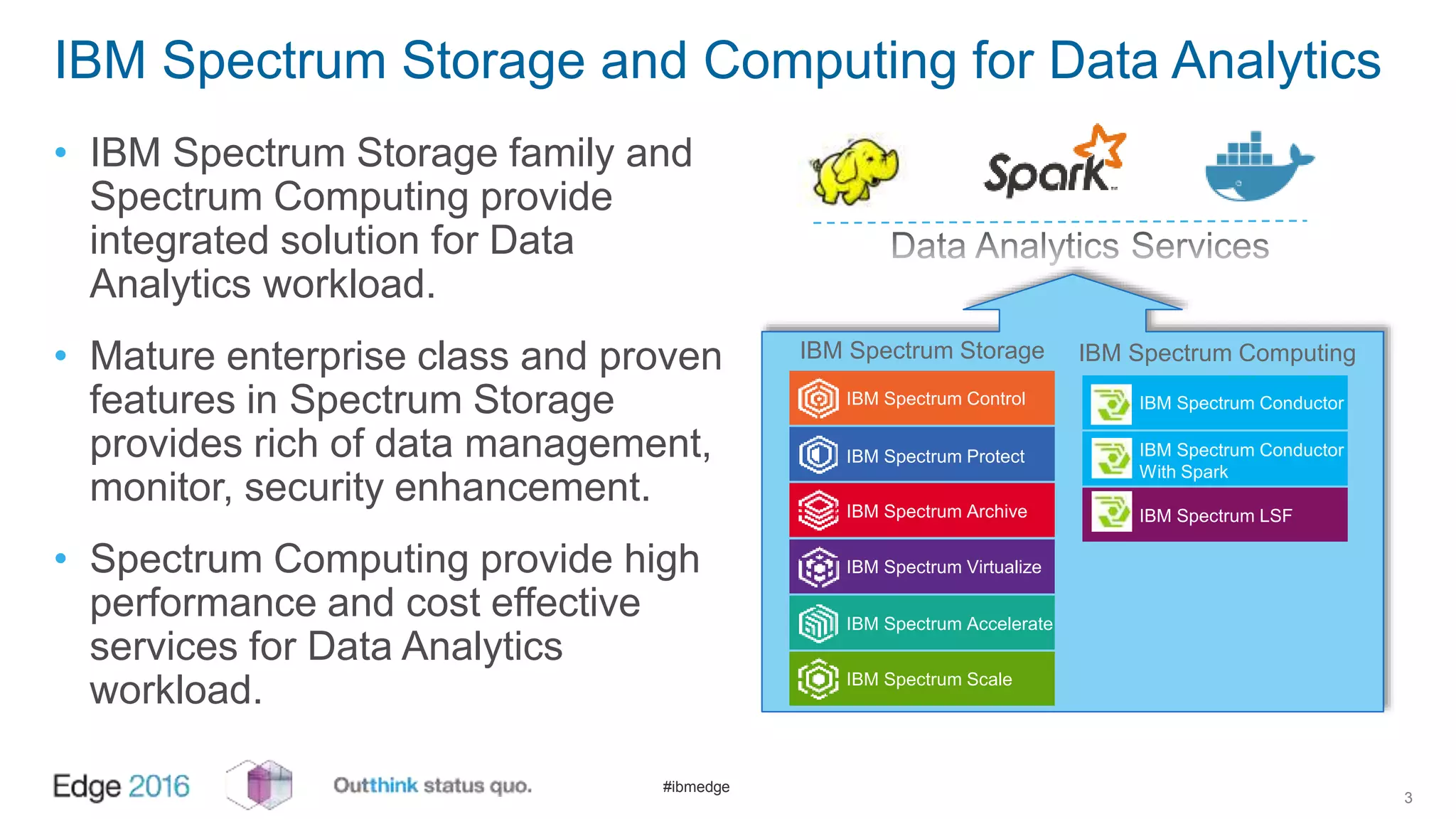

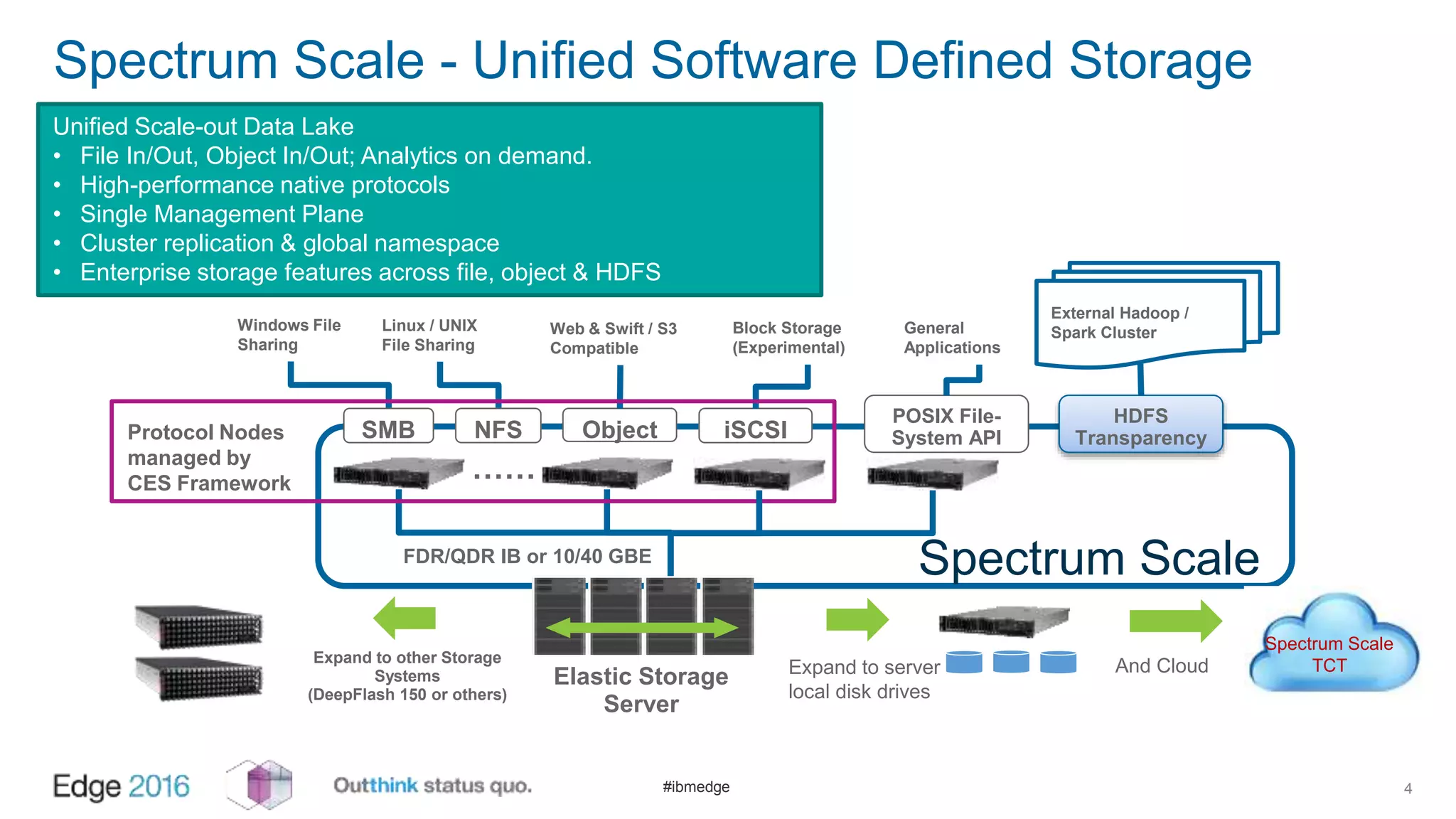

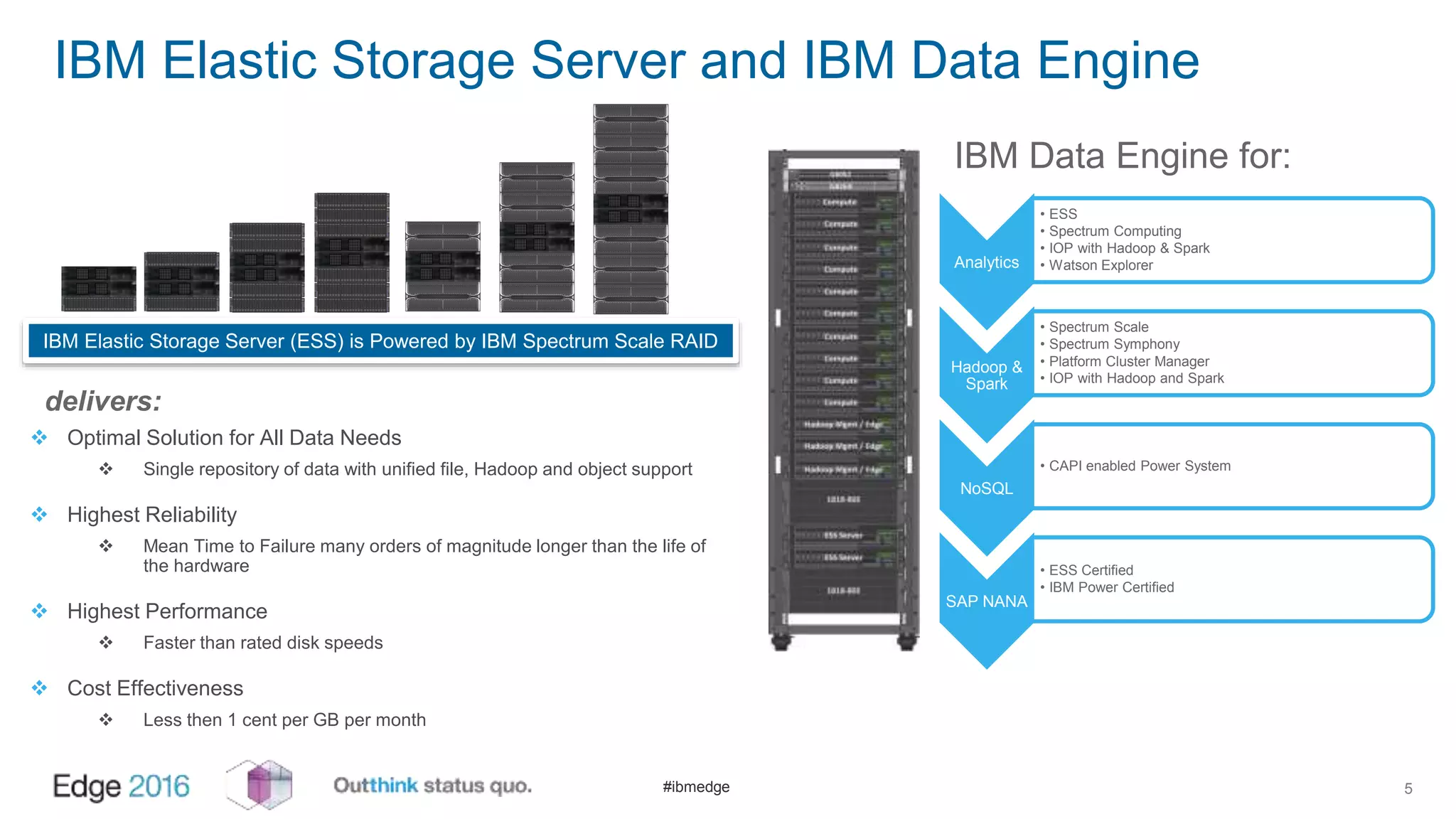

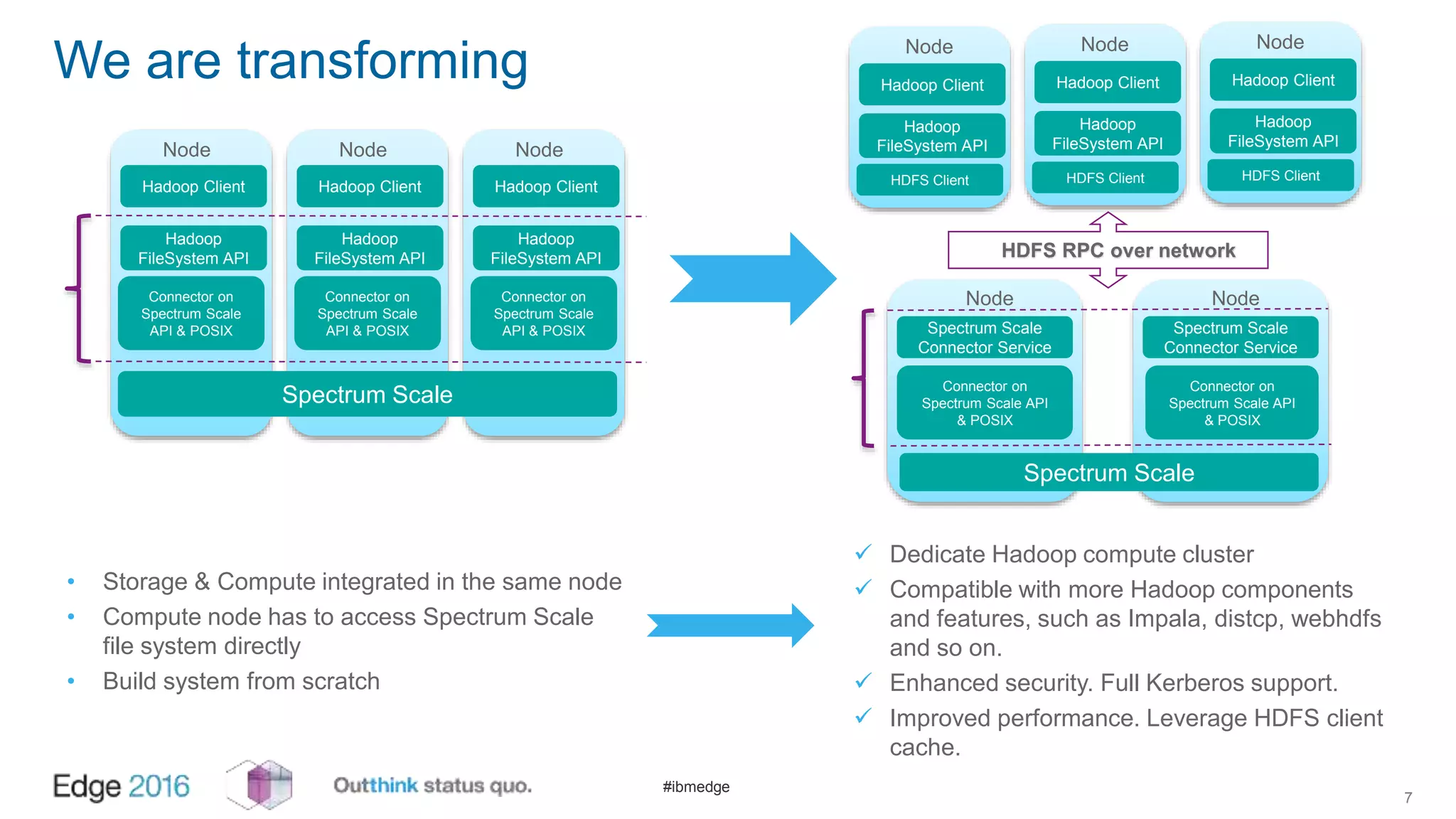

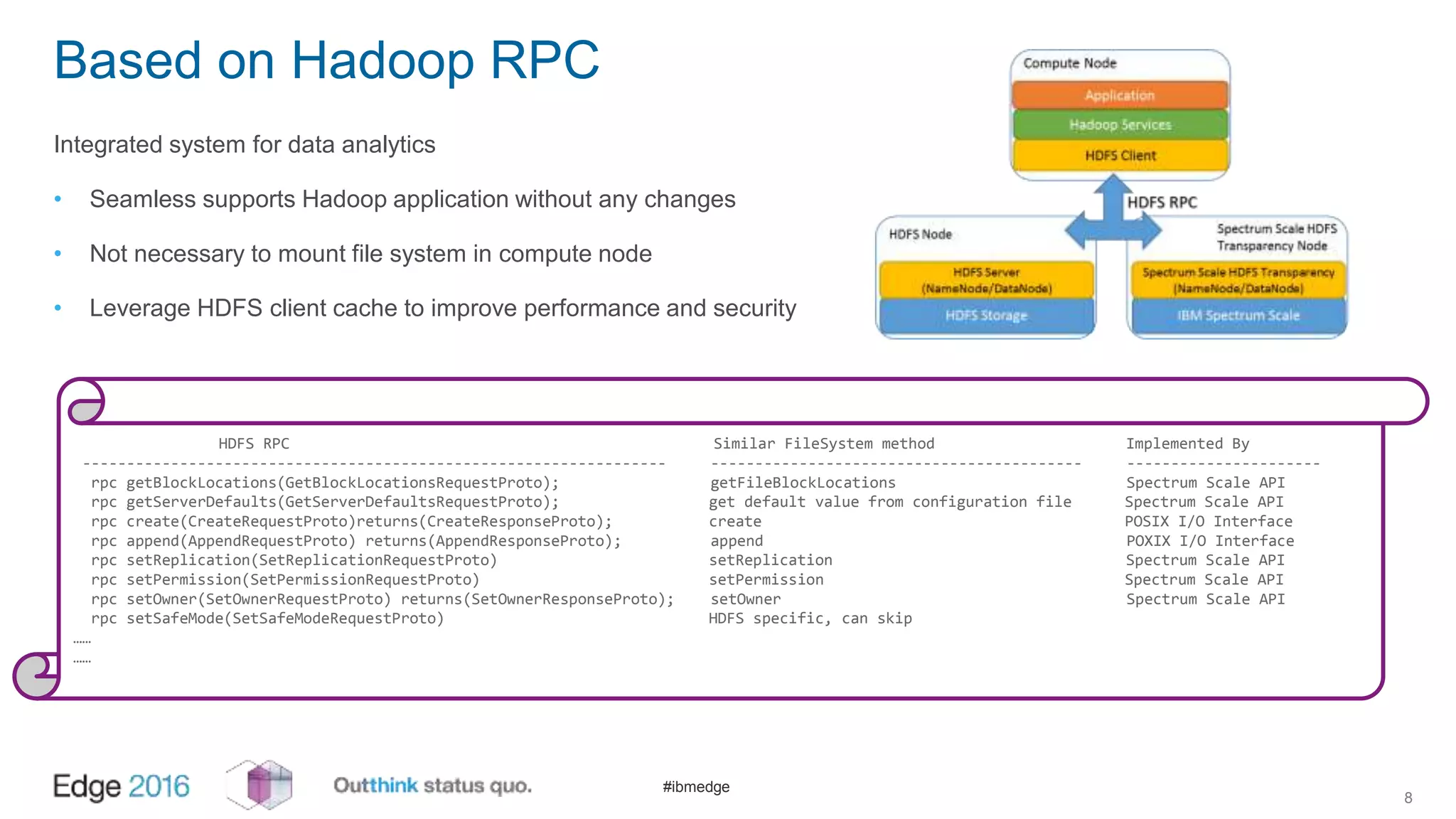

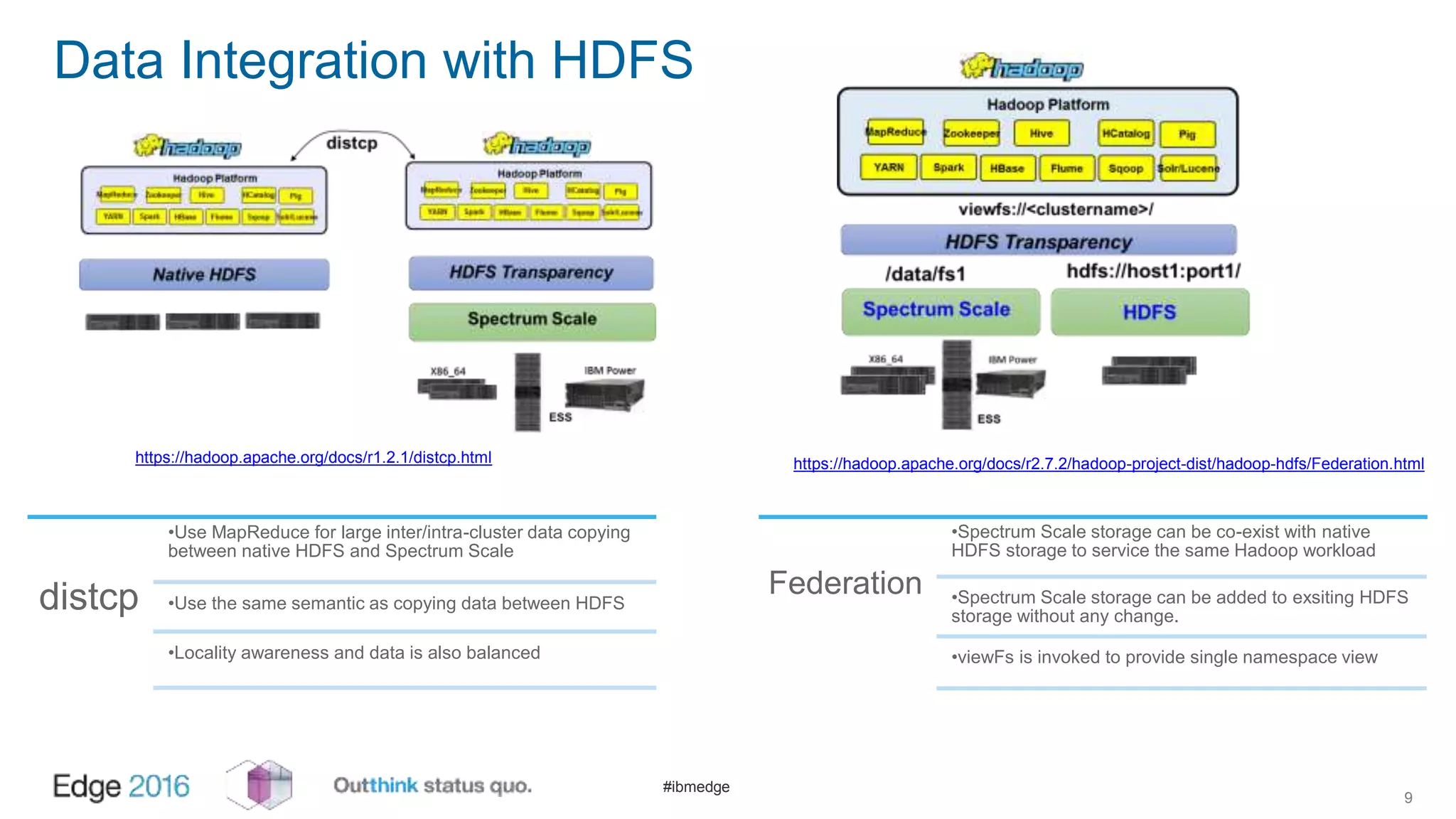

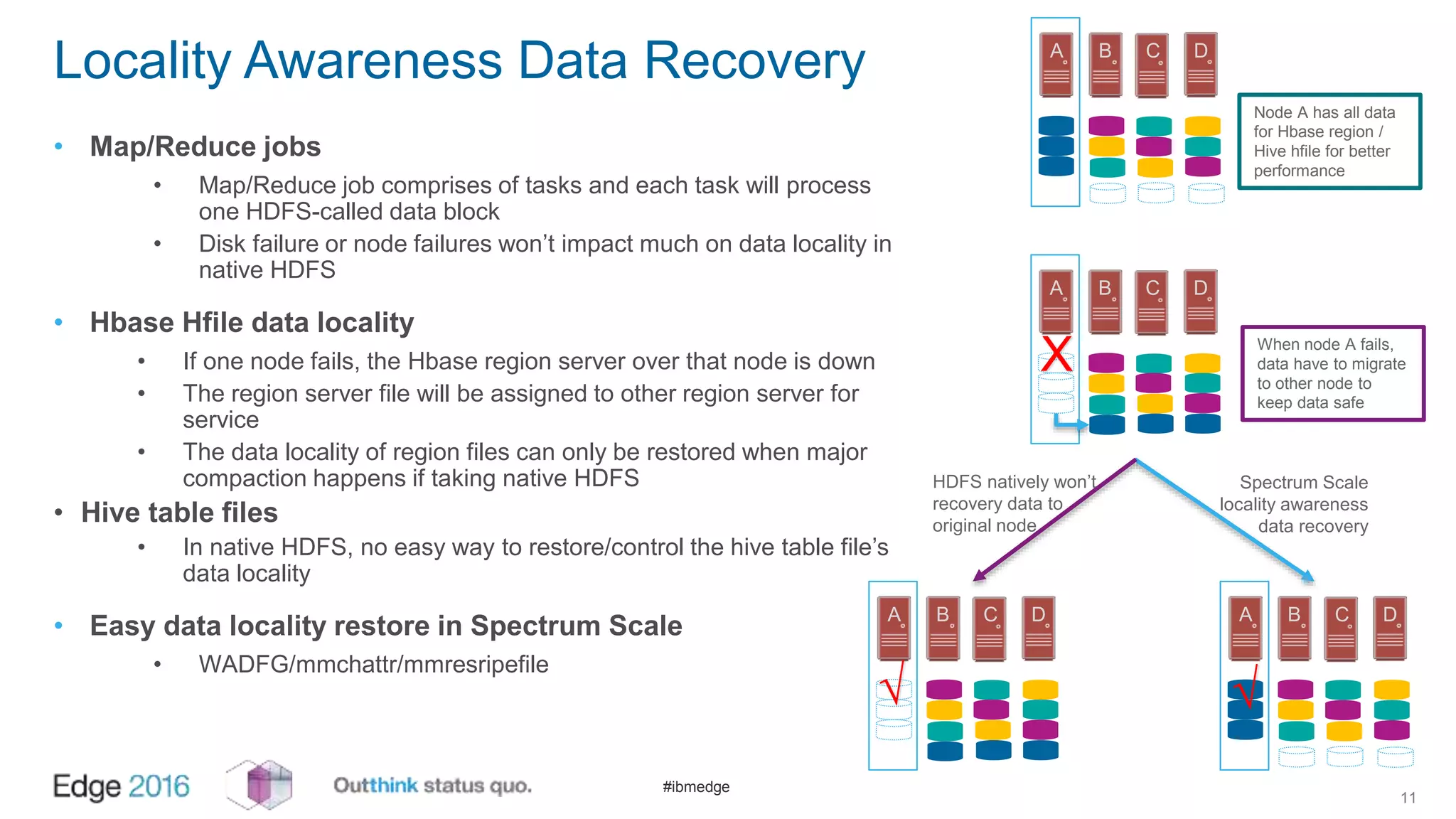

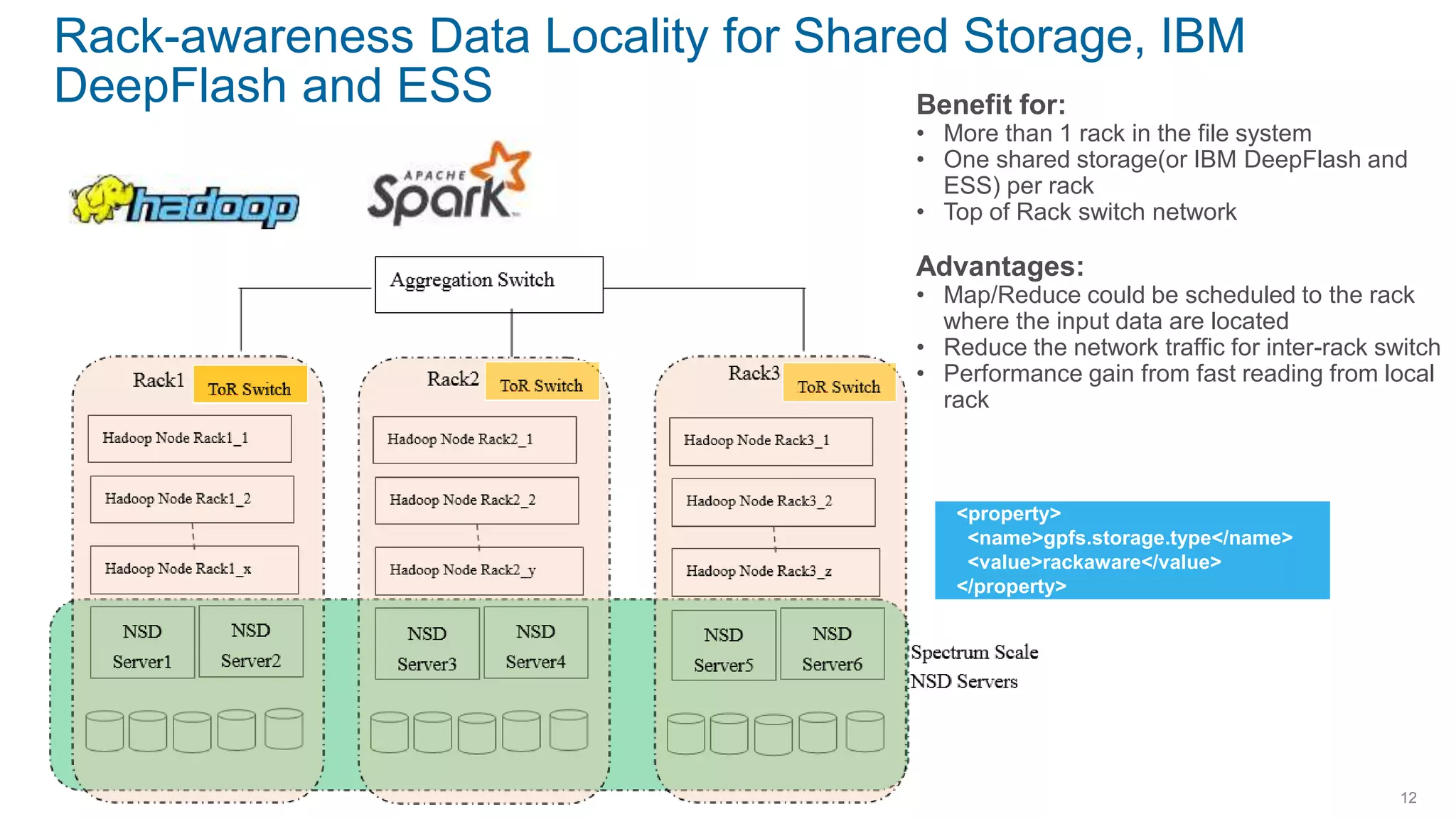

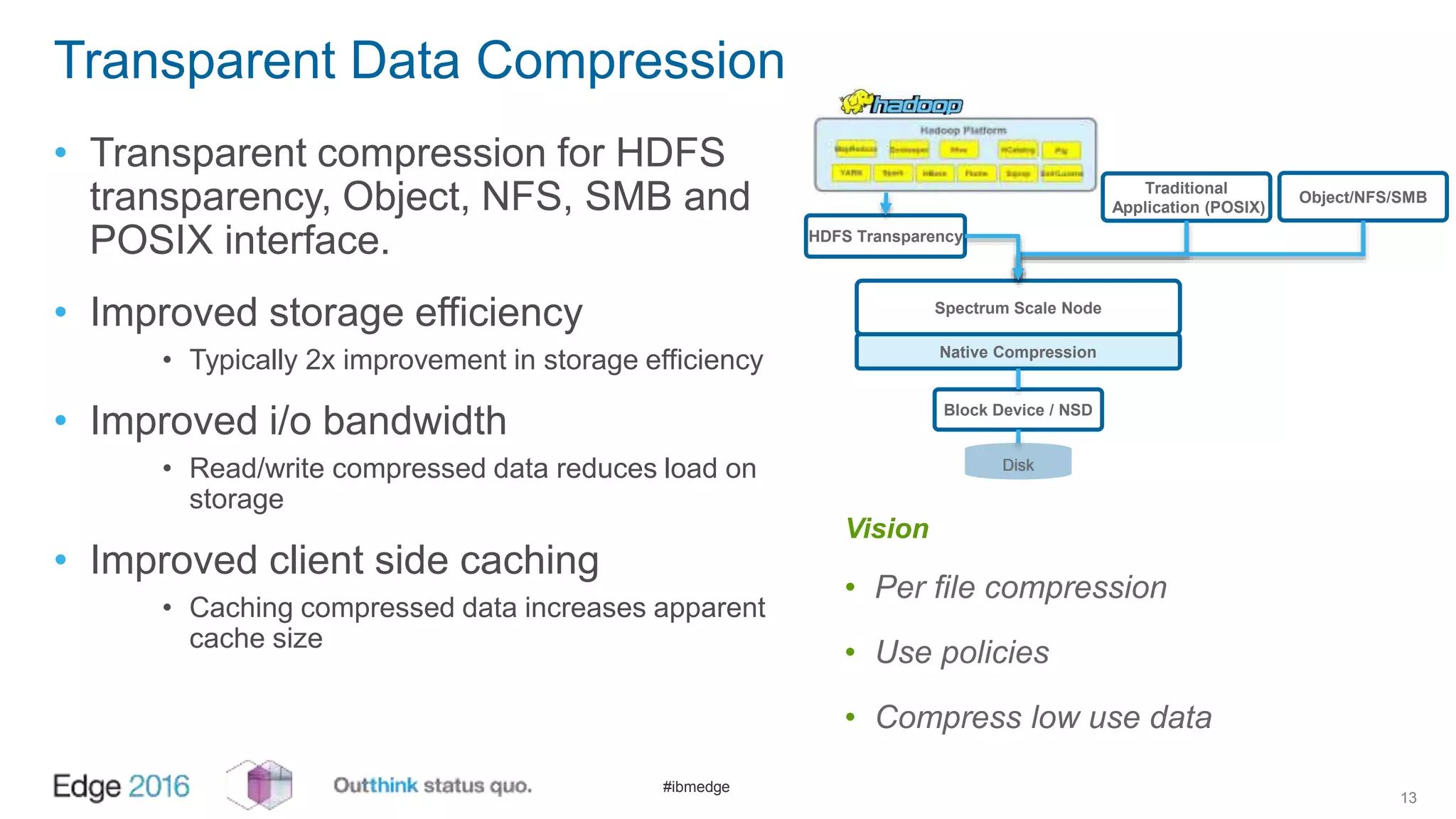

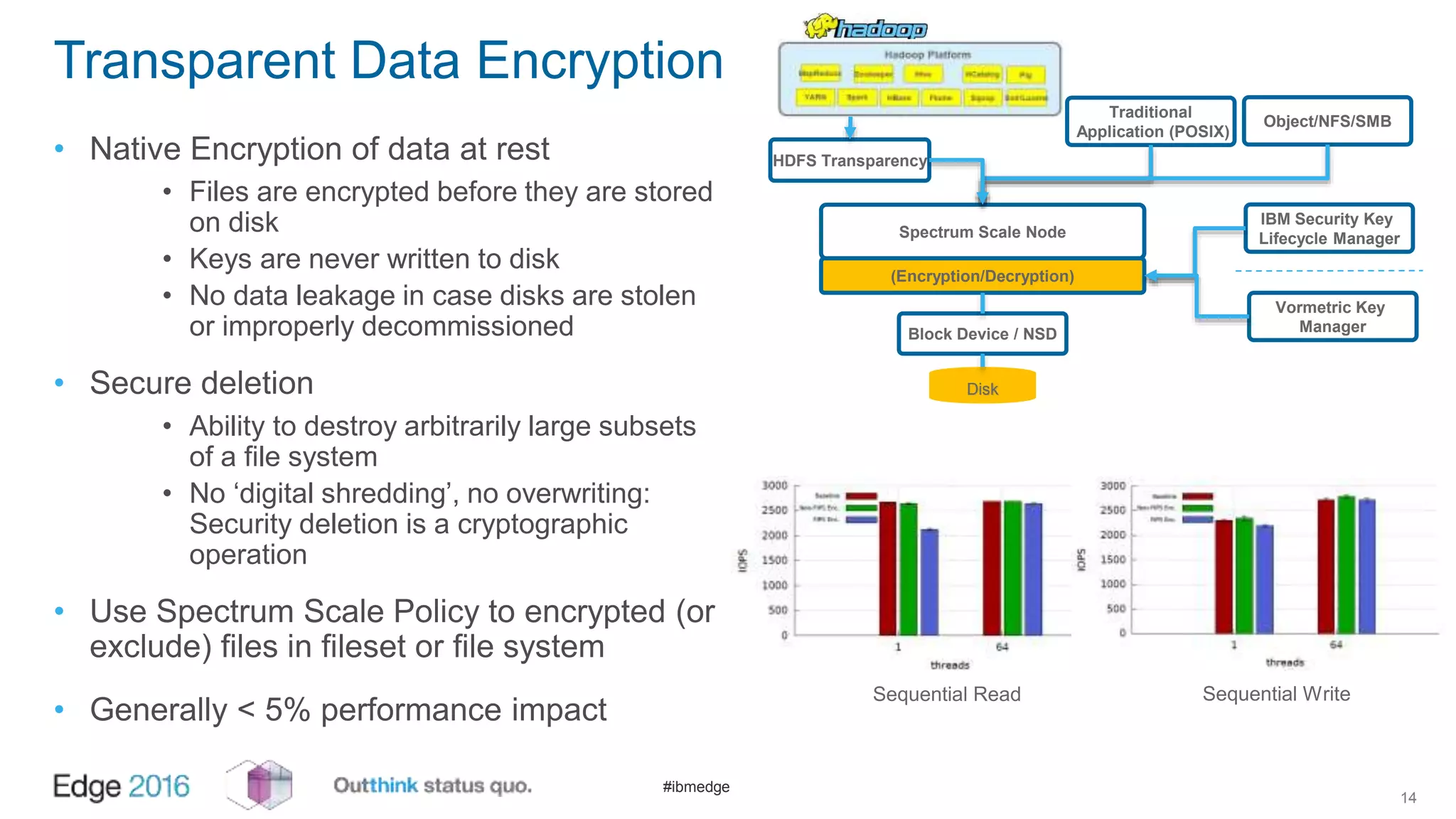

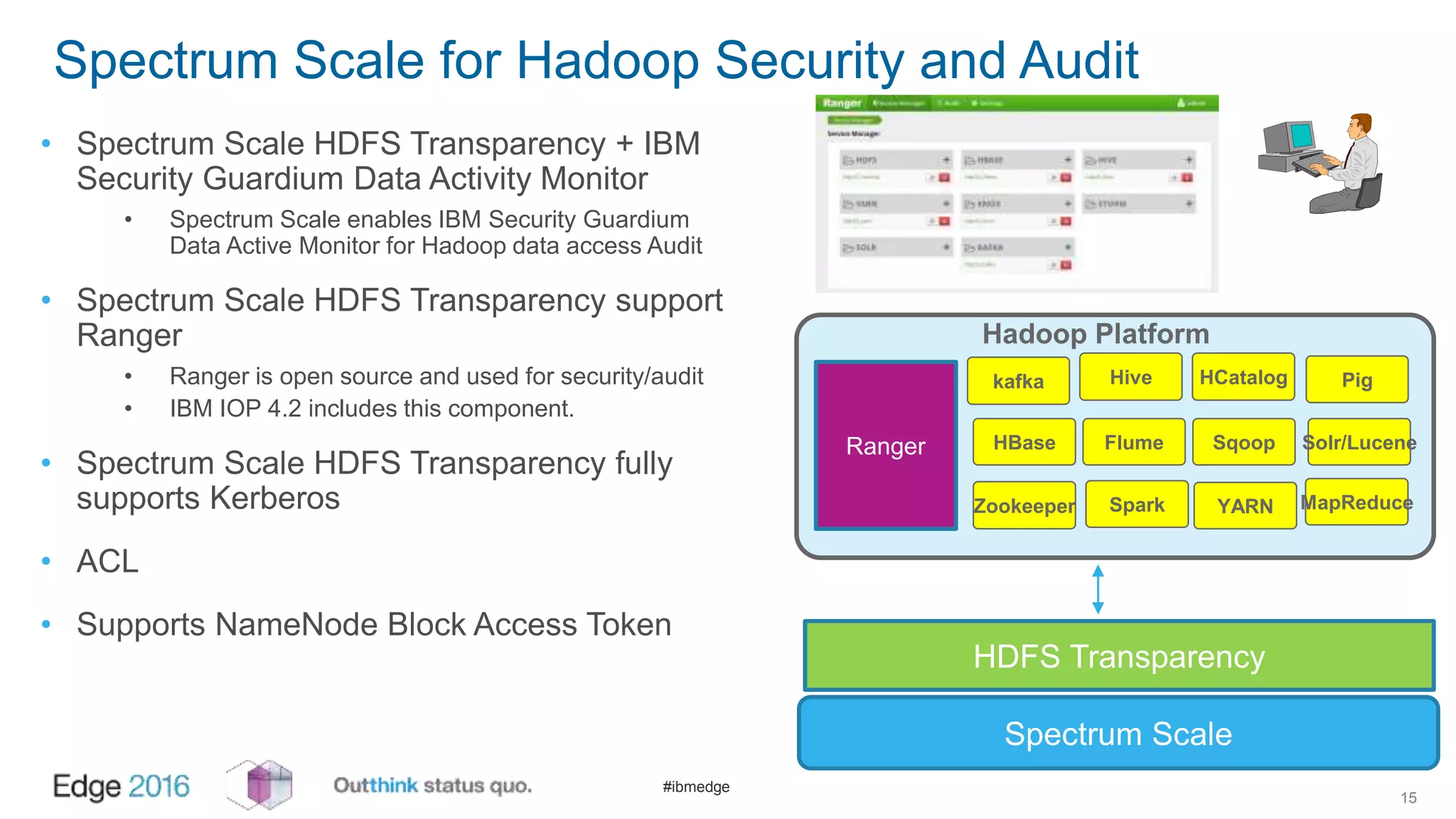

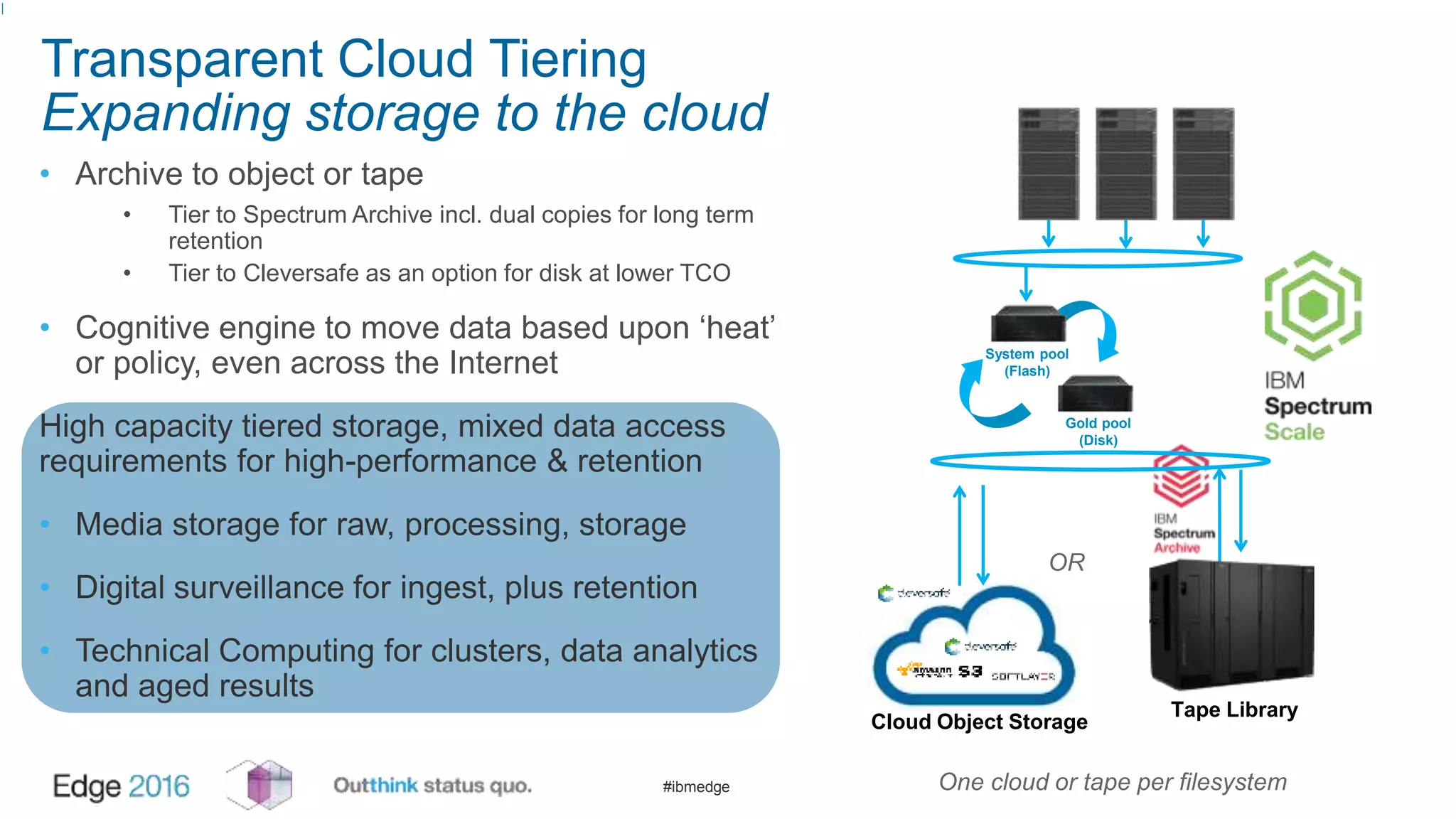

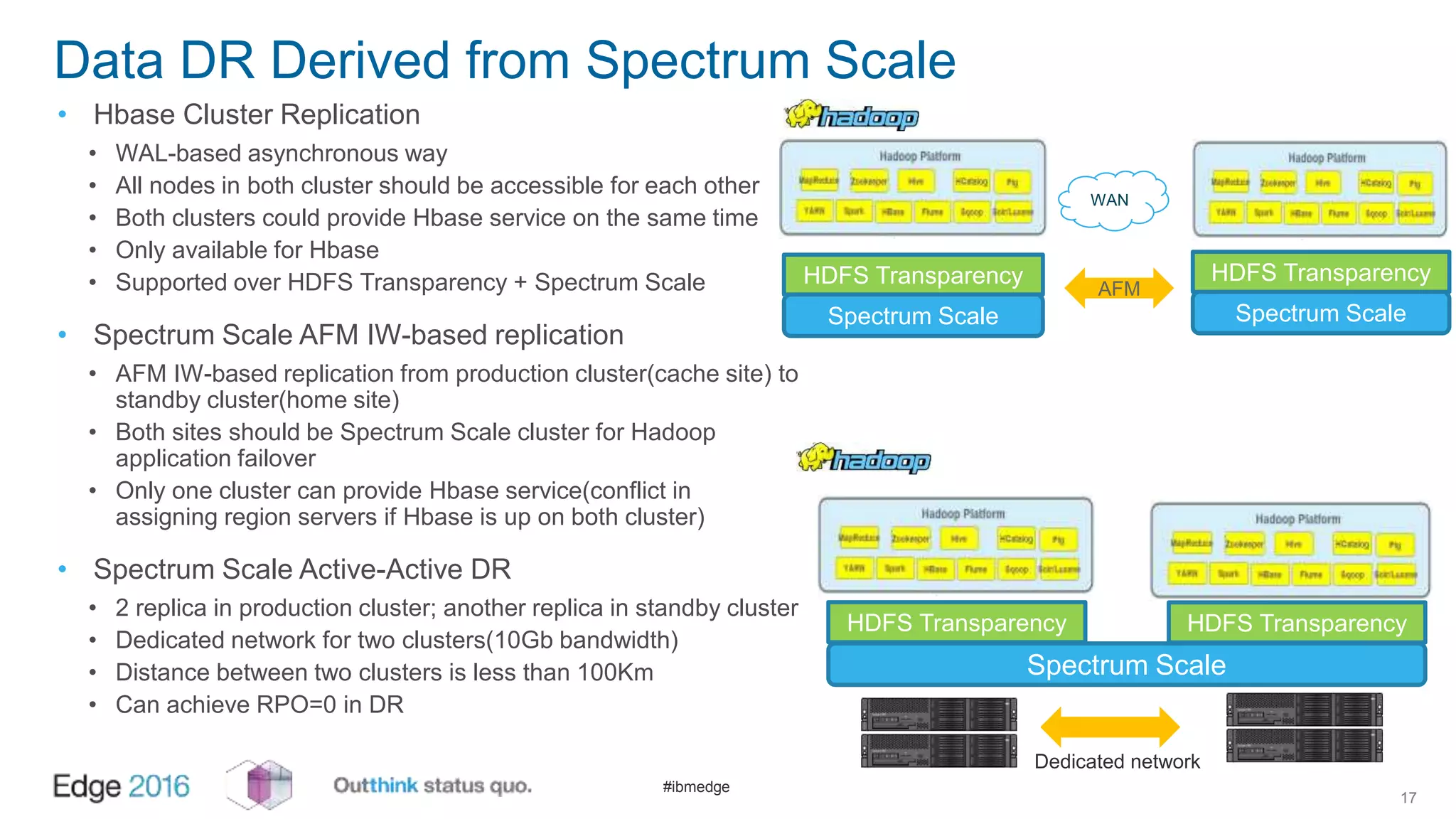

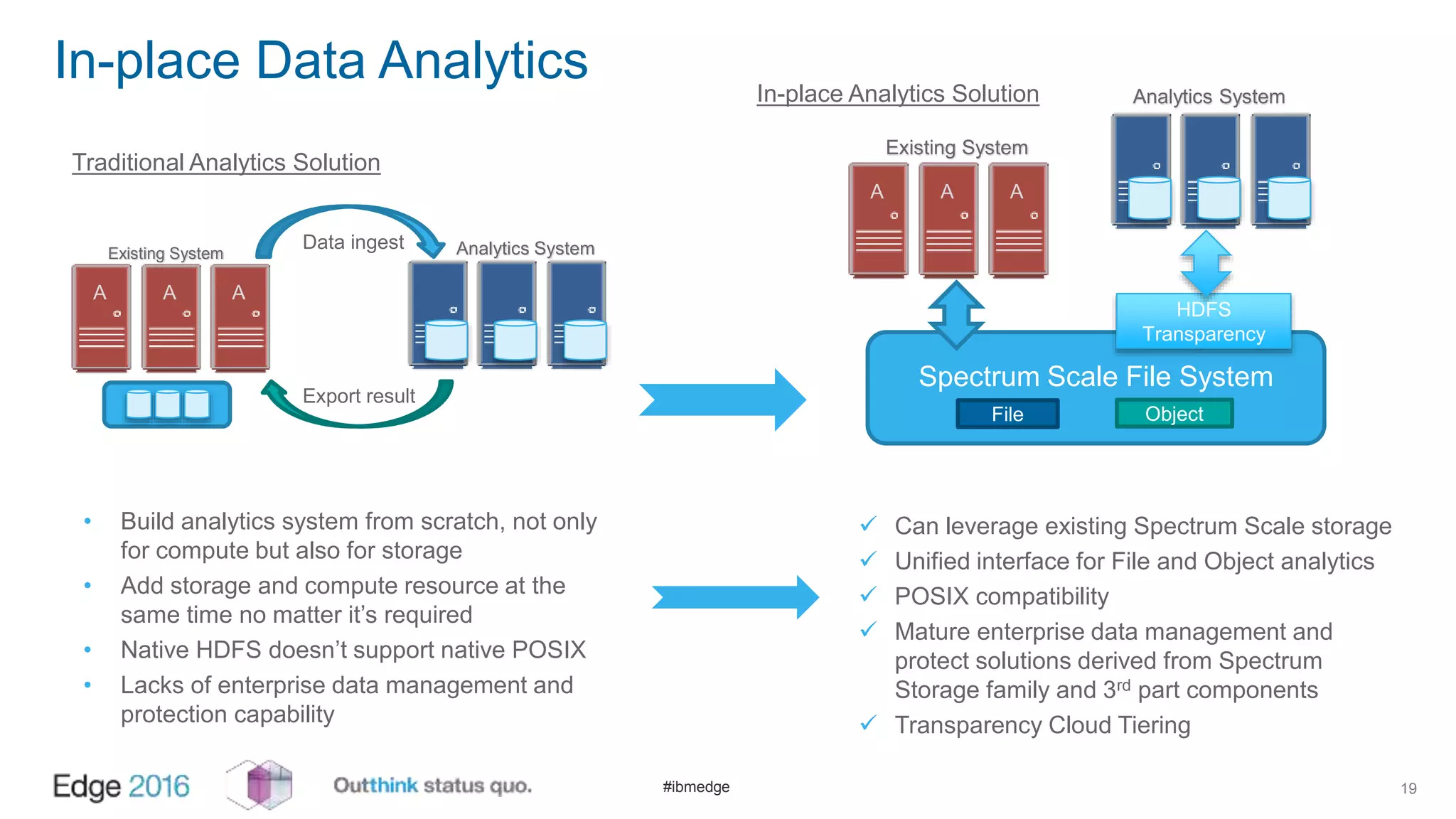

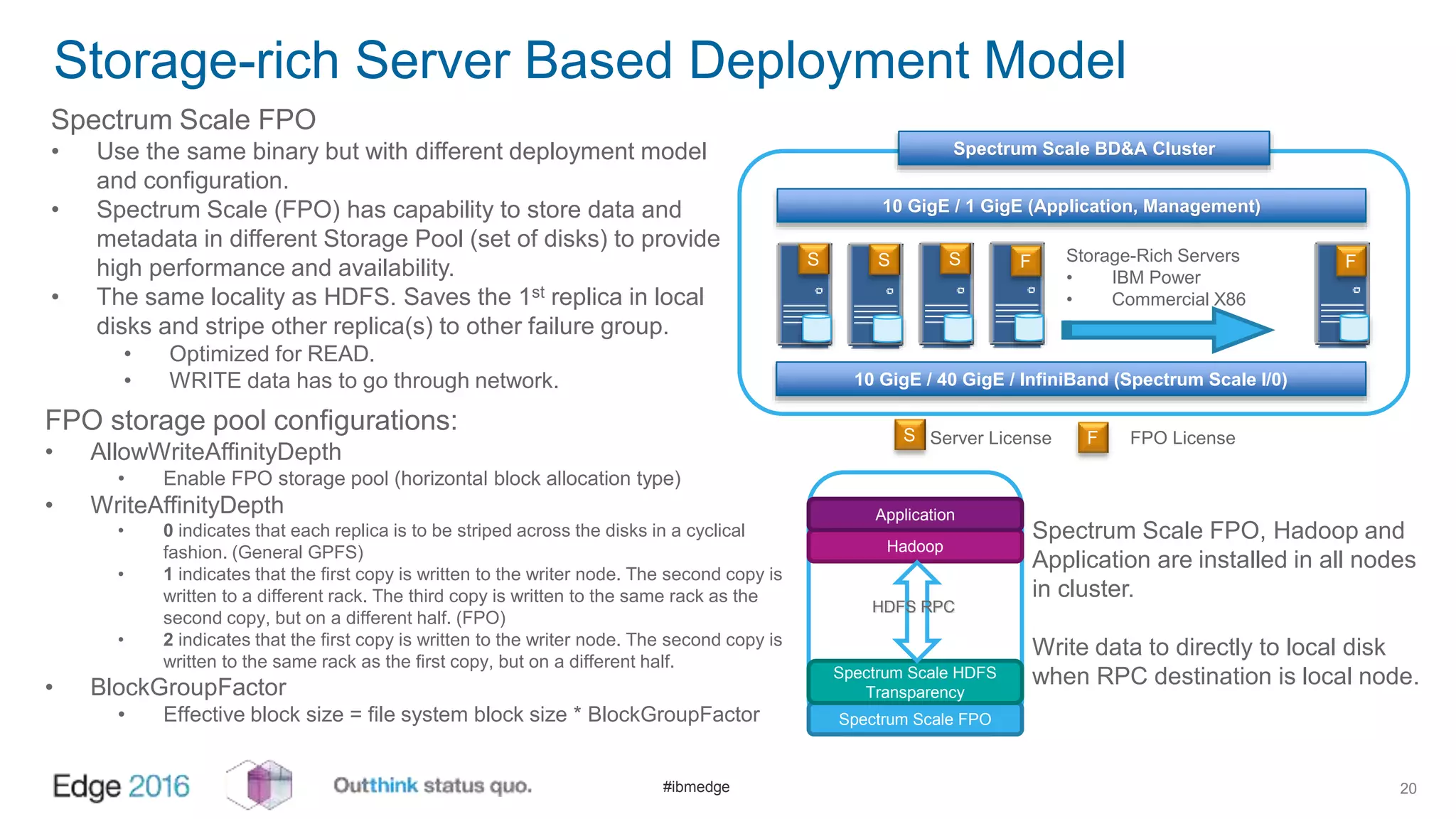

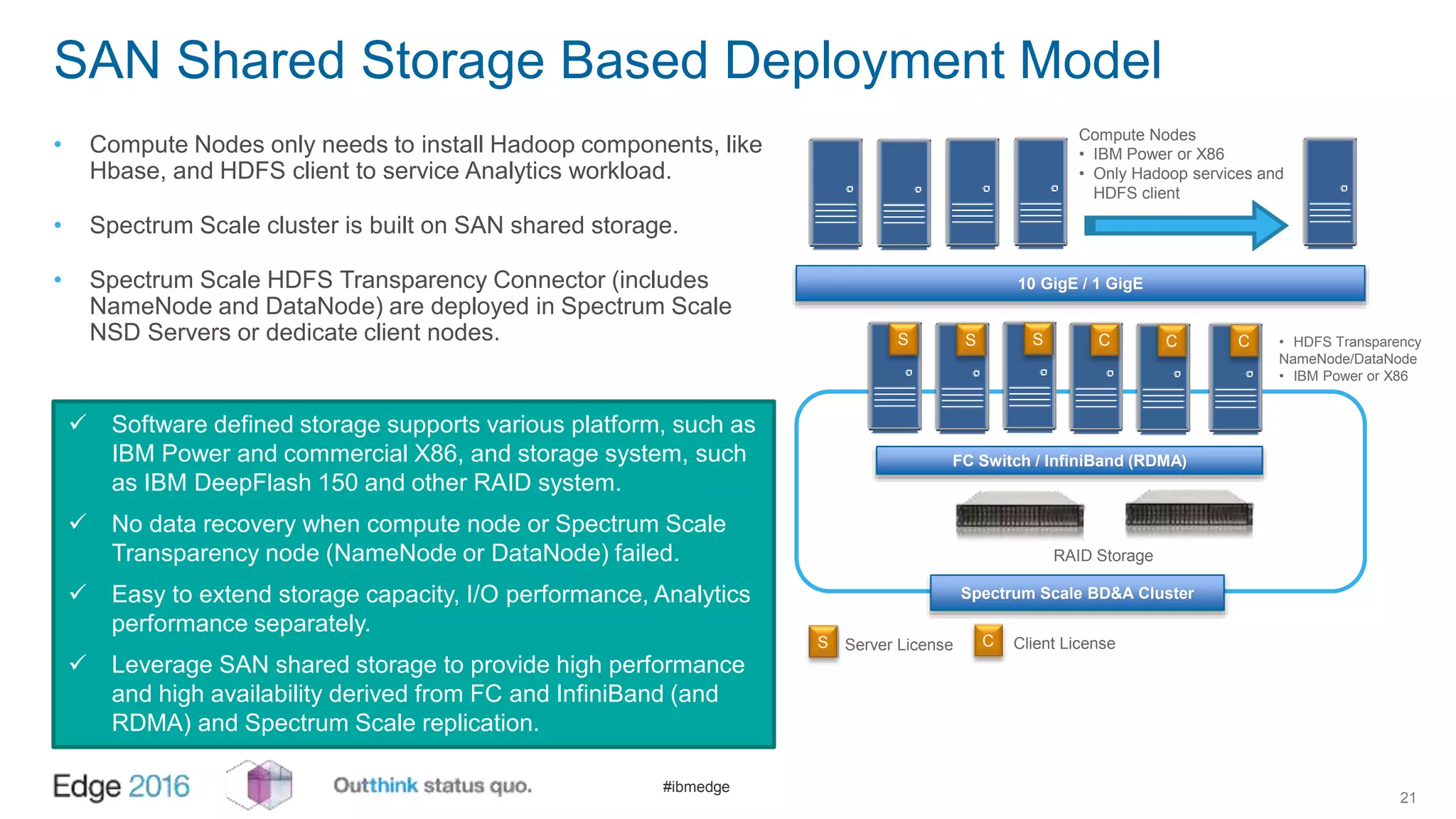

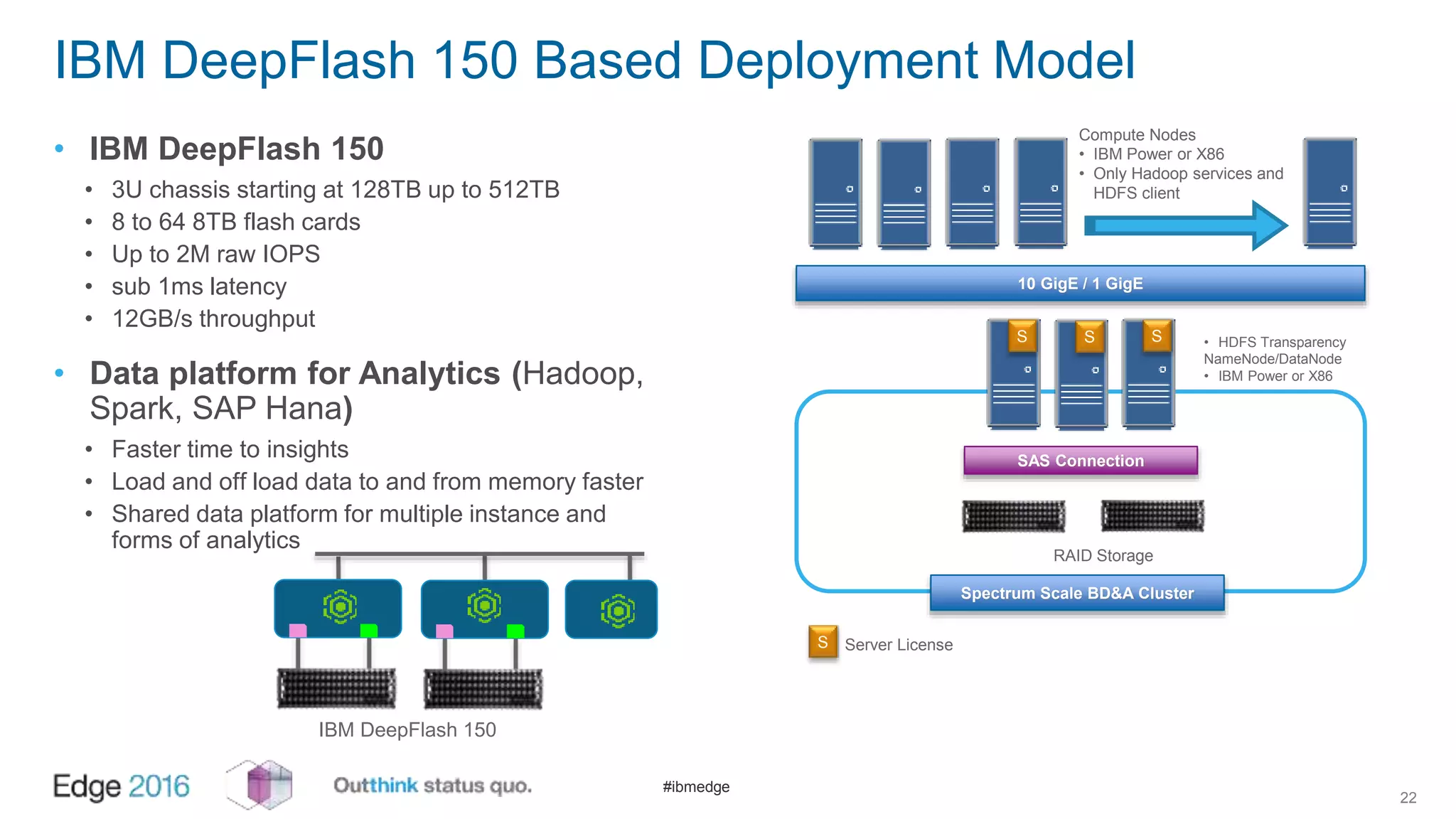

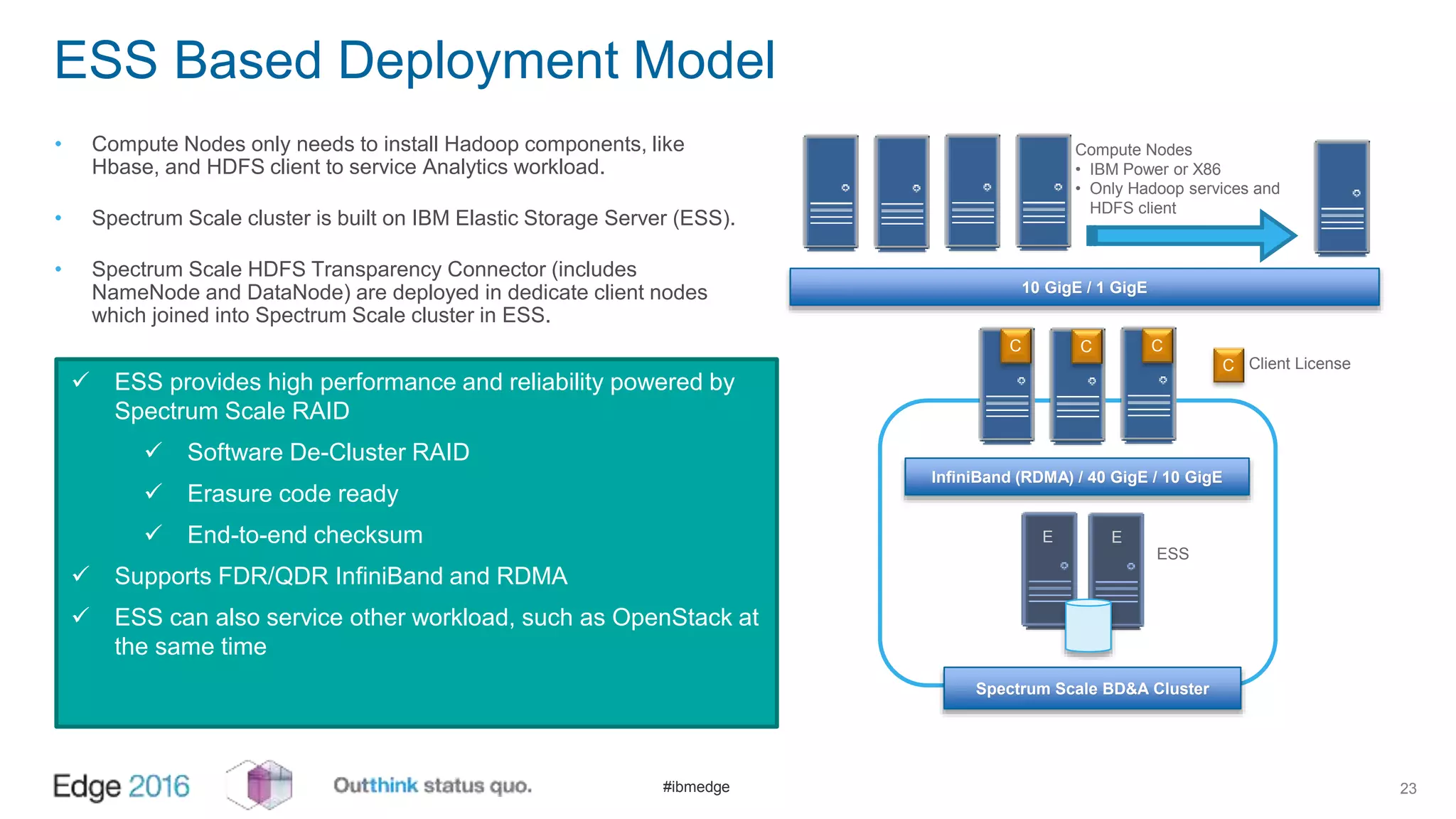

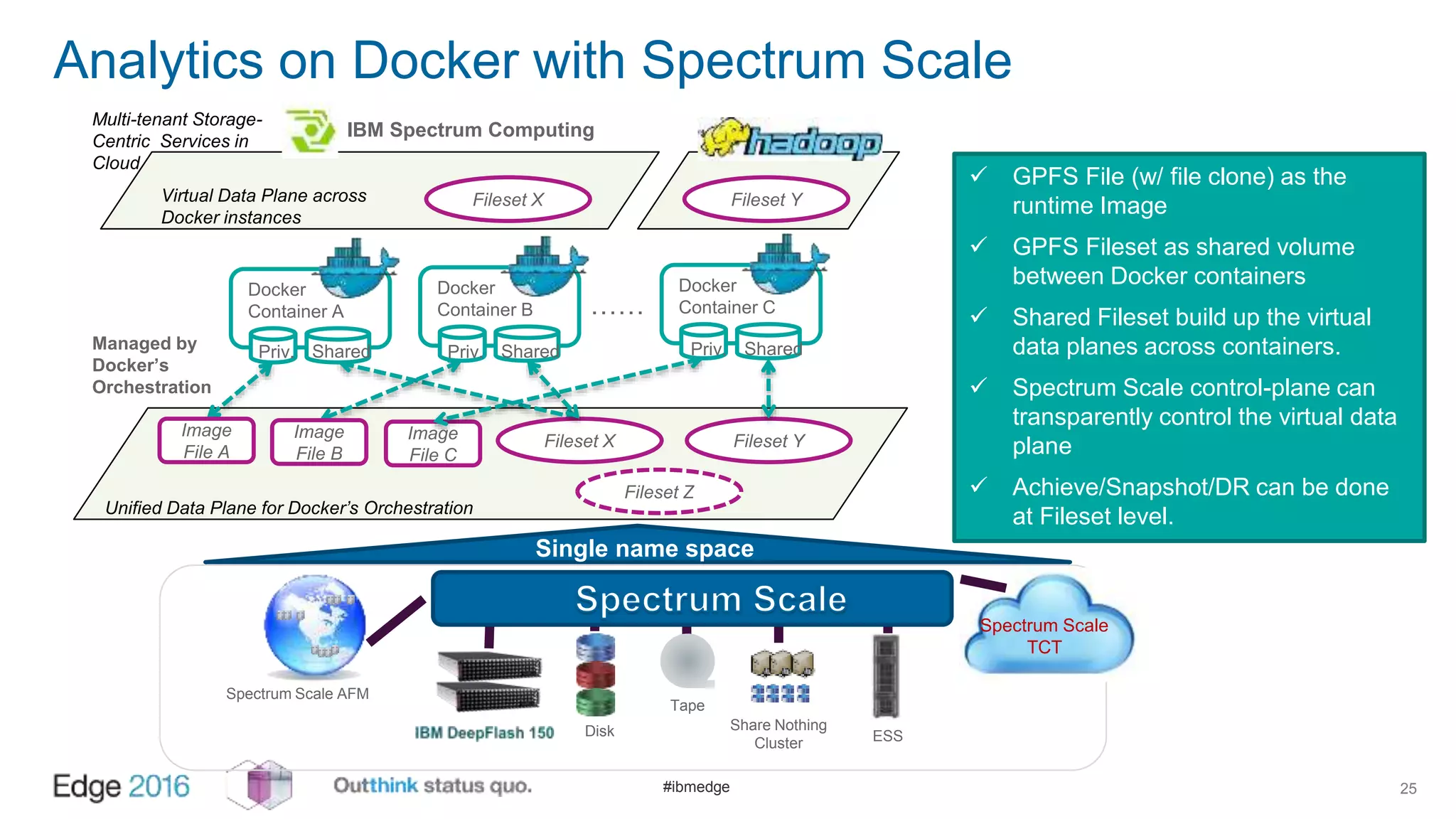

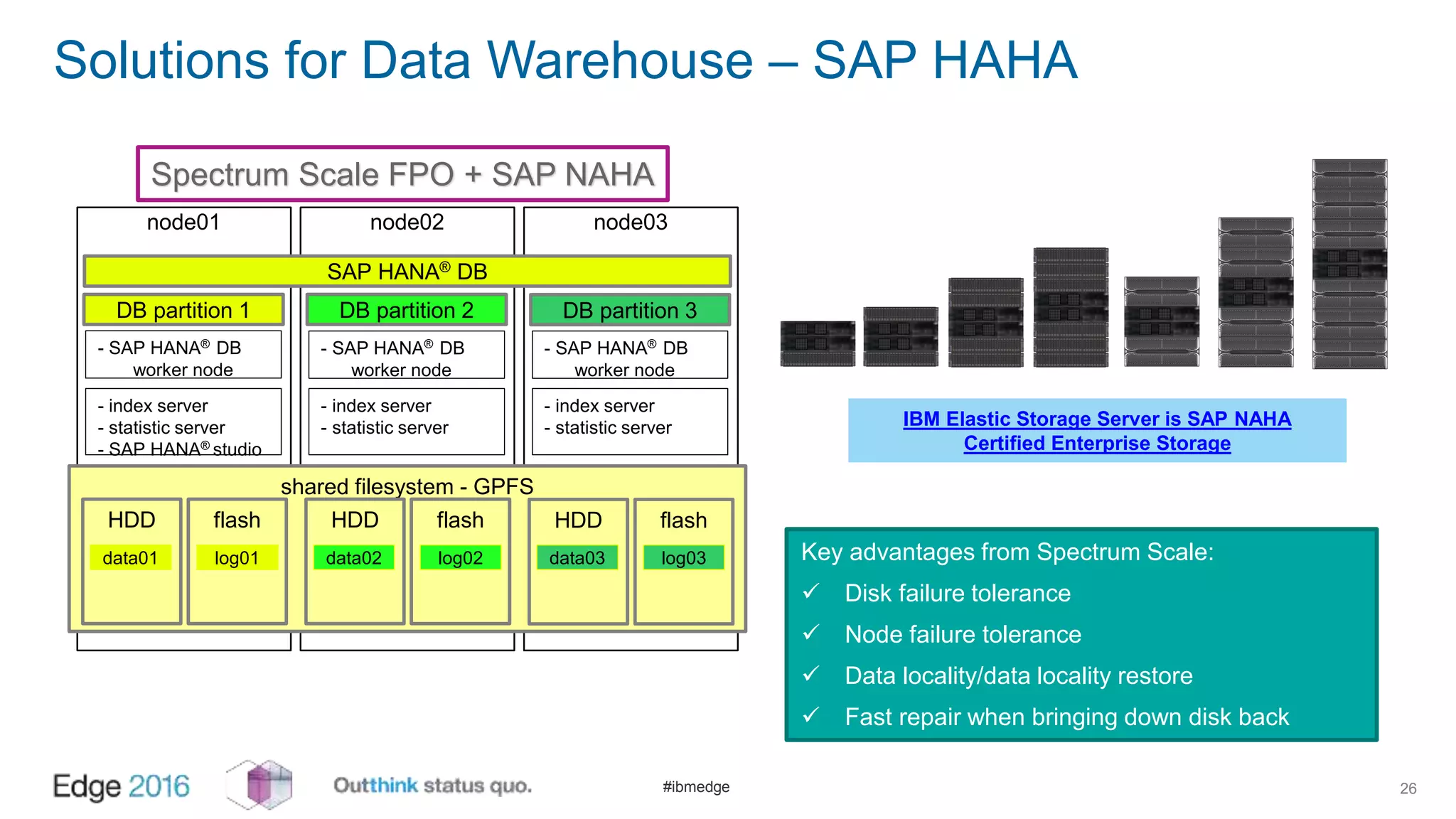

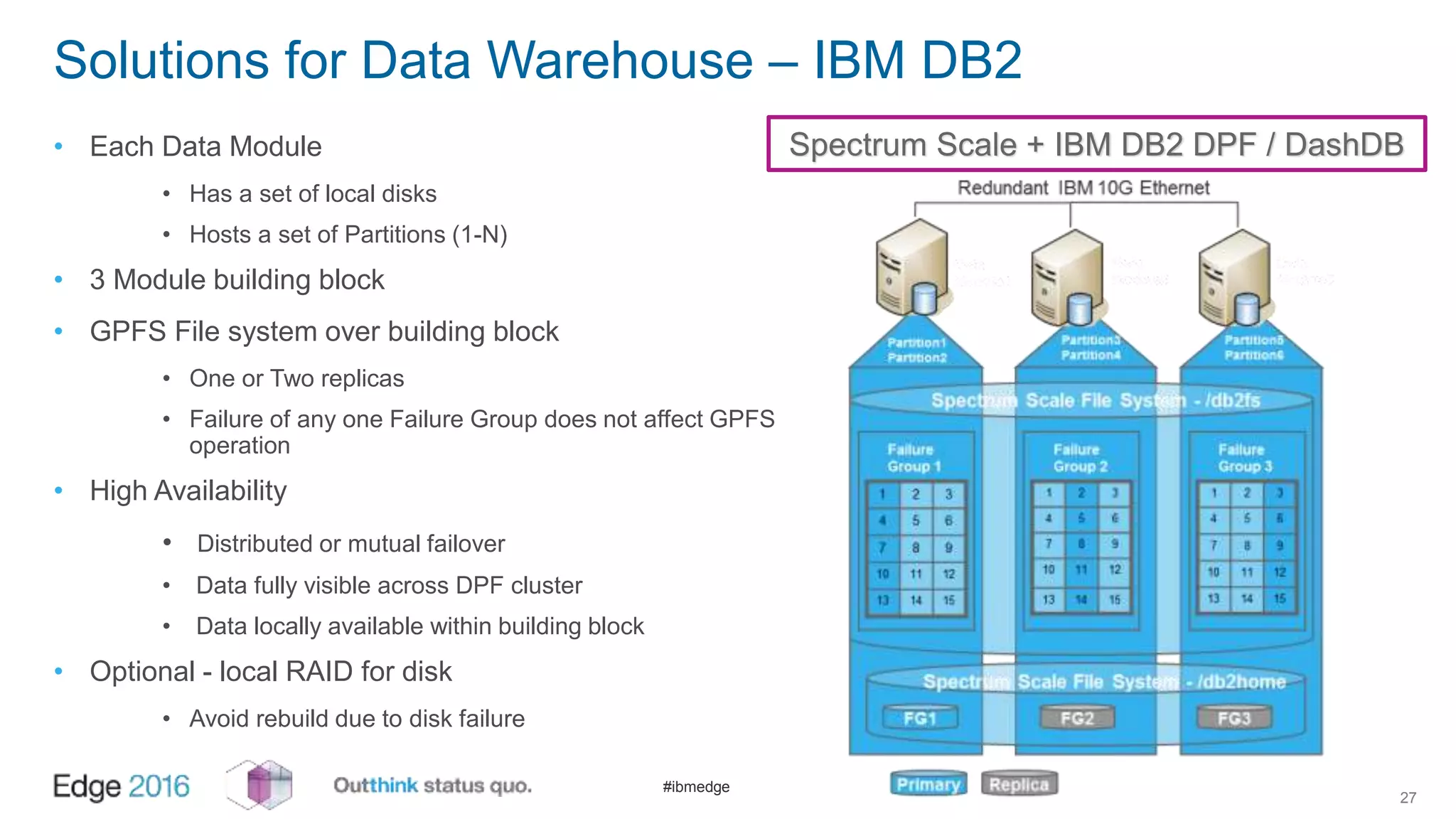

IBM Spectrum Scale provides a unified storage solution for Hadoop data analytics: it stays compatible with the HDFS client APIs while adding advanced data management and protection features. Its deployment models integrate storage with compute, so analytics workloads can scale efficiently. Key features include HDFS transparency, enhanced security, data locality awareness, and options for cloud tiering and archiving, which make it a strong choice for enterprise data analytics.