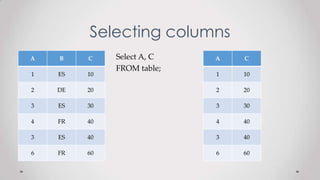

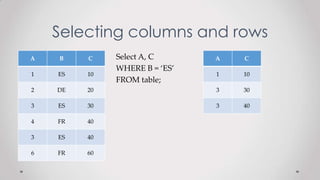

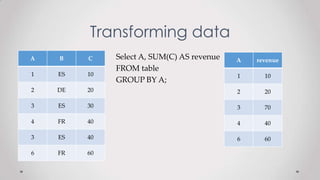

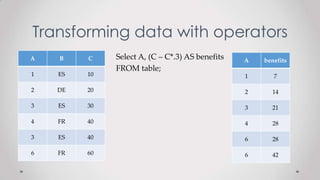

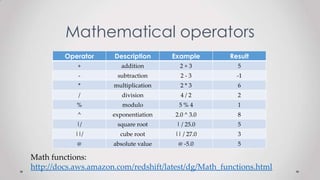

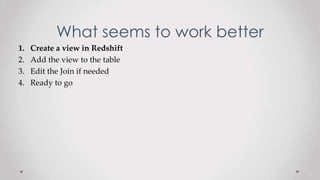

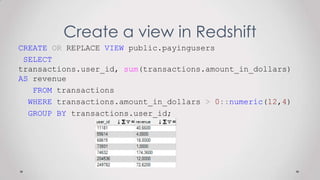

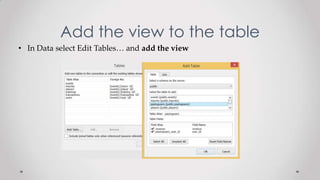

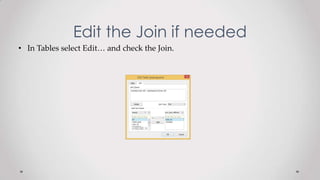

The document outlines how to generate a new table with Tableau and Redshift using read-only queries, so that nothing in the existing data is deleted, inserted, or updated. It includes examples of selecting columns and rows, transforming data with grouping and mathematical operators, and creating views in Redshift. It also links to the relevant SQL and Redshift documentation for further reference.
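
The steps summarized above (select columns and rows, transform with grouping and a mathematical operator, then expose the result as a view) can be sketched as follows. This is only an illustration under stated assumptions: it uses Python's built-in `sqlite3` as a local stand-in for Redshift, and the `orders` table, its columns, and the `region_revenue` view are hypothetical names that do not come from the original document. The `INSERT` only builds the sample data; the derived result itself is produced with `SELECT` and `CREATE VIEW` alone.

```python
import sqlite3

# Assumption: an in-memory SQLite database stands in for a Redshift cluster.
conn = sqlite3.connect(":memory:")

# Hypothetical sample data, created only so the example is self-contained.
conn.executescript("""
    CREATE TABLE orders (region TEXT, product TEXT, quantity INTEGER, unit_price REAL);
    INSERT INTO orders VALUES
        ('east', 'widget', 2, 5.0),
        ('east', 'gadget', 1, 20.0),
        ('west', 'widget', 4, 5.0),
        ('west', 'gadget', 0, 20.0);
""")

# Select columns (region, quantity, unit_price), filter rows (quantity > 0),
# apply a mathematical operator (quantity * unit_price), group by region,
# and store the query as a view instead of modifying the source table.
conn.execute("""
    CREATE VIEW region_revenue AS
    SELECT region, SUM(quantity * unit_price) AS revenue
    FROM orders
    WHERE quantity > 0
    GROUP BY region
""")

rows = conn.execute(
    "SELECT region, revenue FROM region_revenue ORDER BY region"
).fetchall()

# The source table still holds the same four rows after the view is created.
source_count = conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]

print(rows)
print(source_count)
```

Because a view stores the query rather than a copy of the data, a tool such as Tableau could read `region_revenue` like a table while the underlying rows stay unchanged. The same `SELECT ... GROUP BY` and `CREATE VIEW` statements are standard SQL, though Redshift-specific options should be checked against its documentation.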