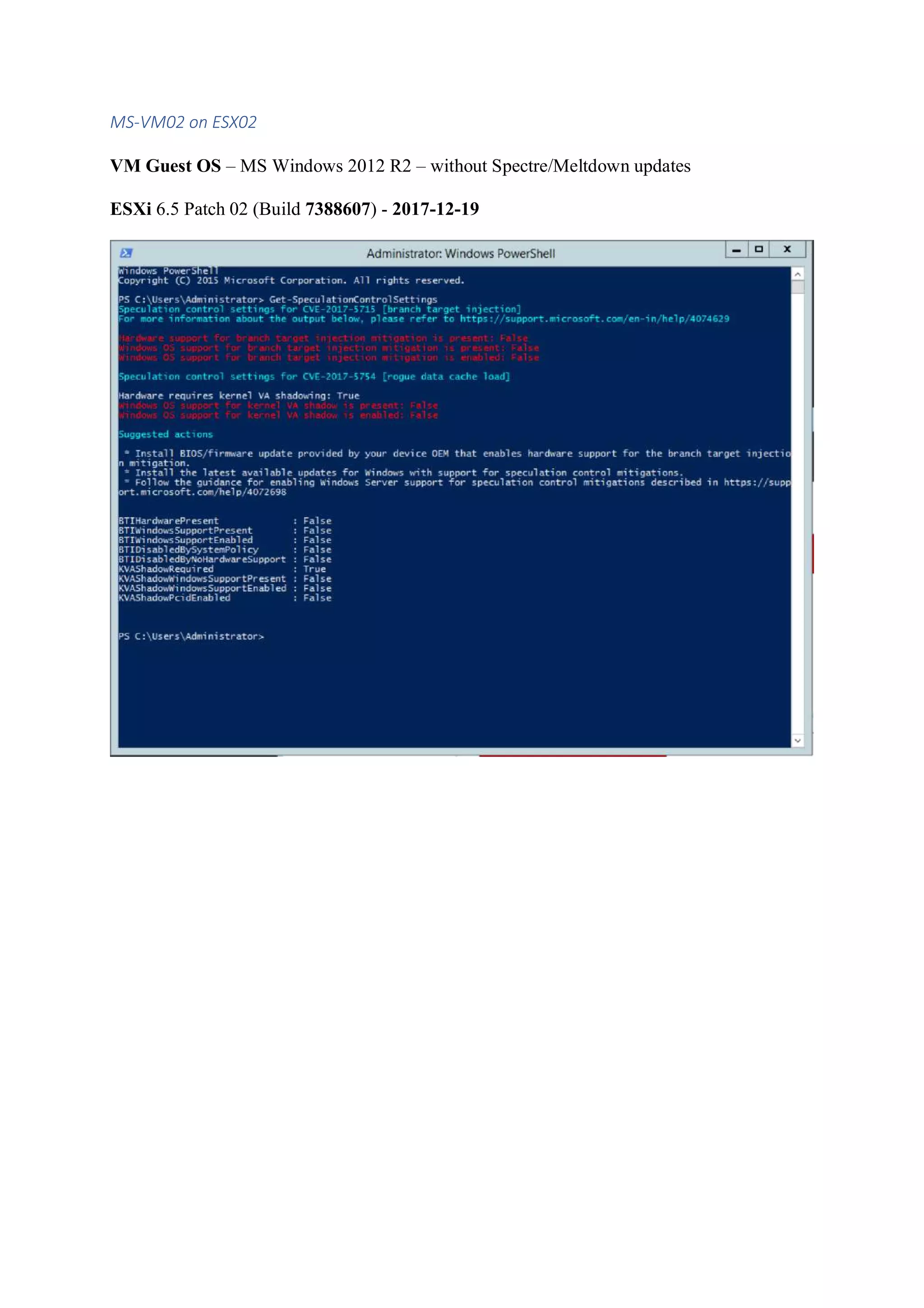

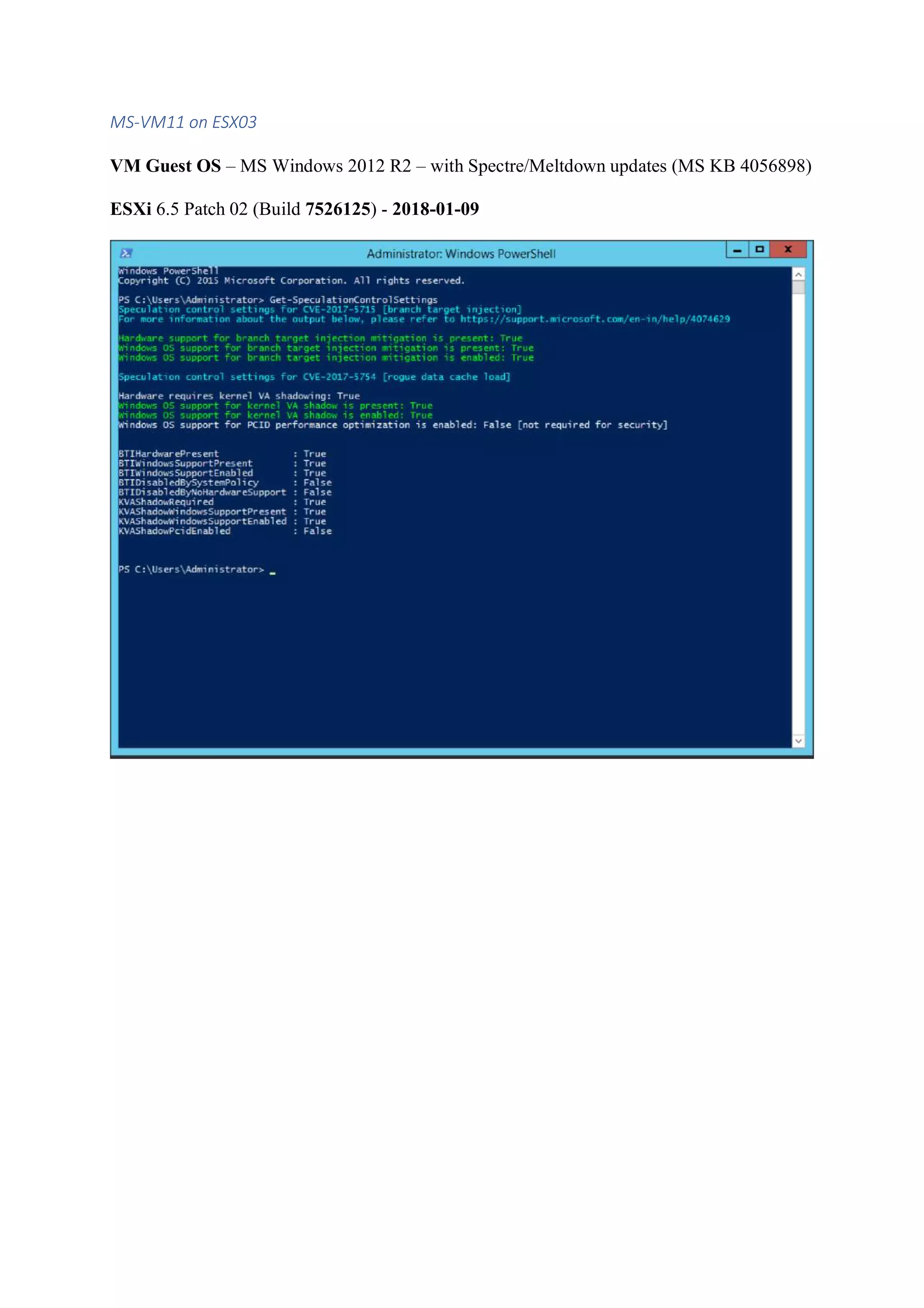

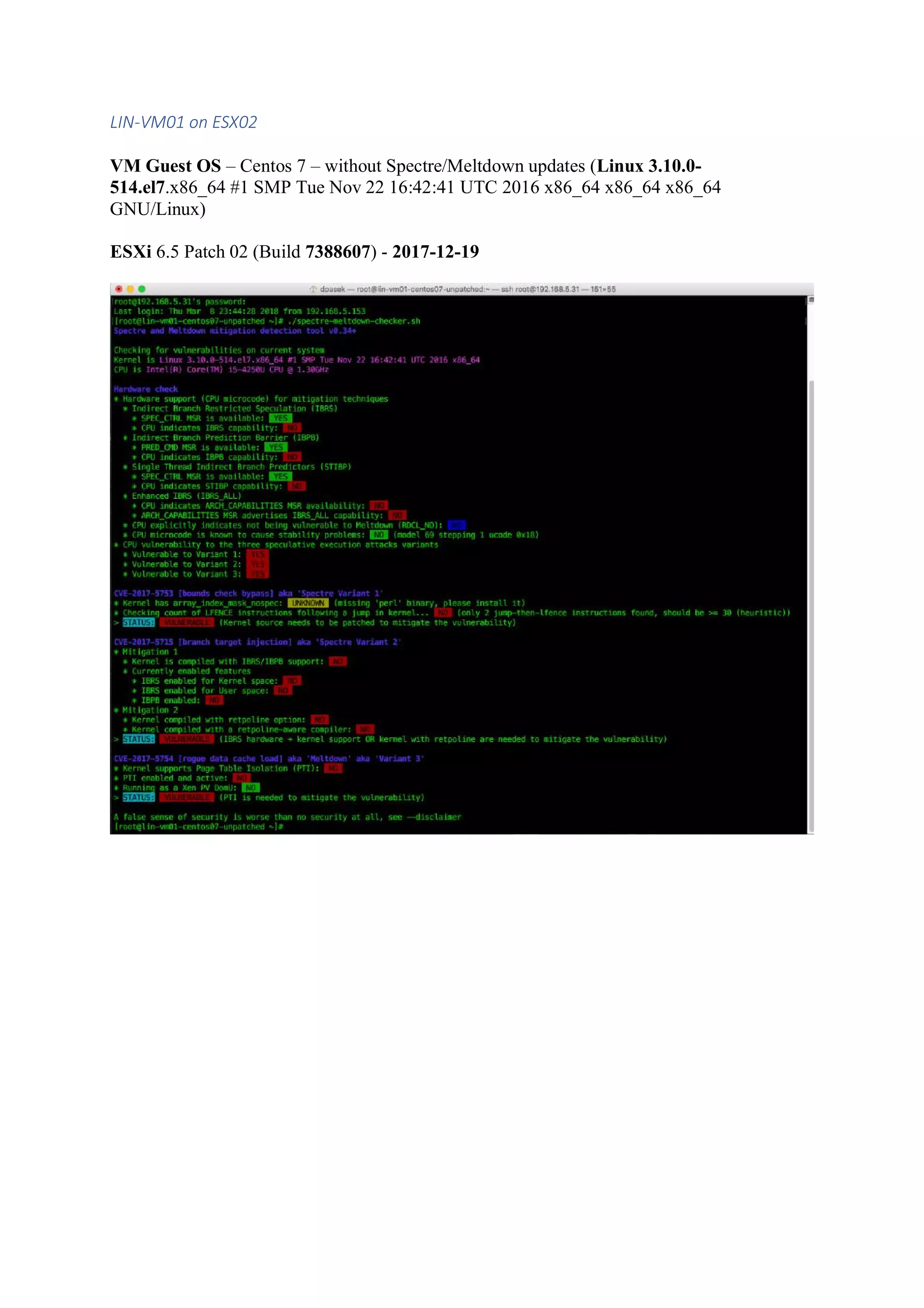

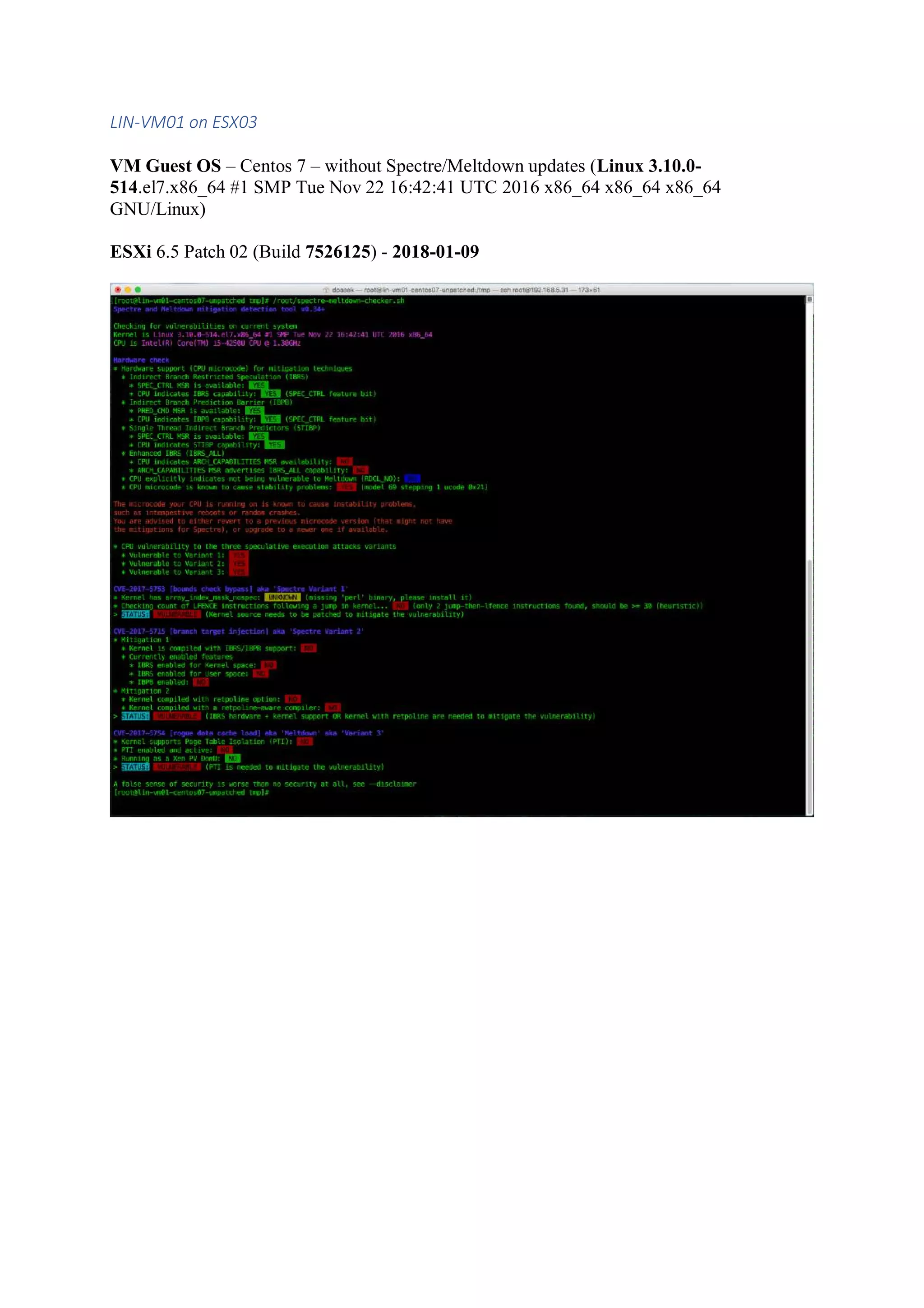

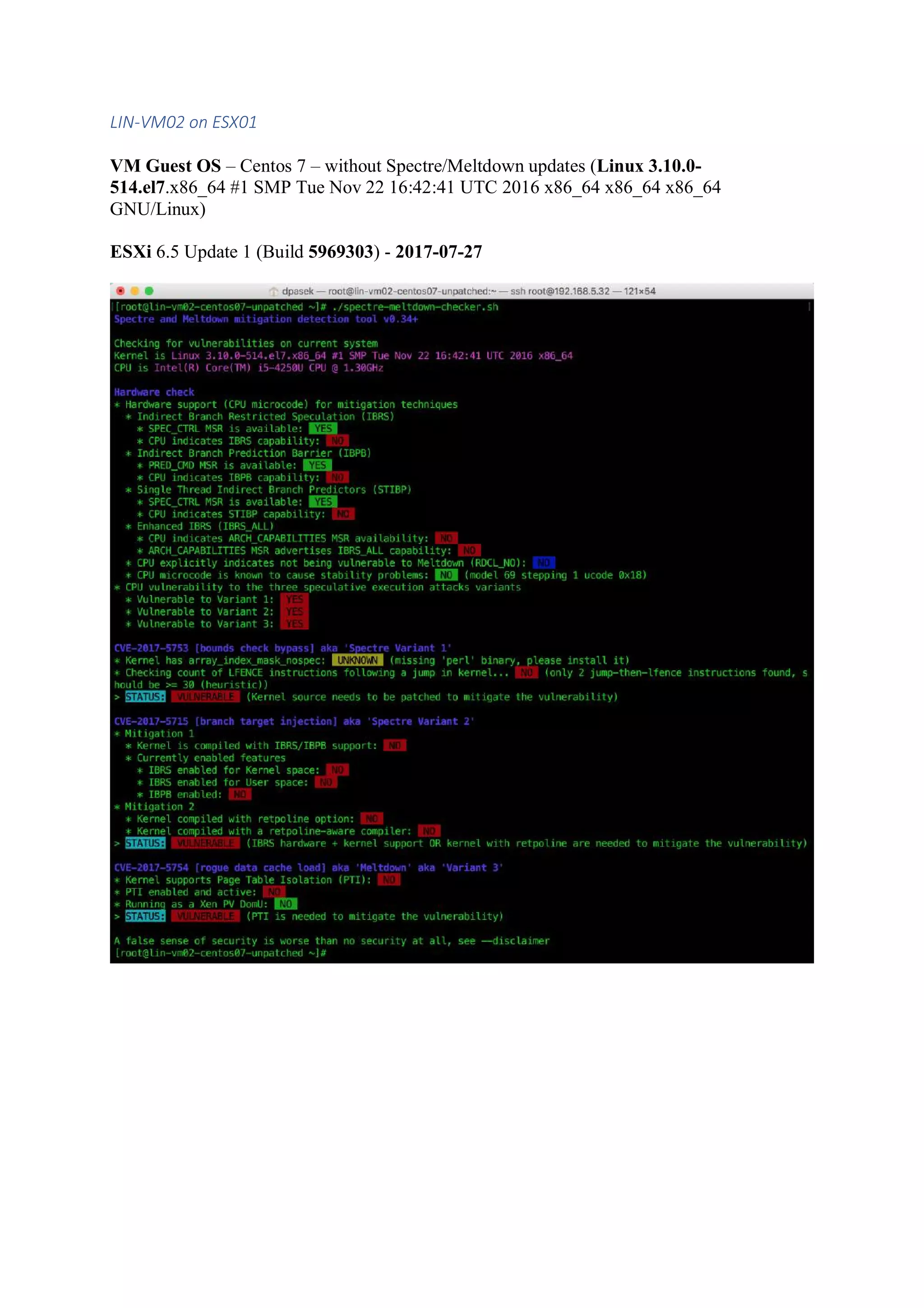

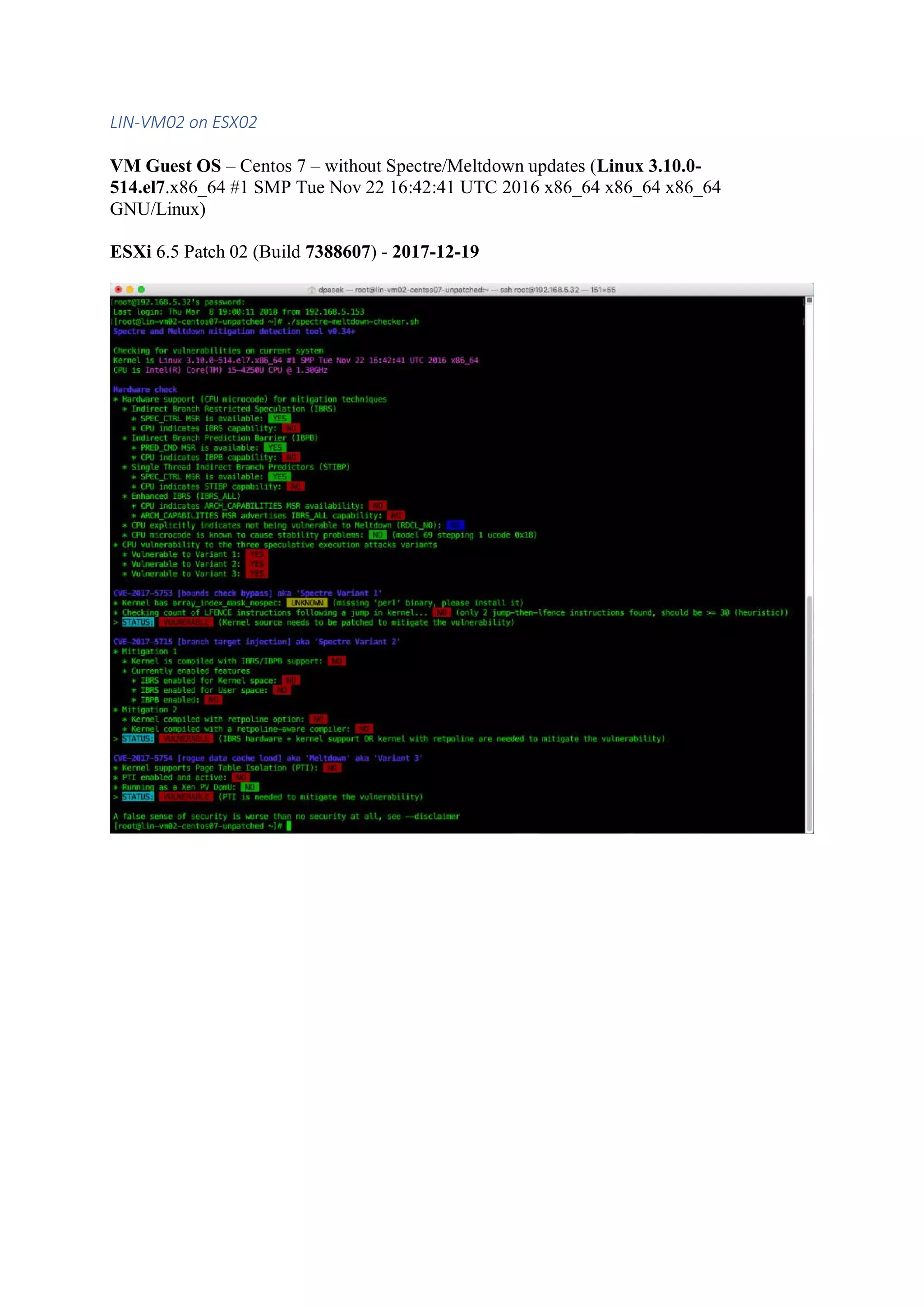

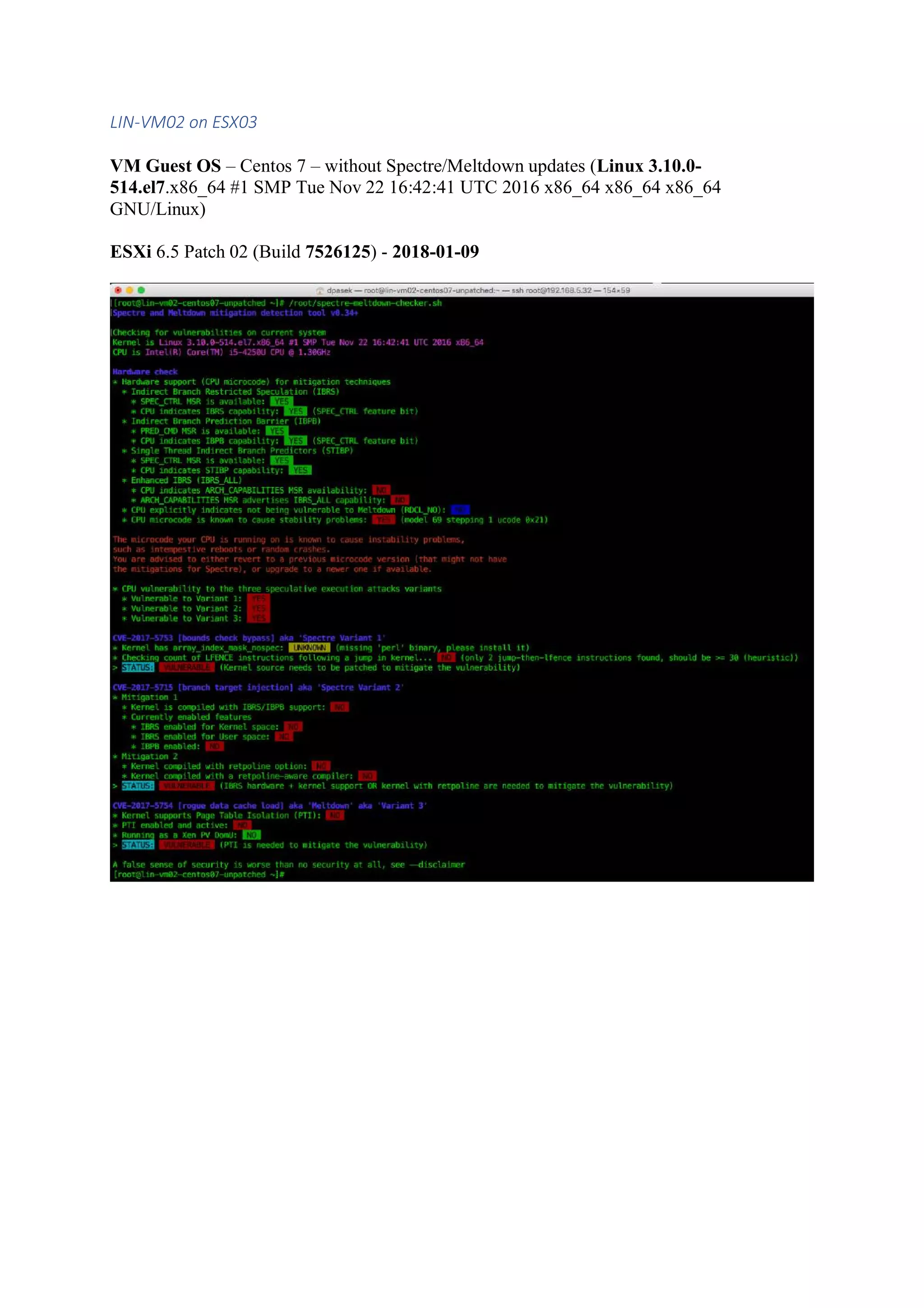

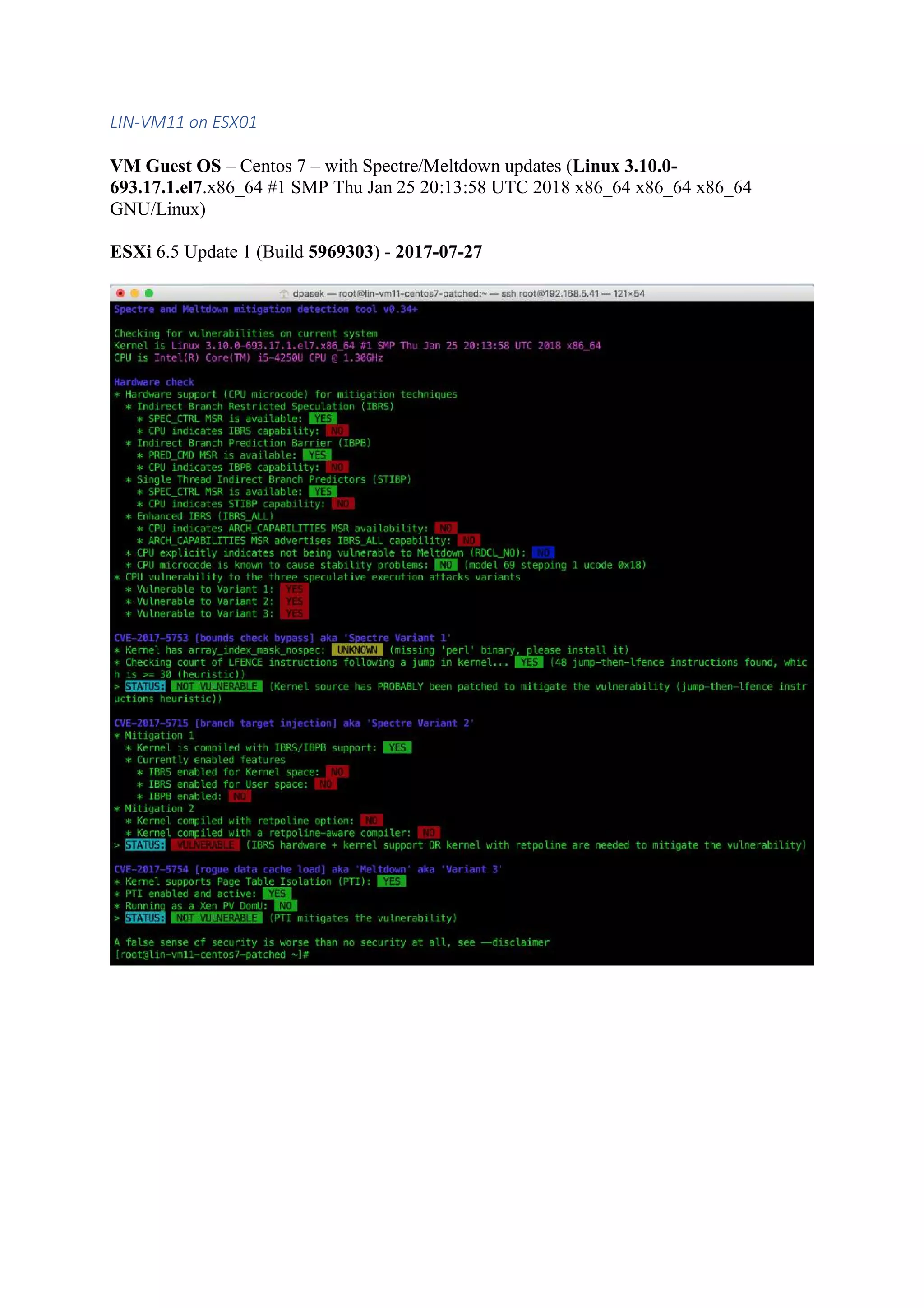

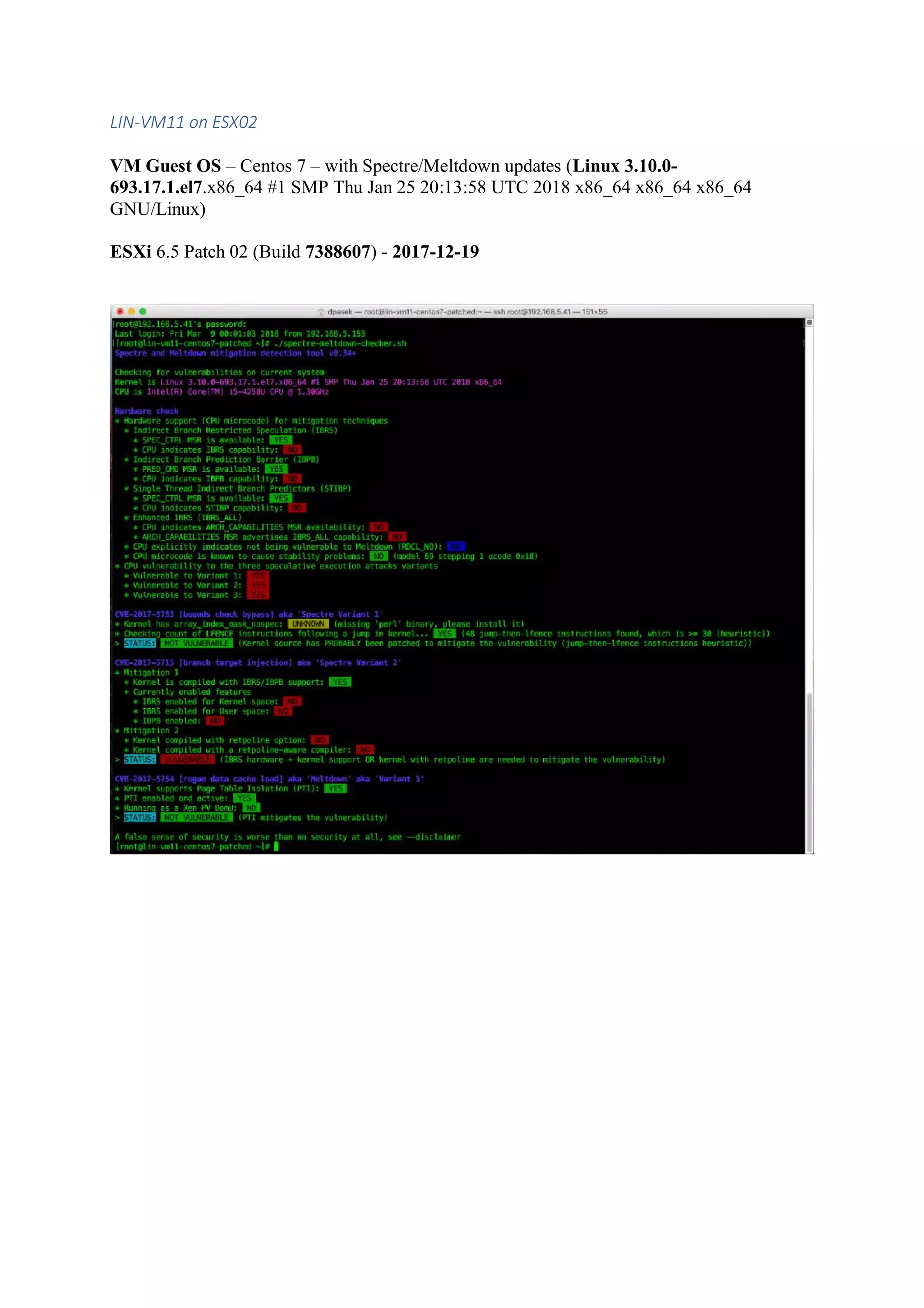

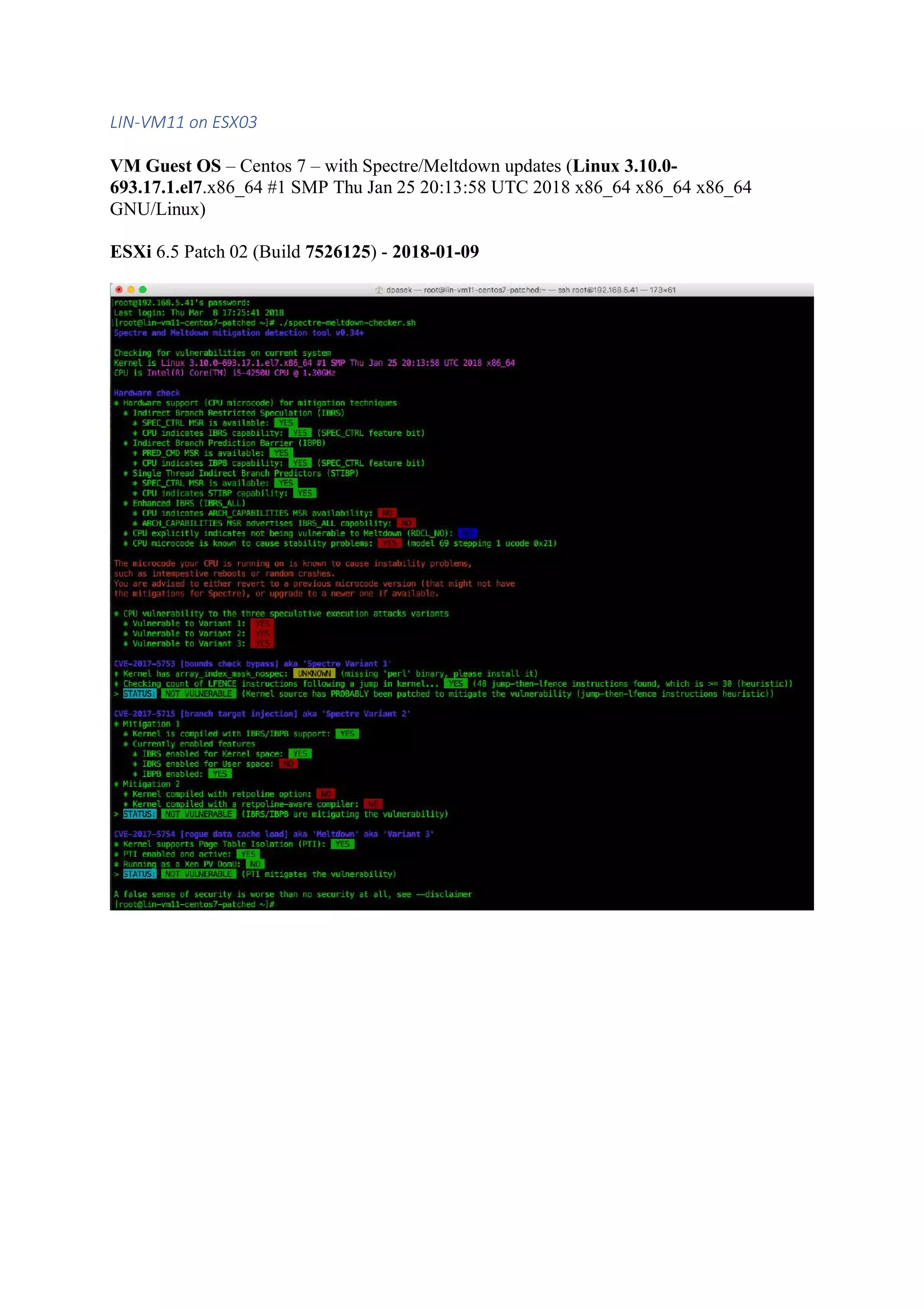

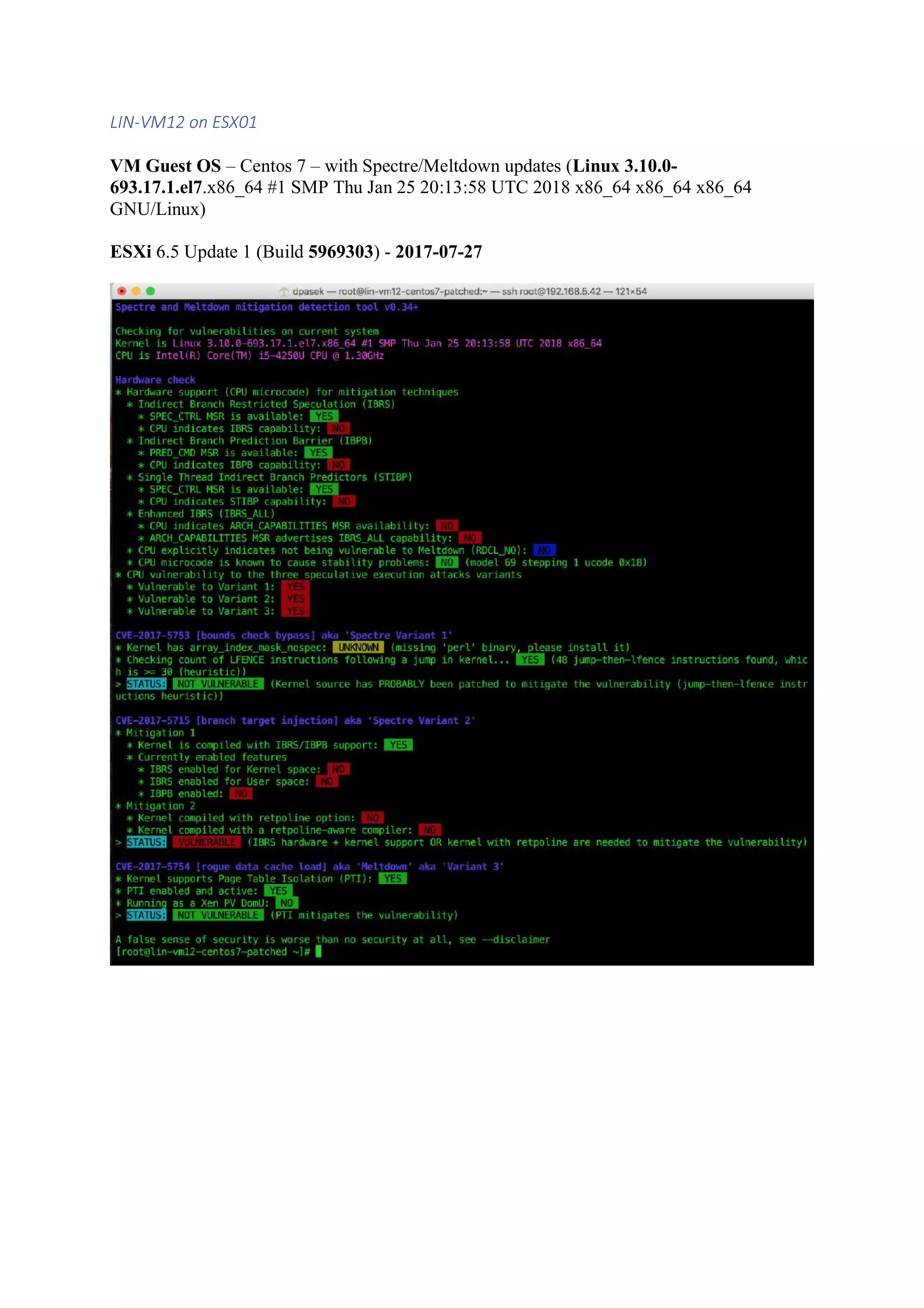

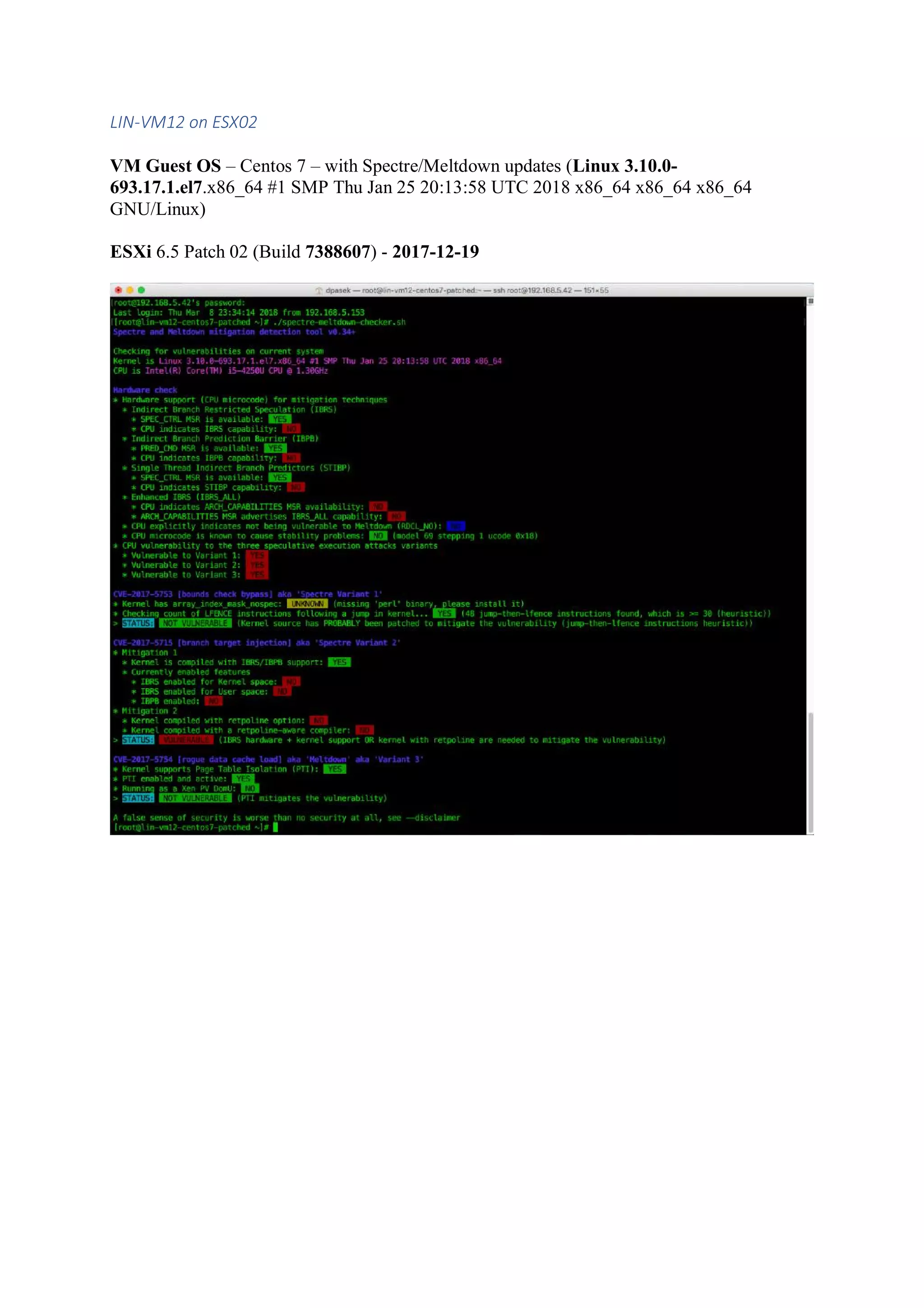

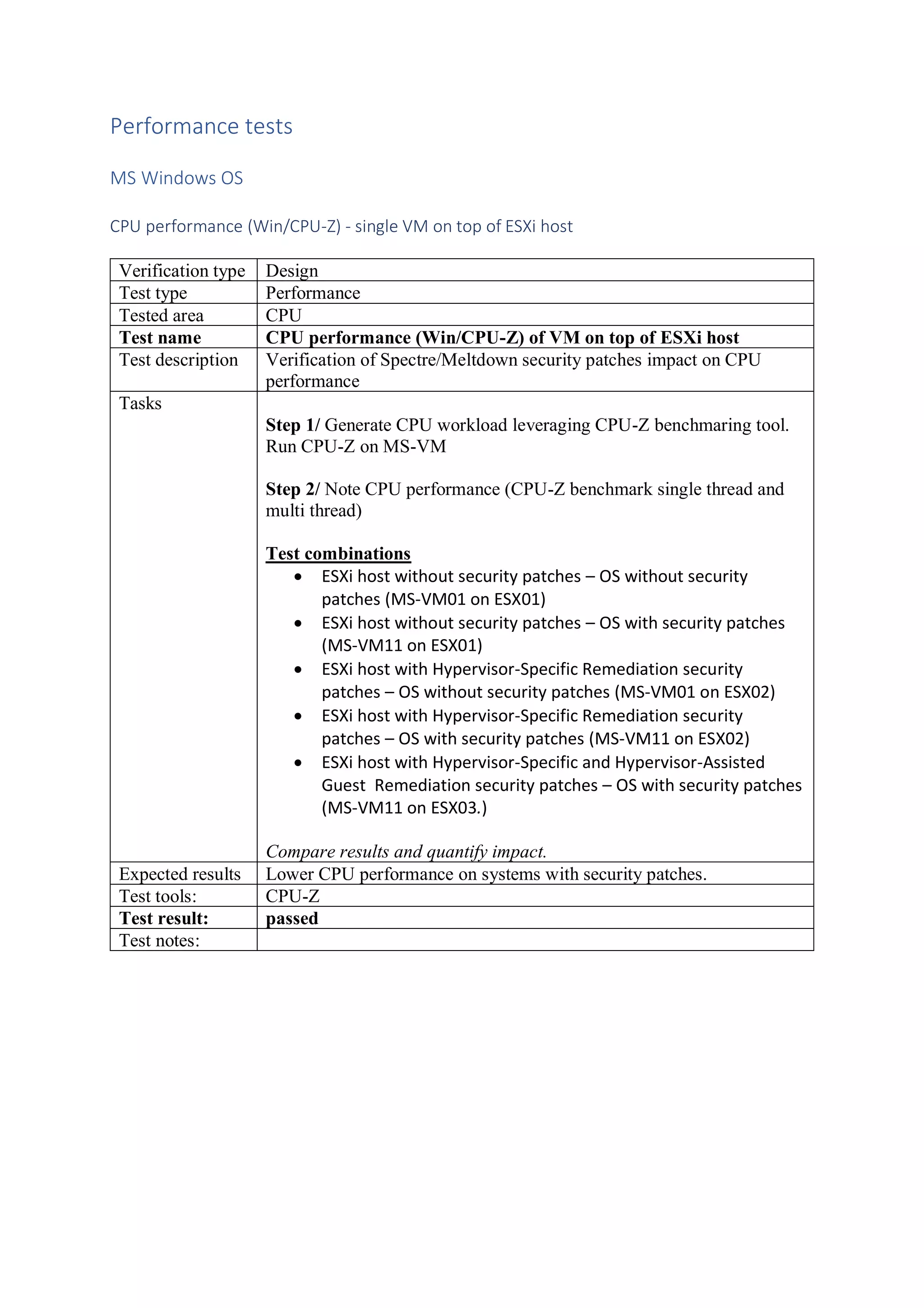

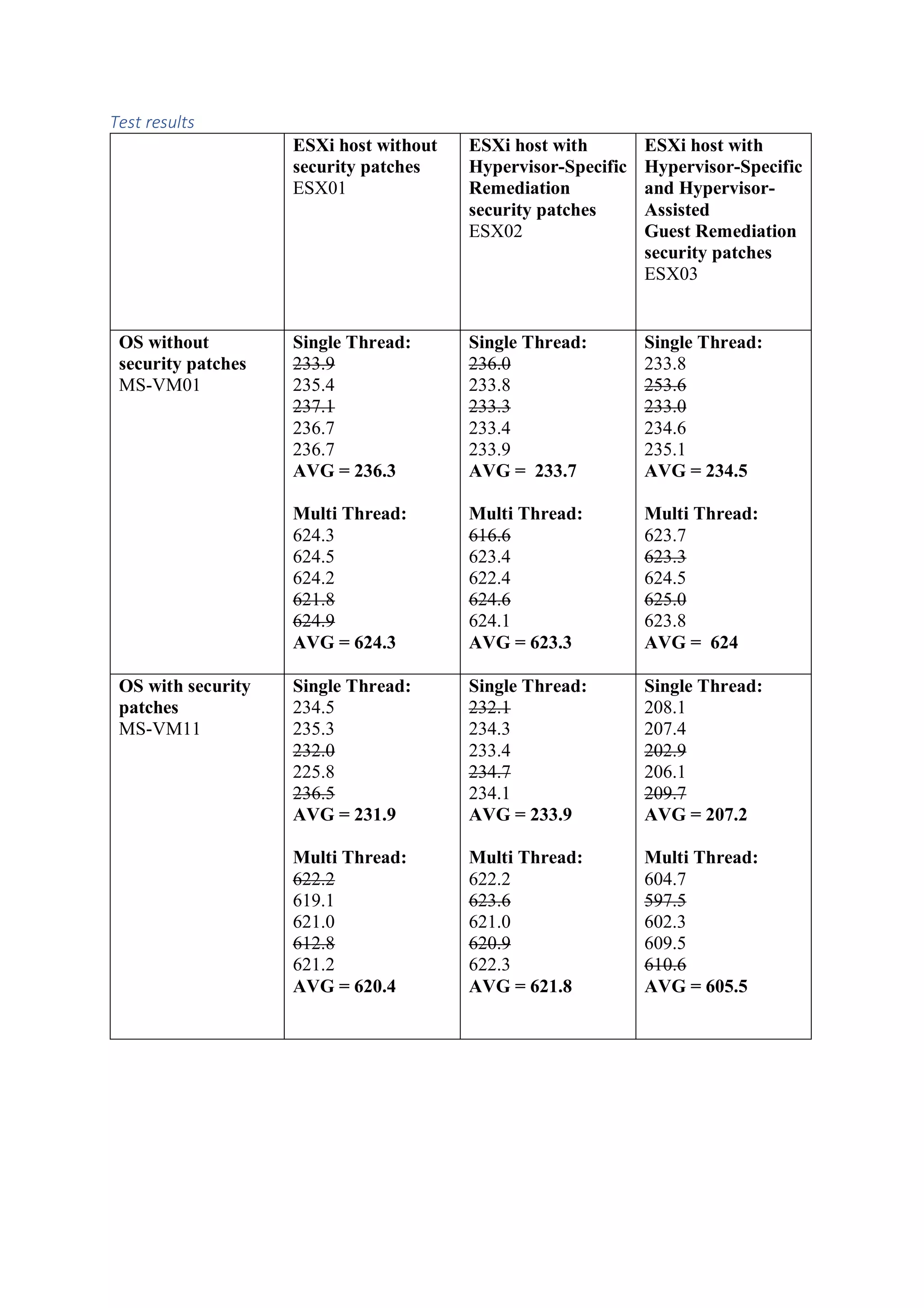

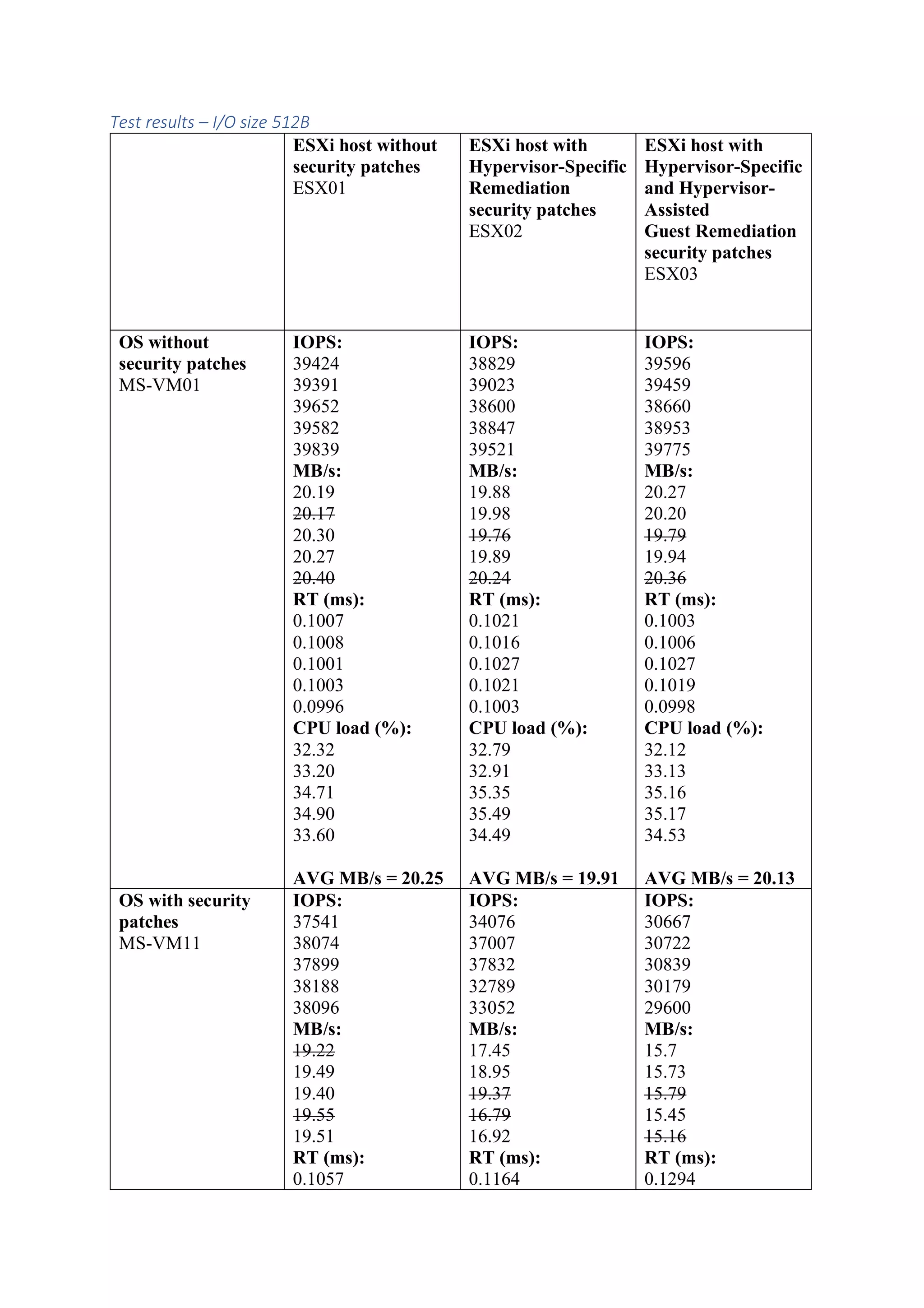

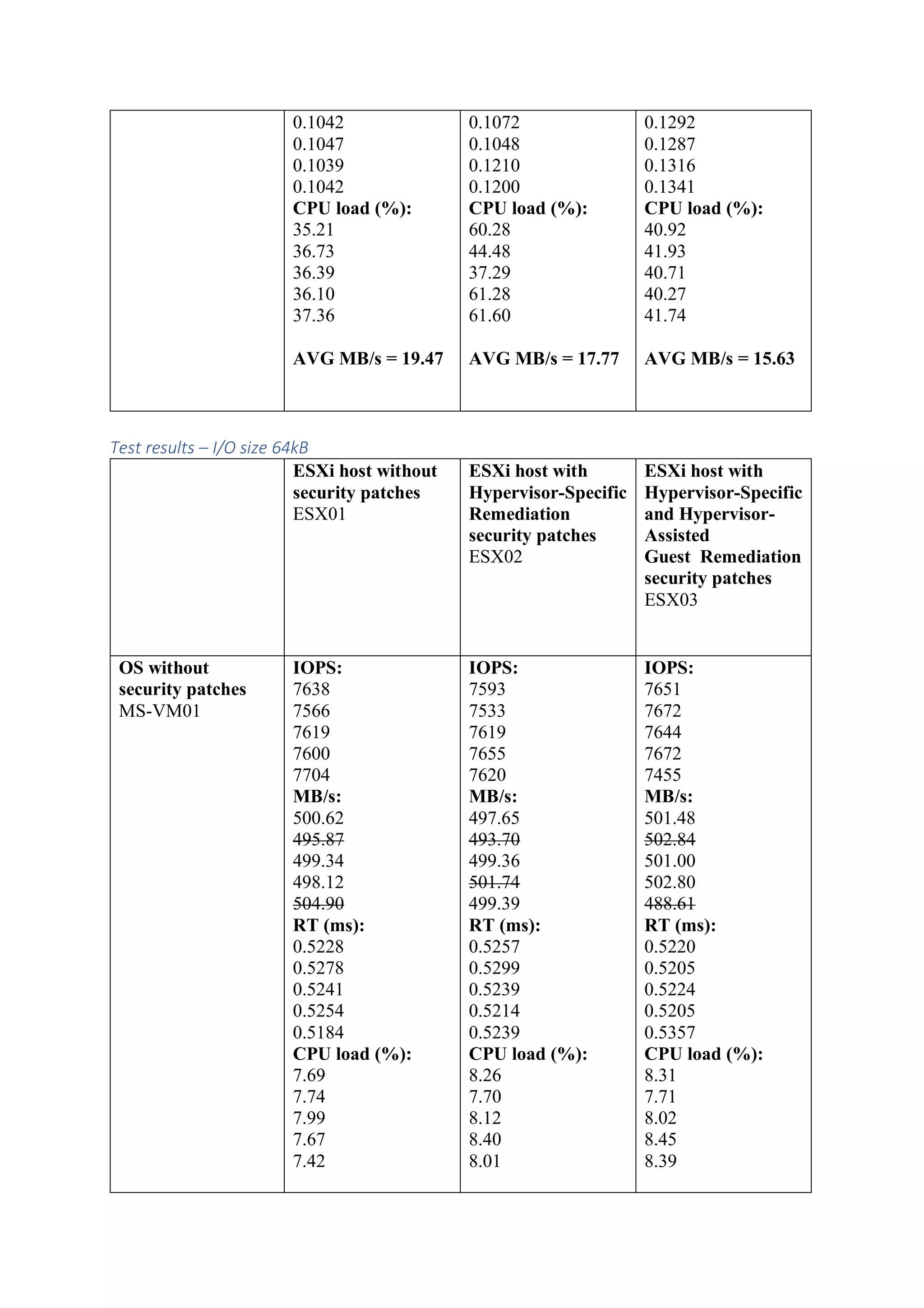

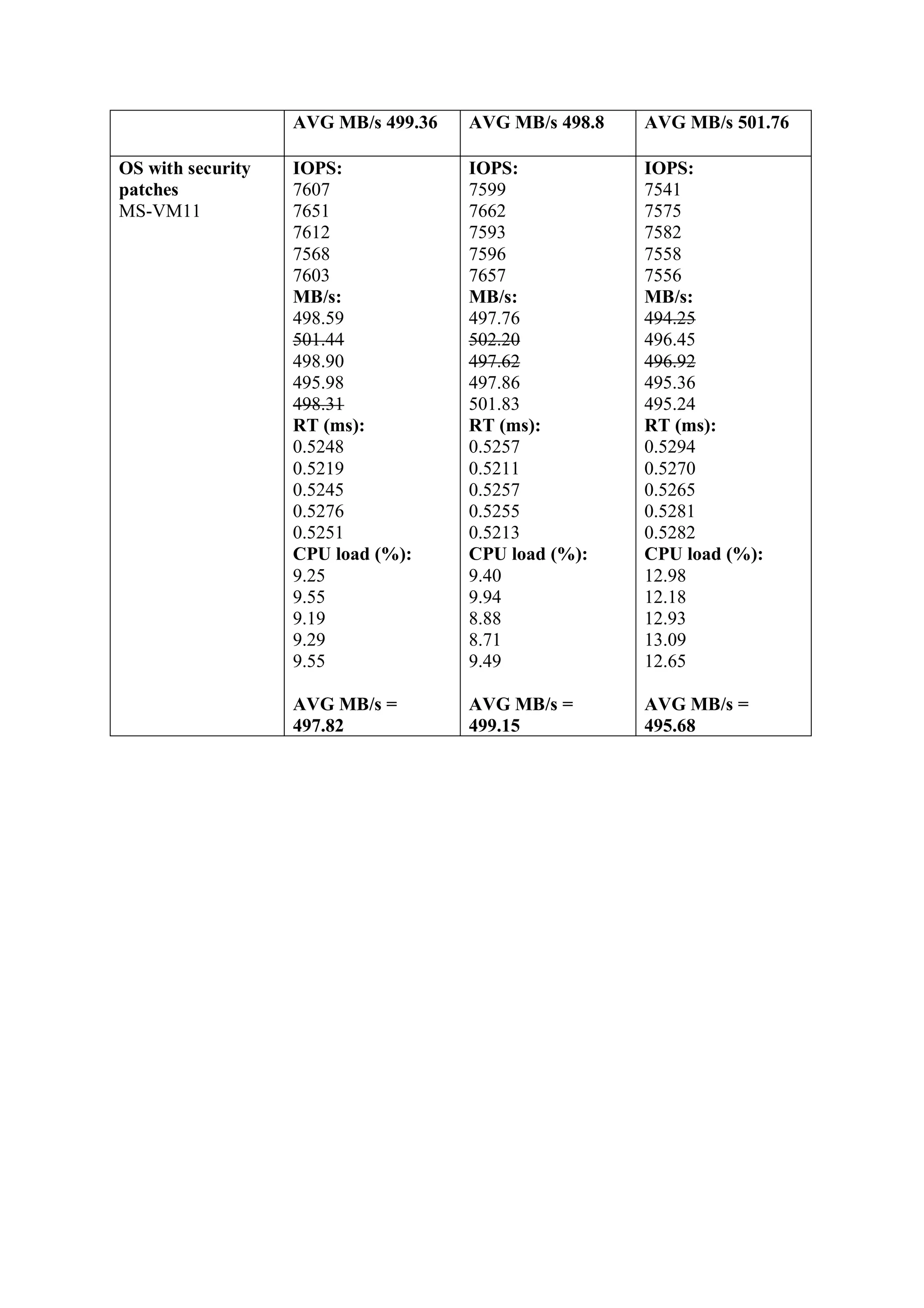

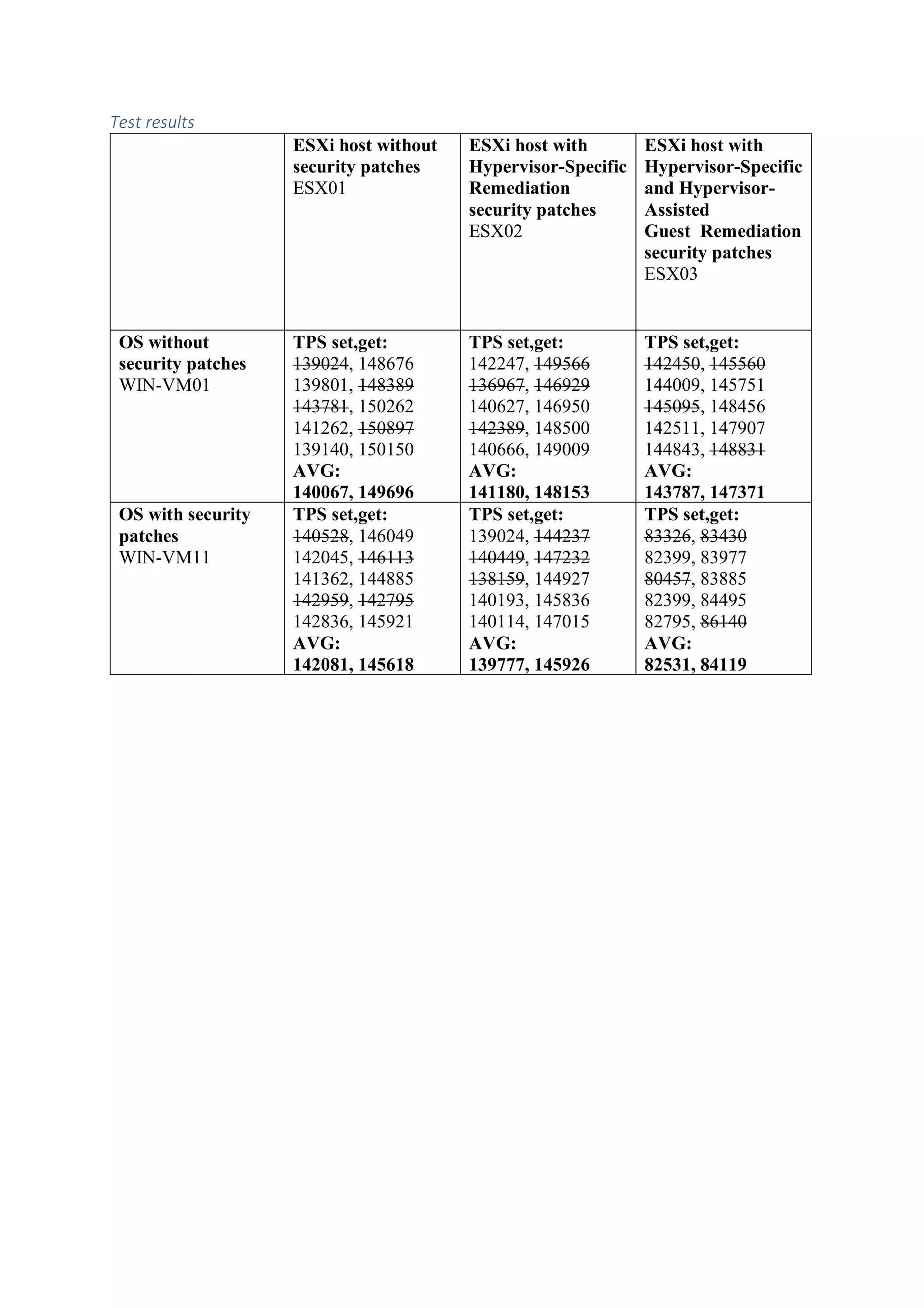

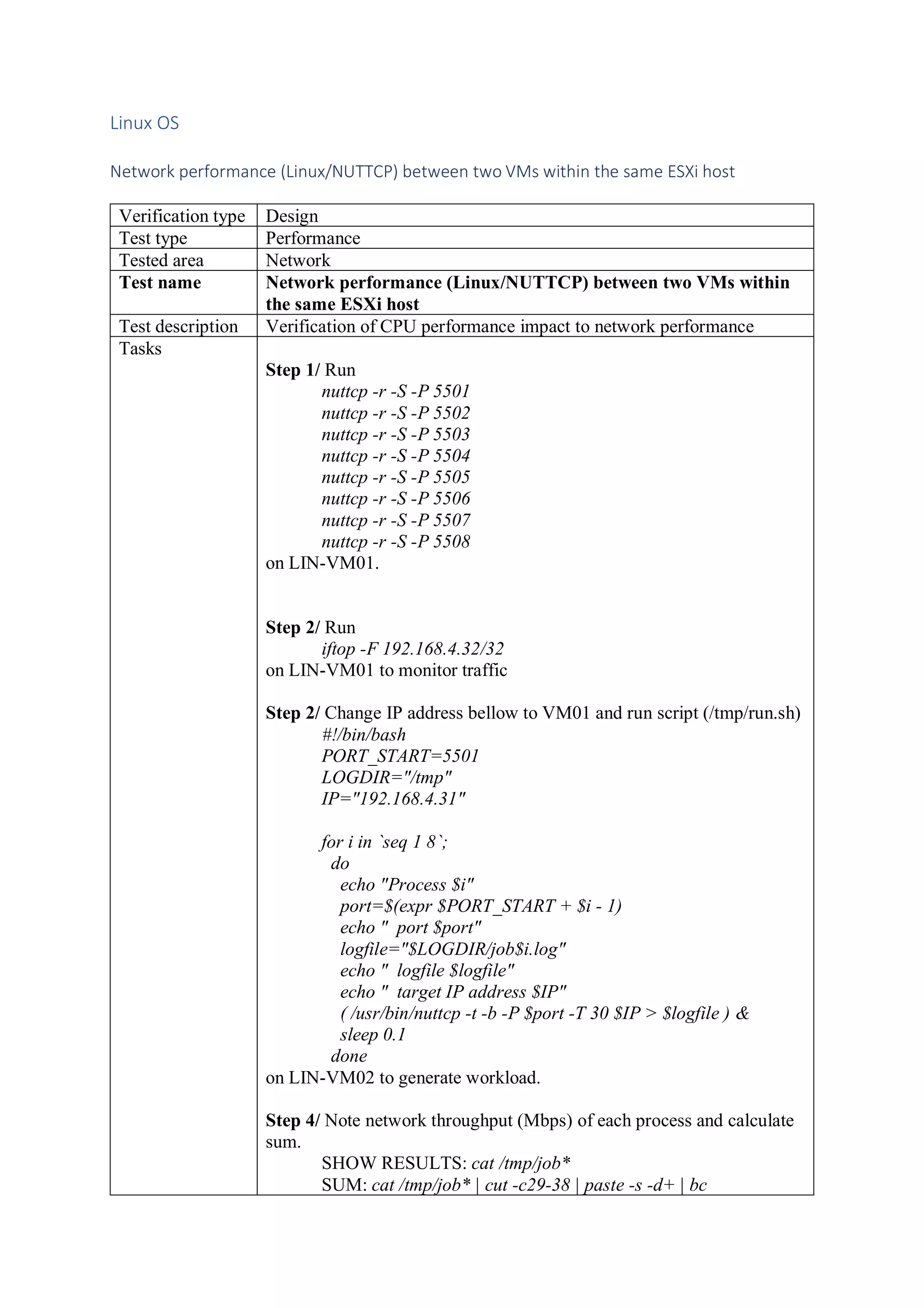

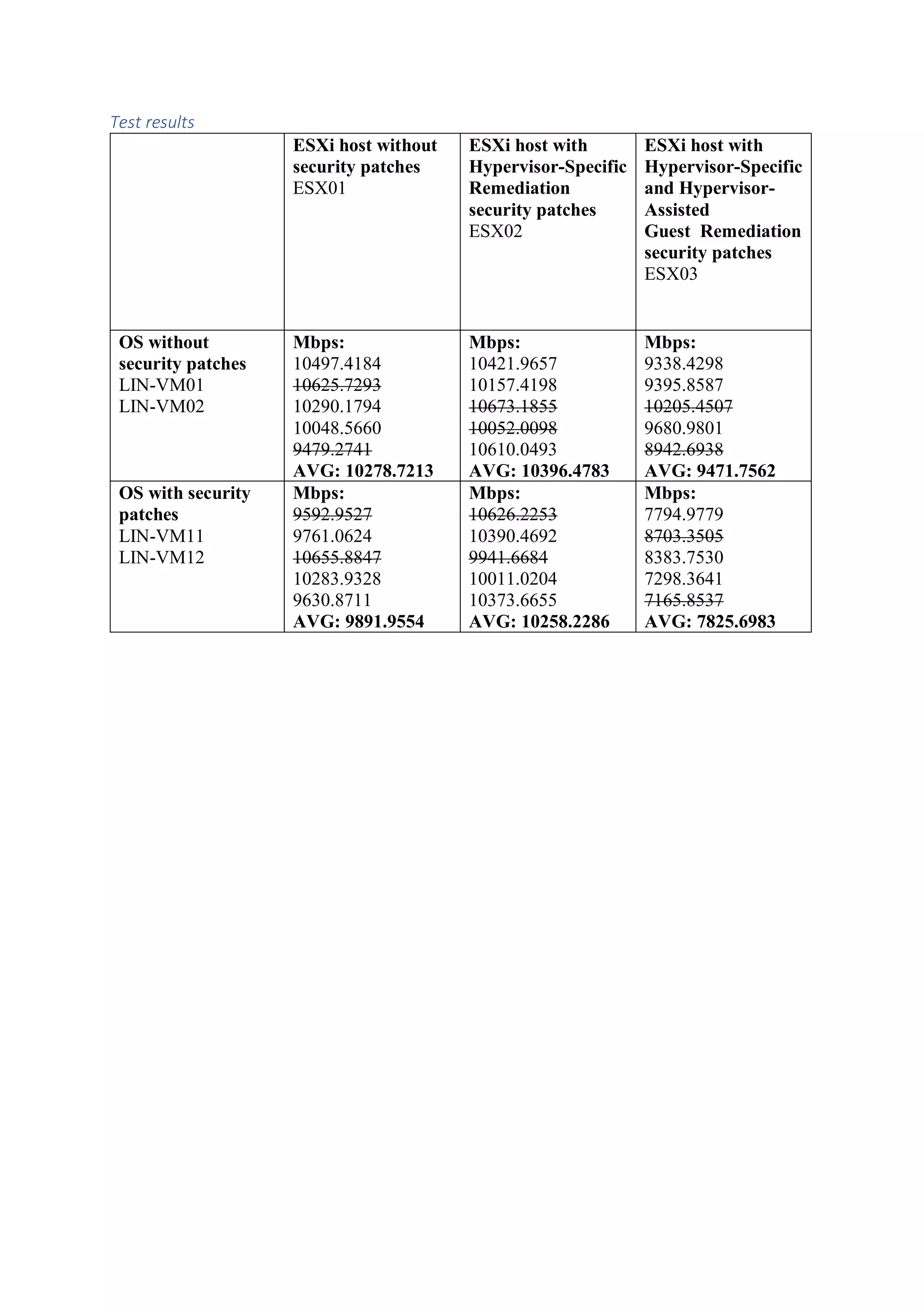

This document outlines a test plan to evaluate the performance impact of Spectre and Meltdown remediations on Intel CPUs running VMware ESXi hypervisors. It details the hardware and software specifications of the test environments, covering both patched and non-patched setups, along with the tools used for performance measurement. The results are intended to show users how much performance may vary with their specific configuration once the remediations are applied.

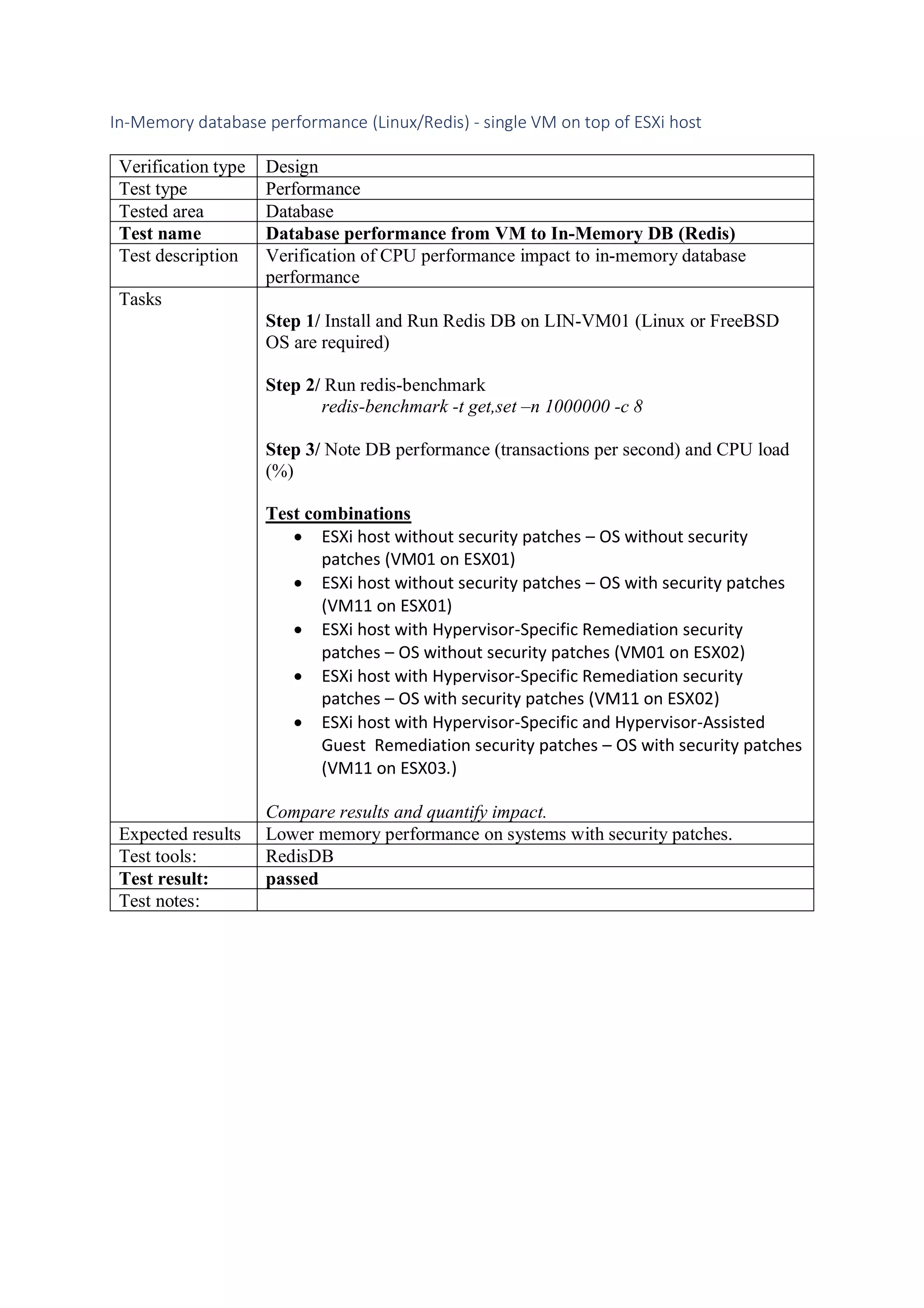

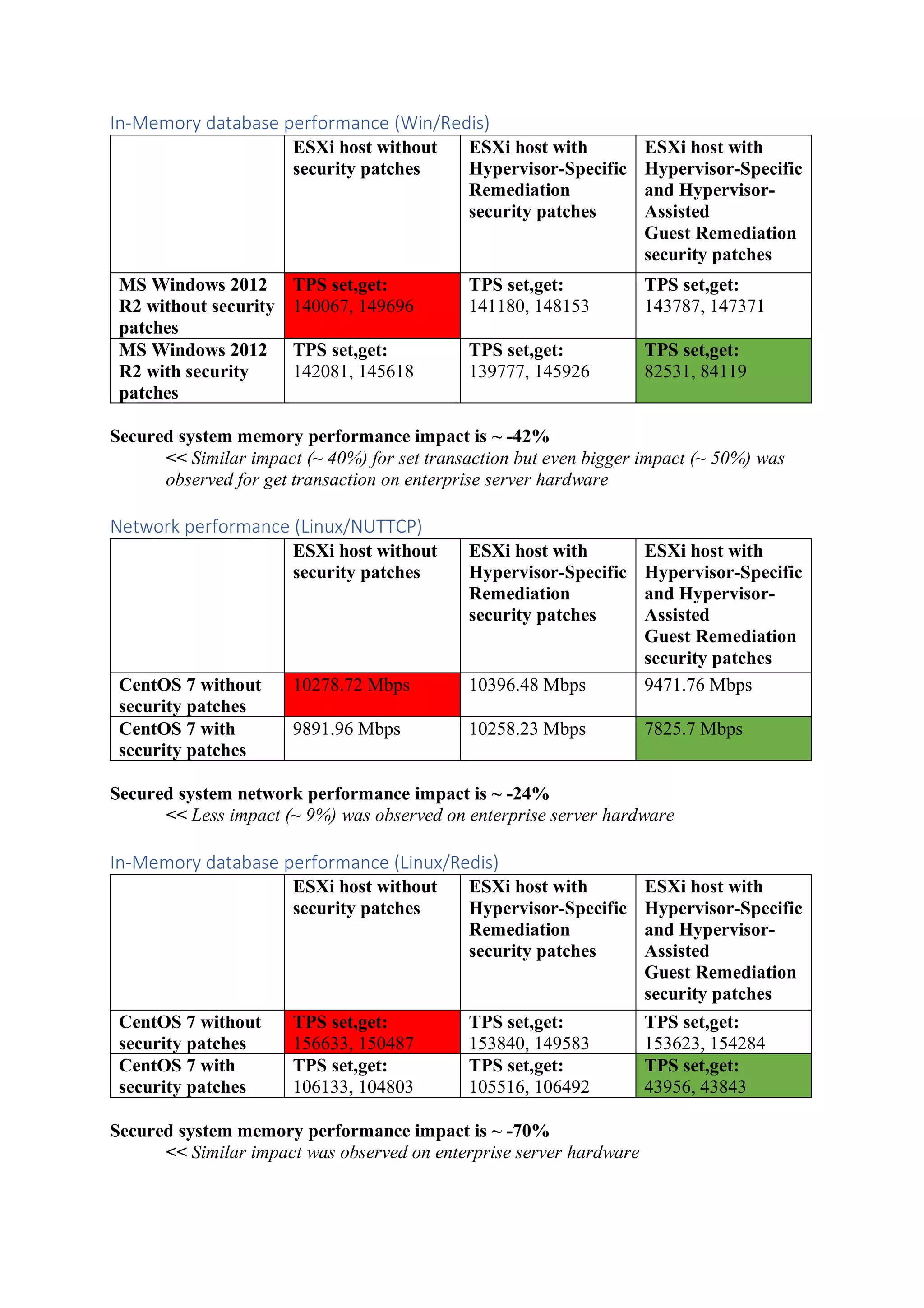

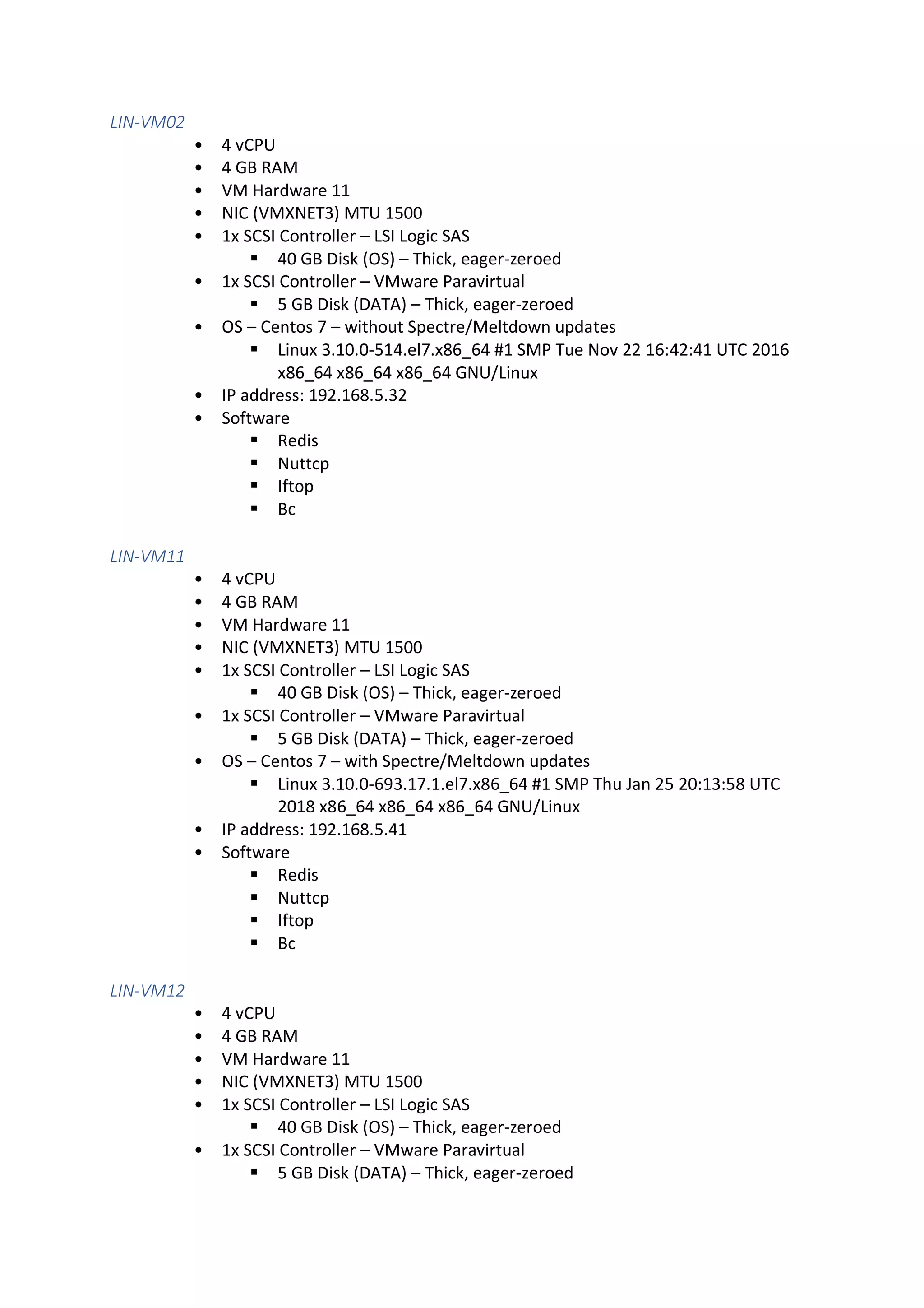

![Spectre/Meltdown OS remediations

ESXi

Use VMware Update Manager to apply the patches described in VMSA-2018-0002 and VMSA-2018-0004.

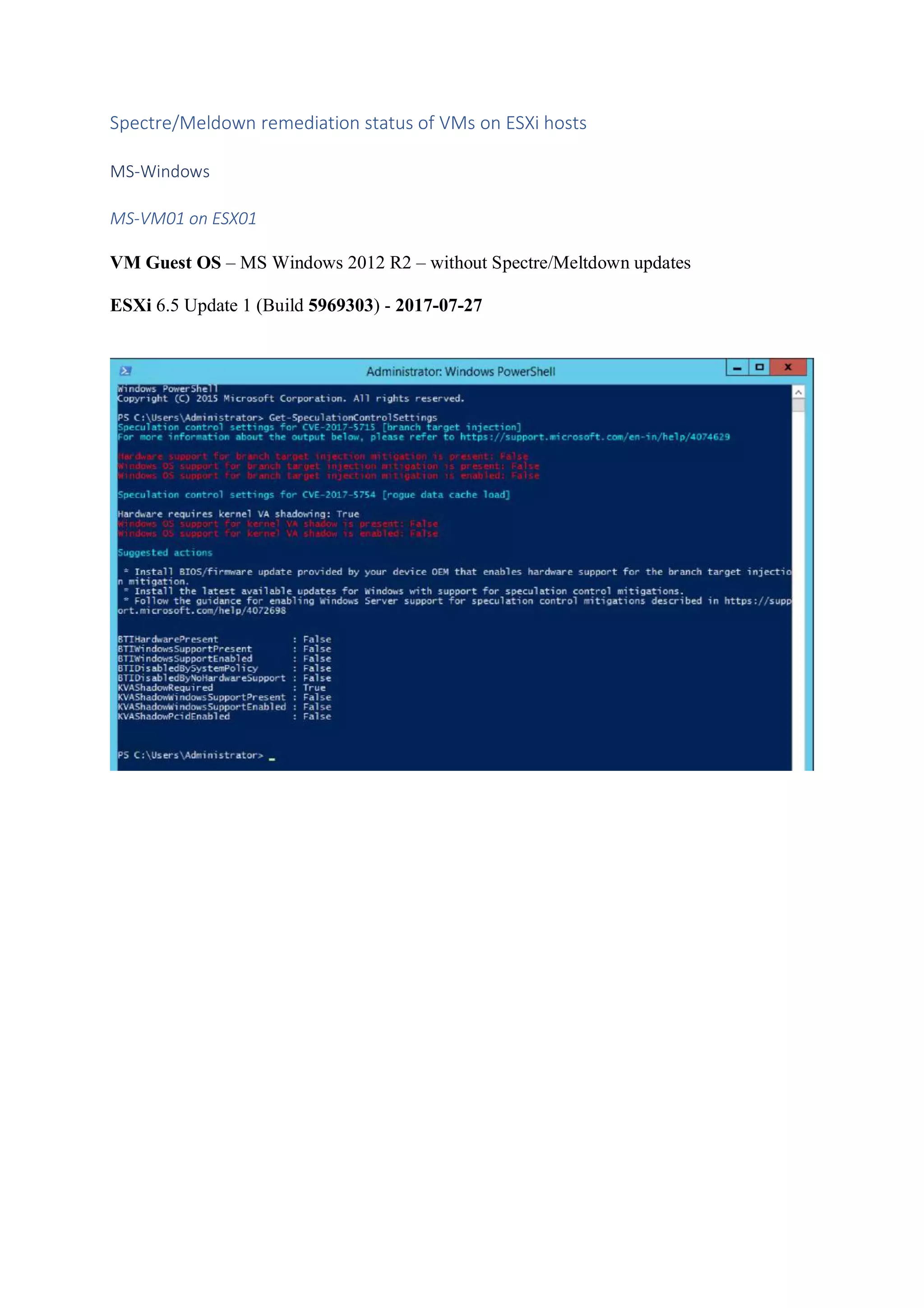

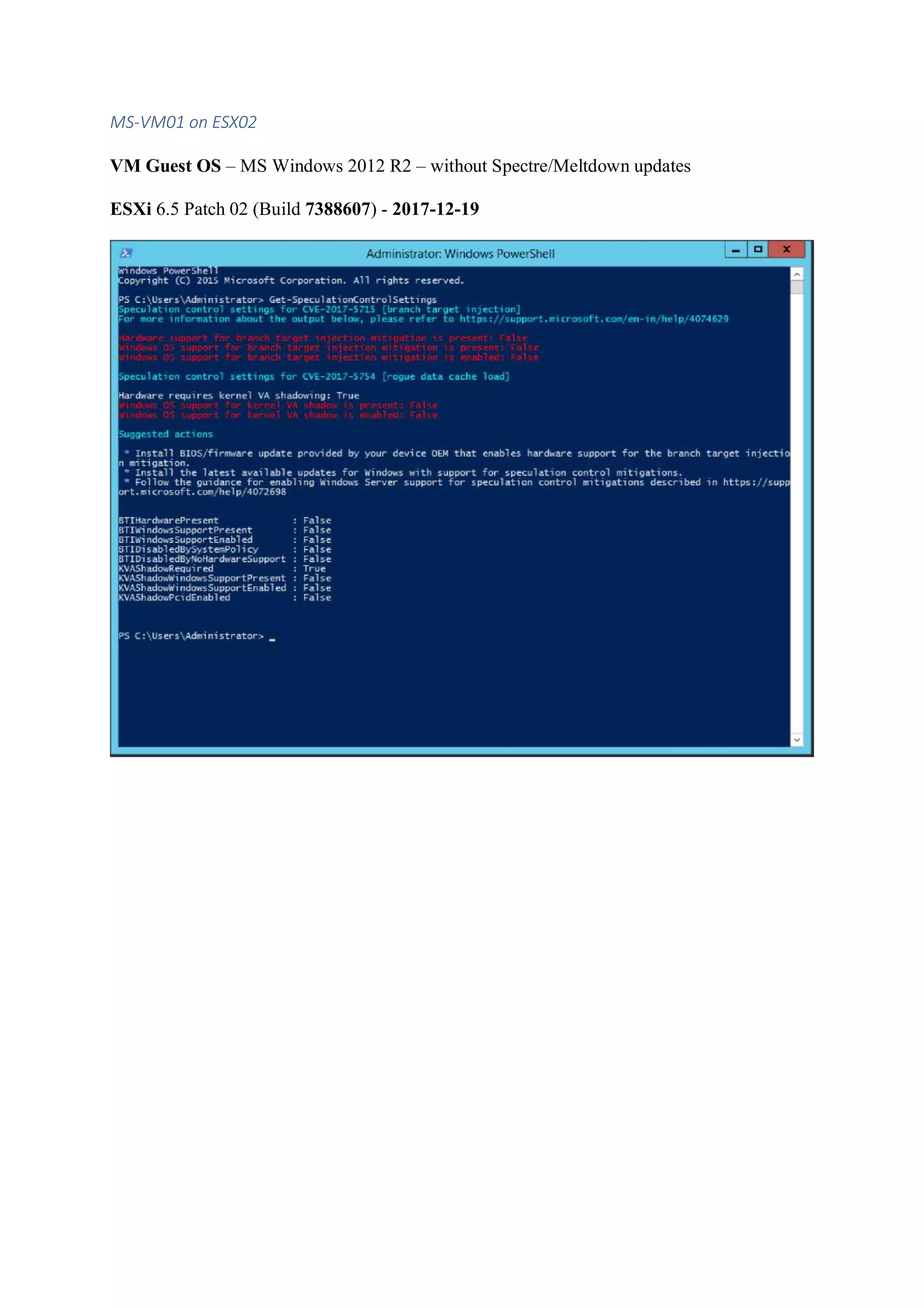

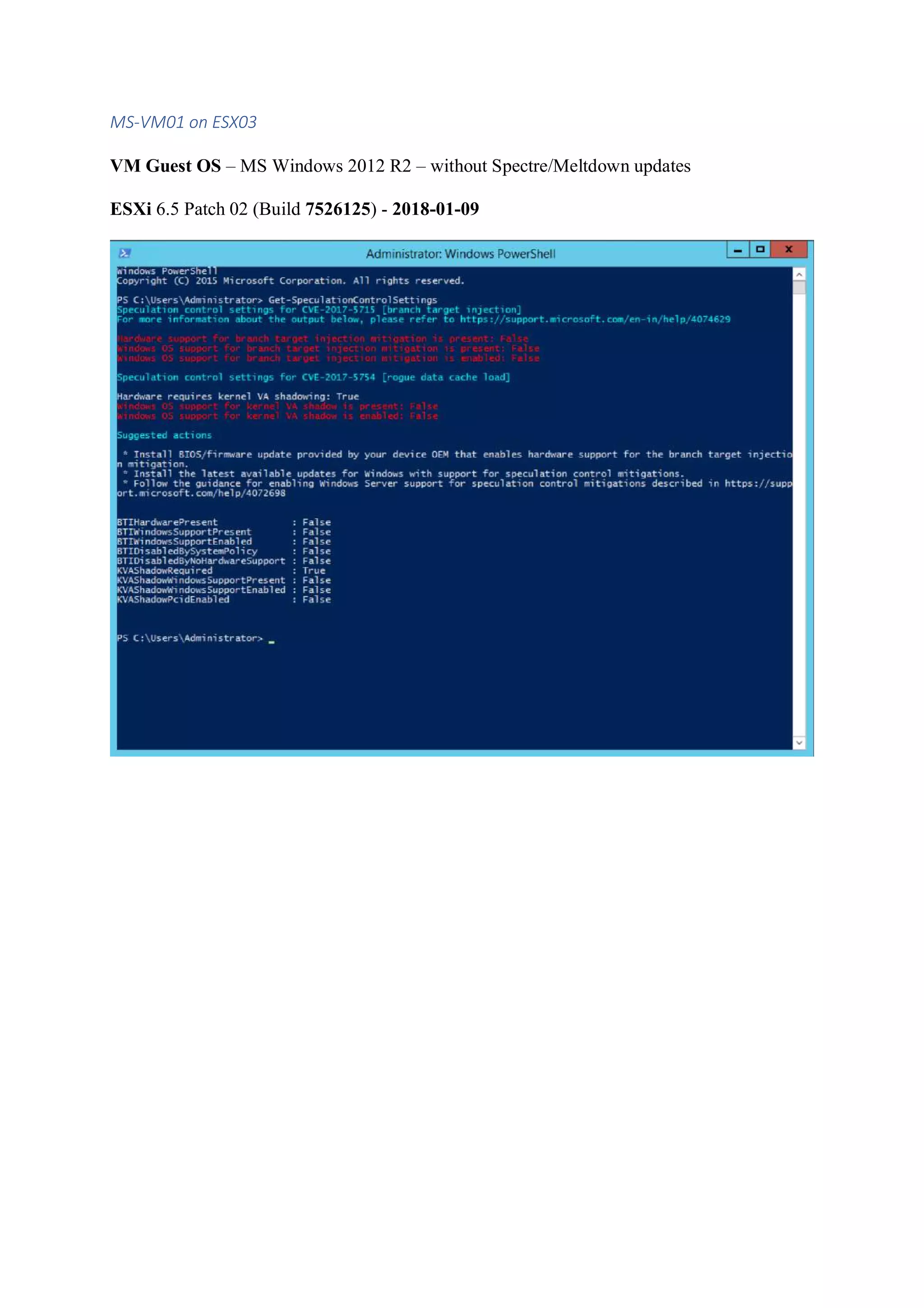

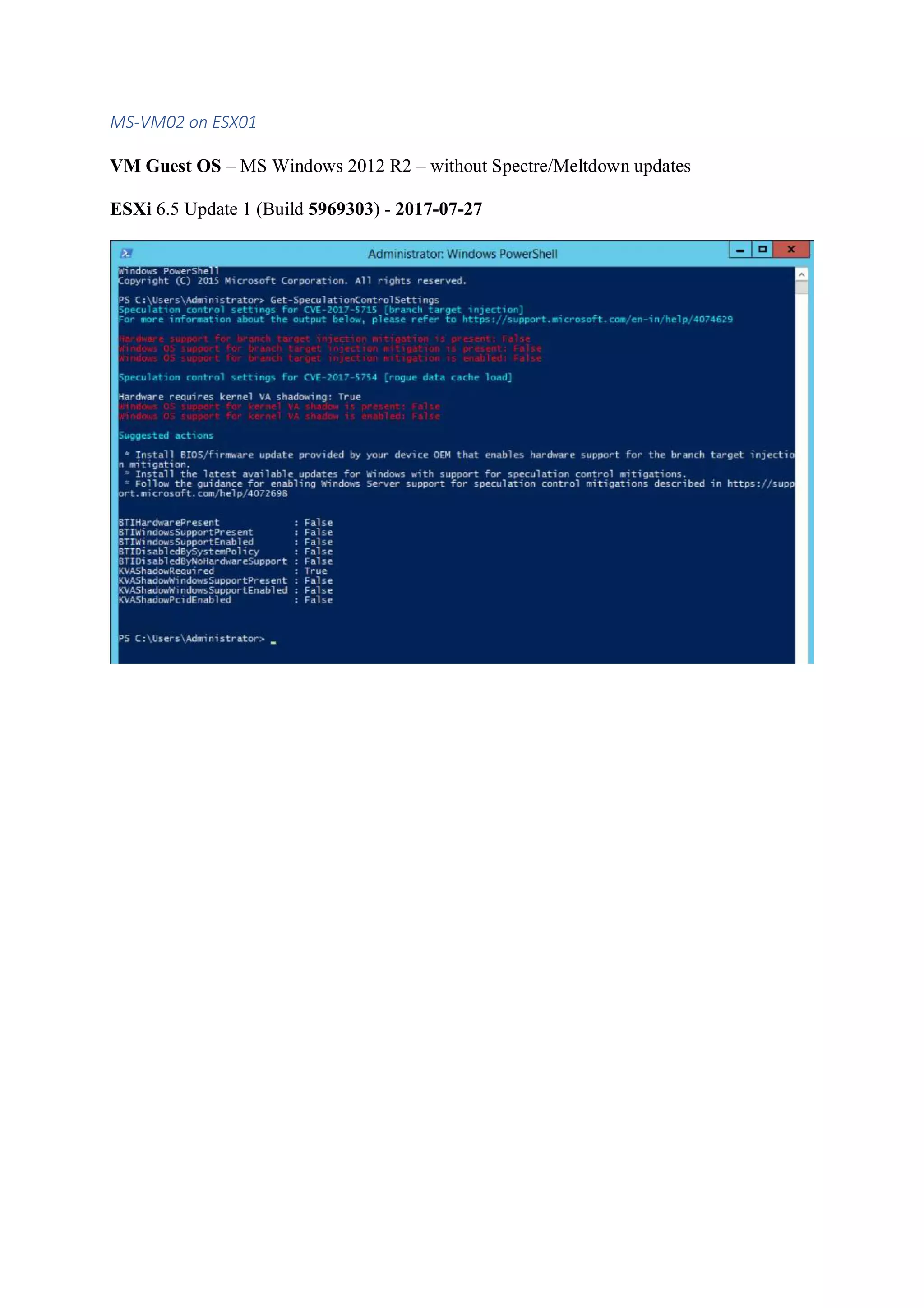

MS-Windows

To protect MS-Windows, apply the updates available here:

http://www.catalog.update.microsoft.com/Search.aspx?q=KB4056898

To enable the fix, change the following registry settings:

reg add "HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager\Memory Management" /v FeatureSettingsOverride /t REG_DWORD /d 0 /f

reg add "HKEY_LOCAL_MACHINE\SYSTEM\CurrentControlSet\Control\Session Manager\Memory Management" /v FeatureSettingsOverrideMask /t REG_DWORD /d 3 /f

reg add "HKLM\SOFTWARE\Microsoft\Windows NT\CurrentVersion\Virtualization" /v MinVmVersionForCpuBasedMitigations /t REG_SZ /d "1.0" /f

Restart the server for changes to take effect.

Linux / CentOS

Use “yum update” and apply the latest OS updates.

Spectre/Meltdown remediation checkers

ESXi

ESXi command to check whether the microcode has been updated:

if [ `vsish -e get /hardware/msr/pcpu/0/addr/0x00000048 > /dev/null 2>&1 ;echo $?` -eq 0 ];then echo -e "\nIntel Security Microcode Updated\n";else echo -e "\nIntel Security Microcode NOT Updated\n";fi

MS-Windows

MS-Windows test tool for SPECTRE/MELTDOWN remediation

Installation

• Article: https://support.microsoft.com/en-us/help/4073119/protect-against-speculative-execution-side-channel-vulnerabilities-in

• PowerShell 5.0 is required](https://image.slidesharecdn.com/spectremeltdownperformancetests-v0-190516090436/75/Spectre-meltdown-performance_tests-v0-3-10-2048.jpg)
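
The registry values set in the MS-Windows remediation step can also be checked programmatically after the restart. The following is a minimal sketch, not part of the original test plan: it assumes Python is available on the Windows guest, and the helper names `find_mismatches` and `read_registry` are hypothetical. The expected values are copied from the `reg add` commands above; the comparison logic is kept separate from the registry access so it can be exercised on any platform.

```python
import sys

# Key paths and expected values, copied from the reg add commands in the
# MS-Windows remediation step. Both keys live under HKEY_LOCAL_MACHINE.
MM_KEY = r"SYSTEM\CurrentControlSet\Control\Session Manager\Memory Management"
VIRT_KEY = r"SOFTWARE\Microsoft\Windows NT\CurrentVersion\Virtualization"

EXPECTED = {
    (MM_KEY, "FeatureSettingsOverride"): 0,
    (MM_KEY, "FeatureSettingsOverrideMask"): 3,
    (VIRT_KEY, "MinVmVersionForCpuBasedMitigations"): "1.0",
}


def find_mismatches(actual):
    """Return {(key, name): (expected, found)} for every value that differs.

    `actual` maps (key path, value name) to the value read from the registry.
    A missing entry is reported with found=None.
    """
    mismatches = {}
    for ident, expected in EXPECTED.items():
        found = actual.get(ident)
        if found != expected:
            mismatches[ident] = (expected, found)
    return mismatches


def read_registry():
    """Read the three values from HKEY_LOCAL_MACHINE (Windows only)."""
    import winreg  # standard library module, available only on Windows

    actual = {}
    for key_path, name in EXPECTED:
        try:
            with winreg.OpenKey(winreg.HKEY_LOCAL_MACHINE, key_path) as key:
                value, _value_type = winreg.QueryValueEx(key, name)
                actual[(key_path, name)] = value
        except FileNotFoundError:
            pass  # key or value is absent; it shows up as a mismatch
    return actual


if __name__ == "__main__" and sys.platform == "win32":
    problems = find_mismatches(read_registry())
    for (key_path, name), (expected, found) in problems.items():
        print(f"{name}: expected {expected!r}, found {found!r}")
    print("registry settings OK" if not problems else "registry settings differ")
```

This only confirms that the registry settings match the values listed in this plan. It does not confirm that the mitigations are actually active, which is what the Microsoft PowerShell test tool referenced above reports.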