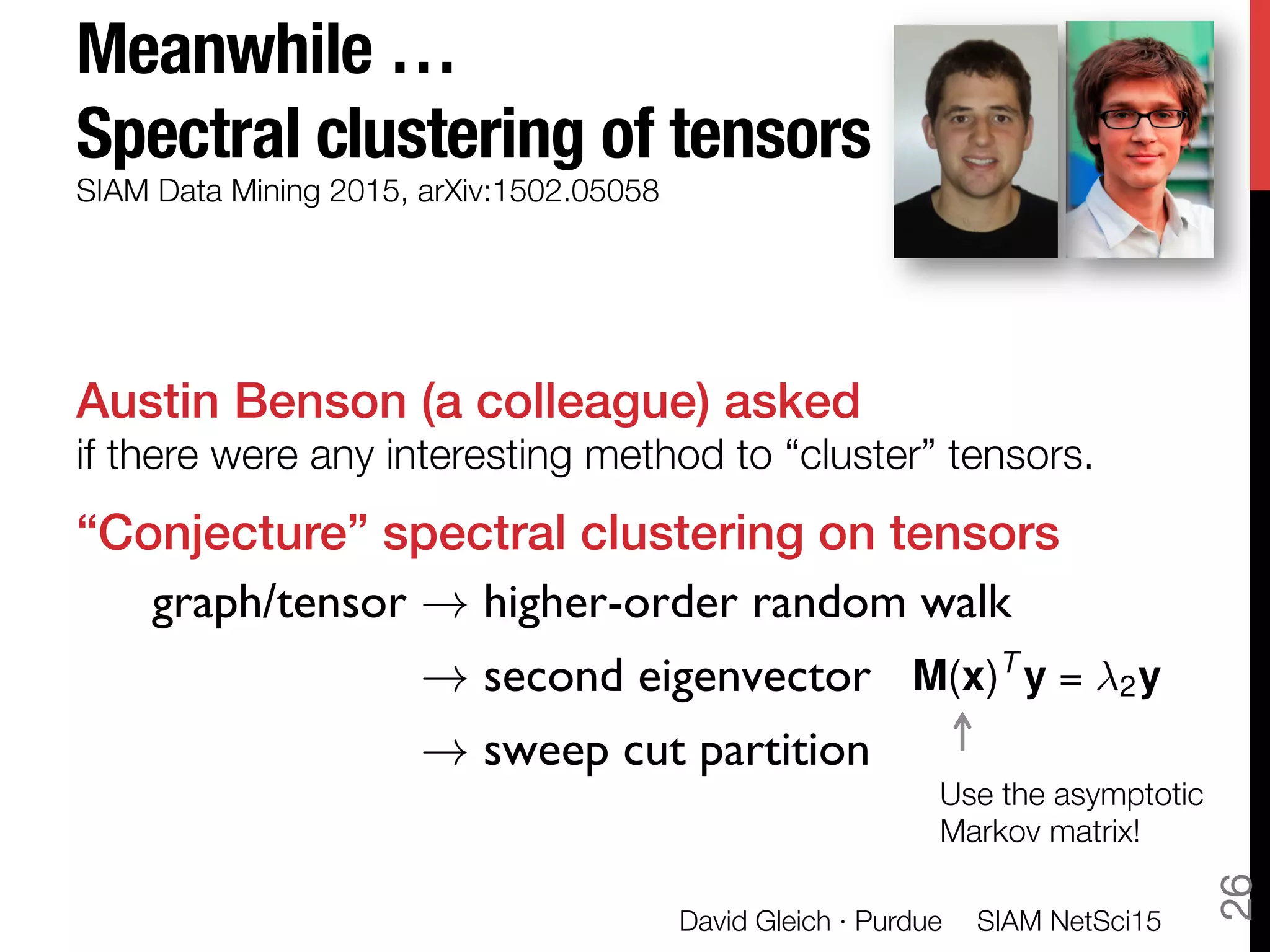

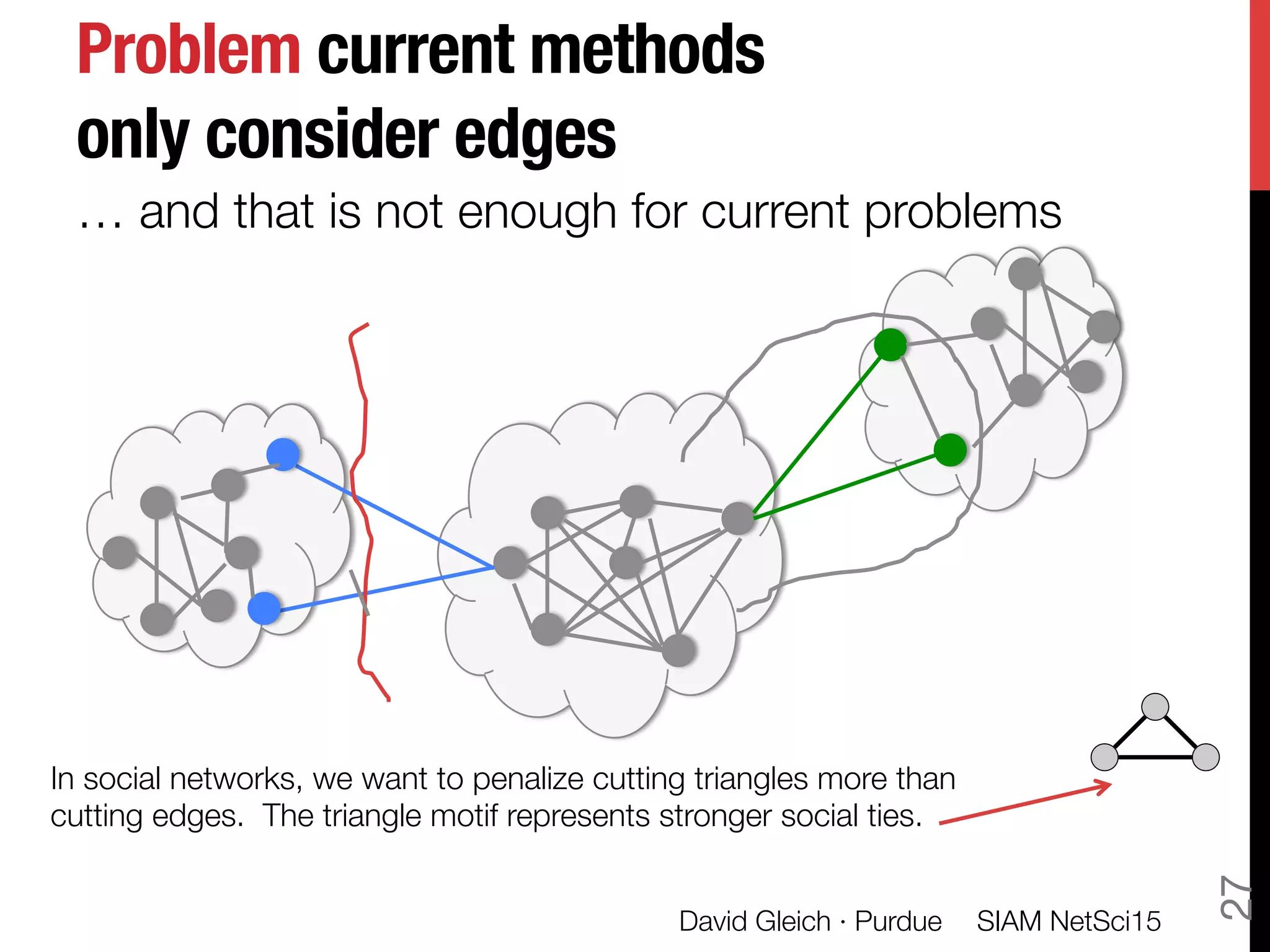

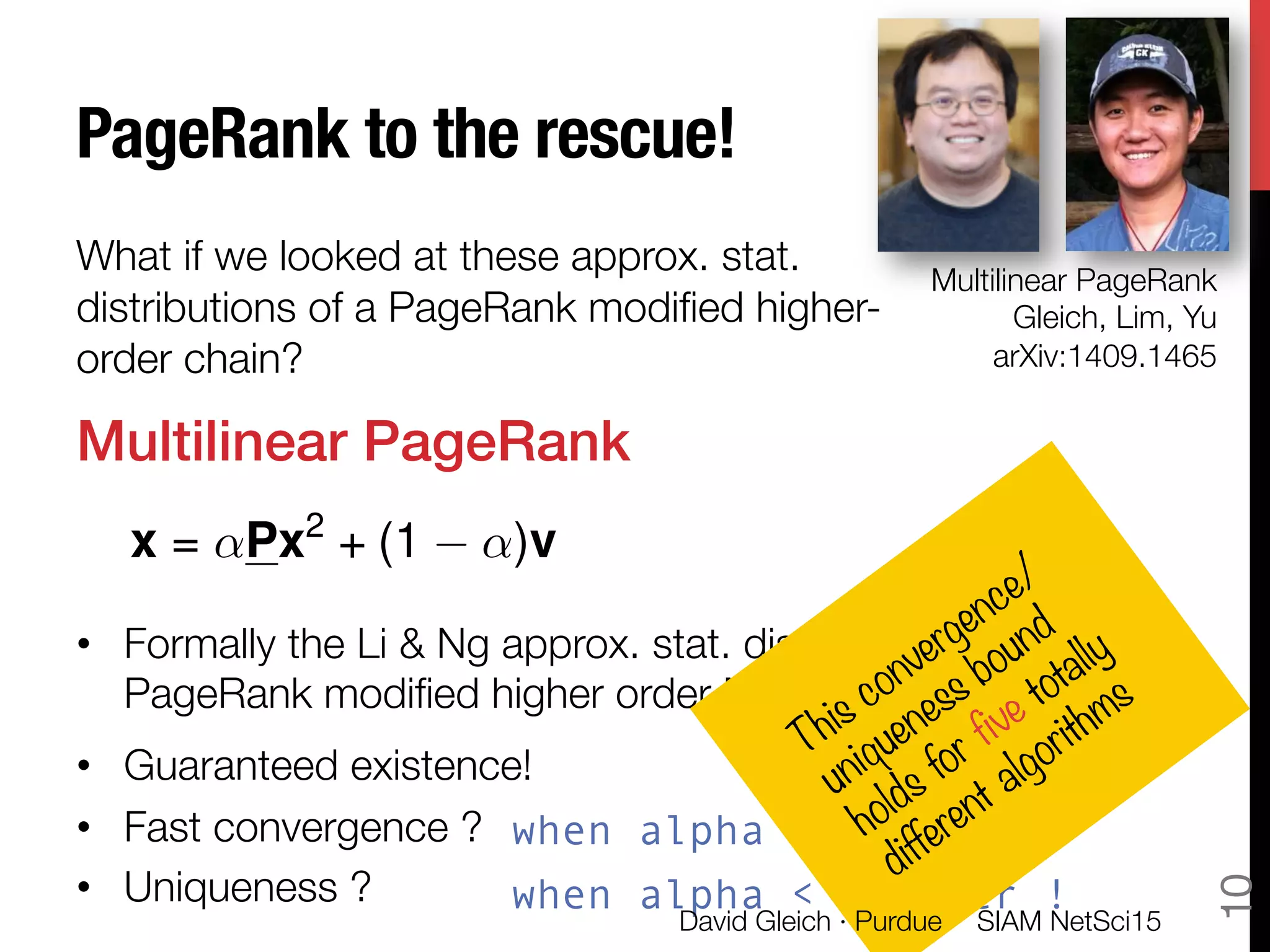

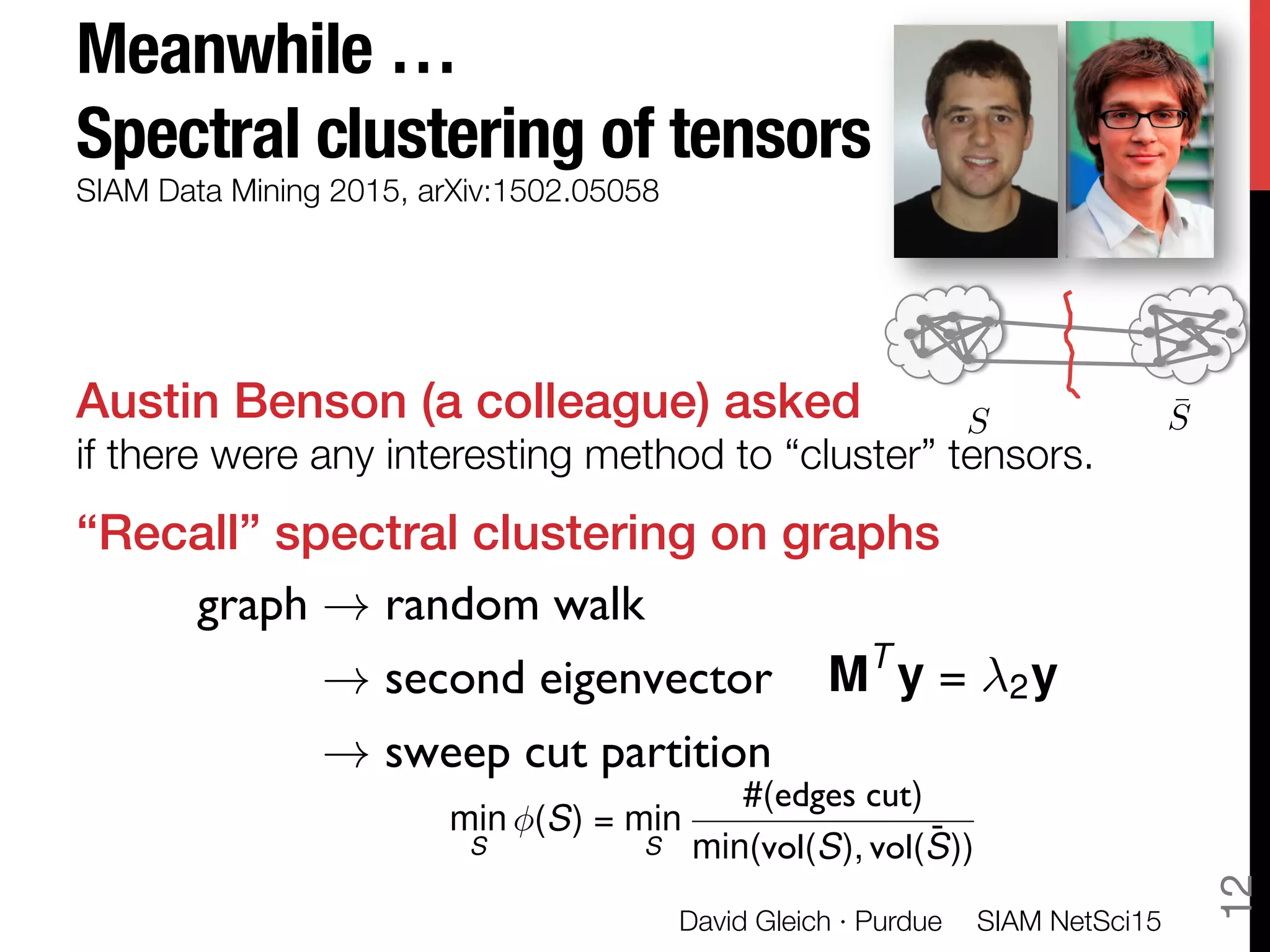

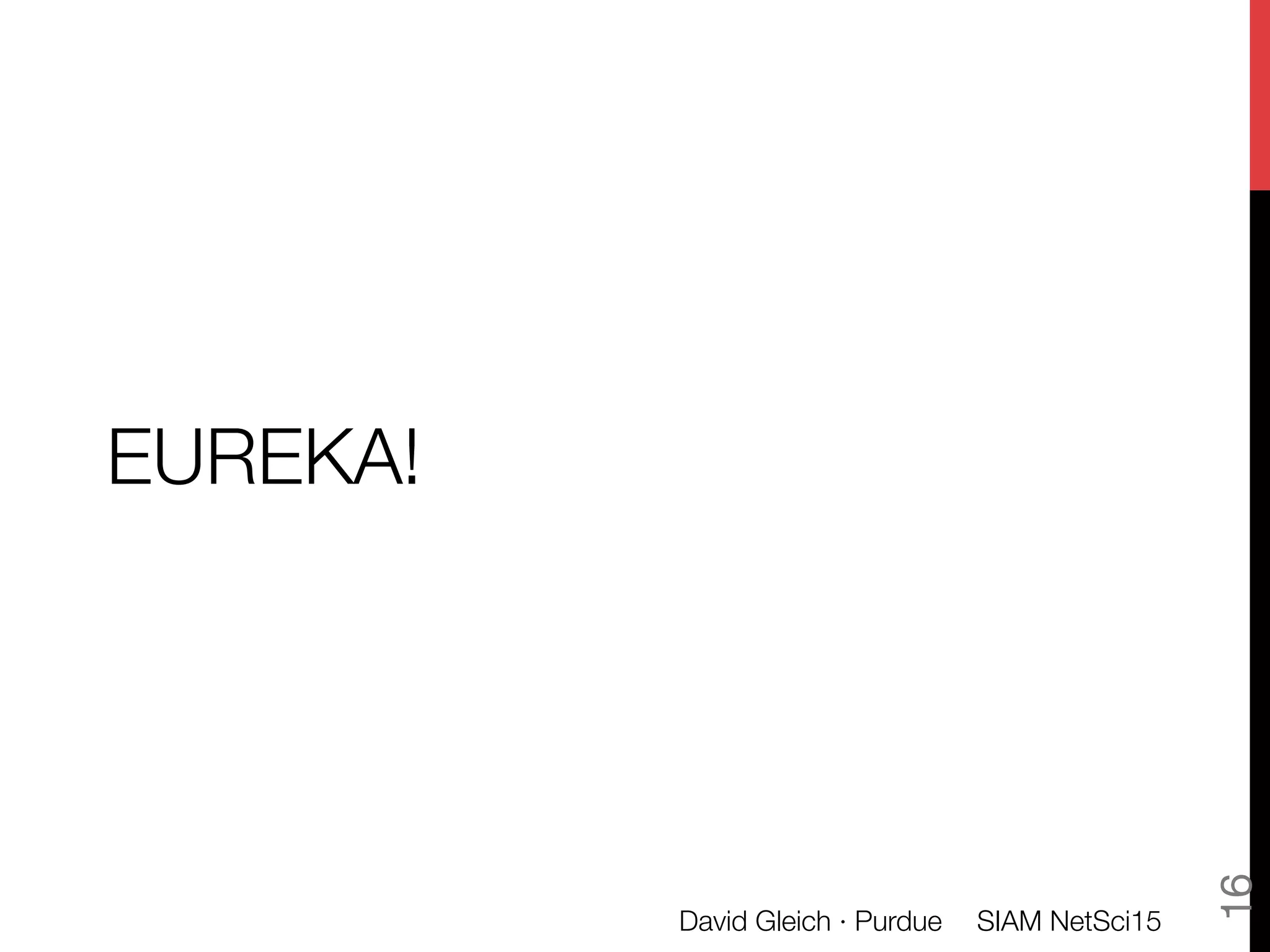

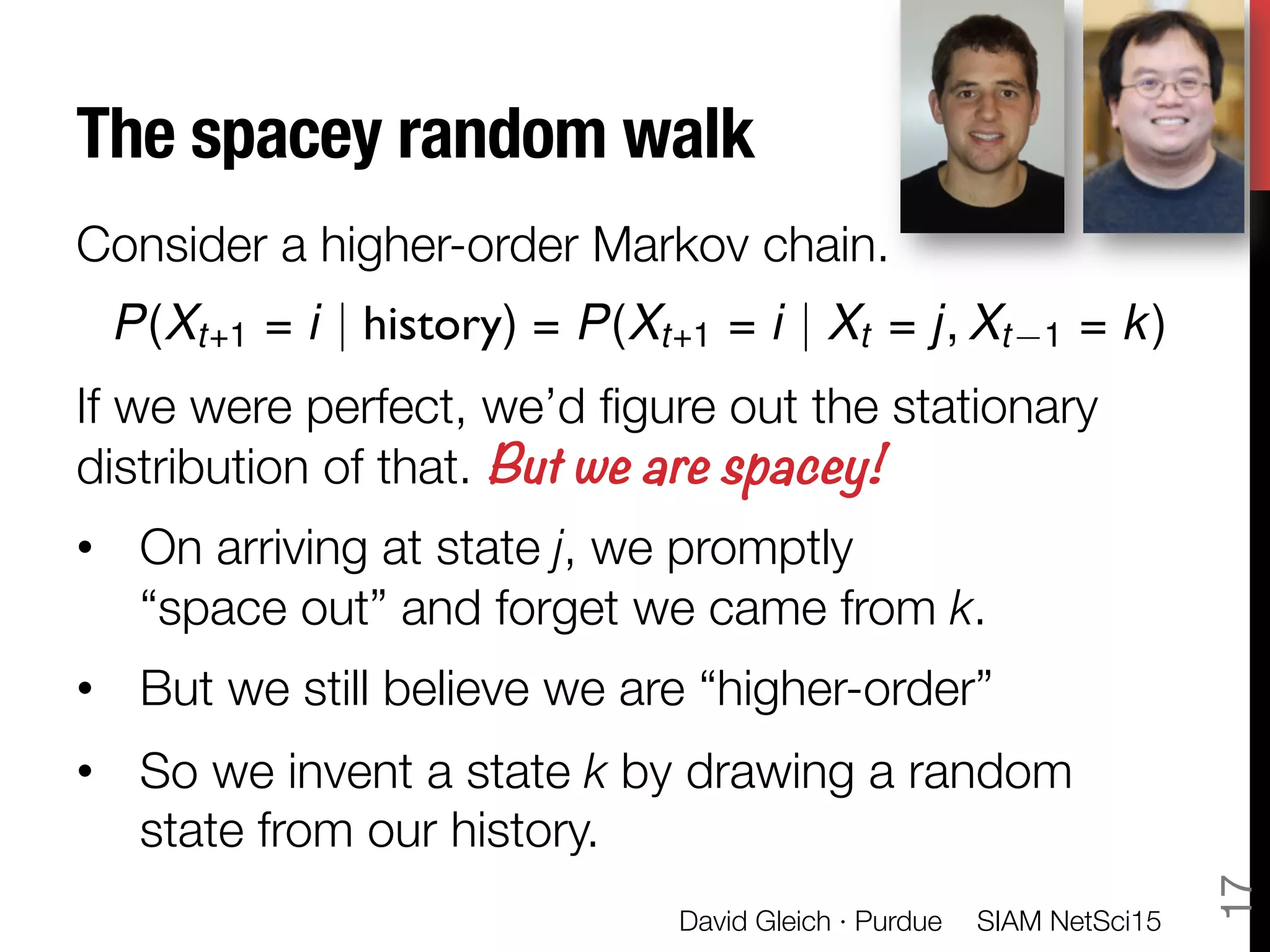

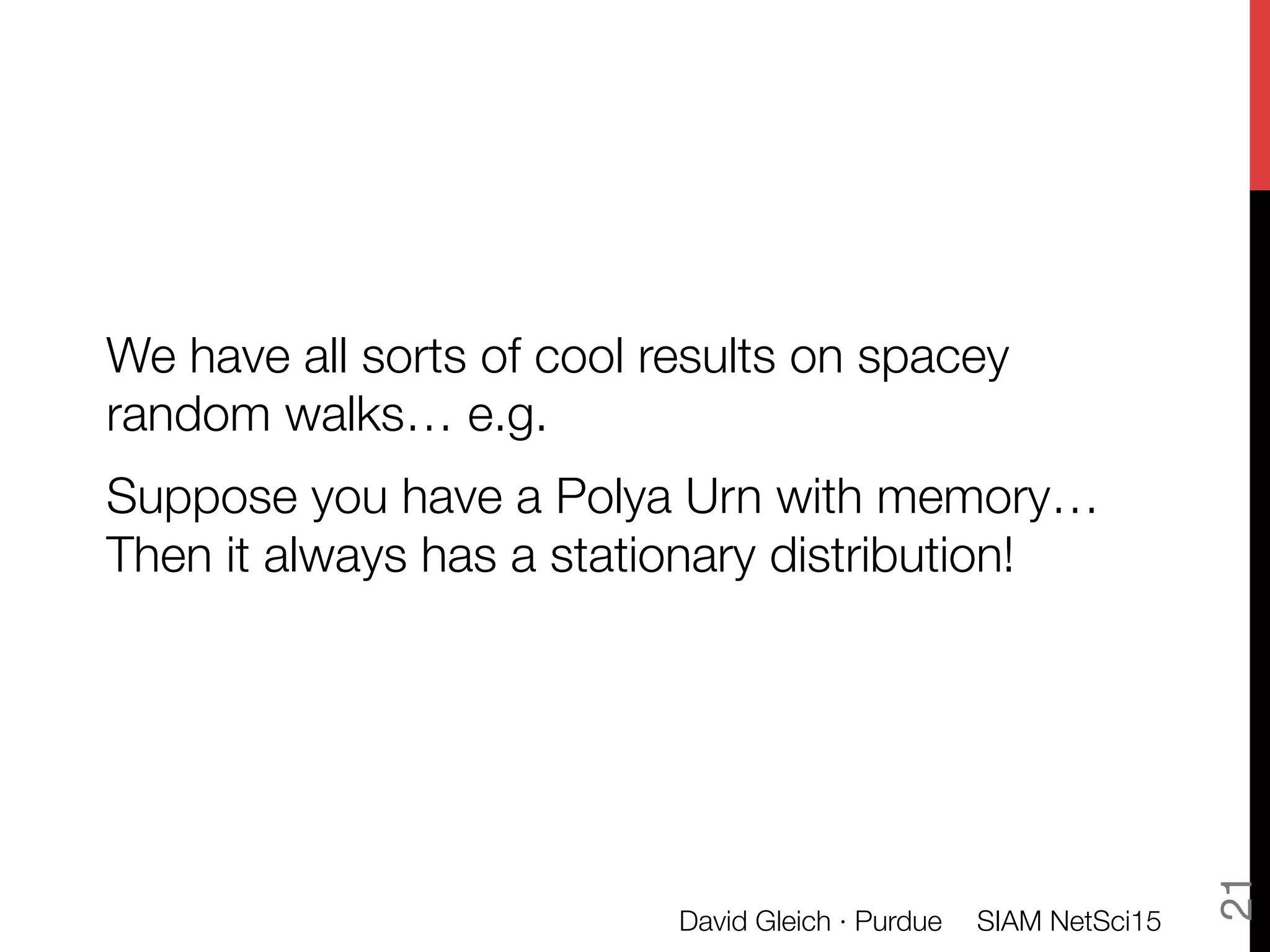

The talk discusses spacey random walks on higher-order Markov chains, their use in approximating stationary distributions, and their connection to multilinear PageRank. It introduces vertex-reinforced random walks, explains how they operate, and explains their relevance to analyzing higher-order data. The talk also touches on spectral clustering of tensors and highlights possible applications to social networks and transcription networks.

![Approximate stationary distributions of higher-order Markov chains](https://image.slidesharecdn.com/spacey-random-surfer-talk-150516162138-lva1-app6892/75/Spacey-random-walks-and-higher-order-Markov-chains-6-2048.jpg)

A higher-order Markov chain depends on the last few states:

$$P(X_{t+1} = i \mid \text{history}) = P(X_{t+1} = i \mid X_t = j, X_{t-1} = k).$$

These become Markov chains on the product state space, with stationary distribution

$$P(X = [i, j]) = X_{i,j}.$$

But the product state space is usually too large for computing stationary distributions. The approximation is that we form a rank-1 approximation of that stationary distribution object:

$$P(X = [i, j]) \approx x_i x_j.$$

Due to Michael Ng and collaborators.

SIAM NetSci15 · David Gleich · Purdue
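To make the size argument concrete, here is a minimal pure-Python sketch for a second-order chain on n = 3 states. It makes several assumptions:

- The layout `P[i][j][k]` = P(X_{t+1} = i | X_t = j, X_{t-1} = k).
- A hypothetical `random_transition_tensor` helper generates the test data.
- The helper names `pair_stationary` and `rank1_stationary` are illustrative.

The sketch compares the exact stationary distribution on pairs (n² unknowns) with the rank-1 guess `x[i] * x[j]`. Here `x` (n unknowns) solves `x[i] = sum over j, k of P[i][j][k] * x[j] * x[k]`. The plain fixed-point iteration used for `x` is a choice that works for this nearly uniform tensor. It is not guaranteed to converge for every tensor.

```python
import random


def random_transition_tensor(n, seed=0):
    """Hypothetical test data: P[i][j][k] = Prob(X_{t+1}=i | X_t=j, X_{t-1}=k).

    Entries are drawn from [1, 2] and each column P[:][j][k] is normalized,
    so every column is close to the uniform distribution.
    """
    rng = random.Random(seed)
    P = [[[0.0] * n for _ in range(n)] for _ in range(n)]
    for j in range(n):
        for k in range(n):
            col = [rng.uniform(1.0, 2.0) for _ in range(n)]
            total = sum(col)
            for i in range(n):
                P[i][j][k] = col[i] / total
    return P


def pair_stationary(P, iters=1000):
    """Exact stationary distribution on the product space (n*n unknowns).

    Y[i][j] = Prob(X_t = i, X_{t-1} = j); one step moves the pair (j, k)
    to (i, j) with probability P[i][j][k]. Solved by power iteration.
    """
    n = len(P)
    Y = [[1.0 / n**2] * n for _ in range(n)]
    for _ in range(iters):
        Y = [[sum(P[i][j][k] * Y[j][k] for k in range(n)) for j in range(n)]
             for i in range(n)]
    return Y


def rank1_stationary(P, iters=1000):
    """Rank-1 approximation Y[i][j] ~ x[i] * x[j] (n unknowns), where
    x[i] = sum over j, k of P[i][j][k] * x[j] * x[k].

    Plain fixed-point iteration: it converges for this near-uniform P,
    but that is not guaranteed for every tensor.
    """
    n = len(P)
    x = [1.0 / n] * n
    for _ in range(iters):
        x = [sum(P[i][j][k] * x[j] * x[k] for j in range(n) for k in range(n))
             for i in range(n)]
        total = sum(x)  # renormalize: the map squares any rounding drift in sum(x)
        x = [xi / total for xi in x]
    return x


n = 3
P = random_transition_tensor(n)
Y = pair_stationary(P)
x = rank1_stationary(P)
gap = max(abs(Y[i][j] - x[i] * x[j]) for i in range(n) for j in range(n))
print("rank-1 factor x:", x)
print("largest entrywise gap |Y[i][j] - x[i]*x[j]|:", gap)
```

The exact object needs n² numbers, and n^m for longer histories. The approximation keeps only n. The printed gap shows how far the rank-1 guess is from the exact pair distribution for this particular tensor.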

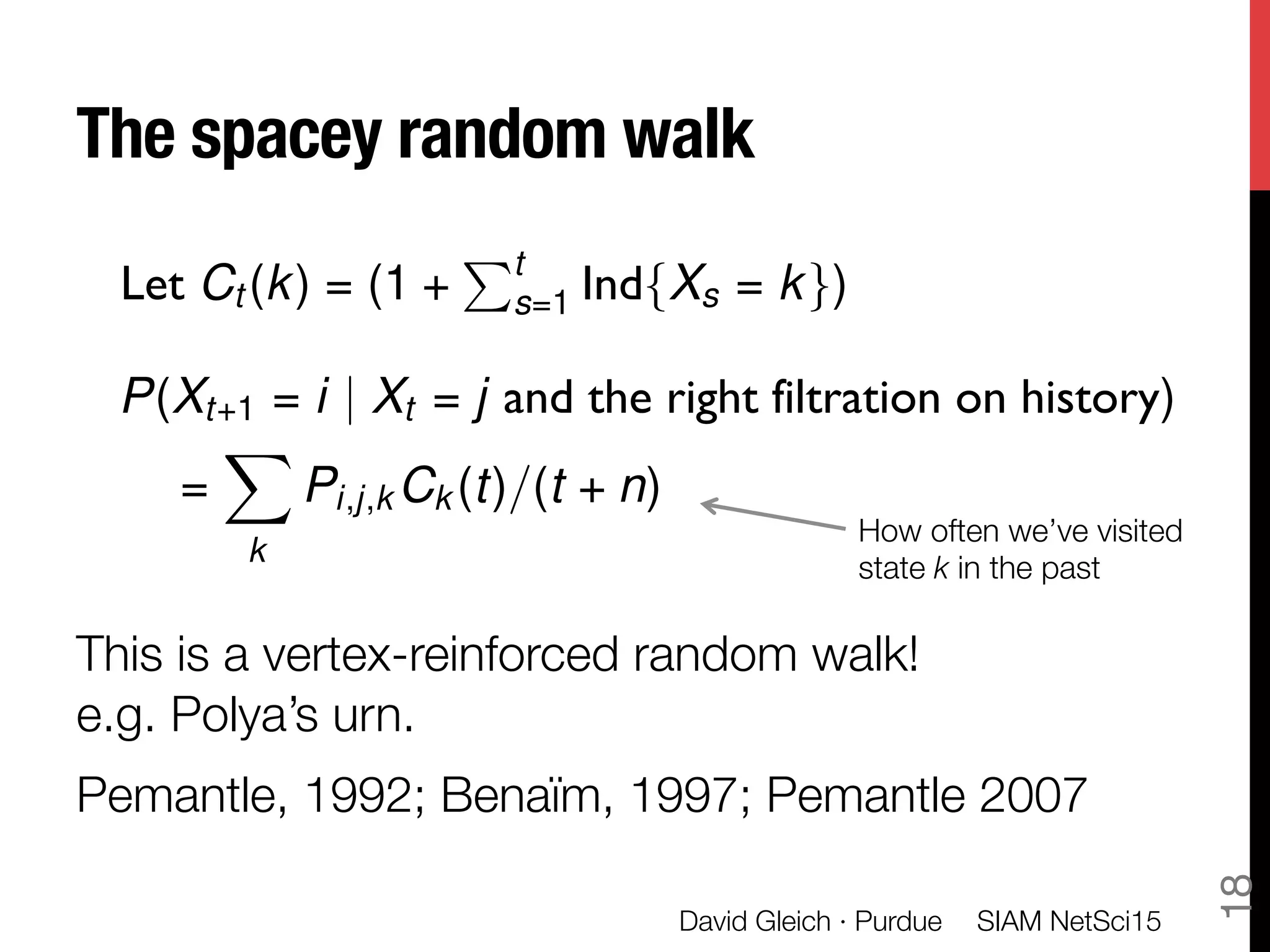

![Stationary distributions of vertex-reinforced random walks](https://image.slidesharecdn.com/spacey-random-surfer-talk-150516162138-lva1-app6892/75/Spacey-random-walks-and-higher-order-Markov-chains-19-2048.jpg)

A vertex-reinforced random walk at time $t$ transitions according to a Markov matrix $M$ given the observed frequencies $c(t)$:

$$P(X_{t+1} = i \mid X_t = j \text{ and the right filtration on history}) = [M(t)]_{i,j} = [M(c(t))]_{i,j}.$$

This has a stationary distribution if and only if the dynamical system

$$\frac{dx}{dt} = \pi[M(x)] - x$$

converges, where $\pi[M]$ is a map to the stationary distribution of $M$.

M. Benaïm, 1997.
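The dynamical system can be explored numerically. Below is a minimal pure-Python sketch that computes π[M] by power iteration and integrates dx/dt = π[M(x)] − x with forward Euler.

The two-state map `example_M` is hypothetical and not taken from the talk. It only illustrates a Markov matrix that changes with the observed frequencies. Power iteration runs on (I + M)/2, which has the same stationary distribution as M but avoids oscillation on periodic chains. The step size `h` and the iteration counts are arbitrary choices.

```python
def stationary(M, iters=200):
    """pi[M]: stationary distribution of a column-stochastic matrix M.

    M is a list of rows with M[i][j] = Prob(next = i | current = j).
    Power iteration on the lazy matrix (I + M) / 2, which has the same
    stationary distribution but does not oscillate on periodic chains.
    Assumes that stationary distribution is unique.
    """
    n = len(M)
    p = [1.0 / n] * n
    for _ in range(iters):
        Mp = [sum(M[i][j] * p[j] for j in range(n)) for i in range(n)]
        p = [0.5 * (p[i] + Mp[i]) for i in range(n)]
    return p


def integrate(M_of_x, x0, h=0.1, steps=400):
    """Forward-Euler integration of dx/dt = pi[M(x)] - x starting at x0."""
    x = list(x0)
    for _ in range(steps):
        target = stationary(M_of_x(x))
        x = [xi + h * (ti - xi) for xi, ti in zip(x, target)]
    return x


def example_M(x):
    """Hypothetical two-state family; not from the talk.

    Column j is the distribution of the next state given current state j.
    The 1 -> 0 move becomes more likely as state 0 is observed more often.
    """
    a = 0.3               # Prob(0 -> 1), fixed
    b = 0.1 + 0.2 * x[0]  # Prob(1 -> 0), reinforced by the frequency of state 0
    return [[1 - a, b],
            [a, 1 - b]]


x = integrate(example_M, [0.5, 0.5])
print("stationary point:", x)
print("pi[M(x)]:", stationary(example_M(x)))
```

For this particular map, the trajectory settles at x[0] = (√3 − 1)/2 ≈ 0.366, the root of x = (0.1 + 0.2x)/(0.4 + 0.2x). A different M(x) may have several stationary points, and the trajectory need not converge at all.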

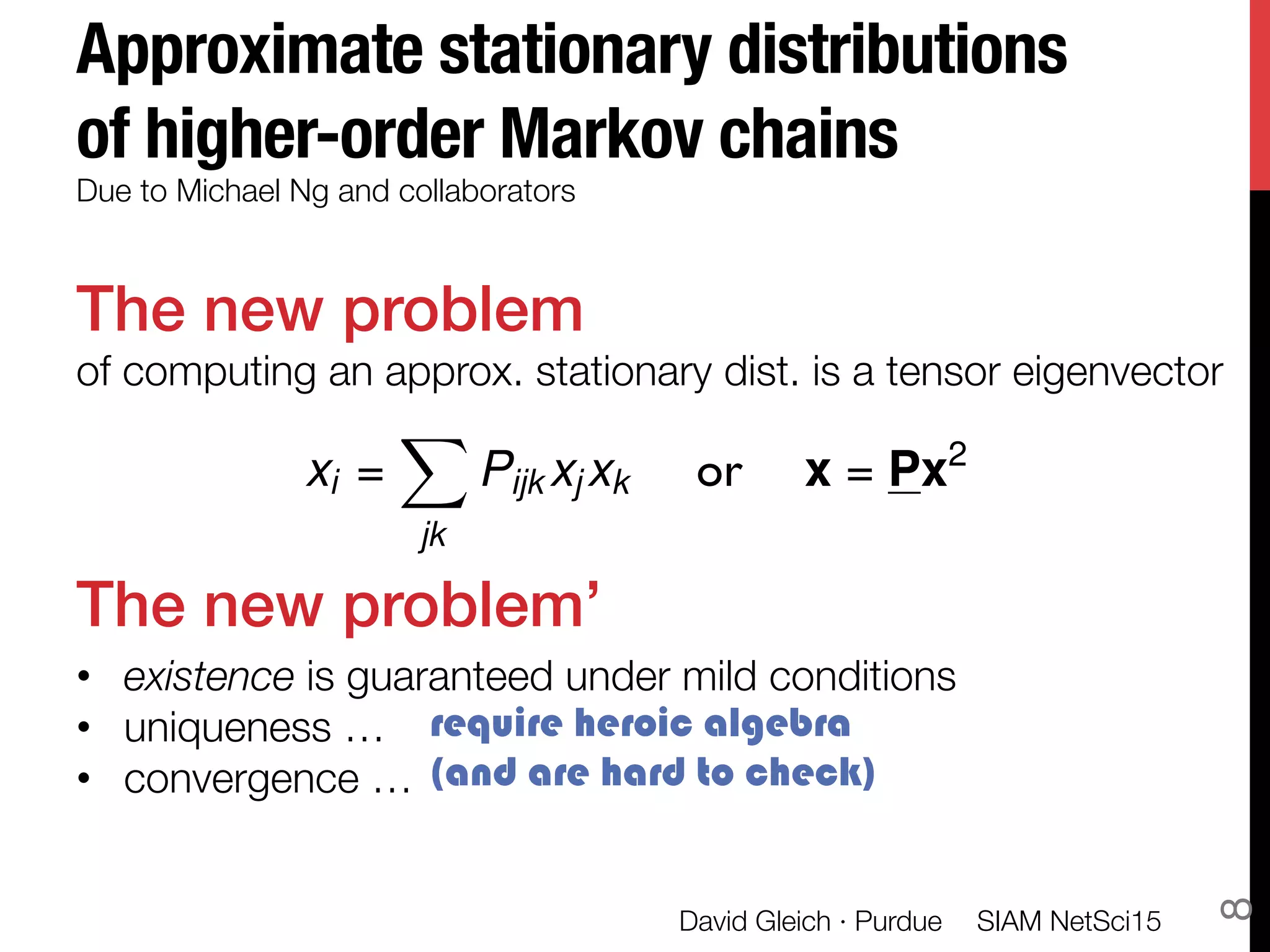

![The Markov matrix for Spacey Random Walks](https://image.slidesharecdn.com/spacey-random-surfer-talk-150516162138-lva1-app6892/75/Spacey-random-walks-and-higher-order-Markov-chains-20-2048.jpg)

A necessary condition for a stationary distribution (otherwise it makes no sense):

Property B. Let $P$ be an order-$m$, $n$-dimensional probability table. Then $P$ has property B if there is a unique stationary distribution associated with all stochastic combinations of the last $m - 2$ modes. That is,

$$M = \sum_{k, \ell, \ldots} P(:, :, k, \ell, \ldots)\, \gamma_{k, \ell, \ldots}$$

defines a Markov chain with a unique Perron root when all the $\gamma$'s are positive and sum to one.

The dynamical system for a spacey random walk is

$$\frac{dx}{dt} = \pi[M(x)] - x, \qquad M(x) = \sum_k P(:, :, k)\, x_k.$$

This is the transition probability associated with guessing the last state based on history!
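A spacey random walk can be simulated directly. This minimal pure-Python sketch makes several assumptions:

- The layout `P[i][j][k]` = P(X_{t+1} = i | X_t = j, X_{t-1} = k).
- The test data are nearly uniform.
- The names `random_transition_tensor`, `spacey_M`, `spacey_random_walk` and `spacey_fixed_point` are hypothetical.

At each step, the walker replaces its true previous state with a guess drawn in proportion to its own visit counts. It then moves using the column P(:, current, guess). Averaged over the guess, this step uses exactly M(x) = Σ_k P(:, :, k) x_k. Under property B, a stationary point of dx/dt = π[M(x)] − x satisfies M(x) x = x. The sketch therefore also computes that fixed point for comparison. For this tensor the occupation frequencies should end up close to it. For general tensors, convergence is not guaranteed.

```python
import random


def random_transition_tensor(n, seed=0):
    """Hypothetical test data: P[i][j][k] = Prob(X_{t+1}=i | X_t=j, X_{t-1}=k),
    with entries drawn from [1, 2] so each column is close to uniform."""
    rng = random.Random(seed)
    P = [[[0.0] * n for _ in range(n)] for _ in range(n)]
    for j in range(n):
        for k in range(n):
            col = [rng.uniform(1.0, 2.0) for _ in range(n)]
            total = sum(col)
            for i in range(n):
                P[i][j][k] = col[i] / total
    return P


def spacey_M(P, x):
    """M(x) = sum_k P(:, :, k) x[k], as a column-stochastic list of rows."""
    n = len(P)
    return [[sum(P[i][j][k] * x[k] for k in range(n)) for j in range(n)]
            for i in range(n)]


def spacey_random_walk(P, steps, start=0, seed=1):
    """Simulate a spacey random walk; return the occupation frequencies.

    Instead of remembering X_{t-1}, the walker guesses it by sampling a
    state in proportion to its own visit counts, then moves with
    probability P[i][current][guess].
    """
    rng = random.Random(seed)
    n = len(P)
    counts = [0] * n
    counts[start] = 1  # the history starts with the initial state
    current = start
    for _ in range(steps):
        guess = rng.choices(range(n), weights=counts)[0]
        column = [P[i][current][guess] for i in range(n)]
        current = rng.choices(range(n), weights=column)[0]
        counts[current] += 1
    total = sum(counts)
    return [c / total for c in counts]


def spacey_fixed_point(P, iters=1000):
    """Solve M(x) x = x, i.e. x[i] = sum_{j,k} P[i][j][k] x[j] x[k],
    by fixed-point iteration (converges here; not guaranteed in general)."""
    n = len(P)
    x = [1.0 / n] * n
    for _ in range(iters):
        M = spacey_M(P, x)
        x = [sum(M[i][j] * x[j] for j in range(n)) for i in range(n)]
        total = sum(x)  # renormalize to stop rounding drift in sum(x)
        x = [xi / total for xi in x]
    return x


P = random_transition_tensor(3)
freq = spacey_random_walk(P, steps=100_000)
x = spacey_fixed_point(P)
print("occupation frequencies:", freq)
print("fixed point of M(x) x = x:", x)
```

Note that M(x) x = x is the same equation as the rank-1 approximation x_i = Σ_{j,k} P_{ijk} x_j x_k. This is how the spacey random walk gives a stochastic-process reading of that approximation.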

![Back to Multilinear PageRank](https://image.slidesharecdn.com/spacey-random-surfer-talk-150516162138-lva1-app6892/75/Spacey-random-walks-and-higher-order-Markov-chains-22-2048.jpg)

The Multilinear PageRank problem is what we call a spacey random surfer model.

• This is a spacey random walk.

• We add random jumps with probability $(1 - \alpha)$.

It's also a vertex-reinforced random walk. Thus, it has a stationary probability if the dynamical system

$$\frac{dx}{dt} = \pi[M(x)] - x, \qquad M(x) = \alpha \sum_k P(:, :, k)\, x_k + (1 - \alpha)\, v e^T$$

converges, where $e$ is the vector of all ones. This occurs when $\alpha < 1/\text{order}$!
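The spacey random surfer's stationary points solve x = α P(x, x) + (1 − α) v, where P(x, x)_i = Σ_{j,k} P_{ijk} x_j x_k. Equivalently, M(x) x = x. Below is a minimal pure-Python sketch of the simple fixed-point iteration for a second-order chain, that is, a third-order table. It assumes:

- the `P[i][j][k]` layout;
- a uniform jump vector `v`;
- α = 0.45;
- hypothetical helper names.

In this case, the map is a contraction in the 1-norm with factor 2α. The iteration therefore converges to the unique solution whenever α < 1/2.

```python
import random


def random_transition_tensor(n, seed=0):
    """Hypothetical test data: P[i][j][k] = Prob(X_{t+1}=i | X_t=j, X_{t-1}=k)."""
    rng = random.Random(seed)
    P = [[[0.0] * n for _ in range(n)] for _ in range(n)]
    for j in range(n):
        for k in range(n):
            col = [rng.random() + 0.01 for _ in range(n)]
            total = sum(col)
            for i in range(n):
                P[i][j][k] = col[i] / total
    return P


def multilinear_pagerank(P, alpha, v, tol=1e-12, max_iter=10_000):
    """Fixed-point iteration x <- alpha * P(x, x) + (1 - alpha) * v, where
    P(x, x)[i] = sum_{j,k} P[i][j][k] x[j] x[k].

    For alpha < 1/2 the map is a 1-norm contraction (factor 2 * alpha).
    """
    n = len(P)
    x = list(v)
    for _ in range(max_iter):
        new = [alpha * sum(P[i][j][k] * x[j] * x[k]
                           for j in range(n) for k in range(n))
               + (1 - alpha) * v[i]
               for i in range(n)]
        change = sum(abs(a - b) for a, b in zip(new, x))
        x = new
        if change < tol:
            break
    return x


def surfer_M(P, alpha, v, x):
    """M(x) = alpha * sum_k P(:, :, k) x[k] + (1 - alpha) * v e^T,
    with e the all-ones vector, so every column jumps to v w.p. 1 - alpha."""
    n = len(P)
    return [[alpha * sum(P[i][j][k] * x[k] for k in range(n)) + (1 - alpha) * v[i]
             for j in range(n)]
            for i in range(n)]


n = 4
P = random_transition_tensor(n)
v = [1.0 / n] * n  # uniform jump vector (an assumption)
alpha = 0.45       # below 1/2, so the iteration is a contraction
x = multilinear_pagerank(P, alpha, v)
M = surfer_M(P, alpha, v, x)
Mx = [sum(M[i][j] * x[j] for j in range(n)) for i in range(n)]
print("multilinear PageRank x:", x)
print("max |M(x) x - x|:", max(abs(a - b) for a, b in zip(Mx, x)))
```

The final check M(x) x ≈ x ties the iterate back to the surfer matrix M(x). For larger α, this simple iteration can fail to converge.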