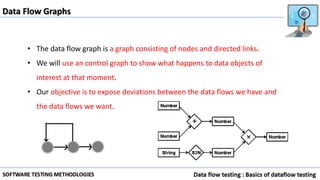

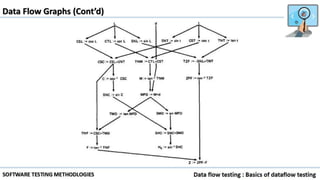

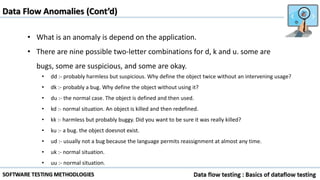

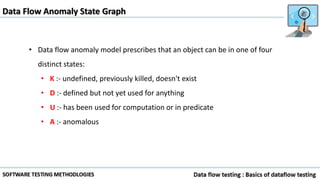

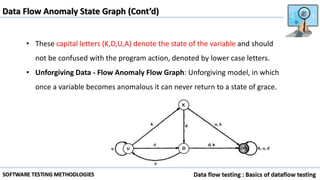

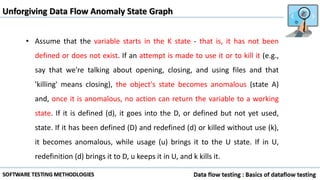

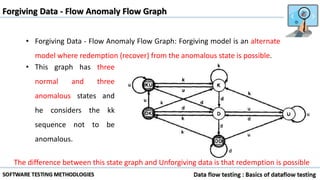

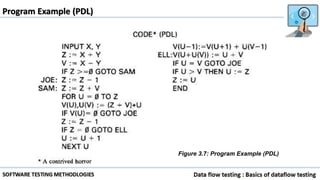

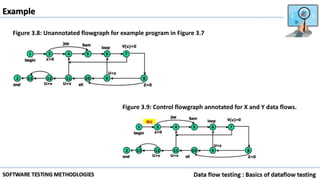

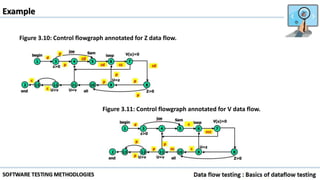

Data flow testing uses a program's control flow graph, annotated with symbols such as d (defined), k (killed), and u (used), to track the state of each variable and identify anomalies. Static analysis can detect some anomalies but is insufficient on its own, because it cannot fully analyze dynamic features such as pointers, concurrency, and interrupts. The data flow model represents each statement as a node and weights each link with the sequence of symbols describing the variable's state along that link. A sequence such as ku, in which a variable is used after being killed, indicates a bug.
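
The anomaly check described above can be sketched as a scan over the d/k/u symbols collected along one path for a single variable. This is a minimal illustration, not a definitive implementation: the function name `find_anomalies` and the table `ANOMALOUS_PAIRS` are hypothetical, and apart from ku (named in the text) the pairs treated as suspicious here are an assumption based on a common textbook classification.

```python
# Hypothetical table of suspicious two-symbol sequences for one variable.
# Only "ku" comes from the text; the others are an assumed, commonly cited set.
ANOMALOUS_PAIRS = {
    "ku": "used after being killed",
    "dd": "defined twice with no intervening use",
    "dk": "defined and then killed without being used",
    "kk": "killed twice",
}


def find_anomalies(path_actions):
    """Scan a string of d/k/u symbols and report anomalous adjacent pairs.

    Returns a list of (index, pair, reason) tuples, where index is the
    position of the first symbol of the pair in path_actions.
    """
    findings = []
    for i in range(len(path_actions) - 1):
        pair = path_actions[i:i + 2]
        if pair in ANOMALOUS_PAIRS:
            findings.append((i, pair, ANOMALOUS_PAIRS[pair]))
    return findings


# Symbols for one variable along one path: define, use, kill, use, define.
print(find_anomalies("dukud"))
```

A path such as `"duud"` yields no findings, while `"dukud"` reports the ku pair at index 2. A scan like this only covers the paths it is given, so it shares the limits of static analysis noted above.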