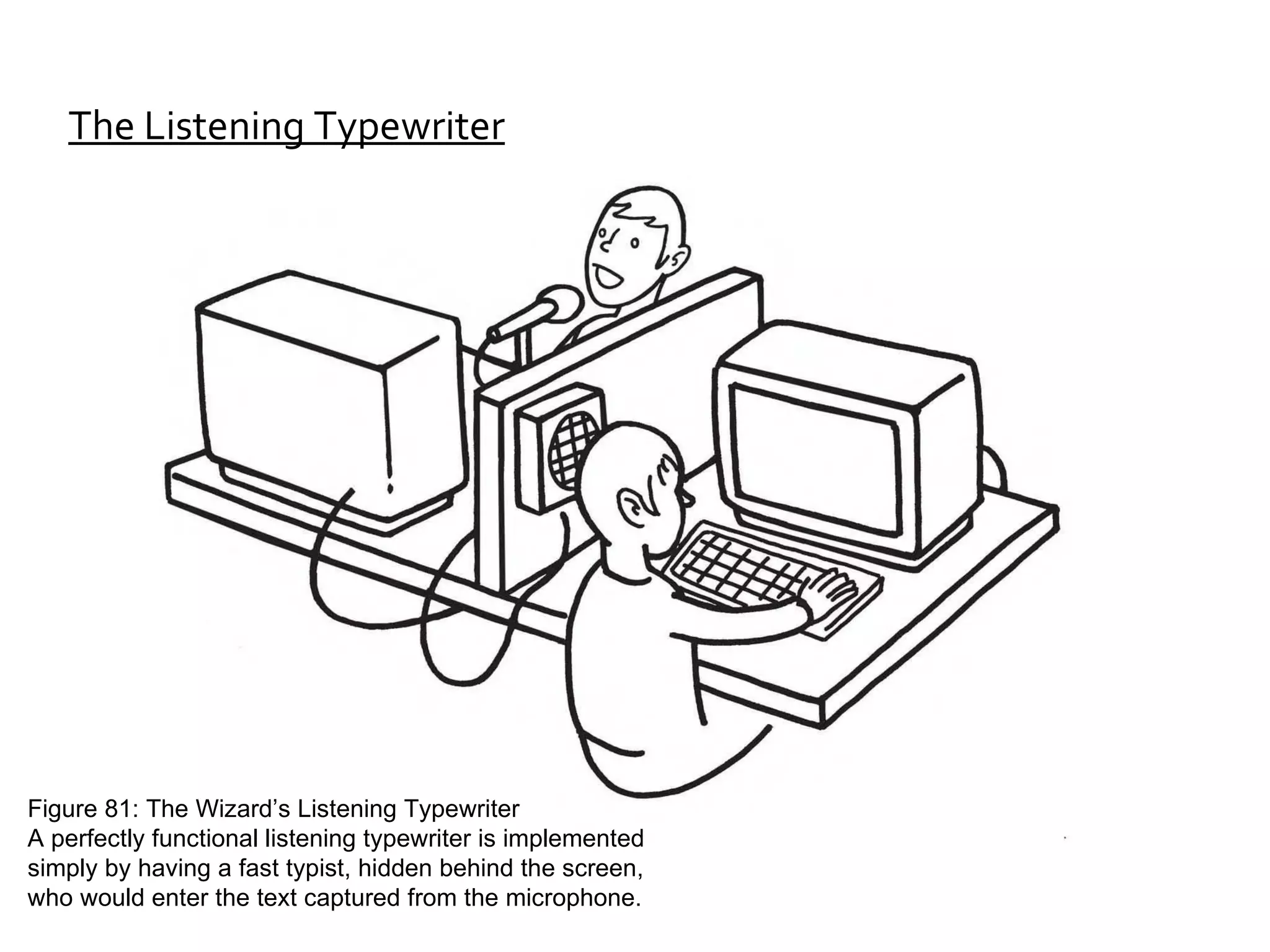

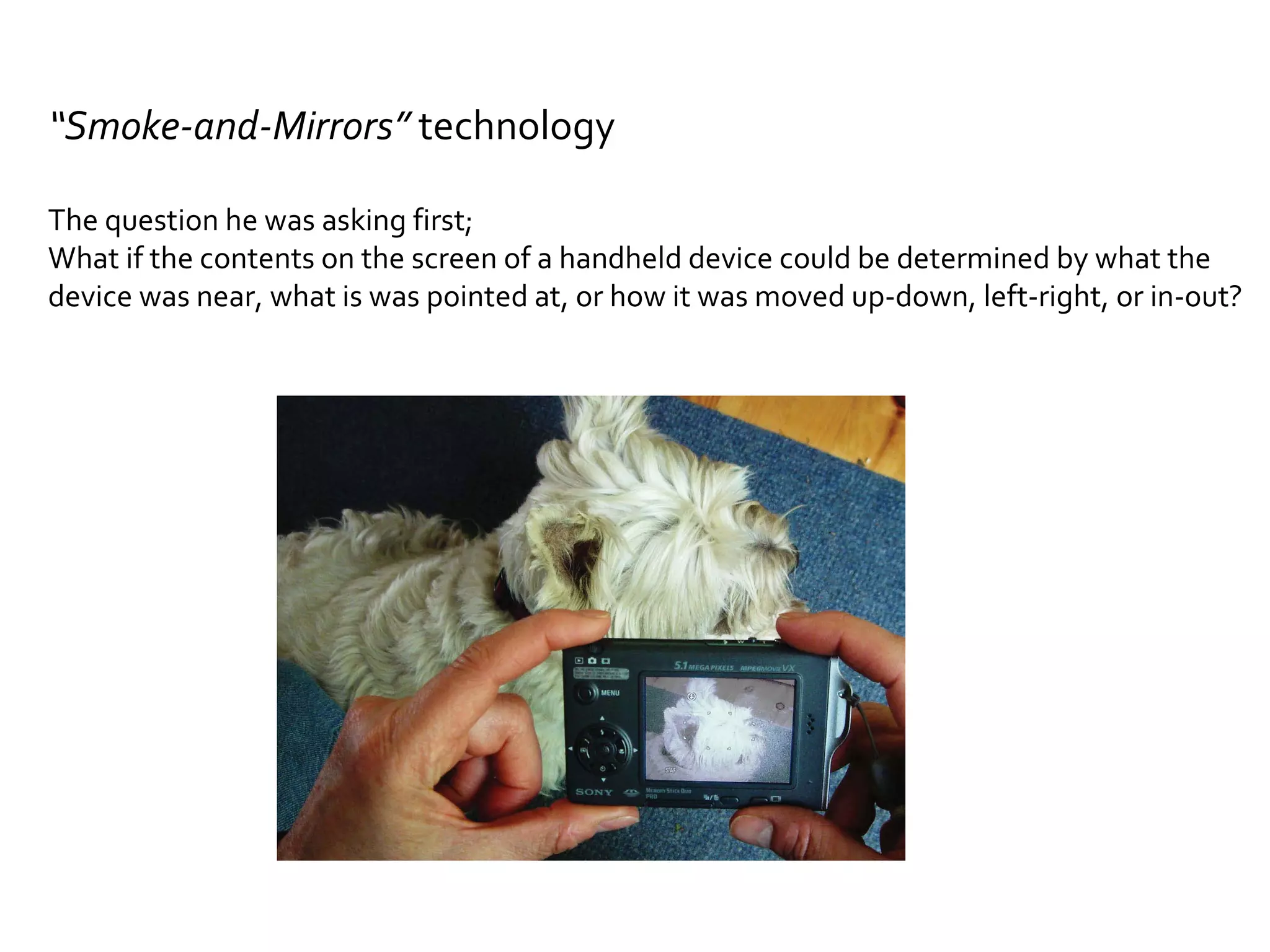

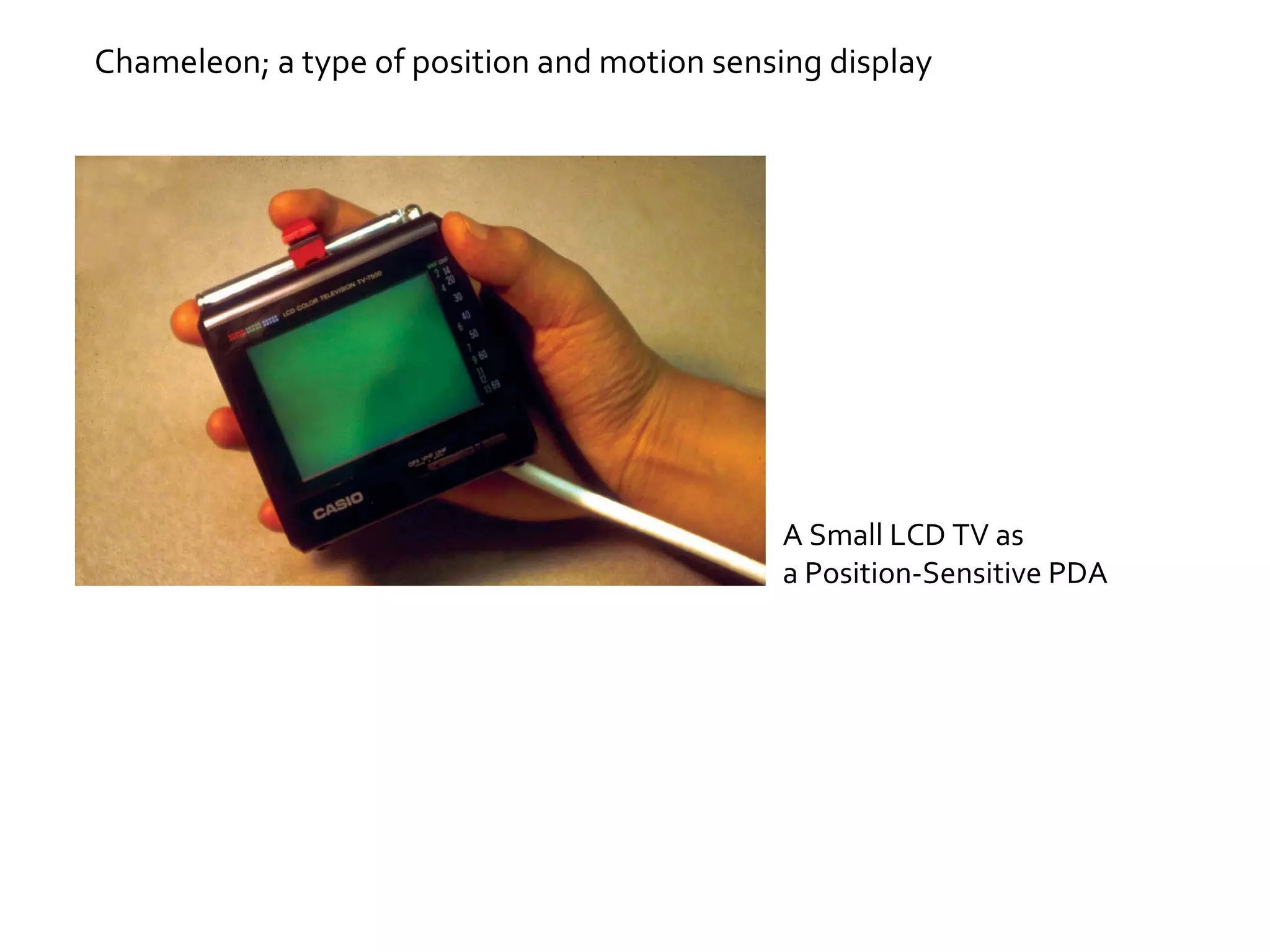

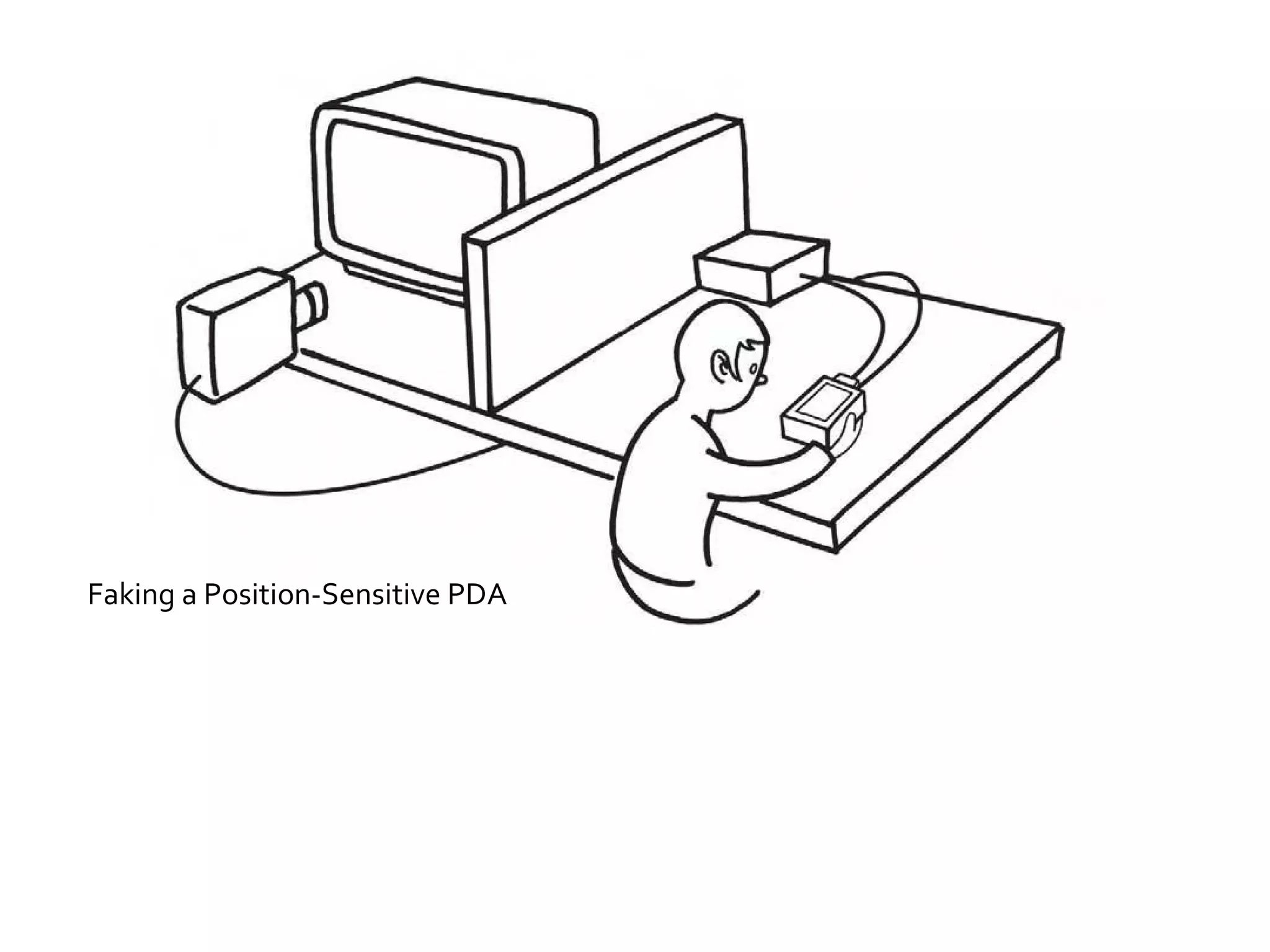

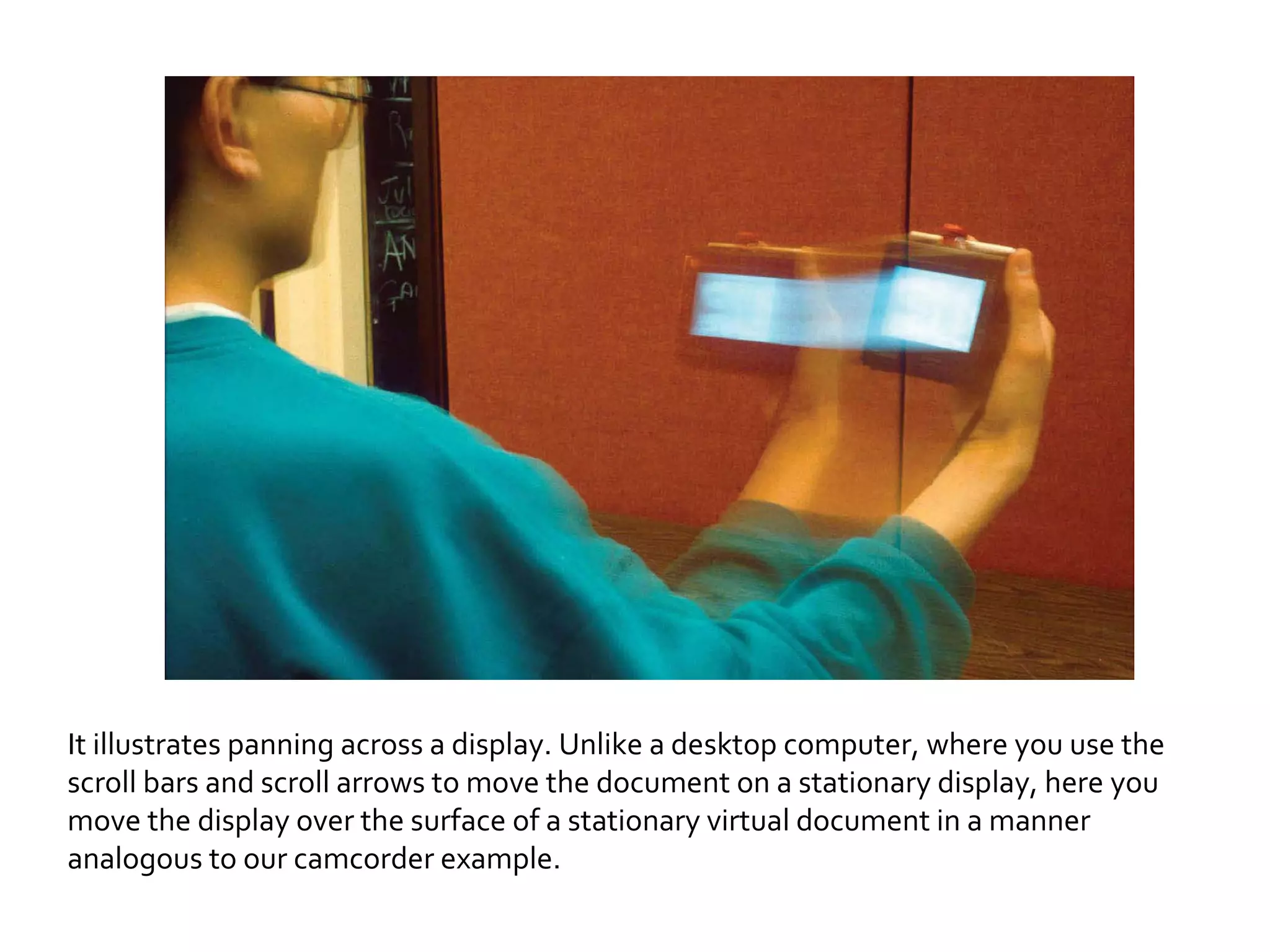

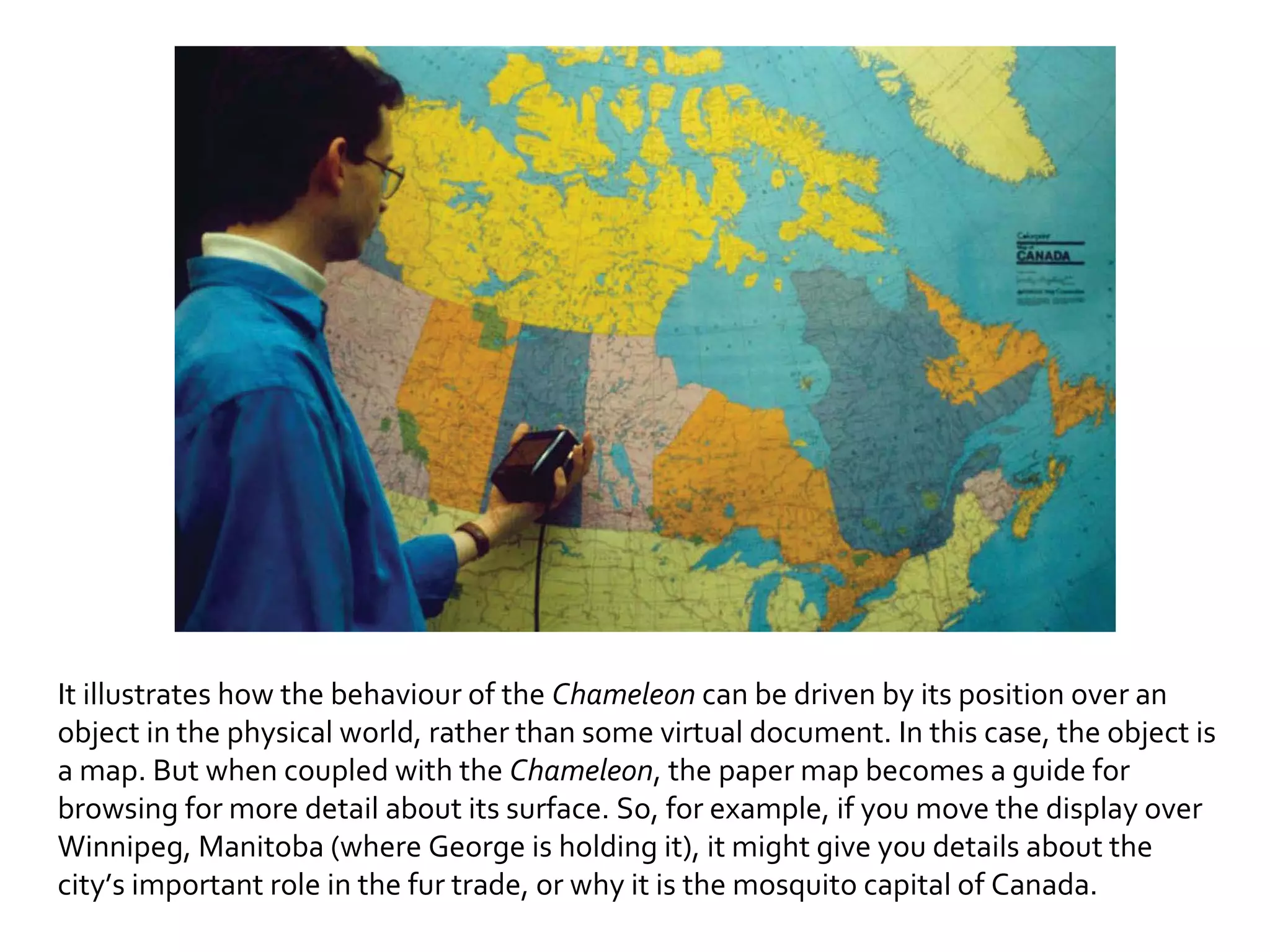

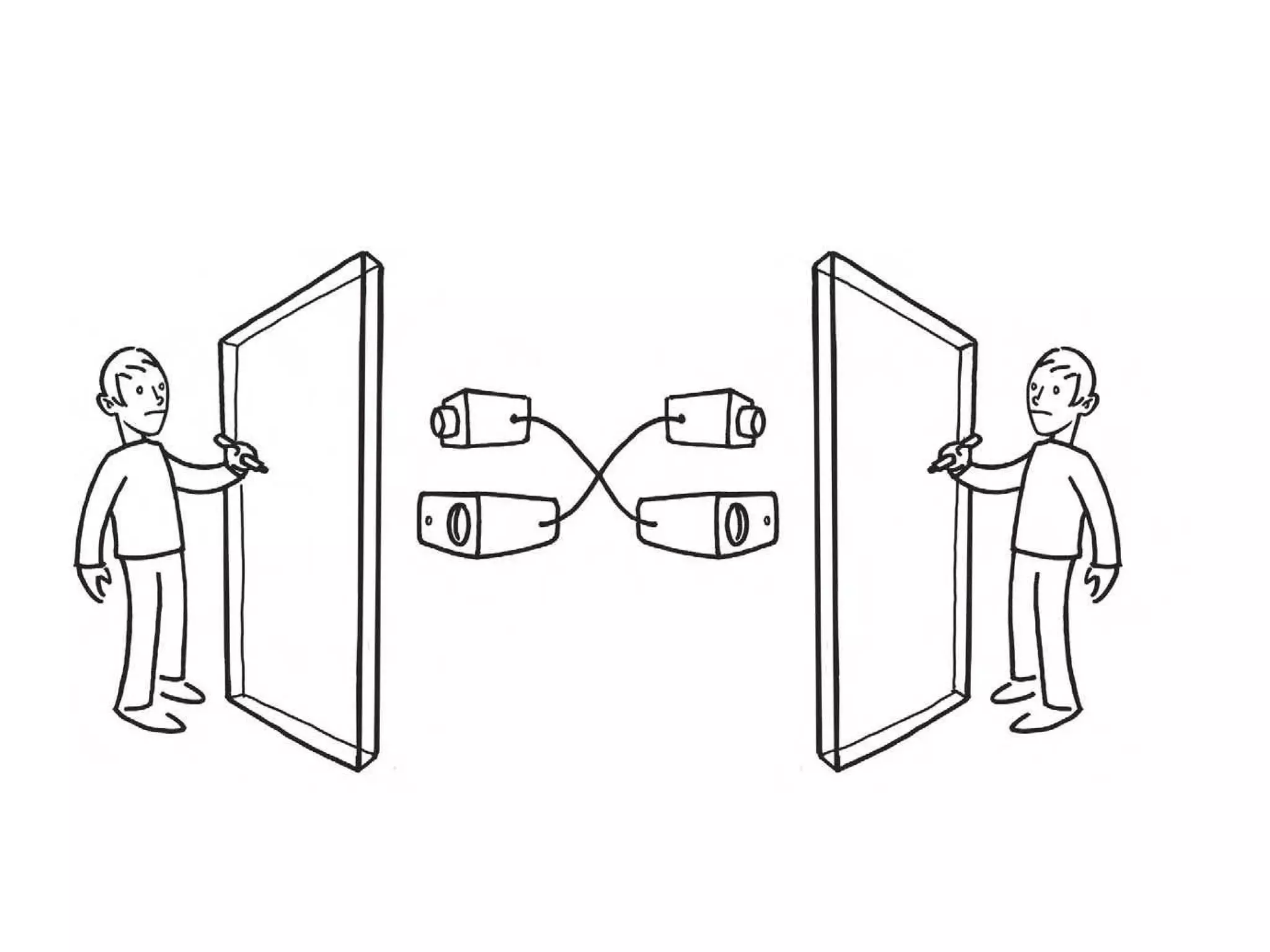

The document discusses Bill Buxton's approach to illuminating best practices in interaction design through examples of prototyping techniques such as the "Wizard of Oz" technique. It describes early prototypes, including a listening typewriter and a position-sensitive display called Chameleon, that were built with these methods before the underlying technology existed. The document advocates low-fidelity prototyping of interactive systems so that a design can be experienced before a fully functional system is implemented.