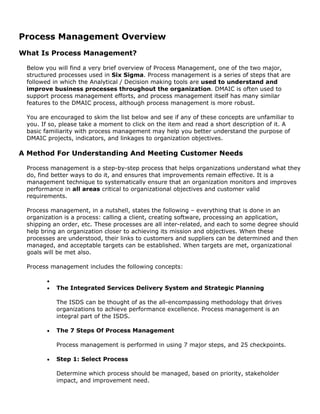

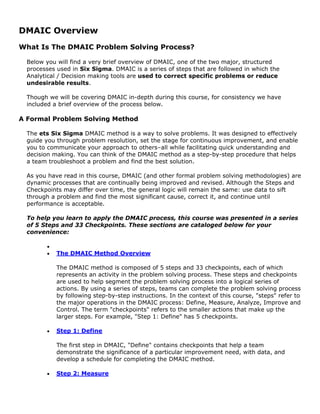

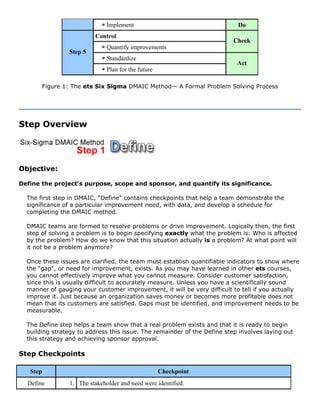

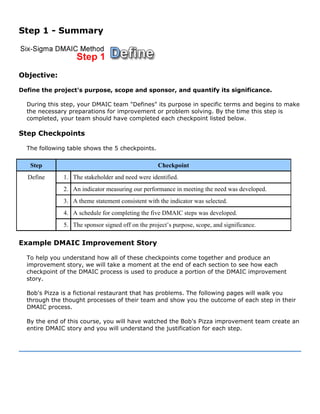

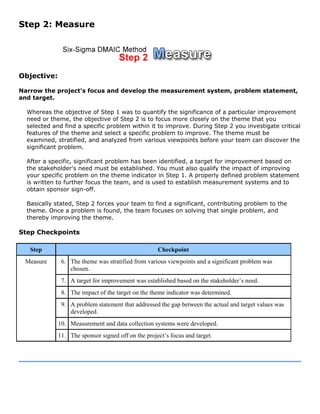

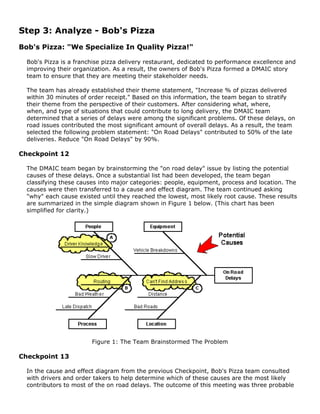

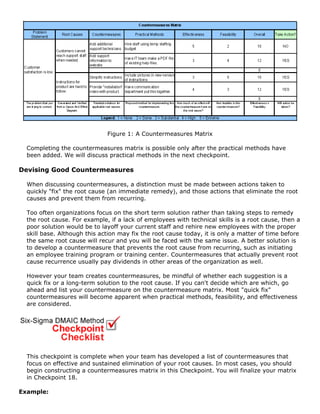

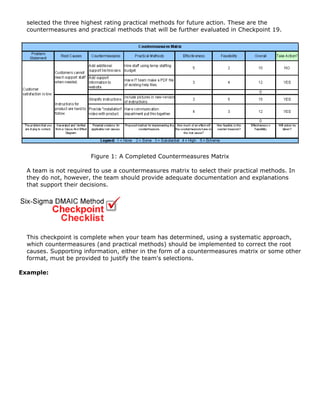

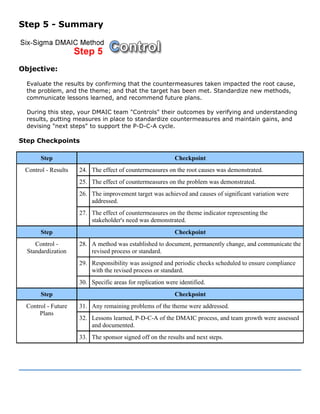

The document provides an overview of Six Sigma, a management system that uses data and systematic methods to continually improve quality and performance. It covers the five major areas of Six Sigma: analytical tools, decision-making tools, process management, the DMAIC (Define, Measure, Analyze, Improve, Control) problem-solving process, and leadership/strategic planning. The course gives a quick overview of key Six Sigma concepts, then walks through the DMAIC problem-solving process step by step using an example.