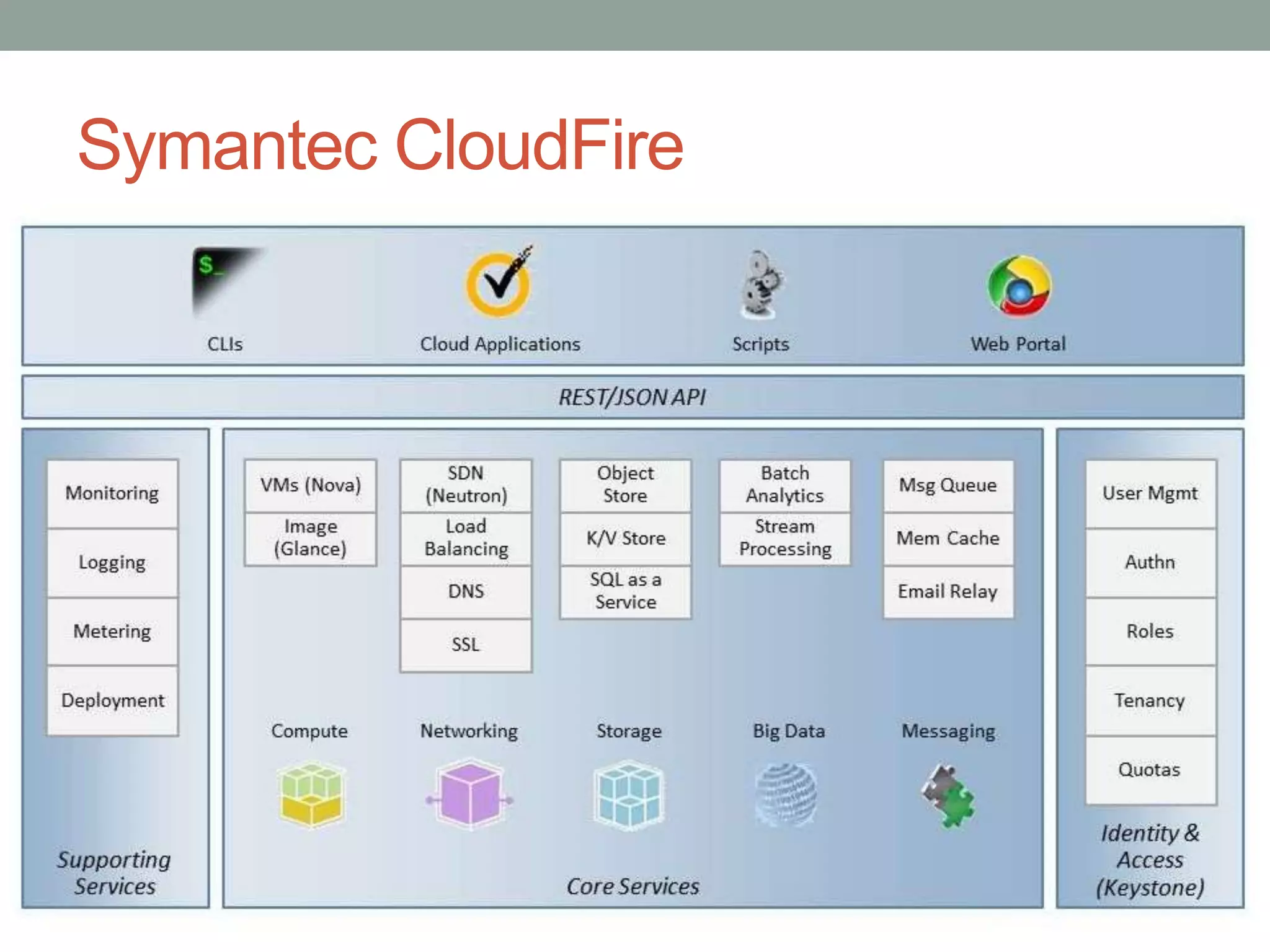

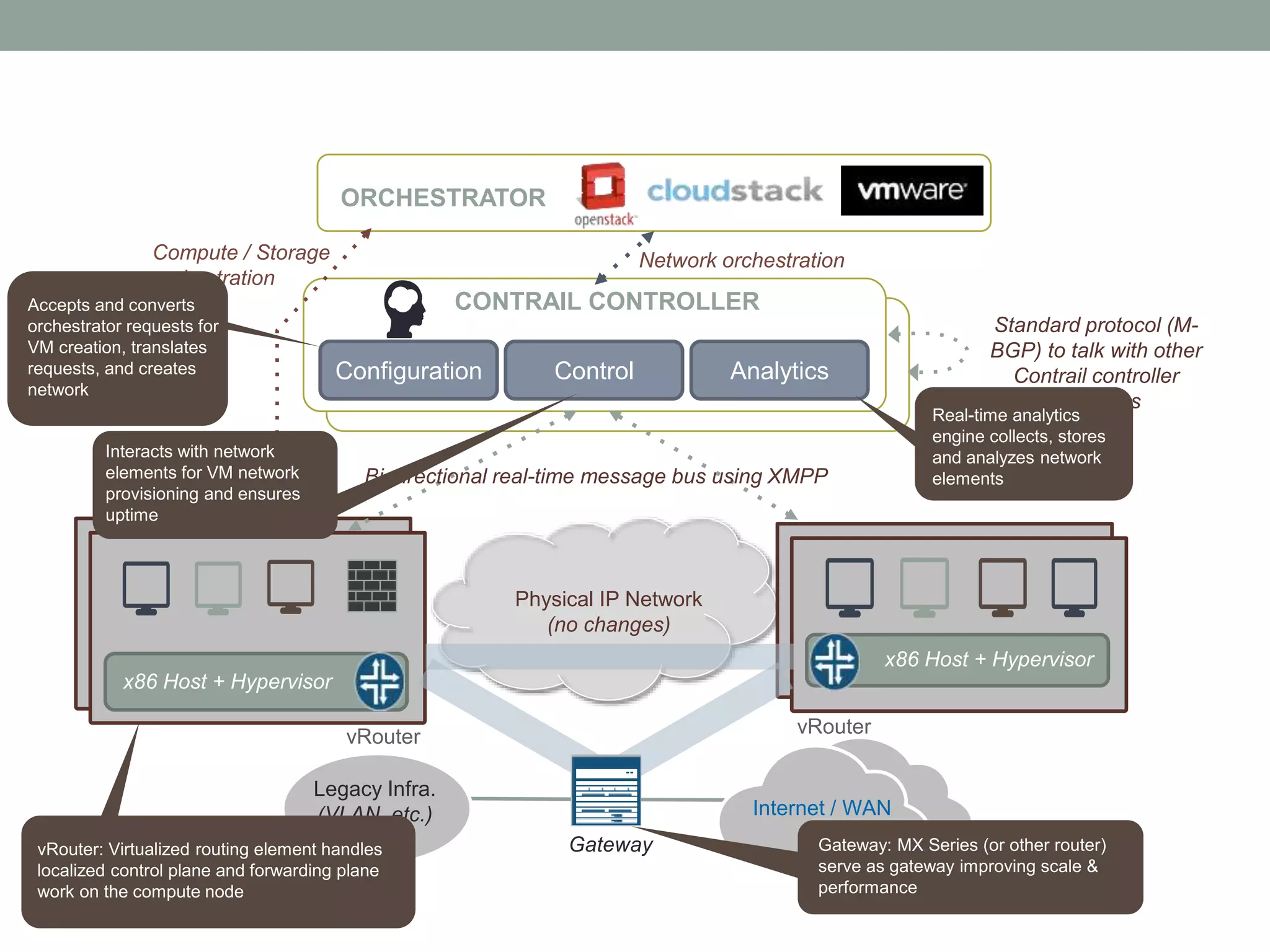

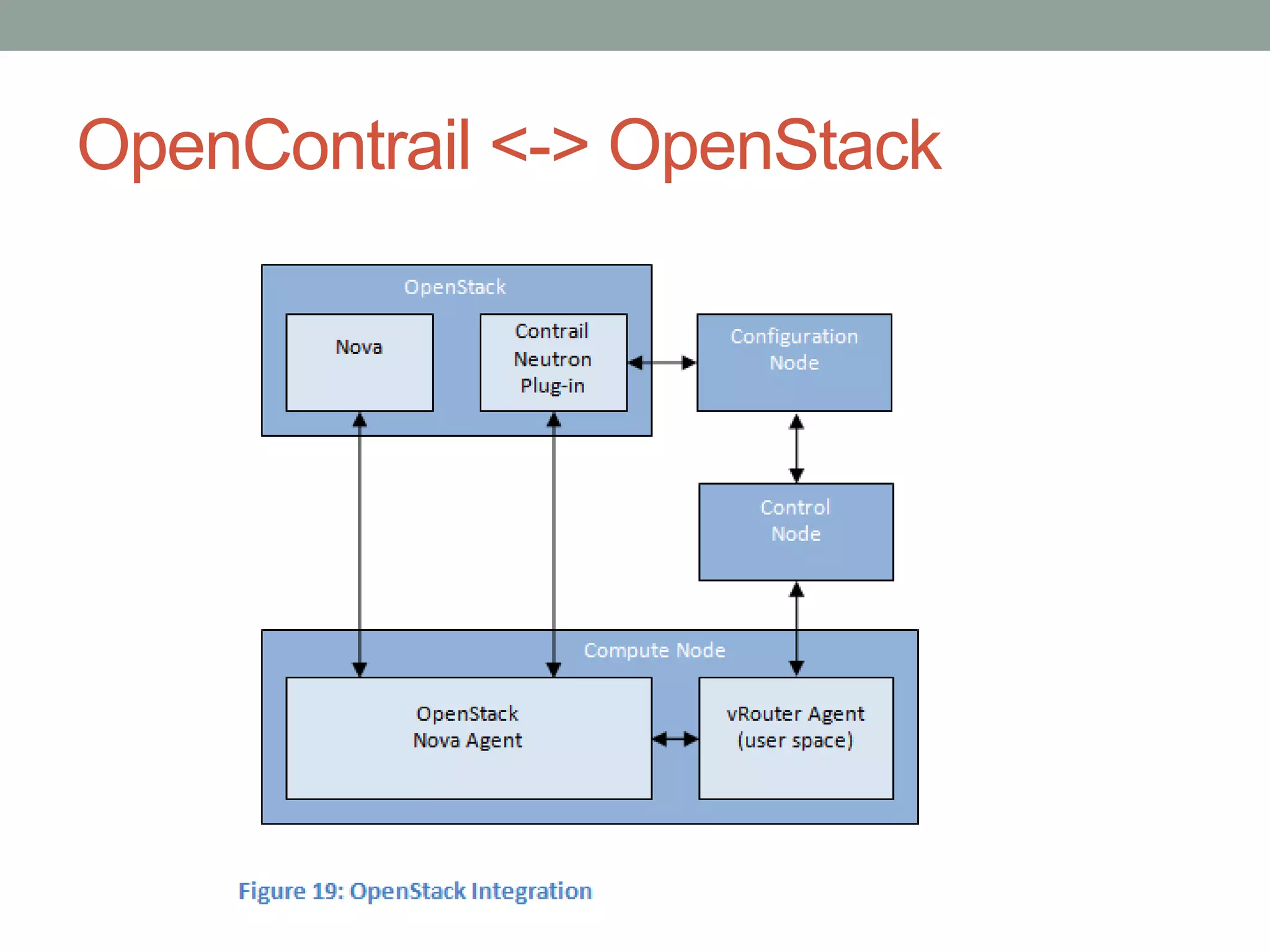

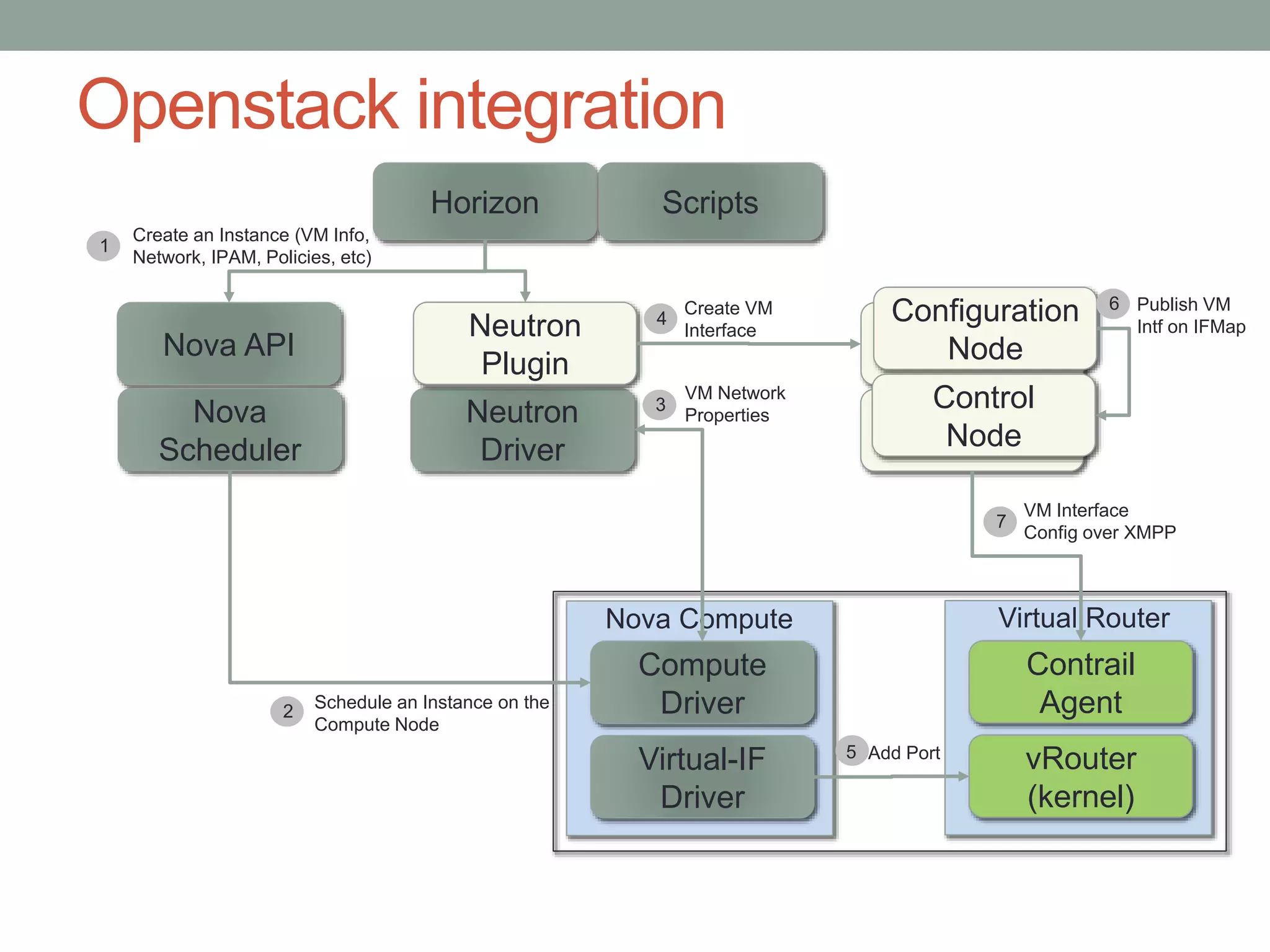

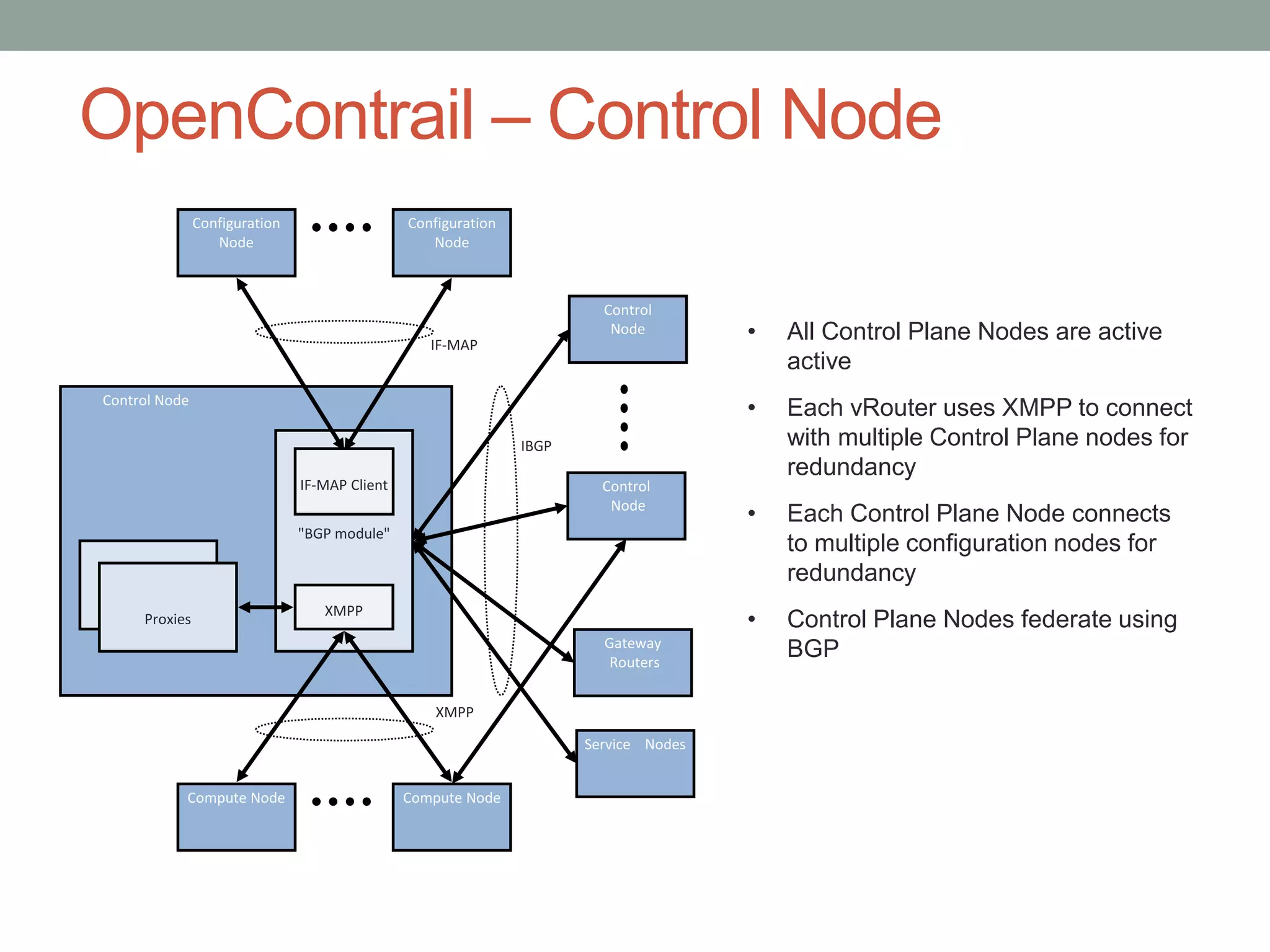

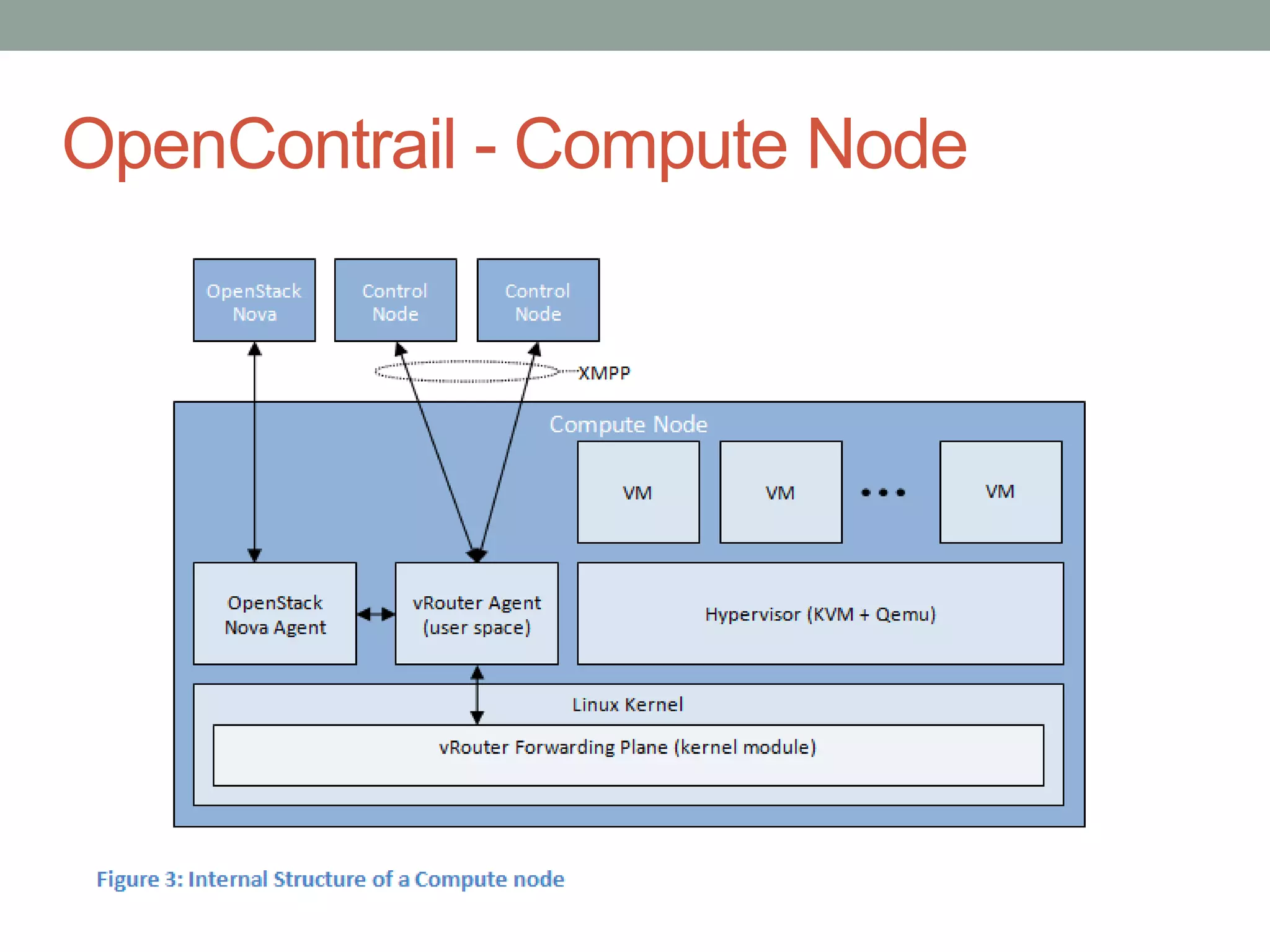

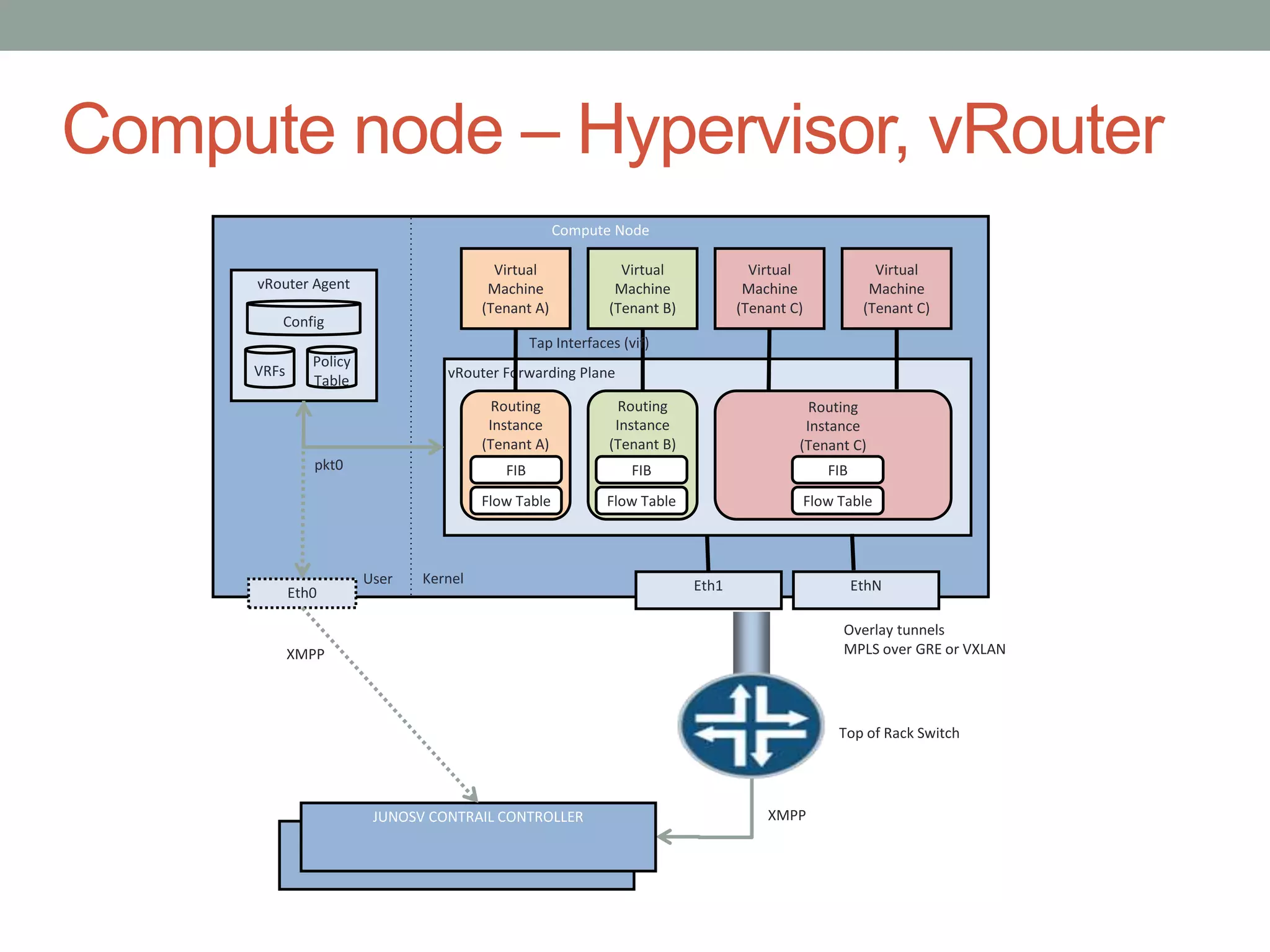

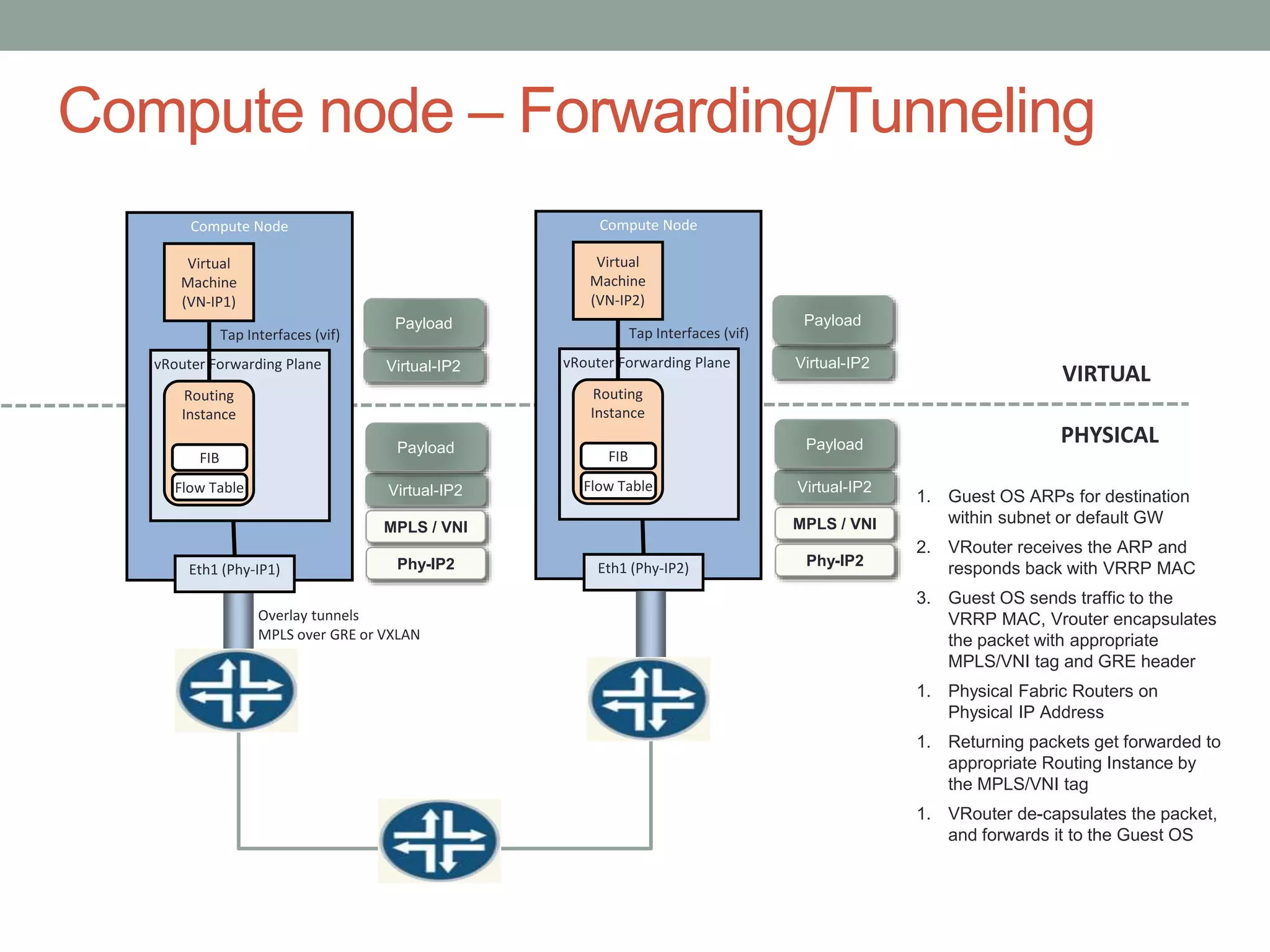

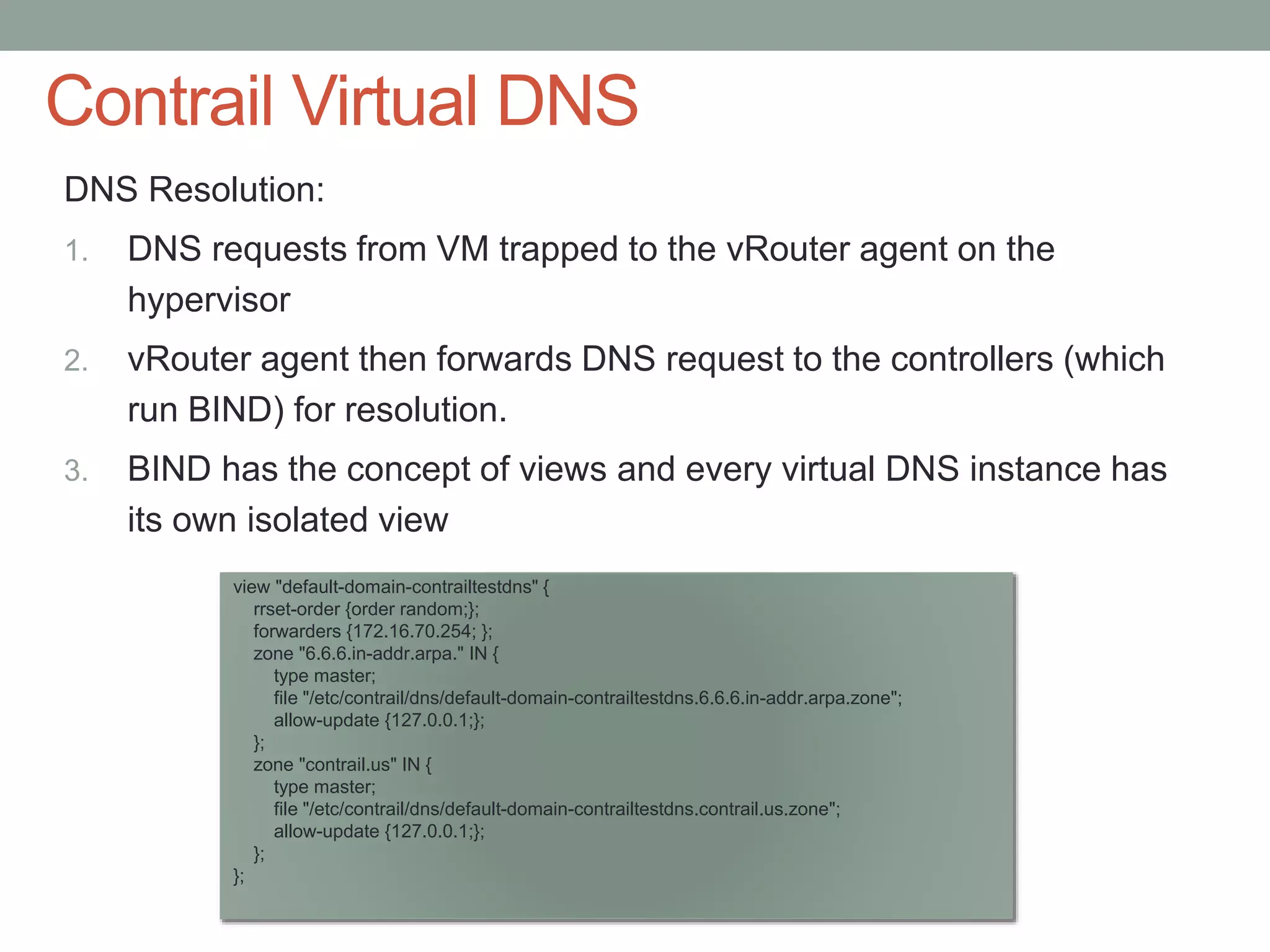

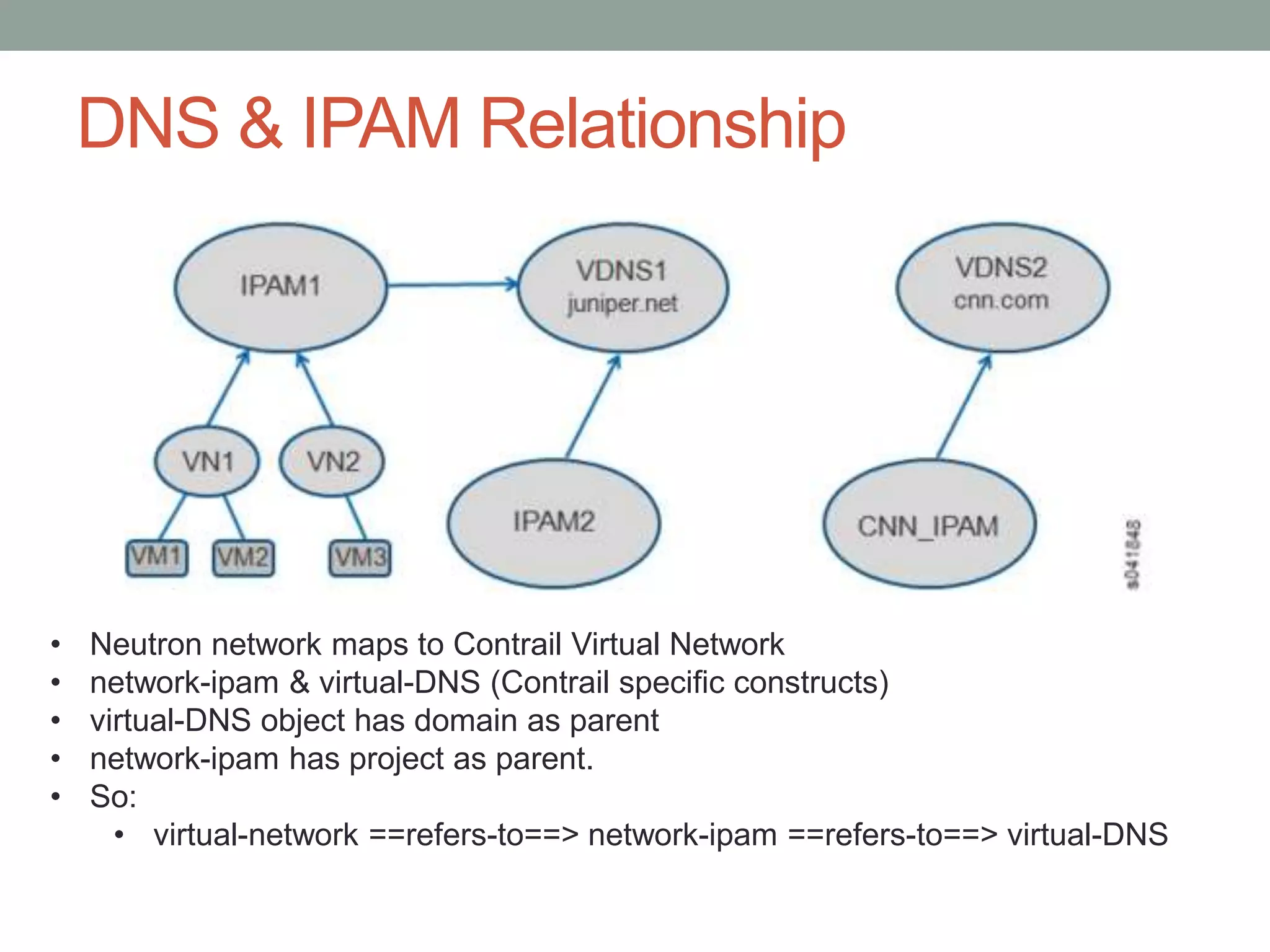

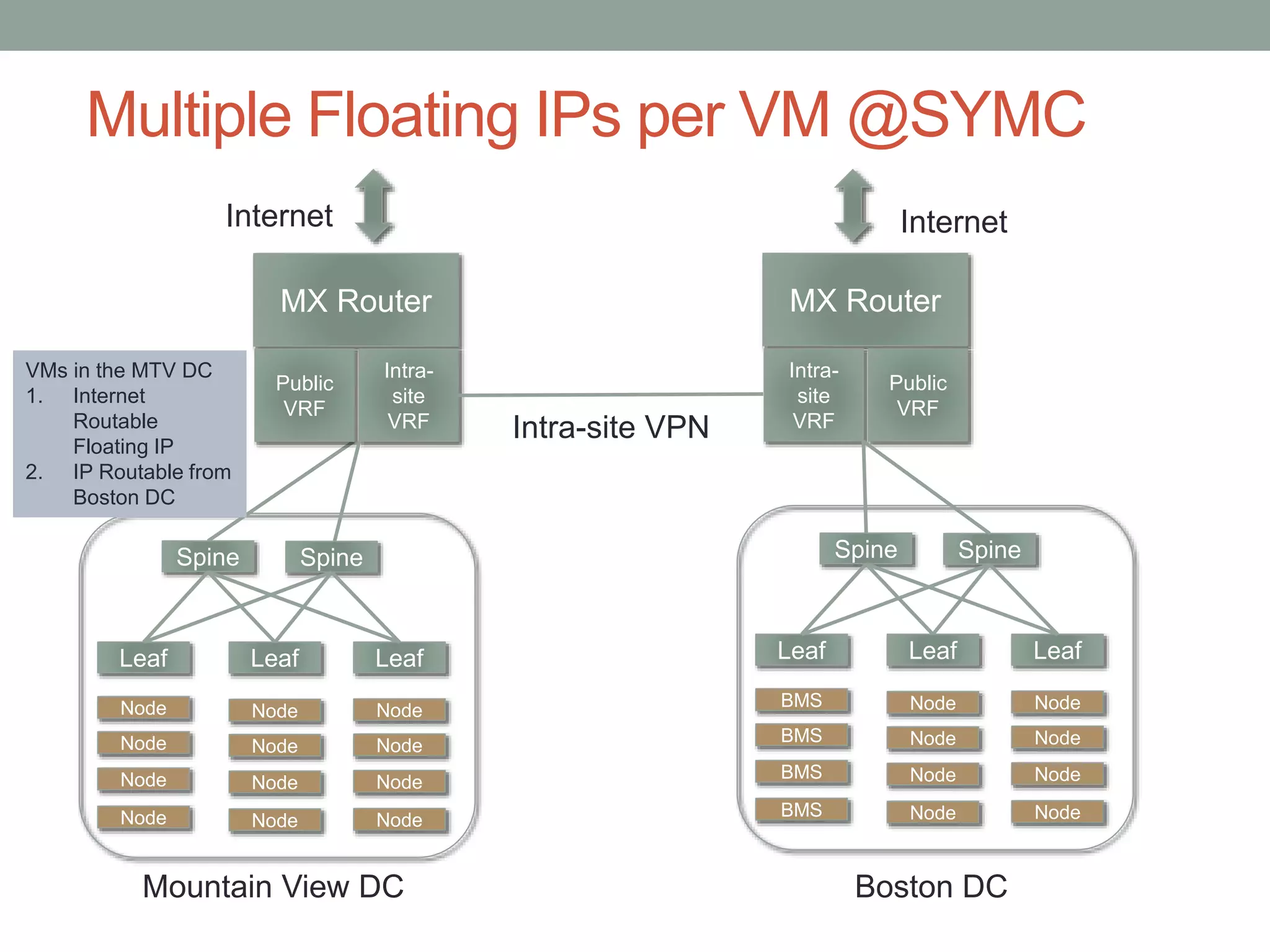

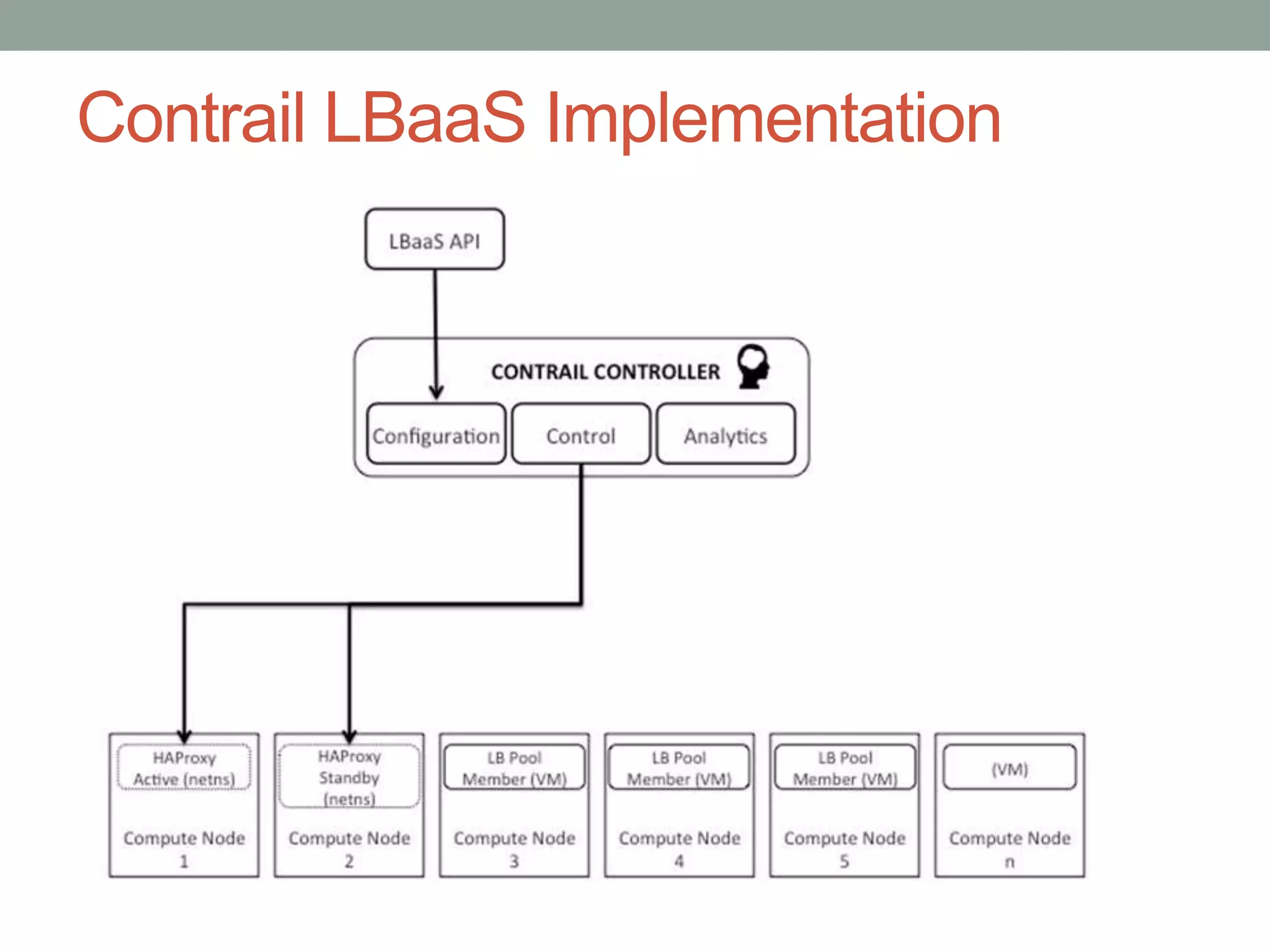

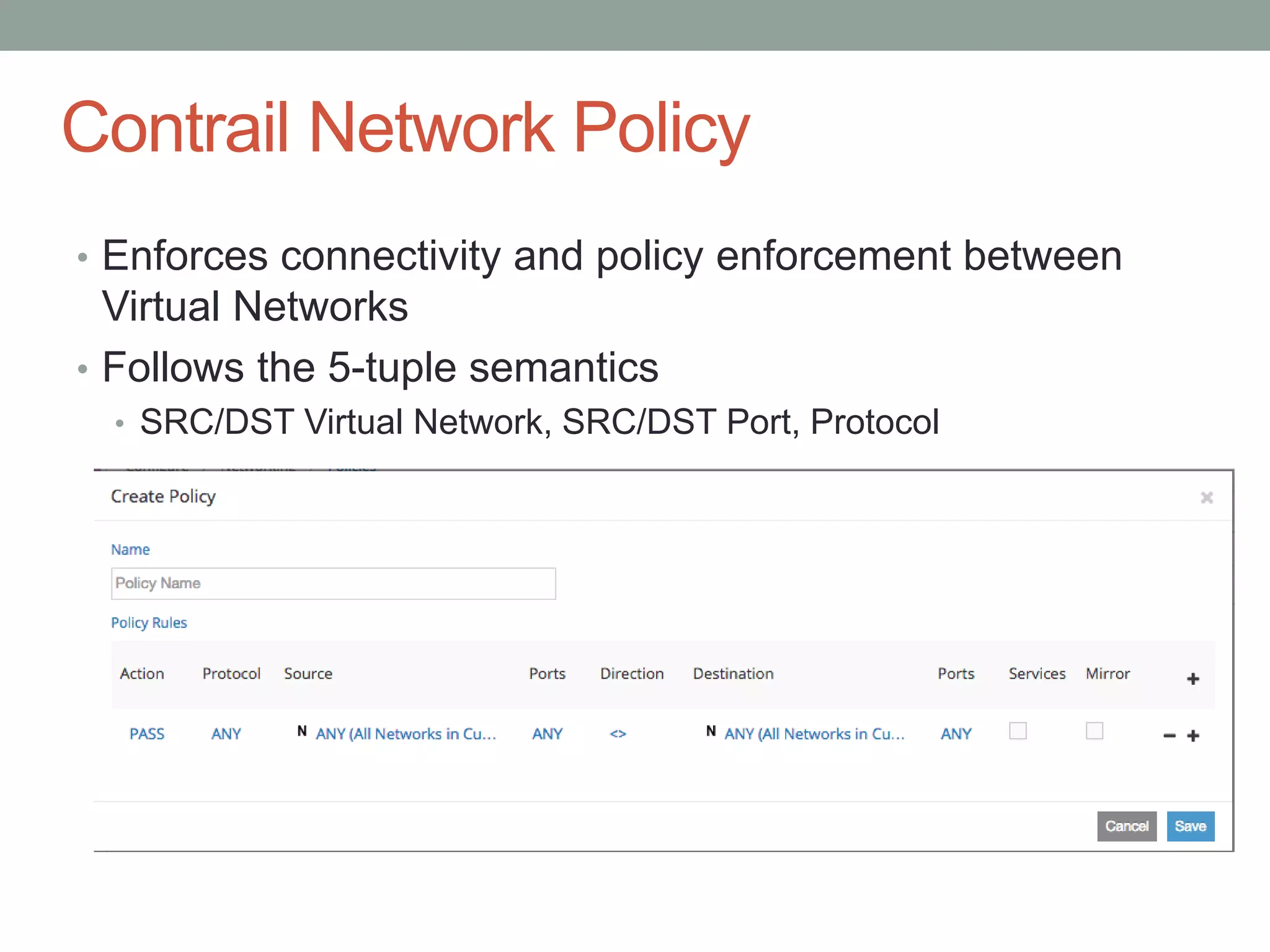

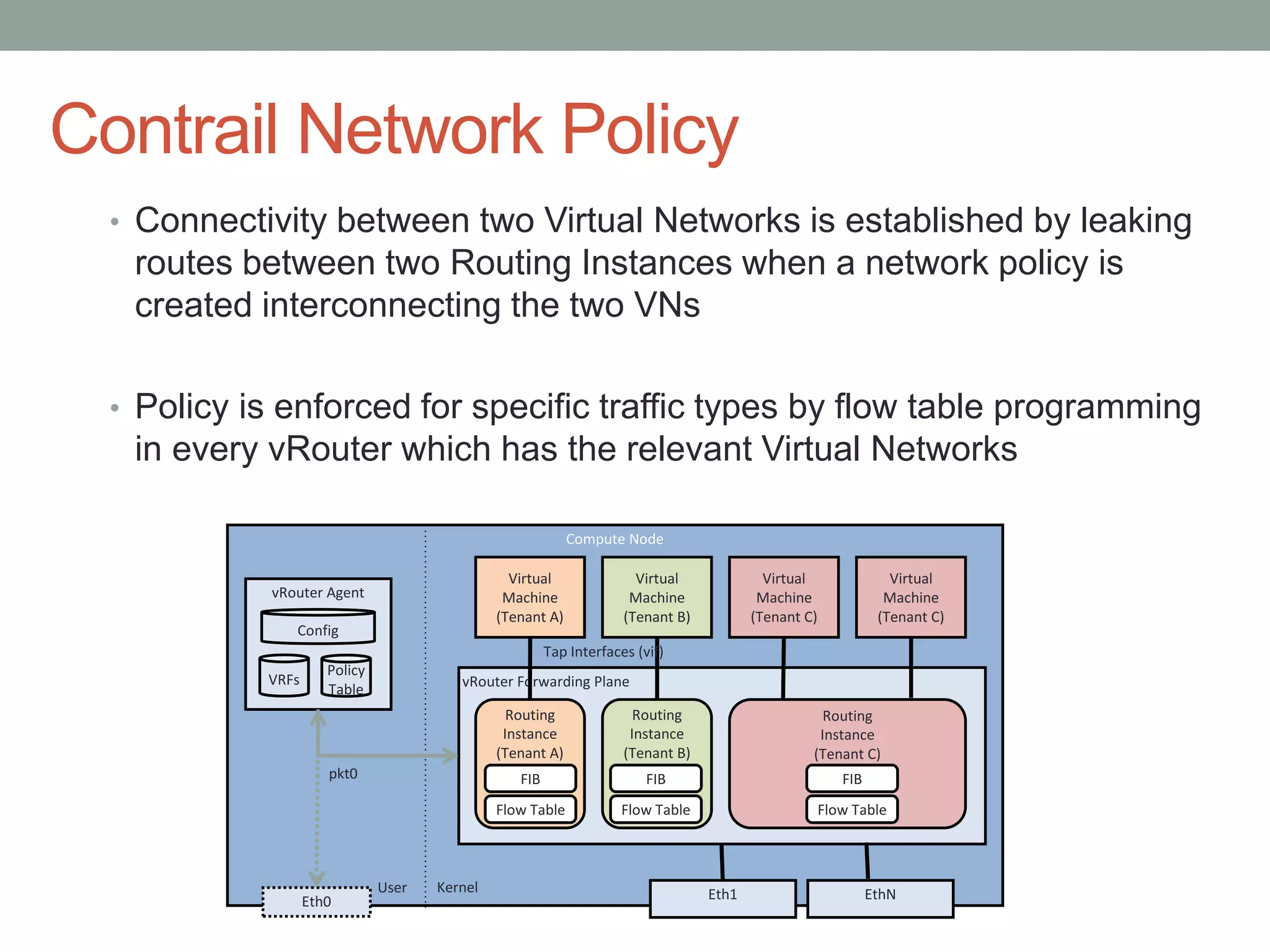

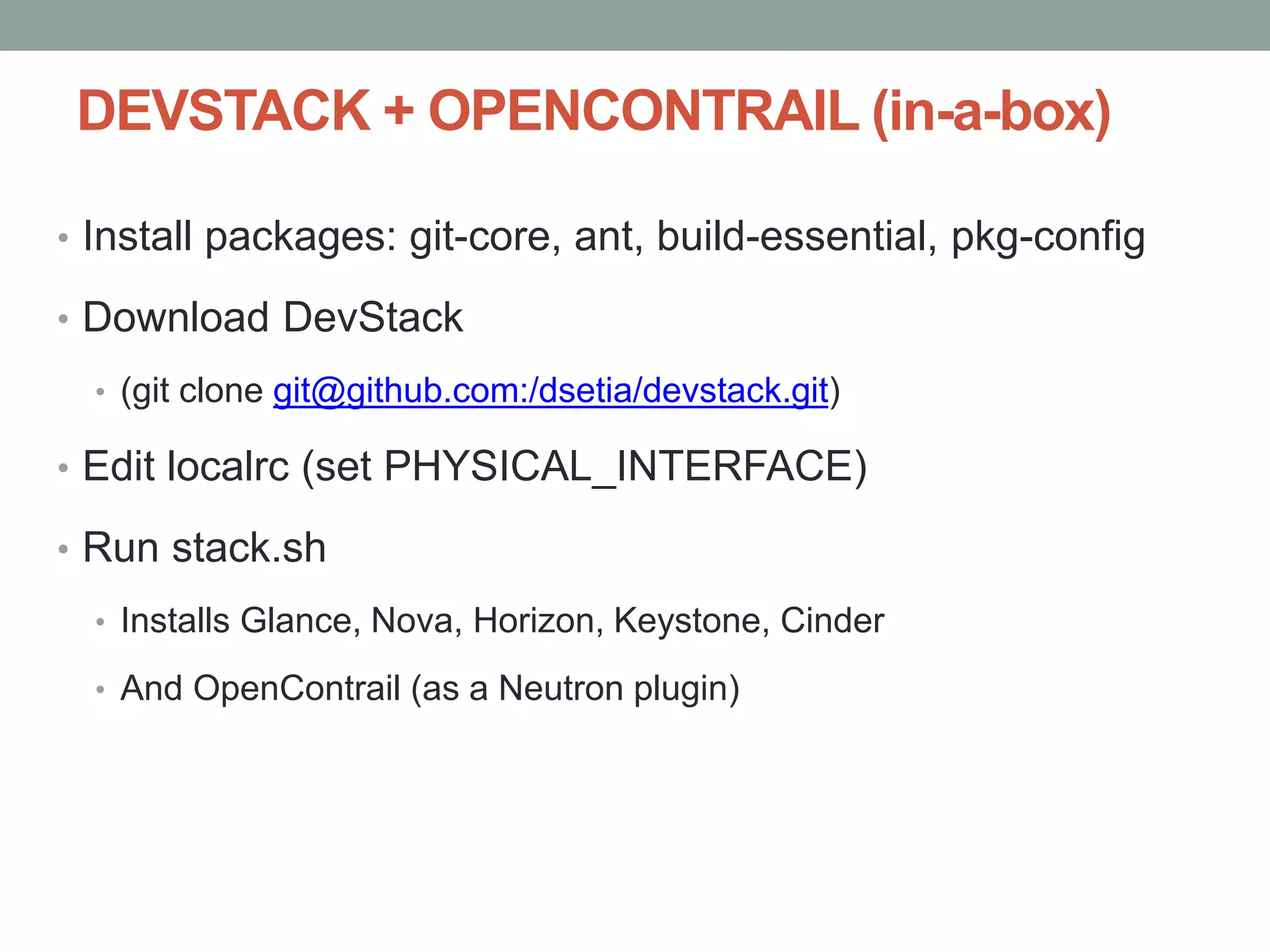

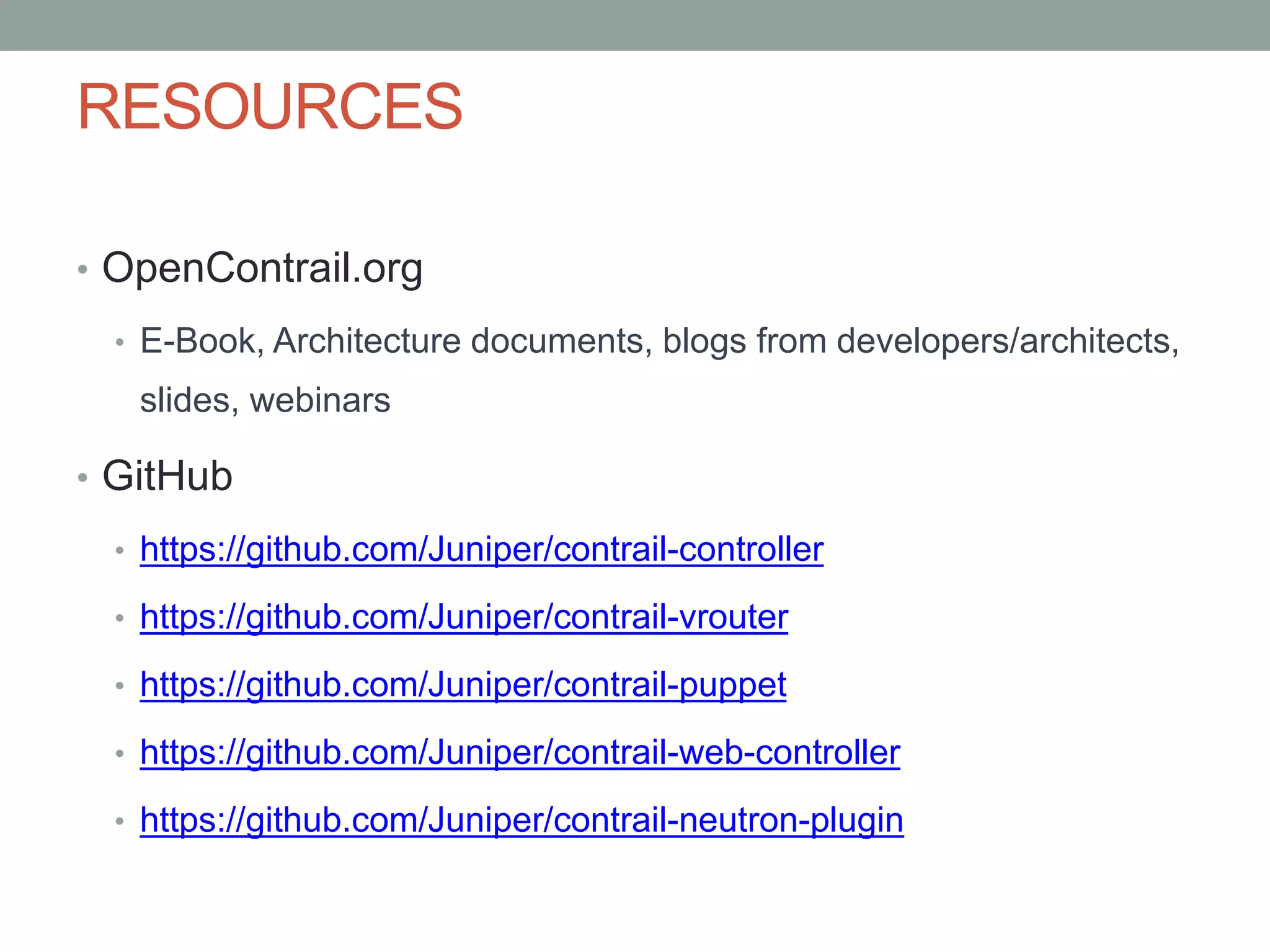

The document discusses how to architect and build a secure multi-tenant cloud infrastructure for SaaS applications, highlighting the importance of network virtualization for workloads. It introduces OpenContrail, an open-source network virtualization platform, and details its architecture, features, and integration with OpenStack. Key topics include DNS management within virtual networks, floating IPs, load balancing, and network policy enforcement to ensure isolation and fault tolerance among tenants.