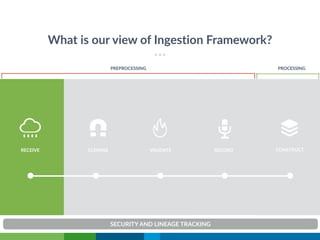

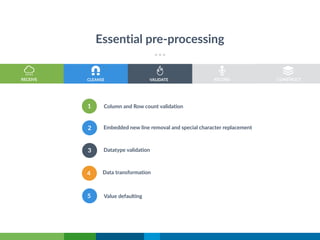

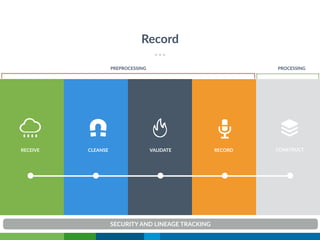

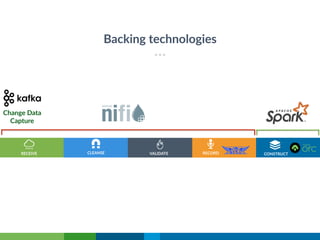

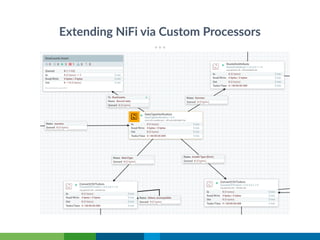

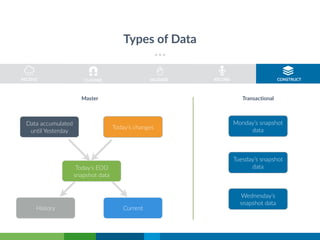

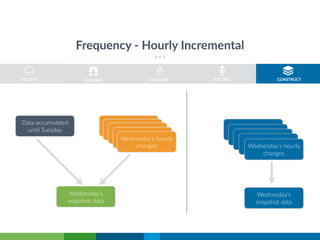

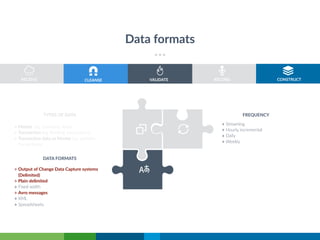

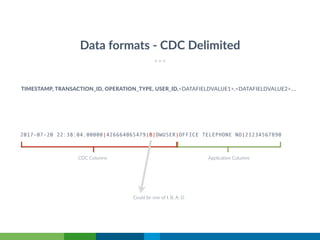

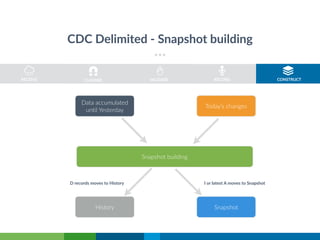

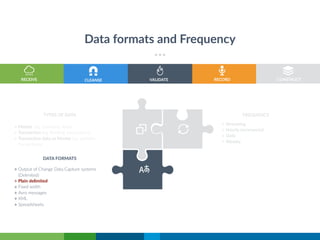

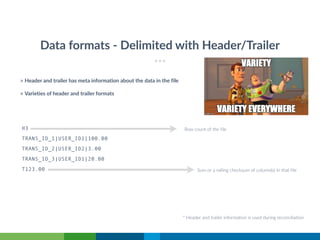

The document covers the evolution and architecture of a standardized data management and ingestion platform, an effort led by Arun Manivannan. It describes the difficulty of managing large volumes of data across many applications and regulatory environments, and the transition from legacy systems to a unified ingestion framework. Key processes in that framework include data validation, cleansing, and change data capture. The stated goals are to improve processing efficiency, manage data flows more effectively, and eventually open source the framework.