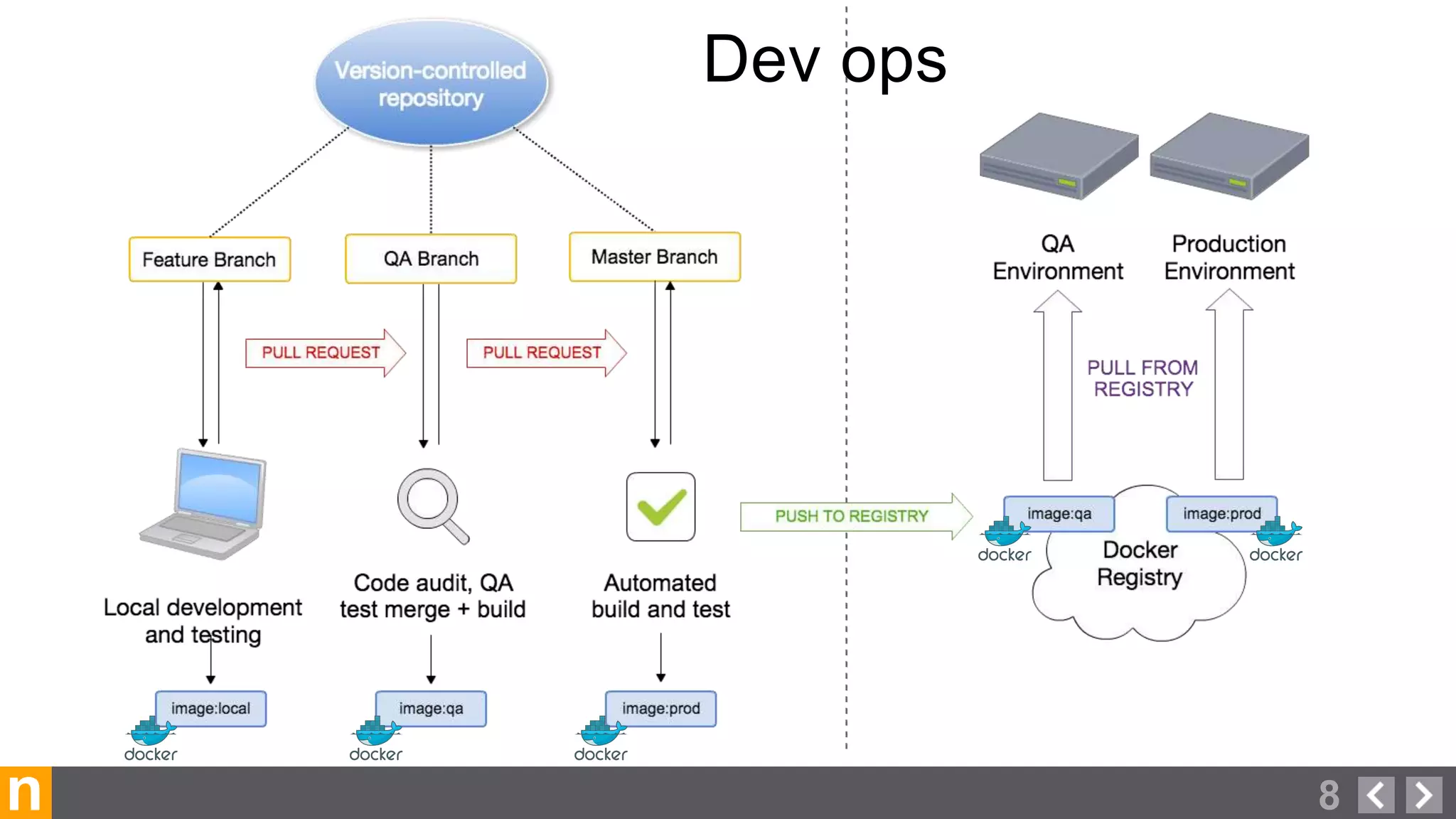

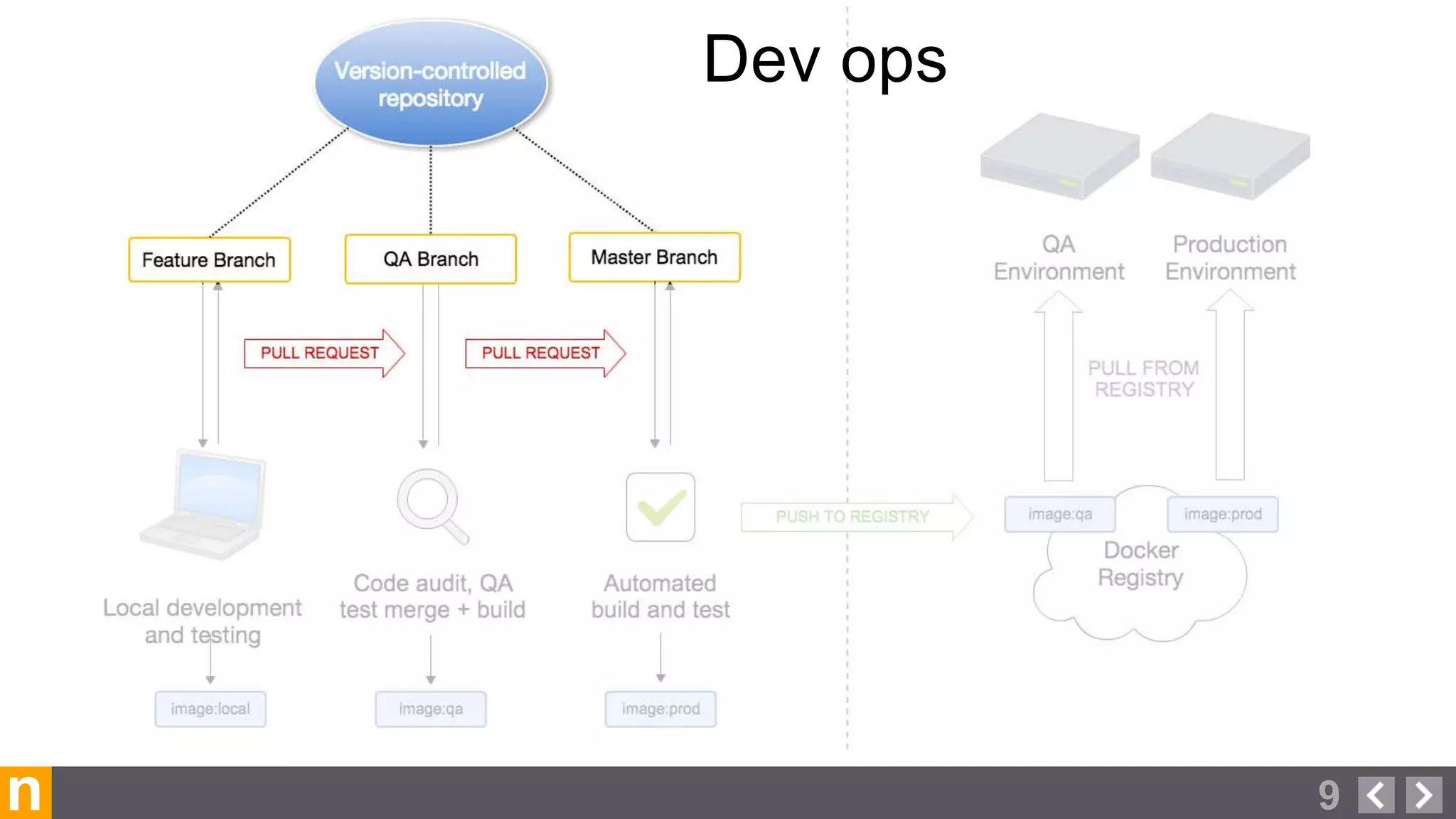

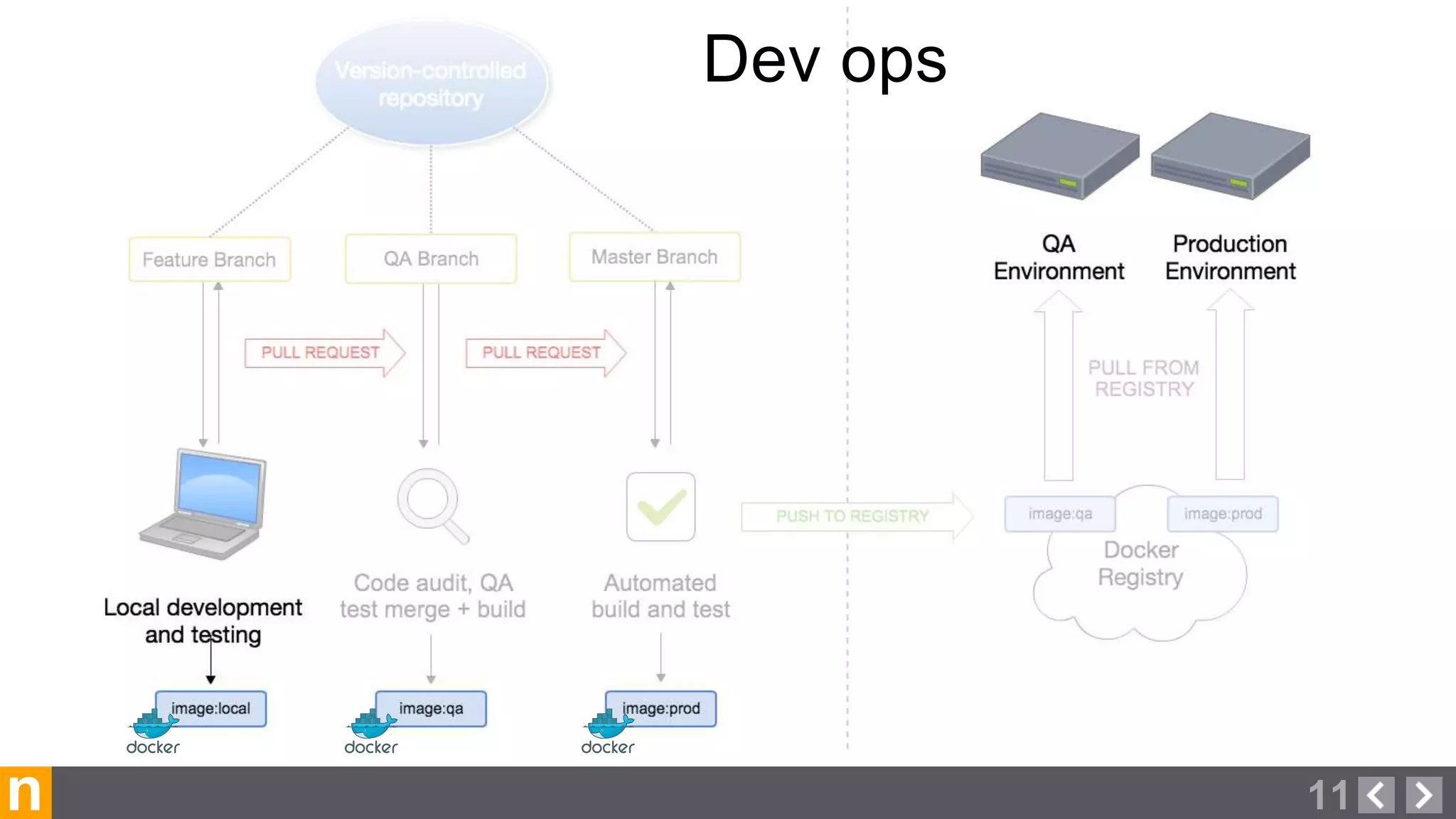

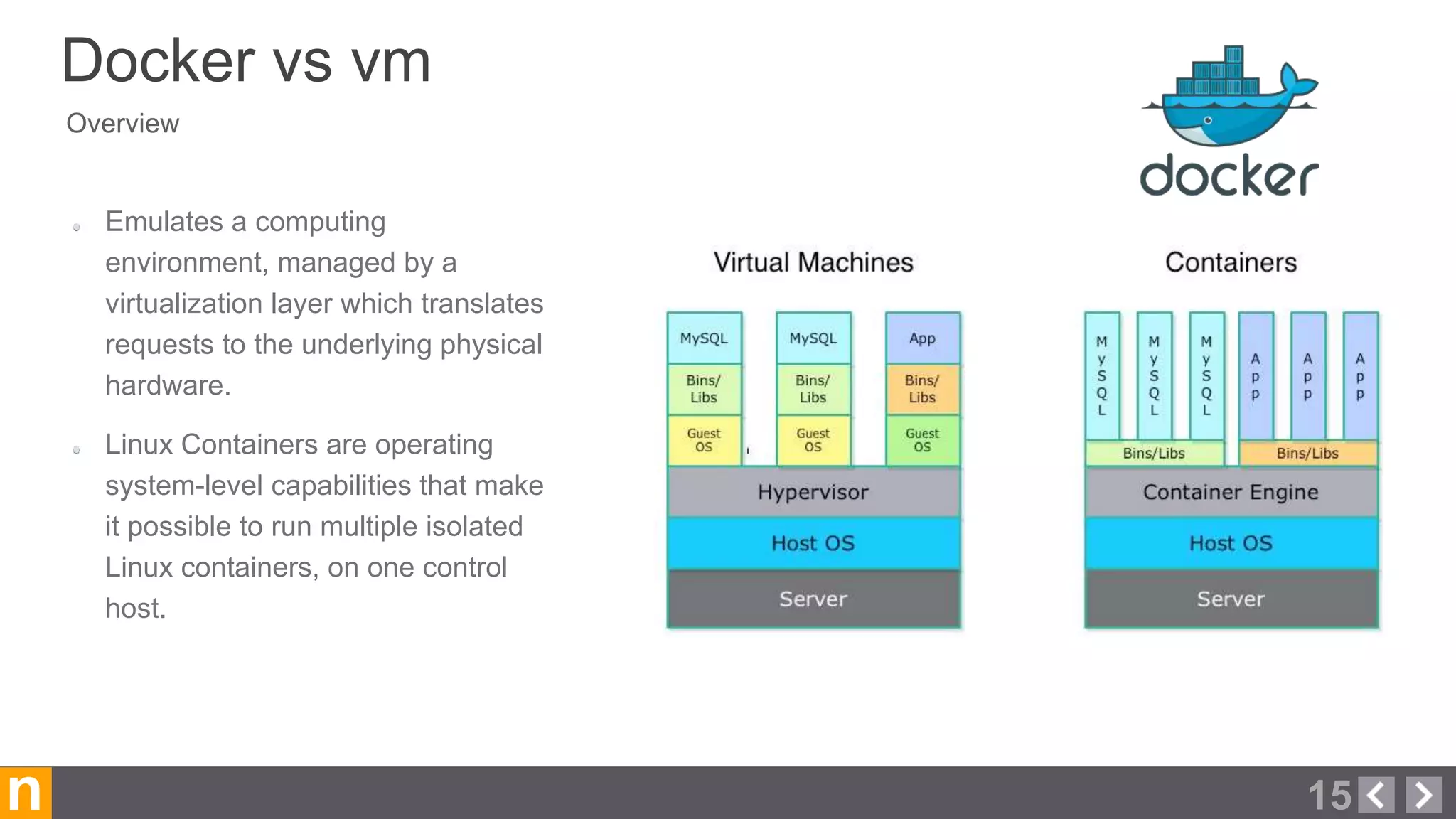

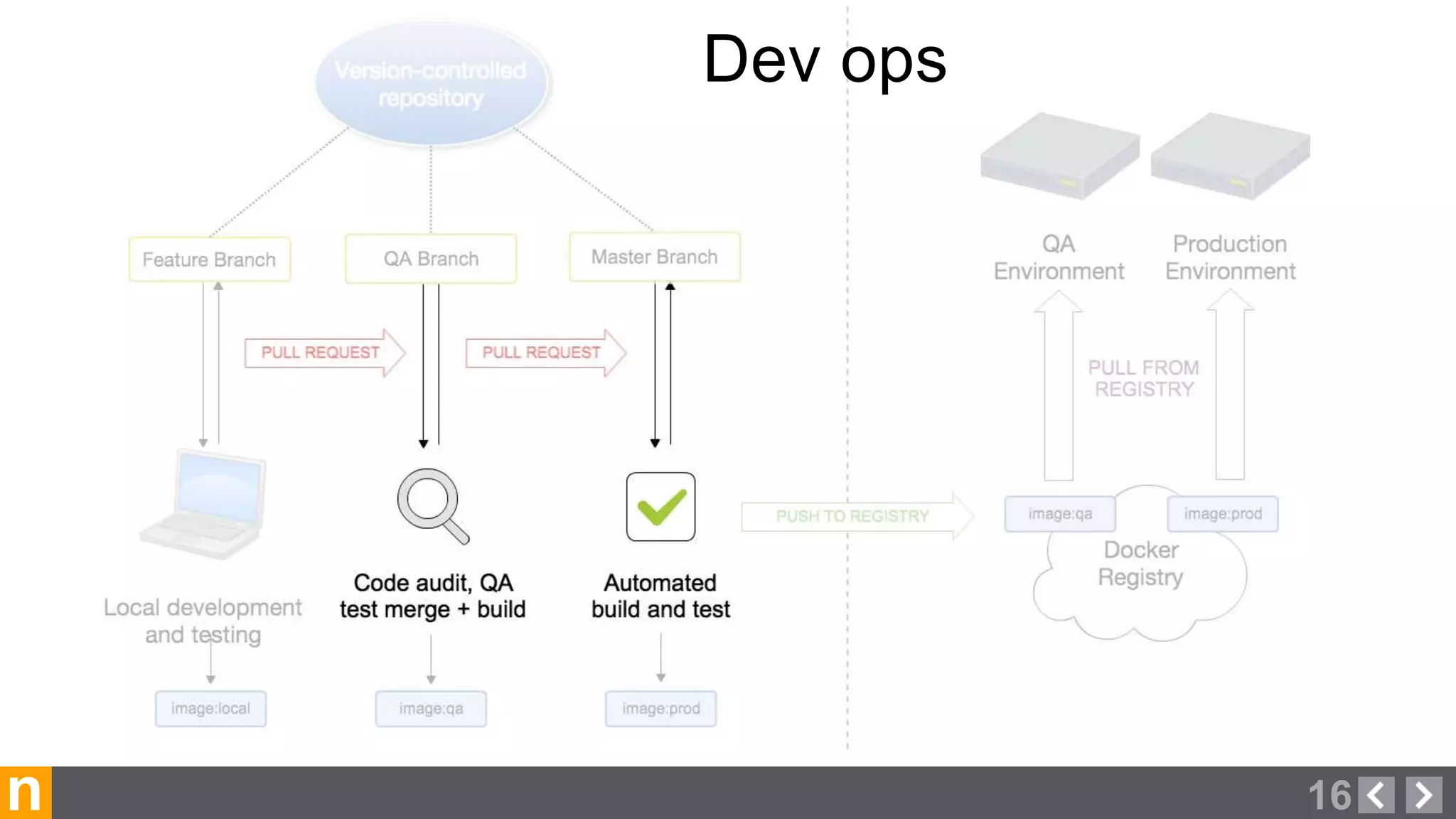

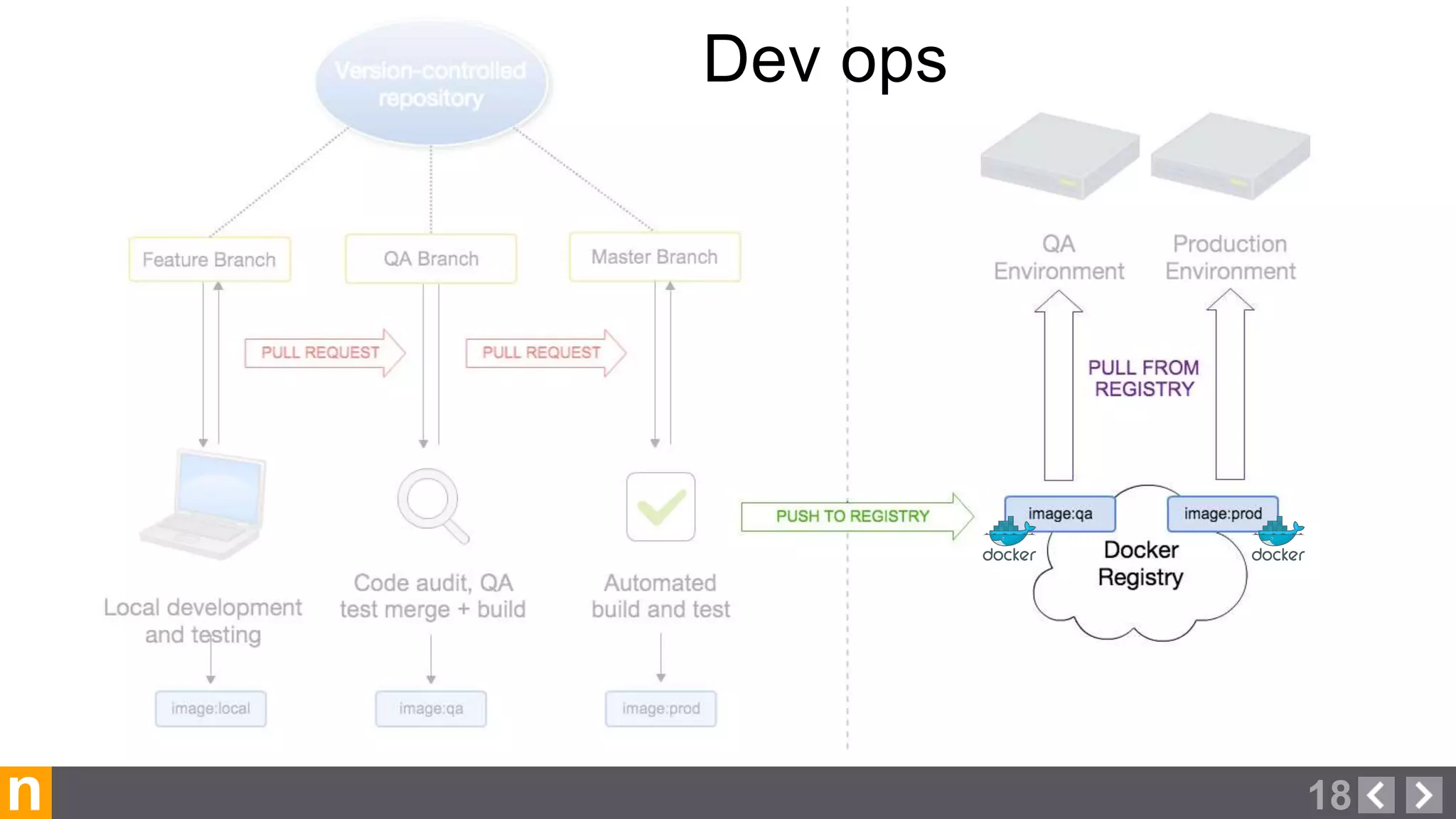

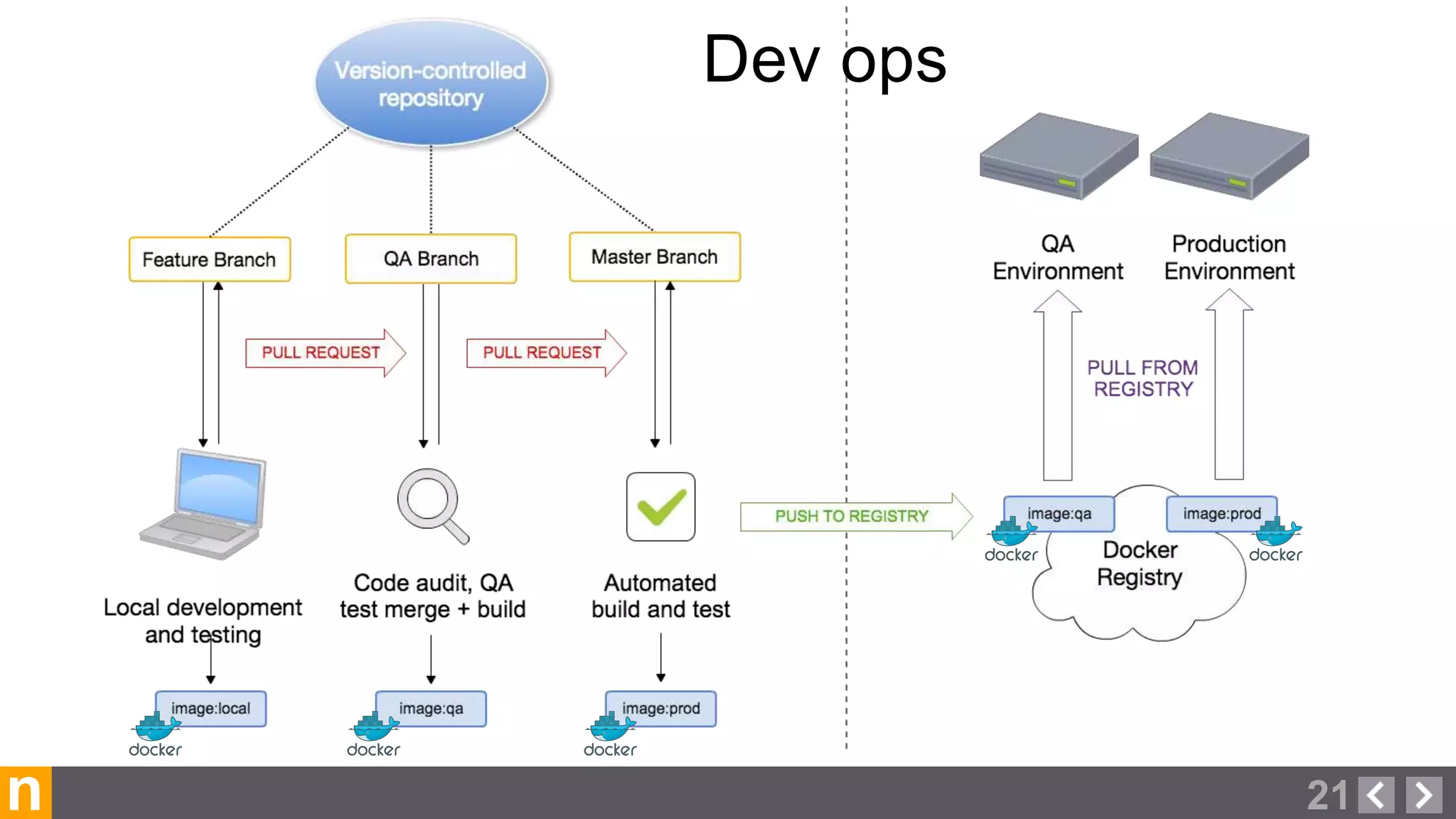

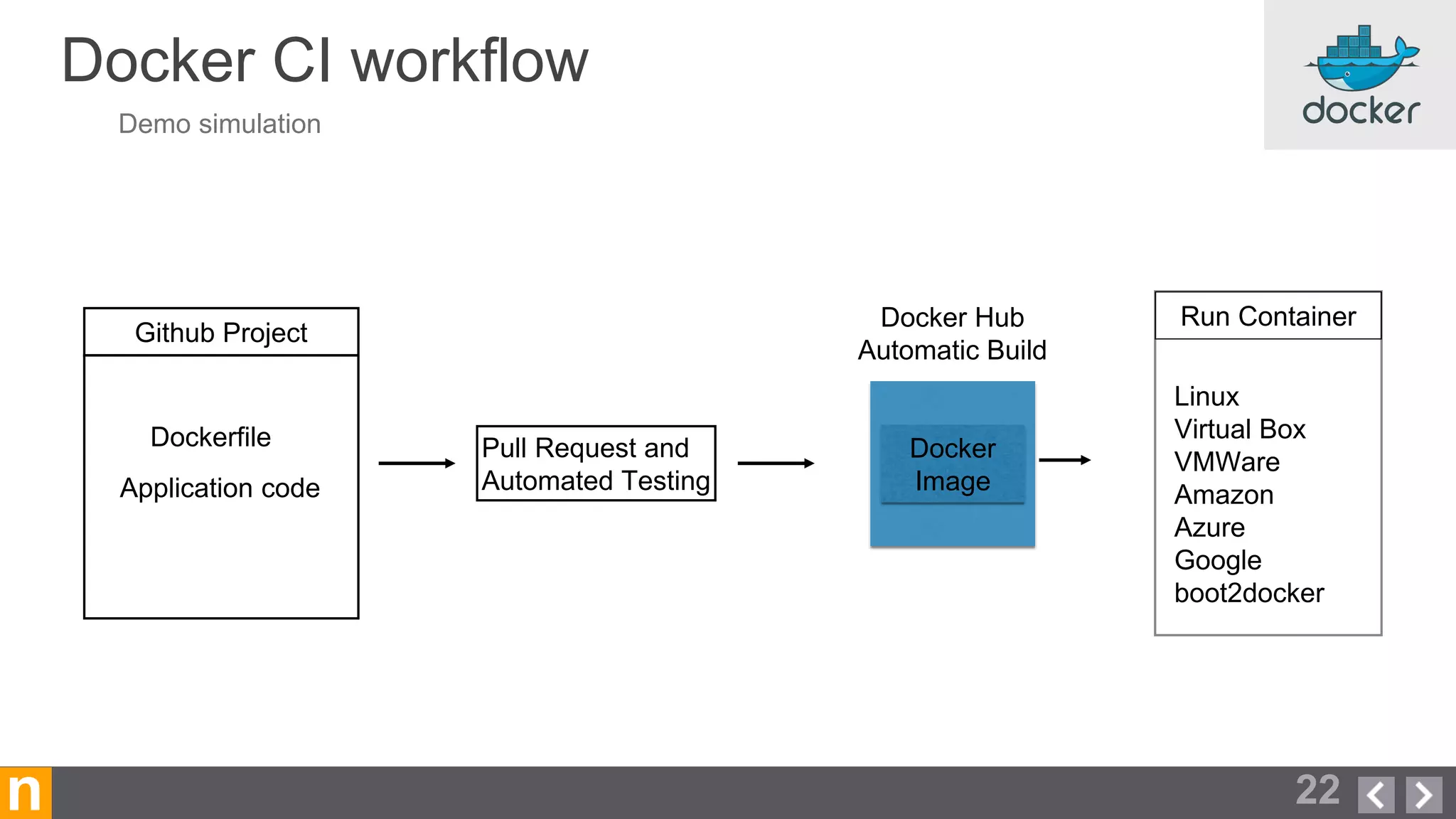

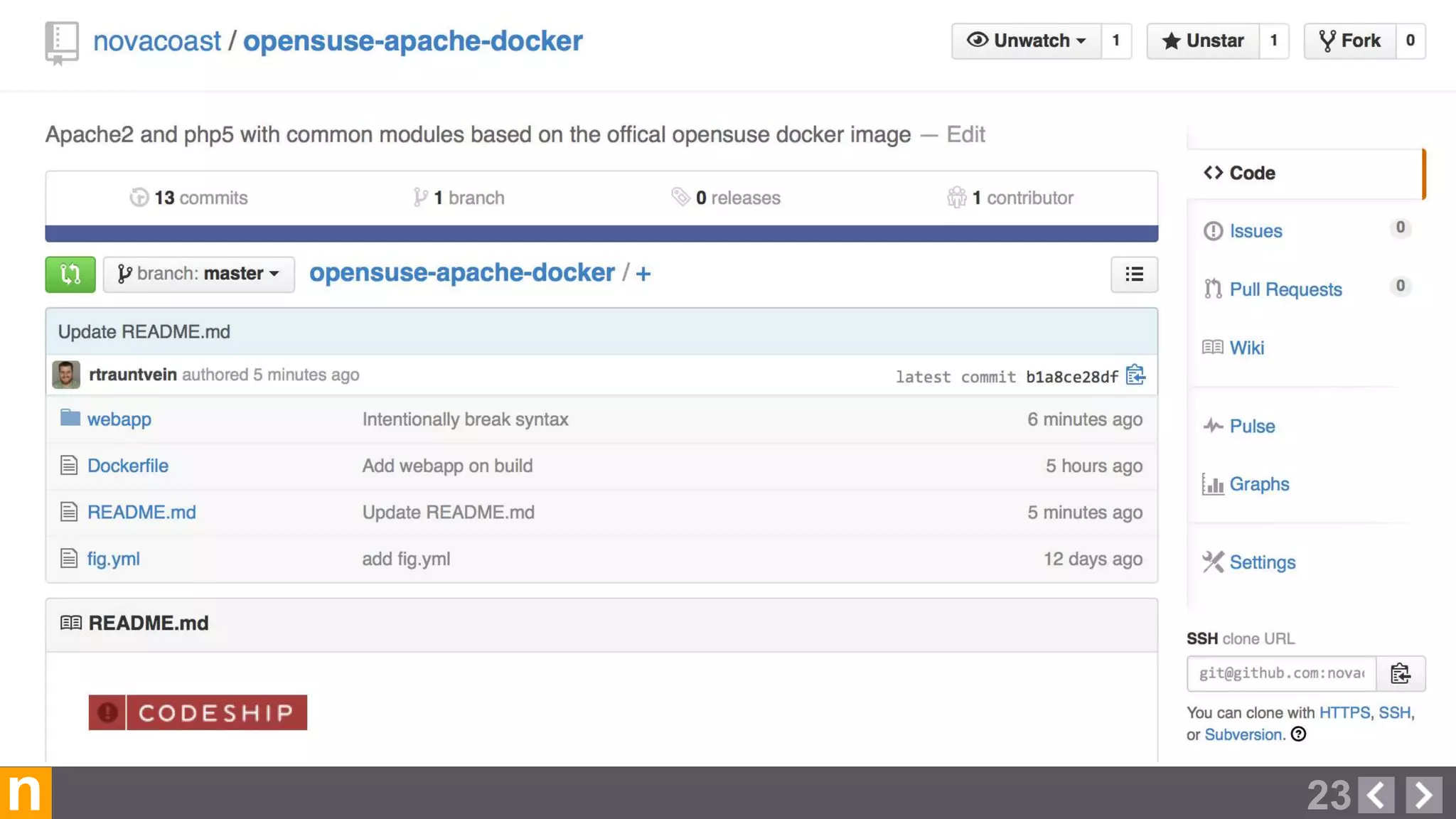

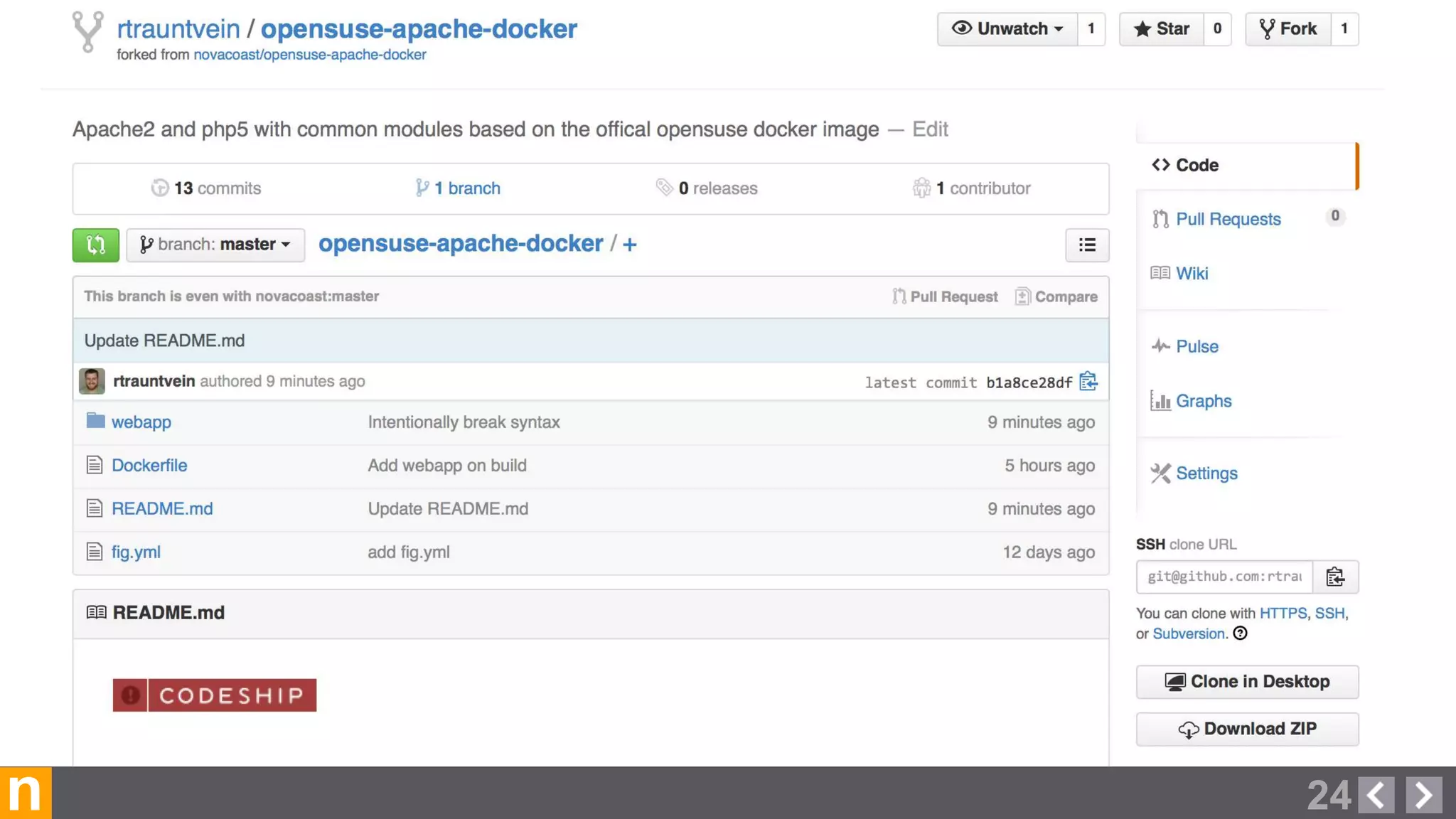

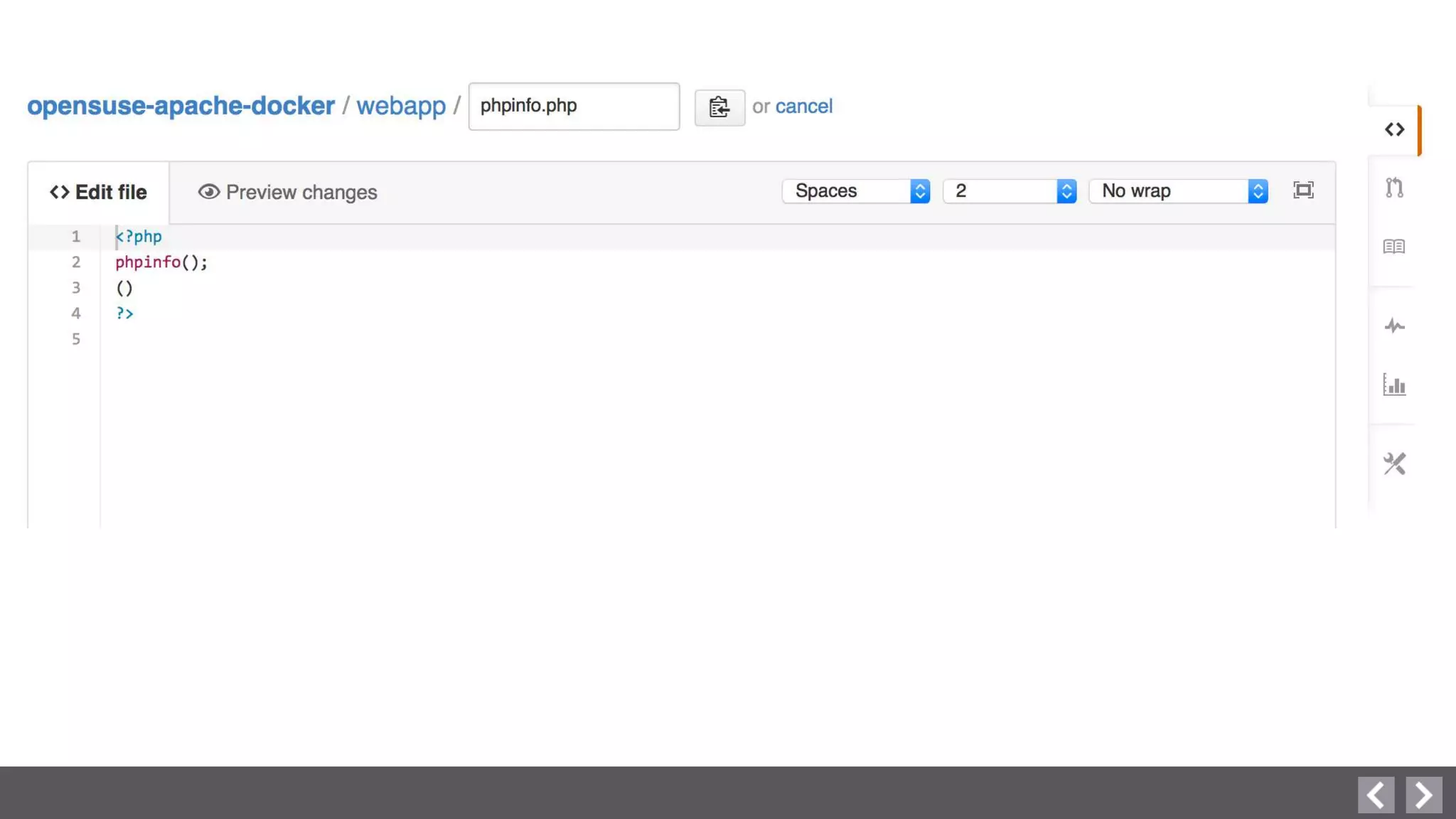

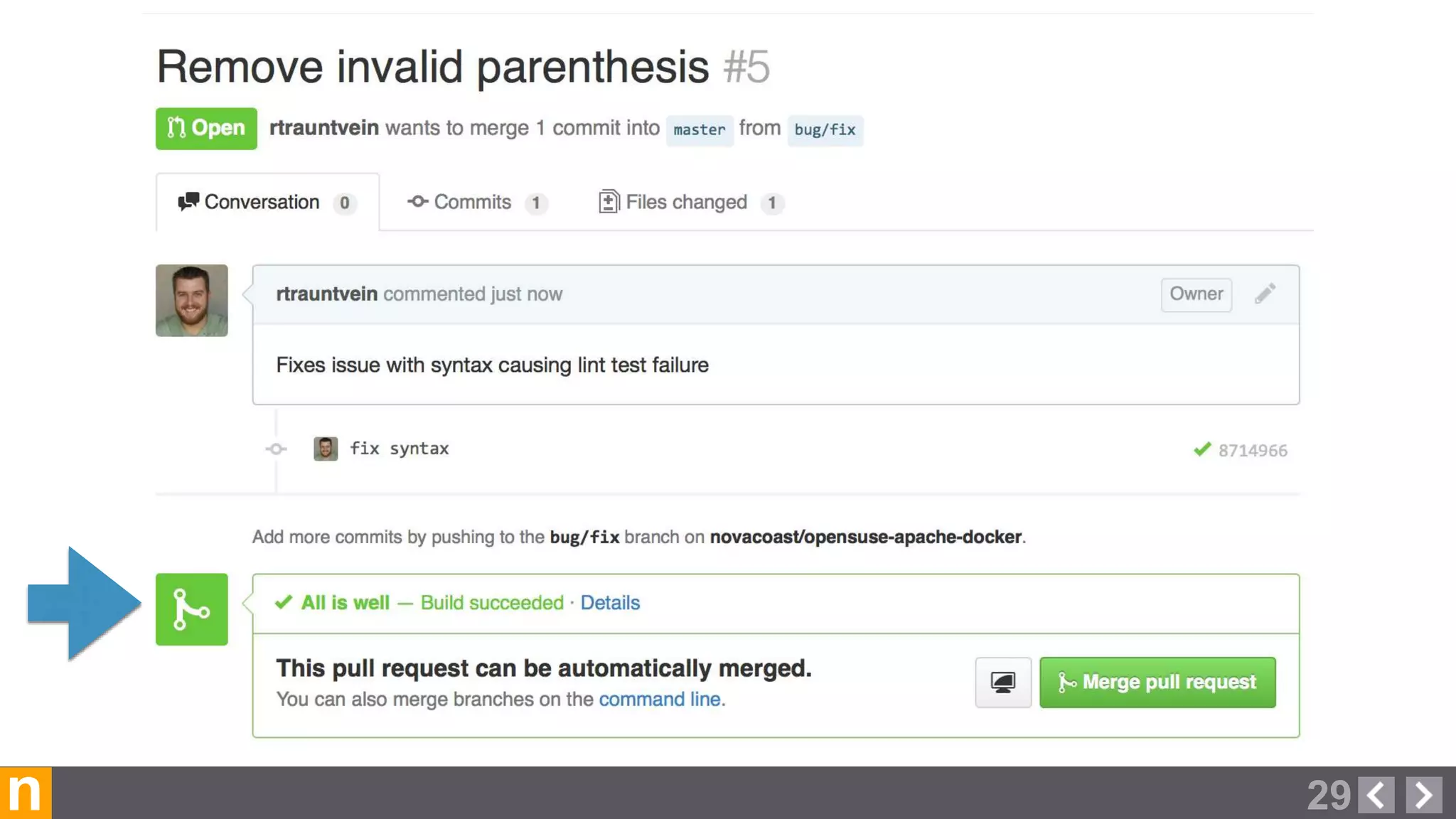

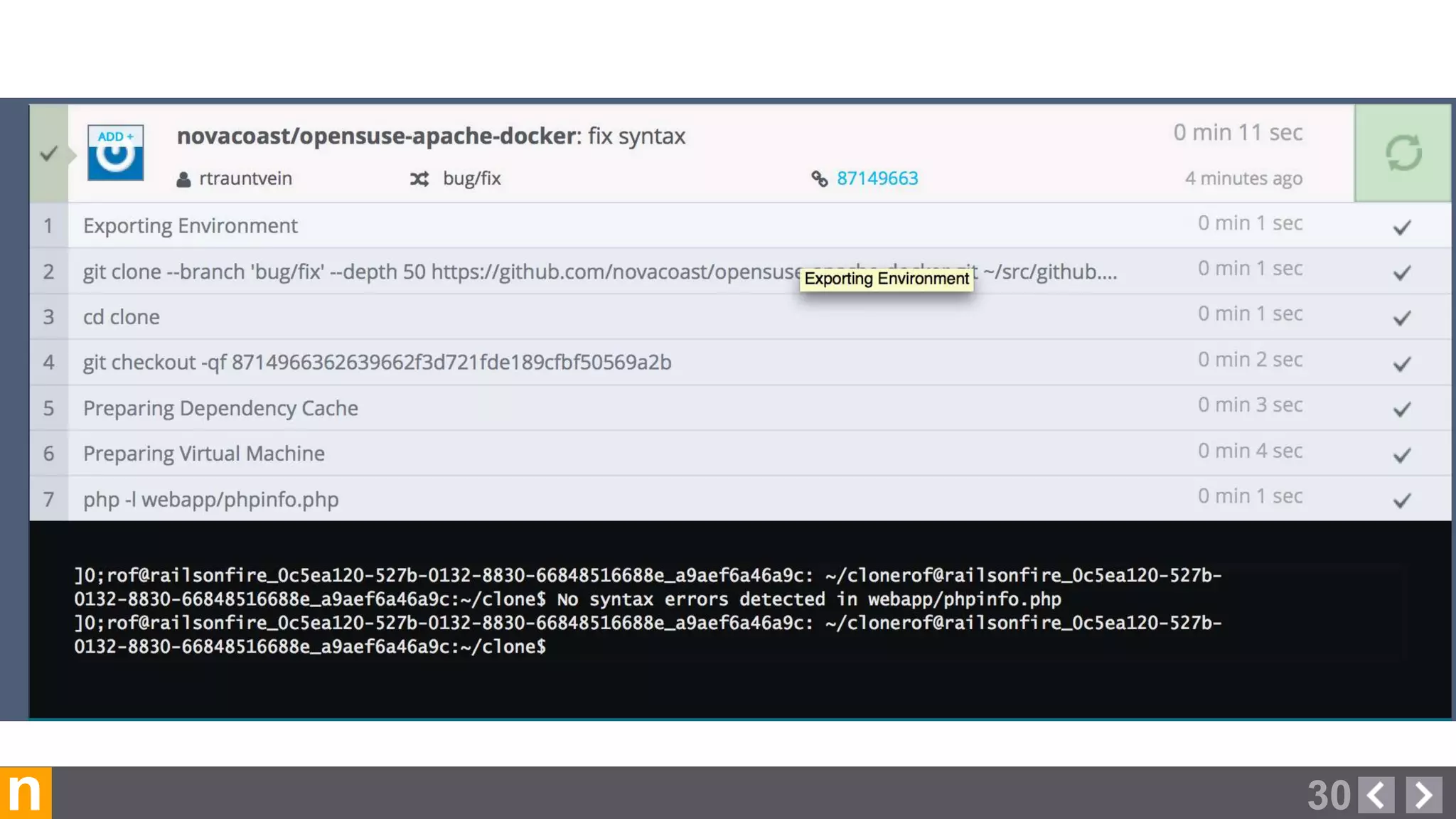

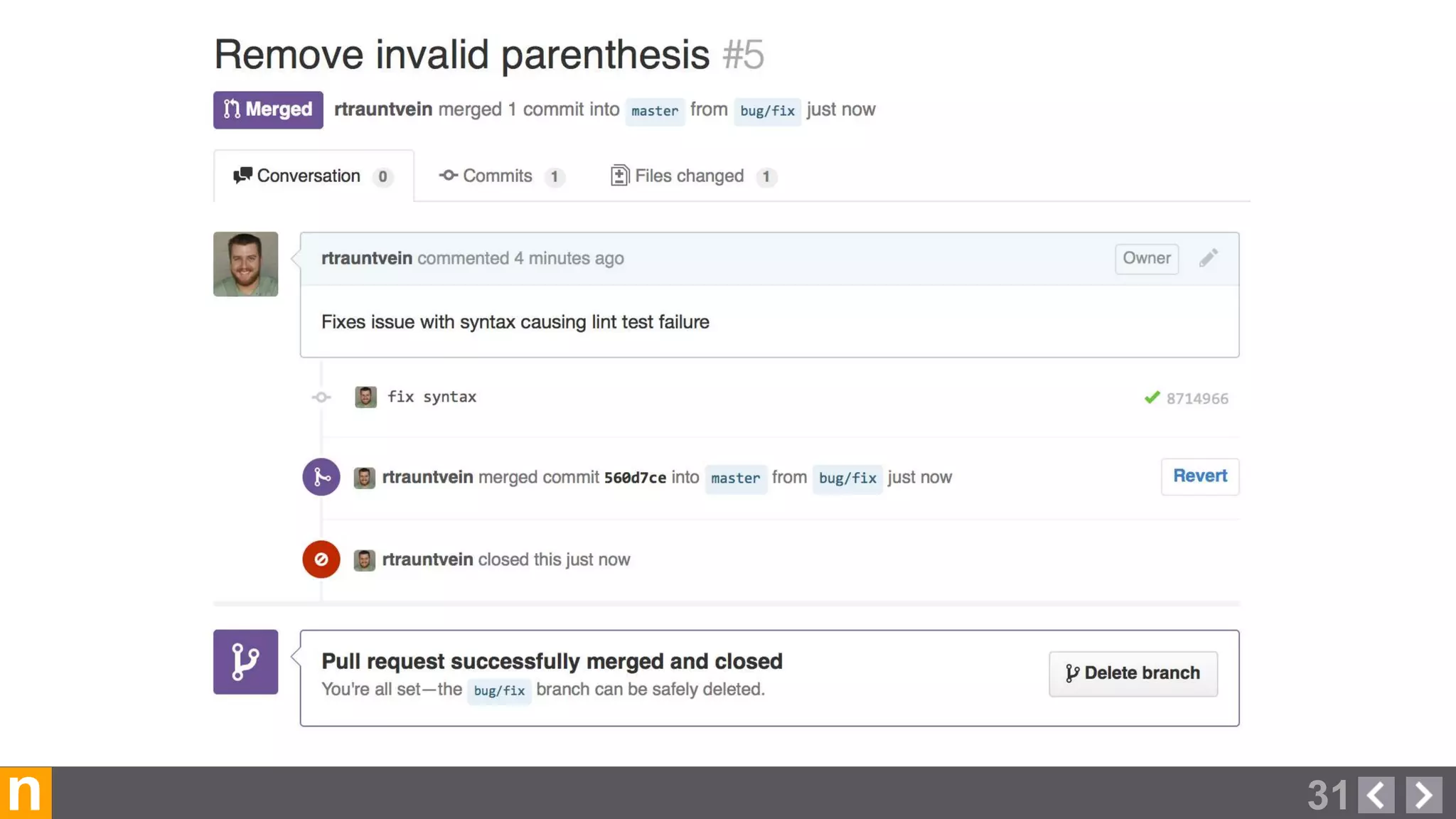

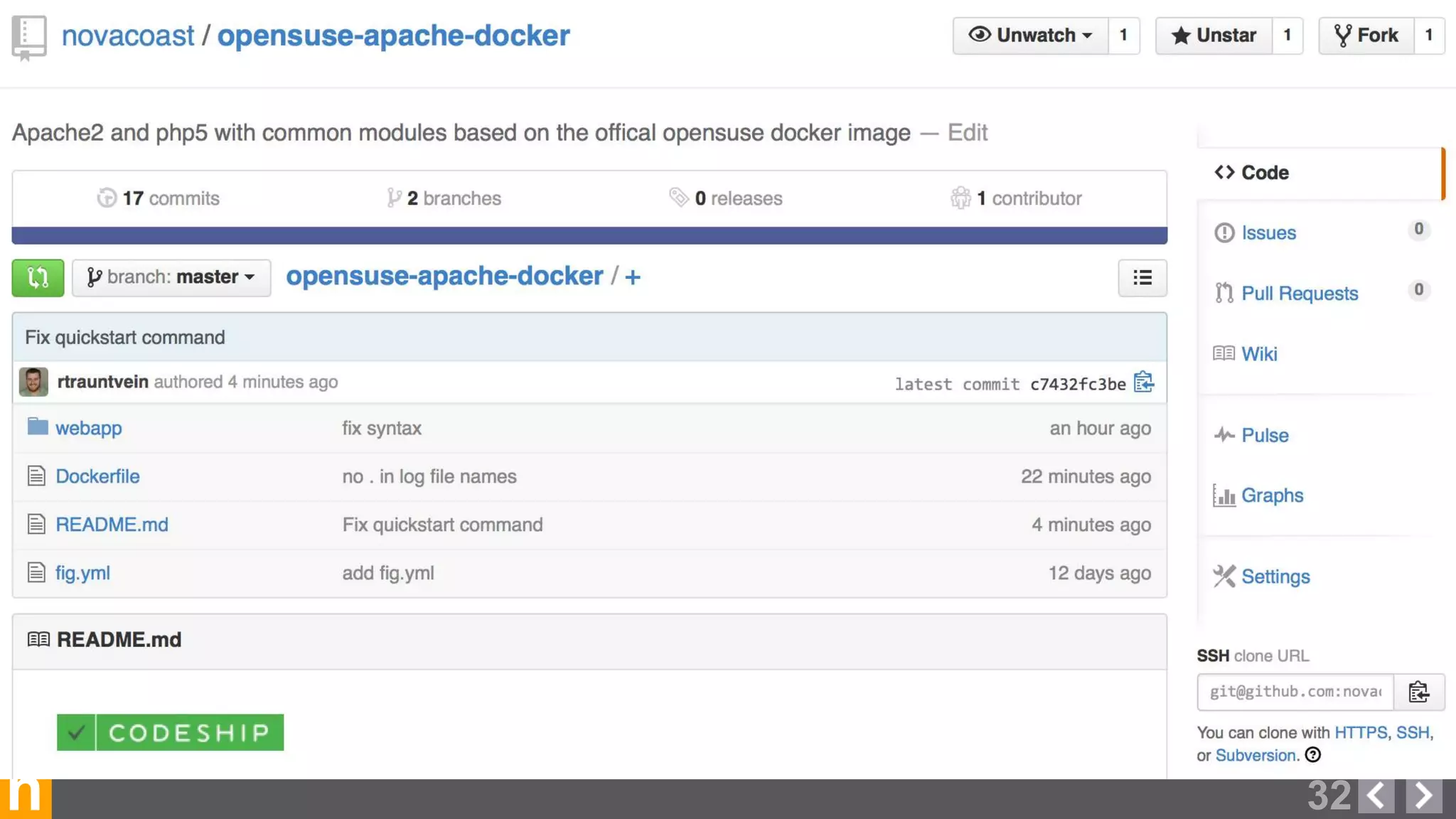

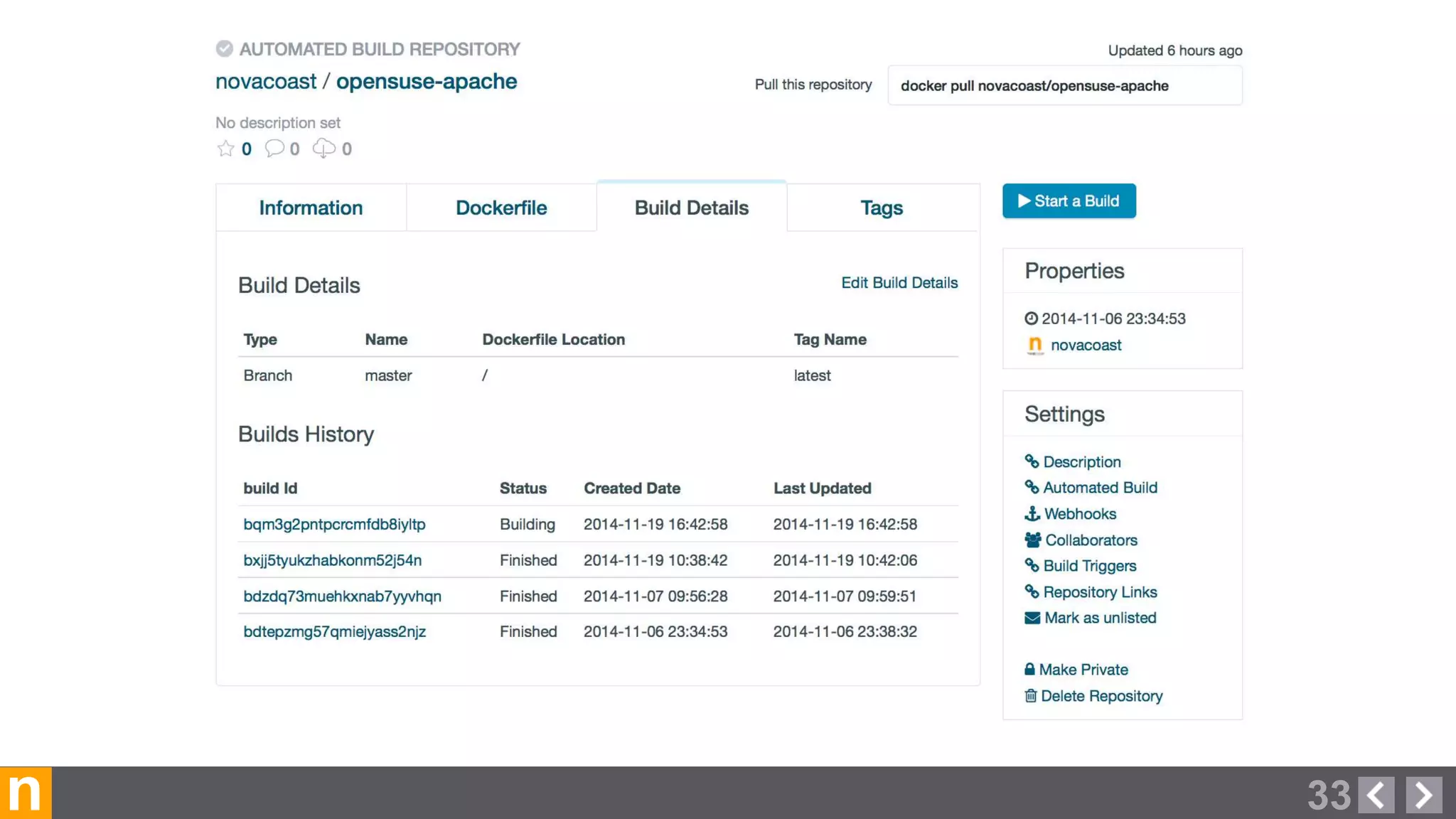

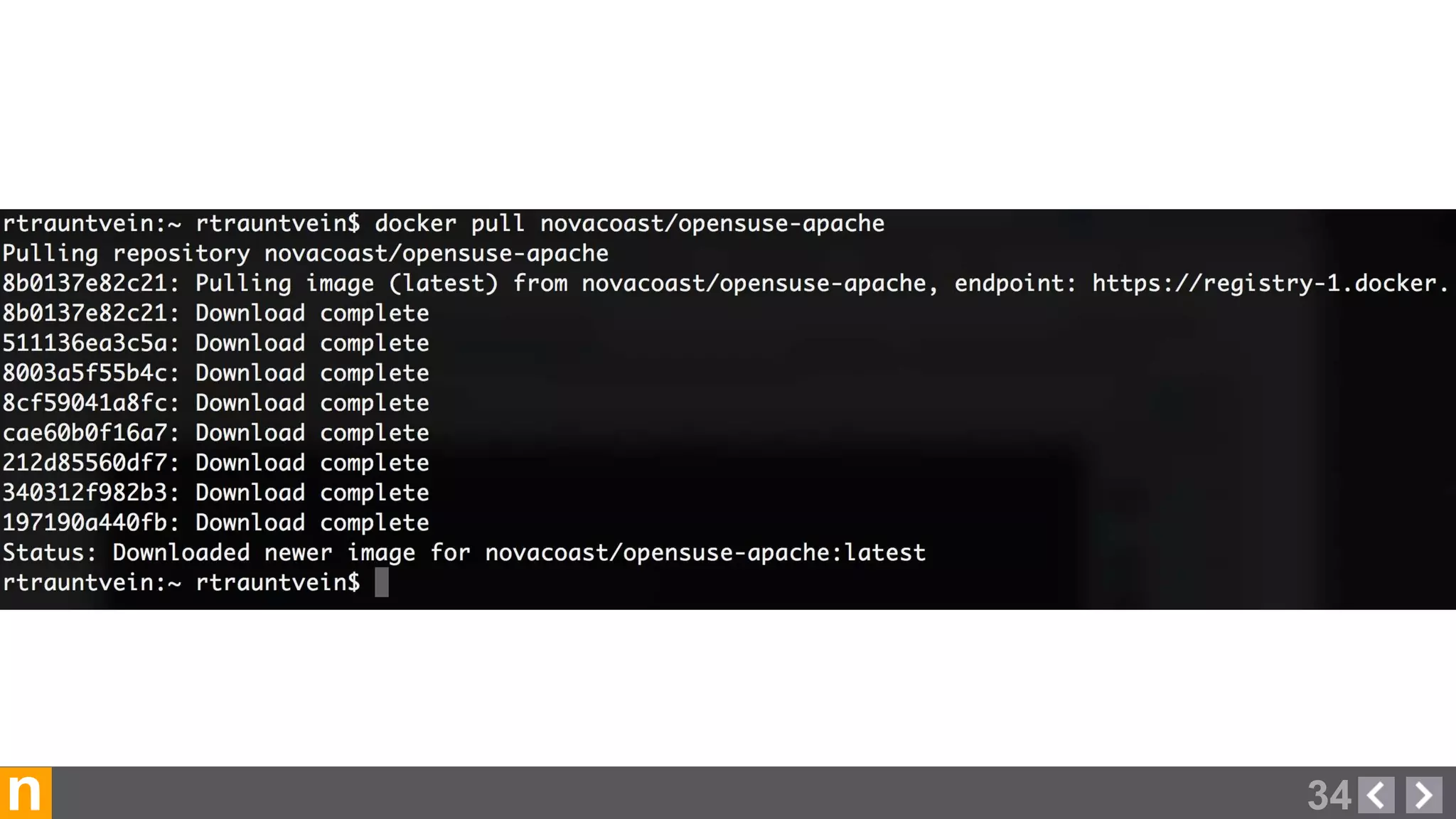

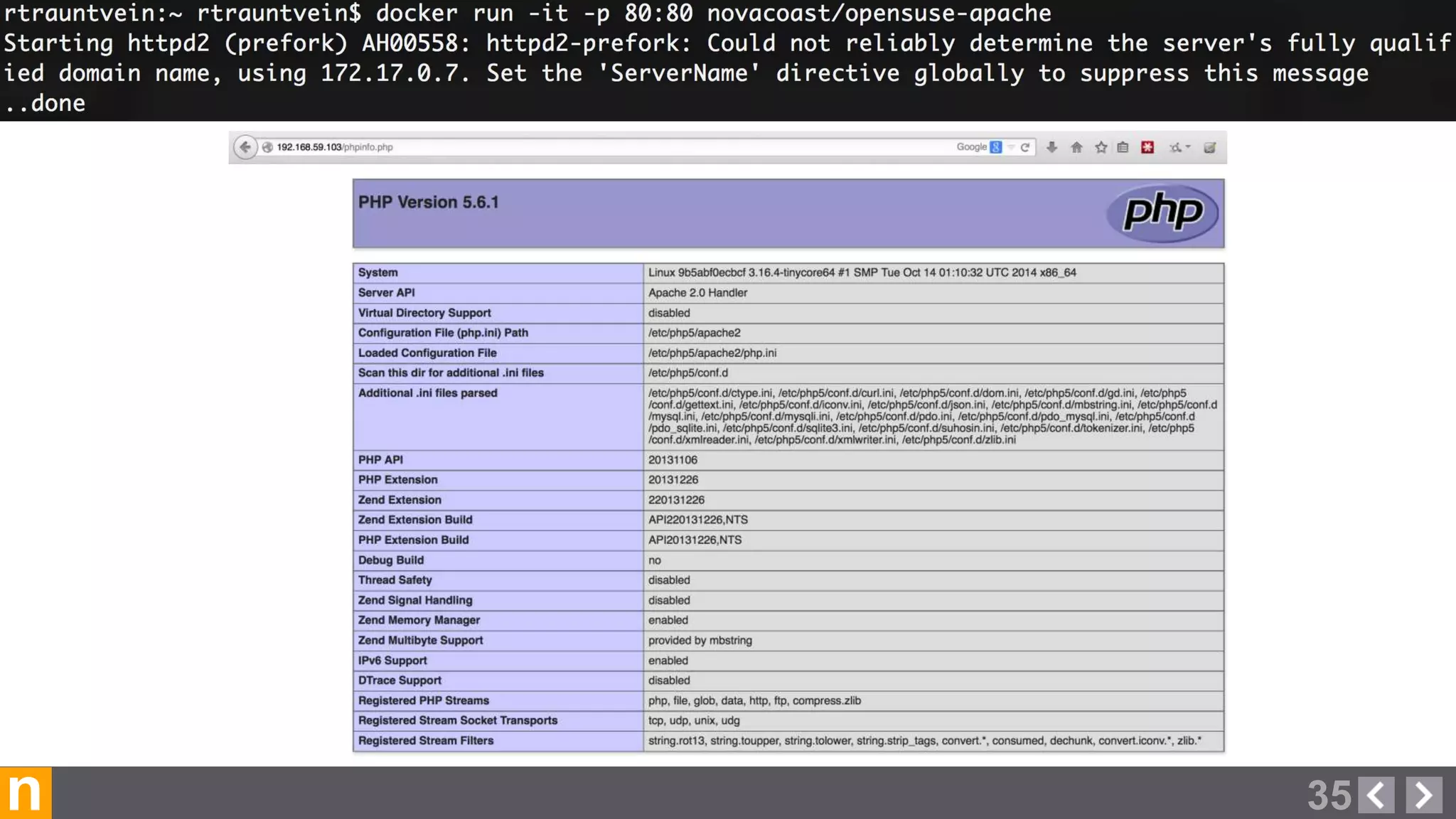

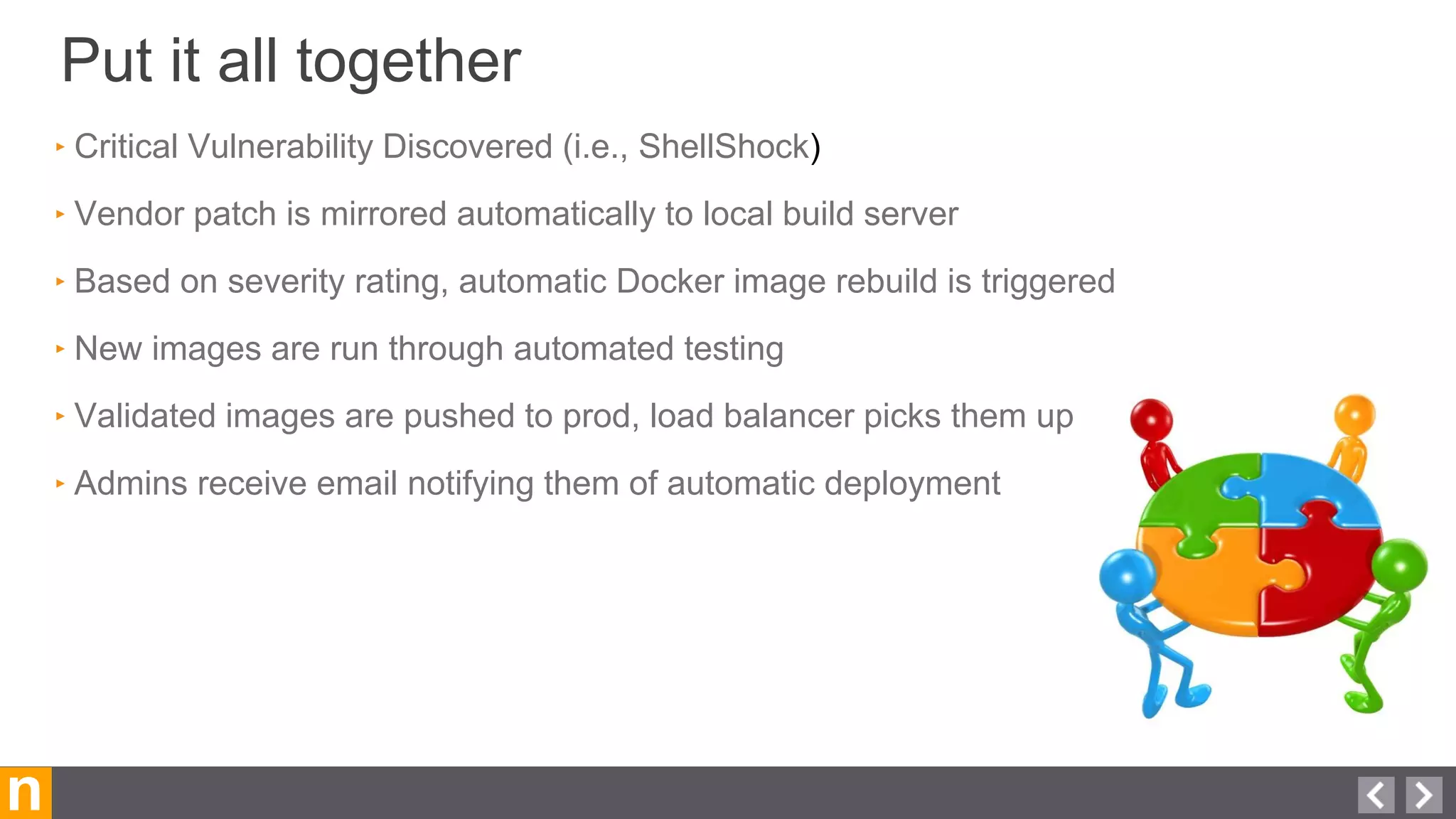

Novacoast uses Docker and DevOps practices to streamline its development and operations processes. Previously, servers were set up and code was deployed by hand, which was time-consuming. Docker containers now package each application together with its dependencies for easy, consistent deployment. Jenkins provides continuous integration, building and testing every code change automatically. Builds that pass are pushed to an internal Docker registry, and Chef deploys them from there to production servers. This automated workflow makes releases faster and more reliable while improving security.
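
The build, test, and push stages described above can be sketched roughly as follows. This is a minimal illustration, not Novacoast's actual Jenkins job: the registry host `registry.example.internal:5000`, the image name `webapp`, the `pytest` test command, and the helper names `image_tag`, `plan_pipeline`, and `run_pipeline` are all assumptions made for the example. Only the standard `docker build`, `docker run`, and `docker push` subcommands are used, and the Chef deployment stage is left out.

```python
import subprocess
from typing import List, Sequence


def image_tag(registry: str, name: str, build_number: int) -> str:
    """Compose a registry-qualified tag, e.g. host:5000/webapp:42."""
    return f"{registry}/{name}:{build_number}"


def plan_pipeline(
    registry: str,
    name: str,
    build_number: int,
    context: str = ".",
    test_cmd: Sequence[str] = ("pytest",),  # hypothetical test command
) -> List[List[str]]:
    """Return the docker commands a CI job might run, in order."""
    tag = image_tag(registry, name, build_number)
    return [
        ["docker", "build", "-t", tag, context],    # package app + dependencies
        ["docker", "run", "--rm", tag, *test_cmd],  # run tests inside the image
        ["docker", "push", tag],                    # publish to the internal registry
    ]


def run_pipeline(steps: List[List[str]], dry_run: bool = True) -> bool:
    """Run each step in order and stop at the first failure.

    With dry_run=True the commands are only printed, so the sketch can be
    tried on a machine without Docker installed.
    """
    for step in steps:
        if dry_run:
            print(" ".join(step))
            continue
        if subprocess.run(step).returncode != 0:
            return False  # a failed build or test means nothing is pushed
    return True


if __name__ == "__main__":
    # Hypothetical registry host and image name.
    run_pipeline(plan_pipeline("registry.example.internal:5000", "webapp", 42))
```

Because the push is the last step and the loop stops on the first non-zero exit code, an image reaches the registry only after its build and tests succeed, which mirrors the "successful builds are pushed" rule in the summary.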