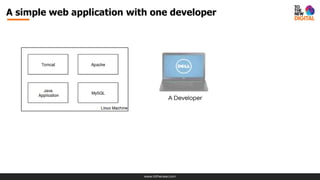

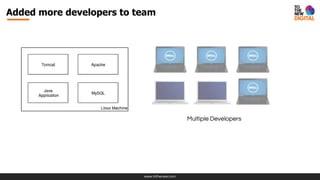

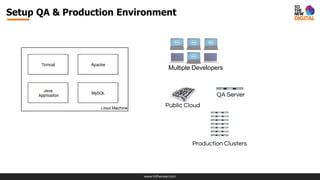

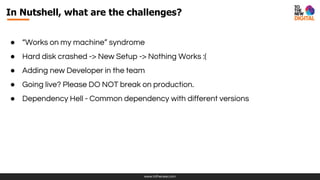

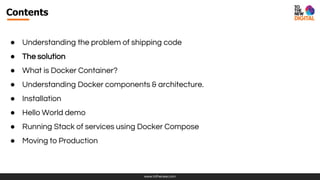

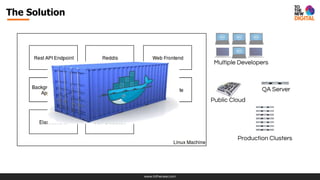

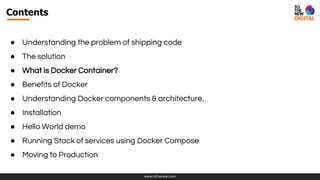

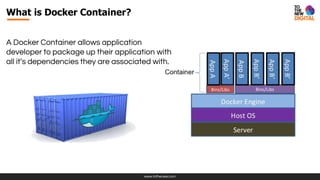

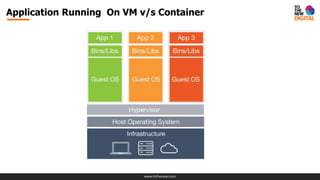

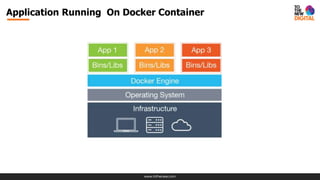

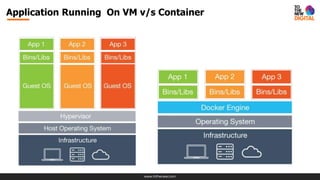

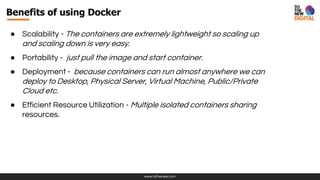

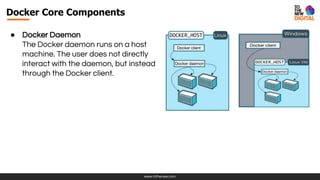

The deck presents an overview of Docker, emphasizing its role in shipping code efficiently in modern development environments. It outlines the challenges developers face, introduces Docker containers as a solution, and discusses benefits such as portability, scalability, and efficient resource utilization. It also covers installation steps, Docker's core components, and orchestration tools for managing Docker clusters.

![www.tothenew.com

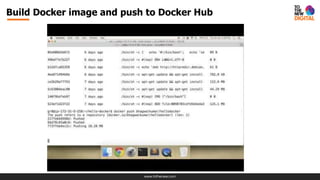

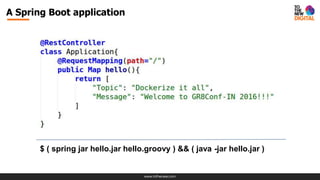

Build Image Using Dockerfile

FROM java:8

MAINTAINER Puneet Behl "puneet.behl@tothenew.com"

ADD hello.jar /app/hello.jar

EXPOSE 8080

CMD ["java", "-jar", "/app/hello.jar"]](https://image.slidesharecdn.com/d9dxtutites0cs3nuccc-signature-0e764bb60ff24a8ff5b12a4bc85585e2f108c3fc2f73be5dd487e1f90ede9126-poli-160906153152/85/Dockerize-it-all-52-320.jpg)
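The slide does not show what `hello.jar` contains. As a hedged illustration only, here is a hypothetical minimal `Hello` class that could be packaged as that jar. It serves a plain-text greeting over HTTP on port 8080, matching the `EXPOSE 8080` line. The class name, the response text, and the use of the JDK's built-in `com.sun.net.httpserver` package are assumptions. That package was picked because it needs no third-party libraries and the code compiles on Java 8.

```java
import com.sun.net.httpserver.HttpServer;

import java.io.IOException;
import java.io.OutputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;

// Hypothetical contents of hello.jar: a tiny HTTP server on port 8080.
// Not the deck's actual application, which the slide does not show.
public class Hello {

    // Port the server binds to; should match EXPOSE in the Dockerfile.
    static final int PORT = 8080;

    // Response body, kept in a method so it can be checked without a server.
    static String greeting() {
        return "Hello from Docker!\n";
    }

    public static void main(String[] args) throws IOException {
        // InetSocketAddress(port) binds the wildcard address (0.0.0.0),
        // so the port is reachable from outside the container once published.
        HttpServer server = HttpServer.create(new InetSocketAddress(PORT), 0);
        server.createContext("/", exchange -> {
            byte[] body = greeting().getBytes(StandardCharsets.UTF_8);
            exchange.getResponseHeaders().set("Content-Type", "text/plain; charset=utf-8");
            exchange.sendResponseHeaders(200, body.length);
            try (OutputStream out = exchange.getResponseBody()) {
                out.write(body);
            }
        });
        server.start();
        System.out.println("Listening on port " + PORT);
    }
}
```

One way to try the pieces together (the image tag `hello` is arbitrary): compile and package the class with `javac Hello.java` and `jar cfe hello.jar Hello Hello.class`. Build the image next to the Dockerfile with `docker build -t hello .`, start it with `docker run -p 8080:8080 hello`, and then request `http://localhost:8080/`. `EXPOSE` on its own only documents the port; the `-p` flag is what publishes it on the host.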