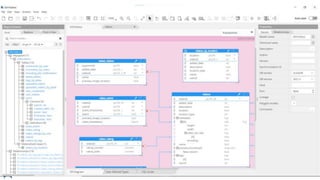

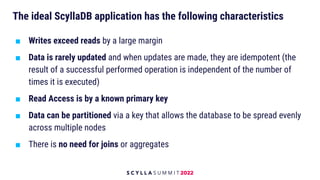

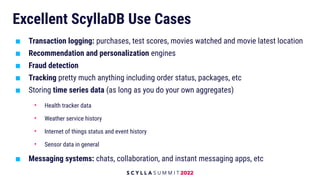

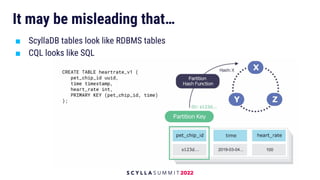

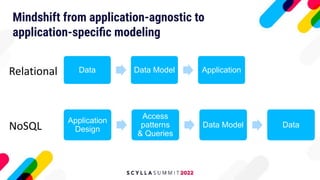

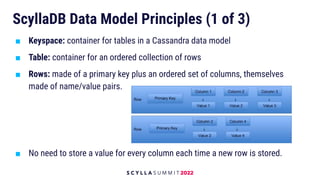

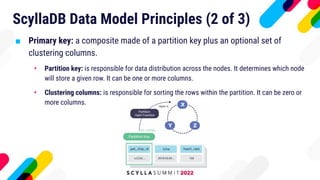

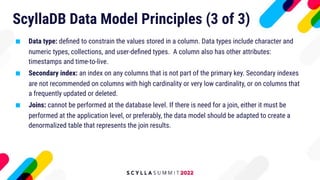

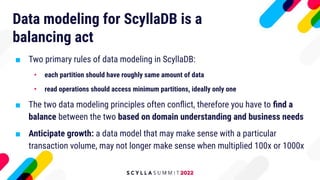

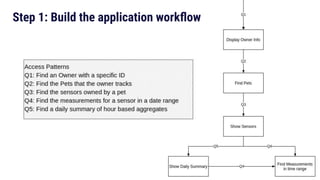

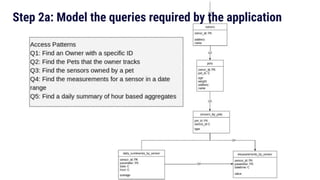

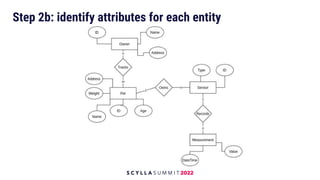

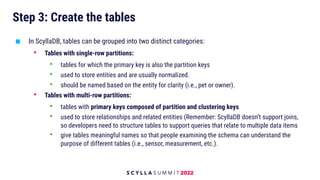

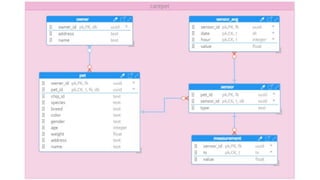

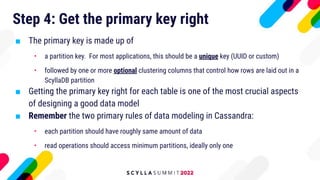

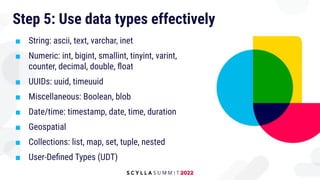

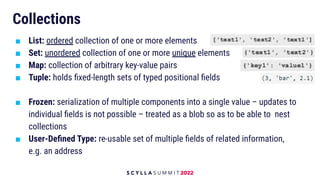

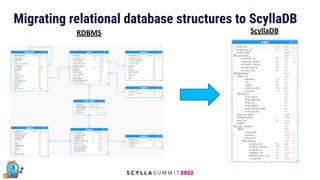

The document discusses data modeling best practices for ScyllaDB. It explains why data modeling matters in software development and describes the characteristics of applications that are a good fit for ScyllaDB. It then outlines principles for creating effective data models, such as understanding primary and clustering keys, and recommends balancing even data distribution against efficient query access. Finally, it suggests a methodology for migrating relational database structures to ScyllaDB and highlights the benefits of data modeling tools.
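
The trade-off between data distribution and query access can be illustrated with a small simulation. This is a minimal sketch, not ScyllaDB's actual placement logic: it assumes a hypothetical three-node cluster, a made-up workload of sensor readings, and an MD5-modulo stand-in for the partitioner (ScyllaDB itself uses a Murmur3-based token ring). The names `node_for`, `rows_per_node`, and `readings` are illustrative only. The sketch compares a low-cardinality partition key (country) with a high-cardinality one (sensor ID).

```python
import hashlib
from collections import Counter

NUM_NODES = 3  # hypothetical cluster size, chosen for illustration


def node_for(partition_key: str, num_nodes: int = NUM_NODES) -> int:
    """Map a partition key to a node index.

    Stand-in only: ScyllaDB uses a Murmur3-based token ring,
    not MD5 modulo the number of nodes.
    """
    digest = hashlib.md5(partition_key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % num_nodes


def rows_per_node(partition_keys):
    """Count how many rows land on each node, given one partition key per row."""
    counts = Counter(node_for(key) for key in partition_keys)
    return [counts.get(node, 0) for node in range(NUM_NODES)]


# Hypothetical workload: 10,000 readings from 1,000 sensors,
# where 90% of the readings come from a single country.
readings = [
    (f"sensor-{i % 1000}", "US" if i % 10 else "DE")
    for i in range(10_000)
]

# Partitioning by country: only two distinct keys, so at most two nodes hold data.
by_country = rows_per_node(country for _, country in readings)

# Partitioning by sensor ID: 1,000 distinct keys spread across all nodes.
by_sensor = rows_per_node(sensor for sensor, _ in readings)

print("rows per node, partitioned by country:", by_country)
print("rows per node, partitioned by sensor: ", by_sensor)
```

Partitioning by country keeps all rows for one country together, which suits a query such as "all readings for a country", but concentrates most of the data on one node. Partitioning by sensor ID spreads the load, at the cost of needing the sensor ID in every efficient query. A clustering key (for example, a reading timestamp) would then control the sort order of rows inside each partition.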