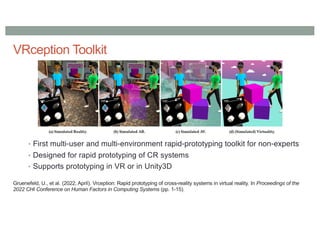

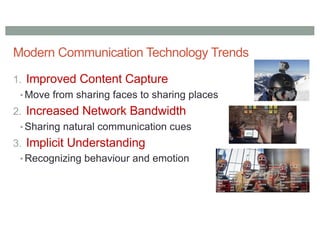

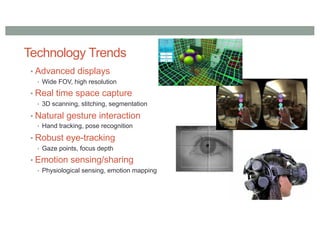

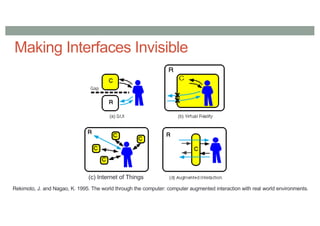

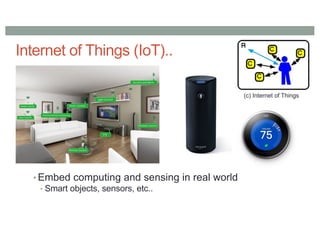

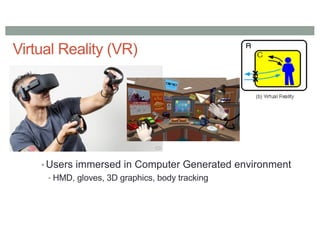

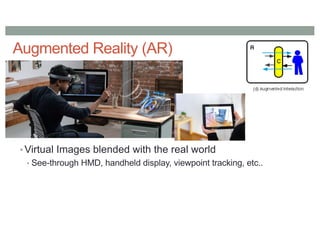

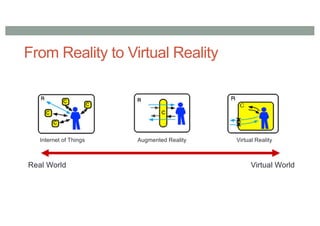

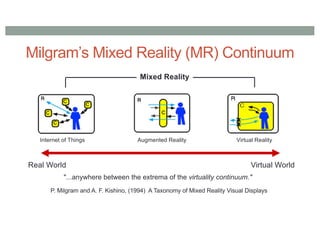

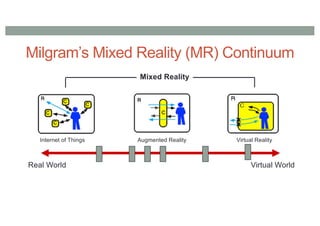

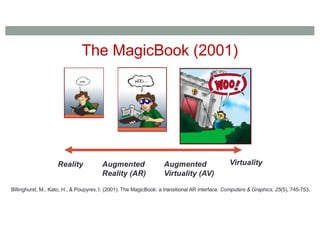

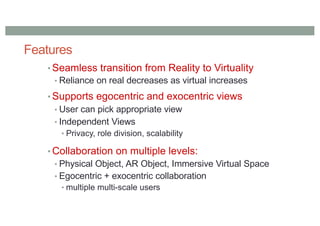

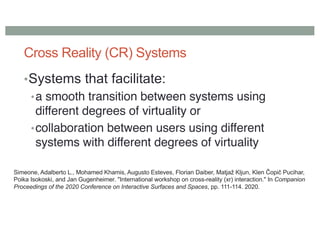

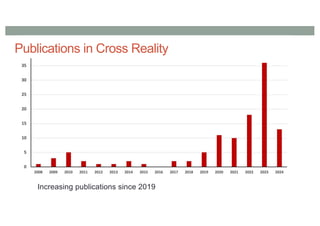

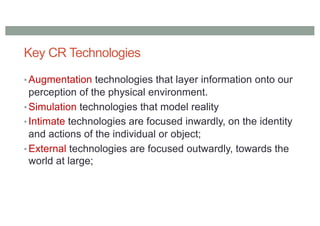

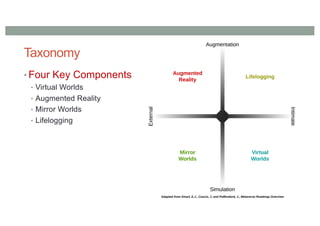

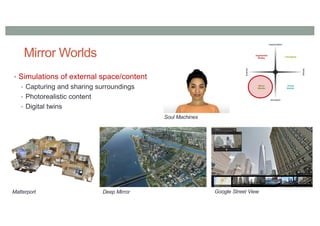

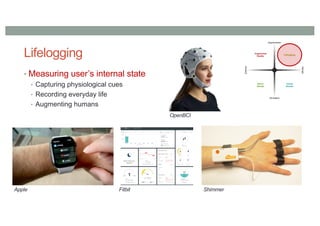

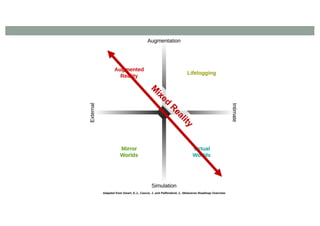

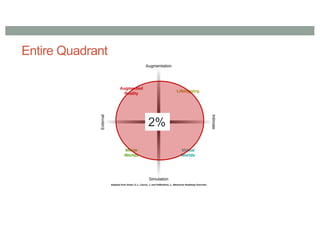

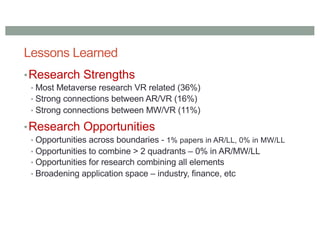

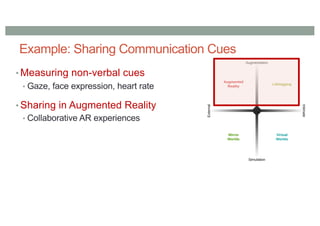

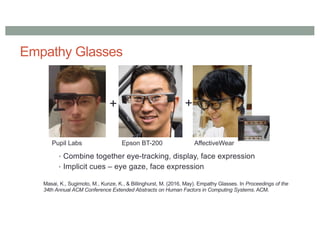

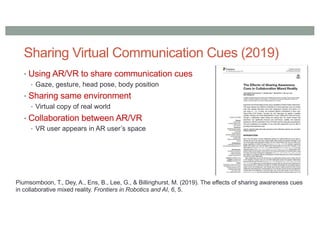

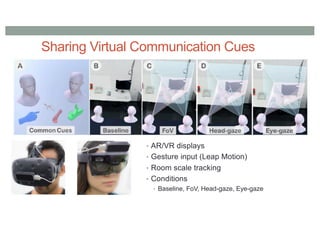

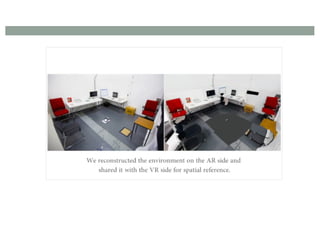

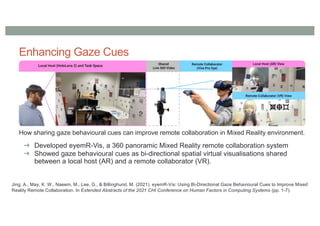

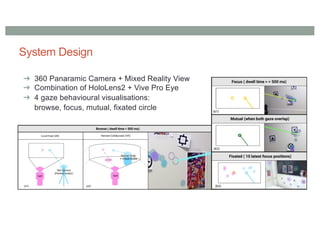

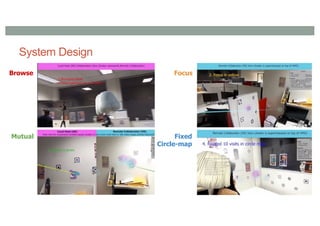

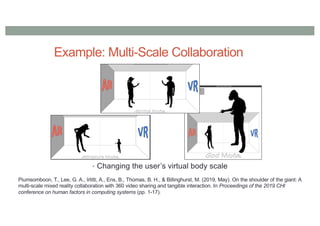

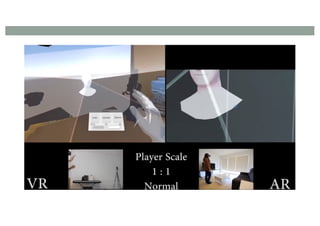

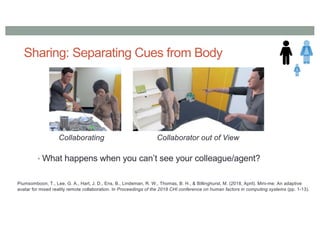

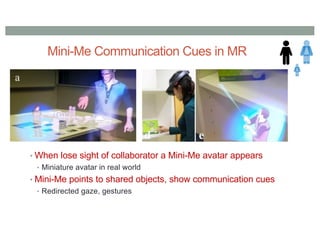

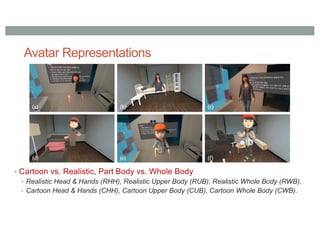

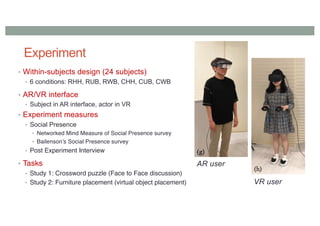

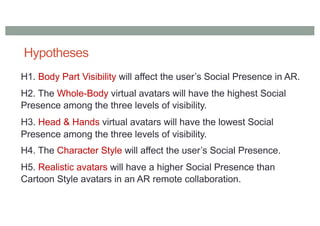

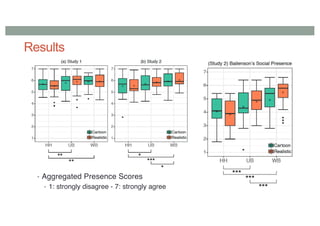

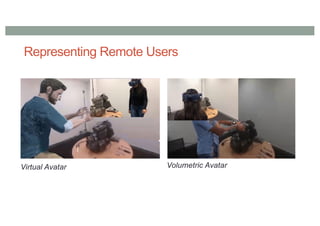

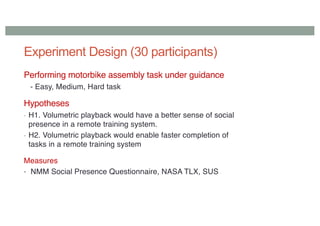

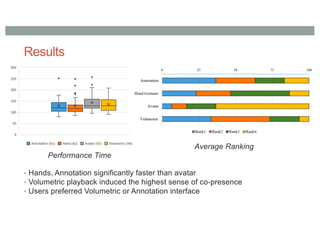

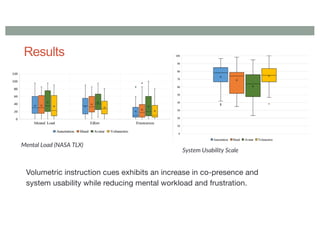

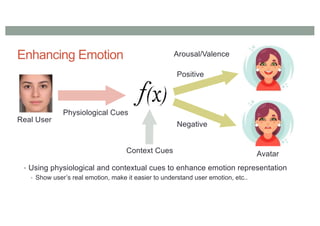

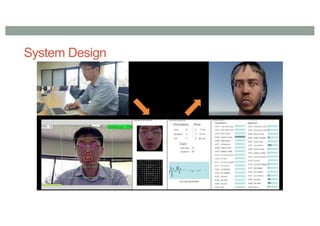

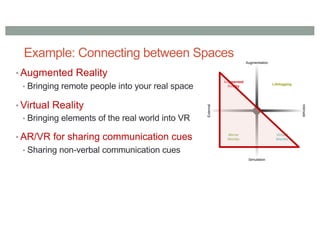

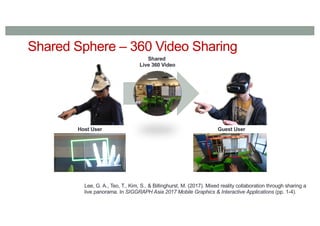

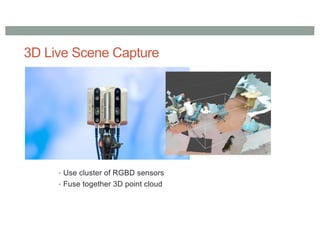

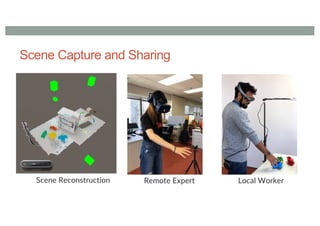

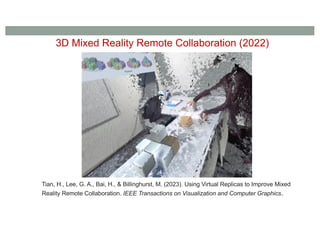

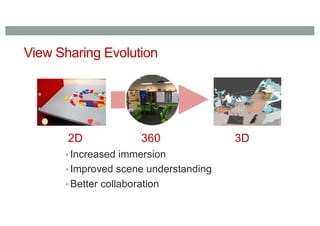

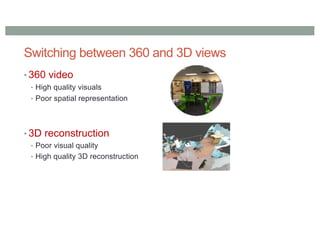

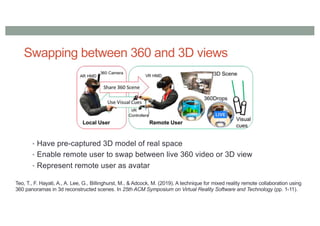

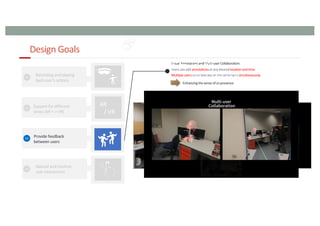

The document discusses research directions in cross reality interfaces, including the integration of augmented reality (AR), virtual reality (VR), and the Internet of Things (IoT). Key highlights include the mixed reality continuum, various publications in cross reality technologies, collaboration strategies, and emerging applications in the metaverse. It emphasizes the need for enhanced communication cues, avatar representation, and innovative system designs to improve user interaction and social presence in mixed reality environments.

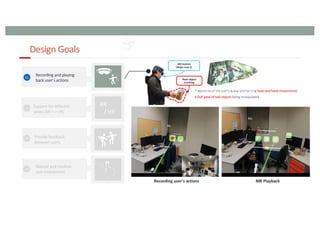

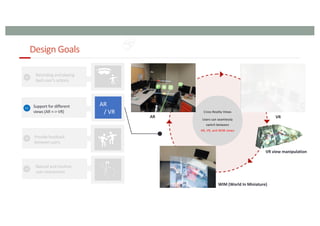

![Design Goals

AR / VR

[ Seamless Transition ] AR -> VR, VR -> AR

[ Avatar, Virtual Replica ]](https://image.slidesharecdn.com/billinghurst-cr-240702084349-25c3879f/85/Research-Directions-for-Cross-Reality-Interfaces-99-320.jpg)

![“Time Travellers” Overview

Cross reality asynchronous collaborative system

Step 1: Recording an expert’s standard process

Step 2: Reviewing the recorded process through the hybrid cross-reality playback system

Recording Data: [ 3D Work space ], [ Avatar, Object ], Spatial Data

Diagram labels: Time, 1st User, 2nd User, MR Headset (Magic Leap 2), Real object tracking, 1st User’s view, 2nd User’s view, Visual annotation, Avatar interaction, Timeline manipulation, AR mode, VR mode](https://image.slidesharecdn.com/billinghurst-cr-240702084349-25c3879f/85/Research-Directions-for-Cross-Reality-Interfaces-100-320.jpg)
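The record-then-review flow on this slide can be illustrated with a minimal sketch of a timestamped recording and a timeline lookup. The slides do not describe the actual data format, so every name here (`Frame`, `Recording`, `seek`, the position fields) is a hypothetical stand-in, and the lookup rule (latest frame at or before the requested time) is an assumption.

```python
from bisect import bisect_right
from dataclasses import dataclass, field


@dataclass
class Frame:
    """One recorded sample (hypothetical layout, not taken from the slides)."""
    t: float                                   # seconds since recording start
    avatar_pos: tuple[float, float, float]     # recorded user's avatar position
    object_pos: tuple[float, float, float]     # tracked real object's position


@dataclass
class Recording:
    """Step 1: frames are appended in time order while the expert works."""
    frames: list[Frame] = field(default_factory=list)

    def add(self, frame: Frame) -> None:
        if self.frames and frame.t < self.frames[-1].t:
            raise ValueError("frames must be added in time order")
        self.frames.append(frame)

    def seek(self, t: float) -> Frame:
        """Step 2: return the latest frame at or before time t.

        Times before the first frame clamp to the first frame.
        """
        if not self.frames:
            raise ValueError("recording is empty")
        times = [f.t for f in self.frames]
        index = bisect_right(times, t) - 1
        return self.frames[max(index, 0)]


rec = Recording()
rec.add(Frame(0.0, (0.0, 0.0, 0.0), (1.0, 0.0, 0.0)))
rec.add(Frame(1.0, (0.5, 0.0, 0.0), (1.0, 0.0, 0.0)))
rec.add(Frame(2.0, (1.0, 0.0, 0.0), (1.0, 0.2, 0.0)))
frame = rec.seek(1.5)  # scrubbing the timeline to t = 1.5 s
```

Because playback reads only stored frames, the same recording could in principle drive either an AR or a VR view; how the actual system switches between the two is not specified in the slides.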

![Pilot User Evaluation - User Study Design -

• Six participants reviewed and annotated recorded videos in both the AR and AR+VR (Cross Reality) conditions.

• Participants left a marker when an action began and an arrow when it ended.

[ AR condition ] [ AR+VR condition ]](https://image.slidesharecdn.com/billinghurst-cr-240702084349-25c3879f/85/Research-Directions-for-Cross-Reality-Interfaces-101-320.jpg)

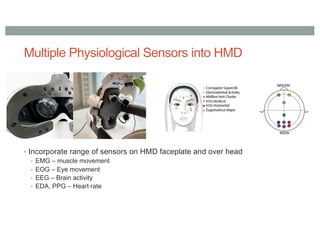

![Pilot User Evaluation - Measurements -

• Objective Measurements

[ Task Completion Time ] [ Moving Trajectory ]

[ Timeline Manipulation Time ]

• Subjective Measurements

• NASA TLX

• System Usability Scale (SUS)](https://image.slidesharecdn.com/billinghurst-cr-240702084349-25c3879f/85/Research-Directions-for-Cross-Reality-Interfaces-102-320.jpg)
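As a rough illustration of how two of these measures are commonly computed, the sketch below scores a SUS questionnaire with the standard rule (odd items contribute the response minus 1, even items 5 minus the response, and the sum is multiplied by 2.5) and sums segment lengths to get a moving-trajectory distance. The slides do not say how the study computed its values; the function names and the assumption that the trajectory is a list of sampled 3D positions in metres are illustrative only.

```python
import math


def sus_score(responses):
    """Standard SUS scoring for ten responses on a 1-5 scale; returns 0-100."""
    if len(responses) != 10:
        raise ValueError("SUS needs exactly 10 responses")
    if any(r < 1 or r > 5 for r in responses):
        raise ValueError("each response must be between 1 and 5")
    total = 0
    for item, response in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (response - 1) if item % 2 == 1 else (5 - response)
    return total * 2.5


def path_length(points):
    """Total distance along sampled positions (assumed to be in metres)."""
    return sum(math.dist(a, b) for a, b in zip(points, points[1:]))


neutral = sus_score([3] * 10)
best = sus_score([5, 1] * 5)
walked = path_length([(0, 0, 0), (3, 4, 0), (3, 4, 12)])
```

NASA TLX is omitted here because the slides do not indicate whether the raw or the weighted variant was used.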

![Pilot User Evaluation - Results and Lessons Learned -

• Objective Measurements

[ Task Completion Time ] [ Moving Trajectory ]

[ Timeline Manipulation Time ]

Bar charts comparing the AR and AR+VR conditions: Completion time (sec), Timeline manipulation (sec), Moving trajectories (m)](https://image.slidesharecdn.com/billinghurst-cr-240702084349-25c3879f/85/Research-Directions-for-Cross-Reality-Interfaces-103-320.jpg)