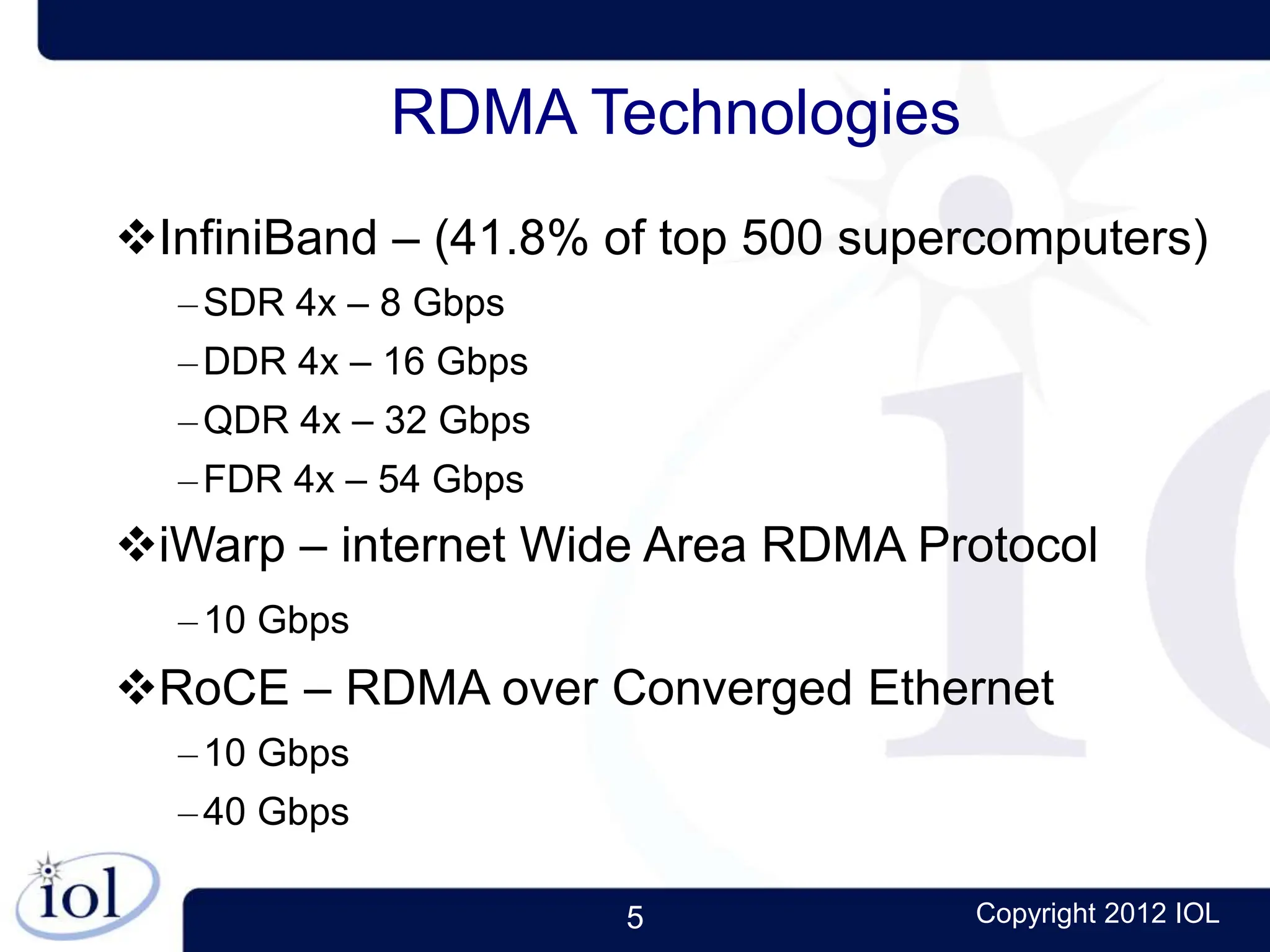

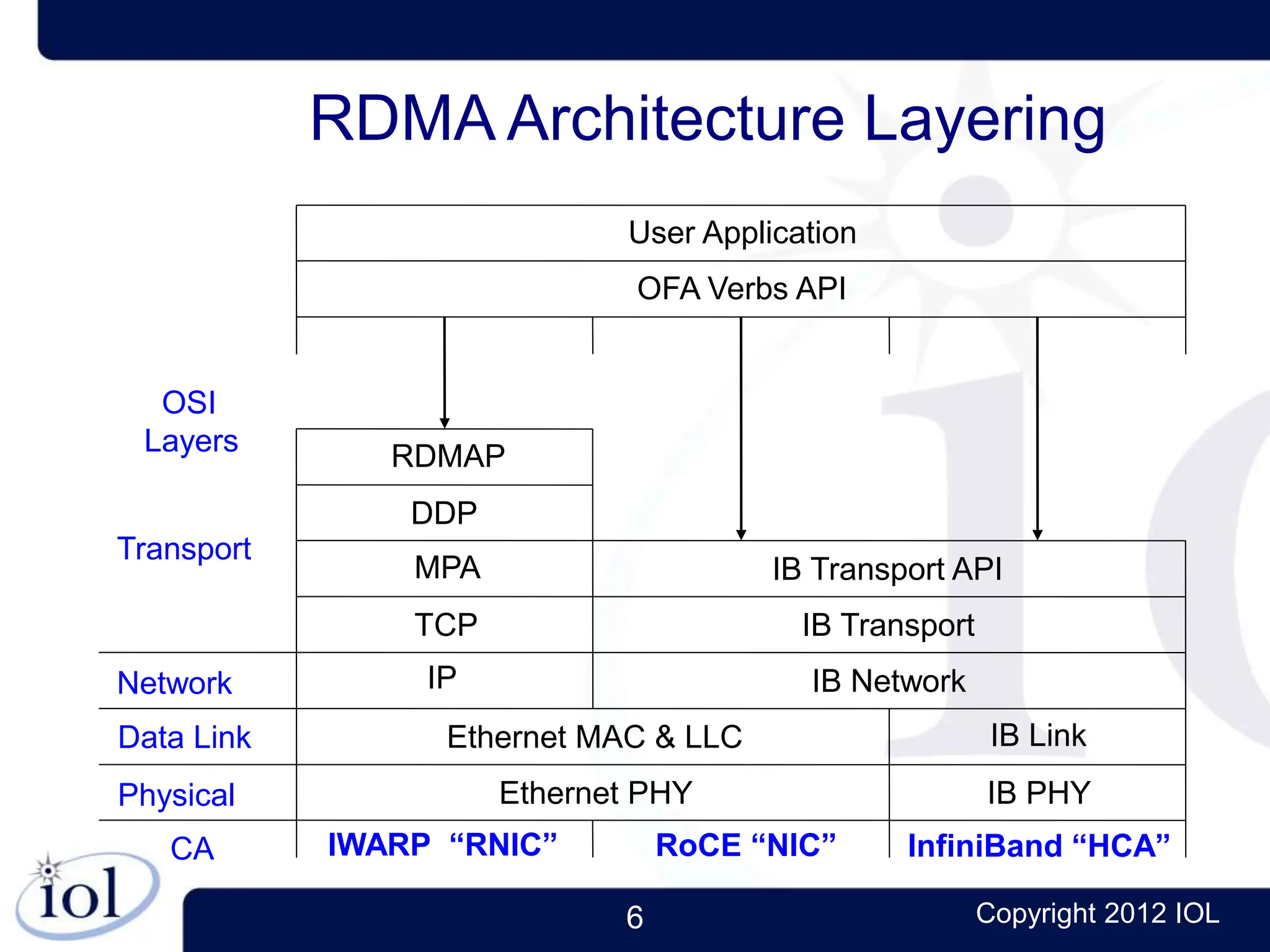

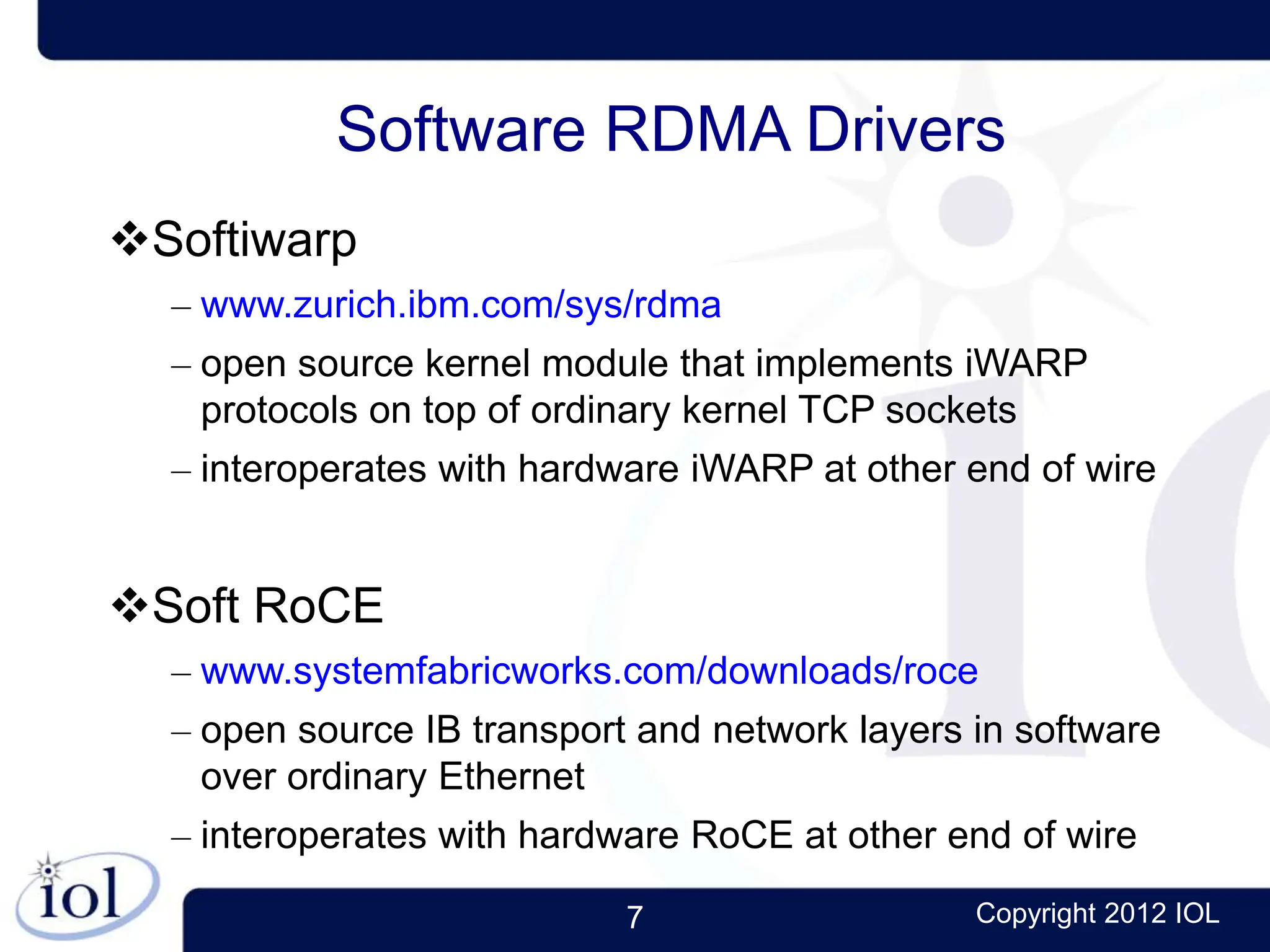

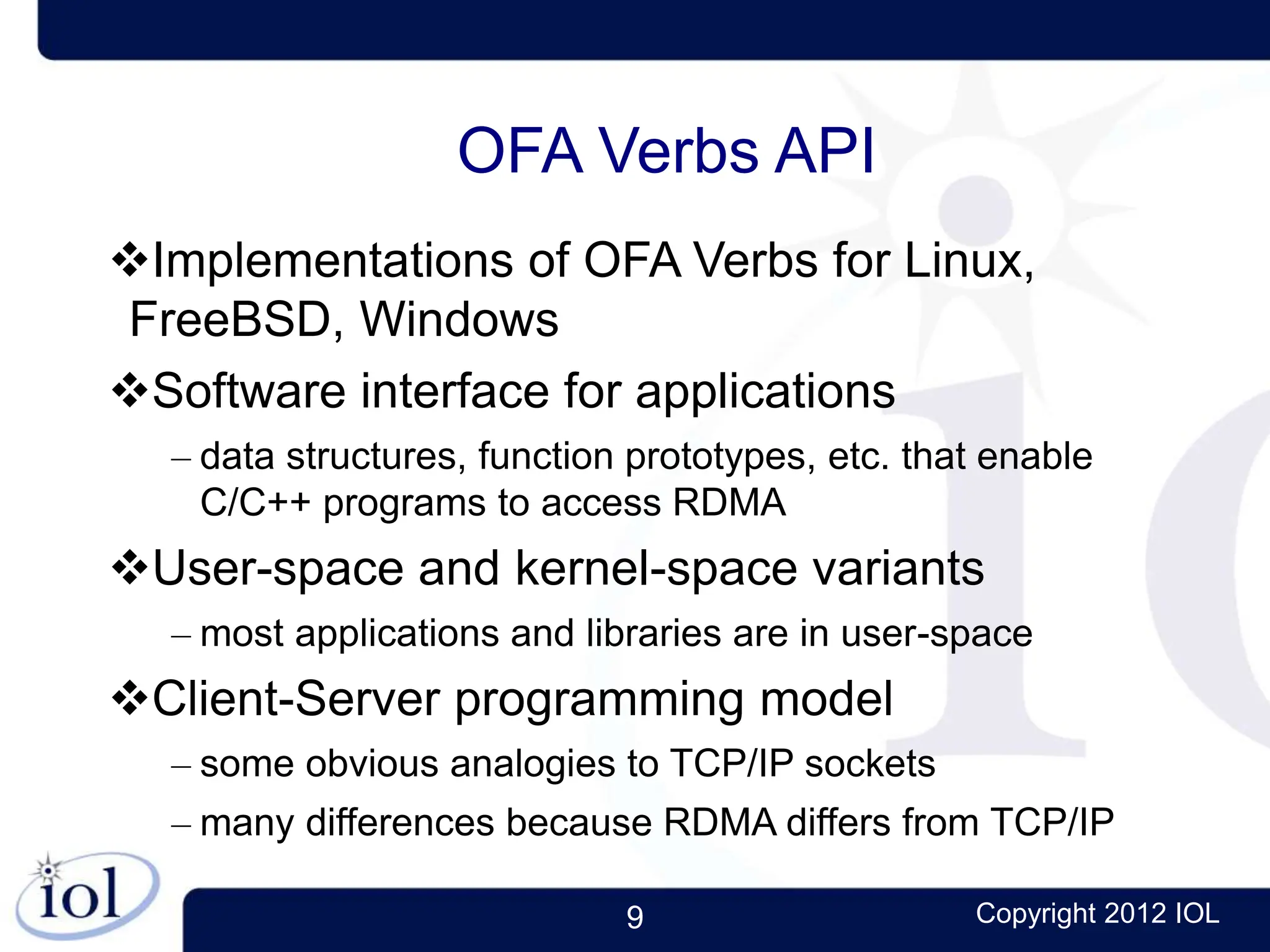

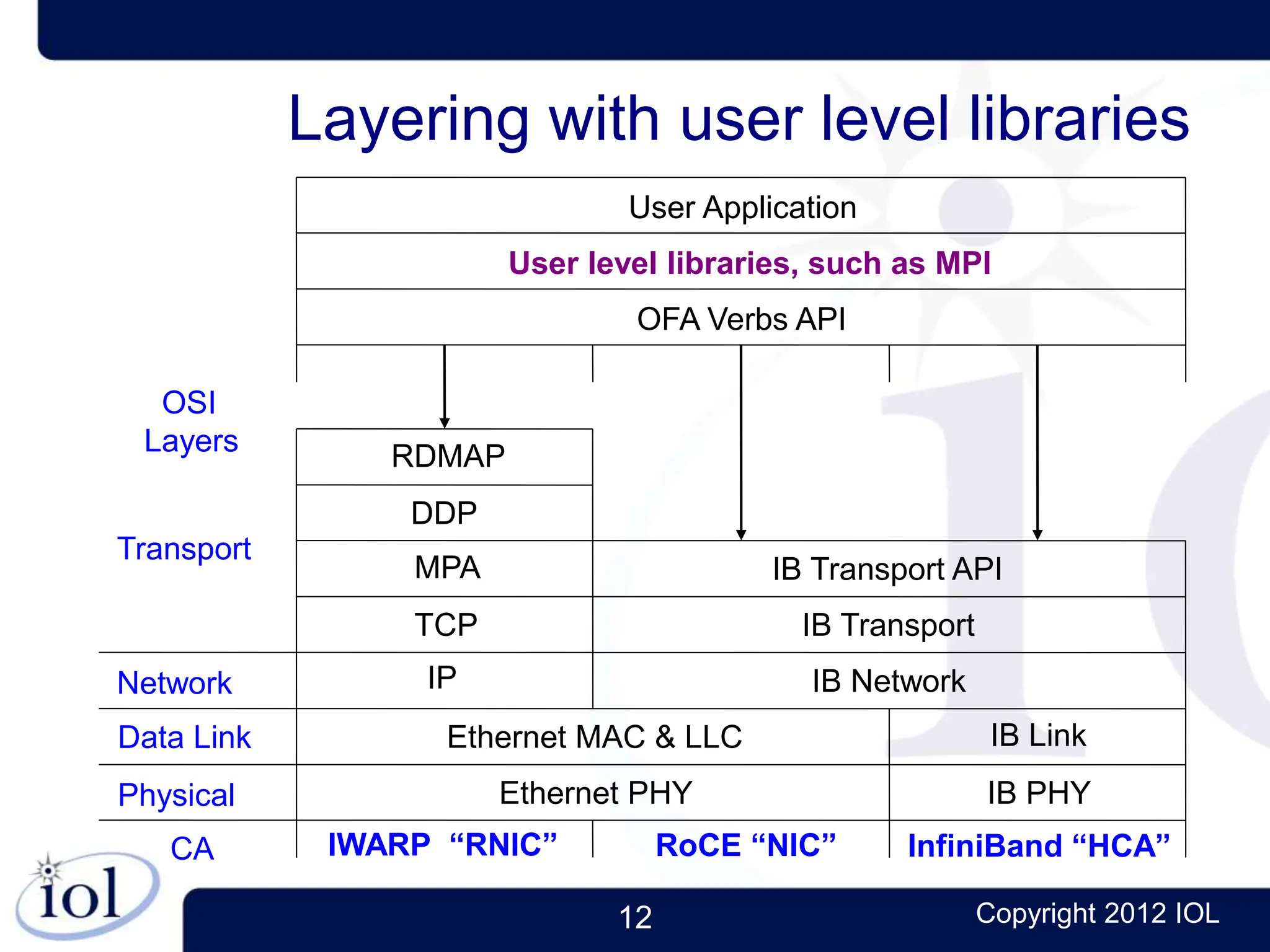

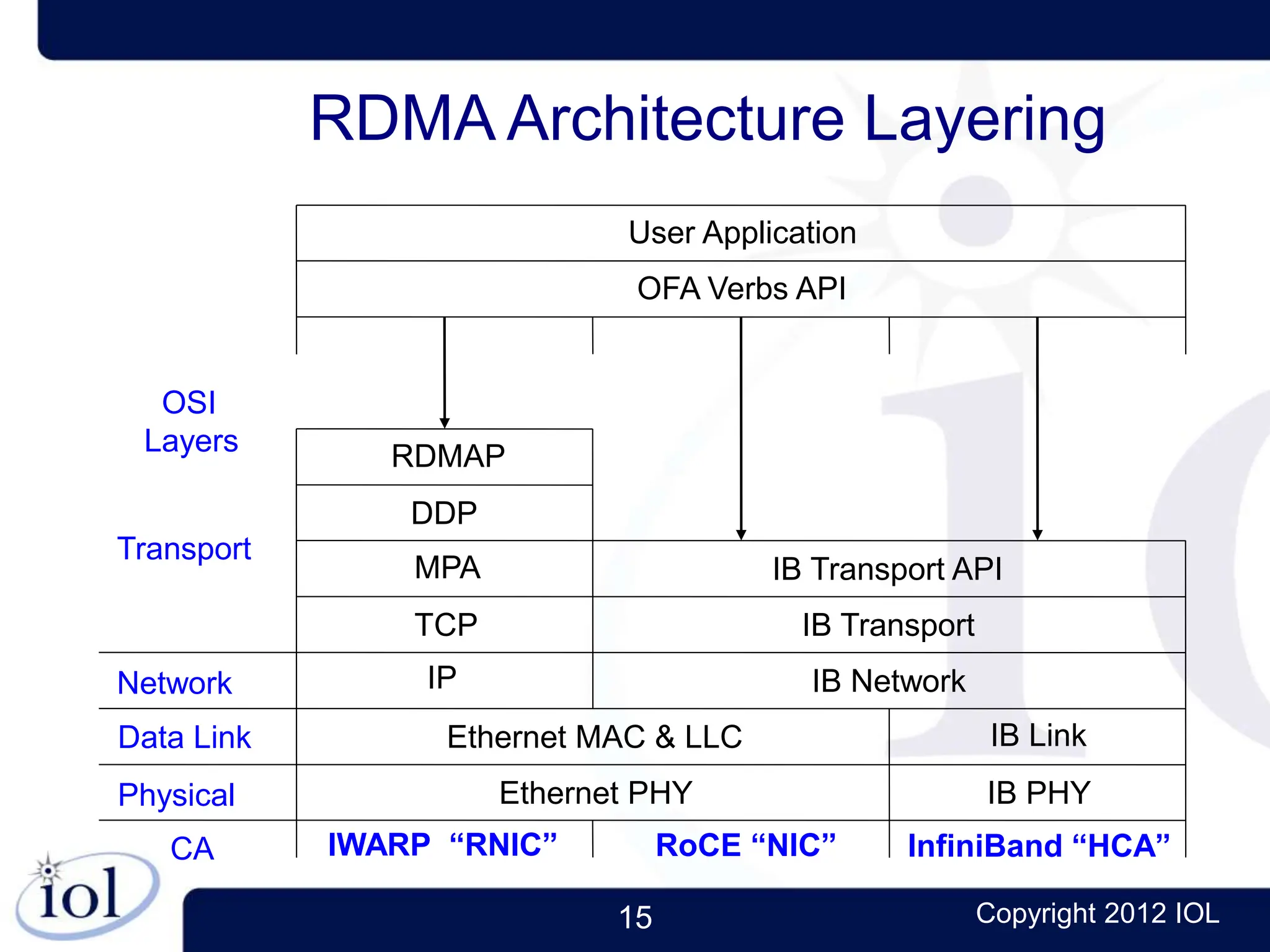

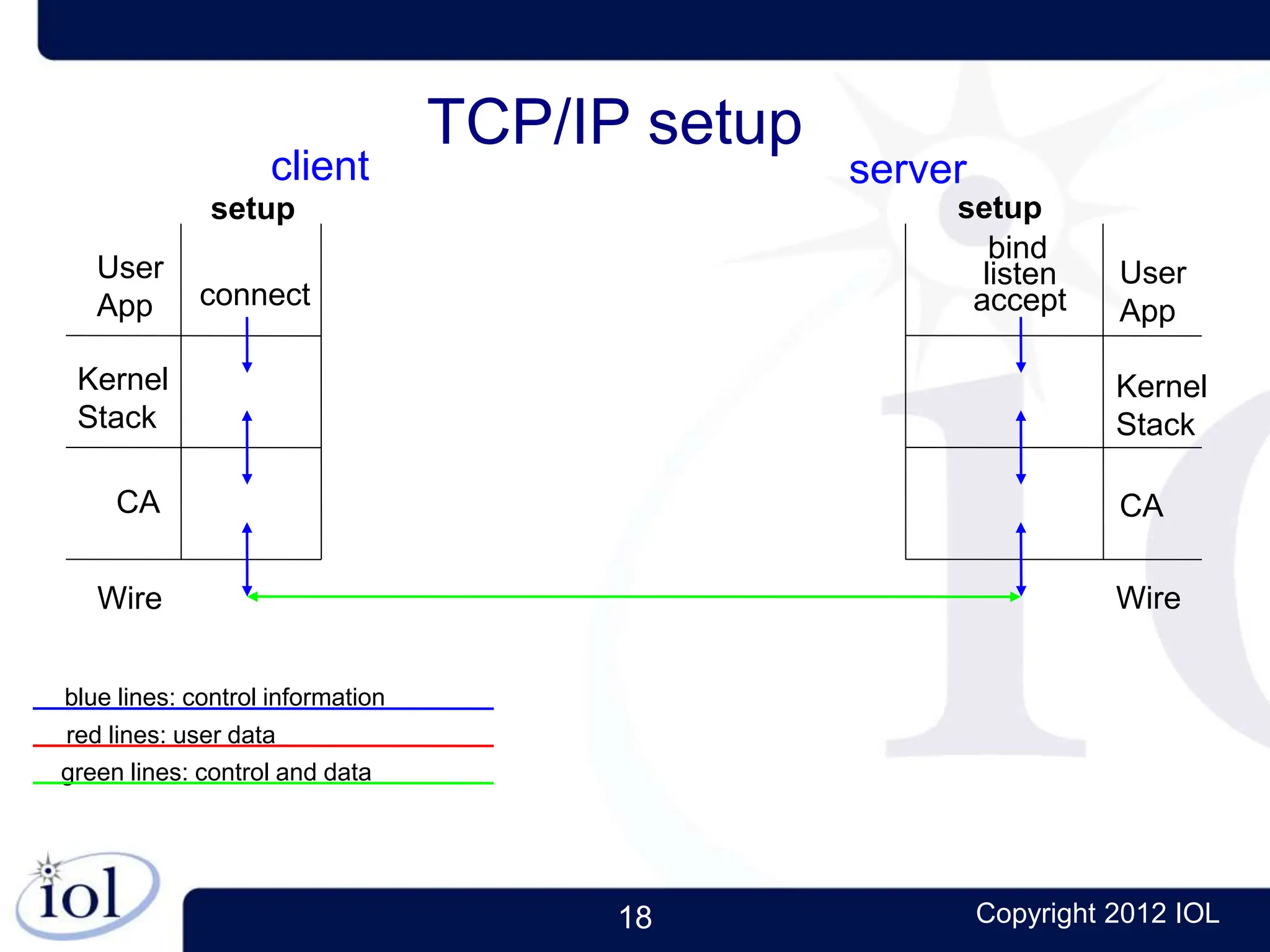

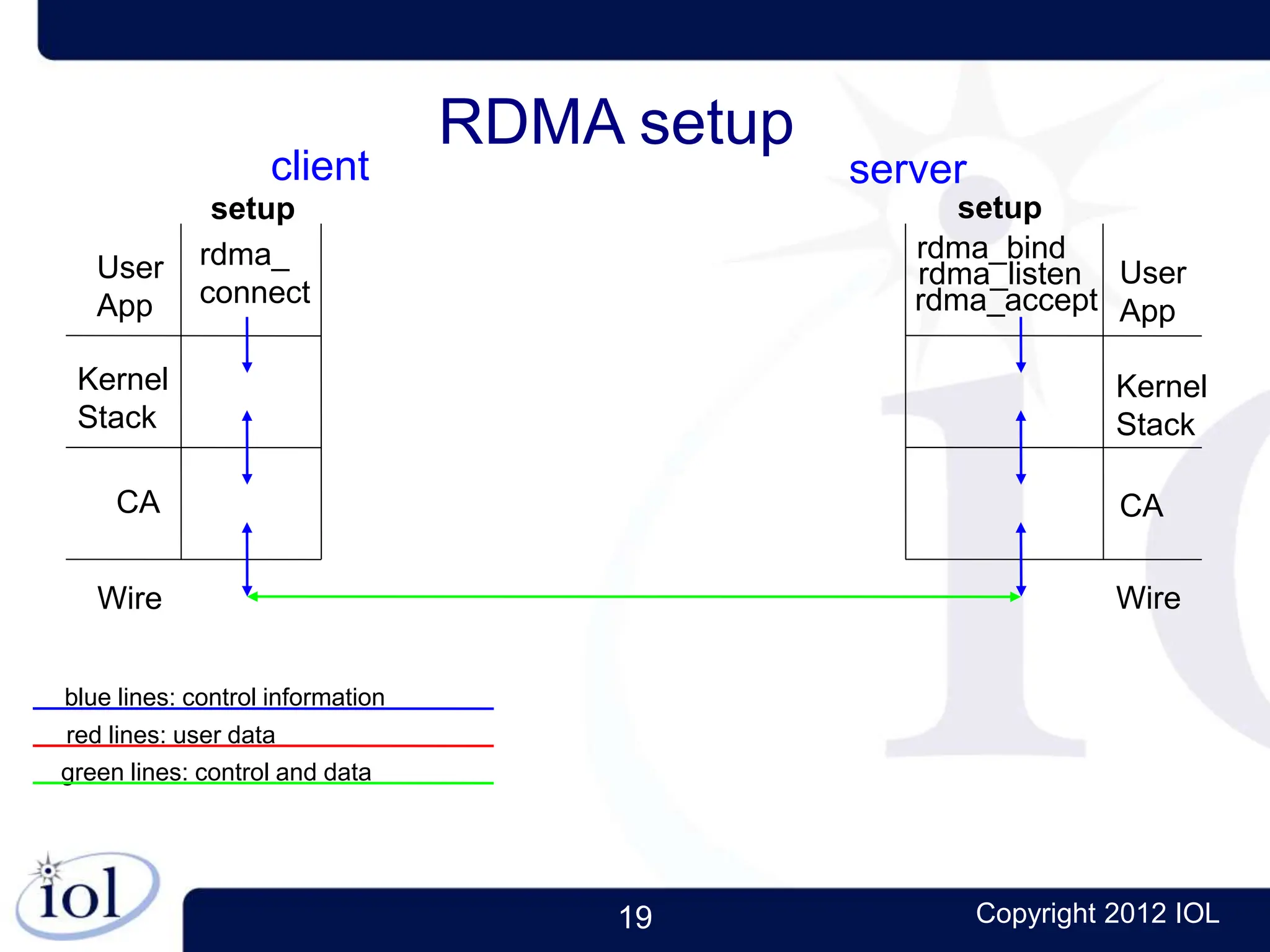

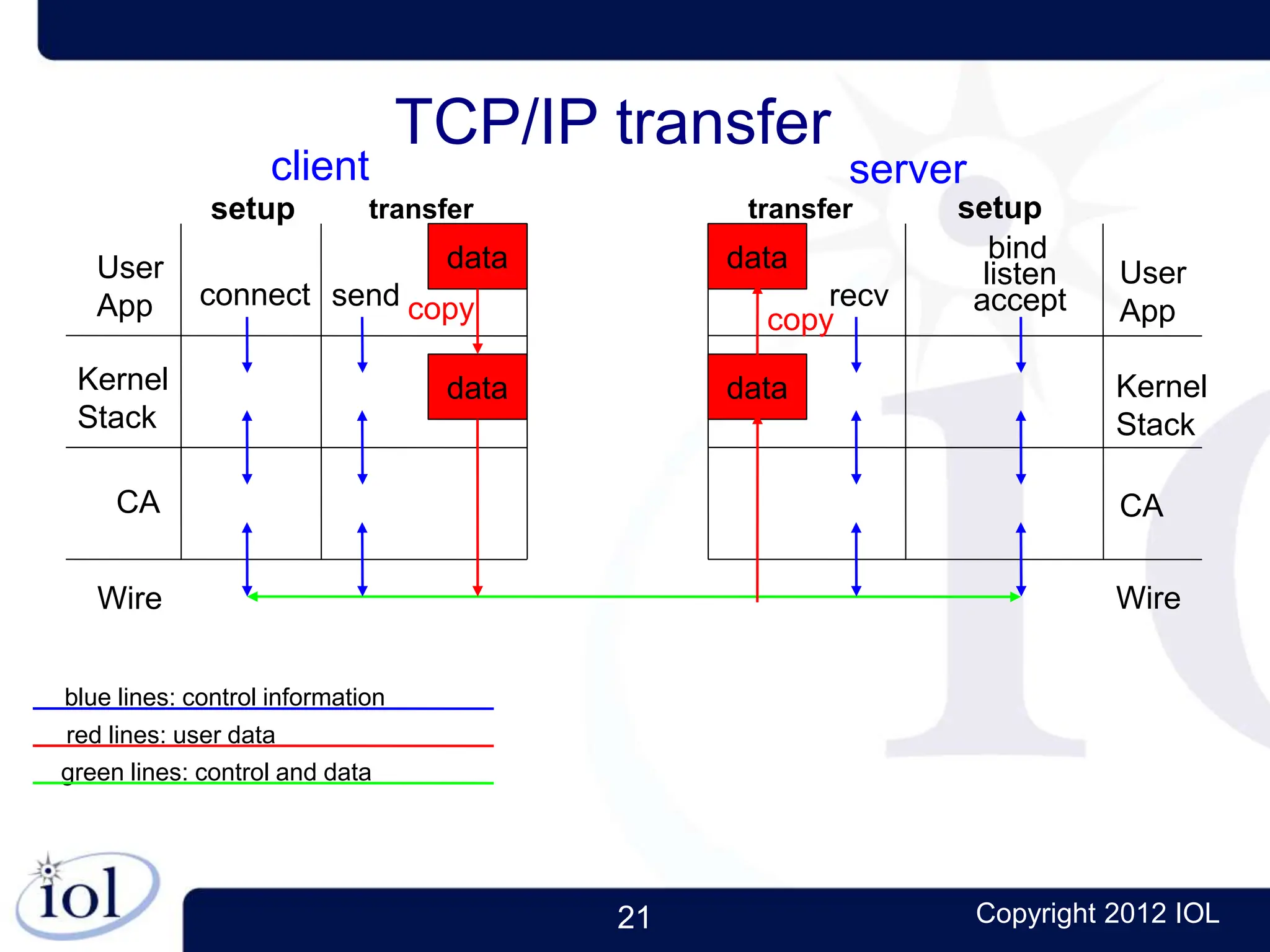

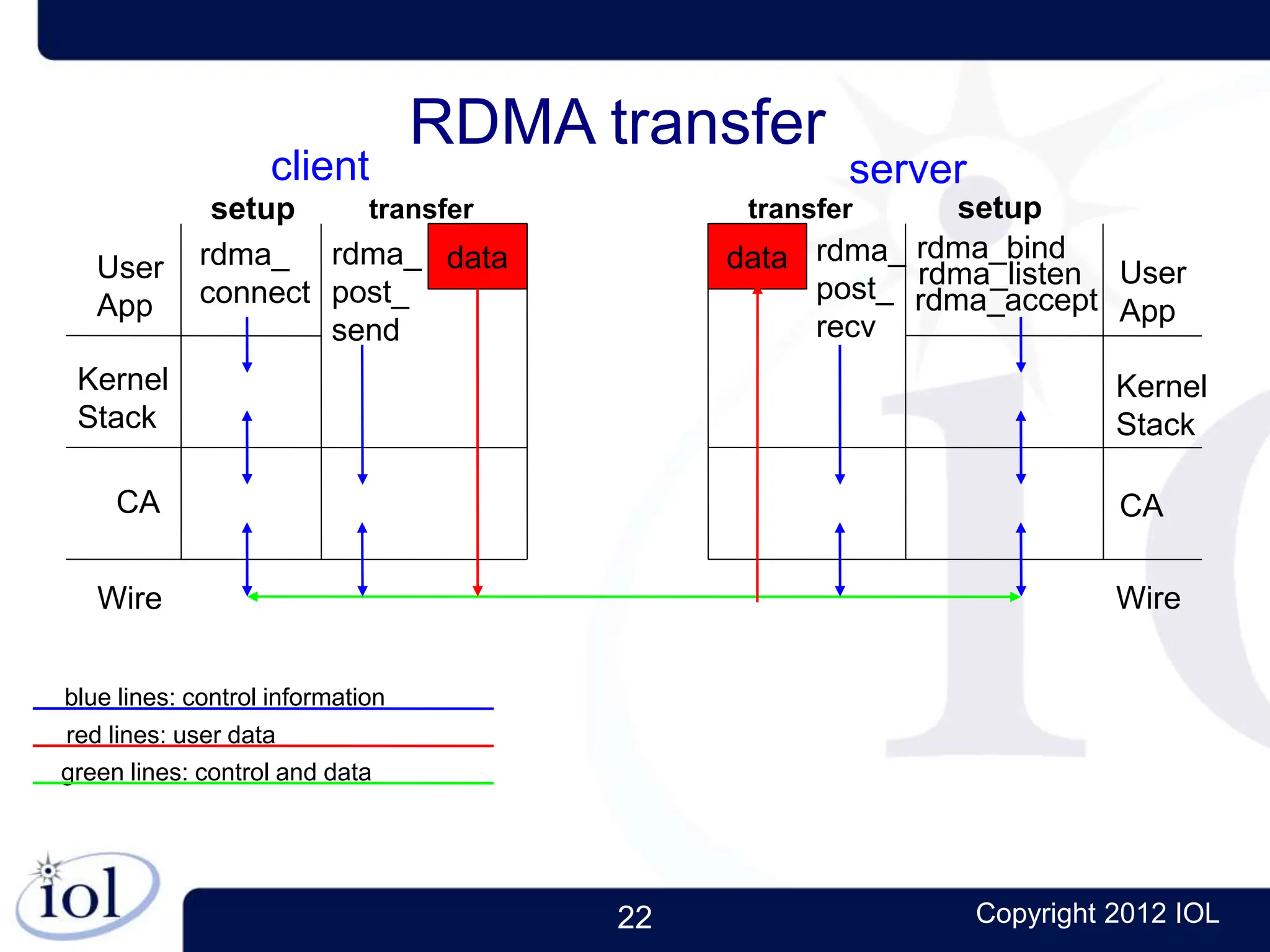

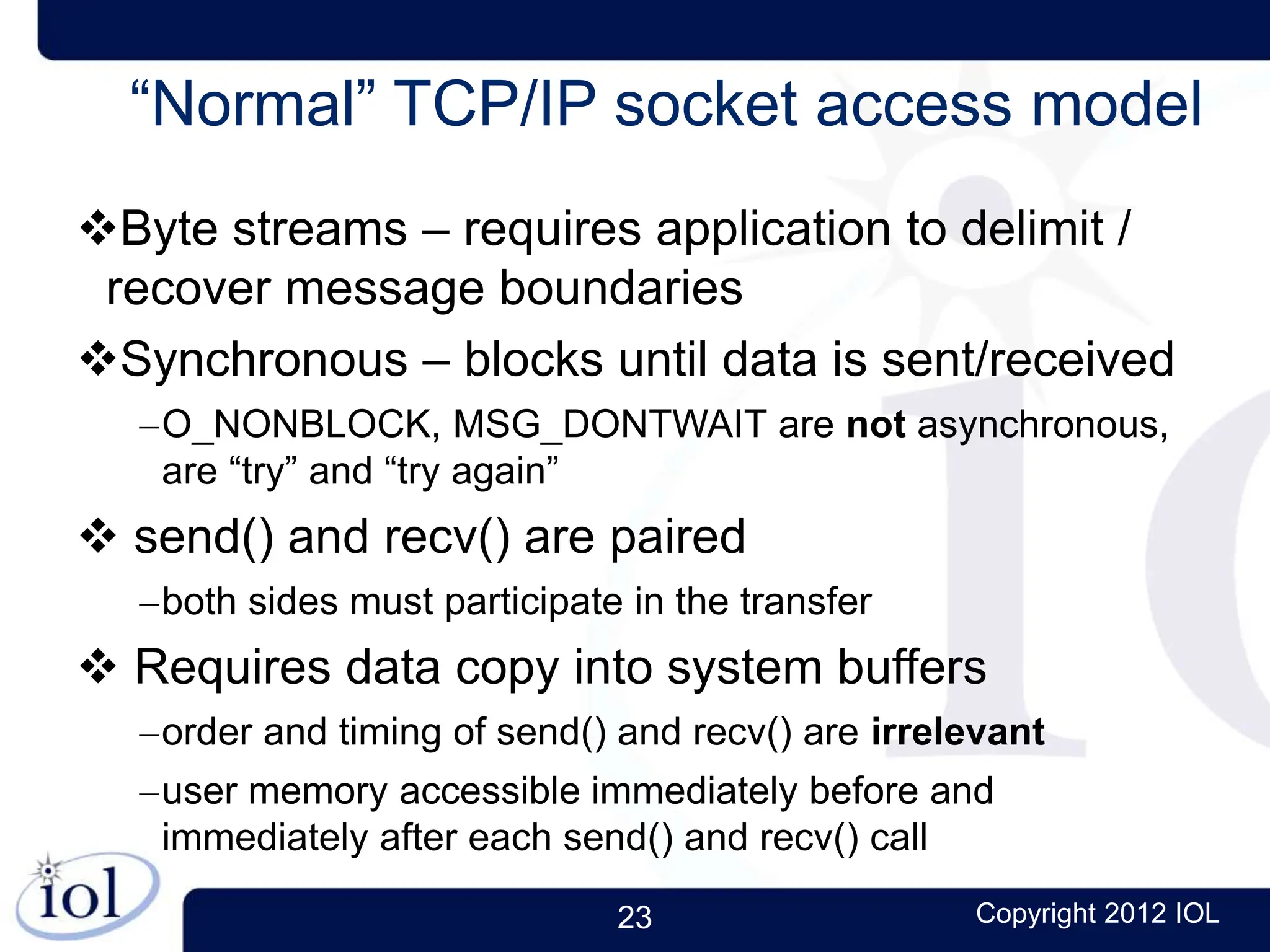

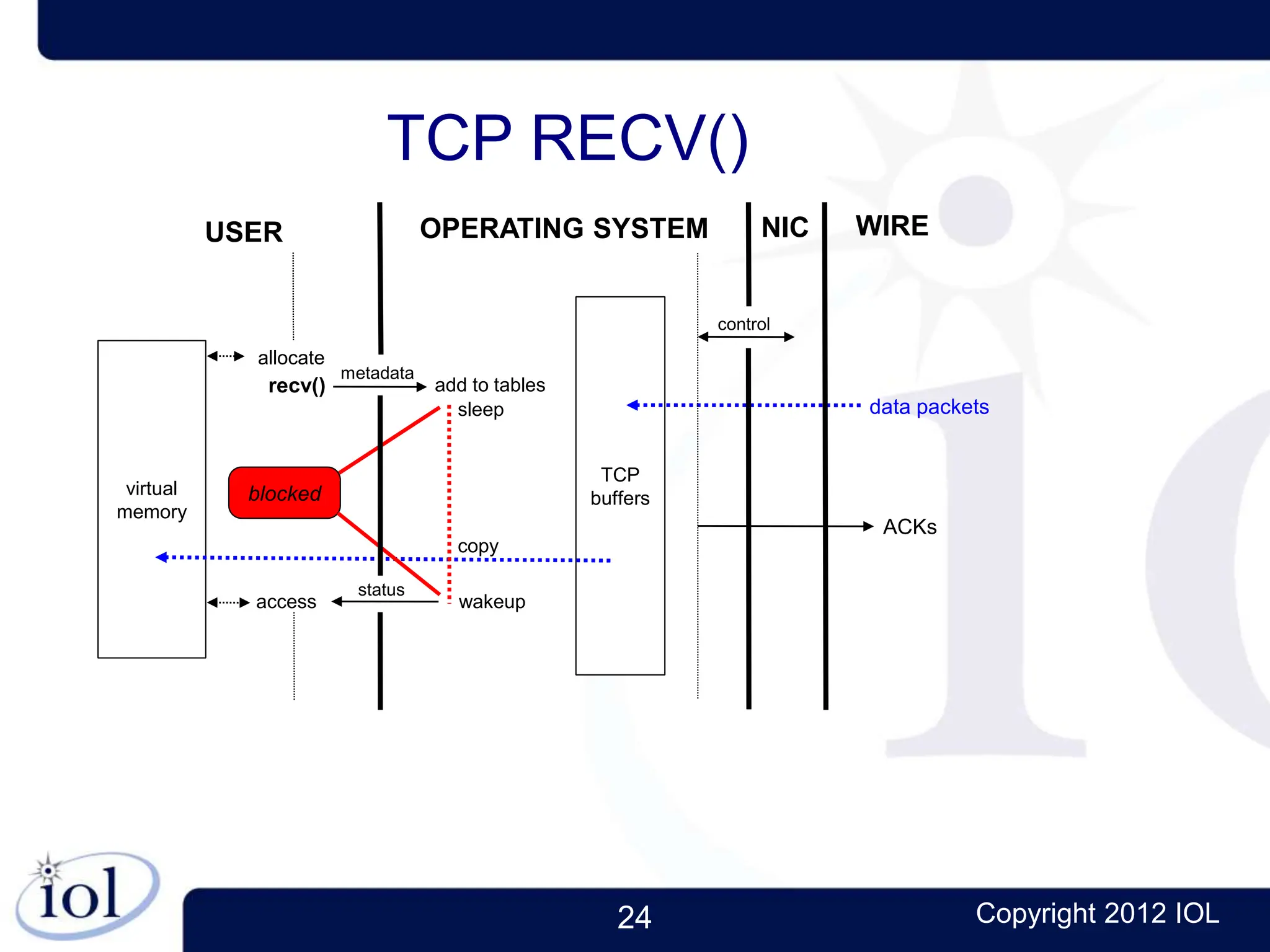

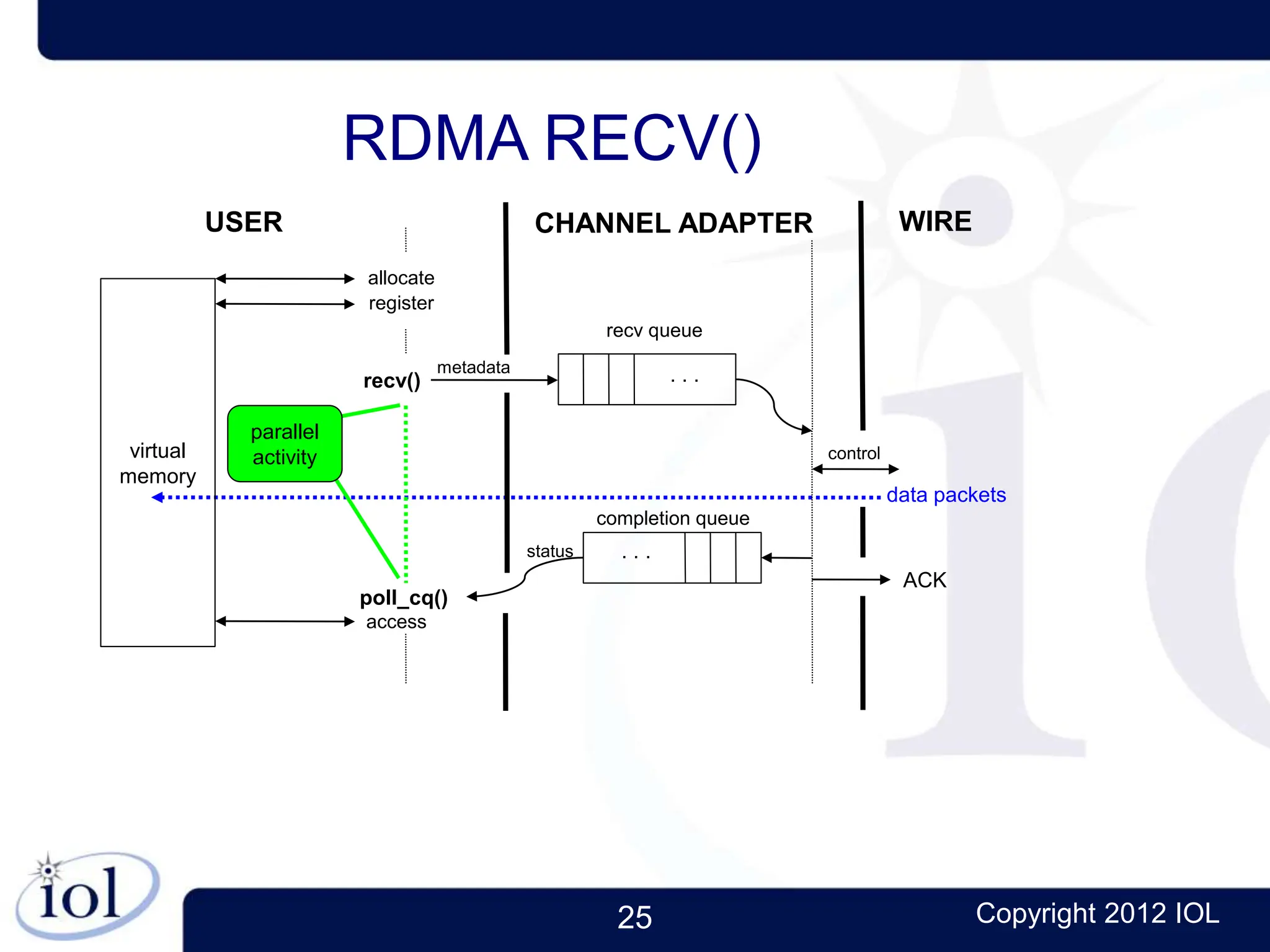

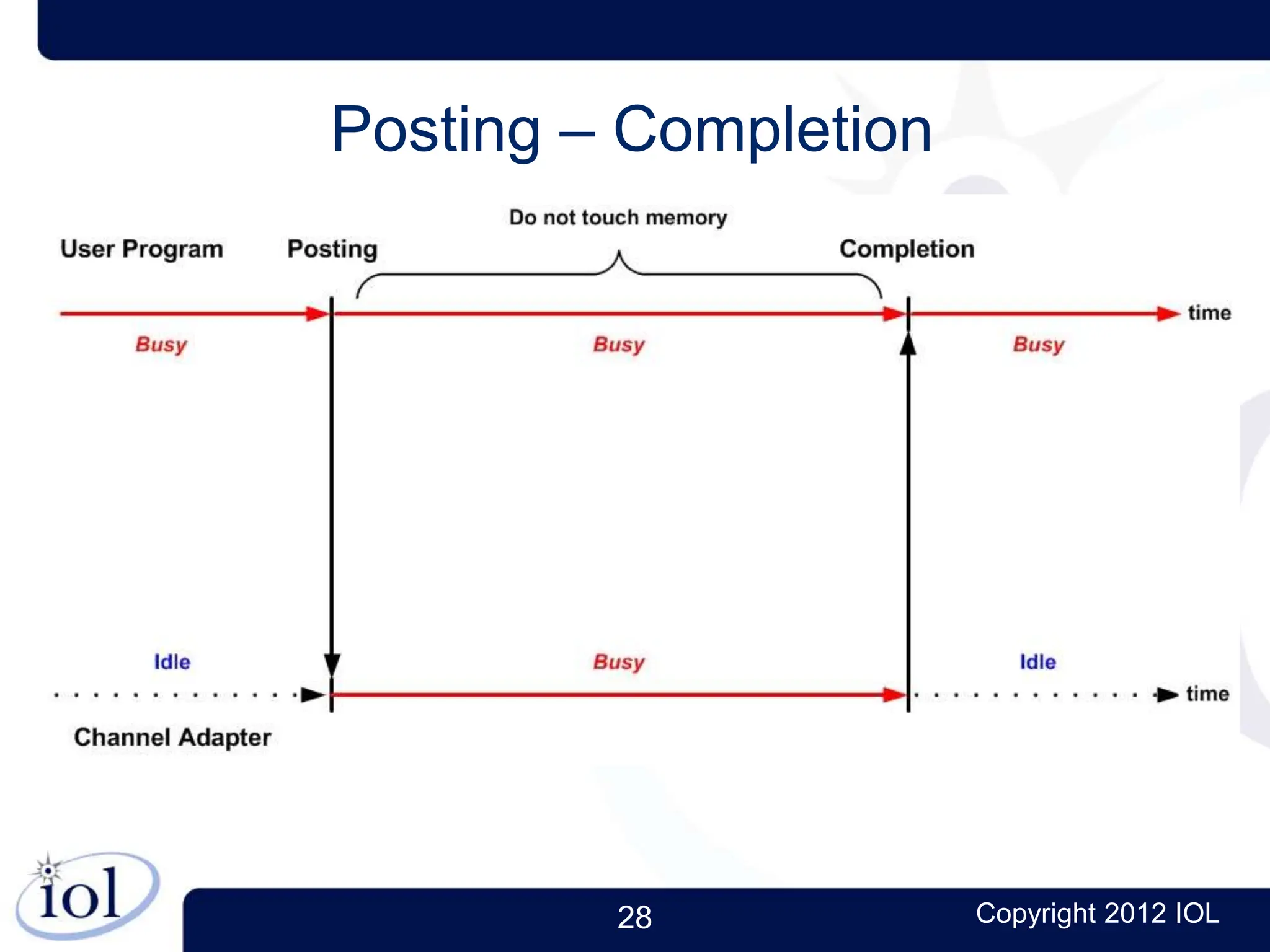

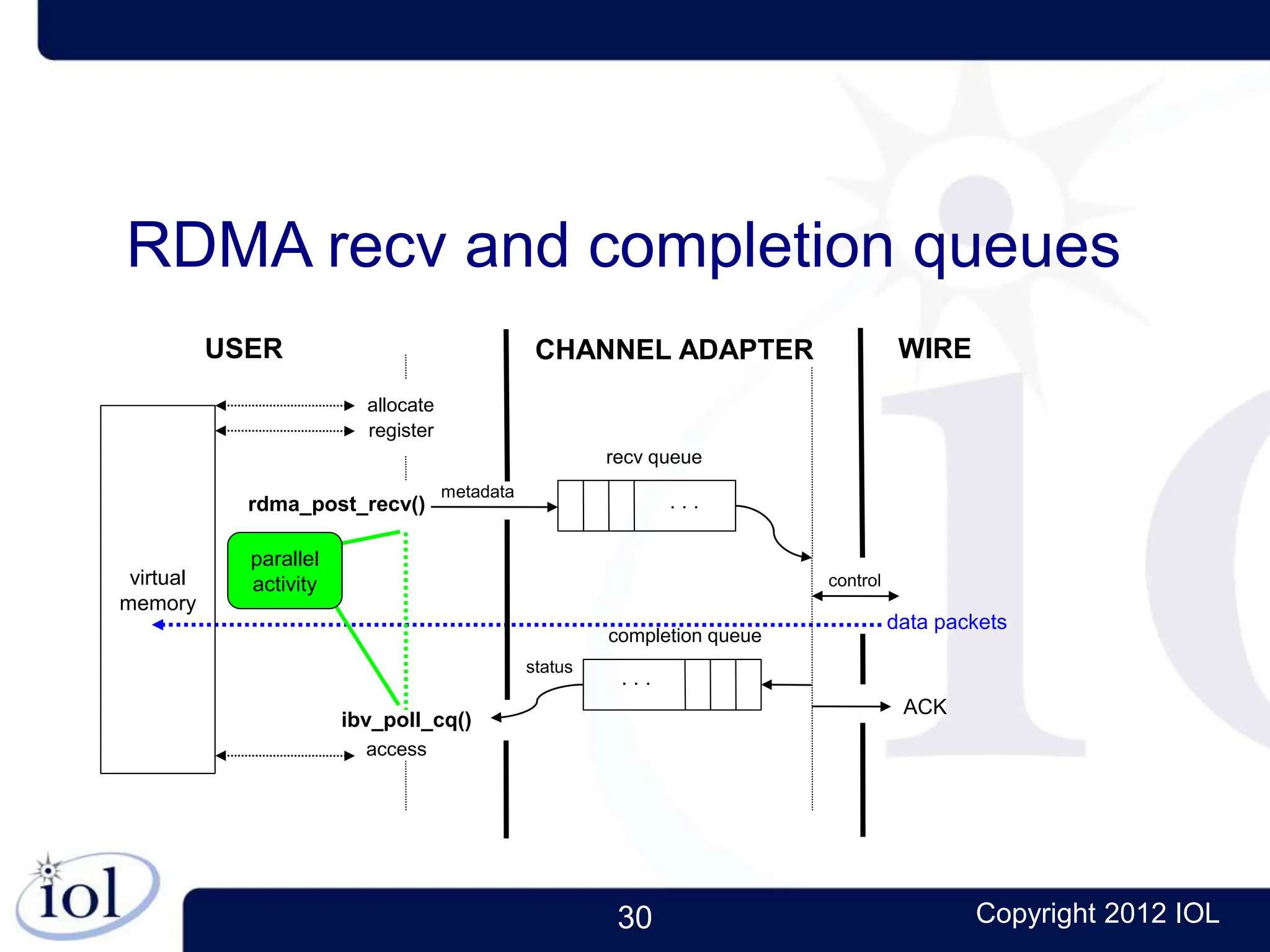

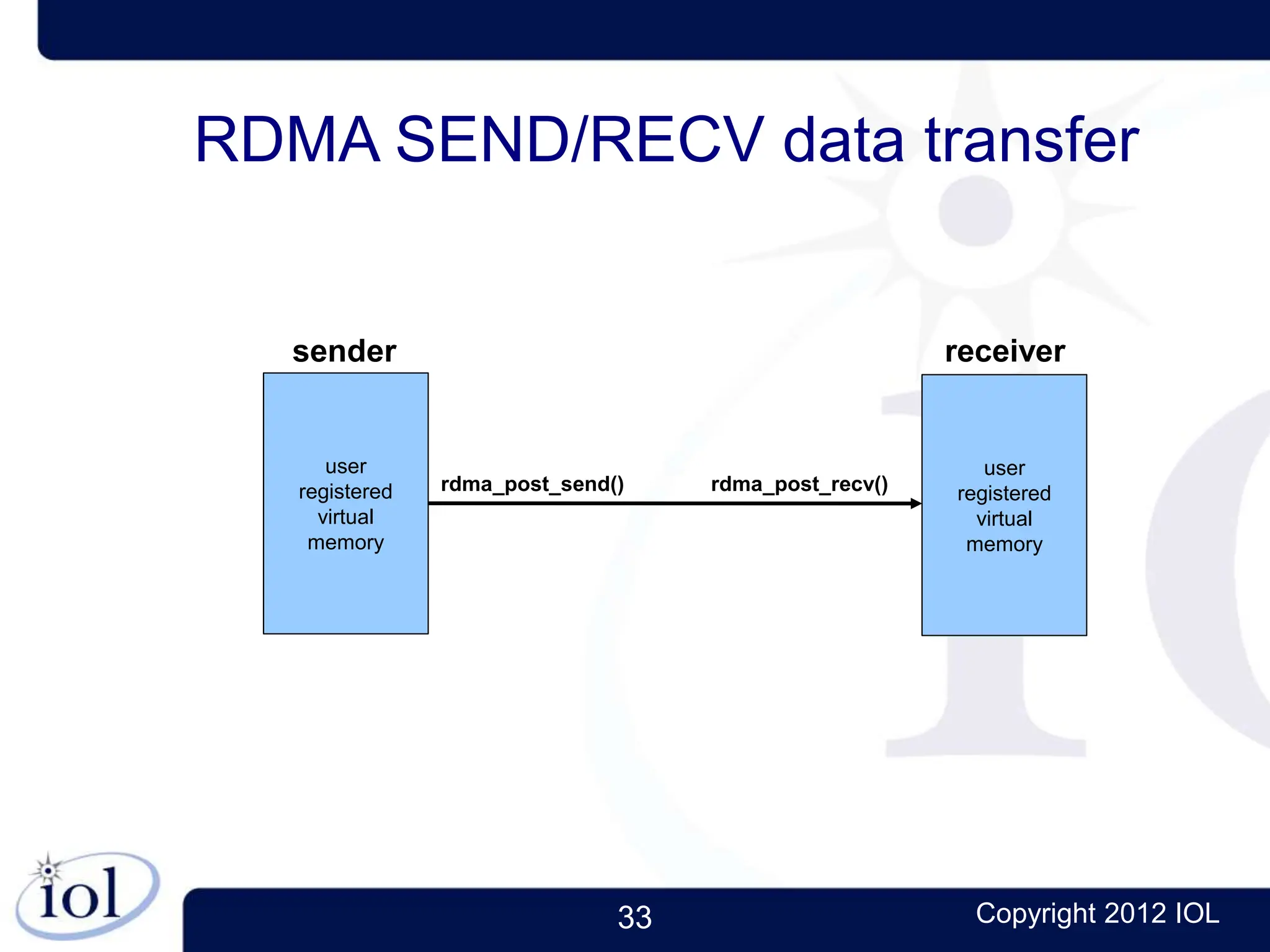

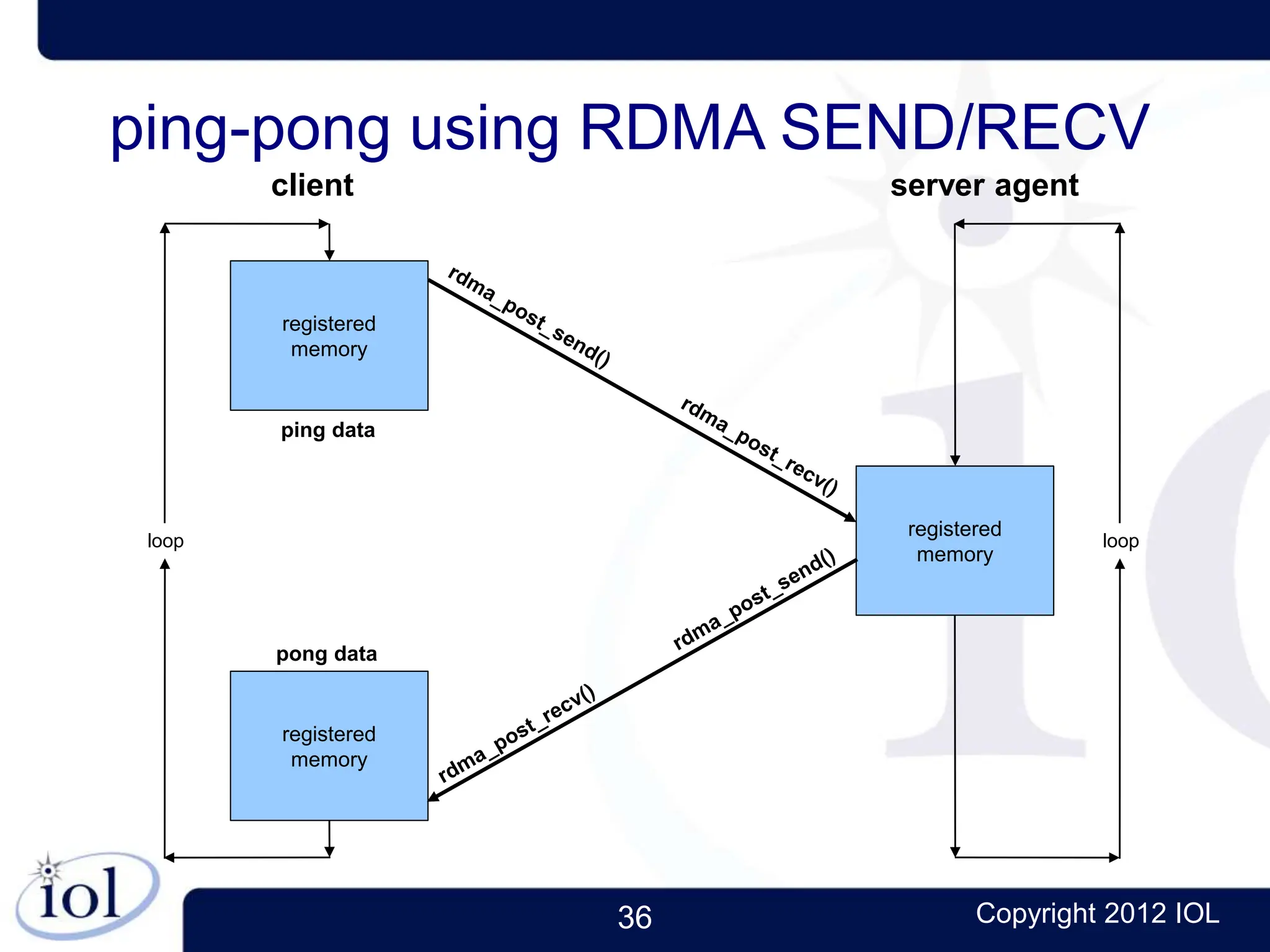

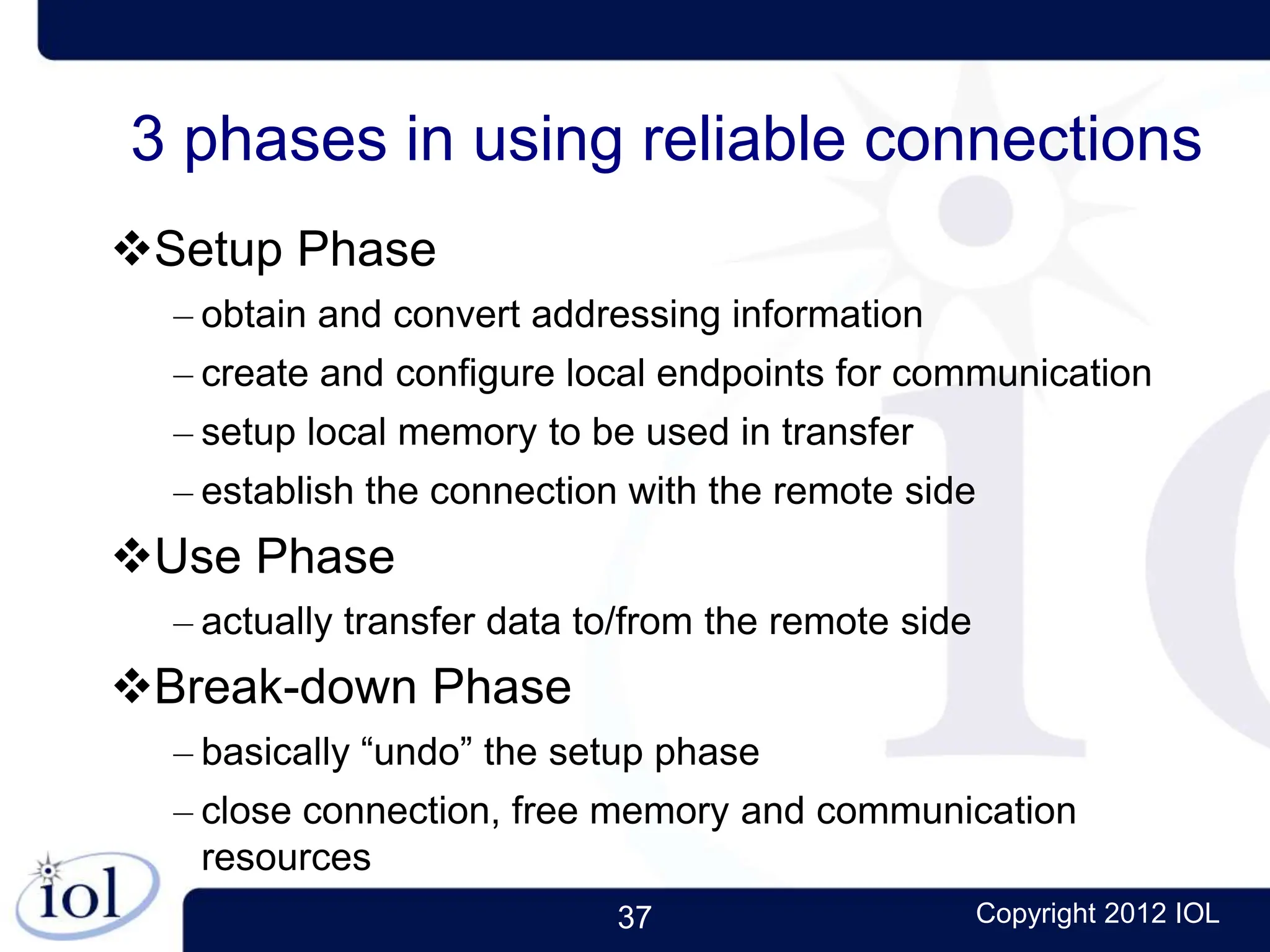

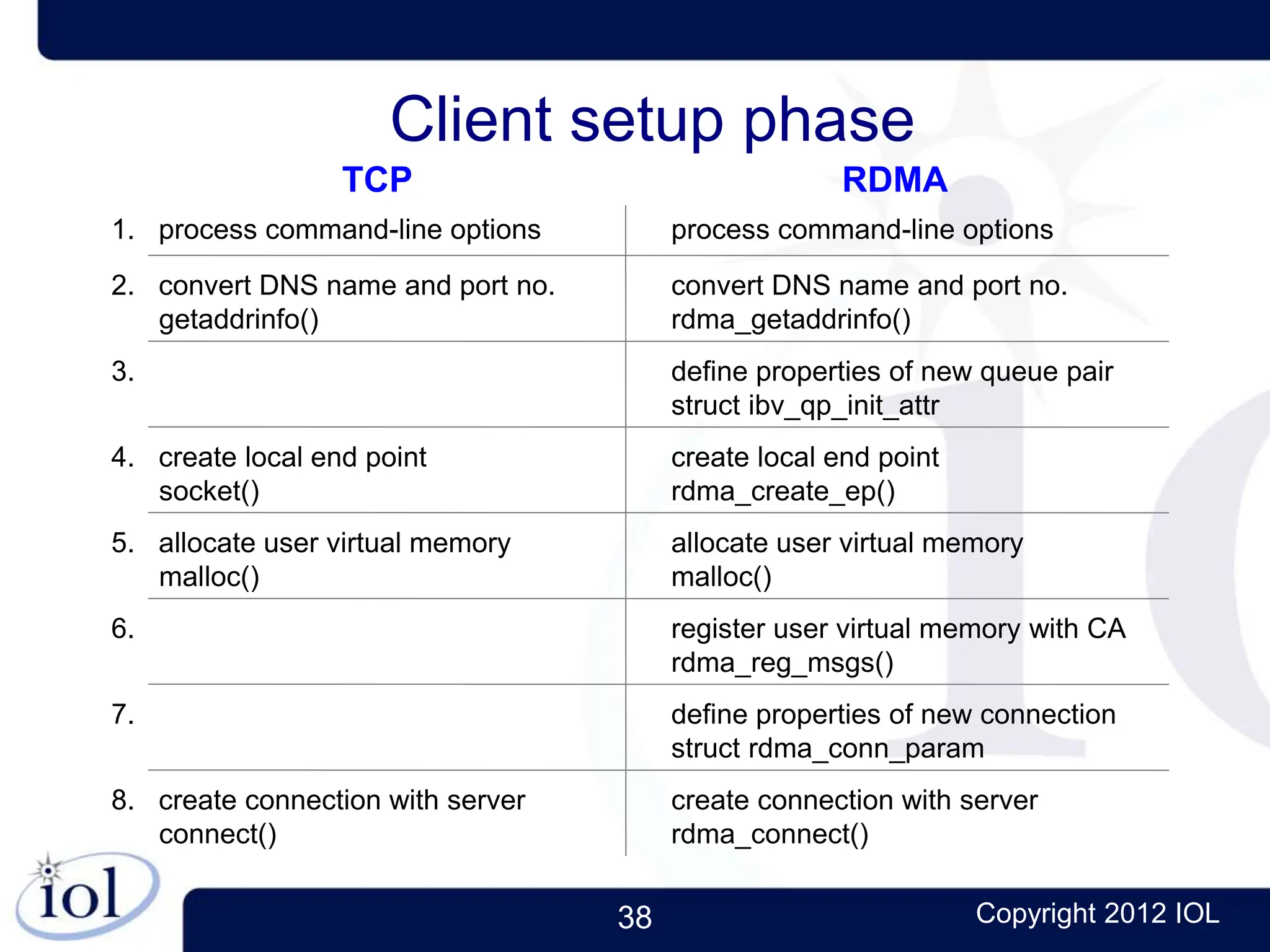

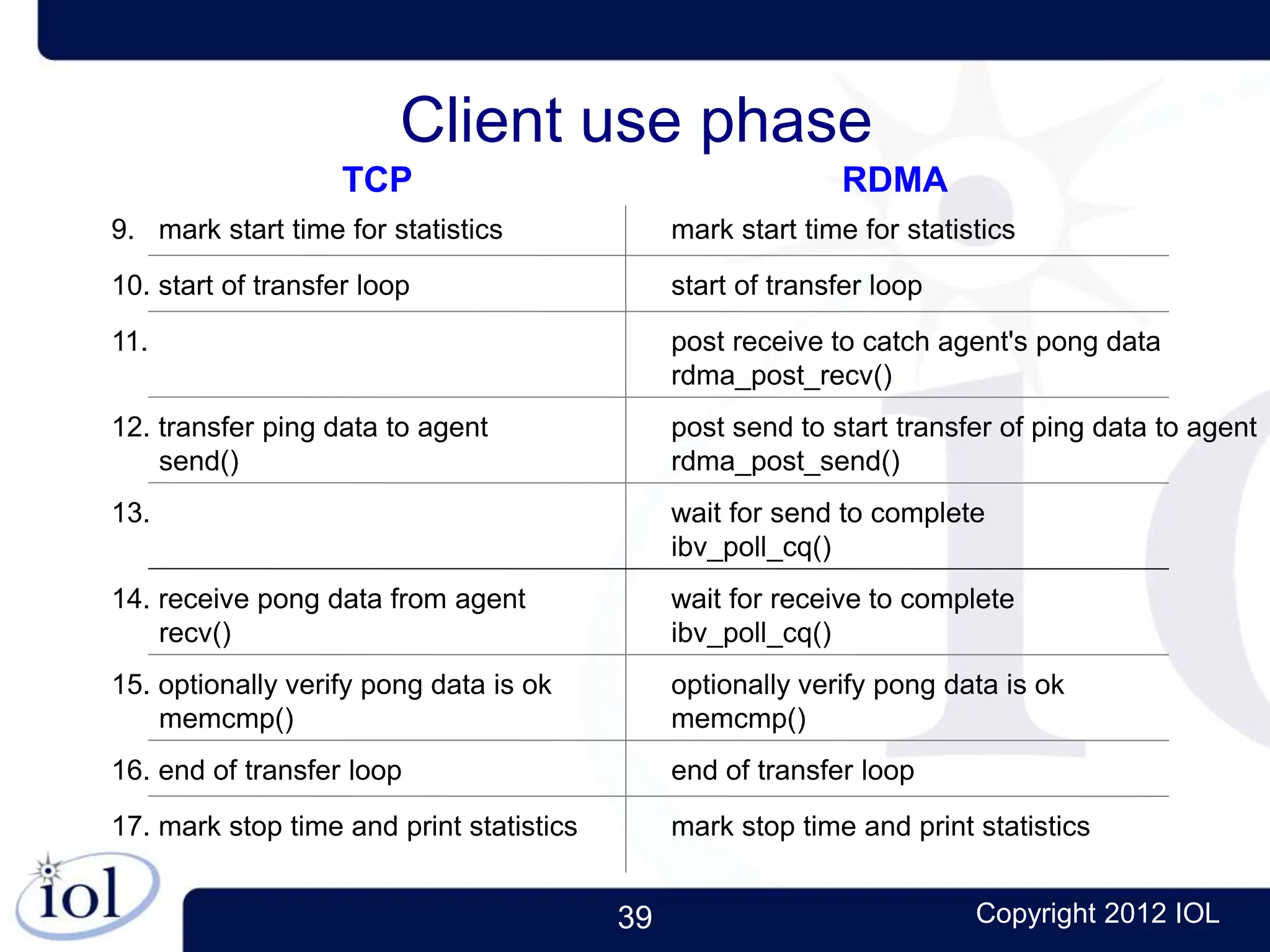

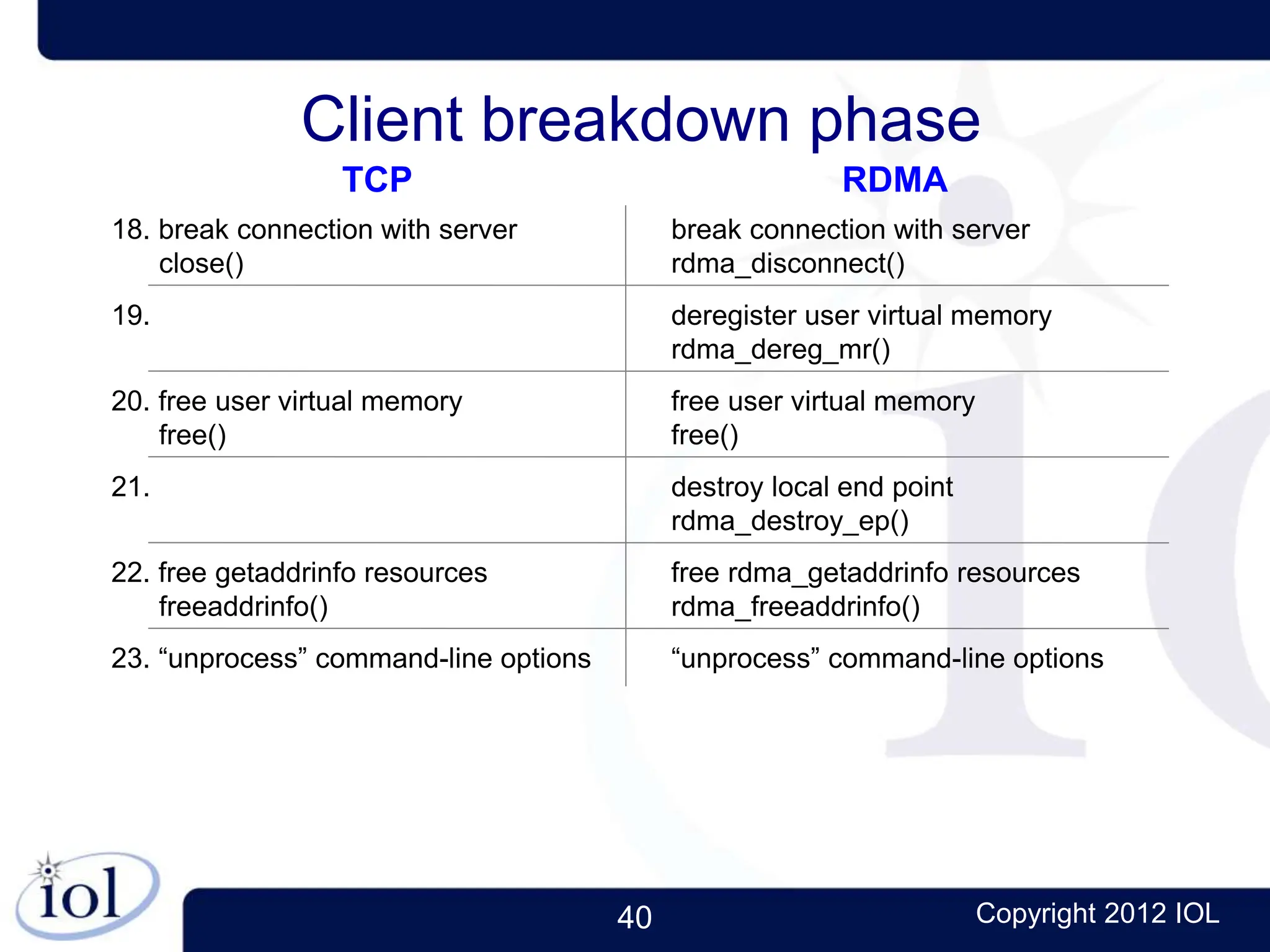

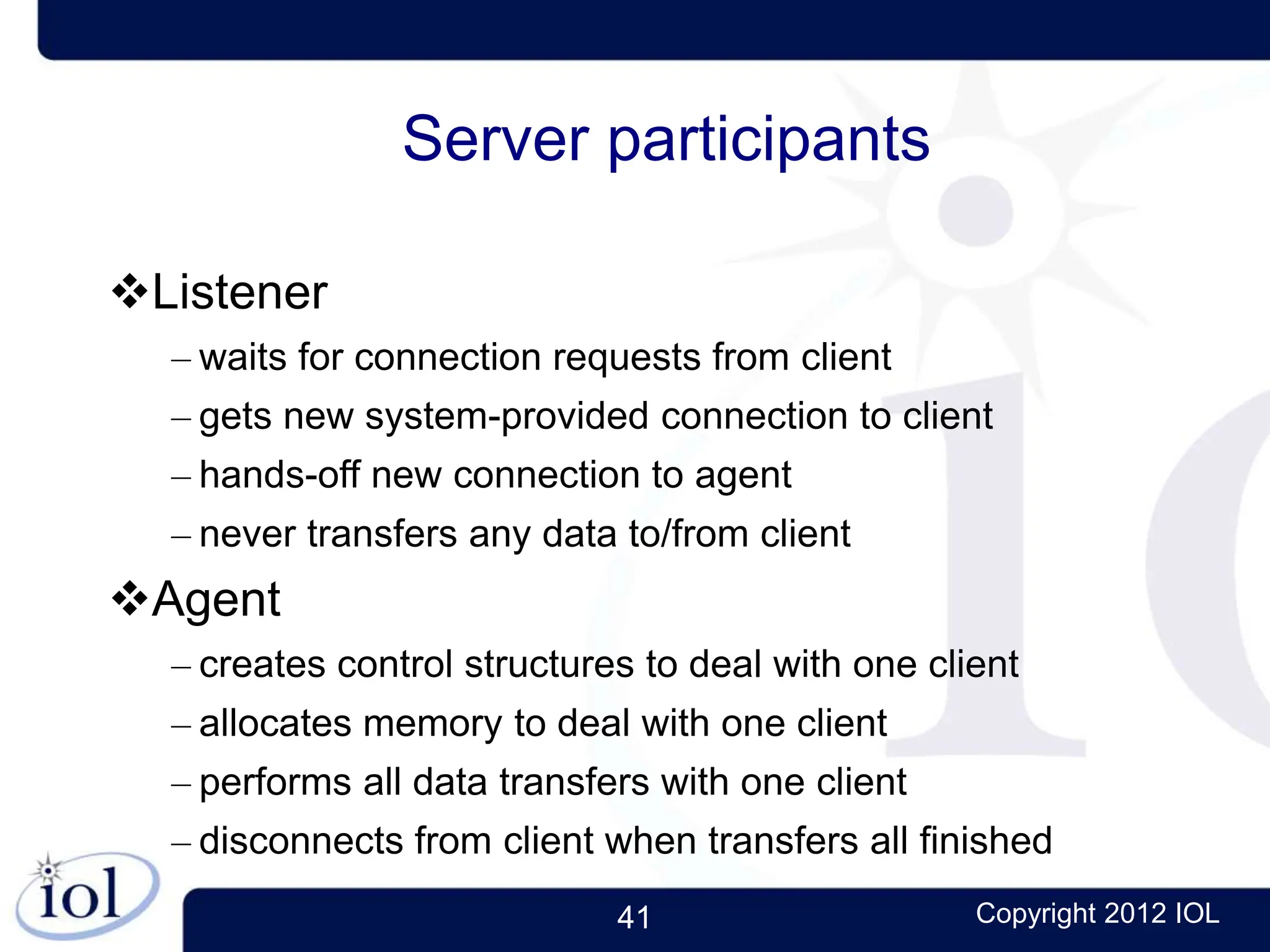

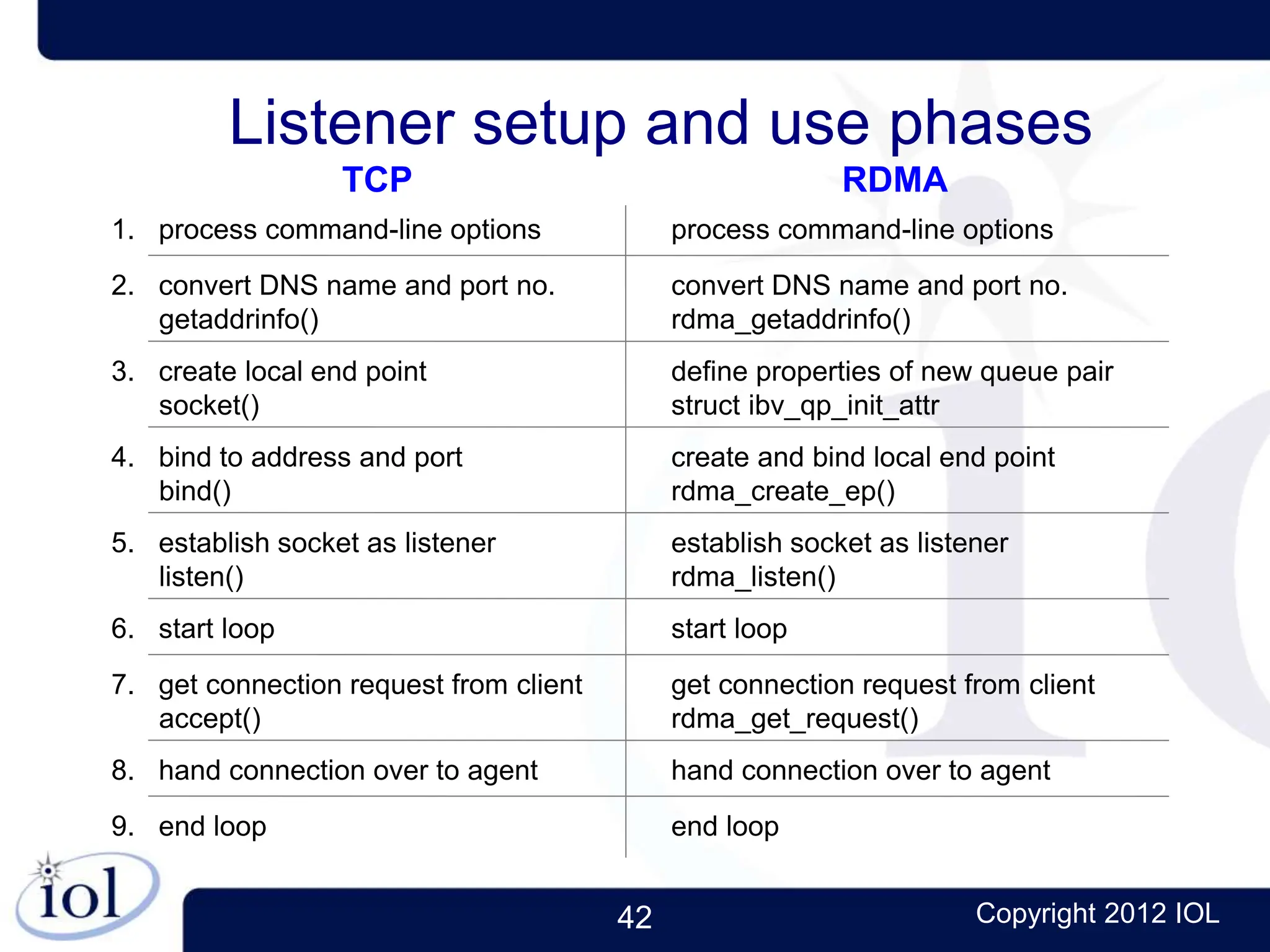

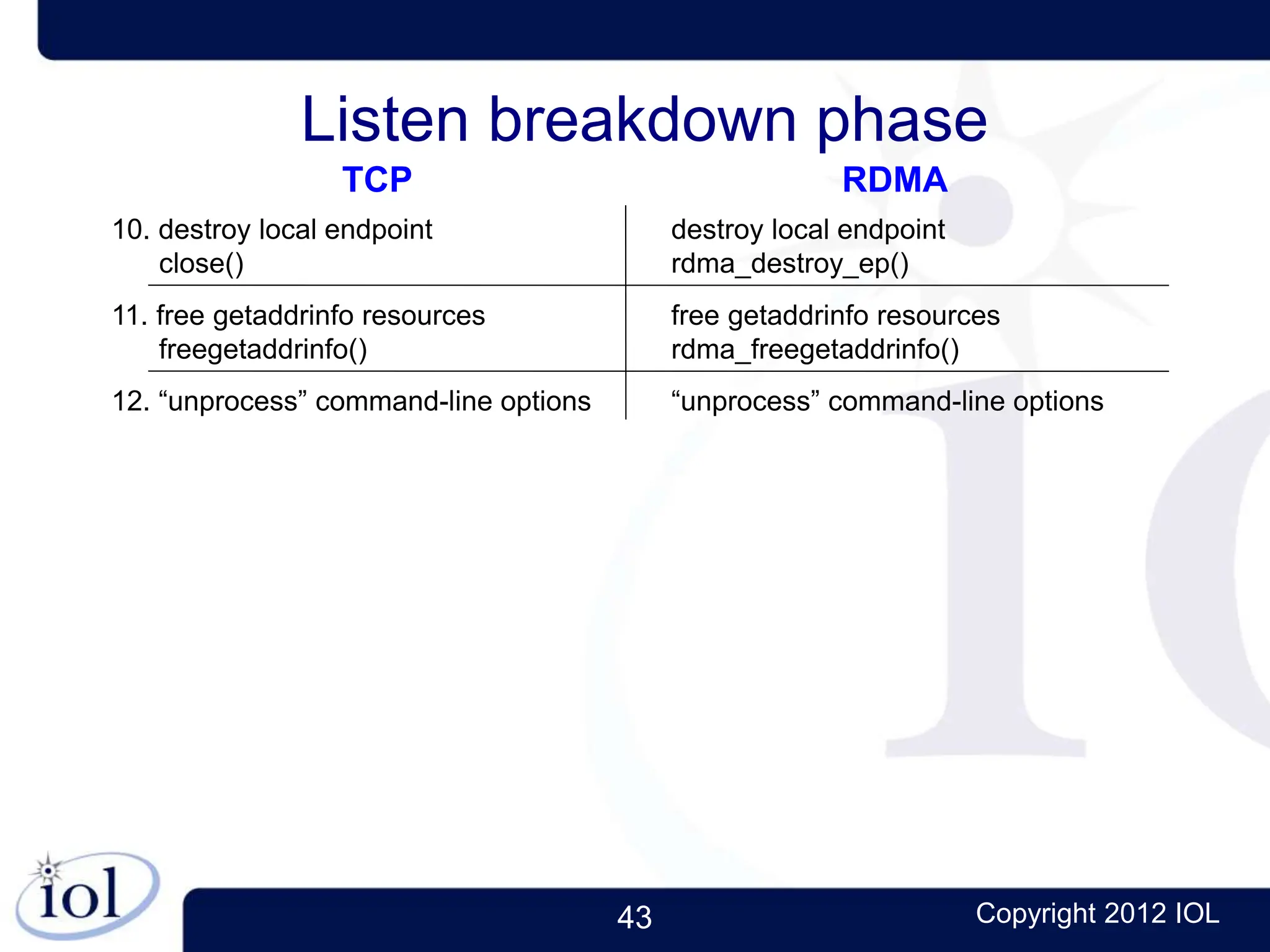

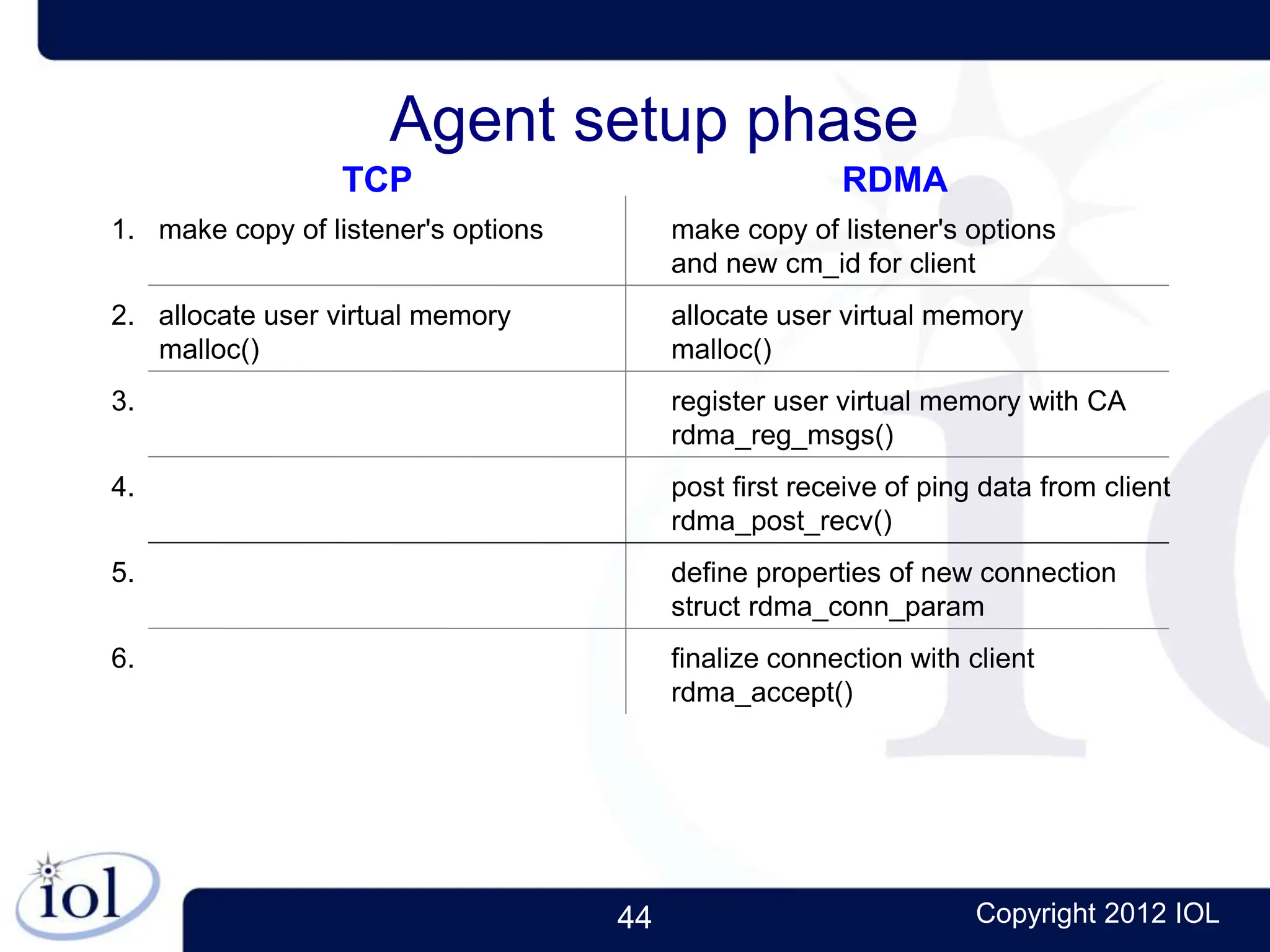

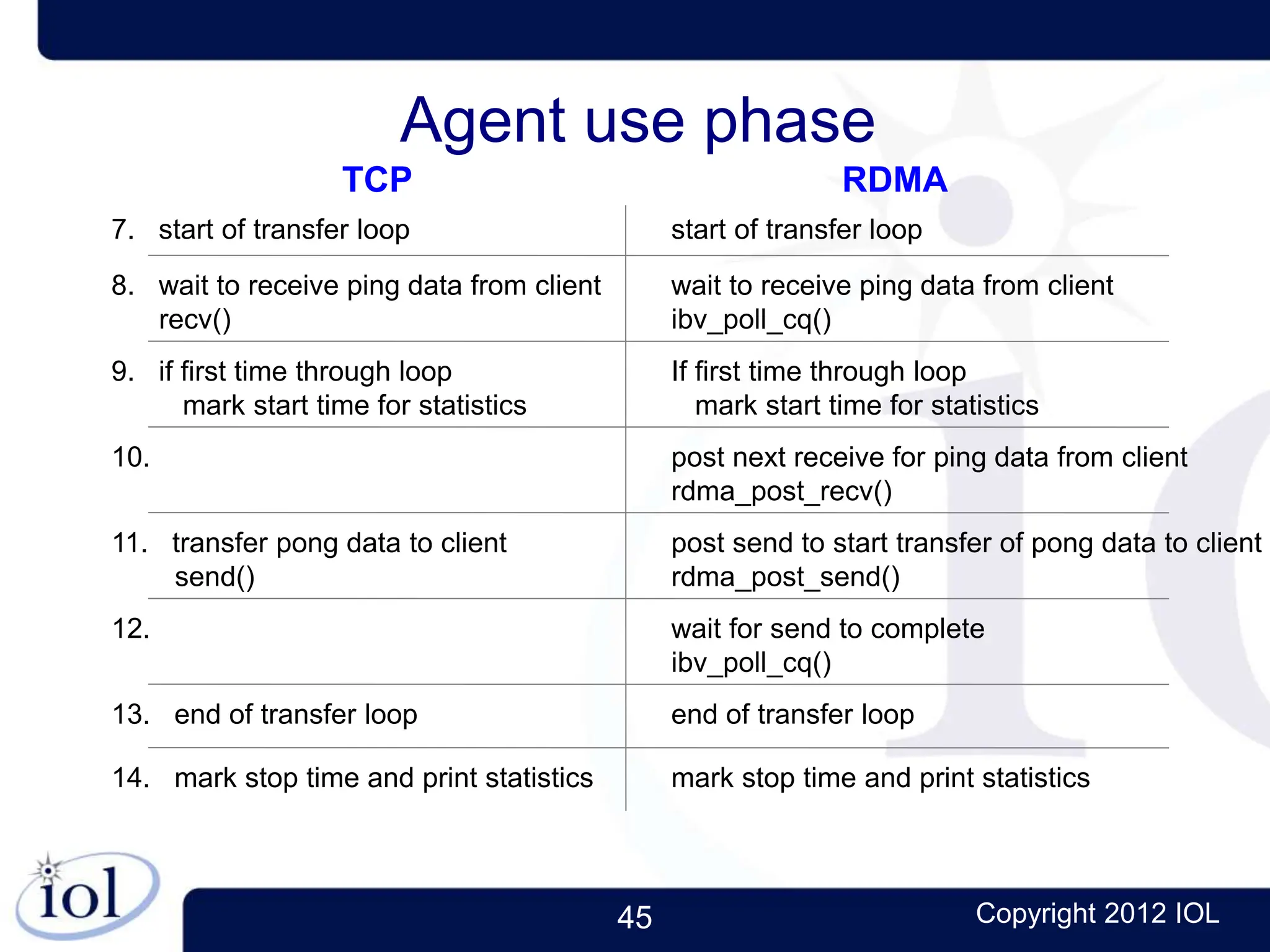

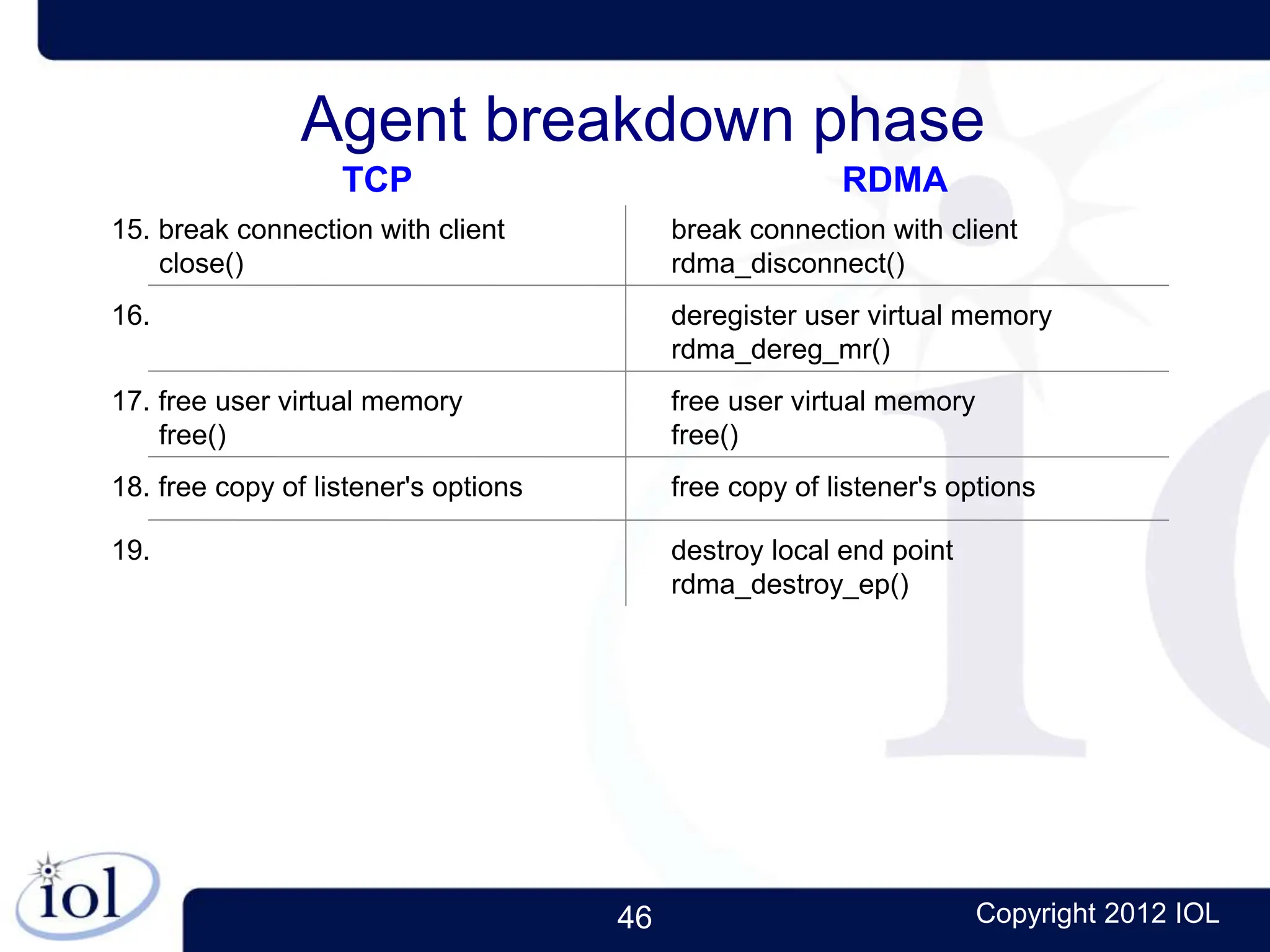

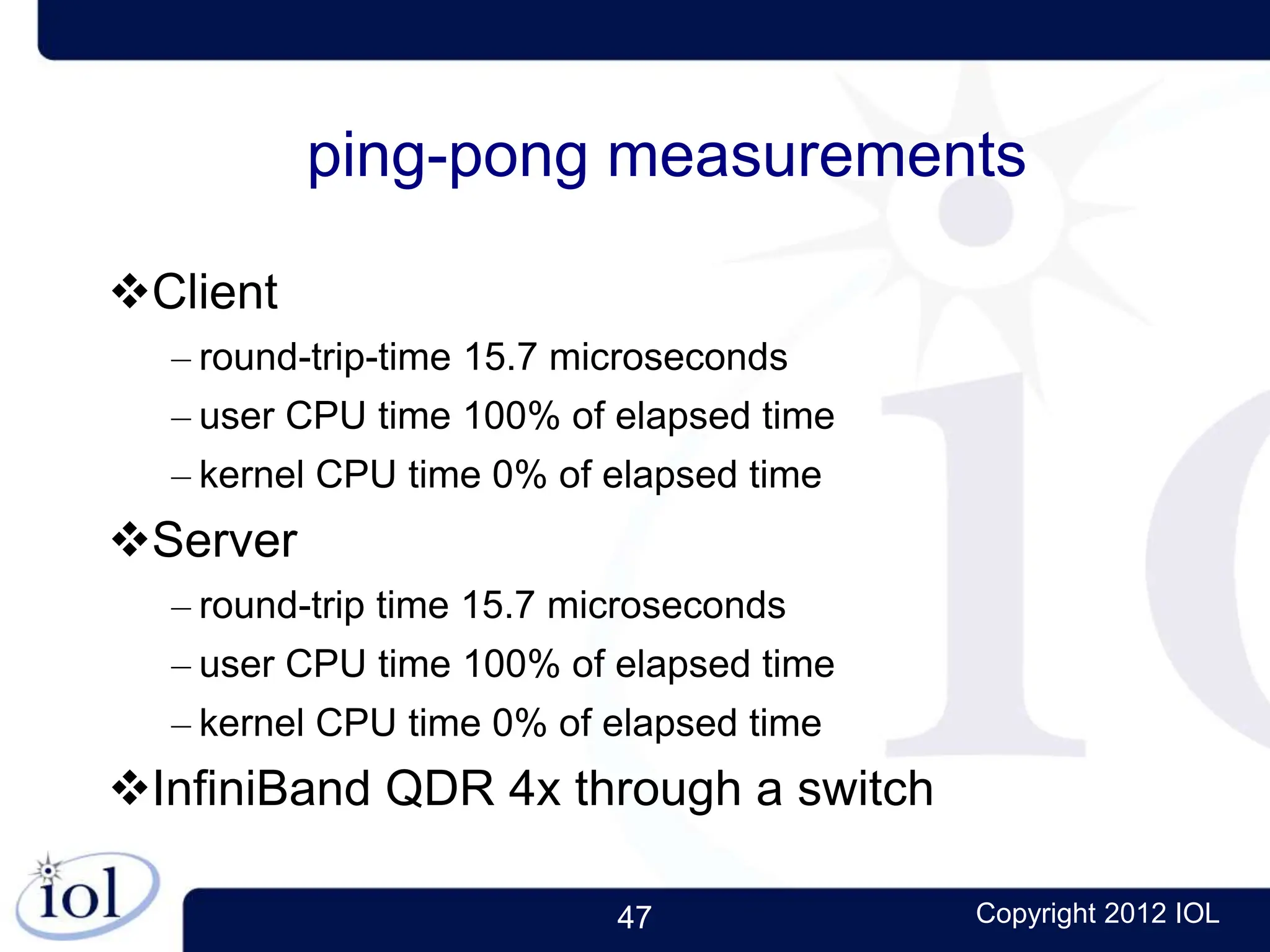

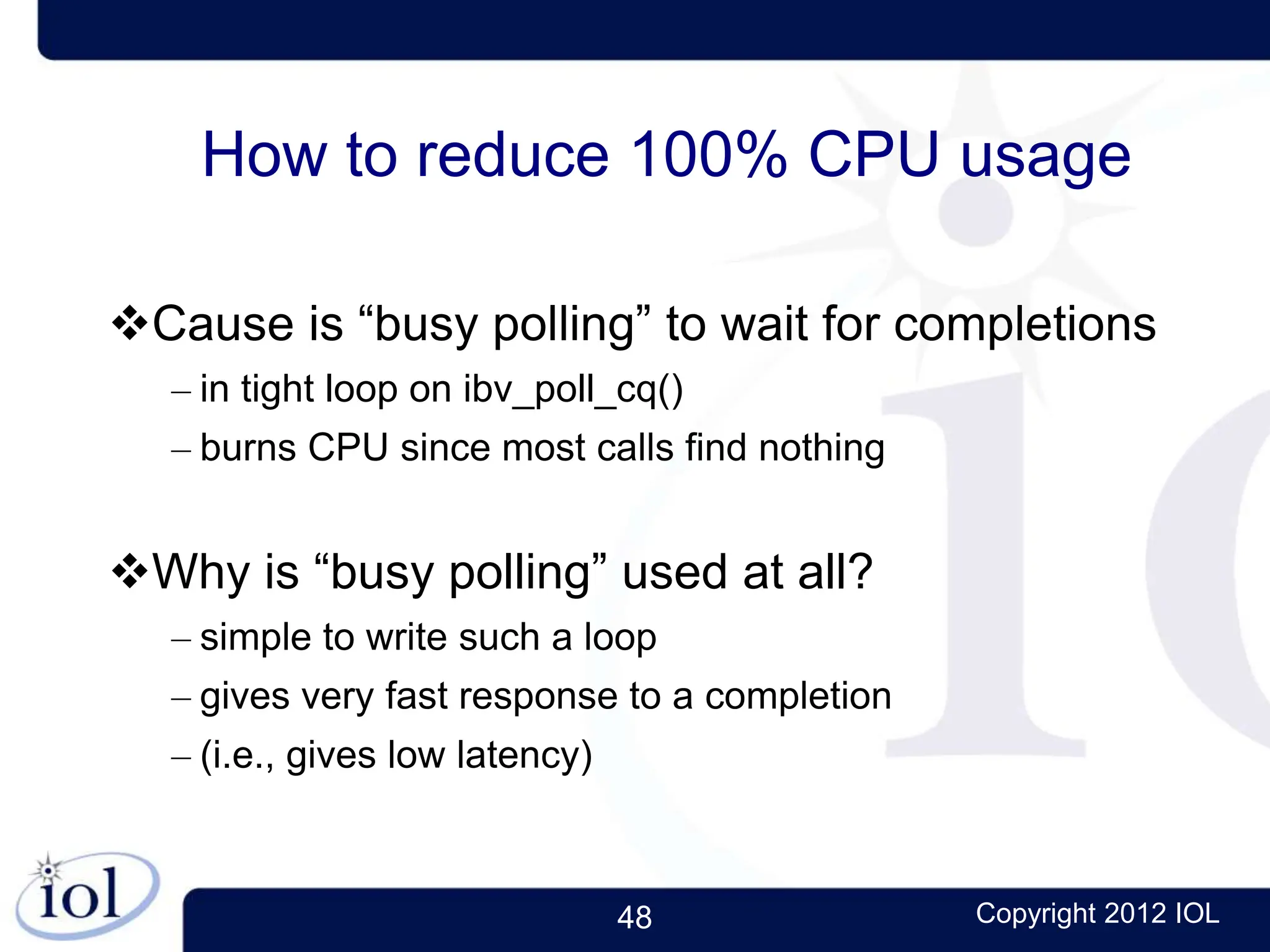

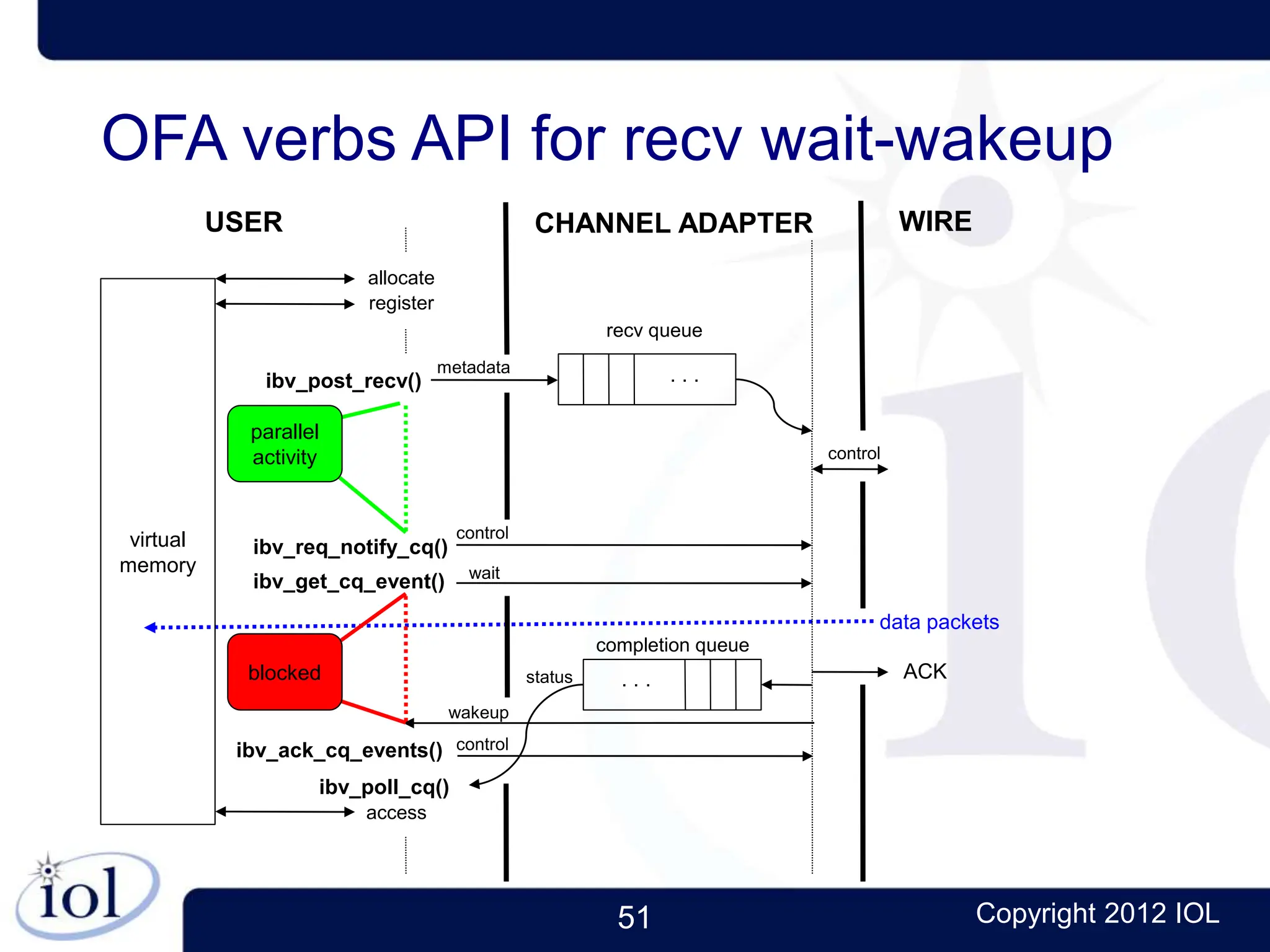

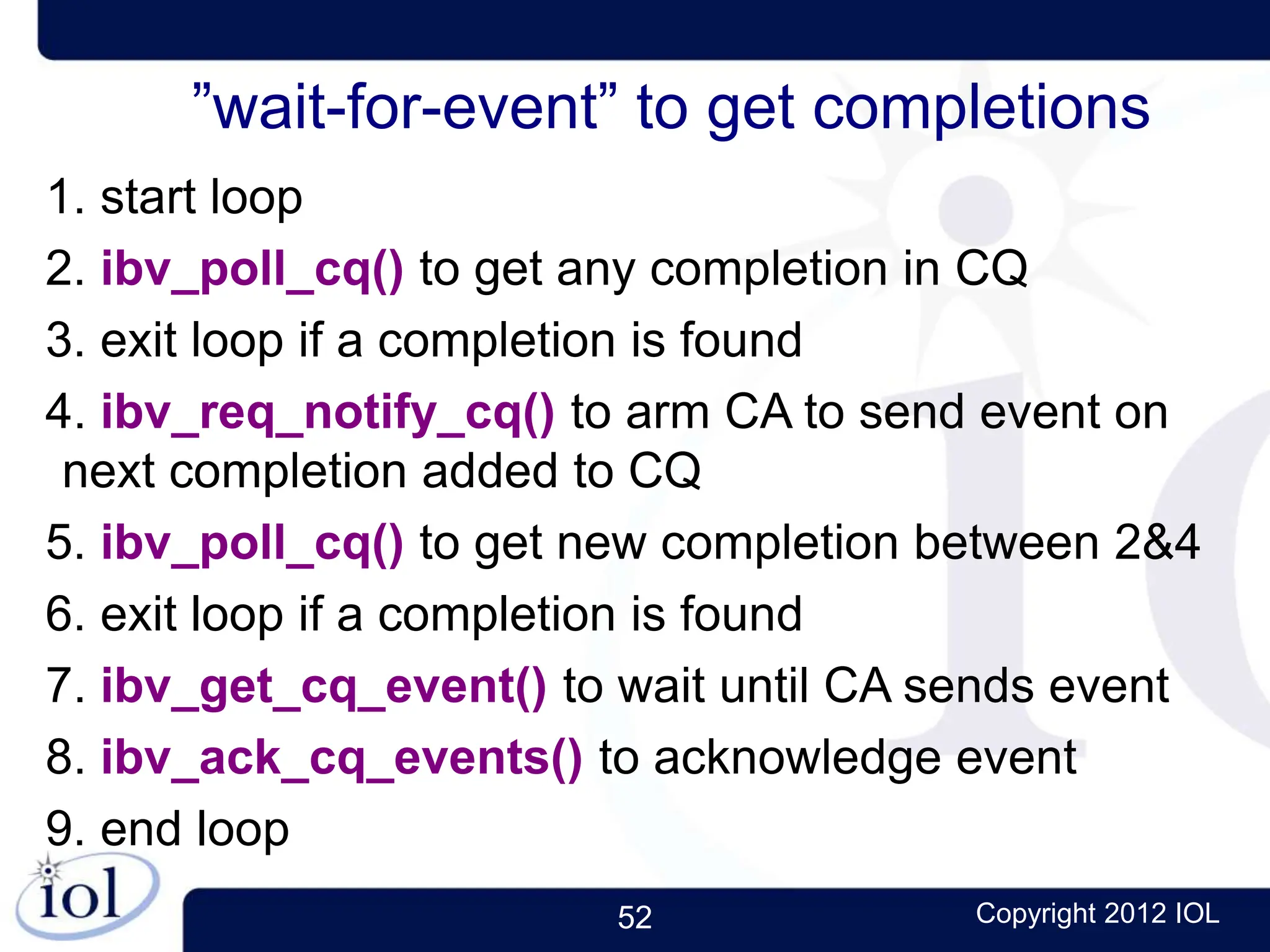

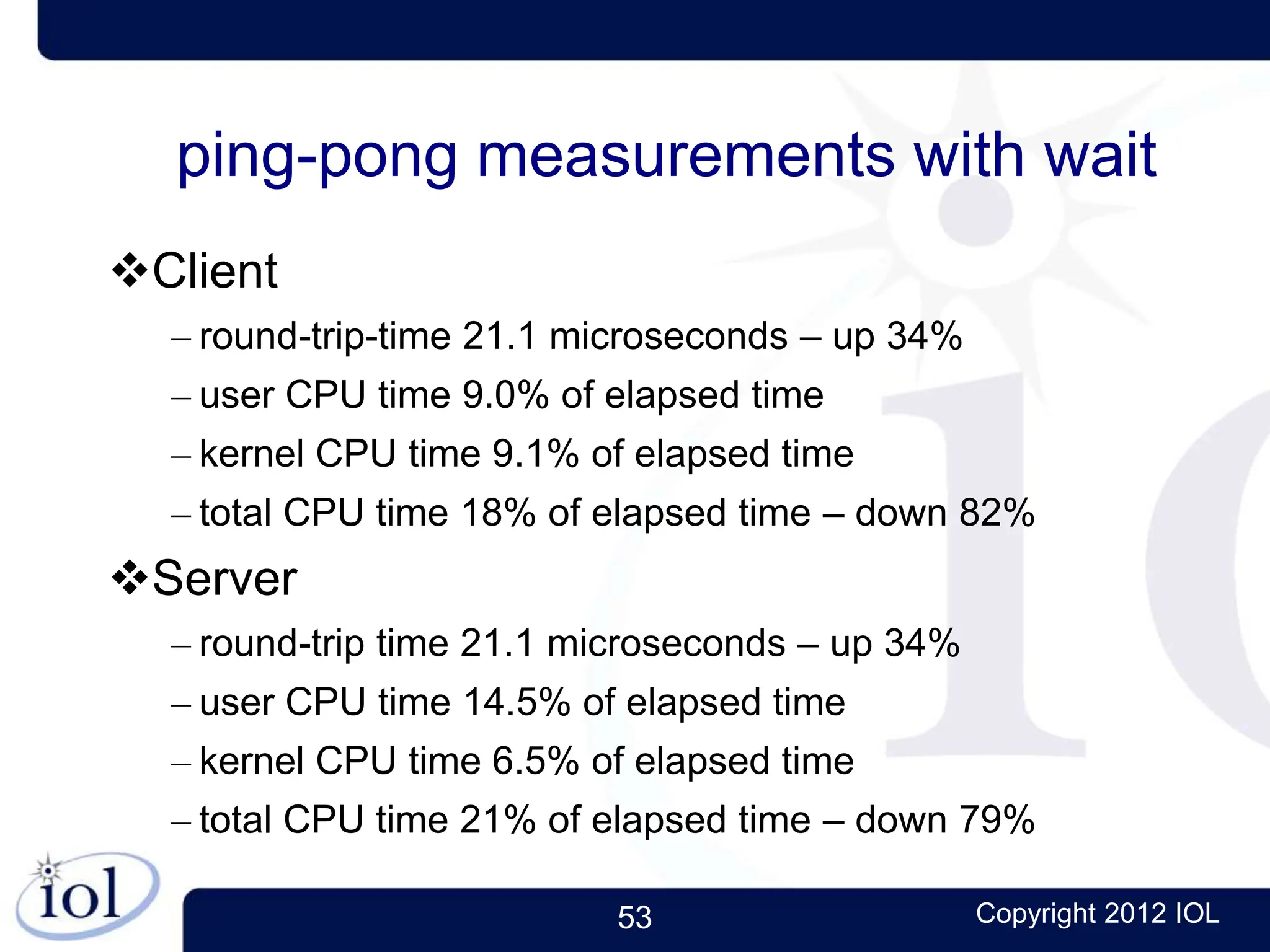

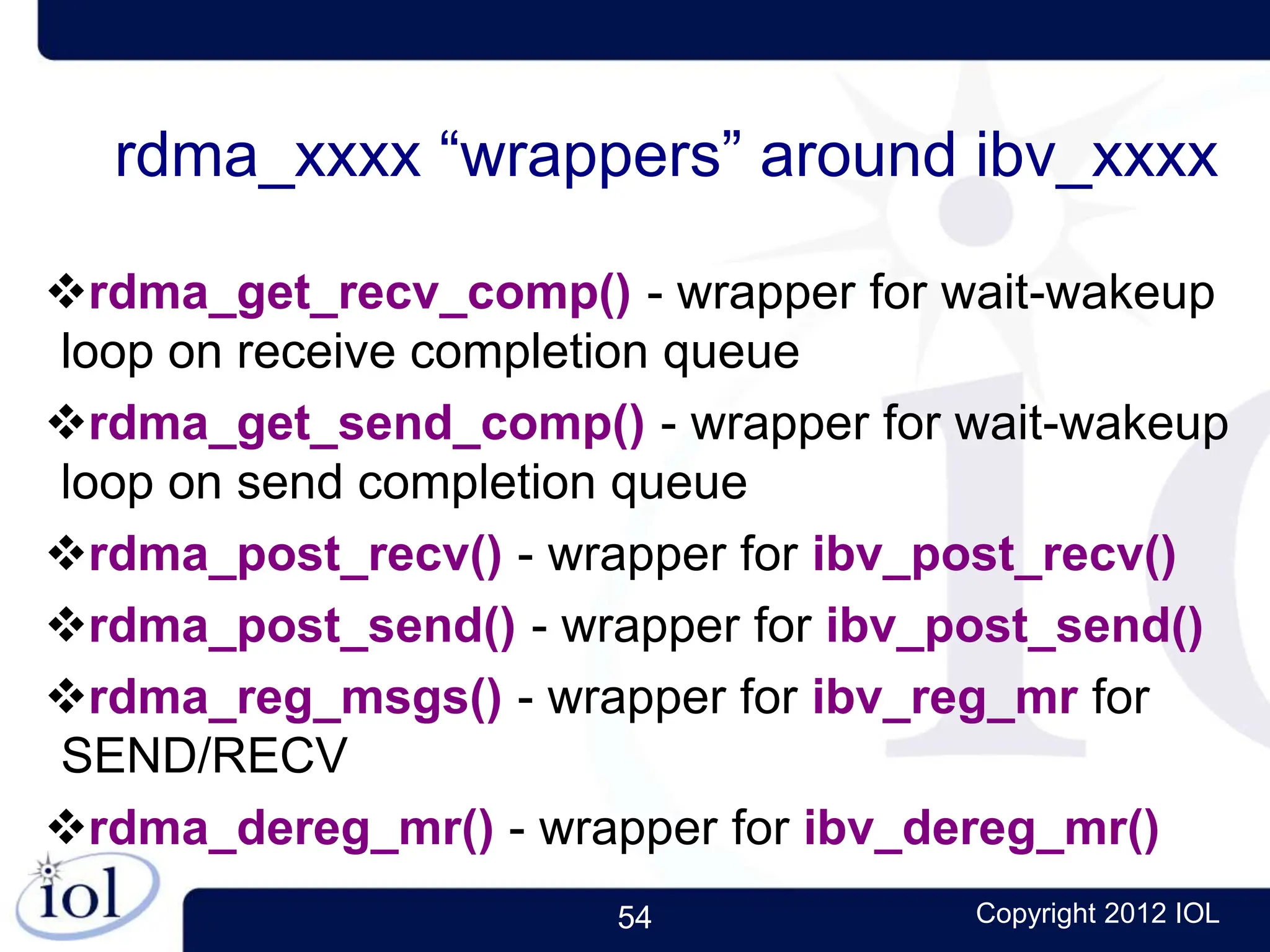

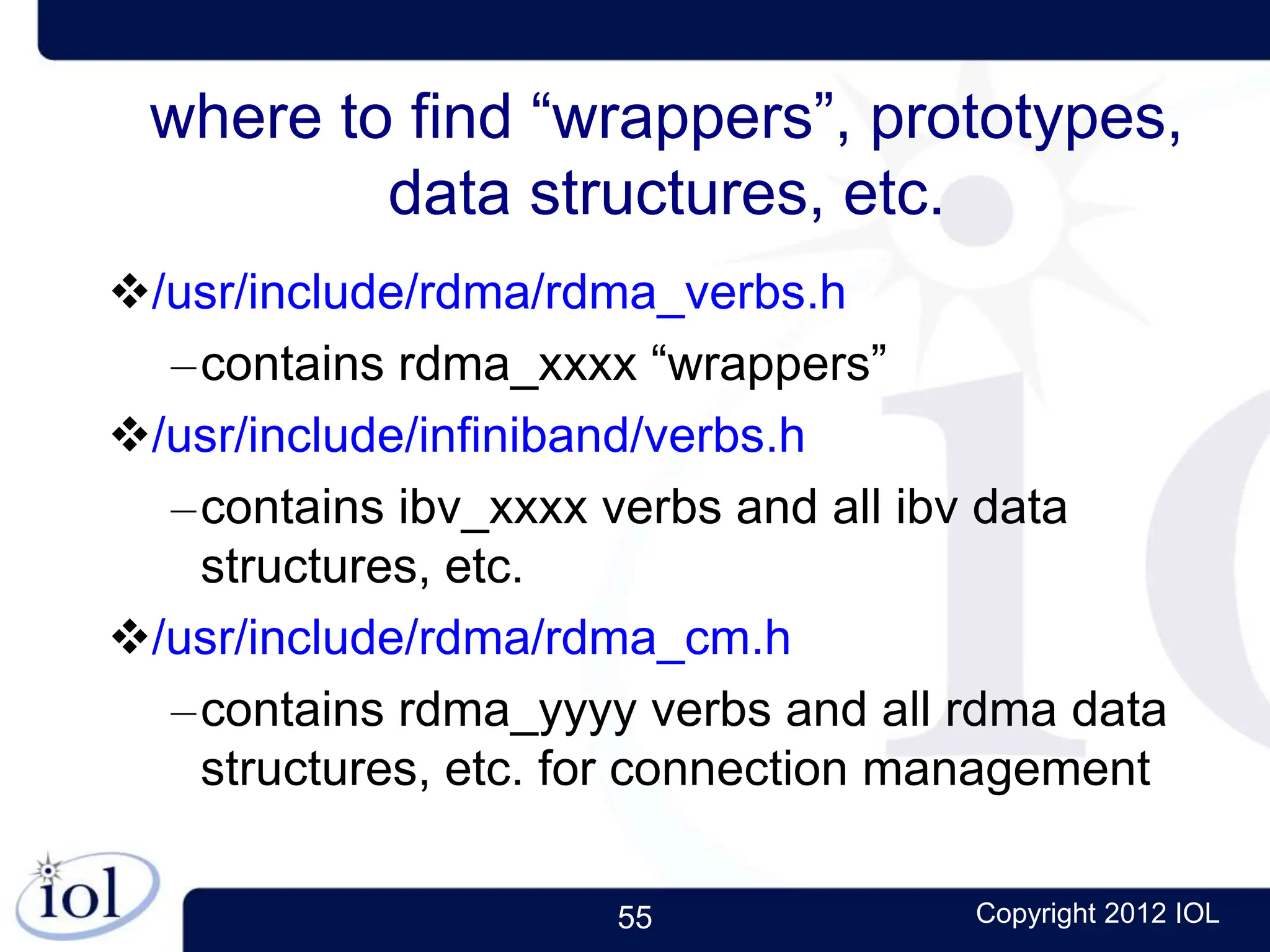

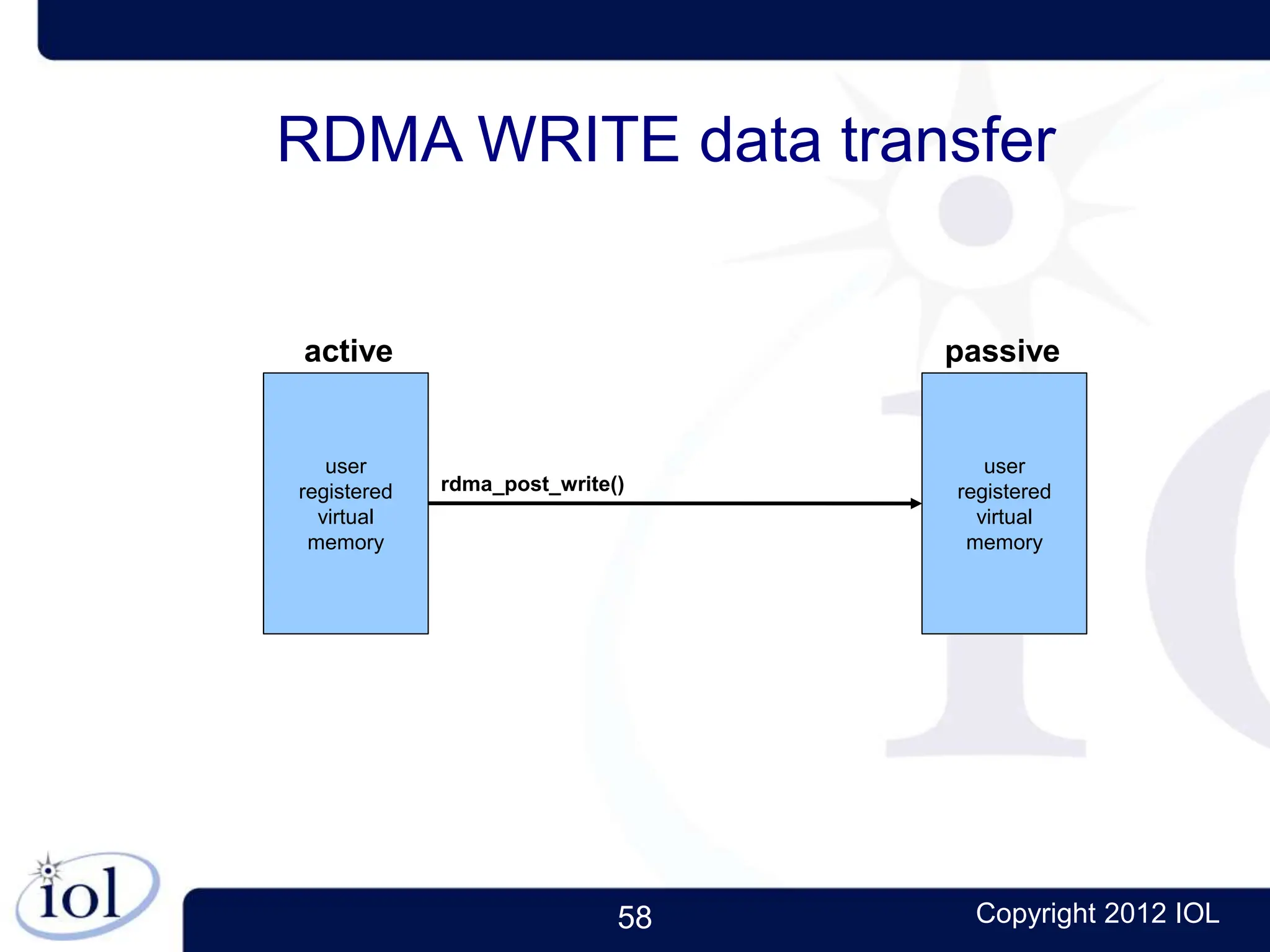

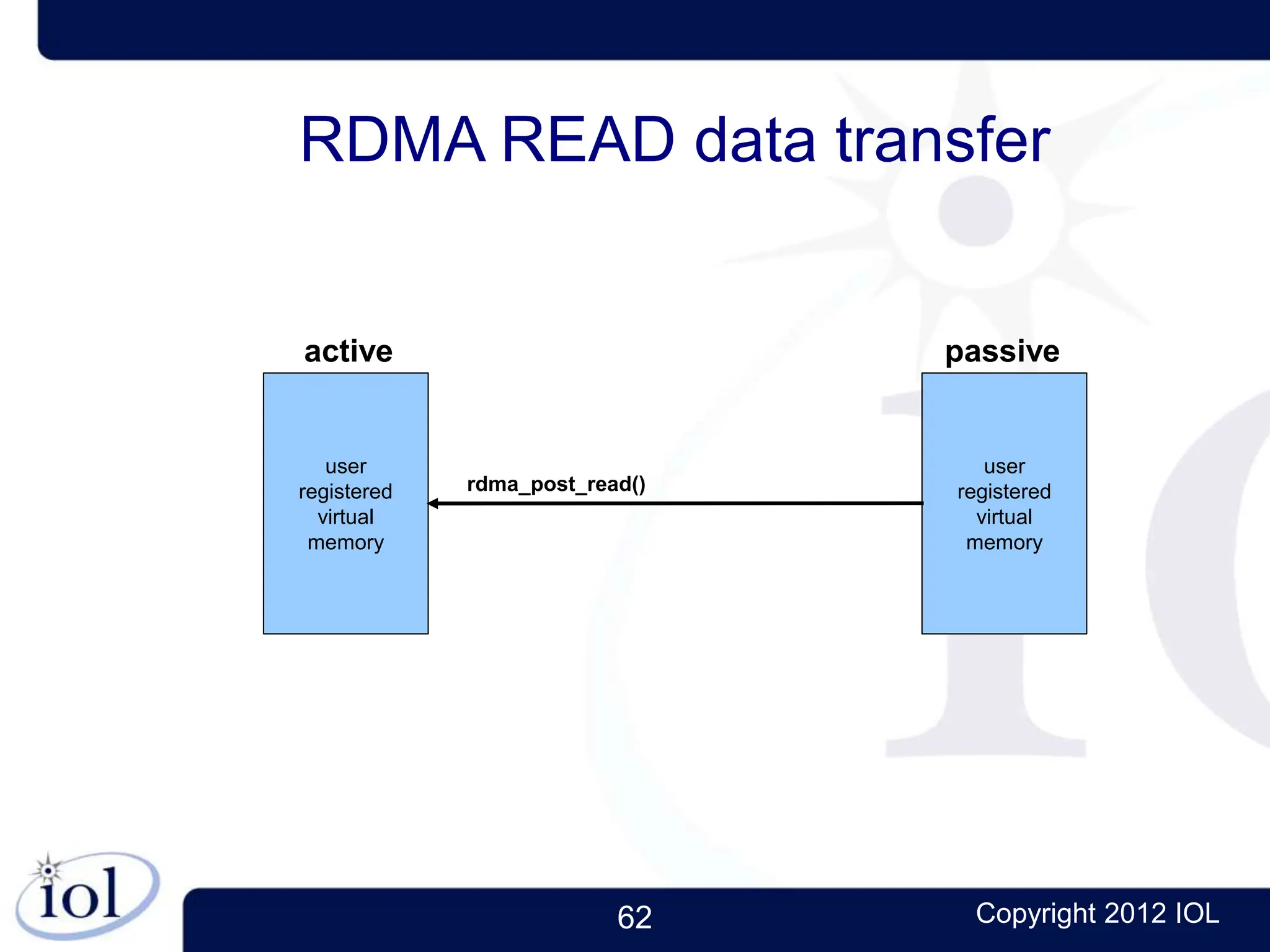

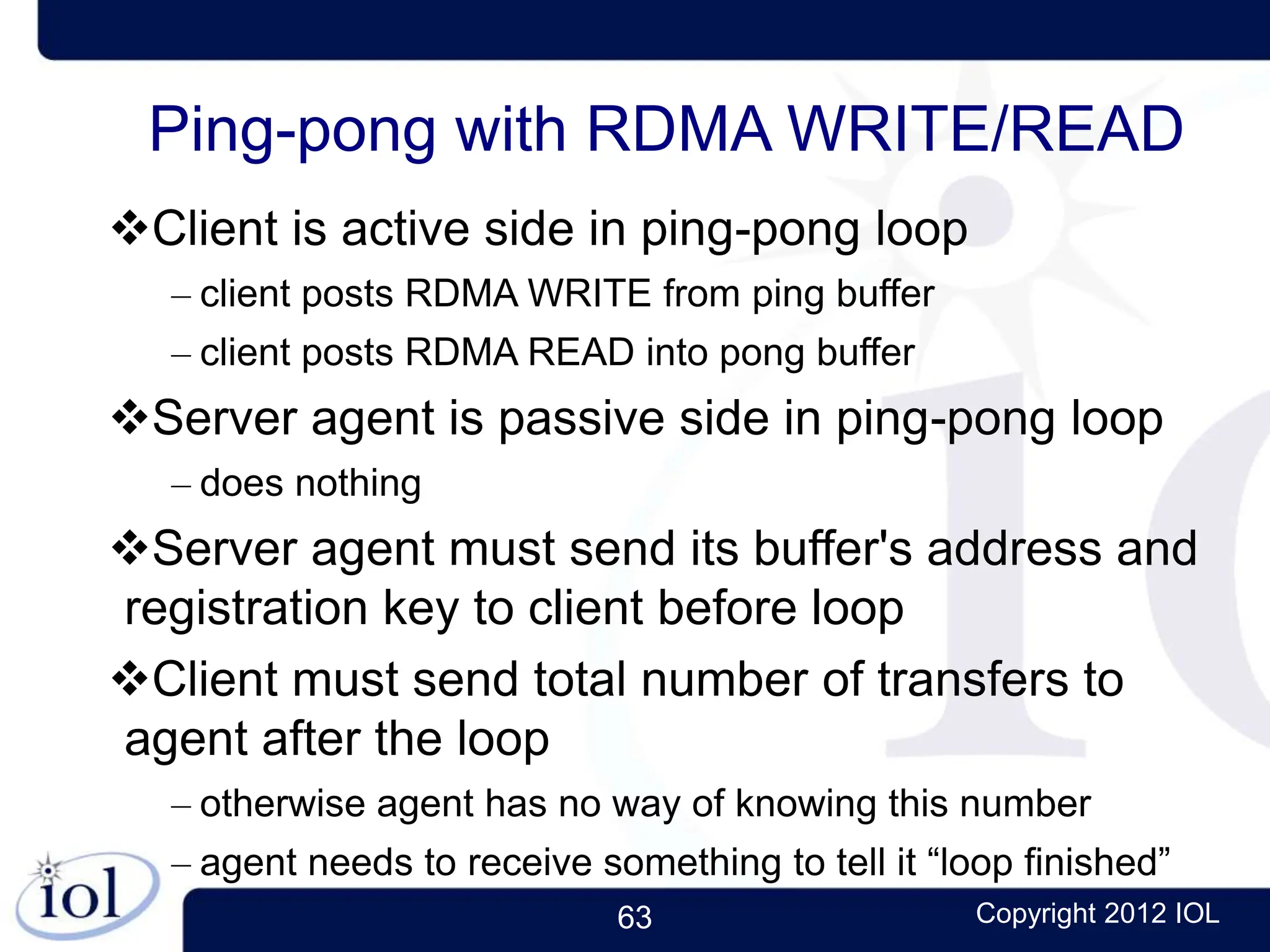

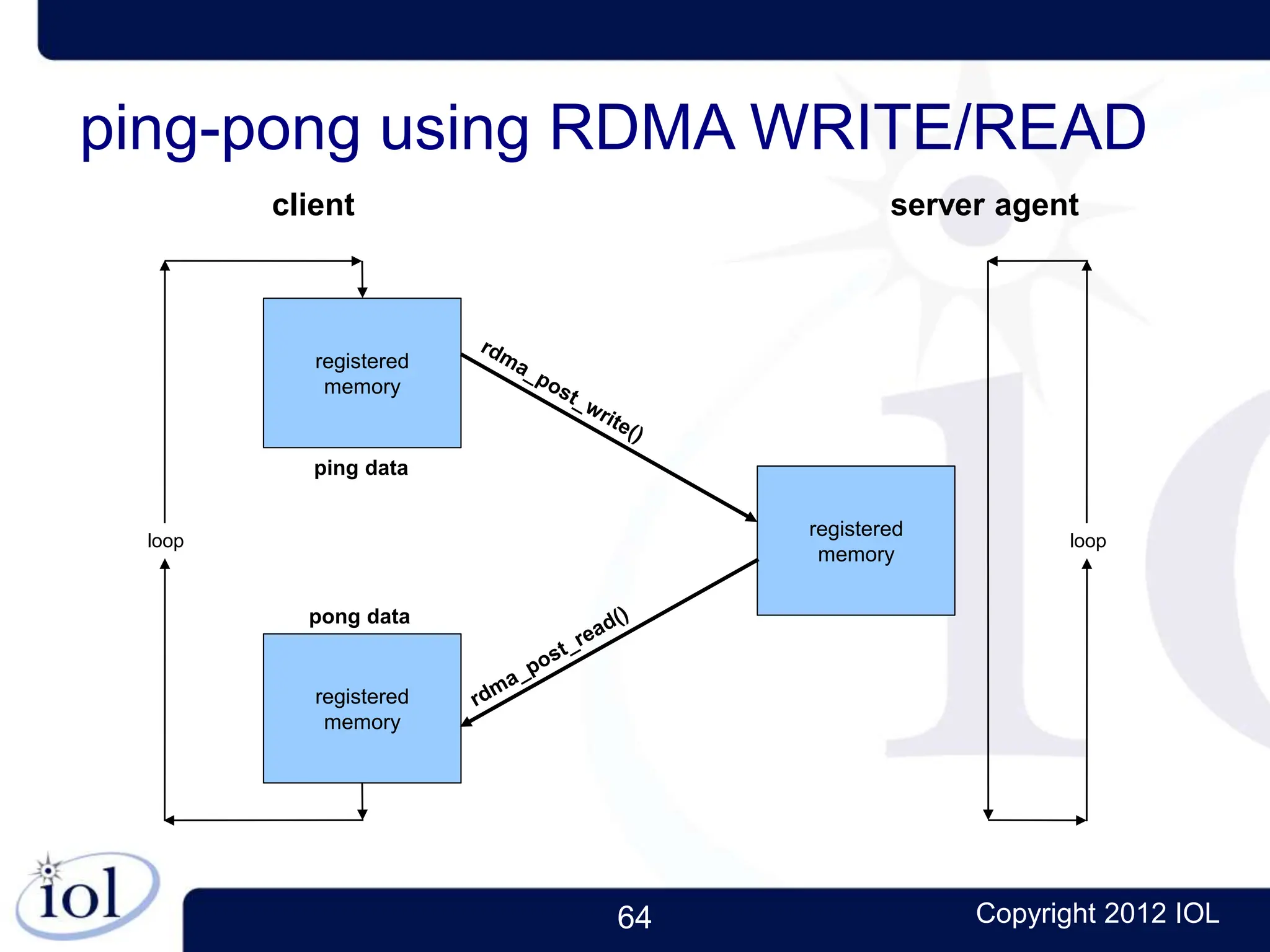

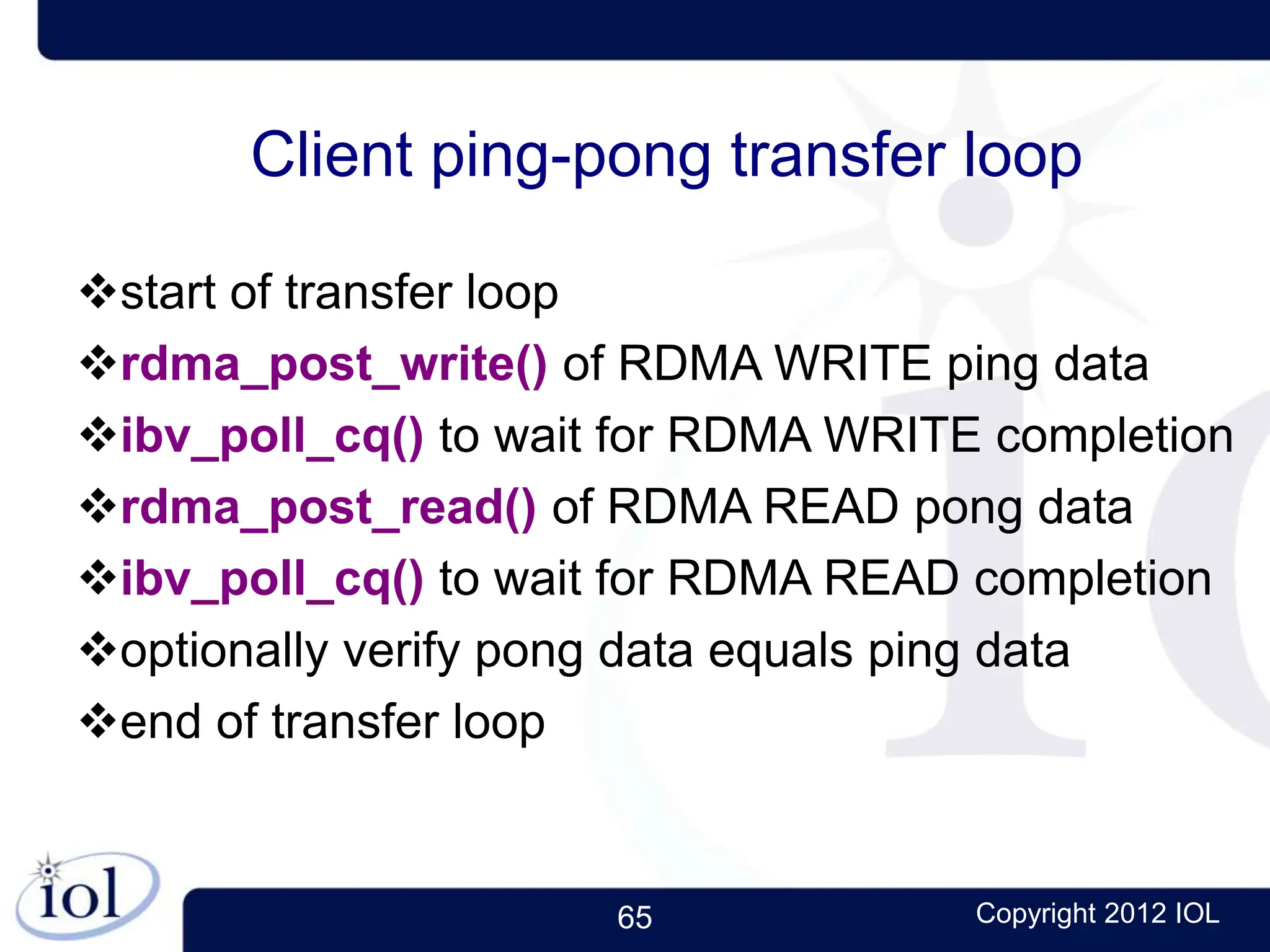

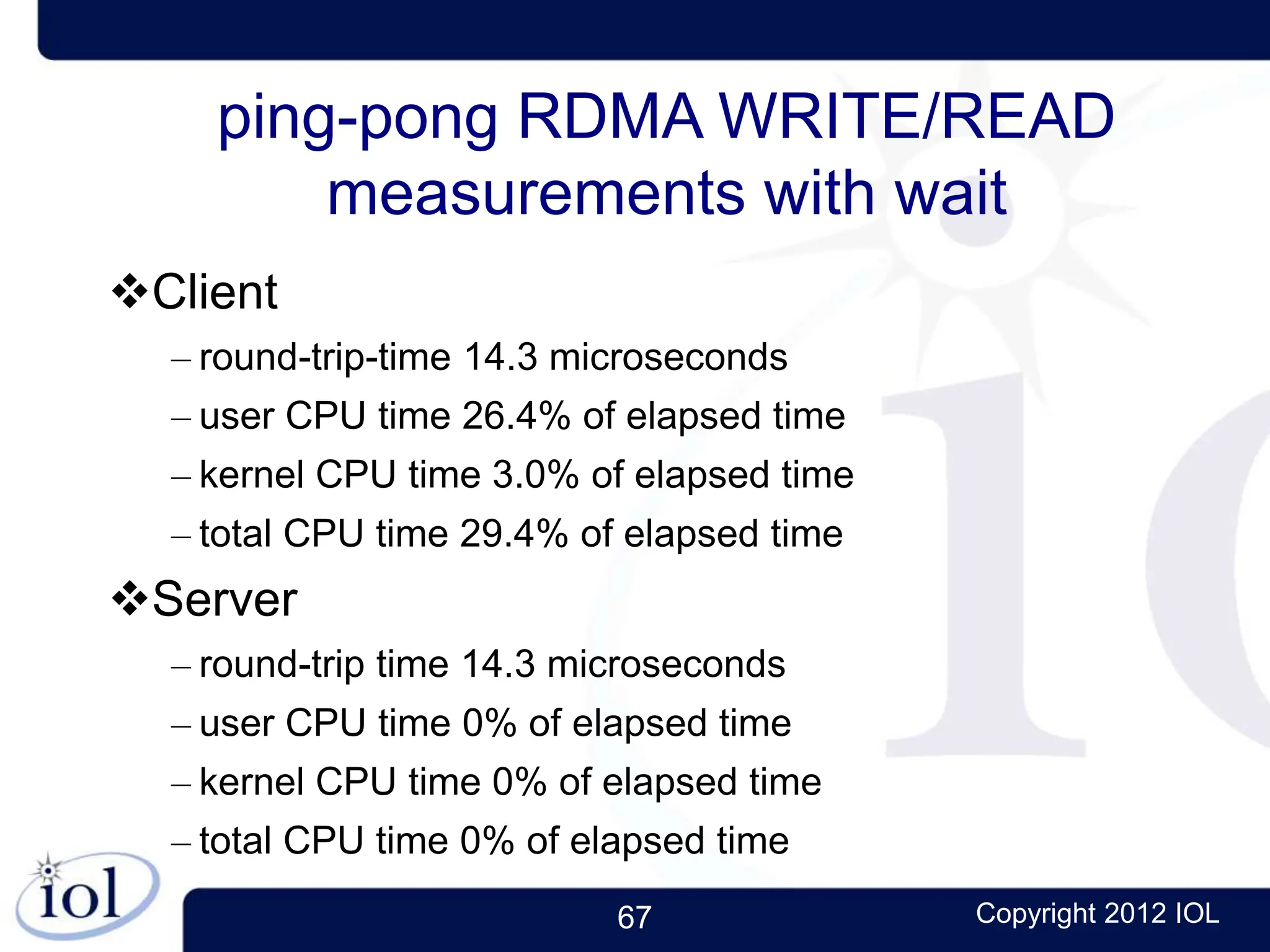

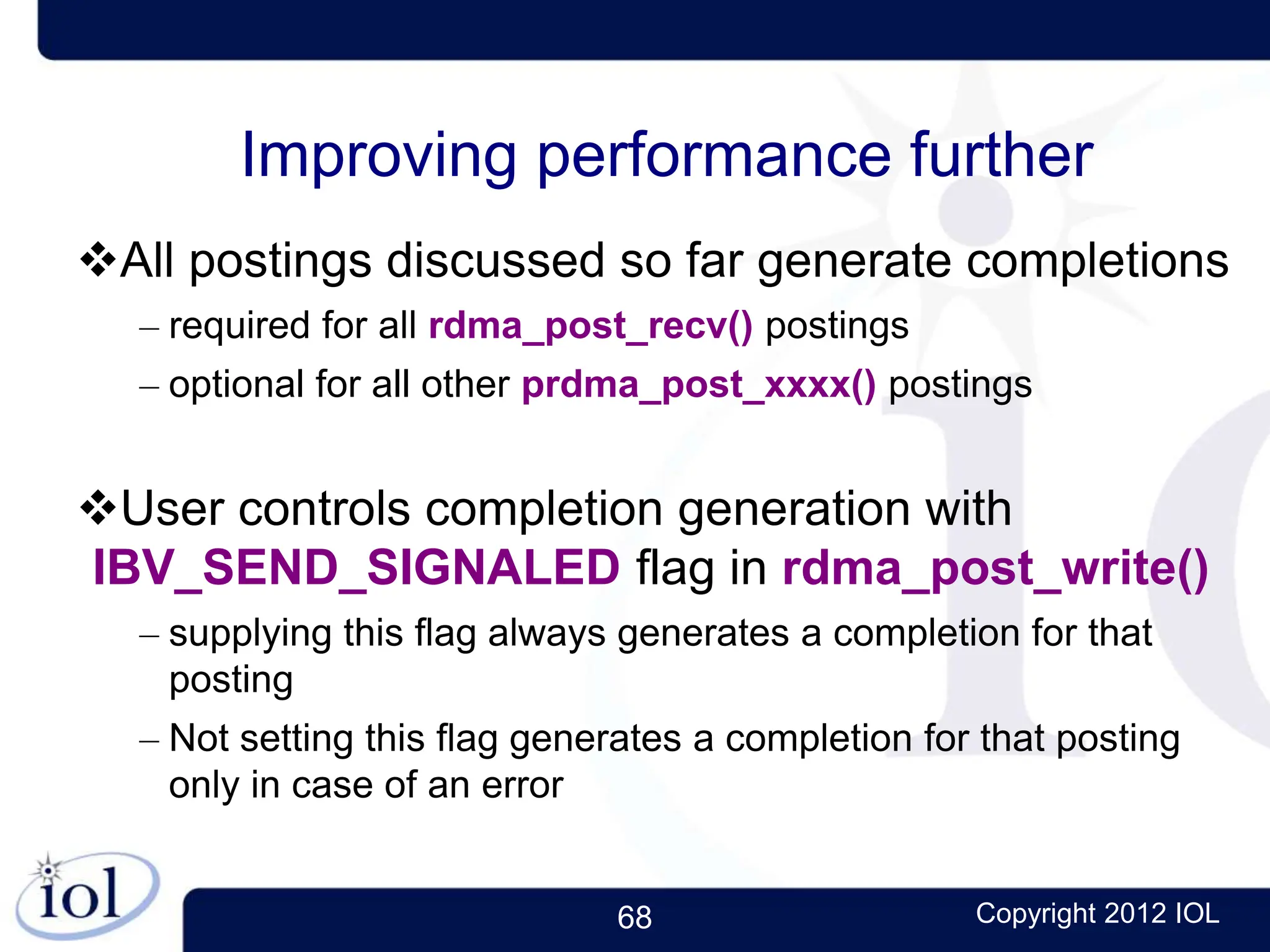

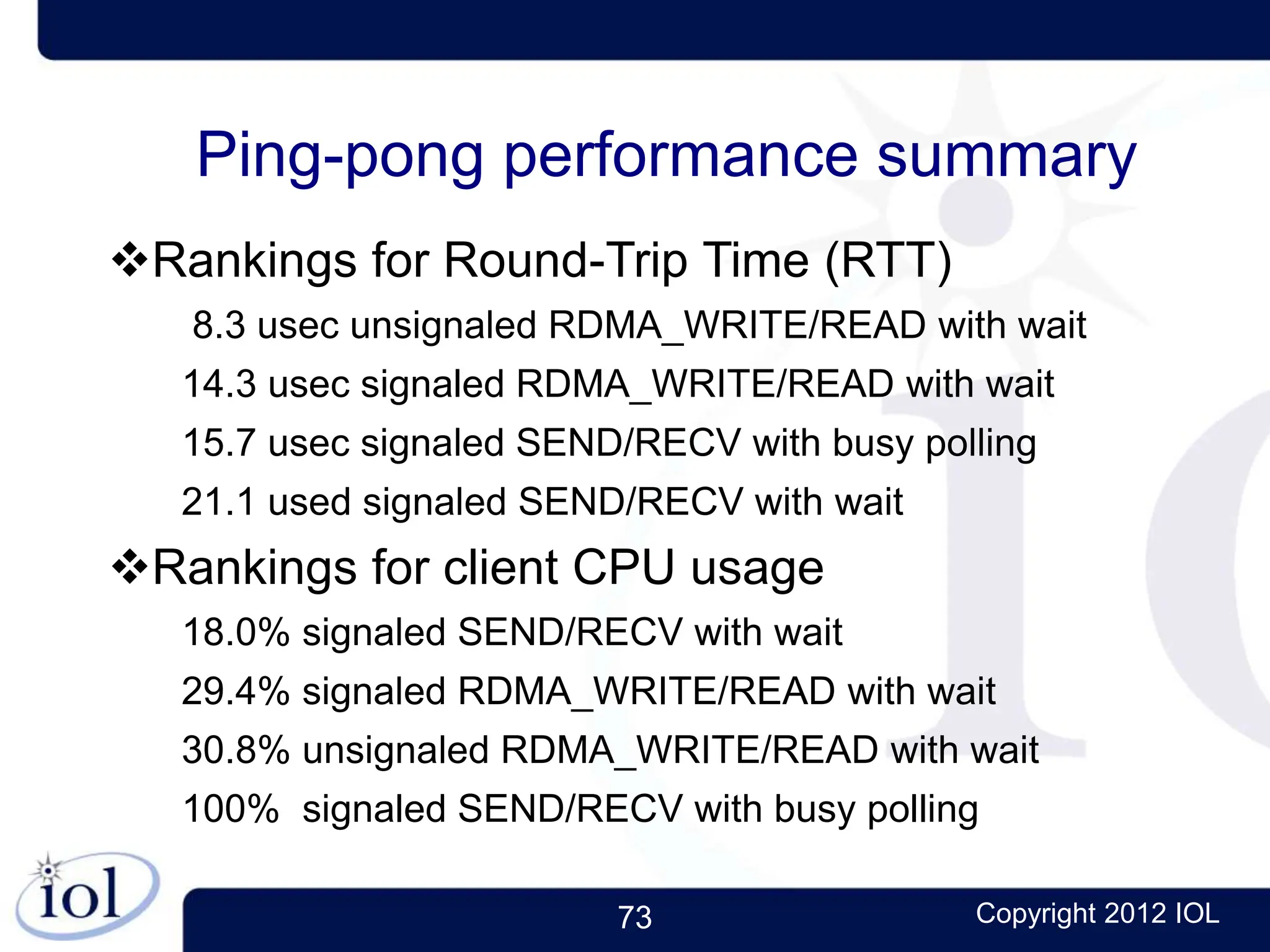

This document introduces Remote Direct Memory Access (RDMA) programming. It describes what RDMA is, its benefits over traditional TCP/IP networking, common RDMA technologies such as InfiniBand and RoCE, and the software drivers and libraries involved. It also explains how data is transferred asynchronously, without operating system involvement in the data path, using channels, queues, and memory registration, and it compares the client/server models and data transfer processes of RDMA and TCP/IP.
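The asynchronous flow summarized above (register memory, post a work request to a queue, then poll for a completion) can be illustrated with a small toy model. This is only a sketch in plain Python, not the real verbs API: `ToyNic`, `register_memory`, `post_write`, `process`, and `poll_completions` are hypothetical names invented for illustration, and the `process` call stands in for work that real RDMA hardware performs on its own.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class ToyNic:
    """Toy stand-in for an RDMA adapter (hypothetical, not a real library)."""
    regions: dict = field(default_factory=dict)          # key -> registered buffer
    send_queue: deque = field(default_factory=deque)     # pending work requests
    completion_queue: deque = field(default_factory=deque)
    next_key: int = 1

    def register_memory(self, buf: bytearray) -> int:
        """Register a buffer and return the key that later requests refer to."""
        key = self.next_key
        self.next_key += 1
        self.regions[key] = buf
        return key

    def post_write(self, wr_id, local_key, remote, remote_key, length):
        """Queue a write request and return immediately; no data moves yet."""
        self.send_queue.append((wr_id, local_key, remote, remote_key, length))

    def process(self):
        """Drain the send queue; in this model it plays the role of the hardware."""
        while self.send_queue:
            wr_id, local_key, remote, remote_key, length = self.send_queue.popleft()
            src = self.regions.get(local_key)
            dst = remote.regions.get(remote_key)
            if src is None or dst is None or length > min(len(src), len(dst)):
                self.completion_queue.append((wr_id, "error"))
                continue
            dst[:length] = src[:length]   # copy straight between registered buffers
            self.completion_queue.append((wr_id, "success"))

    def poll_completions(self):
        """Return and clear all completions reported so far."""
        done = list(self.completion_queue)
        self.completion_queue.clear()
        return done


client, server = ToyNic(), ToyNic()
src = bytearray(b"hello rdma")
dst = bytearray(len(src))
lkey = client.register_memory(src)
rkey = server.register_memory(dst)

client.post_write(1, lkey, server, rkey, len(src))
before = client.poll_completions()   # request is queued, nothing completed yet
client.process()
after = client.poll_completions()    # completion for work request 1
```

The point of the sketch is the ordering: posting a request does not block, and the application learns the outcome only later by polling the completion queue. In this toy model the server side runs no code during the transfer, which mirrors the one-sided style of operation that distinguishes RDMA from a TCP/IP send/receive exchange.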