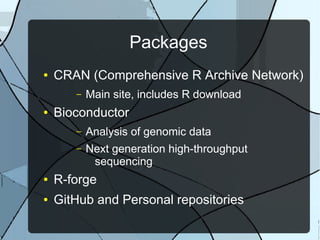

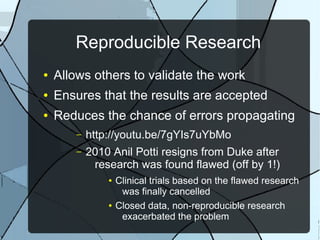

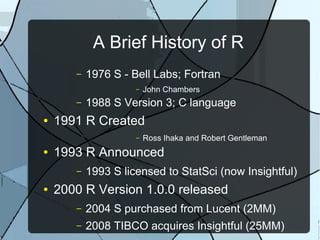

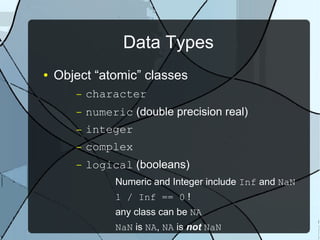

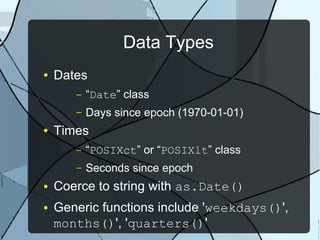

This document gives a history and overview of the R programming language: its origins, its development, and its use in statistical analysis and data visualization. It covers R's data types, control structures, and key packages, including tools for reproducible research and data exploration. It also points to resources for sharing research findings and for creating interactive visualizations with R.

![Operators](https://image.slidesharecdn.com/r-150517174529-lva1-app6892/85/R-the-language-12-320.jpg)

- Grouping: `()`
- Assignment: `to <- from` and `from -> to`
- Vectorized: `+ - ! * / ^ %% & |`
- `~ ? : %/% %*% %o% %x% %in% < > == >= <= && ||`
- Element access: `[[]] [] $`
- Function argument types: `symbol`, `symbol=default`, `...`
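
A few of the operators listed on this slide can be tried out in a short R session. The following is a minimal sketch, not taken from the slides: the variable names (`x`, `y`, `person`) and the helper `scale_sum` are hypothetical and chosen only for illustration.

```r
# Assignment works in both directions.
x <- c(1, 2, 3)
c(10, 20, 30) -> y

# Vectorized operators act element by element.
total <- x + y                 # 11 22 33
rem   <- y %% 4                # 2 0 2 (remainder)
both  <- (x > 1) & (y < 30)    # FALSE TRUE FALSE

# Integer division, matrix product, outer product, membership.
quot   <- 7 %/% 2              # 3
inner  <- x %*% y              # 1x1 matrix holding 140
outer_ <- x %o% y              # 3x3 matrix, outer_[2, 3] is 60
found  <- 2 %in% x             # TRUE

# && and || combine single logical values (not element-wise).
ok <- (length(x) == 3) && found

# Element access: $ and [[ ]] return the element, [ ] returns a sub-list.
person <- list(name = "Ada", langs = c("R", "S"))
first_name  <- person$name             # "Ada"
second_lang <- person[["langs"]][2]    # "S"
name_only   <- person["name"]          # a list of length 1

# Function arguments: symbol, symbol = default, and ... (passed through).
# scale_sum is a hypothetical helper used only to show the three forms.
scale_sum <- function(v, factor = 2, ...) sum(v * factor, ...)
doubled  <- scale_sum(x)                                   # 12
skipping <- scale_sum(c(x, NA), factor = 1, na.rm = TRUE)  # 6
```

The `...` argument forwards `na.rm = TRUE` to `sum()` without `scale_sum` having to declare it, which is the usual reason to include `...` in a signature.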