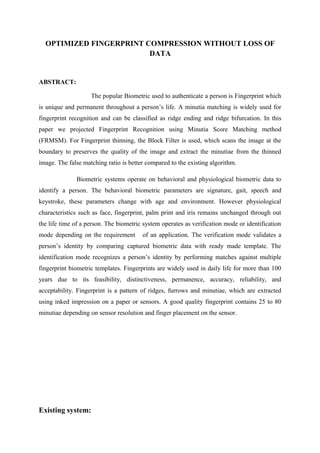

This document summarizes research on optimized lossless fingerprint compression. It explains how fingerprint recognition works by extracting minutiae features from fingerprint images. It then describes the proposed method, fingerprint recognition using minutiae score matching (FRMSM), which thins fingerprints with block filtering so that image quality is preserved while minutiae are extracted. Experimental results showed a better false matching ratio than that of existing algorithms. The document also provides background on biometric systems and fingerprint recognition, and reviews related work on fingerprint enhancement, orientation field estimation, and minutiae extraction. The proposed system uses a line extraction and graph matching approach to make fingerprint matching more robust. Modules for the system include authentication, image capturing, fingerprint matching, binarization, and
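To make the minutiae extraction step concrete, here is a minimal sketch of how ridge endings and bifurcations might be located on a binarized, thinned fingerprint. It assumes the crossing-number technique, a common textbook approach that the summary does not name, so it should not be read as the FRMSM method or its block-filter thinning. The names `crossing_number`, `extract_minutiae`, and `Y_SKELETON` are hypothetical, and the tiny hand-made skeleton stands in for a real thinned image.

```python
# Assumption: the input is a binary skeleton (1 = ridge pixel, 0 = background)
# that is already one pixel wide, i.e. binarization and thinning are done.

# Offsets of the 8 neighbours in circular order, starting at the right-hand pixel.
NEIGHBOURS = [(0, 1), (1, 1), (1, 0), (1, -1), (0, -1), (-1, -1), (-1, 0), (-1, 1)]


def crossing_number(skeleton, r, c):
    """Half the number of 0/1 transitions met when walking around pixel (r, c)."""
    values = [skeleton[r + dr][c + dc] for dr, dc in NEIGHBOURS]
    transitions = sum(abs(values[i] - values[(i + 1) % 8]) for i in range(8))
    return transitions // 2


def extract_minutiae(skeleton):
    """Return (ridge_endings, bifurcations) as lists of (row, col) positions."""
    endings, bifurcations = [], []
    rows, cols = len(skeleton), len(skeleton[0])
    # Border pixels are skipped so every visited pixel has all 8 neighbours.
    for r in range(1, rows - 1):
        for c in range(1, cols - 1):
            if skeleton[r][c] != 1:
                continue
            cn = crossing_number(skeleton, r, c)
            if cn == 1:      # one ridge leaves the pixel: ridge ending
                endings.append((r, c))
            elif cn == 3:    # three ridges meet at the pixel: bifurcation
                bifurcations.append((r, c))
    return endings, bifurcations


# Hypothetical Y-shaped skeleton: one stem that splits into two branches.
Y_SKELETON = [
    [0, 0, 0, 0, 0, 0, 0],
    [0, 1, 0, 0, 0, 1, 0],
    [0, 0, 1, 0, 1, 0, 0],
    [0, 0, 0, 1, 0, 0, 0],
    [0, 0, 0, 1, 0, 0, 0],
    [0, 0, 0, 1, 0, 0, 0],
    [0, 0, 0, 0, 0, 0, 0],
]

endings, bifurcations = extract_minutiae(Y_SKELETON)
print("ridge endings:", endings)
print("bifurcations:", bifurcations)
```

On this toy skeleton the sketch reports three ridge endings (the tips of the two branches and the foot of the stem) and one bifurcation where the stem splits. A matcher would then compare such minutiae sets between two prints; real pipelines also filter spurious minutiae caused by noise, which this sketch omits.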