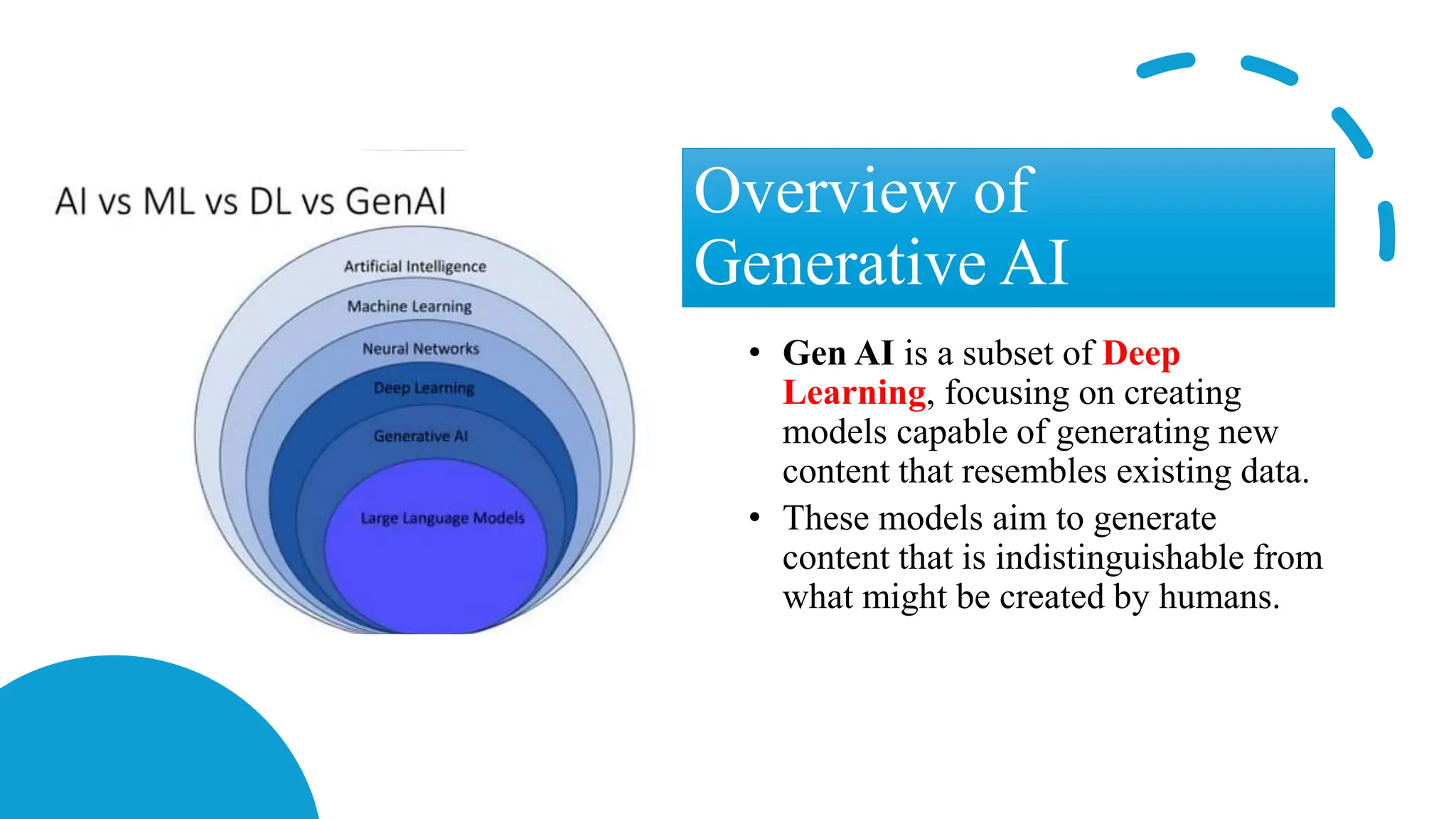

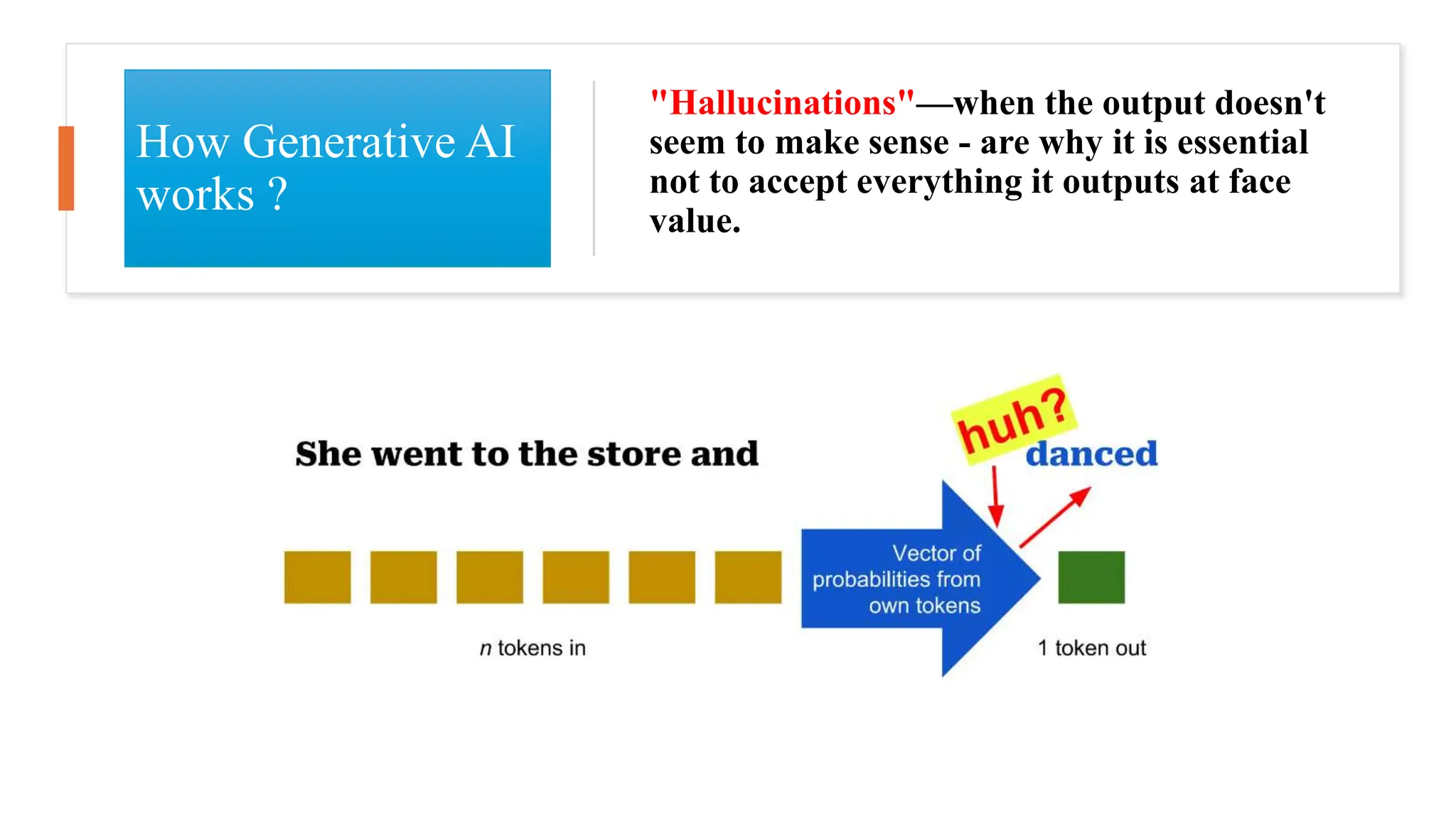

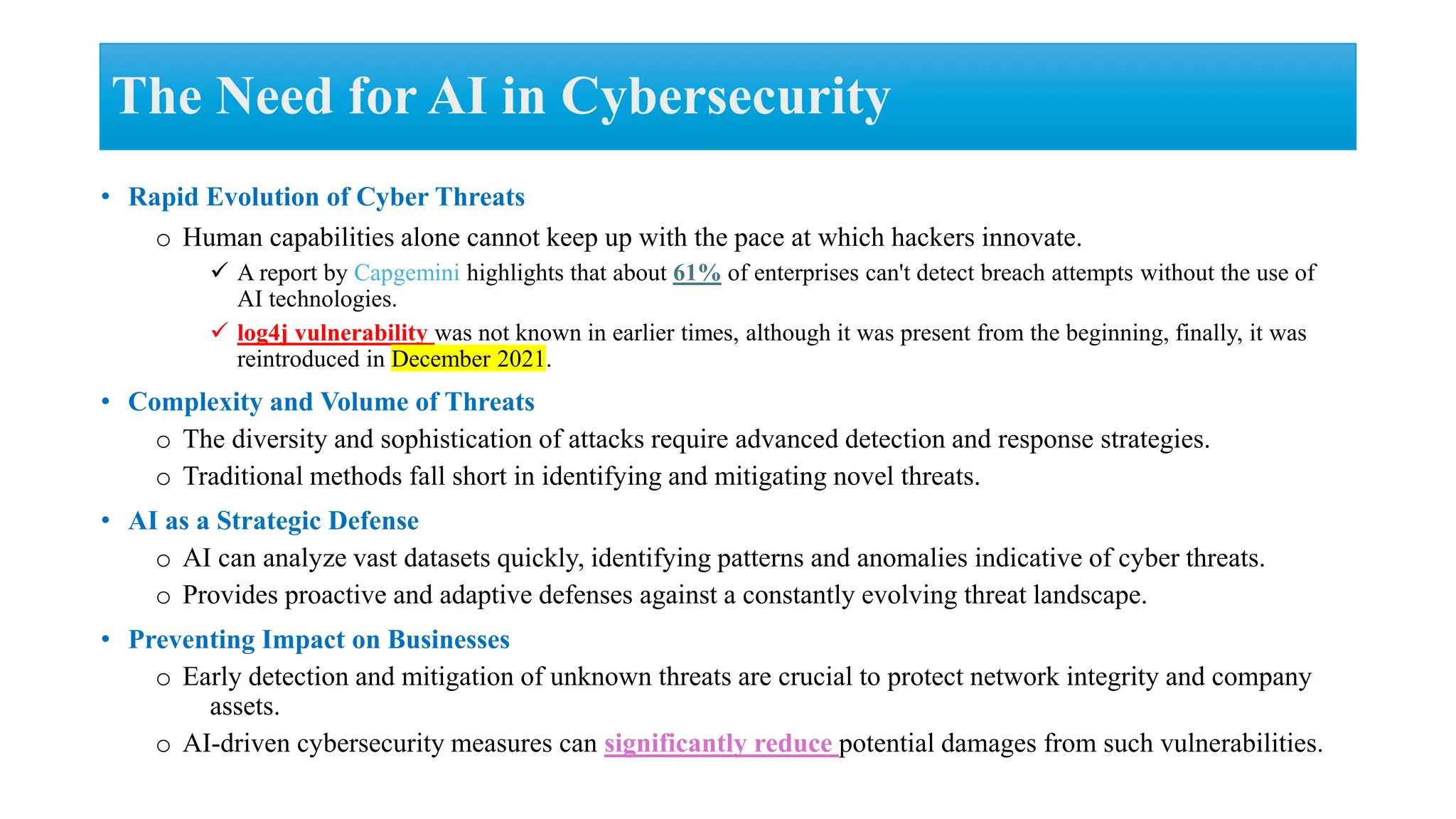

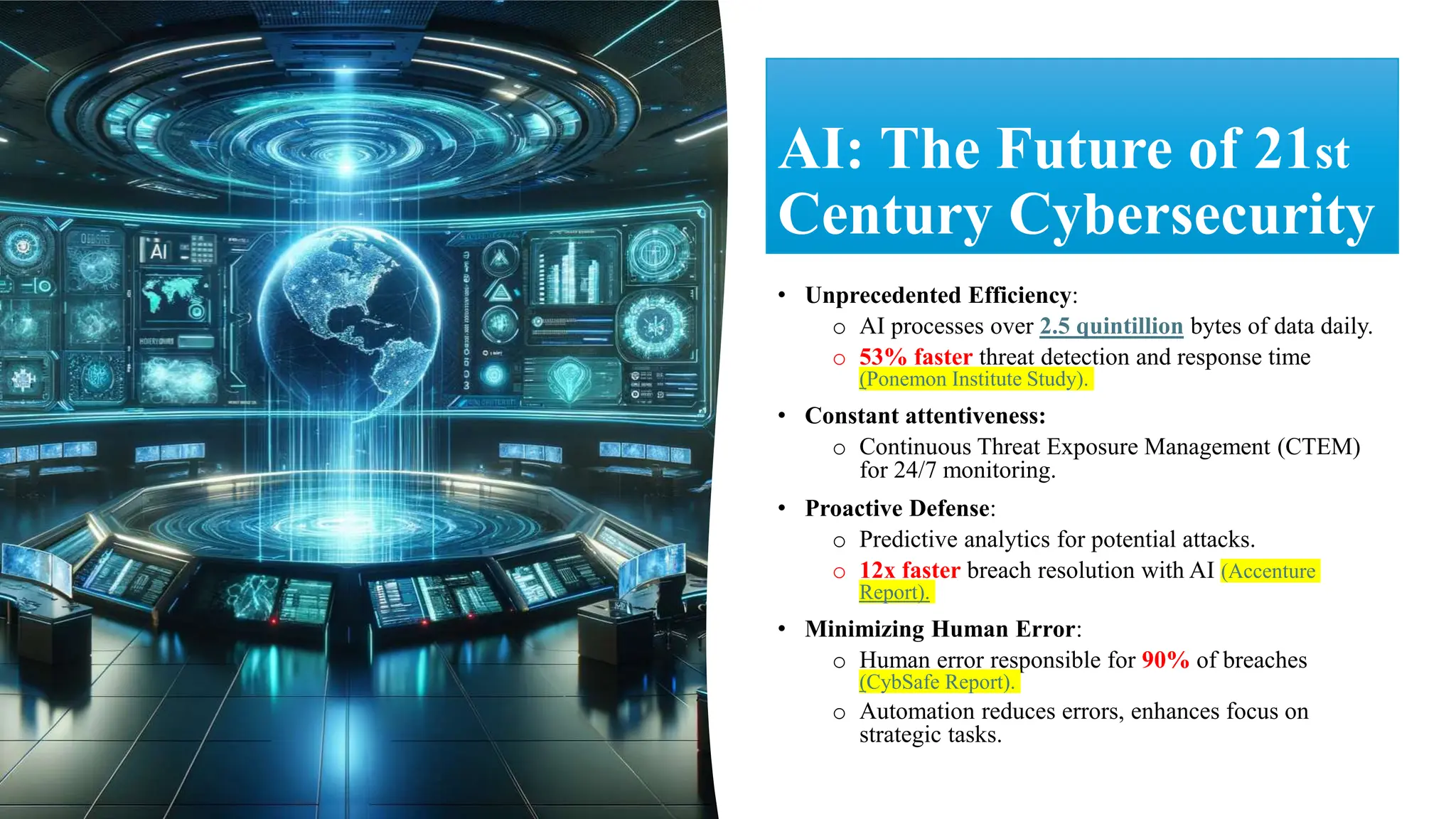

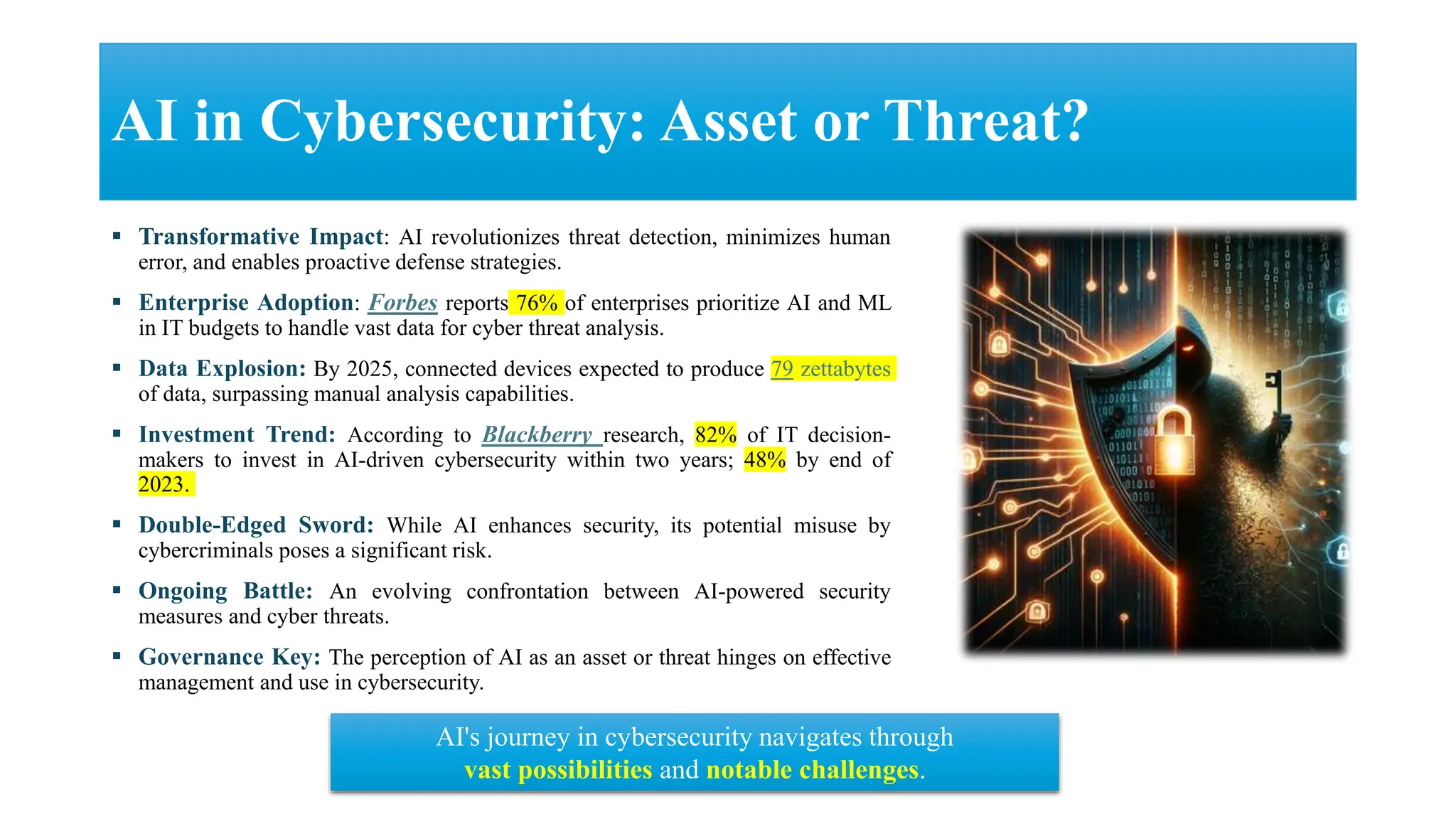

The document discusses generative AI and its implications for cybersecurity, covering various AI techniques such as generative pre-trained transformers and generative adversarial networks. It highlights the role of AI in enhancing cybersecurity through improved threat detection, anomaly detection, and proactive defenses against cyber threats. The need for AI in cybersecurity is underscored by its ability to process large datasets, minimize human error, and support advanced threat modeling and detection strategies.

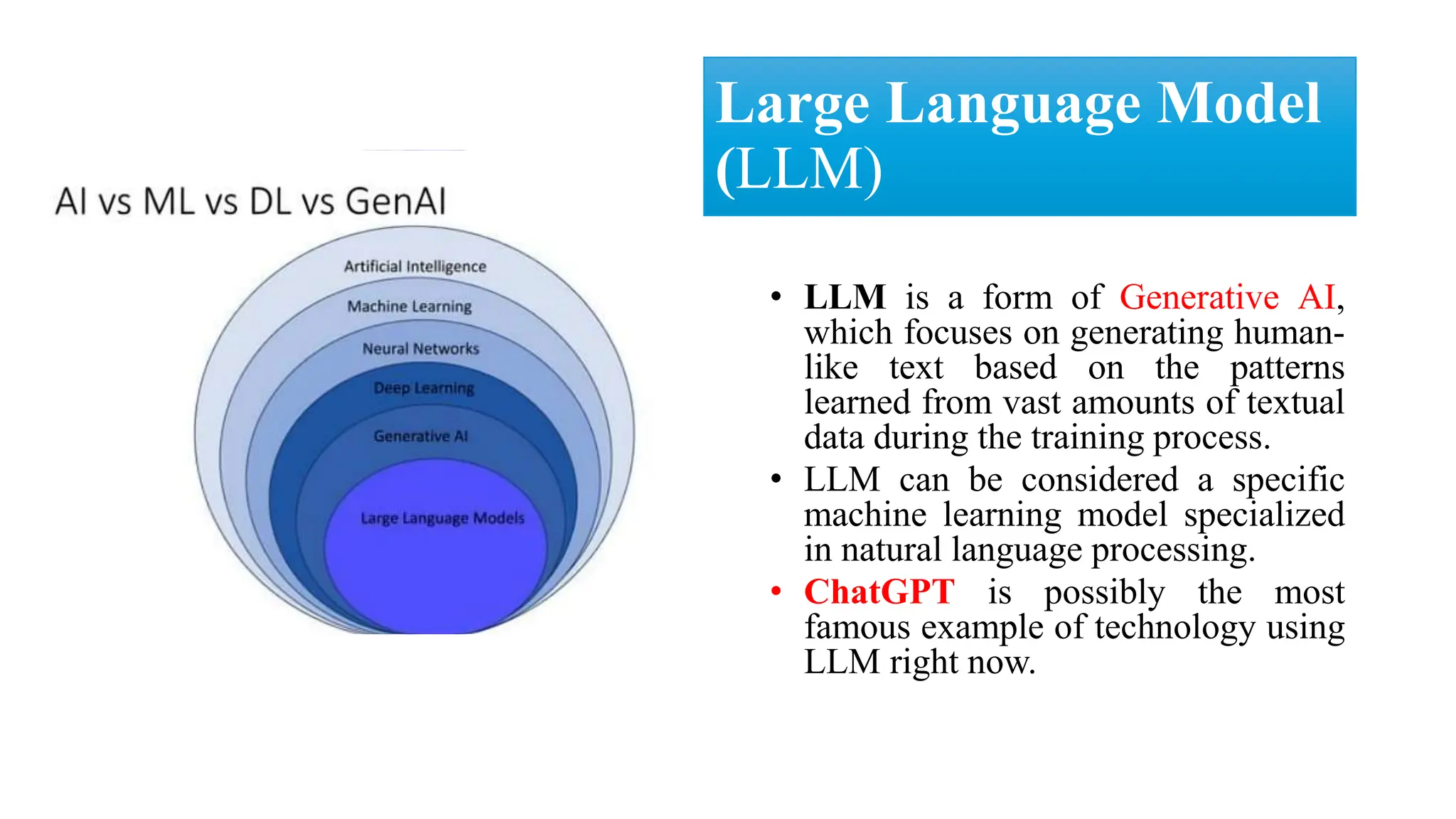

![Four Main Types Of Generative AI (GAI)

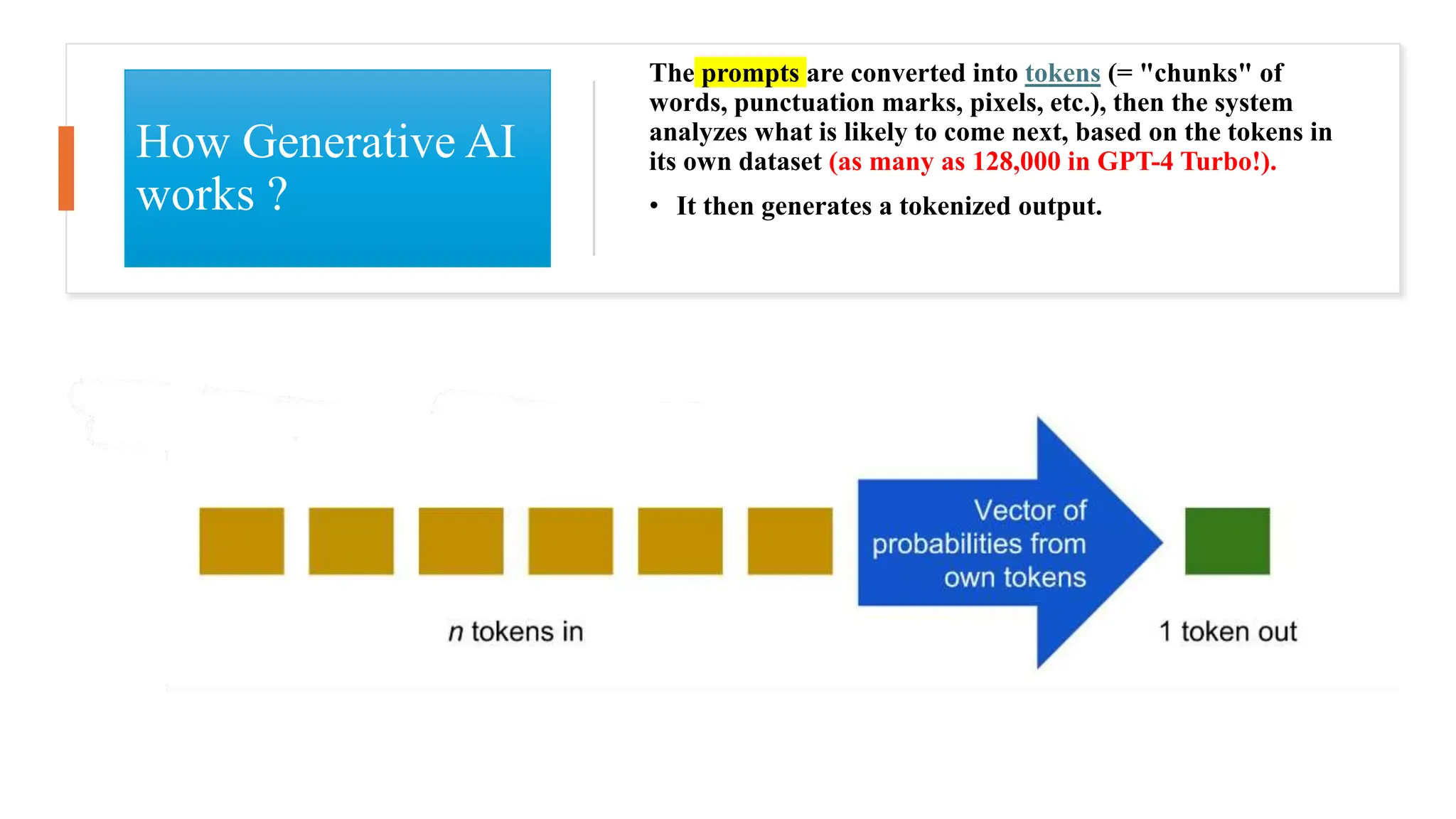

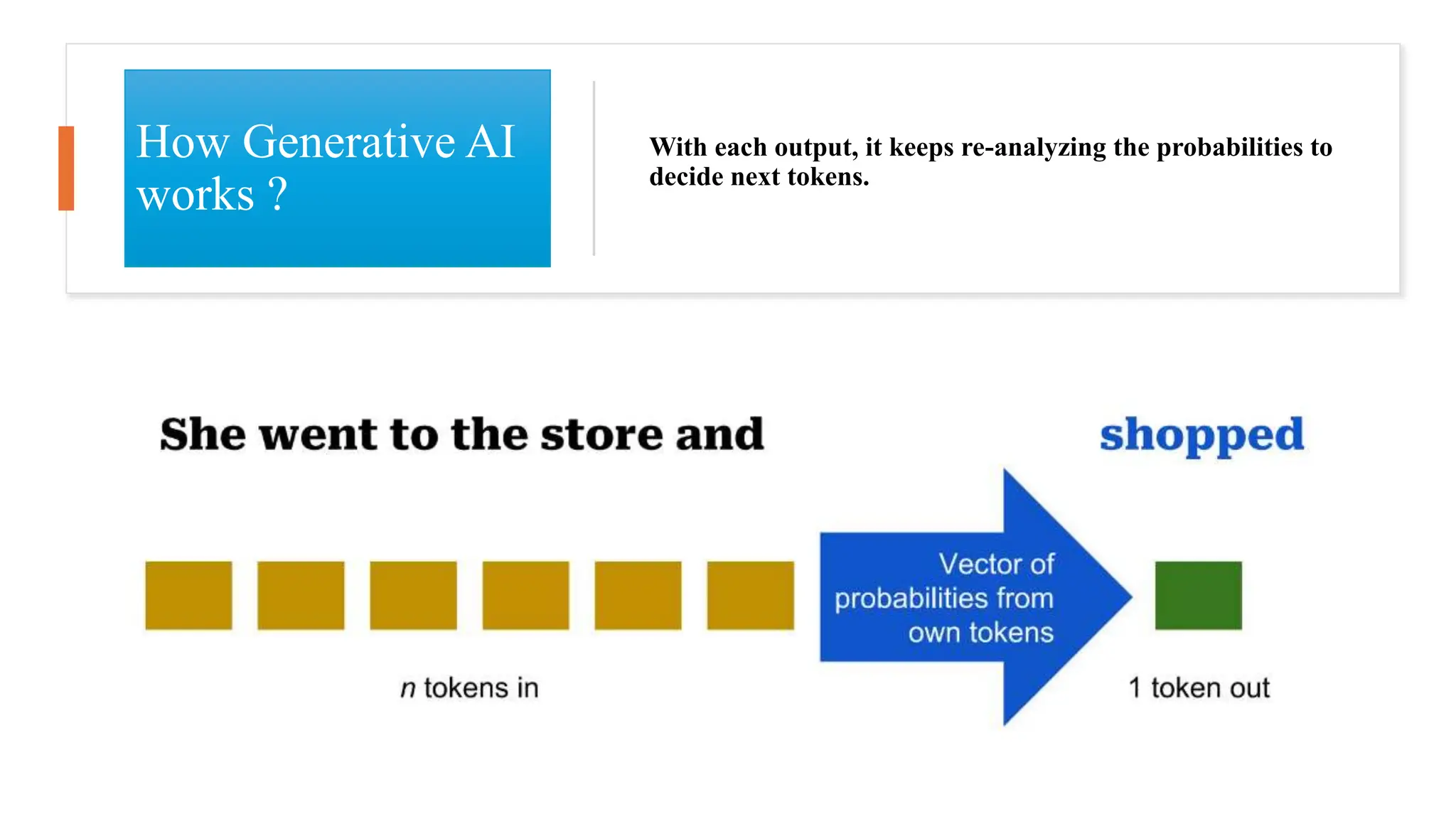

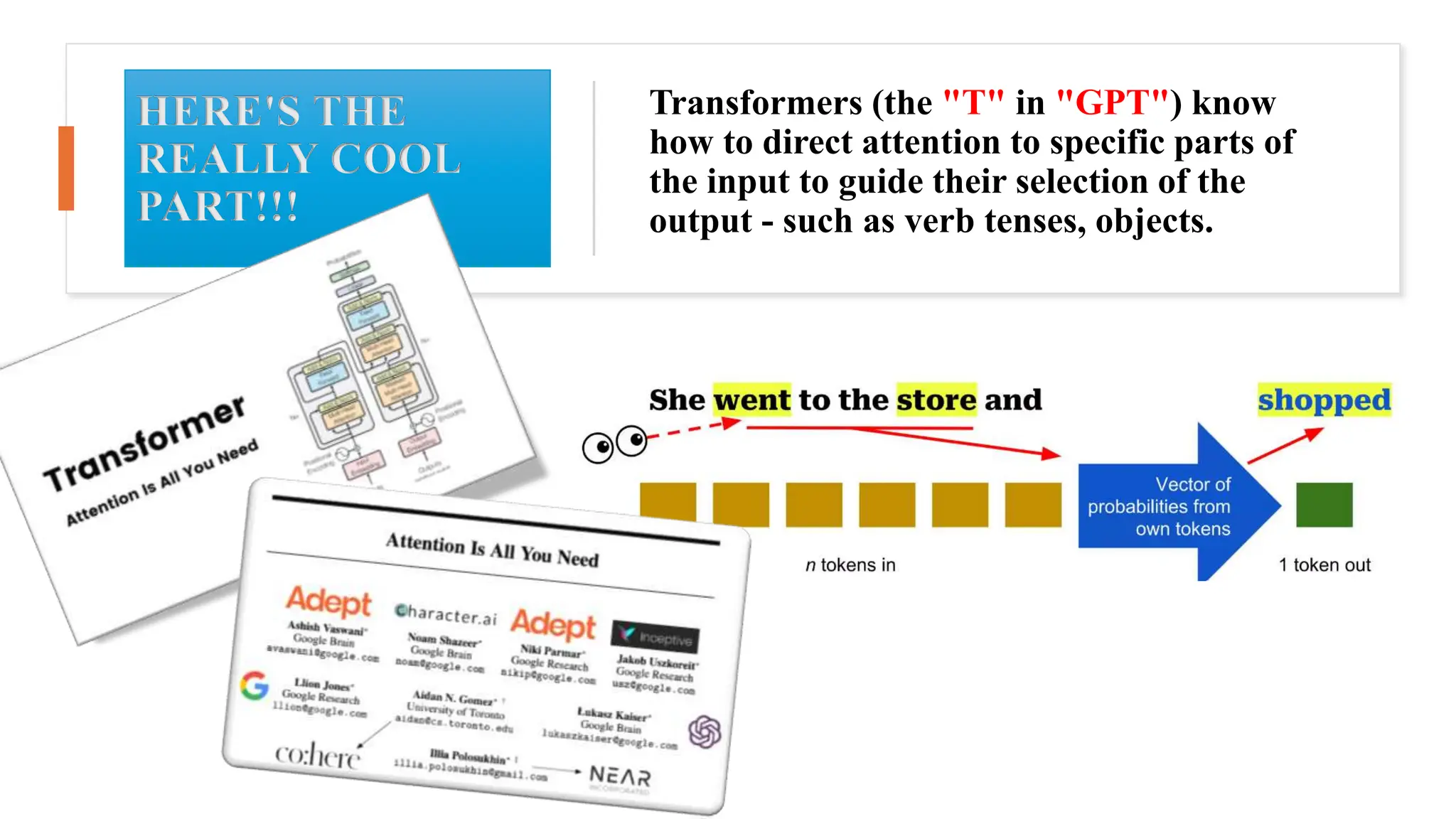

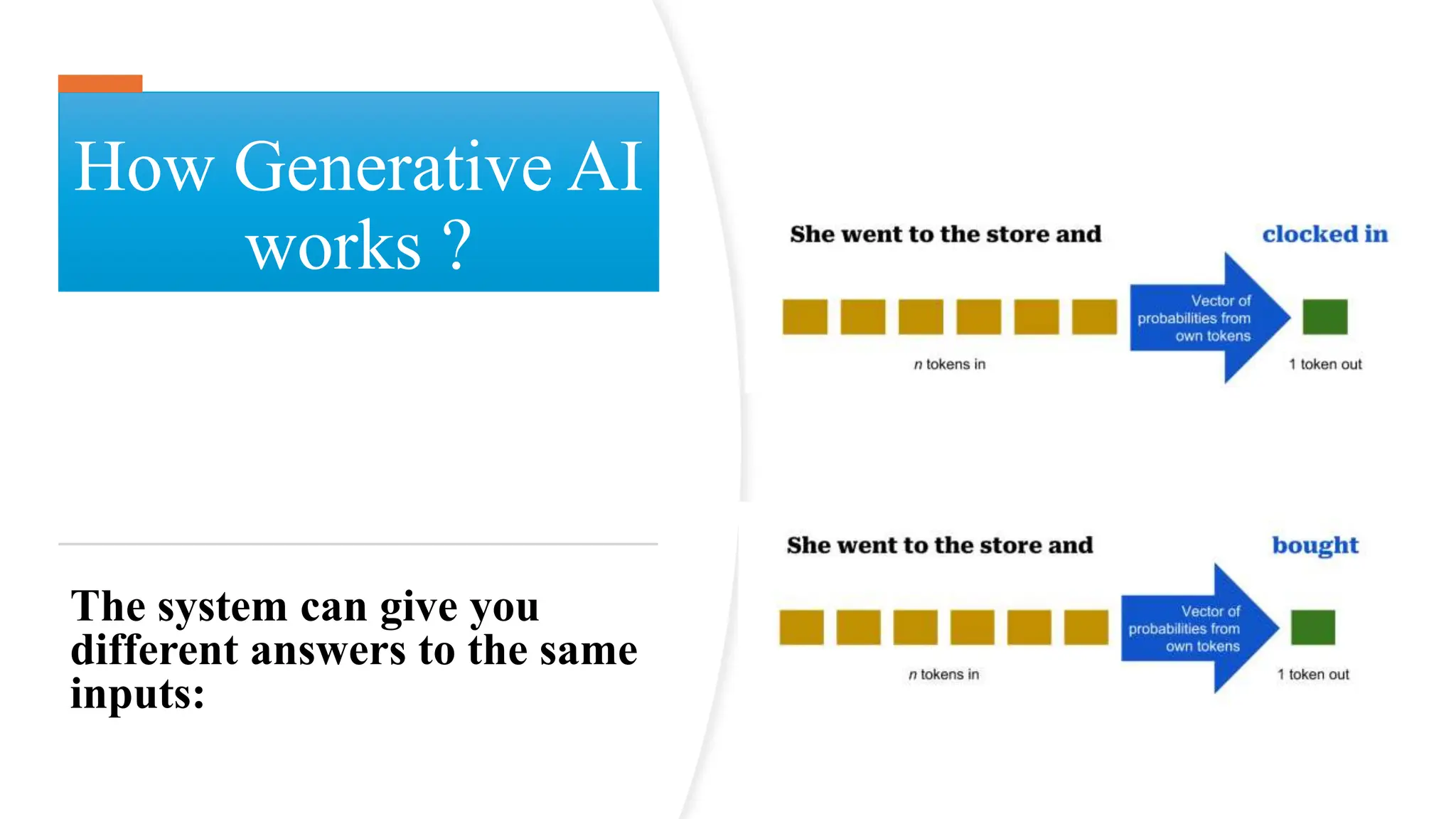

Techniques [1]

•Models that generate human-like text across

various languages and styles, improving with

each version through larger training datasets.

Generative Pre-trained

Transformer (GPT)

• Two neural networks, a “Generator” and a

“Discriminator”, work together.

• The generator creates content, and the

discriminator judges its authenticity, aiming for

indistinguishably realistic outputs.

Generative Adversarial

Networks (GANs)](https://image.slidesharecdn.com/generativecybersecurityai-240408161138-1b52f403/75/AI-Cybersecurity-Pros-Cons-AI-is-reshaping-cybersecurity-4-2048.jpg)
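The generator/discriminator interplay described above is usually expressed as two opposing loss functions. The following is a minimal sketch of those losses only, not a full training loop and not taken from the slides; it assumes the standard binary cross-entropy formulation with the common non-saturating generator loss, and the function names and sample probabilities are illustrative.

```python
import math


def discriminator_loss(d_real, d_fake):
    """Binary cross-entropy for the discriminator.

    d_real: discriminator outputs (probabilities in (0, 1)) on real samples.
    d_fake: discriminator outputs on generated samples.
    The discriminator wants d_real near 1 and d_fake near 0.
    """
    real_term = -sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = -sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term


def generator_loss(d_fake):
    """Non-saturating generator loss: the generator wants d_fake near 1."""
    return -sum(math.log(p) for p in d_fake) / len(d_fake)


# Illustrative probabilities (assumed, not measured):
# early in training the discriminator spots fakes easily ...
early_g = generator_loss([0.1, 0.2])
# ... while at the ideal equilibrium it cannot tell real from fake (0.5 everywhere).
balanced_g = generator_loss([0.5, 0.5])
balanced_d = discriminator_loss([0.5, 0.5], [0.5, 0.5])
print(early_g > balanced_g, balanced_g, balanced_d)
```

When the discriminator outputs 0.5 for every sample, the generator loss equals ln 2 and the discriminator loss equals 2 ln 2, which is the "indistinguishably realistic" state the slide refers to.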

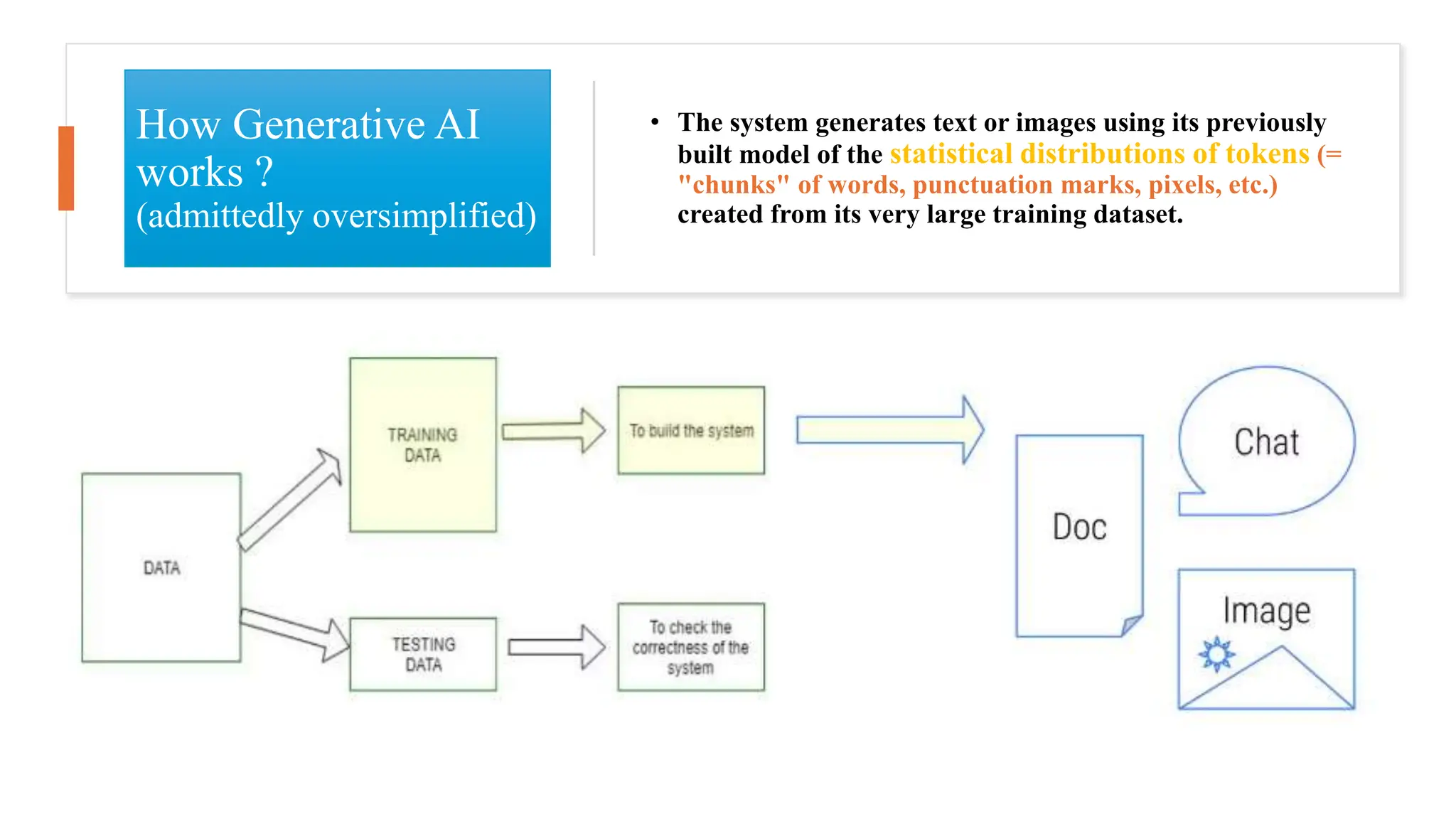

![Main Types of Generative AI (GAI) Techniques](https://image.slidesharecdn.com/generativecybersecurityai-240408161138-1b52f403/75/AI-Cybersecurity-Pros-Cons-AI-is-reshaping-cybersecurity-5-2048.jpg)

Main Types of Generative AI (GAI) Techniques [1]

- The Generative Diffusion Model (GDM): Starts with data, adds noise, then learns to reverse this process, creating high-quality content from random noise.
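The "add noise, then learn to reverse it" idea above can be sketched numerically. The example below assumes the widely used closed-form forward step x_t = sqrt(ā_t)·x_0 + sqrt(1 − ā_t)·ε with a linear noise schedule; the schedule values and function names are illustrative assumptions, not details from the slides. No neural network is trained here: the reverse step simply shows that a perfect noise estimate recovers the original data, which is what a trained model approximates.

```python
import math
import random


def alpha_bars(num_steps, beta_start=1e-4, beta_end=0.02):
    """Cumulative products of (1 - beta_t) for a linear noise schedule."""
    bars, running = [], 1.0
    for t in range(num_steps):
        beta = beta_start + (beta_end - beta_start) * t / max(num_steps - 1, 1)
        running *= 1.0 - beta
        bars.append(running)
    return bars


def add_noise(x0, alpha_bar, rng):
    """Forward process: x_t = sqrt(a) * x0 + sqrt(1 - a) * eps."""
    eps = [rng.gauss(0.0, 1.0) for _ in x0]
    xt = [math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * e
          for x, e in zip(x0, eps)]
    return xt, eps


def recover_x0(xt, eps_hat, alpha_bar):
    """Invert the forward step given a noise estimate eps_hat.

    A trained diffusion model would supply eps_hat; here the caller does.
    """
    return [(x - math.sqrt(1.0 - alpha_bar) * e) / math.sqrt(alpha_bar)
            for x, e in zip(xt, eps_hat)]


bars = alpha_bars(1000)
rng = random.Random(0)
x0 = [0.5, -1.0, 2.0]
xt, eps = add_noise(x0, bars[499], rng)
print(recover_x0(xt, eps, bars[499]))  # close to x0 because eps is the true noise
```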

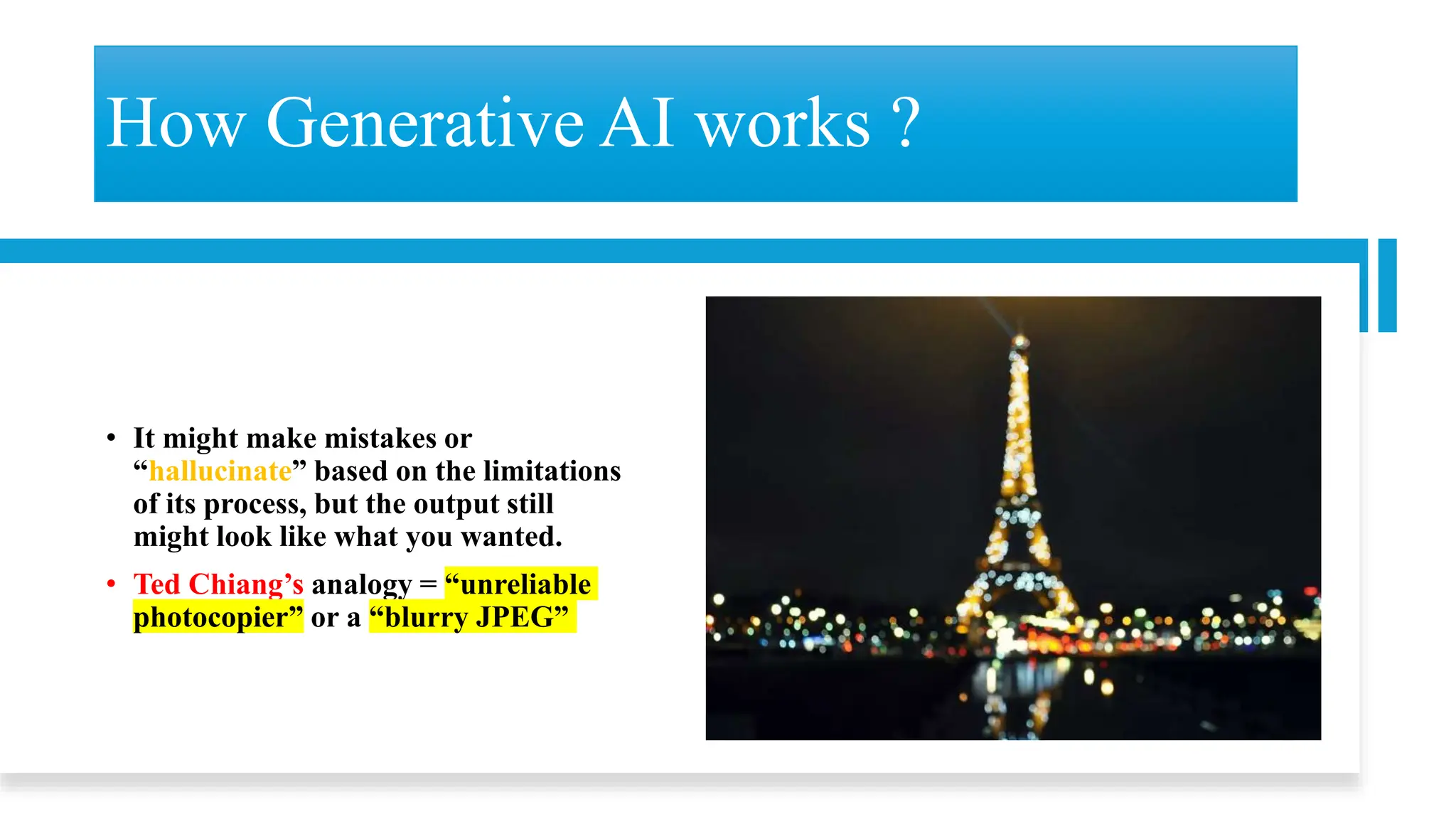

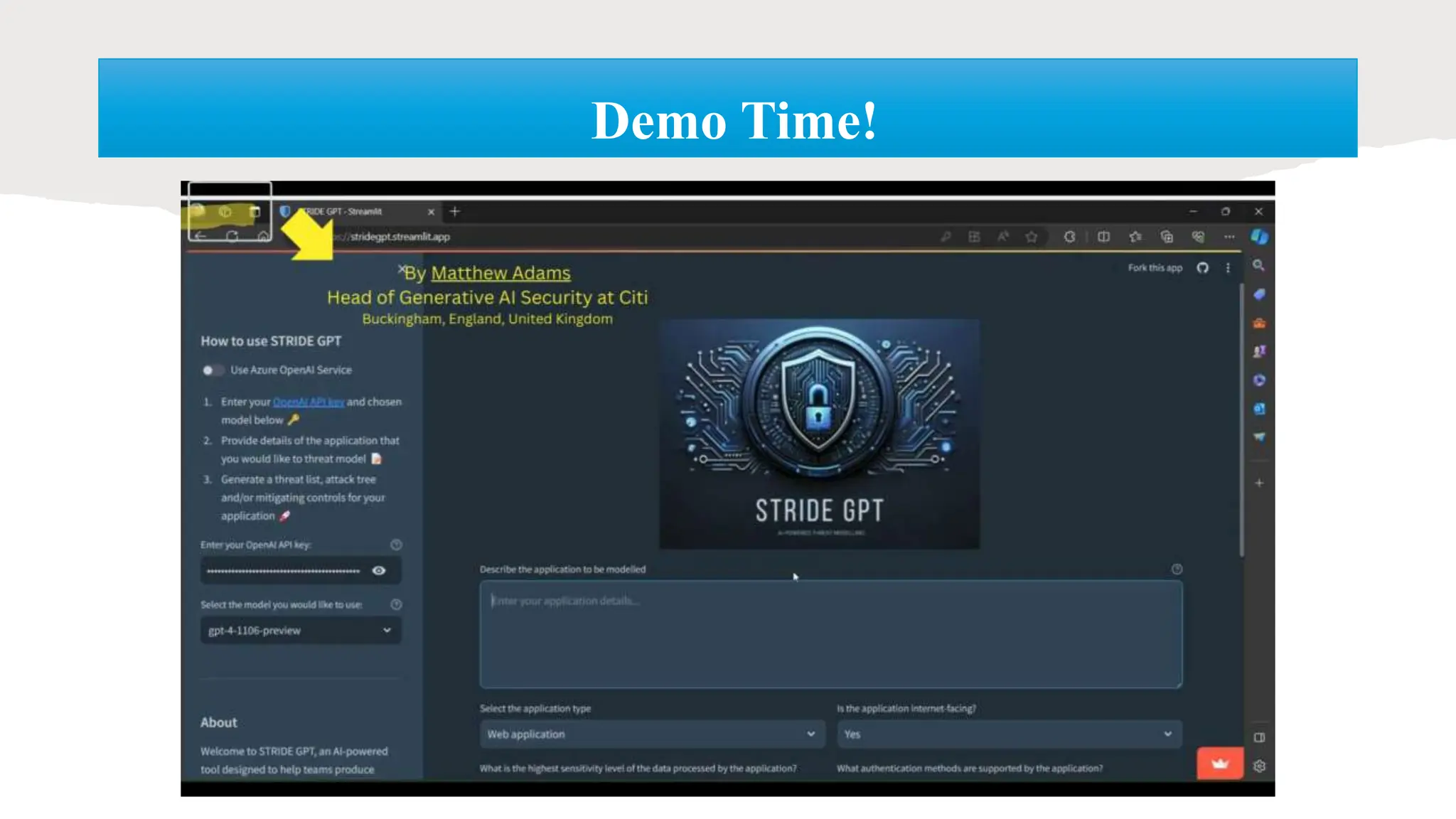

![II- Synthetic Phishing Emails](https://image.slidesharecdn.com/generativecybersecurityai-240408161138-1b52f403/75/AI-Cybersecurity-Pros-Cons-AI-is-reshaping-cybersecurity-31-2048.jpg)

II- Synthetic Phishing Emails

Devising and Detecting Phishing Emails Using Large Language Models [2]

- Objective: Compare the effectiveness of phishing emails created by GPT-4, the V-Triad method, a combination of both, and a control group of generic phishing emails.
- Method: Simulated phishing attacks on 112 participants using a red teaming approach.
- Key Findings
  - Click-Through Rates:
    - Control Group: 19-28%
    - GPT-4 Generated: 30-44%
    - V-Triad Generated: 69-79%
    - Combined GPT-4 & V-Triad: 43-81%
  - Detection: Large language models (GPT, Claude, PaLM, LLaMA) effectively detected phishing intent, sometimes outperforming human detection.
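As a reading aid for the click-through ranges above, the short sketch below checks which reported ranges overlap. The percentages are copied from the slide; the dictionary keys and the overlap check itself are illustrative additions, not an analysis performed in the cited study.

```python
# Click-through ranges (percent) as reported on the slide.
CLICK_THROUGH = {
    "control": (19, 28),
    "gpt4": (30, 44),
    "v_triad": (69, 79),
    "gpt4_plus_v_triad": (43, 81),
}


def ranges_overlap(a, b):
    """True when two closed intervals share at least one point."""
    return a[0] <= b[1] and b[0] <= a[1]


def overlapping_pairs(ranges):
    """List every pair of groups whose reported ranges overlap."""
    names = sorted(ranges)
    return [
        (x, y)
        for i, x in enumerate(names)
        for y in names[i + 1:]
        if ranges_overlap(ranges[x], ranges[y])
    ]


print(overlapping_pairs(CLICK_THROUGH))
```

The control range sits below every other group, while the combined GPT-4 and V-Triad range is wide enough to overlap both the GPT-4-only and the V-Triad-only ranges.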

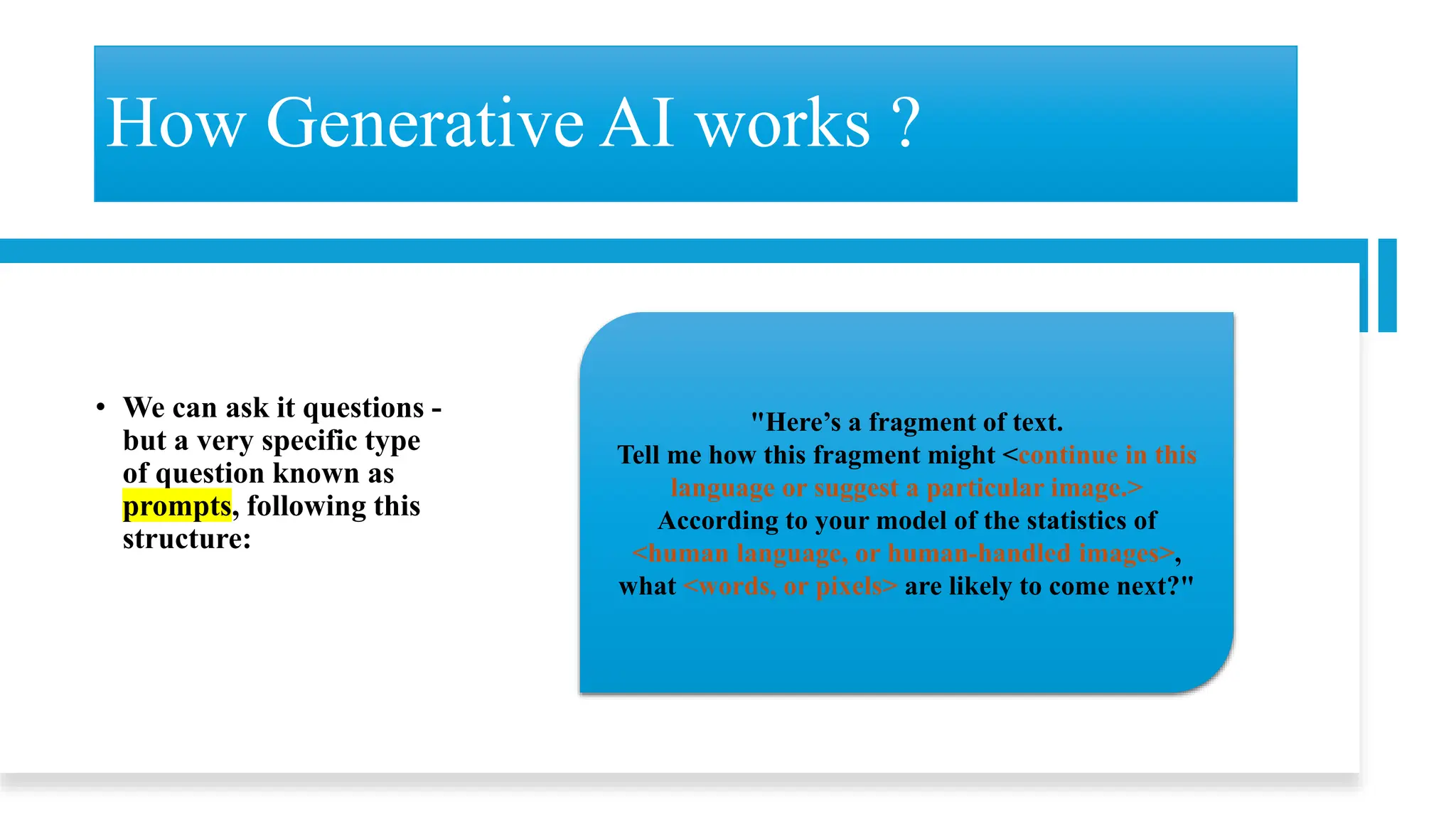

![III- Threat Detection](https://image.slidesharecdn.com/generativecybersecurityai-240408161138-1b52f403/75/AI-Cybersecurity-Pros-Cons-AI-is-reshaping-cybersecurity-32-2048.jpg)

III- Threat Detection

Revolutionizing Cyber Threat Detection With Large Language Models: A Privacy-Preserving BERT-Based Lightweight Model for IoT/IIoT Devices [3]

- Objective: To introduce SecurityBERT, a BERT-based architecture, for efficient cyber threat detection in IoT networks, enhancing precision and minimizing computational demand.
- Method
  - Utilization of Bidirectional Encoder Representations from Transformers (BERT) with a novel Privacy-Preserving Fixed-Length Encoding (PPFLE) and the Byte-level Byte-Pair Encoder (BBPE) Tokenizer.
  - Evaluation using the Edge-IIoTset cybersecurity dataset for identifying fourteen distinct attack types.
- Key Findings
  - Performance: SecurityBERT achieved an impressive 98.2% accuracy, outperforming traditional ML and DL models, including CNNs and RNNs.
  - Efficiency: Demonstrated high efficiency with an inference time of <0.15 seconds on average CPUs and a model size of 16.7MB, suitable for deployment on resource-constrained IoT devices.
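The slide names a Privacy-Preserving Fixed-Length Encoding but does not describe its mechanics. The sketch below is a hypothetical stand-in that conveys the general idea only: each field of a network record is turned into a fixed-length token via a hash, so raw values never appear in the text handed to a tokenizer. The hashing scheme, the token length, and the field names are all assumptions for illustration and should not be read as the paper's actual PPFLE.

```python
import hashlib


def encode_field(name, value, length=8):
    """Map one (field name, value) pair to a fixed-length hex token.

    Hypothetical scheme: SHA-256 of "name=value", truncated to `length` chars.
    """
    digest = hashlib.sha256(f"{name}={value}".encode("utf-8")).hexdigest()
    return digest[:length]


def encode_record(record, length=8):
    """Encode a whole record as space-separated fixed-length tokens.

    Fields are processed in sorted order so the output is deterministic.
    """
    return " ".join(encode_field(k, record[k], length) for k in sorted(record))


# Field names and values below are made up for the example.
record = {"src_ip": "192.168.0.7", "dst_port": 443, "tcp_len": 120}
encoded = encode_record(record)
print(encoded)
```

Every token has the same length regardless of the original value, and the resulting string could then be passed to a byte-level tokenizer such as the BBPE tokenizer mentioned above.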

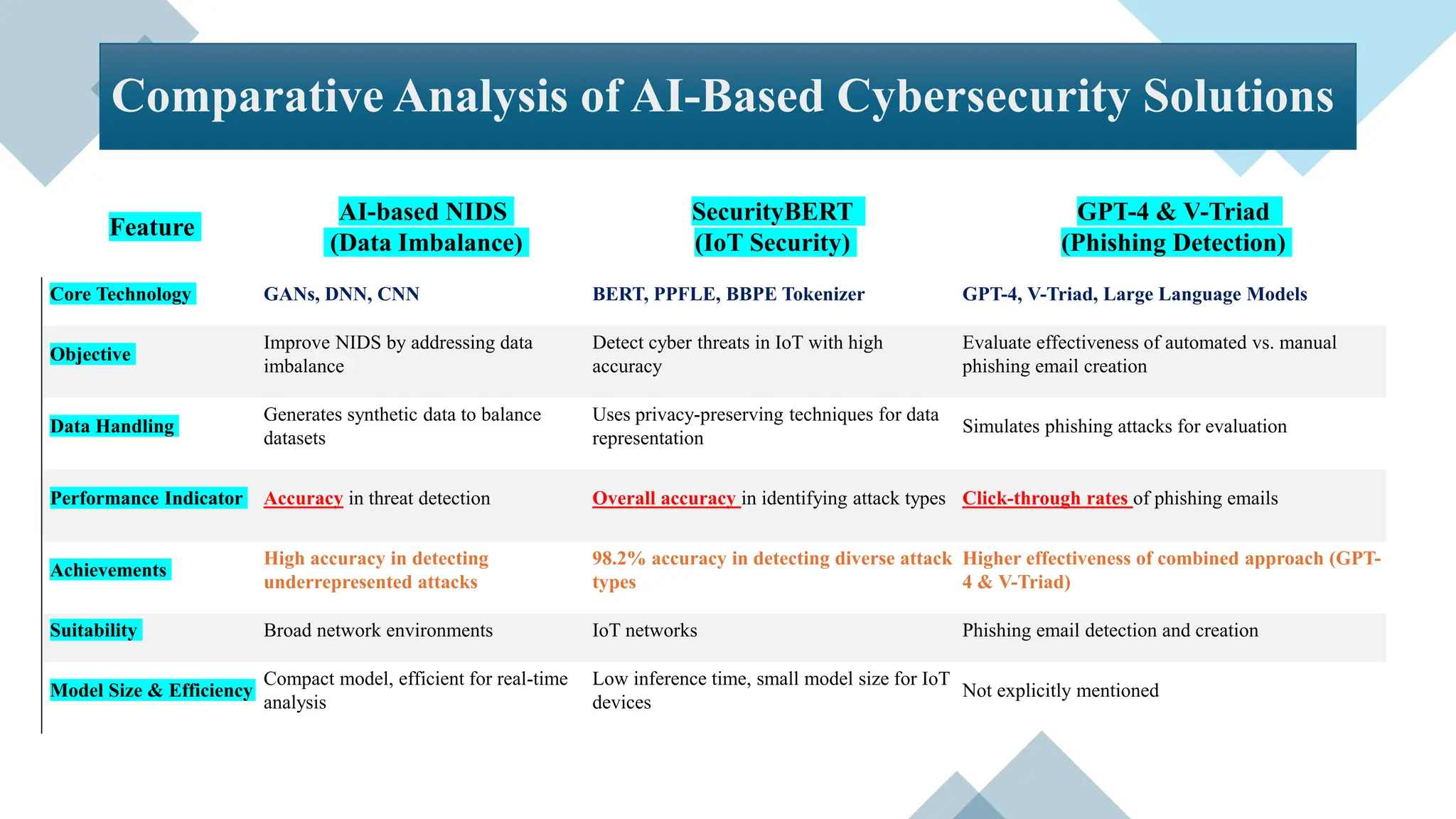

![IV- Anomaly Detection](https://image.slidesharecdn.com/generativecybersecurityai-240408161138-1b52f403/75/AI-Cybersecurity-Pros-Cons-AI-is-reshaping-cybersecurity-33-2048.jpg)

IV- Anomaly Detection

An Enhanced AI-Based Network Intrusion Detection System Using Generative Adversarial Networks [4]

- Objective: Propose a novel AI-based NIDS to efficiently resolve data imbalance problems and enhance detection performance of network threats.
- Method
  - Utilized a state-of-the-art Generative Adversarial Network (GAN) model, BEGAN, to generate synthetic data for underrepresented attack traffic.
  - Focused on reconstruction error and Wasserstein distance-based GANs, alongside autoencoder-driven Deep Neural Networks (DNN) and Convolutional Neural Networks (CNN).
  - Conducted evaluations across various datasets, including benchmark datasets, IoT datasets, and real enterprise system data.
- Key Findings
  - Achieved detection accuracies up to 93.2% on the NSL-KDD dataset and 87% on the UNSW-NB15 dataset, significantly improving minor class performance.
  - Demonstrated the system’s effectiveness in detecting network threats within distributed environments and IoT settings.
  - Highlighted remarkable improvement in threat detection rates in real-world environments by addressing data imbalance.
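The data-imbalance step described above (generating synthetic samples for underrepresented attack traffic) can be sketched as a rebalancing routine. This is a minimal sketch under stated assumptions: `generate` is a hypothetical callable standing in for a trained GAN such as BEGAN, `placeholder_generator` only fabricates labeled strings, and the toy labels are invented for the example rather than taken from NSL-KDD or UNSW-NB15.

```python
from collections import Counter


def synthetic_needed(labels):
    """How many synthetic samples each class needs to match the largest class."""
    counts = Counter(labels)
    target = max(counts.values())
    return {cls: target - n for cls, n in counts.items()}


def rebalance(samples, labels, generate):
    """Top up minority classes with synthetic samples.

    generate(cls, n) must return a list of n synthetic samples for class cls.
    In a real system this would be a trained generative model (assumption).
    """
    out_samples, out_labels = list(samples), list(labels)
    for cls, n in synthetic_needed(labels).items():
        if n > 0:
            out_samples.extend(generate(cls, n))
            out_labels.extend([cls] * n)
    return out_samples, out_labels


def placeholder_generator(cls, n):
    """Stand-in for a GAN: returns labeled dummy strings, not real traffic."""
    return [f"synthetic-{cls}-{i}" for i in range(n)]


# Toy, made-up class distribution for illustration.
labels = ["normal"] * 6 + ["dos"] * 3 + ["probe"] * 1
samples = [f"flow-{i}" for i in range(len(labels))]
new_samples, new_labels = rebalance(samples, labels, placeholder_generator)
print(Counter(new_labels))
```

Only the training set should be rebalanced this way; evaluating on synthetic samples would overstate minority-class performance.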

![References](https://image.slidesharecdn.com/generativecybersecurityai-240408161138-1b52f403/75/AI-Cybersecurity-Pros-Cons-AI-is-reshaping-cybersecurity-37-2048.jpg)

References

[1] Jovanovic, M., & Campbell, M. (2022). Generative artificial intelligence: Trends and prospects. Computer, 55(10), 107-112.

[2] Heiding, F., Schneier, B., Vishwanath, A., Bernstein, J., & Park, P. S. (2024). Devising and detecting phishing emails using large language models. IEEE Access.

[3] Ferrag, M. A., Ndhlovu, M., Tihanyi, N., Cordeiro, L. C., Debbah, M., Lestable, T., & Thandi, N. S. (2024). Revolutionizing cyber threat detection with large language models: A privacy-preserving BERT-based lightweight model for IoT/IIoT devices. IEEE Access.

[4] Park, C., Lee, J., Kim, Y., Park, J. G., Kim, H., & Hong, D. (2022). An enhanced AI-based network intrusion detection system using generative adversarial networks. IEEE Internet of Things Journal, 10(3), 2330-2345.