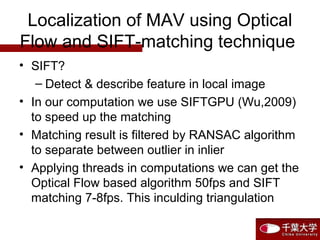

1) The document proposes using an embedded stereo camera and fusing optical flow with SIFT feature matching to estimate the position of a micro aerial vehicle (MAV) in GPS-denied environments.
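As a rough illustration of how a stereo pair can yield range, the sketch below applies the standard pinhole relation Z = f·B/d to one feature matched between the left and right images. The slides do not spell out this step, so treating the SIFT matches as the source of the disparity is an assumption here, and the function name and parameter values are hypothetical.

```python
def depth_from_disparity(focal_px, baseline_m, x_left_px, x_right_px):
    """Depth of one matched feature for a rectified stereo pair.

    focal_px   : focal length in pixels (assumed known from calibration)
    baseline_m : distance between the two camera centers in meters
    x_left_px  : horizontal pixel coordinate of the feature in the left image
    x_right_px : horizontal pixel coordinate of the same feature in the right image
    """
    disparity = x_left_px - x_right_px
    if disparity <= 0:
        # Zero or negative disparity means a bad match or a point at infinity.
        raise ValueError("disparity must be positive for a valid match")
    return focal_px * baseline_m / disparity


# Hypothetical numbers: 400 px focal length, 10 cm baseline, 20 px disparity.
z = depth_from_disparity(400.0, 0.1, 220.0, 200.0)
print(f"estimated depth: {z:.2f} m")
```

For a downward-facing camera over flat ground, this depth would approximate the altitude; a tilted vehicle would need an attitude correction, which the sketch leaves out.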

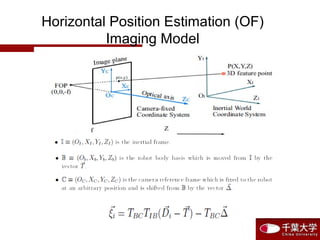

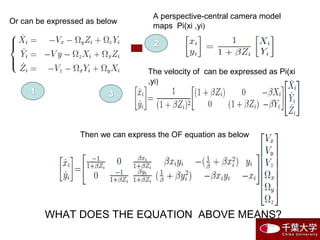

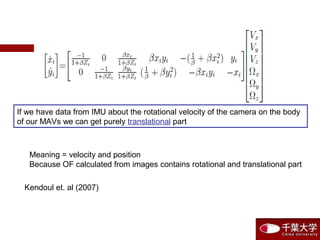

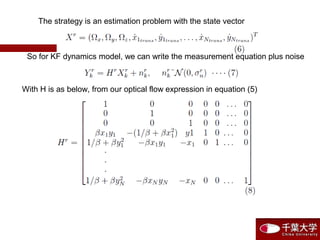

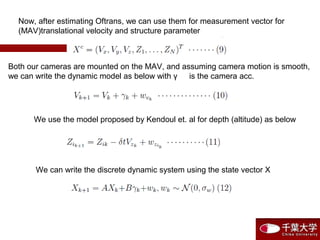

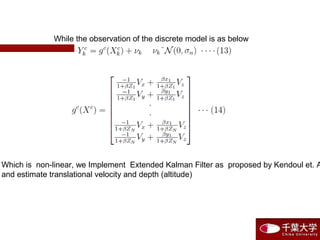

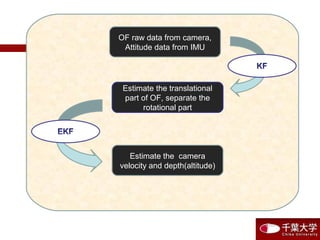

2) An Extended Kalman Filter (EKF) estimates the MAV's translational velocity and altitude from optical flow measurements, which are first separated into rotational and translational components using IMU data.
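The separation of optical flow into rotational and translational parts can be sketched with the standard pinhole motion-field equations. This is a minimal illustration, not the filter from the slides: it assumes normalized image coordinates, gyro rates already expressed in the camera frame, and a known depth, and all function names are hypothetical. In the EKF, velocity and altitude would be estimated jointly rather than computed from a given depth as done here.

```python
def rotational_flow(x, y, omega):
    """Flow induced purely by camera rotation at normalized image point (x, y).

    omega = (wx, wy, wz) are angular rates in rad/s, assumed to be in the camera frame.
    This component does not depend on scene depth.
    """
    wx, wy, wz = omega
    u_rot = x * y * wx - (1.0 + x * x) * wy + y * wz
    v_rot = (1.0 + y * y) * wx - x * y * wy - x * wz
    return u_rot, v_rot


def translational_flow(x, y, velocity, depth):
    """Flow induced purely by camera translation; scales with 1/depth."""
    tx, ty, tz = velocity
    return (-tx + x * tz) / depth, (-ty + y * tz) / depth


def derotate(flow, x, y, omega):
    """Subtract the gyro-predicted rotational flow from the measured flow."""
    u_rot, v_rot = rotational_flow(x, y, omega)
    return flow[0] - u_rot, flow[1] - v_rot


def lateral_velocity(flow_trans, x, y, depth, tz=0.0):
    """Invert the translational flow for (tx, ty), given depth and vertical speed."""
    tx = x * tz - flow_trans[0] * depth
    ty = y * tz - flow_trans[1] * depth
    return tx, ty


# Synthetic check with hypothetical values: build a measurement, then undo it.
x, y, depth = 0.1, -0.2, 2.0
velocity = (0.5, -0.2, 0.1)      # m/s, camera frame
omega = (0.1, -0.05, 0.2)        # rad/s, as a gyro would report

u_t, v_t = translational_flow(x, y, velocity, depth)
u_r, v_r = rotational_flow(x, y, omega)
measured = (u_t + u_r, v_t + v_r)

recovered = lateral_velocity(derotate(measured, x, y, omega), x, y, depth, tz=velocity[2])
print(recovered)
```

Because the rotational term is independent of depth, it can be removed using IMU data alone, which leaves a translational term whose magnitude carries the ratio of velocity to altitude.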

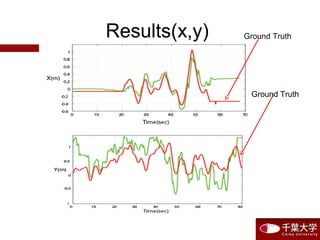

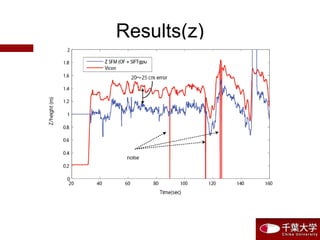

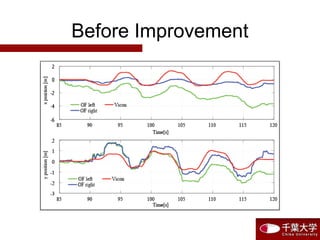

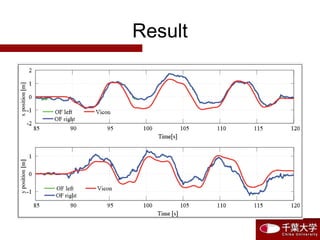

3) Initial experiments that fused optical flow and SIFT matching for altitude estimation showed promising results against ground truth. Suggested improvements are onboard processing and SIFT matching between successive frames for horizontal position estimation.

![Verification Experiment of Image Algorithm Fused with IMU data: move along x-axis with various attitude; X, Y distance [m] versus Time [s]](https://image.slidesharecdn.com/lrzhxxdtzioclexisd7z-signature-5e8d2c37827bd56603d99b1f6f446b66a2ef9224453fa704beeaf40d5e4bc784-poli-150722184151-lva1-app6891/85/P1131210137-18-320.jpg)