Optimizing shared caches in chip multiprocessors

•Download as PPTX, PDF•

1 like•194 views

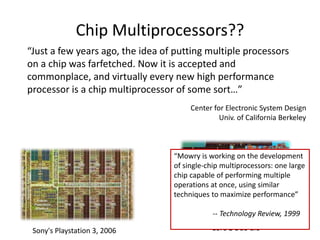

Chip multiprocessors, which place multiple processor cores on a single chip, have become commonplace in high-performance processors. Their caches can be managed in several ways, including private caches for each core or shared caches that all cores can access. The best approach balances factors such as minimizing traffic between cores, avoiding duplication of cached data, and reducing latency.

- 1. Chip Multiprocessors??
  - “Mowry is working on the development of single-chip multiprocessors: one large chip capable of performing multiple operations at once, using similar techniques to maximize performance” -- Technology Review, 1999
  - “Just a few years ago, the idea of putting multiple processors on a chip was farfetched. Now it is accepted and commonplace, and virtually every new high performance processor is a chip multiprocessor of some sort…” -- Center for Electronic System Design, Univ. of California, Berkeley
  - [Images: Core 2 Duo die; Sony's PlayStation 3, 2006]

- 2. CMP Caches: Design Space
  - Architecture
    - Placement of cache/processors
    - Interconnects/routing
  - Cache organization and management
    - Private/shared/hybrid
    - Fully hardware/OS interface
  - “L2 is the last line of defense before hitting the memory wall, and is the focus of our talk”

- 3. Private L2 Cache
  - [Figure: processors with L1 caches (I$, D$) and per-core private L2 caches, joined by an interconnect with a coherence protocol, in front of off-chip memory]
  - + Less interconnect traffic
  - + Insulates L2 units
  - + Lower hit latency
  - – Duplication
  - – Load imbalance
  - – Complexity of coherence
  - – Higher miss rate

- 4. Shared-Interleaved L2 Cache
  - [Figure: processors with L1 caches (I$, D$) connected through an interconnect, with a coherence protocol, to a shared L2]
  - – Interconnect traffic
  - – Interference between cores
  - – Hit latency is higher
  - + No duplication
  - + Balance the load
  - + Lower miss rate
  - + Simplicity of coherence
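As a rough illustration of the private and shared-interleaved organizations, the sketch below shows how a block might be mapped to an L2 bank in each case. The 64-byte block size, the four banks, and all function names are assumptions made for the example, not details from the slides.

```python
from typing import Iterable

BLOCK_BYTES = 64  # assumed cache-block size
NUM_BANKS = 4     # assumed: one L2 bank per core


def shared_bank(addr: int) -> int:
    """Shared-interleaved L2: the block address alone picks the home bank."""
    return (addr // BLOCK_BYTES) % NUM_BANKS


def private_bank(core: int, addr: int) -> int:
    """Private L2: a core always fills its own bank, whatever the address."""
    return core


def l2_copies(readers: Iterable[int], addr: int, shared: bool) -> int:
    """Count the on-chip L2 copies after every core in `readers` reads addr."""
    if shared:
        return len({shared_bank(addr) for _ in readers})
    return len({private_bank(core, addr) for core in readers})
```

With three cores reading the same block, `l2_copies` gives one copy for the shared organization and three for the private one, which is the duplication trade-off named in the pros and cons.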

- 5. Take Home Message
  - Leverage on-chip access time

- 6. Take Home Messages
  - Leverage on-chip access time
  - Better sharing of cache resources
  - Isolating performance of processors
  - Place data on the chip close to where it is used
  - Minimize inter-processor misses (in shared cache)
  - Fairness towards processors

- 7. On to some solutions…
  - Jichuan Chang and Gurindar S. Sohi. Cooperative Caching for Chip Multiprocessors. International Symposium on Computer Architecture, 2006.
  - Nikos Hardavellas, Michael Ferdman, Babak Falsafi, and Anastasia Ailamaki. Reactive NUCA: Near-Optimal Block Placement and Replication in Distributed Caches. International Symposium on Computer Architecture, 2009.
  - Shekhar Srikantaiah, Mahmut Kandemir, and Mary Jane Irwin. Adaptive Set-Pinning: Managing Shared Caches in Chip Multiprocessors. Architectural Support for Programming Languages and Operating Systems, 2008.
  - Each handles this problem in a different way.

- 8. Co-operative Caching (Chang & Sohi)
  - Private L2 caches
  - Attract data locally to reduce remote on-chip access. Lowers average on-chip misses.
  - Co-operation among the private caches for efficient use of resources on the chip.
  - Controlling the extent of co-operation to suit the dynamic workload behavior

- 9. CC Techniques
  - Cache-to-cache transfer of clean data
    - On a miss, transfer “clean” blocks from another L2 cache.
    - This is useful for “read only” data (instructions).
  - Replication-aware data replacement
    - Singlet/Replicate.
    - Evict a singlet only when no replicates exist.
    - Singlets can be “spilled” to other cache banks.
  - Global replacement of inactive data
    - Global management is needed for managing “spilling”.
    - N-Chance Forwarding.
    - Set the recirculation count to N when a block is first spilled.
    - Decrease the count by 1 each time the block is spilled again; once it reaches 0 the block is not spilled further.
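The replacement and spilling rules above can be sketched roughly as follows. This is a minimal model, not the authors' implementation: the `Block` fields, the function names, and the choice of a random peer as the spill target are all assumptions for illustration, and the sketch assumes at least two caches.

```python
import random
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Block:
    addr: int
    singlet: bool = True                 # True if this is the only on-chip copy
    recirculation: Optional[int] = None  # None until the block is first spilled


def pick_victim(lru_to_mru: List[Block]) -> Block:
    """Replication-aware replacement: evict a replicate if one exists."""
    for block in lru_to_mru:
        if not block.singlet:
            return block
    return lru_to_mru[0]  # only singlets left: fall back to plain LRU


def spill(block: Block, owner: int, n_caches: int, n: int,
          rng: random.Random) -> Optional[int]:
    """Return the peer cache that receives the victim, or None if it is dropped."""
    if not block.singlet:
        return None                   # another copy exists, so just drop it
    if block.recirculation is None:
        block.recirculation = n       # first spill: start with N chances
    else:
        block.recirculation -= 1      # spilled again: one chance used up
    if block.recirculation <= 0:
        return None                   # chances exhausted: leave the chip
    peers = [c for c in range(n_caches) if c != owner]
    return rng.choice(peers)          # assumed policy: pick a random peer
```

With `n=2`, a singlet is forwarded on its first two evictions and dropped on the third, so an inactive block cannot circulate among the private caches indefinitely.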

- 10. Set “Pinning” -- Setup
  - [Figure: processors P1 to P4, each with an L1 cache, connected through an interconnect to a shared L2 cache with sets 0 to S-1, backed by main memory]

- 11. Set “Pinning” -- Problem
  - [Figure: processors P1 to P4, cache sets 0 to S-1, and main memory]

- 12. Set “Pinning” -- Types of Cache Misses
  - Compulsory (aka cold), capacity, conflict, coherence
  - versus
  - Compulsory, inter-processor, intra-processor
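The slide does not define the second classification, so the sketch below rests on an assumption: a miss is counted as inter-processor when the block was last evicted by a reference from a different processor, and intra-processor when the same processor evicted it. The direct-mapped shared cache, the trace format, and the name `classify_misses` are likewise hypothetical, chosen to keep the example short.

```python
from typing import Dict, Iterable, Set, Tuple


def classify_misses(trace: Iterable[Tuple[int, int]], num_sets: int,
                    block_bytes: int = 64) -> Dict[str, int]:
    """Count hits and miss types for (cpu, address) references to a
    direct-mapped shared cache."""
    resident: Dict[int, int] = {}    # set index -> block currently stored
    evicted_by: Dict[int, int] = {}  # block -> cpu whose reference evicted it
    seen: Set[int] = set()           # blocks referenced at least once
    counts = {"hit": 0, "compulsory": 0, "intra": 0, "inter": 0}
    for cpu, addr in trace:
        block = addr // block_bytes
        index = block % num_sets
        if resident.get(index) == block:
            counts["hit"] += 1
            continue
        if block not in seen:
            counts["compulsory"] += 1  # first reference ever
        elif evicted_by[block] == cpu:
            counts["intra"] += 1       # this cpu evicted the block itself
        else:
            counts["inter"] += 1       # another cpu evicted the block
        seen.add(block)
        if index in resident:
            evicted_by[resident[index]] = cpu
        resident[index] = block
    return counts
```

In a trace where two processors alternate between blocks that map to the same set, most misses land in the inter-processor bucket, which is the interference that set pinning targets.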

- 13. [Figure: processors P1 to P4 with POP 1 to POP 4, a cache whose sets each hold Owner, other bits, and Data fields, and main memory]

- 14. R-NUCA: Use Class-Based Strategies
  - Solve for the common case!
  - Most current (and future) programs have the following types of accesses:
    1. Instruction access: shared, but read-only
    2. Private data access: read-write, but not shared
    3. Shared data access: read-write (or) read-only, but shared

- 15. R-NUCA: Can do this online!
  - We have information from the OS and TLB
  - For each memory block, classify it as
    - Instruction
    - Private data
    - Shared data
  - Handle them differently
    - Replicate instructions
    - Keep private data locally
    - Keep shared data globally
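One way to picture the online classification is the sketch below. It assumes, beyond what the slide states, that a data page counts as private until a second core touches it and is then treated as shared from that point on. The class and function names are hypothetical, and instruction replication is simplified to "use the requester's local slice".

```python
from enum import Enum
from typing import Dict


class Kind(Enum):
    INSTRUCTION = "instruction"
    PRIVATE = "private data"
    SHARED = "shared data"


_SHARED = -1  # marker stored in place of an owning core id


class PageClassifier:
    """Tracks, per page, whether one core or several have accessed it."""

    def __init__(self) -> None:
        self.owner: Dict[int, int] = {}  # page -> core id, or _SHARED

    def classify(self, core: int, page: int, is_fetch: bool) -> Kind:
        if is_fetch:
            return Kind.INSTRUCTION
        owner = self.owner.setdefault(page, core)  # first toucher owns the page
        if owner == core:
            return Kind.PRIVATE
        self.owner[page] = _SHARED                 # a second core touched it
        return Kind.SHARED


def placement(kind: Kind, core: int, block: int, n_tiles: int) -> int:
    """Pick the cache slice (tile) that should hold the block."""
    if kind is Kind.SHARED:
        return block % n_tiles  # one chip-wide copy, address-interleaved
    return core                 # private data and instruction replicas stay local
```

Under these assumptions a page moves from private to shared the first time a different core accesses it and never moves back, which keeps the bookkeeping to one small field per page.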

- 16. R-NUCA: Reactive Clustering
  - Assign clusters based on level of sharing
    - Private data given level-1 clusters (local cache)
    - Shared data given level-16 clusters (16 neighboring machines), etc.
  - Clusters ≈ overlapping sets in set-associative mapping
  - Within a cluster, “Rotational Interleaving”
    - Load balancing to minimize contention on bus and controller

- 17. Future Directions
  - Area has been closed.

- 18. Just Kidding…
  - Optimize for power consumption
  - Assess trade-offs between more caches and more cores
  - Minimize usage of OS, but still retain flexibility
  - Application adaptation to allocated cache quotas
  - Adding hardware-directed thread-level speculation

- 20. Backup
  - Commercial and research prototypes
    - Sun MAJC
    - Piranha
    - IBM Power 4/5
    - Stanford Hydra

- 21. Backup