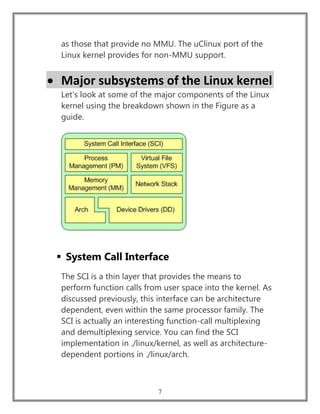

This document discusses the key components and architecture of the Linux kernel. It begins by defining the kernel as the central module of an operating system that loads first and remains in memory, providing essential services. It then describes the major subsystems of Linux, including process management, memory management, virtual file systems, network stacks, and device drivers. It concludes that the modular design of the Linux kernel has supported its growth and success through independent and extensible development of these subsystems.

![8

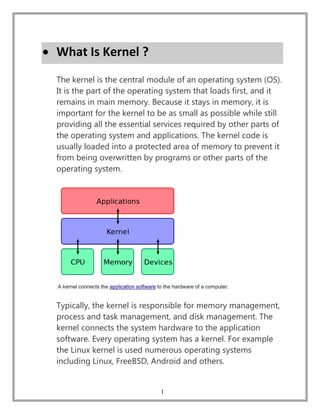

Process Management

Process management is focused on the execution of

processes. In the kernel, these are called threads and

represent an individual virtualization of the processor

(thread code, data, stack, and CPU registers). In user

space, the term process is typically used, though the

Linux implementation does not separate the two

concepts (processes and threads). The kernel provides an

application program interface (API) through the SCI to

create a new process (fork, exec, or Portable Operating

System Interface [POSIX] functions), stop a process (kill,

exit), and communicate and synchronize between them

(signal, or POSIX mechanisms).

Also in process management is the need to share the

CPU between the active threads. The kernel implements

a novel scheduling algorithm that operates in constant

time, regardless of the number of threads vying for the

CPU. This is called the O(1) scheduler, denoting that the

same amount of time is taken to schedule one thread as

it is to schedule many. The O(1) scheduler also supports

multiple processors (called Symmetric MultiProcessing,

or SMP). You can find the process management sources

in ./linux/kernel and architecture-dependent sources in

./linux/arch).](https://image.slidesharecdn.com/linuxkernel-190912163049/85/Linux-kernel-Architecture-and-Properties-9-320.jpg)
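The create, stop, and wait calls named above can be tried from user space. The following is a minimal sketch, assuming a Unix-like system and using Python's thin wrappers around the underlying system calls (`os.fork`, `os.execvp`, `os.waitpid`, `os.kill`). The helper names `run_child` and `kill_child` are hypothetical and chosen only for illustration.

```python
import os
import signal
import sys


def run_child(argv):
    """Fork, exec argv in the child, and return the child's exit code.

    Returns a negative signal number if the child was killed by a signal.
    """
    pid = os.fork()
    if pid == 0:
        # Child: replace this process image with the requested program.
        try:
            os.execvp(argv[0], argv)
        finally:
            # Only reached if exec failed; leave without running cleanup
            # handlers inherited from the parent.
            os._exit(127)
    # Parent: block until the child terminates, then decode its status.
    _, status = os.waitpid(pid, 0)
    if os.WIFEXITED(status):
        return os.WEXITSTATUS(status)
    return -os.WTERMSIG(status)


def kill_child():
    """Fork a child that only waits, stop it with kill, and return the
    number of the signal that terminated it (None if it exited normally)."""
    pid = os.fork()
    if pid == 0:
        signal.pause()  # child sleeps until a signal arrives
        os._exit(0)
    # SIGKILL cannot be caught or ignored, so the outcome is deterministic.
    os.kill(pid, signal.SIGKILL)
    _, status = os.waitpid(pid, 0)
    return os.WTERMSIG(status) if os.WIFSIGNALED(status) else None


if __name__ == "__main__":
    code = run_child([sys.executable, "-c", "import sys; sys.exit(7)"])
    print("child exit code:", code)
    print("child killed by signal:", kill_child())
```

The parent and child are distinguished only by the return value of `fork`, which is why the same function body contains both code paths. Waiting on the child with `waitpid` also collects its exit status so that no zombie process is left behind.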

File Systems

The term filesystem has two somewhat different meanings, both of which are commonly used. This can be confusing to novices, but the meaning is usually clear from the context.

One meaning is the entire hierarchy of directories (also referred to as the directory tree) that is used to organize files on a computer system. On Linux and Unix, the directories start with the root directory (designated by a forward slash), which contains a series of subdirectories, each of which, in turn, contains further subdirectories, and so on.

A variant of this definition is the part of the directory tree that is located on a single partition or disk. (A partition is a section of a hard disk that contains a single type of filesystem.)

The second meaning is the type of filesystem, that is, how the storage of data (files, folders, etc.) is organized on a computer disk (hard disk, floppy disk, CDROM, etc.) or on a partition on a hard disk. Each type of filesystem has its own set of rules for controlling the allocation of disk space to files and for associating data about each file (referred to as metadata) with that file, such as its filename, the directory in which it is located, its permissions, and its creation date.

An example of a sentence using the word filesystem in the first sense is: "Alice installed Linux with the filesystem spread over two hard disks rather than on a single hard disk." This refers to the fact that the entire hierarchy of directories of a Linux installation can be placed on a single disk or spread over multiple disks, including disks on different computers (or even disks on computers at different locations).

An example of a sentence using the second meaning is: "Bob installed Linux using only the ext3 filesystem instead of using both the ext2 and ext3 filesystems." This refers to the fact that a single Linux installation can contain one or multiple types of filesystems. One hard disk can contain one or multiple types of filesystems (each on its own partition), and a single type of filesystem can be spread across multiple hard disks.

Virtual file system

The virtual file system (VFS) is an interesting aspect of the Linux kernel because it provides a common interface abstraction for file systems. The VFS provides a switching layer between the SCI and the file systems supported by the kernel (see the Figure).
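Both meanings of filesystem show up in the kernel's mount table: one directory tree, assembled from mount points that may each hold a different filesystem type. The sketch below is an illustration only, assuming the whitespace-separated layout of `/proc/mounts` (device, mount point, type, options, ...). The names `parse_mounts`, `fs_type_of`, and `SAMPLE_MOUNTS` are hypothetical, the sample table is made up to echo the ext2/ext3 example, and escaped characters in mount points (such as `\040` for a space) are not decoded.

```python
import os

# Made-up mount table in /proc/mounts layout, for illustration only.
SAMPLE_MOUNTS = """\
/dev/sda1 / ext3 rw 0 0
/dev/sdb1 /home ext2 rw 0 0
proc /proc proc rw 0 0
"""


def parse_mounts(text):
    """Parse /proc/mounts-style text into (mount_point, fs_type) pairs."""
    mounts = []
    for line in text.splitlines():
        fields = line.split()
        if len(fields) >= 3:
            mounts.append((fields[1], fields[2]))
    return mounts


def fs_type_of(path, mounts):
    """Return the type of the filesystem that holds path.

    The deepest mount point that is a prefix of the path wins; among
    identical mount points, the later entry shadows the earlier one.
    """
    path = os.path.abspath(path)
    best_point, best_type = "", None
    for point, fs_type in mounts:
        prefix = point.rstrip("/") + "/"
        matches = path == point or path.startswith(prefix)
        if matches and len(point) >= len(best_point):
            best_point, best_type = point, fs_type
    return best_type


if __name__ == "__main__":
    mounts = parse_mounts(SAMPLE_MOUNTS)
    for p in ("/etc/fstab", "/home/alice/notes.txt", "/proc/cpuinfo"):
        print(p, "->", fs_type_of(p, mounts))
```

On a Linux machine, passing the contents of `/proc/mounts` to `parse_mounts` gives the live table. Whatever type `fs_type_of` reports for a path, a program opens and reads the file with the same calls, which is the switching role the VFS plays between the SCI and the individual file systems.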