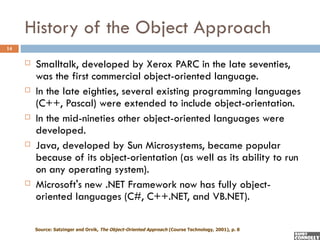

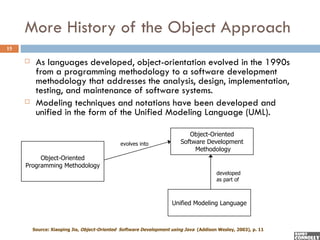

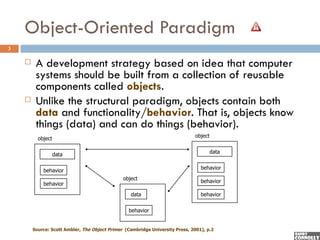

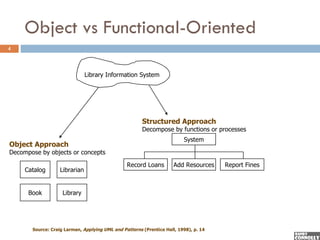

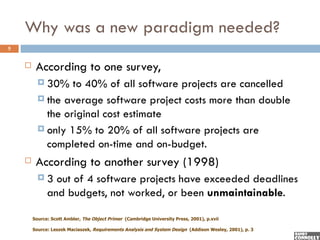

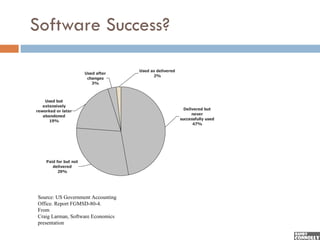

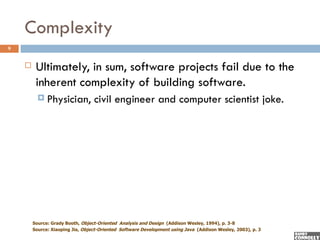

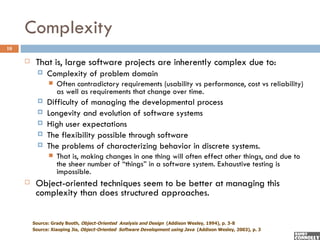

The document introduces object-orientation as a programming and analysis/design paradigm that emphasizes building software from reusable components called objects, which combine data and behavior. It discusses the limitations of traditional programming methodologies and presents the object-oriented approach as a solution to common software project failures caused by complexity and changing requirements. Additionally, it outlines the historical development of object-oriented programming and its evolution into a comprehensive software development methodology.

![Paradigms](https://image.slidesharecdn.com/introobjectoriented-090521120359-phpapp02/85/OO-Development-1-Introduction-to-Object-Oriented-Development-2-320.jpg)

Object-orientation is both a programming and analysis/design paradigm.

A paradigm is a set of theories, standards and methods that together represent a way of organizing knowledge; that is, a way of viewing the world [Kuhn 70].

Examples of programming paradigms:

- Procedural (Pascal, C)
- Logic (Prolog)
- Functional (Lisp)
- Object-oriented (C++, Smalltalk, Java, C#, VB.NET)

Example of another analysis/design paradigm:

- Structural (process modeling, data flow diagrams, logic modeling)
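To make the contrast between the procedural and object-oriented paradigms concrete, here is a minimal sketch. It uses Python purely for brevity (the slide does not list it), and the bank-account scenario, `deposit_procedural`, and `Account` are hypothetical names invented for illustration, not taken from the slides.

```python
# Procedural style: the data (a dict) and the behavior (a function) live apart.
def deposit_procedural(account, amount):
    """Update the dict in place and return the new balance."""
    if amount <= 0:
        raise ValueError("amount must be positive")
    account["balance"] += amount
    return account["balance"]


# Object-oriented style: the object bundles its data with its behavior.
class Account:
    """Hypothetical example class combining state and operations."""

    def __init__(self, owner, balance=0):
        self.owner = owner
        self._balance = balance  # internal state, changed only via methods

    def deposit(self, amount):
        if amount <= 0:
            raise ValueError("amount must be positive")
        self._balance += amount
        return self._balance

    @property
    def balance(self):
        return self._balance


record = {"owner": "Ada", "balance": 100}
procedural_result = deposit_procedural(record, 50)

acct = Account("Ada", 100)
oo_result = acct.deposit(50)
```

Both versions compute the same result. The difference is where the knowledge lives: the procedural function depends on the caller's dict layout, while `Account` keeps that layout private behind its methods.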

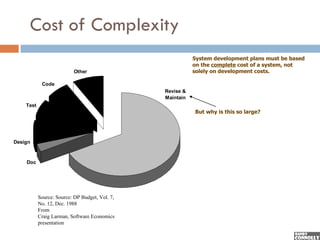

![Why is Maintenance so Expensive?](https://image.slidesharecdn.com/introobjectoriented-090521120359-phpapp02/85/OO-Development-1-Introduction-to-Object-Oriented-Development-12-320.jpg)

An AT&T study indicates that business rules (i.e., user requirements) change at the rate of 8% per month!

Another study found that 40% of requirements arrived after development was well under way [Capers Jones].

Thus the key software development goal should be to reduce the time and cost of revising, adapting and maintaining software.

Object technology is especially good at:

- Reducing the time to adapt an existing system (quicker reaction to changes in the business environment).
- Reducing the effort, complexity, and cost of change.

From Craig Larman, Software Economics presentation
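One way an object-oriented design can keep the cost of a changed business rule low is to model each rule as its own object, so a new requirement becomes a new class rather than an edit to existing code. The sketch below is only an illustration under that assumption; `PricingRule`, `SeasonalDiscount`, `FlatShippingFee`, and `checkout` are hypothetical names, not from the slides.

```python
class PricingRule:
    """Hypothetical base class: one business rule applied to an order total."""

    def apply(self, total):
        return total


class SeasonalDiscount(PricingRule):
    """Rule present in the original system: a percentage discount."""

    def __init__(self, percent):
        self.percent = percent

    def apply(self, total):
        return total * (100 - self.percent) / 100


class FlatShippingFee(PricingRule):
    """Rule added later; existing classes and checkout() stay untouched."""

    def __init__(self, fee):
        self.fee = fee

    def apply(self, total):
        return total + self.fee


def checkout(total, rules):
    """Apply each rule in order; knows nothing about concrete rule types."""
    for rule in rules:
        total = rule.apply(total)
    return total


original = checkout(200, [SeasonalDiscount(10)])
revised = checkout(200, [SeasonalDiscount(10), FlatShippingFee(5)])
```

Here the late-arriving requirement (a shipping fee) is absorbed by adding `FlatShippingFee` and passing it to `checkout`, without touching code that already works.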