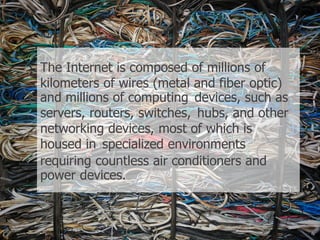

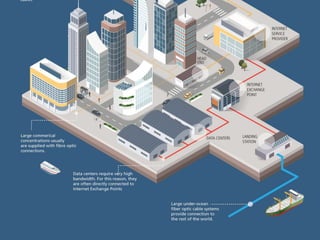

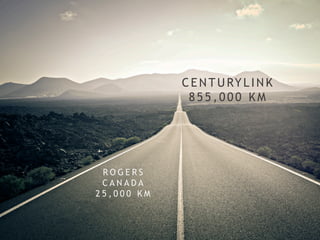

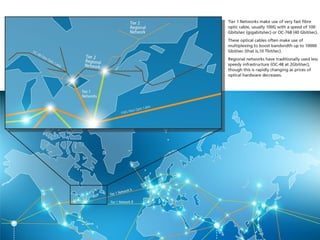

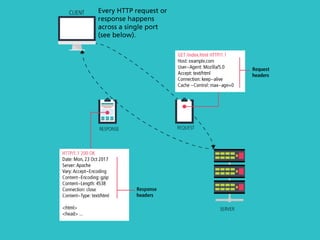

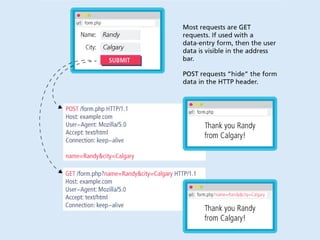

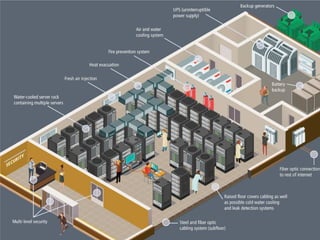

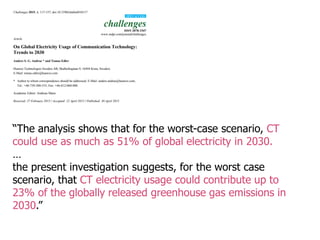

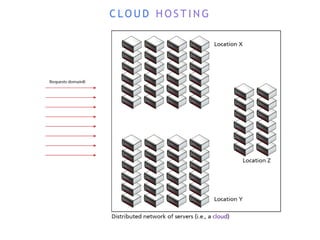

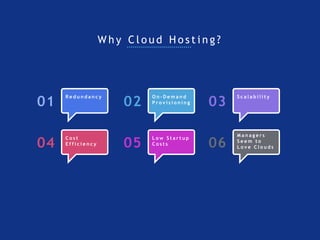

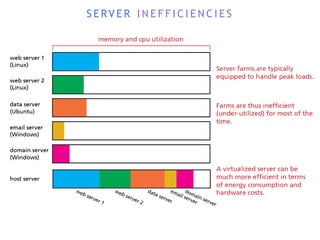

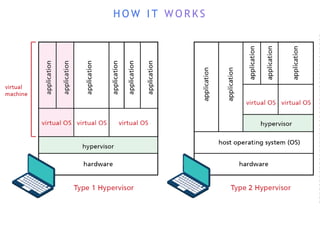

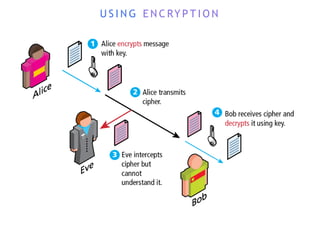

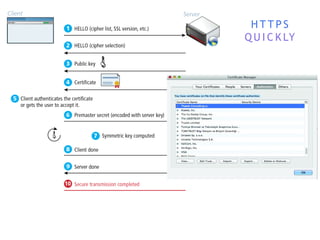

The internet is a complex infrastructure built on physical networks: cables, routers, and other devices. Its core is operated largely by Tier 1 networks, which can reach every other network without paying for transit, and Tier 2 networks, which peer with some networks but buy transit from Tier 1 providers to reach the rest. Data centers host online services and consume significant amounts of electricity, and projections point to rising energy consumption and carbon emissions as demand for computing resources grows. Cloud computing improves how those resources are managed, while encryption protocols such as HTTPS protect data in transit; both are central to security and resource management in today's digital landscape.
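To make "encryption like HTTPS" more concrete, here is a minimal client-side sketch using Python's standard `ssl` and `socket` modules. It shows the two checks an HTTPS client performs before sending any data: it verifies that the server's certificate chains to a trusted authority, and it verifies that the certificate matches the requested hostname. The helper names `make_https_context` and `fetch_head`, and the choice of TLS 1.2 as the minimum protocol version, are illustrative assumptions and do not come from the slides.

```python
import socket
import ssl


def make_https_context() -> ssl.SSLContext:
    """Build a client-side TLS context suitable for HTTPS (illustrative helper)."""
    # create_default_context() loads the system's trusted CA certificates and
    # turns on certificate verification (CERT_REQUIRED) and hostname checking.
    ctx = ssl.create_default_context()
    # Assumption for this sketch: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def fetch_head(host: str, port: int = 443, timeout: float = 5.0) -> str:
    """Open a TLS connection, send an HTTP/1.1 HEAD request, and return
    the negotiated TLS version plus the first line of the first response chunk."""
    ctx = make_https_context()
    # Plain TCP connection first; TLS is layered on top of it.
    with socket.create_connection((host, port), timeout=timeout) as raw:
        # server_hostname enables SNI and the certificate/hostname match check.
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            request = (
                f"HEAD / HTTP/1.1\r\nHost: {host}\r\n"
                "Connection: close\r\n\r\n"
            )
            # Everything sent from here on is encrypted on the wire.
            tls.sendall(request.encode("ascii"))
            first_chunk = tls.recv(4096).decode("latin-1")
            status_line = first_chunk.split("\r\n", 1)[0]
            return f"{tls.version()} {status_line}"


# Example (requires network access): fetch_head("example.com")
```

If the certificate is untrusted or issued for a different name, `wrap_socket` raises `ssl.SSLCertVerificationError` instead of returning a connection, so no request is ever sent to an unverified server.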