The draft document provides an overview of Network Interface Card (NIC) virtualization concepts and technologies on IBM Flex System, including configuration examples and deployment scenarios. It covers the introduction to I/O module virtualization, converged networking, and architecture specifics of IBM Flex System networking. This document is intended for technical support and users of various IBM networking systems as of May 2014.

![Chapter 4. NIC virtualization considerations on the switch side 65

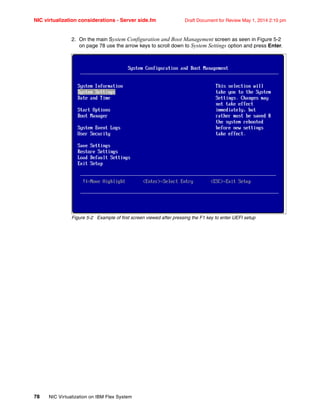

Draft Document for Review May 1, 2014 2:10 pm NIC virtualization considerations - Switch side.fm

vlan 30,40

enable

vmember INTA1.2

Optionally, Example 4-4 adds the ability to detect uplink failures, referred to as failover. Failover monitors an uplink port or port channel; when it detects a failed link or port channel, the I/O module disables any associated members (INT ports) or vmembers (UFP vPorts).

Example 4-4 UFP failover of a vmember

failover trigger 1 mmon monitor member EXT1

failover trigger 1 mmon control vmember INTA1.1

failover trigger 1 enable
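
The trigger logic in Example 4-4 can be modelled in a few lines of Python. This is only an illustrative sketch, not switch code: the `FailoverTrigger` class and the `disabled_members` function are hypothetical names, and the rule that a trigger fires only when every monitored uplink is down is an assumption (with the single monitored port EXT1 in Example 4-4 the distinction does not matter).

```python
from dataclasses import dataclass, field


@dataclass
class FailoverTrigger:
    """Hypothetical model of one failover trigger (not an I/O module API)."""
    monitor: set = field(default_factory=set)   # uplink ports being watched
    control: set = field(default_factory=set)   # members/vmembers to disable
    enabled: bool = True


def disabled_members(trigger, link_up):
    """Return the controlled members that the trigger would disable.

    link_up maps a port name to True (link up) or False (link down).
    Assumption: the trigger fires only when all monitored uplinks are down.
    """
    if not trigger.enabled or not trigger.monitor:
        return set()
    all_down = all(not link_up.get(port, False) for port in trigger.monitor)
    return set(trigger.control) if all_down else set()


# Mirrors Example 4-4: monitor EXT1, control vmember INTA1.1.
trigger1 = FailoverTrigger(monitor={"EXT1"}, control={"INTA1.1"})

print(disabled_members(trigger1, {"EXT1": True}))   # uplink healthy
print(disabled_members(trigger1, {"EXT1": False}))  # uplink failed
```

While EXT1 is up, nothing is disabled; once EXT1 goes down, the only controlled vmember, INTA1.1, is returned as disabled.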

Configuration validation and state of a UFP vPort

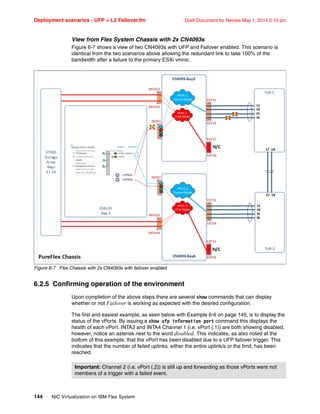

Configuring an I/O module for UFP is straightforward, but several troubleshooting commands can be used to validate the configuration and the state of a vPort, as shown in Example 4-5 and Figure 4-7 on page 73.

Example 4-5 shows the output for a successfully configured vPort with UFP selected and running on the compute node.

Example 4-5 Displaying individual UFP vPort configuration and status

PF_CN4093a#show ufp information vport port 3 vport 1

-------------------------------------------------------------------

vPort state evbprof mode svid defvlan deftag VLANs

--------- ----- ------- ---- ---- ------- ------ ---------

INTA3.1 up dis trunk 4002 10 dis 10 20 30

The fields shown in Example 4-5 are described below.

vPort = the virtual port ID [port.vport]

state = the state of the vPort (up, down, or disabled)

evbprof = used only when an Edge Virtual Bridge profile is in use, i.e. 5000V

mode = the vPort mode type, e.g. access, trunk, tunnel, fcoe, auto

svid = the reserved VLAN (4001-4004) used for UFP vPort communication with the Emulex NIC

defvlan = the default VLAN, which is the PVID/native VLAN for that vPort (untagged)

deftag = the default tag; disabled by default, it provides the option to tag the defvlan

VLANs = the list of VLANs assigned to that vPort
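
When this output is collected by a script, the field descriptions above map naturally onto a small parser. The following Python sketch is illustrative only: `parse_vport_row` is a hypothetical helper, and it assumes that the columns are whitespace-separated in the order shown in Example 4-5 and that every token after the seventh column is a VLAN ID.

```python
def parse_vport_row(line):
    """Split one data row of 'show ufp information vport' into named fields.

    Assumes whitespace-separated columns in the order shown in Example 4-5,
    with every token after the seventh column being a VLAN ID.
    """
    tokens = line.split()
    if len(tokens) < 7:
        raise ValueError(f"unexpected vPort row: {line!r}")
    port, _, index = tokens[0].partition(".")
    return {
        "vport": tokens[0],          # e.g. INTA3.1
        "port": port,                # e.g. INTA3
        "index": int(index),         # vPort number on that port
        "state": tokens[1],
        "evbprof": tokens[2],
        "mode": tokens[3],
        "svid": int(tokens[4]),
        "defvlan": int(tokens[5]),
        "deftag": tokens[6],
        "vlans": [int(v) for v in tokens[7:]],
    }


# Data row copied from Example 4-5.
row = parse_vport_row("INTA3.1 up dis trunk 4002 10 dis 10 20 30")

# Simple checks based on the field descriptions.
is_up = row["state"] == "up"
svid_in_reserved_range = 4001 <= row["svid"] <= 4004
print(row["vport"], "up:", is_up, "svid reserved:", svid_in_reserved_range)
```

A check like `svid_in_reserved_range` is a quick way to confirm that the vPort is using one of the reserved VLANs 4001-4004 described for the svid field.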

Other useful UFP vPort troubleshooting commands are shown in Example 4-6.

Example 4-6 Displaying multiple UFP vPort configuration and status

PF_CN4093a(config)#show ufp information port

-----------------------------------------------------------------

Alias Port state vPorts chan 1 chan 2 chan 3 chan 4

------- ---- ----- ------ --------- --------- --------- ---------

INTA1 1 dis 0 disabled disabled disabled disabled

INTA2 2 dis 0 disabled disabled disabled disabled

INTA3 3 ena 1 up disabled disabled disabled

.](https://image.slidesharecdn.com/nicvirtualizationonibmflexsystems-140519024712-phpapp01/85/NIC-Virtualization-on-IBM-Flex-Systems-79-320.jpg)

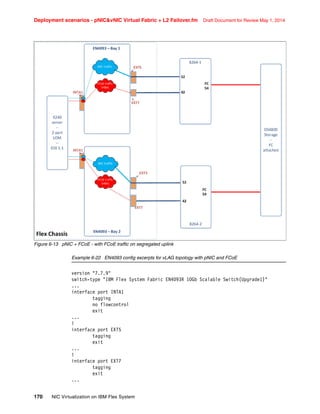

![177

Draft Document for Review May 1, 2014 2:10 pm Deployment scenarios - pNICvNIC Virtual Fabric + L2

Example 6-25 shows an example configuration of Virtual Fabric vNIC with FCoE. In this example, port EXT7 carries FCoE traffic upstream to the G8264CS switch, where the FCF resides.

Example 6-25 Virtual Fabric vNIC with FCoE

vnic enable

vnic port INTA1 index 1

enable

bandwidth 40

vnic port INTA1 index 2

enable

bandwidth 30

vnic port INTA1 index 3

enable

bandwidth 20

vnic port INTA1 index 4

enable

bandwidth 10

vnic vnicgroup 1

vlan 3001

member INTA1.1

(additional server vnics can go here)

port ext5

failover

enable

.... the FCoE vNIC cannot be added to a vNIC group

.... additional groups for data vNICs would be configured here

failover trigger 3 mmon monitor member EXT7

failover trigger 3 mmon control member INTA1[,INTA2 ... etc.]

failover trigger 3 enable

... configuration for FCoE and for FCoE uplink to G8264CS....

cee enable

fcoe fips enable

int port ext7

vlan 1002

member ext7

The above configuration implements failover for both the data and FCoE vNIC instances, but the two cases behave differently:

If port EXT5 fails, vNIC INTA1.1 and any other vNICs configured in vNIC group 1 (which would be on other servers) are placed administratively down. The same happens if an uplink port configured in vNIC group 3 or 4 fails; the vNICs associated with that group are disabled.

If the FCoE uplink, port EXT7, fails, then port INTA1 and the other ports specified in the failover trigger are placed administratively down. This includes all of the vNIC instances configured on those ports, even though they might still have a working path to the upstream network.](https://image.slidesharecdn.com/nicvirtualizationonibmflexsystems-140519024712-phpapp01/85/NIC-Virtualization-on-IBM-Flex-Systems-191-320.jpg)
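
The difference between the two failover behaviors can be illustrated with a short Python model. This is a sketch under stated assumptions, not a description of the switch implementation: the data structures and the `vnics_down` function are hypothetical, the topology is reduced to the single server port INTA1 and vNIC group 1 from Example 6-25, and a group or trigger is assumed to act only when all of its uplinks have failed.

```python
# vNIC instances per internal port, as in Example 6-25 (INTA1 indexes 1-4).
VNICS = {"INTA1": ["INTA1.1", "INTA1.2", "INTA1.3", "INTA1.4"]}

# vNIC group 1: uplink EXT5, member INTA1.1, group failover enabled.
VNIC_GROUPS = [{"uplinks": {"EXT5"}, "members": {"INTA1.1"}, "failover": True}]

# Failover trigger 3: monitor EXT7, control the whole port INTA1.
TRIGGERS = [{"monitor": {"EXT7"}, "control_ports": {"INTA1"}}]


def vnics_down(failed_uplinks):
    """Return the vNIC instances taken down for a set of failed uplinks."""
    failed = set(failed_uplinks)
    down = set()
    # Group failover: only the members of the affected group are disabled.
    for group in VNIC_GROUPS:
        if group["failover"] and group["uplinks"] <= failed:
            down |= group["members"]
    # Trigger failover: the whole controlled port goes down, and with it
    # every vNIC instance configured on that port.
    for trigger in TRIGGERS:
        if trigger["monitor"] <= failed:
            for port in trigger["control_ports"]:
                down |= set(VNICS.get(port, []))
    return down


print(sorted(vnics_down({"EXT5"})))  # only the group 1 member
print(sorted(vnics_down({"EXT7"})))  # every vNIC on INTA1
```

In this model a failure of EXT5 affects only INTA1.1, whereas a failure of EXT7 takes down all four vNIC instances on INTA1, including those whose data uplinks are still healthy.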