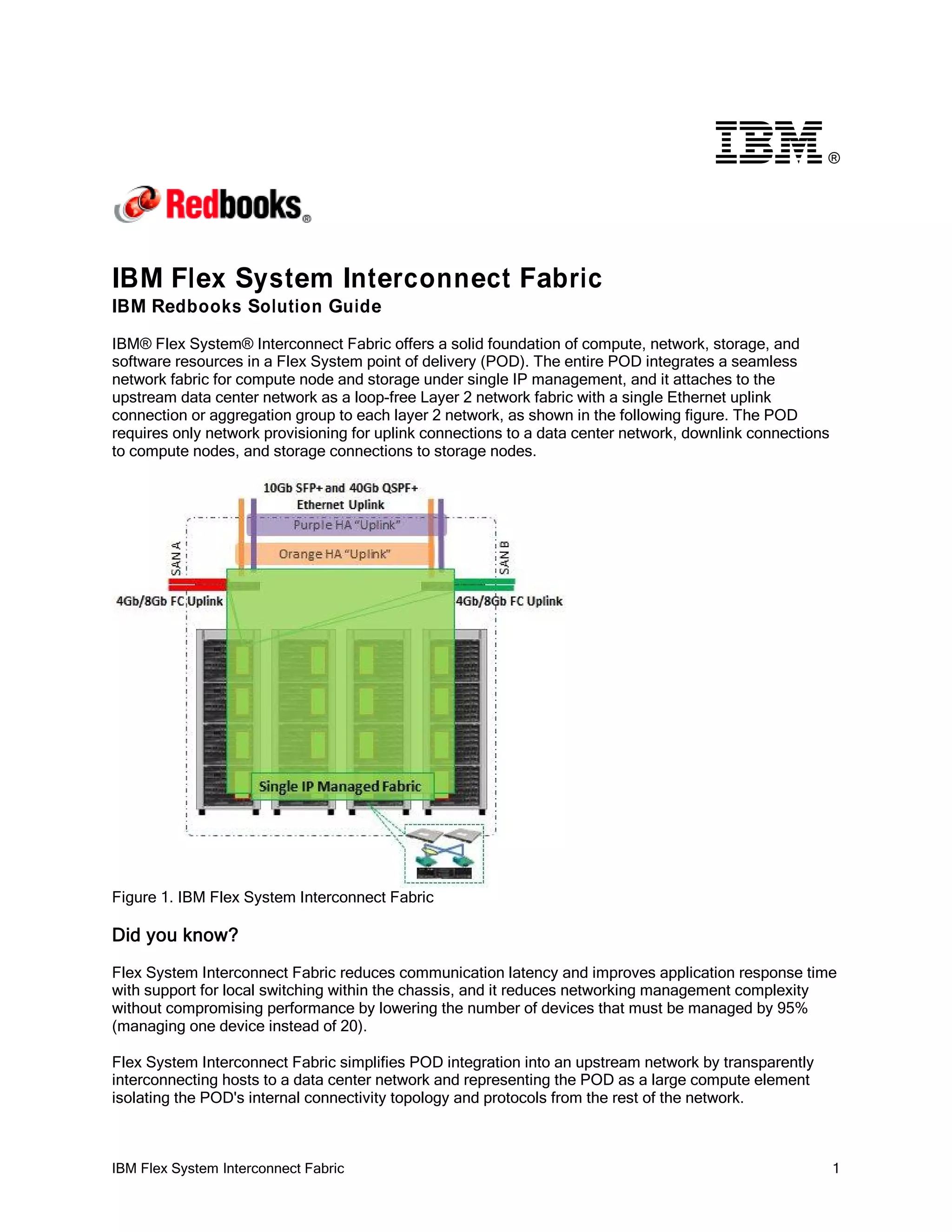

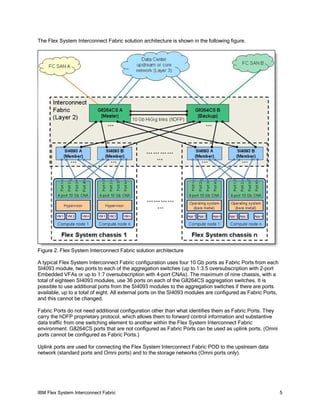

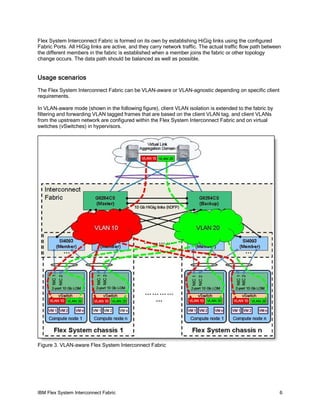

The IBM Flex System Interconnect Fabric is a scalable network fabric that connects compute nodes and storage within a Flex System POD and is managed through a single IP address. Its key components are the SI4093 System Interconnect Module, which connects the nodes within each chassis, and the G8264CS aggregation switches. The fabric reduces latency and simplifies management by presenting the POD to upstream networks as a single network element while providing a loop-free Layer 2 network internally.