Neural Networks Using TensorFlow on an Amazon Deep Learning Server

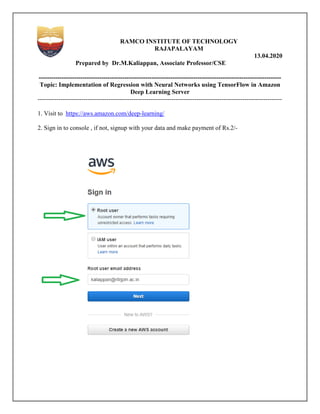

- 1. RAMCO INSTITUTE OF TECHNOLOGY, RAJAPALAYAM. 13.04.2020. Prepared by Dr. M. Kaliappan, Associate Professor/CSE. Topic: Implementation of Regression with Neural Networks using TensorFlow in Amazon Deep Learning Server. 1. Visit https://aws.amazon.com/deep-learning/ 2. Sign in to the console. If you do not have an account, sign up with your details and make the payment of Rs. 2/-.

- 2. 3. Click on Services, then click on EC2. 4. Click on EC2 Dashboard and launch an instance.

- 3. 5. Press Ctrl+F and search for the keyword "deep learning". 6. Select Deep Learning AMI (Amazon Linux 2). 7. Choose an instance type. 8. Click Review and Launch. 9. Click Launch.

- 4. 10. Select "Create a new key pair". 11. Give the key file a name: key (any name). 12. Download the key pair. A key.pem file is downloaded and saved on your computer (in the Downloads folder).

- 5. 13. Select the instance you created. 14. Click the Connect button.

- 6. Copy the Public DNS: ec2-3-133-153-1.us-east-2.compute.amazonaws.com 15. Open a command prompt, go to the Downloads folder, and type the following command (replace key1.pem with the name of your downloaded key file): ssh -L localhost:8888:localhost:8888 -i key1.pem ec2-user@ec2-3-133-153-1.us-east-2.compute.amazonaws.com

- 7. Now we are connected to the Amazon Linux system.

- 8. 16. Type jupyter notebook

- 9. The Jupyter notebook server prints the URL at which it is running. 17. Open this URL in a browser. Click on the New button. Select the environment (conda_tensorflow_p36). Regression with Neural Networks using TensorFlow Keras API (in the slides, blue text represents code; copy and paste it into the Jupyter notebook). Figure 1: Neural Network

- 10. In regression, the machine should be able to predict a value, usually a numeric one.

  Generate Data: Here we generate some data using our own function. The function is non-linear, so ordinary line fitting may not work for it.

      def myfunc(x):
          if x < 30:
              mult = 10
          elif x < 60:
              mult = 20
          else:
              mult = 50
          return x*mult

  Let us check what this function returns.

      print(myfunc(10))
      print(myfunc(30))
      print(myfunc(60))

  It should print:

      100
      600
      3000

  Now, let us generate the data. We import the numpy library as np. Here x is a numpy array of input values: the arange function generates values from 0 up to (but not including) 100 in steps of 0.01.

      import numpy as np
      x = np.arange(0, 100, .01)
      print(x)

  The data:

      array([0.000e+00, 1.000e-02, 2.000e-02, ..., 9.997e+01, 9.998e+01, 9.999e+01])

  To call a function on every element of a numpy array, we first convert the function using vectorize. Afterwards, we convert the 1-D array to a 2-D array having only one value in the second dimension; you can think of it as a table of data with only one column.

      myfuncv = np.vectorize(myfunc)
      y = myfuncv(x)
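As an aside, np.vectorize is essentially a Python-level loop, so the same piecewise function can also be written with NumPy's own array operations. The sketch below assumes the thresholds and multipliers of myfunc shown above; the name myfunc_select is hypothetical and is not part of the original slides.

```python
import numpy as np

def myfunc(x):
    # Scalar piecewise function from the slides
    if x < 30:
        mult = 10
    elif x < 60:
        mult = 20
    else:
        mult = 50
    return x * mult

def myfunc_select(x):
    # Hypothetical array version: np.select uses the first condition that matches
    x = np.asarray(x, dtype=float)
    mult = np.select([x < 30, x < 60], [10, 20], default=50)
    return x * mult

x = np.arange(0, 100, .01)
y_loop = np.vectorize(myfunc)(x)   # element-by-element, as in the slides
y_array = myfunc_select(x)         # whole-array computation
print(np.allclose(y_loop, y_array))
```

Both versions should produce the same values; the array version simply avoids calling a Python function once per element.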

- 11. Reshape x into a single column:

      X = x.reshape(-1, 1)
      print(X)

  X looks like:

      [[0.000e+00], [1.000e-02], [2.000e-02], ... [9.997e+01], [9.998e+01], [9.999e+01]]

  Now we have X representing the input data with a single feature and y representing the output. We will now split this data into two parts: a training set (X_train, y_train) and a test set (X_test, y_test). We are going to make the neural network learn from the training data, and once it has learnt how to produce y from X, we are going to test the model on the test set.

      import sklearn.model_selection as sk
      X_train, X_test, y_train, y_test = sk.train_test_split(X, y, test_size=0.33, random_state=42)

  Let us visualize what our data looks like. Here, we plot X_train vs y_train and X_test vs y_test. You can also try plotting X vs y.

      import matplotlib.pyplot as plt
      plt.scatter(X_train, y_train)
      plt.scatter(X_test, y_test)

  Figure 2: X_train vs y_train
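To make the split concrete, the sketch below shows roughly what a shuffled 67/33 split does, using only NumPy. It is an illustration under stated assumptions, not scikit-learn's implementation: the helper name simple_split is hypothetical, the rounding of the test-set size is an assumption, the target values are a placeholder, and the rows it selects will differ from those chosen by train_test_split with random_state=42.

```python
import numpy as np

def simple_split(X, y, test_size=0.33, seed=42):
    # Hypothetical helper: shuffle the row indices, then cut them into two parts
    rng = np.random.default_rng(seed)
    idx = rng.permutation(len(X))
    n_test = int(np.ceil(test_size * len(X)))  # assumed rounding rule
    test_idx, train_idx = idx[:n_test], idx[n_test:]
    return X[train_idx], X[test_idx], y[train_idx], y[test_idx]

X = np.arange(0, 100, .01).reshape(-1, 1)  # one feature column
y = (X * 2).ravel()                        # placeholder target for the demo
X_train, X_test, y_train, y_test = simple_split(X, y)
print(X_train.shape, X_test.shape)
```

Every row ends up in exactly one of the two sets, and each y value stays aligned with its X row because both arrays are indexed with the same shuffled indices.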

- 12. Figure 3: X_test vs y_test

  Let us import the TensorFlow library and check the version.

      import tensorflow as tf
      print(tf.__version__)

  Now, let us create a neural network using the Keras API of TensorFlow.

      # Import the Keras modules
      from keras.layers import Input, Dense
      from keras.models import Model

      # This returns a tensor. The shape is (1,) since the input only has one column
      inputs = Input(shape=(1,))

      # A layer instance is callable on a tensor, and returns a tensor
      # To the first layer we are feeding inputs
      x = Dense(32, activation='relu')(inputs)

      # To the next layer we are feeding the result of the previous call; here it is x
      x = Dense(64, activation='relu')(x)
      x = Dense(64, activation='relu')(x)

      # Predictions are the result of the neural network.
      # Notice that the predictions also have one column.
      predictions = Dense(1)(x)

      # This creates a model that includes
      # the Input layer and four Dense layers (three hidden, one output)
      model = Model(inputs=inputs, outputs=predictions)

      # Here the loss function is mse (Mean Squared Error) because it is a regression problem.
      model.compile(optimizer='rmsprop',
                    loss='mse',
                    metrics=['mse'])

- 13. Let us now train the model. First, it initializes the weights of each neuron with random values; then, using backpropagation, it tweaks the weights in order to get the appropriate result. Here we run 500 epochs (passes over the training data), feeding 100 records of X at a time.

      model.fit(X_train, y_train, epochs=500, batch_size=100)  # starts training

  Once we have trained the model, we can make predictions using the predict method of the model. In our case, the model should predict y from X. Let us test it on our test set and plot the results. The predictions are stored in y_pred so that the true values in y_test are not overwritten.

      y_pred = model.predict(X_test)
      plt.scatter(X_test, y_pred)

  References:
  1. https://www.tutorialspoint.com/python_deep_learning/python_deep_learning_artificial_neural_networks.htm
  2. https://cloudxlab.com/blog/regression-using-tensorflow-keras-api/
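To make the architecture and the loss concrete, the following NumPy-only sketch runs a forward pass through layers with the same sizes as the model in the slides (1 → 32 → 64 → 64 → 1) and computes the mean squared error. It is an illustration, not Keras's implementation: the weights are random and untrained, and the helper names init_layers, forward, and mse are hypothetical.

```python
import numpy as np

def init_layers(sizes, seed=0):
    # One (weights, bias) pair per Dense layer; random, untrained values
    rng = np.random.default_rng(seed)
    return [(rng.normal(scale=0.1, size=(n_in, n_out)), np.zeros(n_out))
            for n_in, n_out in zip(sizes[:-1], sizes[1:])]

def forward(layers, X):
    # ReLU on the hidden layers, no activation on the output layer
    h = X
    for i, (W, b) in enumerate(layers):
        h = h @ W + b
        if i < len(layers) - 1:
            h = np.maximum(h, 0.0)
    return h

def mse(y_true, y_pred):
    # Mean squared error: average of the squared differences
    return float(np.mean((np.ravel(y_true) - np.ravel(y_pred)) ** 2))

layers = init_layers([1, 32, 64, 64, 1])
n_params = sum(W.size + b.size for W, b in layers)
print(n_params)  # total number of weights and biases

X_demo = np.array([[10.0], [30.0], [60.0]])
y_demo = np.array([100.0, 600.0, 3000.0])  # myfunc values for these inputs
print(forward(layers, X_demo).shape)
print(mse(y_demo, forward(layers, X_demo)))
```

For these layer sizes the parameter count is 64 + 2112 + 4160 + 65 = 6401. Because the weights here are untrained, the printed error is large; training with backpropagation is what drives this number down.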