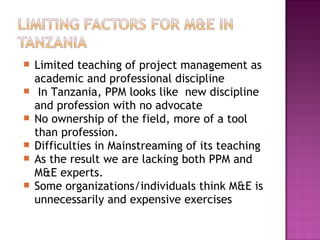

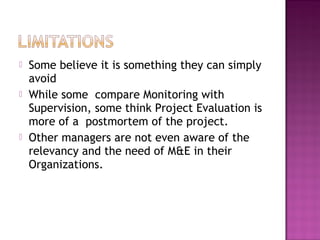

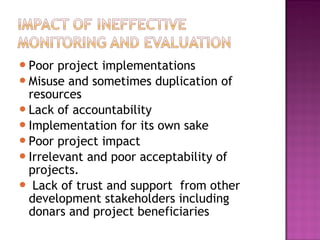

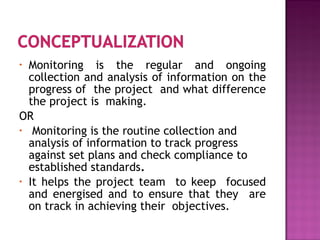

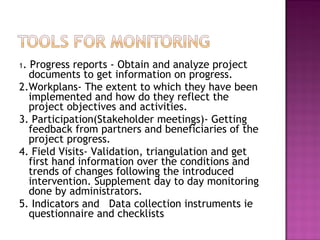

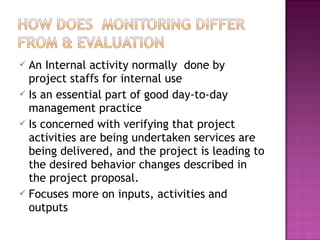

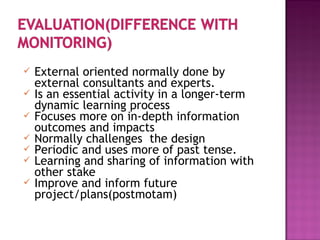

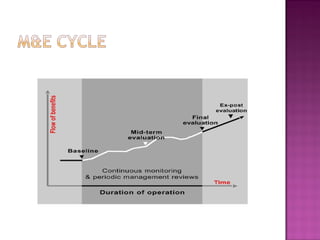

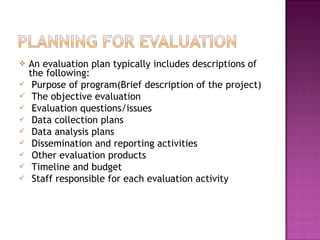

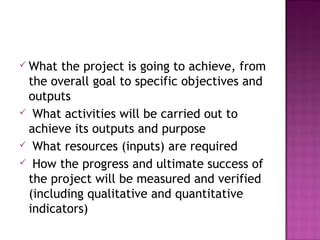

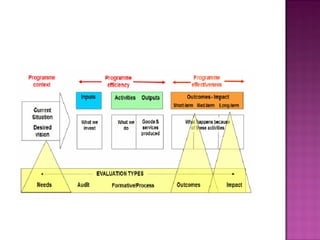

This document discusses monitoring and evaluation (M&E) capacity in Tanzania. It notes that while M&E is important for improving development outcomes, many countries, including Tanzania, lack the necessary M&E capacity at both the individual and institutional levels, and that comprehensive training is needed to close the gaps in M&E skills. The document also distinguishes monitoring, which tracks project progress, from evaluation, which assesses outcomes and impacts in more depth. Both are important management tools that provide useful feedback when integrated.