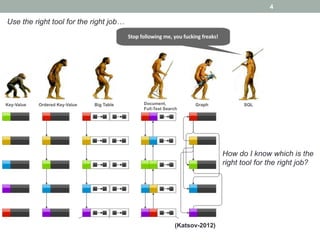

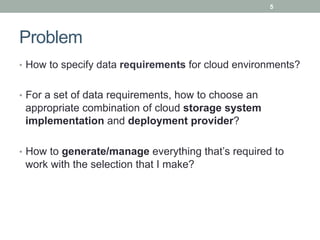

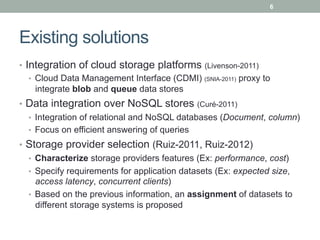

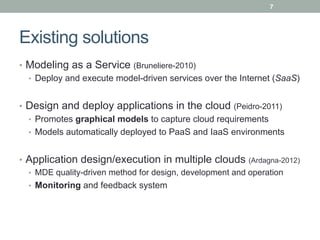

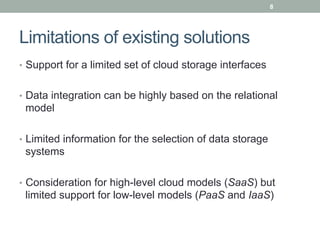

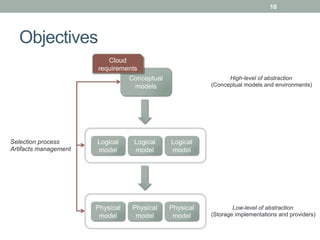

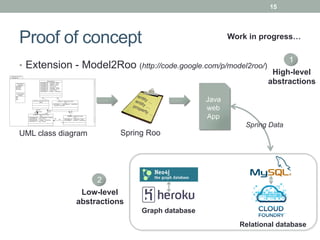

This document discusses model-driven approaches for cloud data storage. It outlines three objectives: 1) characterize cloud data storage requirements using conceptual models, 2) select appropriate cloud data storage implementations and providers based on those requirements, and 3) manage the artifacts needed to work with different storage solutions. Existing solutions are limited; the proposed approach instead uses model-driven engineering, with multiple levels of modeling and transformation, to map requirements to storage solutions.
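The layered mapping can be illustrated with a minimal Python sketch: a conceptual requirements model is transformed into a storage-level model (objective 2), which is then transformed into text artifacts (objective 3). Every class name, requirement attribute, selection rule, and artifact format below is a hypothetical assumption for illustration only. The source does not specify its metamodels or transformation rules.

```python
from dataclasses import dataclass, field
from typing import List


# Hypothetical conceptual (platform-independent) requirements model.
@dataclass
class Entity:
    name: str
    attributes: List[str]


@dataclass
class RequirementsModel:
    entities: List[Entity]
    needs_transactions: bool  # assumed flag: ACID guarantees required
    schema_varies: bool       # assumed flag: records of one entity differ in shape


# Hypothetical storage-level (platform-specific) model.
@dataclass
class StorageModel:
    kind: str  # "relational" | "document" | "key-value"
    collections: List[Entity] = field(default_factory=list)


def select_storage(req: RequirementsModel) -> StorageModel:
    """Model-to-model transformation: requirements -> storage kind.

    These rules are illustrative assumptions, not the approach's actual rules.
    """
    if req.needs_transactions:
        kind = "relational"
    elif req.schema_varies:
        kind = "document"
    else:
        kind = "key-value"
    return StorageModel(kind=kind, collections=list(req.entities))


def generate_artifacts(model: StorageModel) -> List[str]:
    """Model-to-text transformation: storage model -> artifact strings."""
    artifacts = []
    for e in model.collections:
        if model.kind == "relational":
            # Every column is typed TEXT purely to keep the sketch short.
            cols = ", ".join(f"{a} TEXT" for a in e.attributes)
            artifacts.append(f"CREATE TABLE {e.name} ({cols});")
        elif model.kind == "document":
            artifacts.append(f"collection {e.name}")
        else:
            artifacts.append(f"keyspace {e.name}")
    return artifacts


if __name__ == "__main__":
    req = RequirementsModel(
        entities=[Entity("orders", ["id", "total"])],
        needs_transactions=True,
        schema_varies=False,
    )
    storage = select_storage(req)
    print(storage.kind)                 # relational
    print(generate_artifacts(storage))  # ['CREATE TABLE orders (id TEXT, total TEXT);']
```

Keeping the two transformations as separate functions mirrors the idea of multiple modeling levels: the selection rules can change (for example, to weigh provider cost) without touching artifact generation, and vice versa.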