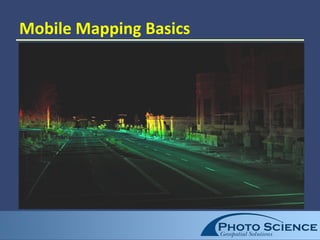

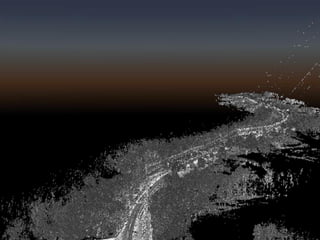

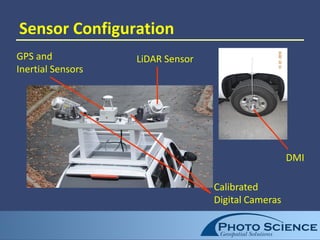

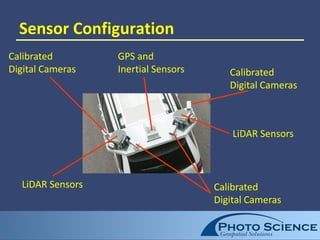

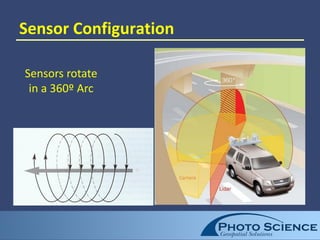

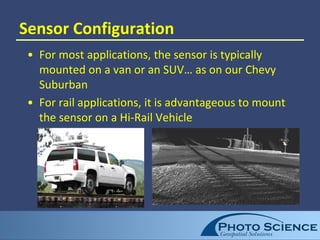

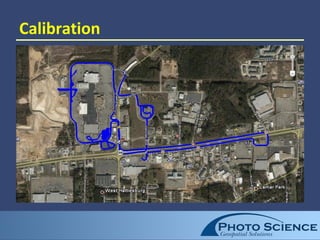

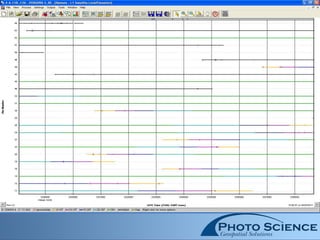

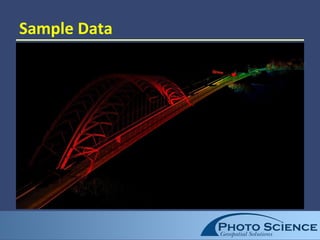

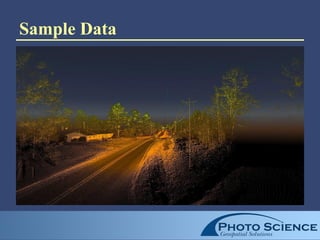

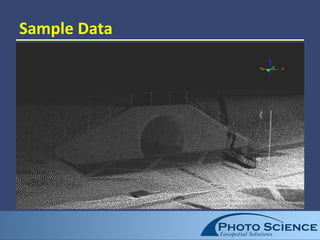

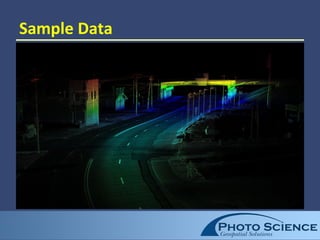

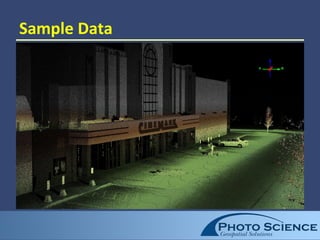

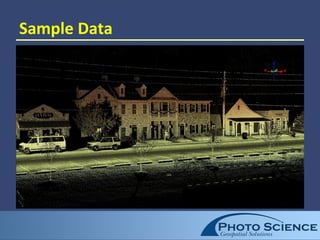

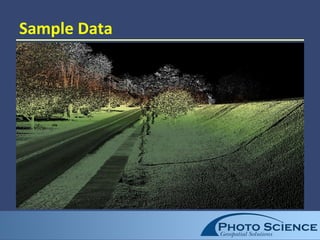

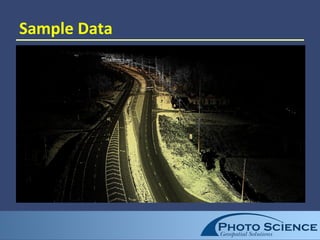

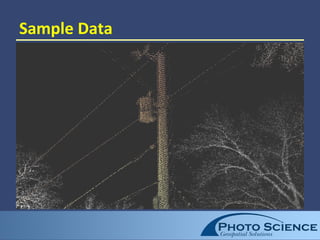

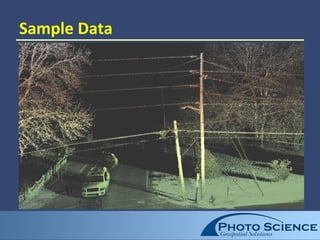

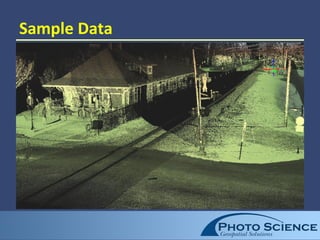

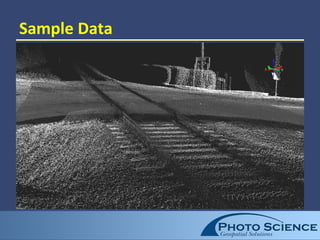

Mobile mapping uses vehicle-mounted sensors to efficiently collect dense 3D point clouds and imagery of linear infrastructure such as roads, railways, and utilities. The sensors include LiDAR scanners, which capture up to 500,000 3D points per second, along with digital cameras. Post-processing extracts accurate vector features from the point clouds for applications such as mapping and asset management. Accuracies down to 5 centimeters are possible with proper calibration and GPS configuration. Although it is limited to what is in the sensors' line of sight, mobile mapping offers safety advantages over traditional surveying and high productivity on linear projects.
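
To give a feel for what a 500,000 points-per-second capture rate means on the road, the following minimal sketch converts a scan rate and a driving speed into an average number of points per meter of travel. The function name and the driving speeds are illustrative assumptions, not figures from the slides, and the calculation ignores scan pattern, range, and overlap between passes.

```python
def points_per_meter(points_per_second: float, speed_kmh: float) -> float:
    """Average number of LiDAR points collected per meter of vehicle travel."""
    if speed_kmh <= 0:
        raise ValueError("speed_kmh must be positive")
    speed_ms = speed_kmh * 1000.0 / 3600.0  # convert km/h to m/s
    return points_per_second / speed_ms


MAX_RATE = 500_000  # points per second, the upper figure quoted in the summary

# Hypothetical driving speeds in km/h, chosen only for illustration.
for speed in (30, 60, 90):
    density = points_per_meter(MAX_RATE, speed)
    print(f"{speed} km/h: {density:,.0f} points per meter of travel")
```

Because the density falls in inverse proportion to speed, slower driving yields a denser point cloud for the same sensor, which is one reason collection speed is usually planned together with the required level of detail.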