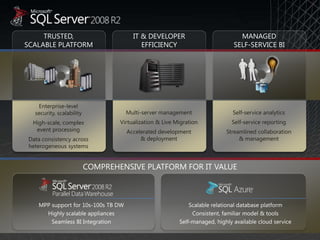

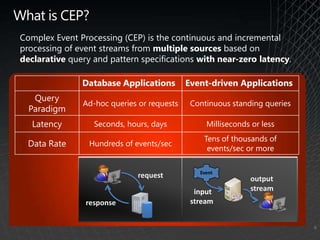

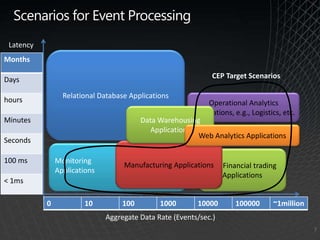

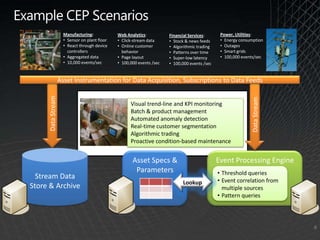

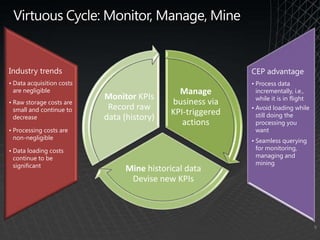

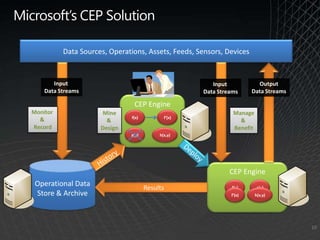

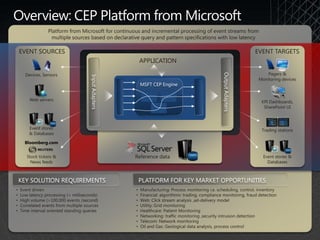

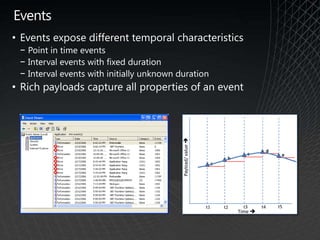

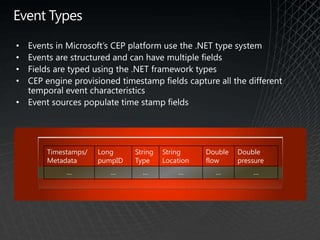

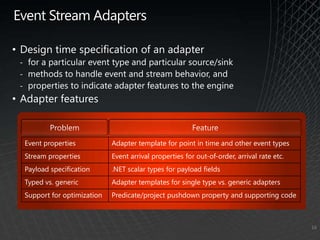

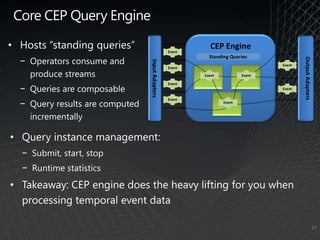

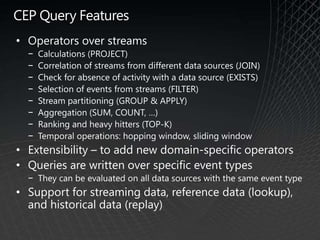

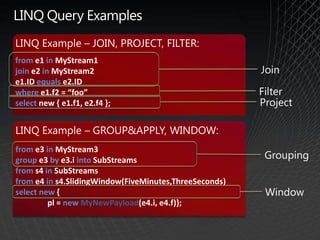

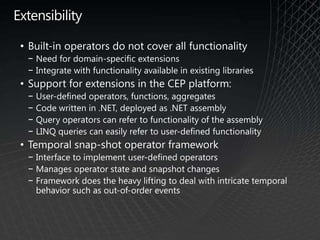

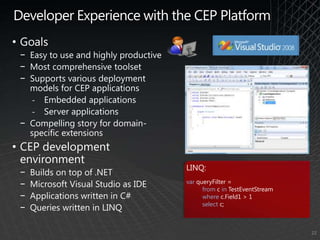

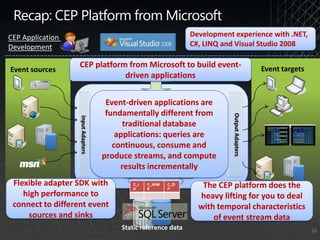

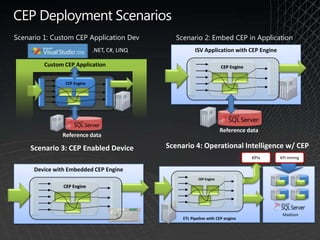

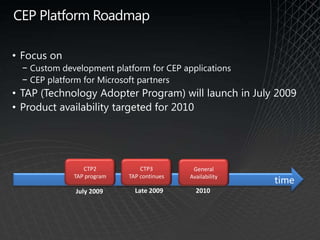

This presentation gives an overview of Microsoft's StreamInsight Complex Event Processing (CEP) platform. It covers CEP concepts and benefits, the StreamInsight architecture and development environment, and deployment scenarios. It is aimed at IT professionals who are new to CEP and introduces StreamInsight as Microsoft's platform for building event-driven applications that process streaming data with low latency.