The article presents Tiarrah computing as an integrated platform combining cloud, fog, and edge computing to address the challenges associated with the Internet of Things (IoT). It emphasizes high performance, flexibility in application development, and the use of open-source technologies to facilitate real-time data handling and dynamic resource allocation. The paper discusses the architecture, components, and application flow for effective deployment and management of IoT applications.

![International Journal of Electrical and Computer Engineering (IJECE)

Vol. 8, No. 2, April 2018, pp. 1247~1255

ISSN: 2088-8708, DOI: 10.11591/ijece.v8i2.pp1247-1255 1247

Journal homepage: http://iaescore.com/journals/index.php/IJECE

Tiarrah Computing: The Next Generation of Computing

Yanish Pradhananga, Pothuraju Rajarajeswari

Department of Computer Science Engineering, KL University, Guntur, Andhra Pradesh, India

Article Info ABSTRACT

Article history:

Received Jun 13, 2017

Revised Sep 18, 2017

Accepted Sep 25, 2017

The evolution of the Internet of Things (IoT) has brought several challenges for existing hardware, networks, and application development. These include handling real-time streaming and batch big data, real-time event handling, dynamic cluster resource allocation for computation, and wired and wireless networks of things. To address these challenges, many new technologies and strategies are being developed. Tiarrah Computing integrates the concepts of Cloud Computing, Fog Computing, and Edge Computing. Its main objectives are to decouple application deployment and to achieve high performance, flexible application development, high availability, ease of development, and ease of maintenance. Tiarrah Computing focuses on using existing open-source technologies to overcome the challenges that evolve along with IoT. This paper gives an overview of these technologies, shows how to design an application with them, and explains how to overcome most of the existing challenges.

Keywords:

Cloud computing

Edge computing

Fog computing

Internet of things

Real-time streaming

Copyright © 2018 Institute of Advanced Engineering and Science.

All rights reserved.

Corresponding Author:

Yanish Pradhananga,

Department of Computer Science Engineering,

K.L. University,

Green Fields, Guntur, Andhra Pradesh, India.

Email: hsinay@gmail.com

1. INTRODUCTION

The growing interest in the Internet of Things (IoT) has introduced a variety of new technologies and strategies to deal with the production-related data at the core of IoT [1]. While many of these technologies are not necessarily new, they are often unfamiliar to the industry and may require explanation. They are also developing rapidly and adding new features. Technologies such as Fog Computing and Edge Computing are evolving, and their purpose is to push intelligence and processing capabilities closer to the data source [2], [3] so that responses are quick. Fog computing shares the cloud computing load within the local area network and performs intelligence and processing in the fog layer.

Edge computing handles intelligence, processing, and communication capabilities in an edge gateway, or directly in an appliance with devices such as programmable automation controllers (PACs). Tiarrah Computing attempts to overcome the limitations of Cloud Computing, Fog Computing, and Edge Computing by integrating these computing concepts. Tiarrah Computing is a platform, or an application development framework, that addresses the challenges that Foghorn's technology is trying to solve. It is built with open-source technologies, which gives flexibility in development, deployment, and support, and allows upgrading to new releases to use their new features in an application. The IT industry can use Tiarrah Computing and benefit from reusing existing algorithms, code, automation logic, databases, and so on. Tiarrah Computing promotes handling mission-critical events at the device level for immediate action. Devices and logic based on a programmable logic controller (PLC), ARM controller, or field-programmable gate array (FPGA) can be used to handle mission-critical events. The proposed approach can handle real-time big data stream processing at on-site locations with a private cloud that offers features such as dynamic auto scaling and zero downtime. The system's purpose is to make use of both](https://image.slidesharecdn.com/v6618sep1713jun7612-10098-1-ed-201110090115/75/Tiarrah-Computing-The-Next-Generation-of-Computing-1-2048.jpg)

![

cloud services and local server services to build an efficient, reliable, secure, and high-performing application. Microservices enable high-performance processing, optimized analytics, and heterogeneous services to be hosted as close to the control and monitoring system as possible.

This system can be designed to address complex event processing (CEP) by writing rules in a powerful and expressive domain-specific language (DSL) over the multitude of incoming sensor data streams. These rules can then be used to prevent costly machine failures or downtime and to improve the efficiency and safety of industrial operations and processes in real time. The services are deployed on a cloud server as well as on a fog server located in a DMZ network. The services deployed in these two tiers communicate with each other as required. Users perform monitoring and control operations, in which data are exchanged with the services deployed in both layers. This paper explains the details of application development and deployment with existing technology, using a block diagram with technology details, an architectural diagram of the wired and wireless network of things with tier details, and a flow chart of the system.

2. LITERATURE SURVEY

2.1. Edge Computing

Edge computing is an approach that pushes the processing of certain data to the edge of the network. An application that must handle and process real-time data cannot tolerate network latency. Processing such data in the cloud increases response time, which is not acceptable in practice. Processing such data locally reduces the amount of data that needs to be sent for processing, which decreases network traffic and improves performance. The hardware devices in an edge network have very little memory and processing capacity. Therefore, it is recommended to handle only dedicated processes in the edge computing network.
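As an illustration of how local processing can reduce the data sent upstream, the following minimal sketch forwards a sensor reading only when it has changed by more than a threshold since the last forwarded value (report by exception). The function name `deadband_filter`, the threshold, and the sample values are assumptions made for this example and are not part of the Tiarrah Computing design.

```python
from typing import Iterable, Iterator, Optional


def deadband_filter(readings: Iterable[float], threshold: float) -> Iterator[float]:
    """Yield a reading only if it differs from the last forwarded one by more than threshold."""
    last_sent: Optional[float] = None
    for value in readings:
        if last_sent is None or abs(value - last_sent) > threshold:
            last_sent = value
            yield value  # only this value would travel to the fog or cloud tier


# Hypothetical temperature samples taken at an edge device.
samples = [20.0, 20.1, 20.2, 21.0, 21.1, 25.0, 25.05]
forwarded = list(deadband_filter(samples, threshold=0.5))
print(forwarded)  # [20.0, 21.0, 25.0]
```

In this sketch only three of the seven samples leave the device, and the filter keeps a single value in memory, which suits the limited resources of edge hardware.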

2.2. Fog Computing

Data generated by IoT devices has grown exponentially in volume and velocity. The old data warehouse model cannot meet the low-latency response times that users demand. The cloud was the only option for sending data for storage and analysis, which can lead to data bottlenecks. Business models need analytics responses as soon as possible, but responses are delayed when data has to travel to the cloud, be processed there, and have the result sent back. Fog computing helps to overcome these challenges. The architecture of fog computing is almost the same as that of cloud computing, but fog computing is located in the edge network. Characteristics of fog computing include low latency, location awareness, real-time analytics, and security. Because fog computing is located in the edge network, monitoring, controlling, and maintaining edge devices becomes easier. This gives more flexibility in enhancing edge computing. With fog computing, edge computing can avoid many of the constraints and challenges that it might otherwise face.

2.3. Cloud Computing

Cloud computing is a platform that exposes multiple services such as Platform as a Service (PaaS), Infrastructure as a Service (IaaS), and Software as a Service (SaaS). It is an approach to using services over the internet through lightweight protocols. Cloud computing provides resources such as computer processing resources, data storage devices, and other devices on demand [4]. These resources can be used to build your own SaaS in the cloud, such as the big data analytics explained in [14]. These resources can be controlled and monitored using lightweight protocols. One can use the services available in the cloud instead of developing one's own. Overall, cloud computing is a platform that exposes many services for developing applications or business logic with ease.

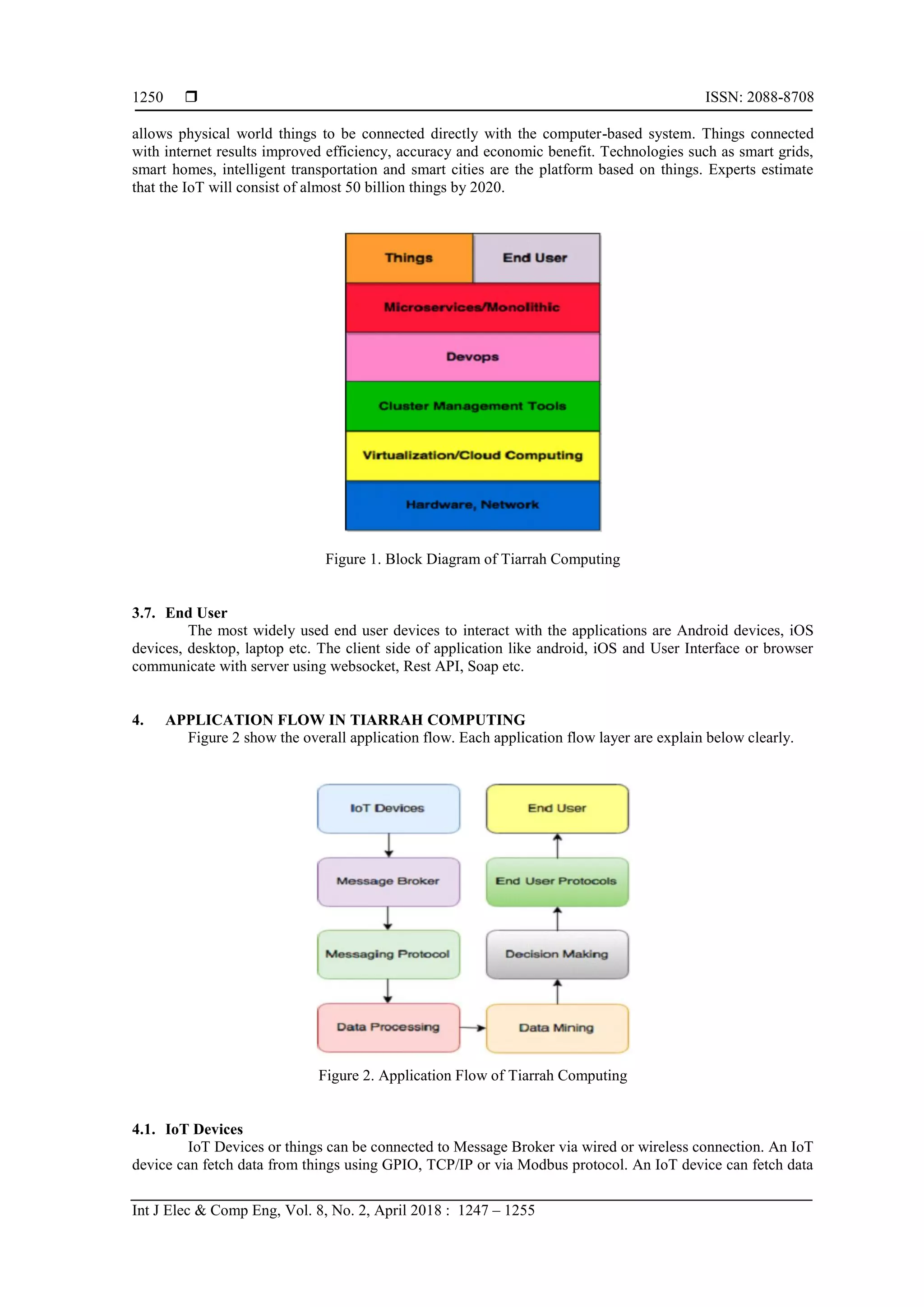

3. TIARRAH COMPUTING SYSTEM COMPONENT

The components of Tiarrah Computing are shown in Figure 1 and explained below:

3.1. Hardware, Network

To deploy and communicate with an application, hardware and networks are always needed. The most widely used hardware and network equipment in a data centre includes blade servers, racks, routers, switches, and cabling.](https://image.slidesharecdn.com/v6618sep1713jun7612-10098-1-ed-201110090115/75/Tiarrah-Computing-The-Next-Generation-of-Computing-2-2048.jpg)

![

3.2. Virtualization & Cloud Computing

Besides hardware, virtualization technology is used to manage and control resources such as memory, storage, network, and processor. With virtualization, one can create a virtual instance from a virtual image on the desired hardware, with a configuration that can be vertically scaled up or down as required. Popular cloud computing tools such as OpenStack [12], [13] and CloudStack are used to create and destroy virtual instances. With OpenStack or CloudStack, one can build a private or public cloud. If these technologies are not required, or one does not want to invest in hardware to build a data centre or private cloud, one can keep a simple server in a private or local network and deploy the rest of the application in a public cloud such as Amazon AWS, Microsoft Azure, or Rackspace.

3.3. Cluster Management

Tiarrah Computing promotes cluster management when the application is large and the cluster must keep running without downtime, since monitoring and updating each machine individually is complicated. To address these challenges, there are open-source frameworks and tools for cluster management, and a lot of research is ongoing in this area [15]. Because they are open source, one can use the available features, create new ones, or modify them as required. There are also many forums and groups that offer support, documentation, and tutorials for dealing with the challenges one faces. The benefits of using cluster management tools are as follows:

a. Cluster size can be scaled up or down easily, or scaling can be automated.

b. Zero downtime through automatic failover or self-healing.

c. Controlling, monitoring, and managing a group of clusters through a graphical user interface or the command line.

d. Dynamic load balancing can be achieved.

e. Easy deployment of clusters and of applications using DevOps.

f. Efficient use of resources.

For a small or medium enterprise (SME), cluster management might not be required, since the whole workload can be handled by a single server. It can be implemented later if required as the application grows.
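The automated scaling mentioned in item (a) can be sketched as a simple proportional rule: choose the node count that would bring average utilization back to a target level, clamped to a minimum and maximum. This rule, the function name `desired_nodes`, and all parameter values are assumptions for illustration only; the paper does not prescribe a particular scaling policy, and real cluster managers apply their own.

```python
def desired_nodes(current_nodes: int, avg_utilization_pct: int,
                  target_utilization_pct: int = 60,
                  min_nodes: int = 1, max_nodes: int = 10) -> int:
    """Return a node count that would bring average utilization near the target."""
    load = current_nodes * avg_utilization_pct
    # Integer ceiling division avoids floating-point rounding surprises.
    wanted = -(-load // target_utilization_pct)
    # Clamp to the allowed cluster size.
    return max(min_nodes, min(max_nodes, wanted))


print(desired_nodes(4, 90))  # 6: scale up
print(desired_nodes(4, 30))  # 2: scale down
print(desired_nodes(8, 95))  # 10: capped at max_nodes
```

A production policy would usually add a cooldown period or tolerance band so that the cluster does not oscillate between sizes on small load changes.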

3.4. DevOps

DevOps focuses on collaboration and communication between software developers and other information technology (IT) professionals. Automating software delivery and infrastructure changes falls under DevOps practice. DevOps aims to create an environment for building, testing, and releasing software rapidly, frequently, and more reliably [16], [17]. Widely used DevOps tools in industry for software development and delivery include Git, Docker, Jenkins, Puppet, and Vagrant. Popular open-source frameworks and tools for cluster management include Docker Swarm, Fleet, Kubernetes, Apache Mesos, Apache ZooKeeper, and Marathon.

3.5. Microservices and Monolithic Architecture

Microservices [11], [18], [19] and monolithic architecture are two architectures used for developing applications. Most applications are developed using the monolithic architecture, which is the traditional way of application development. Software development requires continuous addition of features, which increases the size of the application. Over time the application becomes large and complicated, which degrades performance and makes testing harder. This means that development speed is, roughly, inversely related to the size and number of features of the application. To overcome this limitation, the microservices-based approach to application development emerged.

Microservices-based development is an approach in which an application is composed of small services. Each service runs in its own process and is independent of the others, which gives flexibility to develop, test, and deploy services separately. Communication between services is accomplished through lightweight mechanisms. Microservices give more flexibility in developing applications with features such as non-blocking I/O, event-driven design, concurrency, scalability, and polyglot implementation.

3.6. Things

The Internet of Things is the internetworking of physical devices, vehicles, buildings, and other things. These things are embedded with electronics, software, sensors, actuators, and so on. The network connection makes it possible to collect and exchange data from these things. IoT allows things to be monitored and/or controlled remotely. IoT](https://image.slidesharecdn.com/v6618sep1713jun7612-10098-1-ed-201110090115/75/Tiarrah-Computing-The-Next-Generation-of-Computing-3-2048.jpg)

![

from a single thing that is directly connected, or from a group of things connected via a Remote Terminal Unit (RTU), PLC, or Supervisory Control and Data Acquisition (SCADA) system. An IoT device sends the data fetched from things, which describes their detailed status, to a message broker. IoT devices may support wireless communication protocols such as Zigbee [20-22], Wi-Fi [23], and Bluetooth [24], and wired connections such as RS-232, RS-485, RJ-45, RJ-11, and USB. Most IoT devices can communicate using the Modbus protocol and TCP/IP. The data from these connected things are then transferred to the cloud through a message broker. Examples of sensors connected to things are temperature, humidity, and light sensors.

4.2. Message Broker

Services in an application can interact with one another through middleware called a message broker, which decouples the services of the application. This decoupling gives more flexibility in application development. Messages and workload may queue up when multiple receivers are connected. The message broker handles these queues, providing reliable storage, guaranteed message delivery, and transaction management. Popular and widely used open-source message brokers include Kafka, RabbitMQ, ActiveMQ, and Mosquitto.
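The decoupling and queuing behaviour described above can be illustrated with a toy in-memory broker. This is only a sketch of the publish/subscribe idea: the class `InMemoryBroker`, the topic name, and the payloads are hypothetical, and it offers none of the persistence, delivery guarantees, or transaction management that brokers such as Kafka or RabbitMQ provide.

```python
from collections import defaultdict, deque
from typing import Callable, DefaultDict, Deque, List

Handler = Callable[[str], None]


class InMemoryBroker:
    """Toy publish/subscribe broker: publishers and subscribers only share topic names."""

    def __init__(self) -> None:
        self._subscribers: DefaultDict[str, List[Handler]] = defaultdict(list)
        self._pending: DefaultDict[str, Deque[str]] = defaultdict(deque)

    def subscribe(self, topic: str, handler: Handler) -> None:
        self._subscribers[topic].append(handler)
        # Deliver messages that queued up before any receiver was connected.
        while self._pending[topic]:
            handler(self._pending[topic].popleft())

    def publish(self, topic: str, message: str) -> None:
        handlers = self._subscribers[topic]
        if not handlers:
            self._pending[topic].append(message)  # hold until someone subscribes
            return
        for handler in handlers:
            handler(message)


broker = InMemoryBroker()
received: List[str] = []
broker.publish("plant/temperature", "21.5")             # queued, no subscriber yet
broker.subscribe("plant/temperature", received.append)  # queued message is delivered
broker.publish("plant/temperature", "22.0")             # delivered immediately
print(received)  # ['21.5', '22.0']
```

The publishing service never references the receiving service directly, which is the decoupling that lets services be developed and deployed independently.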

4.3. Messaging Protocol

Selecting the best messaging protocol for IoT and IIoT requires in-depth knowledge of the architecture and of the messaging or data-sharing requirements of each target system. IoT applications of the future will be supported by many important technologies that are still maturing. To connect devices, messaging technologies such as Data Distribution Service (DDS), Constrained Application Protocol (CoAP), Message Queuing Telemetry Transport (MQTT), eXtensible Messaging and Presence Protocol (XMPP), Advanced Message Queuing Protocol (AMQP), and Representational State Transfer (REST) may be used. However, when one considers fundamental system requirements such as performance, interoperability, security, fault tolerance, and quality of service, the suitability of these messaging technologies may fall short of expectations, as they may be unable to support device-to-device communication, device-to-cloud communication, and communication within a data centre. To exchange data, the WebSocket protocol can also be used to establish a full-duplex connection with the message broker as well as with the services.

4.4. Data Processing

Data processing is the process of gathering and managing data items to produce meaningful information. It is a subset of information processing. Most applications gather information and save it in memory or in a database, and these data are then processed as a batch job. Real-time or streaming data are becoming increasingly relevant: it is no longer sufficient to process large volumes of data, and operating on real-time data adds further value. Real-time data processing is developing fast and is currently one of the most popular research topics. To address the challenges of real-time data processing, two architectures have emerged: the kappa architecture and the lambda architecture. Data processing is performed through two different mechanisms:

4.4.1. Stream Processing

Stream processing makes it possible to analyse large quantities of data and act on them even as they are being collected. For this purpose, a continuous series of queries (i.e. SQL-type queries that operate over time and buffer windows) is applied to the streaming data. Streaming analytics, an important component of stream processing, is the ability to perform mathematical and statistical calculations on streaming data, and is made possible by a scalable, highly available, and fault-tolerant architecture.

A conventional database model first stores and classifies data before it is processed by queries. In stream processing, however, data can be analysed while it is en route. Since stream processing can also be applied to external data sources, it enables data to be selected into the application flow and external databases to be updated with the processed data.
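A query over a buffer window can be sketched as a running aggregate that is updated as each element arrives, without first storing the whole stream. The example below computes a mean over the last `window` readings. The function name `sliding_mean`, the window size, and the sample values are assumptions for illustration; a stream processing engine would express this as a continuous windowed query instead.

```python
from collections import deque
from typing import Deque, Iterable, Iterator


def sliding_mean(stream: Iterable[float], window: int) -> Iterator[float]:
    """Yield the mean of the most recent `window` values after each new arrival."""
    buf: Deque[float] = deque(maxlen=window)  # oldest value is dropped automatically
    for value in stream:
        buf.append(value)
        yield sum(buf) / len(buf)


# Hypothetical sensor readings arriving one at a time.
means = list(sliding_mean([10.0, 20.0, 30.0, 40.0], window=3))
print(means)  # [10.0, 15.0, 20.0, 30.0]
```

Each result is available as soon as its input arrives, which is the property that distinguishes this from storing the data first and querying it later.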

4.4.2. Batch Processing

When transactions are processed in bulk, or as a batch, it is called batch processing. Unlike transaction processing, which is performed one transaction at a time and may require user intervention, batch processing requires no external interaction once it has begun. It is best suited to end-of-cycle processing, such](https://image.slidesharecdn.com/v6618sep1713jun7612-10098-1-ed-201110090115/75/Tiarrah-Computing-The-Next-Generation-of-Computing-5-2048.jpg)

![

as processing a bank's reports at the end of a day or generating monthly or bi-weekly payrolls, although it can be run whenever desired.

4.5. Data Mining


4.5.1. Information Fusion

Information fusion is the process of combining information or data about the same object or scene to gain a clear view of the situation, including its complexity, reliability, and accuracy [5].

4.5.2. Multi-Sensor Data/Information Fusion

Developing any intelligent, smart application or system requires multi-sensor data/information fusion. Data or information from a single source or thing can easily be bypassed and imposes many limitations on intelligence, logic design, accuracy, and so on. Fusing data/information from multiple sources, in multiple ways and at multiple levels, gives more flexibility to achieve a unified picture [6].

A good example of real-time multi-sensor data/information fusion is a fire alarm system. A smoke detector alone does not give exact information about whether a fire has occurred. Using multiple sensors, such as a temperature sensor, smoke detector, and humidity sensor, and fusing their data in real time gives a more exact picture of the situation and makes it harder to defeat or bypass the system.
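The fire alarm example can be sketched as a simple voting rule in which the alarm is raised only when at least two of the three sensors agree. The thresholds, field names, and the two-of-three rule below are assumptions chosen for illustration; they are not calibrated values and do not come from the paper or from [6].

```python
from dataclasses import dataclass


@dataclass
class Reading:
    smoke_ppm: float
    temperature_c: float
    humidity_pct: float


def fire_suspected(r: Reading,
                   smoke_limit: float = 300.0,     # hypothetical threshold
                   temp_limit: float = 57.0,       # hypothetical threshold
                   humidity_limit: float = 20.0) -> bool:  # hypothetical threshold
    """Fuse three sensor readings: raise the alarm when at least two indicators agree."""
    votes = [
        r.smoke_ppm > smoke_limit,
        r.temperature_c > temp_limit,
        r.humidity_pct < humidity_limit,
    ]
    return sum(votes) >= 2


print(fire_suspected(Reading(450.0, 25.0, 45.0)))  # False: smoke alone, e.g. cooking
print(fire_suspected(Reading(450.0, 70.0, 15.0)))  # True: all three indicators agree
```

Because one sensor cannot trigger or suppress the alarm alone, a single faulty or tampered sensor has less influence on the outcome than in a single-sensor design.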

4.5.3. Cross Domain Data Fusion

As big data evolves, it brings many challenges in handling data and gaining insight from it. Big data opens a new way to view data at a high level. Data from multiple domains describe multiple scenarios, and fusing the data from these domains offers a new way to solve complex challenges. Data from multiple domains naturally come in multiple formats. Each dataset is handled separately, and the information from these domains is then fused to give a high-level view of the data. Three categories of methodologies for cross-domain data fusion are summarized in [7], which also elaborates on approaches to unlocking knowledge from disparate datasets of different domains in big data research.

4.5.4. Big Data Fusion

Interacting with billions of rows of data from a single source is no longer sufficient; with the rapid development of technologies and requirements, it is necessary to integrate multiple sources and perform fusion. Big data fusion may involve data from multiple sources, real-time streams, and historical data [8]. Fusing these data across multiple sources, and combining the fusion results of each source, yields the right insights.

4.6. Decision Making

Decision making is not an easy task. Appropriate decisions are required everywhere, for example in business, enterprise, the stock market, and gambling. Decisions are made when conditions are satisfied. They are taken using message/event-driven, process-driven, and service-driven architectures. When complex processing is not required and mission-critical operations must be performed, a message/event-driven architecture is better suited, implemented or programmed in a microcontroller, an ARM controller, or any device that supports programmable automation controllers. Implementing such logic near the device gives real-time triggering of the action that needs to be performed. The triggered event or action could be an email, an SMS, controlling a device, calling another event, starting a new process, and so on. For complex decisions, predictive analytics, machine learning, aggregation, summarization, and similar techniques are not sufficient on their own; they need to be used together with the appropriate data fusion techniques described in Section 4.5.](https://image.slidesharecdn.com/v6618sep1713jun7612-10098-1-ed-201110090115/75/Tiarrah-Computing-The-Next-Generation-of-Computing-6-2048.jpg)
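A message/event-driven decision of the kind described in this section can be sketched as a list of condition and action pairs evaluated against each incoming event. The rule contents, field names, and action labels below are hypothetical and serve only to show the pattern; on a PLC or microcontroller the same logic would be written in that device's own programming language.

```python
from typing import Callable, Dict, List, Tuple

Event = Dict[str, float]
Rule = Tuple[Callable[[Event], bool], Callable[[Event], str]]


def run_rules(event: Event, rules: List[Rule]) -> List[str]:
    """Return the action of every rule whose condition is satisfied by the event."""
    return [action(event) for condition, action in rules if condition(event)]


# Hypothetical rules: each pairs a condition with the action it triggers.
rules: List[Rule] = [
    (lambda e: e["pressure_bar"] > 8.0, lambda e: "close_valve"),
    (lambda e: e["temperature_c"] > 90.0, lambda e: "send_sms"),
]

actions = run_rules({"pressure_bar": 9.2, "temperature_c": 75.0}, rules)
print(actions)  # ['close_valve']
```

Here the action functions only return a label; in a deployed system they would control a device, send a notification, or start another process.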

![

5. ARCHITECTURE OF TIARRAH COMPUTING

Figure 3 shows the Tiarrah Computing architecture in detail. The architecture is explained through the flow of data and how it is handled at each tier. The description of Figure 3 below gives a clear picture of the application.

5.1. First Tier

The first tier lies under Edge Computing. Hardware is deployed with an appropriate network connection using standard protocols as required. Figure 3 shows two widely used and emerging networks, one wired and one wireless. The design considers a widely used industrial automation design pattern with devices such as PLCs and SCADA, so that industries can adopt Tiarrah Computing with their existing hardware and network with little or no modification.

The architecture and working mechanism of the first tier, or Edge Computing, are almost the same as those of the automation industries, with minor modification. Figure 3 illustrates that things can be connected directly to an ARM controller using RS-232 or RS-485 connections. Most automation industries use an RTU or PLC to connect things or devices. A PLC is used where mission-critical events must be handled in real time. The logic and algorithms are designed with a message/event-driven architecture and embedded in the PLC. Modbus, a popular and widely used communication protocol in the automation industry, can be used. The ARM controller is used for data acquisition from things and for sending these data to the message broker. As shown in the figure, the Monitoring and Controlling section can be built with a traditional SCADA-based monitoring and control system, or this system itself can be used for monitoring and controlling. The advantage of this system compared with SCADA is that it allows monitoring and control from anywhere at any time with ease. An FPGA design pattern can be used in this tier with the Modbus protocol [25]. The ARM controller can be connected to the router using Wi-Fi or a wired connection. The figure shows a wired connection using Ethernet (RJ-45).

Figure 3 also shows Zigbee, another very popular and emerging wireless protocol used to build IoT applications. Devices or things can be connected directly to Zigbee devices using GPIO. A Zigbee device can have multiple types of input connection, such as RJ-45, RS-232, and Wi-Fi, and can support multiple protocols, such as TCP/IP and Modbus. Data can therefore be fetched from a PLC or RTU using the Modbus protocol. Zigbee supports popular topologies such as mesh, star, and tree, which contributes to its growing popularity. Zigbee devices consume little power and use a wireless network to exchange data between the Zigbee slave and the Zigbee master. The Zigbee master is connected to the router to obtain a WAN connection for exchanging data.

5.2. Second Tier

This tier lies under Fog Computing. Fog Computing is emerging as a way to distribute cloud responsibilities or services as close to the data source as possible for quick and immediate interaction. The services are deployed inside a DMZ network, which is separate from the LAN and WAN. The LAN lies in the first tier and the WAN lies in the third tier. Because the application is microservices-based, microservices are deployed on a standard server or in a private cloud [10], depending on the types of services deployed in this tier. Microservices are deployed in the public cloud as well as in the private cloud. Microservices are deployed in this tier to achieve low latency, real-time interaction, application distribution, event handling, process handling, and so on. Services deployed for real-time interaction perform faster in this layer than services deployed in the cloud, so services that need quick interaction or an immediate response are deployed here. Real-time data is best published and subscribed to from this tier, and real-time monitoring and control are more efficient and faster in this layer. The services deployed in this tier interact with the services deployed in the cloud tier through a REST API. Real-time communication between users is made possible using WebSocket, SockJS, and similar technologies.

5.3. Third Tier

This tier lies in cloud computing. It consists of services deployed on cloud servers, databases, cloud storage, and so on. The microservices in the cloud communicate with the microservices on the DMZ network server using APIs to remotely configure, control, and manage the system's things. Cloud microservices include a management UI for developing and deploying analytics expressions, maintain details of users and devices in a database for monitoring and management, and manage the integration of services with the customer's identity access management and persistence solutions. This system addresses big data analytics in the cloud, where batch processing requires huge resources. The services deployed in the cloud communicate with the services in the private cloud using APIs. By deploying services which](https://image.slidesharecdn.com/v6618sep1713jun7612-10098-1-ed-201110090115/75/Tiarrah-Computing-The-Next-Generation-of-Computing-7-2048.jpg)

![

require high computation resources in the cloud, one gains the flexibility to perform big data analytics in the desired time by allocating resources dynamically [9].

Figure 3. System Architecture of Tiarrah Computing

5.4. Fourth Tier

The fourth tier is the user interface, where users interact with the application through an iOS, Android, or web-based application. The device can be a mobile phone, tablet, laptop, desktop, and so on. Users can interact with the application from anywhere at any time. Remote monitoring and control gives users more flexibility and control.

6. CONCLUSION

Tiarrah Computing is a computing approach for next-generation application deployment. Most automation industry applications rely on edge computing only and are bound by many constraints. Most IoT-based applications are deployed using the first and third tiers of Tiarrah Computing. The four-tier computing architecture offers many opportunities and much flexibility, as well as the ability to deal with upcoming challenges. This computing approach is flexible enough to deal with real-time and batch big data challenges, IoT application development and deployment challenges, flexible application deployment, dynamic resource allocation, self-healing, and so on. Overall, it is a platform or computing approach in which one can work with existing cloud computing and its features, design one's own fog computing approach, or use any software or services that may become available in the future. One can use an evolving edge computing design approach to connect things, or deploy things using an edge computing service provider where available. Designing the deployment, real-time event handling, and the design concept together form an idea for building an application using existing technologies. One can take the benefits of existing technologies with logic to](https://image.slidesharecdn.com/v6618sep1713jun7612-10098-1-ed-201110090115/75/Tiarrah-Computing-The-Next-Generation-of-Computing-8-2048.jpg)

![

overcome the challenges created by IoT, IIoT, big data, real-time big data, event handling, data fusion, the design of wired and wireless networks of things, dynamic scaling, and zero-downtime clusters. Overall, it is the practice of combining technologies to build an application that is scalable, durable, available, and consistent. It offers a high-level view for tackling IoT, big data, and cloud computing challenges.

REFERENCES

[1] J. A. Stankovic, “Research directions for the Internet of Things”, IEEE Internet of Things J., vol. 1, no. 1, pp. 3-9,

Feb. 2014.

[2] Amir Vahid Dastjerdi, Rajkumar Buyya, “Fog Computing: Helping the Internet of Things Realize Its Potential”,

IEEE Computer Society, vol. 49, no. 8, pp. 112-116, Aug. 2016.

[3] Dusit Niyato, Lu Xiao, Ping Wang, “Machine-to-machine communications for home energy management system in

smart grid”, IEEE Communications Magazine, vol. 49, no. 4, pp. 53-59, 2011.

[4] Alexandru Iosup, Simon Ostermann, M. Nezih Yigitbasi, “Performance Analysis of Cloud Computing Services for

Many-Tasks Scientific Computing”, IEEE Transactions on Parallel and Distributed Systems, vol. 22, no. 6,

pp. 931-945, Jun. 2011.

[5] Bingwei Liu, Yu Chen, Ari Hadiks, Erik Blasch, Alex Aved, Dan Shen, Genshe Chen, “Information fusion in a

cloud computing era: A systems level perspective”, IEEE Aerospace and Electronic Systems Magazine, vol. 29,

no. 10, pp. 16-24, Oct. 2014.

[6] David L. Hall, James Llinas, “An Introduction to Multisensor Data Fusion”, Proc. of IEEE, vol. 85, pp. 6-23, Jan.

1997.

[7] Yu Zheng, “Methodologies for Cross-Domain Data Fusion: An Overview”, IEEE Transactions on Big Data, vol. 1,

no. 1, pp. 16-33, Mar. 2015.

[8] George Suciu, Alexandru Vulpe, Razvan Craciunescu, Cristina Butca, Victor Suciu, “Big Data Fusion for eHealth

and Ambient Assisted Living Cloud Applications”, Proc. of IEEE International Black Sea Conference on

Communication and Networking (BlackSeaCom), pp. 102-106, 2015.

[9] Yanish Pradhananga, Shridevi Karande, Chandraprakash Karande, “High Performance Analytics of Bigdata with

Dynamic and Optimized Hadoop Cluster”, Proc. IEEE International Conference on Advanced Communication

Control and Computing Technologies (ICACCCT), pp. 715-720, Jan. 2017.

[10] Robert Birke, Andrej Podzimek, Lydia Y. Chen, Evgenia Smirni, “Virtualization in the Private Cloud: State of the

Practice”, IEEE Transactions on Network and Service Management, vol. 13, no. 3, pp. 608-621, Aug. 2016.

[11] David S. Linthicum, “Practical Use of Microservices in Moving Workloads to the Cloud”, IEEE Cloud Computing,

vol. 3, no. 5, pp. 6-9, Nov. 2016.

[12] Thasviya Haroon, S Neena, K K Krishnaprasad, Rejoice Wilson, Sanjo Simon, John Paul Martin, “Convivial

private cloud implementation system using OpenStack”, Proc. IEEE International Conference on Electrical,

Electronics, and Optimization Techniques (ICEEOT), Nov. 2016.

[13] OpenStack, http://www.openstack.org, 2016 (accessed 29.09.2016).

[14] Yanish Pradhananga, Shridevi Karande, Chandraprakash Karande, “CBA: Cloud-based Bigdata Analytics”, Proc.

IEEE International Conference on Computing Communication Control and Automation (ICCUBEA), pp. 47-51, Jul.

2015.

[15] Dmitry Duplyakin, Matthew Haney, Henry Tufo, “Highly Available Cloud-Based Cluster Management”, Proc.

IEEE 15th International Conference on Cluster, Cloud and Grid Computing (CCGrid), Jul. 2015.

[16] Daniel Sun, Min Fu, Liming Zhu, Guoqiang Li, Qinghua Lu, “Non-Intrusive Anomaly Detection with Streaming

Performance Metrics and Logs for DevOps in Public Clouds: A Case Study in AWS”, IEEE Transactions on

Emerging Topics in Computing, vol. 4, no. 2, pp. 278-289, Jun. 2016.

[17] Lianping Chen, “Continuous Delivery: Huge Benefits, but Challenges Too”, IEEE Software, vol. 32, no. 2,

pp. 50-54, Apr. 2015.

[18] Alan Sill, “The Design and Architecture of Microservices”, IEEE Cloud Computing, vol. 3, no. 5, pp. 76-80,

Nov. 2016.

[19] Christian Esposito, Aniello Castiglione, Kim-Kwang Raymond Choo, “Challenges in Delivering Software in the

Cloud as Microservices”, IEEE Cloud Computing, vol. 3, no. 5, pp. 10-14, Nov. 2016.

[20] Yuri Alvarez, Fernando Las Heras, “ZigBee-based Sensor Network for Indoor Location and Tracking

Applications”, IEEE Latin America Transactions, vol. 14, no. 7, pp. 3208-3214, Jul. 2016.

[21] Zuochen Shi, Yintang Yang, Di Li, Yang Liu, “A Fully-Integrated Low-Power Analog Front-End for ZigBee

Transmitter Applications”, IEEE Chinese Journal of Electronics, vol. 25, no. 3, pp. 424-431, Aug. 2016.

[22] Eugene David Ngangue Ndih, Soumaya Cherkaoui, “On Enhancing Technology Coexistence in the IOT Era:

ZigBee and 802.11 Case”, IEEE Access, vol. 4, pp. 1835-1844, Apr. 2016.

[23] Mikhail Afanasyev, Tsuwei Chen, Geoffrey M. Voelker, Alex C. Snoeren, “Usage Patterns in an Urban WiFi

Network”, IEEE/ACM Transactions on Networking, vol. 18, no. 5, pp. 1359-1372, Oct. 2010.

[24] Bluetooth, https://www.bluetooth.org/, 2016 (accessed 17.10.2016).

[25] Jaimeen N. Chhatrawala, Nandish Jasani, Vidita Tilva, “FPGA based data Acquisition with Modbus protocol”,

Proc. International Conference on Communication and Signal Processing (ICCSP), Nov. 2016.](https://image.slidesharecdn.com/v6618sep1713jun7612-10098-1-ed-201110090115/75/Tiarrah-Computing-The-Next-Generation-of-Computing-9-2048.jpg)