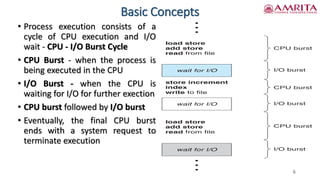

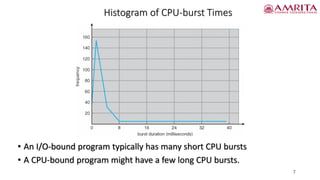

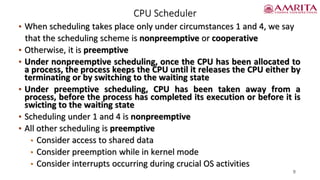

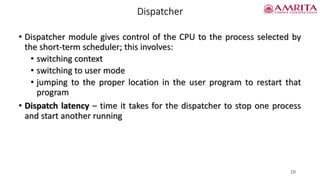

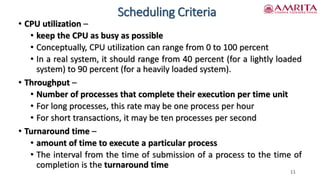

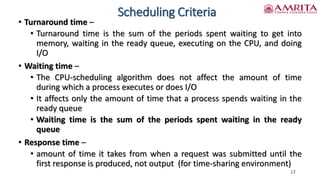

The document discusses CPU scheduling, the basis of multiprogrammed operating systems, and emphasizes maximizing CPU utilization by switching the CPU among processes so that it does not sit idle while a process waits. It outlines several scheduling algorithms and the criteria used to compare them and choose one for a given system, including CPU utilization, throughput, turnaround time, and waiting time. It also distinguishes preemptive from nonpreemptive scheduling and describes the role of the dispatcher, the module that gives control of the CPU to the process the scheduler has selected.
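To make the evaluation criteria concrete, the following is a minimal sketch that computes waiting time, turnaround time, throughput, and CPU utilization for a nonpreemptive first-come, first-served (FCFS) schedule. FCFS is used here only because it is the simplest algorithm to trace. The `Process` class, the `fcfs_metrics` function, and the three-process workload are illustrative assumptions, not taken from the document, and the sketch assumes a non-empty list of processes with positive burst times and no context-switch overhead.

```python
from dataclasses import dataclass


@dataclass
class Process:
    # Hypothetical process record for illustration only.
    name: str
    arrival: int  # time the process enters the ready queue
    burst: int    # CPU time the process needs


def fcfs_metrics(processes):
    """Simulate nonpreemptive FCFS and return the scheduling criteria."""
    clock = 0  # current simulated time, starting at 0
    busy = 0   # total time the CPU spent running processes
    per_process = {}

    # sorted() is stable, so processes with equal arrival keep input order.
    for p in sorted(processes, key=lambda proc: proc.arrival):
        start = max(clock, p.arrival)  # CPU may idle until the process arrives
        finish = start + p.burst
        per_process[p.name] = {
            "waiting": start - p.arrival,      # time spent in the ready queue
            "turnaround": finish - p.arrival,  # submission to completion
        }
        busy += p.burst
        clock = finish

    n = len(per_process)
    return {
        "per_process": per_process,
        "avg_waiting": sum(v["waiting"] for v in per_process.values()) / n,
        "avg_turnaround": sum(v["turnaround"] for v in per_process.values()) / n,
        "throughput": n / clock,      # processes completed per time unit
        "utilization": busy / clock,  # fraction of time the CPU was busy
    }


if __name__ == "__main__":
    # Assumed workload: three processes arriving at time 0.
    workload = [Process("P1", 0, 24), Process("P2", 0, 3), Process("P3", 0, 3)]
    metrics = fcfs_metrics(workload)
    print(metrics["avg_waiting"])     # 17.0
    print(metrics["avg_turnaround"])  # 27.0
```

With this assumed workload the long first burst makes the two short processes wait, so the average waiting time is 17 time units. Running the same processes in the order P2, P3, P1 would lower it to 3, which is why the order chosen by a scheduling algorithm matters for these criteria.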