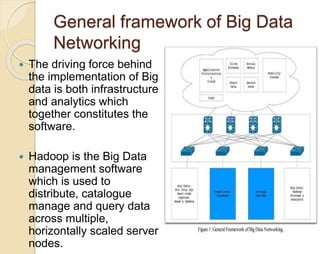

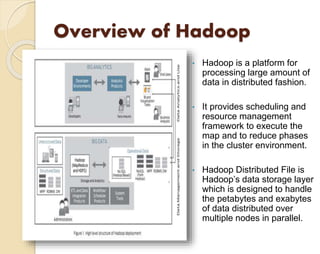

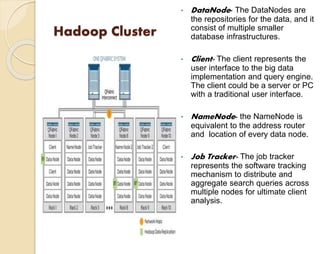

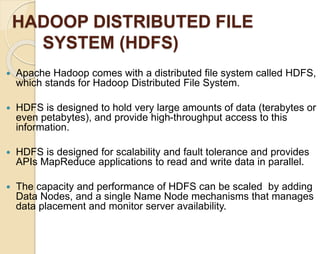

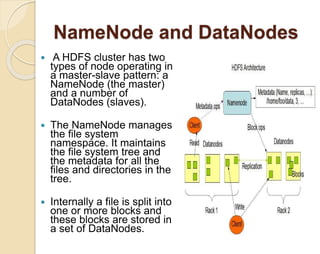

The document presents an overview of big data and its management using Hadoop, an open-source distributed software framework designed for processing large datasets. It includes a description of Hadoop's architecture, the Hadoop Distributed File System (HDFS), and the MapReduce programming model used for data processing. Finally, it discusses Hadoop as a service (HaaS) and identifies limitations and areas for future research in big data technologies.

![References:

White paper –Introduction to Big Data: Infrastructure

and Network consideration

MapReduce: Simplified Data processing on Large

Clusters, http://research .google.com/archive

/mapreduce.html

White paper Big Data Analytics[http:/Hadoop.intel.com]

The Hadoop Distributed File System Architecture and

Design:by Dhruba Borthakur

Big Data in the enterprise, Cisco White Paper.

Cloudera capacity planning recommendations:

http://www.cloudera.com/blog/ 2010/08/Hadoop HBase-capacity-

planning/

Apache Hadoop Wiki Website:

http://en.wikipedia.org/wiki/Apache-Hadoop.

Towards a Big Data Reference Architecture

[www.win.tue.nl/~gfletche/Maier_MSc_thesis.pdf]](https://image.slidesharecdn.com/presentation1-140911123059-phpapp02/85/Managing-Big-data-with-Hadoop-23-320.jpg)