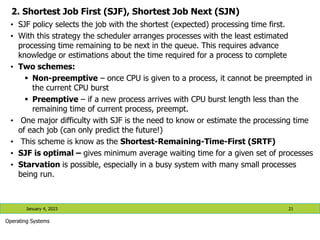

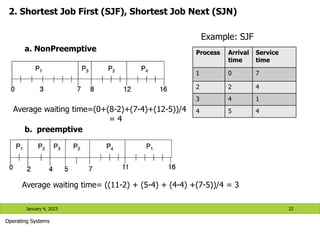

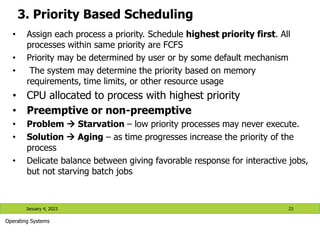

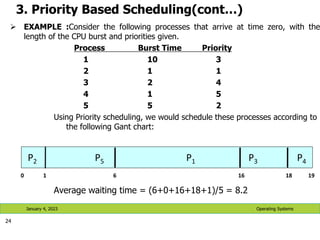

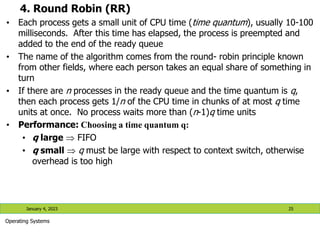

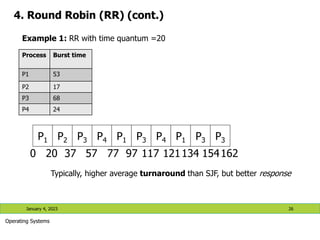

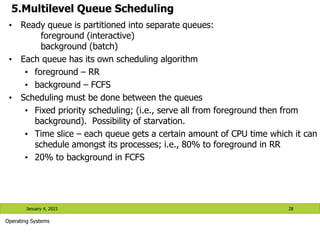

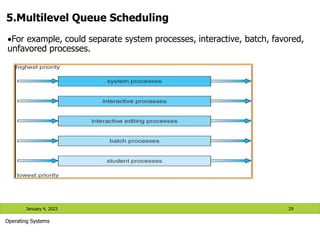

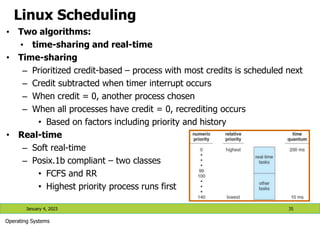

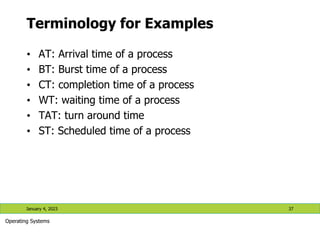

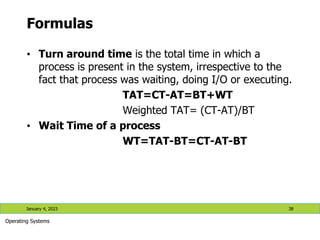

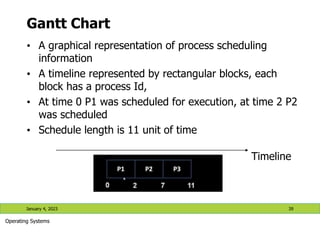

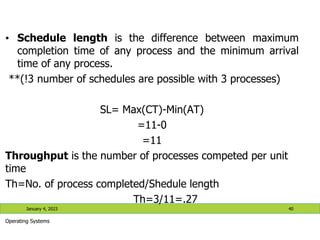

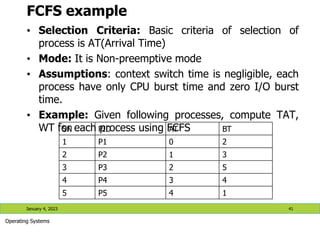

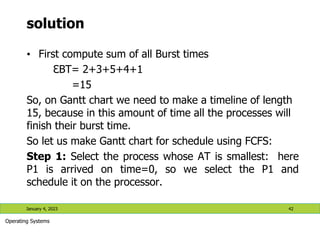

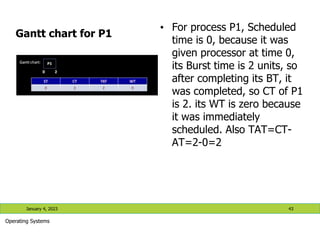

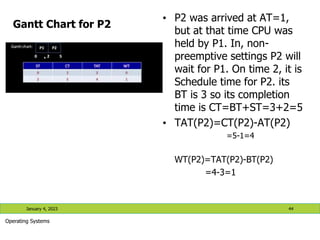

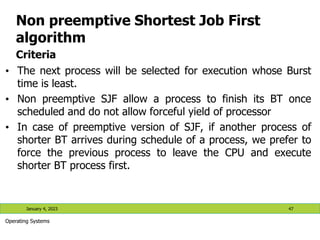

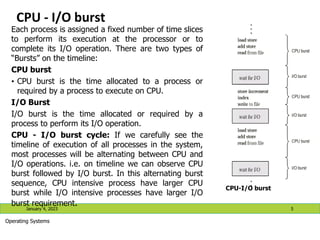

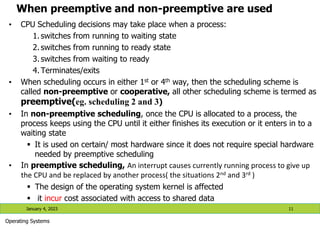

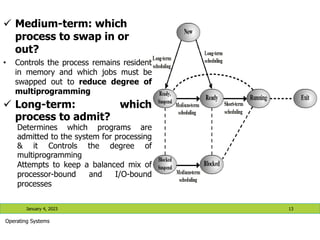

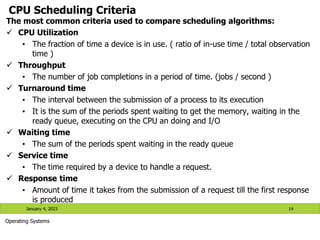

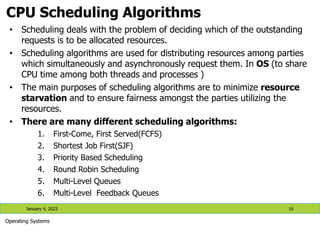

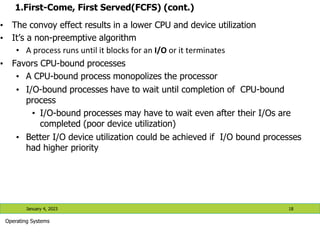

The document discusses process scheduling in operating systems. It covers basic process-management concepts and describes several CPU scheduling algorithms in detail: first-come, first-served (FCFS), shortest job first (SJF), priority scheduling, and round robin (RR). It also discusses the goals of CPU scheduling, such as minimizing waiting time and turnaround time while maximizing CPU utilization, and gives examples that illustrate how each algorithm works.
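The summary above lists round robin (RR) among the algorithms covered and names waiting time and turnaround time as the goals to minimize. The following minimal Python sketch shows how those metrics come out of an RR simulation. It uses the four processes from this section's FCFS Example 2 (arrival times 0, 1, 2, 3; service times 8, 4, 9, 5). Several details are assumptions rather than anything taken from the slides: the time quantum of 4, the convention that processes arriving during a slice join the queue ahead of the preempted process, and the helper name `round_robin`, which is hypothetical.

```python
from collections import deque


def round_robin(procs, quantum):
    """Simulate round-robin scheduling (hypothetical helper).

    procs: list of (name, arrival_time, burst_time) tuples.
    Returns {name: (completion, turnaround, waiting)}.
    """
    info = {name: (arrival, burst) for name, arrival, burst in procs}
    pending = sorted(procs, key=lambda p: p[1])  # not yet admitted, by arrival time
    remaining = {name: burst for name, _, burst in procs}
    ready = deque()
    clock = 0
    next_idx = 0  # index of the next process in `pending` to admit
    stats = {}

    def admit(now):
        # Move every process that has arrived by time `now` into the ready queue.
        nonlocal next_idx
        while next_idx < len(pending) and pending[next_idx][1] <= now:
            ready.append(pending[next_idx][0])
            next_idx += 1

    admit(clock)
    while ready or next_idx < len(pending):
        if not ready:
            # CPU is idle: jump ahead to the next arrival.
            clock = pending[next_idx][1]
            admit(clock)
            continue
        name = ready.popleft()
        run = min(quantum, remaining[name])  # one quantum, or less if the job finishes
        clock += run
        remaining[name] -= run
        admit(clock)  # assumed: arrivals during the slice queue ahead of the preempted process
        if remaining[name] > 0:
            ready.append(name)  # preempted: back to the tail of the ready queue
        else:
            arrival, burst = info[name]
            turnaround = clock - arrival  # turnaround = completion - arrival
            stats[name] = (clock, turnaround, turnaround - burst)  # waiting = turnaround - burst
    return stats


procs = [("P1", 0, 8), ("P2", 1, 4), ("P3", 2, 9), ("P4", 3, 5)]
stats = round_robin(procs, quantum=4)  # quantum = 4 is an assumed value
avg_wait = sum(w for _, _, w in stats.values()) / len(stats)
avg_tat = sum(t for _, t, _ in stats.values()) / len(stats)
print(avg_wait, avg_tat)  # 11.75 18.25
```

Under these assumptions, RR gives an average waiting time of 11.75 on this data, which is higher than the 8.75 that FCFS achieves in Example 2. RR's usual advantage is shorter response time for interactive processes, not a lower average waiting time.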

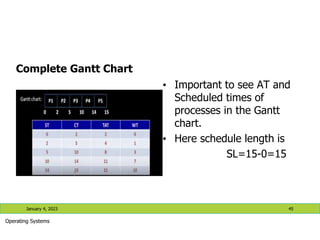

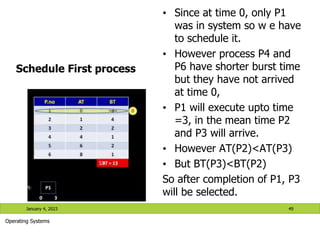

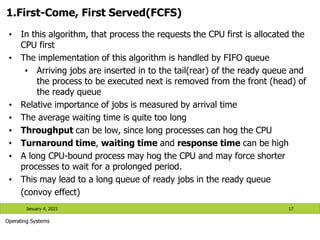

![1.First-Come, First Served (FCFS) (cont.)

January 4, 2023 20

Operating Systems

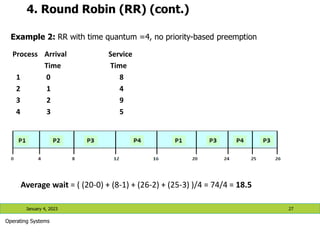

Process Arrival

time

Service

time

1 0 8

2 1 4

3 2 9

4 3 5

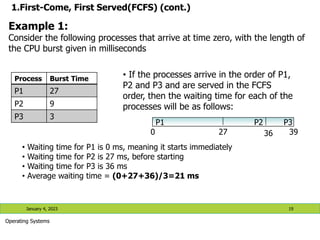

* What if the order of the processes was P2, P3, P1? What will be the average

waiting time? Check [avg. waiting time= 7 ms] what do you notice from this?

Example 2:

0 8 12 21 26

P1 P2 P3 P4

Average wait =((0) + (8-1) + (12-2) + (21-3) )/4 = 35/4 =

8.75

Waiting time for P1 = 0; P2 = 8-1; P3 = 12-2; P4=21-3](https://image.slidesharecdn.com/lecture4-processscheduling-230104191211-31196a1b/85/Lecture-4-Process-Scheduling-pptx-20-320.jpg)
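The Example 2 calculation can be reproduced with a short Python sketch of non-preemptive FCFS. Processes are served in order of arrival. Each one starts as soon as the CPU is free and the process has arrived, and its waiting time is its start time minus its arrival time. The `Proc` class and `fcfs` function are hypothetical names chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Proc:
    name: str
    arrival: int
    burst: int  # service time


def fcfs(procs):
    """Non-preemptive FCFS: return {name: (start, finish, waiting)}."""
    clock = 0
    result = {}
    # sorted() is stable, so processes with equal arrival times keep their input order.
    for p in sorted(procs, key=lambda p: p.arrival):
        start = max(clock, p.arrival)  # CPU may sit idle until the process arrives
        finish = start + p.burst
        result[p.name] = (start, finish, start - p.arrival)
        clock = finish
    return result


procs = [Proc("P1", 0, 8), Proc("P2", 1, 4), Proc("P3", 2, 9), Proc("P4", 3, 5)]
sched = fcfs(procs)
for name, (start, finish, wait) in sched.items():
    print(f"{name}: runs {start} to {finish}, waits {wait}")
avg_wait = sum(w for _, _, w in sched.values()) / len(sched)
print(f"Average waiting time = {avg_wait}")  # Average waiting time = 8.75
```

Because `fcfs` sorts by arrival time, exploring the reordering question means changing the arrival times, not the order of the list.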