[Conference Presentation] The Exact Asymptotic Form of the Bayesian Generalization Error in LDA [IBIS2020]

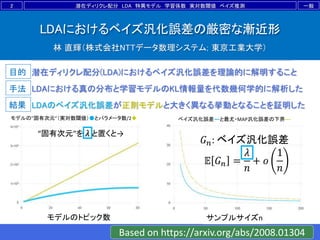

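- 1. The Exact Asymptotic Form of the Bayesian Generalization Error in LDA. Naoki Hayashi (NTT DATA Mathematical Systems Inc.; Tokyo Institute of Technology). Based on https://arxiv.org/abs/2008.01304
  - Purpose: to clarify theoretically the Bayesian generalization error of latent Dirichlet allocation (LDA).
  - Method: an algebraic-geometric analysis of the KL divergence between the true distribution and the learning model in LDA.
  - Result: a proof that the Bayesian generalization error of LDA behaves very differently from that of a regular model.
  - Tags: General, latent Dirichlet allocation, LDA, singular model, learning coefficient, real log canonical threshold, Bayesian inference.
  - Figure (left): the model's "intrinsic dimension" (real log canonical threshold, ●) and half the number of parameters (◆), plotted against the number of topics in the model.
  - Figure (right): the Bayesian generalization error (solid line) and the lower bound of the maximum-likelihood/MAP generalization error (dashed line), plotted against the sample size $n$.
  - Writing the "intrinsic dimension" as $\lambda$ and the Bayesian generalization error as $G_n$: $\mathbb{E}[G_n] = \dfrac{\lambda}{n} + o\!\left(\dfrac{1}{n}\right)$.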


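- 3. Overview: three points
  1. LDA is a singular model, so its generalization error was unknown. → This work clarifies it theoretically.
  2. Most generalization error analyses only give bounds. → This work derives the exact asymptotic form.
  3. The theoretical value of the "intrinsic dimension" $\lambda$ is required by sBIC. → Precise model selection becomes possible.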

- 4. Contents
  1. Latent Dirichlet allocation (LDA)
  2. Generalization error analysis of singular models
  3. Main theorem
  4. Discussion and conclusion

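- 6. 1. Latent Dirichlet allocation (LDA)
  - Documents $z^n$ and words $x^n$: observed.
  - Topics $y^n$: unobserved.
  - LDA is a model for estimating the probability that a word occurs in a given document.
  - $x^n \sim q(x \mid z)$: the true distribution. $p(x \mid z, y, w)$: the learning model.
  - (Diagram labels: estimate; $n$; $x$, $y$, $z$.)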

- 7. 1. Latent Dirichlet allocation (LDA)
  - Figure: modeling the generative process of data (words) with LDA, for Document 1 through Document N. Example topics: ART, NAME, MATH. Example words: "Horsthuis", "fractal", "Weierstrass", "draw", "Takagi". (Modified from [4], HW 2020a.)

- 8. 1. Latent Dirichlet allocation (LDA)
  - Same figure as the previous slide (modified from [4], HW 2020a), with the parameters annotated:
  - Topic proportions of document $j$: $b_j = (b_{1j}, \ldots, b_{Hj})$.
  - Word proportions of topic $k$: $a_k = (a_{1k}, \ldots, a_{Mk})$.

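- 9. 1. Latent Dirichlet allocation (LDA)
  - The learning model of LDA:
    $$p(x \mid z, y, A, B) := \prod_{j=1}^{N} \left( \prod_{k=1}^{H} \bigl( b_{kj}\, \mathrm{Cat}(x \mid a_k) \bigr)^{y_k} \right)^{z_j}.$$
    - The document $z$, topic $y$, and word $x$ are one-hot vectors of dimension $N$, $H$, and $M$, respectively.
    - The parameters $A$ ($M \times H$) and $B$ ($H \times N$) are stochastic matrices whose columns sum to one: $\sum_{i} a_{ik} = 1$, $\sum_{k} b_{kj} = 1$.
    - Marginalizing out the topic gives
      $$p(x \mid z, A, B) = \prod_{j=1}^{N} \left( \sum_{k=1}^{H} b_{kj}\, \mathrm{Cat}(x \mid a_k) \right)^{z_j}.$$
  - Example of a stochastic matrix:
    $$\begin{pmatrix} 0.3 & 0.1 & 0.5 \\ 0.3 & 0.1 & 0.1 \\ 0.4 & 0.8 & 0.4 \end{pmatrix}$$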
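The marginalized model above says that, for document $j$, the word distribution is column $j$ of the product $AB$. The following is a minimal sketch of that computation in plain Python. The function name `lda_word_probs` and the values of `B` are hypothetical; `A` reuses the slide's example matrix, assuming its nine values are read row by row.

```python
def lda_word_probs(A, B, doc):
    """Return p(x = i | z = doc, A, B) for every word i (column `doc` of AB)."""
    M, H = len(A), len(B)
    return [sum(A[i][k] * B[k][doc] for k in range(H)) for i in range(M)]


# A is M x H: column k holds the word proportions of topic k (sums to 1).
A = [[0.3, 0.1, 0.5],
     [0.3, 0.1, 0.1],
     [0.4, 0.8, 0.4]]

# B is H x N: column j holds the topic proportions of document j (sums to 1).
# These values are made up for illustration.
B = [[0.5, 0.2],
     [0.3, 0.2],
     [0.2, 0.6]]

probs = lda_word_probs(A, B, doc=0)
print(probs)  # word distribution of document 0; the entries sum to 1
```

Because both matrices are column-stochastic, every column of $AB$ is again a probability vector, which is why the result needs no renormalization.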

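- 10. 1. Latent Dirichlet allocation (LDA)
  - LDA is useful in many fields besides text mining.
    - Image analysis, econometrics, earth science, and more.
  - The estimation performance (generalization error) of LDA has been unknown.
    - Its hierarchical structure makes the model singular.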

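- 12. 2. Generalization error analysis of singular models: the generalization error
  - Let $q(x)$ be the true distribution and $p^*(x) = P(x \mid x^n)$ the predictive distribution.
  - The generalization error $G_n$ is the KL divergence from the true distribution to the predictive distribution:
    $$G_n = \int q(x) \log \frac{q(x)}{p^*(x)} \,\mathrm{d}x.$$
  - (Diagram: true distribution → data → predictive distribution.)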
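For a discrete sample space such as a vocabulary, the integral in $G_n$ becomes a sum. The sketch below evaluates it for two hand-picked probability vectors; `kl_divergence` is a hypothetical helper name, and both distributions are made-up numbers rather than the output of any actual Bayesian inference.

```python
import math


def kl_divergence(q, p):
    """KL(q || p) = sum_x q(x) * log(q(x) / p(x)) for discrete distributions."""
    total = 0.0
    for qx, px in zip(q, p):
        if qx > 0.0:
            if px <= 0.0:
                return math.inf  # q puts mass where p has none
            total += qx * math.log(qx / px)
    return total


q = [0.28, 0.20, 0.52]       # hypothetical true distribution
p_star = [0.30, 0.25, 0.45]  # hypothetical predictive distribution

g = kl_divergence(q, p_star)
print(g)  # non-negative, and zero only when the two distributions coincide
```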

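- 13. 2. Generalization error analysis of singular models
  - Singular learning theory analyzes the generalization error in the singular case.
  - Even when the posterior cannot be approximated by a normal distribution, the behavior of the expected generalization error is known [7] (Watanabe, 2001):
    $$\mathbb{E}[G_n] = \frac{\lambda}{n} - \frac{m-1}{n \log n} + o\!\left(\frac{1}{n \log n}\right).$$
  - The coefficient $\lambda$ is called the real log canonical threshold (RLCT) and $m$ its multiplicity.
    - Both are determined by the algebraic variety formed by the zeros of $\mathrm{KL}(q \,\|\, p)$.
  - $\lambda$ and $m$ also give the leading terms of the marginal likelihood $Z_n$:
    $$-\log Z_n = n S_n + \lambda \log n - (m-1) \log\log n + O_p(1),$$
    where $S_n$ is the empirical entropy.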
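To get a feel for the size of the two leading terms in $\mathbb{E}[G_n]$, they can be evaluated directly. This is only a sketch: the function name is hypothetical, the $o(1/(n \log n))$ remainder is dropped, and the values of $\lambda$ and $m$ in the usage lines are arbitrary placeholders.

```python
import math


def expected_gen_error(lam, m, n):
    """Leading terms lam/n - (m-1)/(n log n) of E[G_n]; requires n > 1.

    The o(1/(n log n)) remainder of the asymptotic expansion is ignored.
    """
    return lam / n - (m - 1) / (n * math.log(n))


# Placeholder values, not results from the slides.
for n in (100, 1000, 10000):
    print(n, expected_gen_error(lam=0.5, m=2, n=n))
```

With $m = 1$ the second term vanishes and the expression reduces to $\lambda / n$, the form shown on the summary slide.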

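- 14. 2. Generalization error analysis of singular models
  - Intuitive meaning of the RLCT $\lambda$: a volume dimension,
    $$\lambda = \lim_{t \to +0} \frac{\log V(t)}{\log t}, \qquad V(t) = \int_{K(w) < t} \varphi(w) \,\mathrm{d}w.$$
    - It is the volume dimension of a neighborhood of the zeros of $K(w) = \mathrm{KL}(q \,\|\, p)$, and it is always a rational number.
  - Figure: schematic of the region $K(w) < t$ as $t \to +0$. Black "+": the set of zeros. Red hatching: the integration domain of $V(t)$.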

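- 15. 2. Generalization error analysis of singular models
  - Intuitive meaning of the RLCT $\lambda$: a volume dimension (definition as on the previous slide).
  - A related concept is the Minkowski dimension $d^*$:
    $$d^* = d - \lim_{t \to +0} \frac{\log \mathcal{V}(t)}{\log t}, \qquad \mathcal{V}(t) = \int_{\mathrm{dist}(S, w) < t} \mathrm{d}w.$$
    - It is a fractal dimension of a subset $S \subset \mathbb{R}^d$ and, unlike $\lambda$, can be irrational.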
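A small worked example may help with the volume-dimension reading of $\lambda$. The function $K(w) = w_1^2 w_2^2$ and the uniform prior on $[0,1]^2$ below are toy assumptions chosen for illustration; they do not appear in the slides. Writing $s = t^{1/2}$ with $0 < t < 1$:

```latex
\begin{align*}
V(t) &= \int_{w_1^2 w_2^2 < t} \mathrm{d}w_1\,\mathrm{d}w_2
      = s + \int_s^1 \frac{s}{w_1}\,\mathrm{d}w_1
      = s\,(1 - \log s), \qquad s = t^{1/2}, \\
\frac{\log V(t)}{\log t}
     &= \frac{1}{2} + \frac{\log\bigl(1 - \tfrac{1}{2}\log t\bigr)}{\log t}
      \;\longrightarrow\; \frac{1}{2} \quad (t \to +0).
\end{align*}
```

So $\lambda = 1/2$ in this toy case, smaller than $d/2 = 1$ for a regular two-parameter model, and the extra factor $-\log t$ in $V(t)$ corresponds to multiplicity $m = 2$. For comparison, the regular choice $K(w) = w_1^2 + w_2^2$ gives $V(t) = \pi t / 4$ and hence $\lambda = 1 = d/2$.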

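- 16. 2. Generalization error analysis of singular models
  - Many studies have sought $(\lambda, m)$:

    | Singular model | Reference |
    | --- | --- |
    | Gaussian mixture model | Yamazaki et al., 2003 [9] |
    | Reduced rank regression (= matrix factorization) | Aoyagi et al., 2005 [1] |
    | Markov model | Zwiernik, 2011 [10] |
    | Non-negative matrix factorization | H et al., 2017/2020 [3]/[5] |
    | … | … |

  - Position of this work: a contribution to the body of knowledge on generalization error analysis of singular models.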

- 17. 2. Generalization error analysis of singular models
  - Same table and positioning statement as the previous slide, with the following comparison added:
  - Prior work: most studies establish only an upper bound on $\lambda$.
  - This work: establishes the exact values of $\lambda$ and $m$ for LDA.

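- 19. 3. Main theorem
  - [Main result of this work] The RLCT $\lambda$ of LDA is determined (Thm. 3.1 in https://arxiv.org/abs/2008.01304). Here $H$ is the number of topics in the learning model and $H_0$ the number of topics in the true distribution.
  - (1) When ① $N + H_0 \le M + H$, ② $M + H_0 \le N + H$, and ③ $H + H_0 \le M + N$ all hold:
    $$\lambda = \frac{1}{8}\left\{ 2(H + H_0)(M + N) - (M - N)^2 - (H + H_0)^2 \right\} - \delta,$$
    $$\delta = \begin{cases} \dfrac{N}{2}, & M + N + H + H_0 \text{ is even}, \\[2mm] \dfrac{N}{2} - \dfrac{1}{8}, & M + N + H + H_0 \text{ is odd}. \end{cases}$$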

- 20. 3. Main theorem
  - [Main result of this work] The RLCT $\lambda$ of LDA is determined (Thm. 3.1 in https://arxiv.org/abs/2008.01304).
  - (2) When ① fails, i.e. $M + H < N + H_0$:
    $$\lambda = \frac{1}{2}\left\{ MH + NH_0 - HH_0 - N \right\}.$$

- 21. 3. Main theorem
  - [Main result of this work] The RLCT $\lambda$ of LDA is determined (Thm. 3.1 in https://arxiv.org/abs/2008.01304).
  - (3) When ② fails, i.e. $N + H < M + H_0$:
    $$\lambda = \frac{1}{2}\left\{ NH + MH_0 - HH_0 - N \right\}.$$

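- 22. 3. Main theorem
  - [Main result of this work] The RLCT $\lambda$ of LDA is determined (Thm. 3.1 in https://arxiv.org/abs/2008.01304).
  - (4) When ③ fails, i.e. $M + N < H + H_0$:
    $$\lambda = \frac{1}{2}\left\{ MN - N \right\}.$$
  - The multiplicity is $m = 2$ in the odd case of (1) and $m = 1$ otherwise.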
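The four cases of the theorem can be collected into a single function. The sketch below transcribes the formulas as quoted on the slides, using exact rational arithmetic. The function name `lda_rlct` is hypothetical, and the code assumes $H_0 \le H$ and $H_0 \le \min(M, N)$ so that at most one of the conditions ①, ②, ③ can fail; consult the paper for the precise assumptions of Thm. 3.1.

```python
from fractions import Fraction


def lda_rlct(M, N, H, H0):
    """Return (lambda, m) for LDA following the four cases quoted above.

    M: vocabulary size, N: number of documents,
    H: topics in the learning model, H0: topics in the true distribution.
    Assumes H0 <= H and H0 <= min(M, N) (an assumption of this sketch).
    """
    if M + H < N + H0:   # case (2): condition 1 fails
        return Fraction(M * H + N * H0 - H * H0 - N, 2), 1
    if N + H < M + H0:   # case (3): condition 2 fails
        return Fraction(N * H + M * H0 - H * H0 - N, 2), 1
    if M + N < H + H0:   # case (4): condition 3 fails
        return Fraction(M * N - N, 2), 1
    # case (1): all three conditions hold
    base = Fraction(2 * (H + H0) * (M + N) - (M - N) ** 2 - (H + H0) ** 2, 8)
    if (M + N + H + H0) % 2 == 0:
        return base - Fraction(N, 2), 1                 # delta = N/2
    return base - Fraction(N, 2) + Fraction(1, 8), 2    # delta = N/2 - 1/8


print(lda_rlct(10, 10, 5, 2))  # (Fraction(24, 1), 2): case (1), odd sum
print(lda_rlct(3, 3, 6, 2))    # (Fraction(3, 1), 1): case (4)
```

In the first example half the parameter count is $\{(M-1)H + (H-1)N\}/2 = 42.5$, so $\lambda = 24$ is well below the value a regular model of the same dimension would have.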

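- 24. 4. Discussion and conclusion
  - What happens when the true distribution is fixed and the number of topics in the model is increased?
  - Figure: the RLCT, $\lambda = \lim_{n \to \infty} n\,\mathbb{E}[G_n]$, and $d/2$, plotted against the number of topics. The behavior differs greatly from that of a regular model.
    - Half the parameter dimension (yellow ◆): increases linearly and is unbounded,
      $$\frac{d}{2} = \frac{(M-1)H + (H-1)N}{2}.$$
    - RLCT of LDA (blue ●): nonlinear and bounded above.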
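The boundedness can be read off from the theorem directly. As a short worked check, fix $M$, $N$, and $H_0$ and let $H$ grow. Conditions ① and ② then hold for all large $H$, while ③ fails as soon as $H > M + N - H_0$, so case (4) applies:

```latex
\begin{align*}
\lambda &= \frac{MN - N}{2} = \frac{N(M-1)}{2}
  && \text{(independent of } H \text{ once } H > M + N - H_0\text{)}, \\
\frac{d}{2} &= \frac{(M-1)H + (H-1)N}{2} = \frac{(M+N-1)H - N}{2}
  && \text{(linear in } H \text{ with slope } \tfrac{M+N-1}{2}\text{)}.
\end{align*}
```

The gap $d/2 - \lambda$ therefore grows without bound as topics are added, which is the contrast the figure illustrates.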

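- 25. 4. Discussion and conclusion
  - How does Bayesian inference compare with other estimation methods?
  - Figure: the generalization error $\mathbb{E}[G_n]$ plotted against the sample size $n$.
    - Regular model of the same dimension (yellow dashed line): a lower bound on the maximum-likelihood/MAP generalization error.
    - LDA (blue solid line): below the regular model of the same dimension.
  - Asymptotically,
    $$\mathbb{E}[G_n^{\mathrm{MAP}}] \approx \frac{\mu}{n}, \qquad \mathbb{E}[G_n^{\mathrm{LDA}}] \approx \frac{\lambda}{n}, \qquad \mathbb{E}[G_n^{\mathrm{Regular}}] \approx \frac{d}{2n}.$$
  - By [8] (Watanabe, 2018), $\lambda \le \dfrac{d}{2} \ll \mu$, and therefore $\mu - \lambda \ge \dfrac{d}{2} - \lambda$.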

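- 26. 4. Discussion and conclusion
  - Application to model selection
    - Model selection based on the marginal likelihood: BIC, WBIC, sBIC, and others.
  - The singular Bayesian information criterion, sBIC [2] (Drton & Plummer, 2017)
    - Approximates the marginal likelihood using its asymptotic form from singular learning theory:
      $$-\log Z_n = n S_n + \lambda \log n - (m-1) \log\log n + O_p(1).$$
    - Has smaller numerical variance than WBIC and is more accurate.
    - Requires the theoretical value of the RLCT $\lambda$.
  - Main theorem → model selection by sBIC becomes possible for LDA.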
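The role of $\lambda$ in the expansion can be illustrated by comparing its sample-size-dependent penalty with the BIC penalty $(d/2) \log n$ of a regular model. This is not the sBIC procedure of Drton & Plummer, which combines such terms across candidate submodels; it is only a sketch of the penalty term, with hypothetical function names. The values $\lambda = 24$, $m = 2$, $d = 85$ come from evaluating case (1) of the theorem and the parameter count by hand for $M = N = 10$, $H = 5$, $H_0 = 2$.

```python
import math


def singular_penalty(lam, m, n):
    """lam * log n - (m - 1) * log log n, the n-dependent part of -log Z_n - n S_n."""
    return lam * math.log(n) - (m - 1) * math.log(math.log(n))


def bic_penalty(d, n):
    """(d / 2) * log n, the BIC penalty for a regular model with d parameters."""
    return 0.5 * d * math.log(n)


n = 1000
print(singular_penalty(lam=24, m=2, n=n))  # penalty using the RLCT
print(bic_penalty(d=85, n=n))              # penalty using the parameter count
```

Since $\lambda \le d/2$, the penalty based on the RLCT is the smaller of the two here, so BIC would over-penalize the LDA model in this example.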

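- 27. 4. Discussion and conclusion
  - [Conclusion]
  - LDA is a hierarchical model used for text analysis and other tasks.
    - Its hierarchy makes it a singular model, so its Bayesian generalization error was unknown.
  - Singular learning theory: the Bayesian generalization error is determined by the RLCT.
    - This makes the analysis of the Bayesian generalization error of LDA possible.
  - This work determines the RLCT of LDA exactly.
    - Precise model selection by sBIC becomes possible.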

- 28. References
  - [1] Aoyagi, M & Watanabe, S. Stochastic complexities of reduced rank regression in Bayesian estimation. Neural Netw. 2005;18(7):924–33.
  - [2] Drton, M & Plummer, M. A Bayesian information criterion for singular models. J R Stat Soc B. 2017;79:323–80 (with discussion).
  - [3] H, N & Watanabe, S. Tighter upper bound of real log canonical threshold of non-negative matrix factorization and its application to Bayesian inference. In IEEE Symposium Series on Computational Intelligence (IEEE SSCI). 2017. pp. 718–725.
  - [4] H, N & Watanabe, S. Asymptotic Bayesian generalization error in latent Dirichlet allocation. SN Computer Science. 2020a;1(69):1–22.
  - [5] H, N. Variational approximation error in non-negative matrix factorization. Neural Netw. 2020b;126(June):65–75.
  - [6] Nakada, R & Imaizumi, M. Adaptive approximation and generalization of deep neural network with intrinsic dimensionality. JMLR. 2020;21(174):1–38.
  - [7] Watanabe, S. Algebraic geometrical methods for hierarchical learning machines. Neural Netw. 2001;13(4):1049–60.
  - [8] Watanabe, S. Mathematical theory of Bayesian statistics. Florida: CRC Press. 2018.
  - [9] Yamazaki, K & Watanabe, S. Singularities in mixture models and upper bounds of stochastic complexity. Neural Netw. 2003;16(7):1029–38.
  - [10] Zwiernik, P. An asymptotic behaviour of the marginal likelihood for general Markov models. J Mach Learn Res. 2011;12(Nov):3283–310.

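- 29. Voice software and conflict of interest (CoI)
  - [Voice software] "VOICEROID2 Kotonoha Akane & Aoi" (AHS Co., Ltd.)
  - [CoI]
    - This presentation is based on the author's personal research activity.
    - It is entirely unrelated to the author's duties at the affiliated organizations.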
