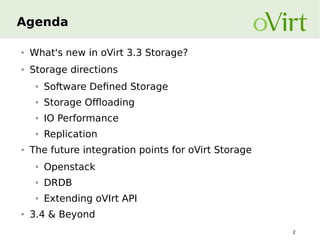

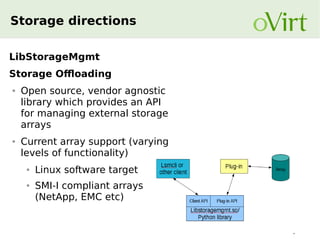

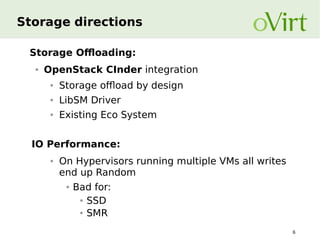

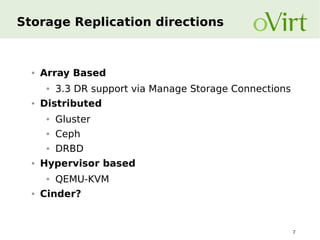

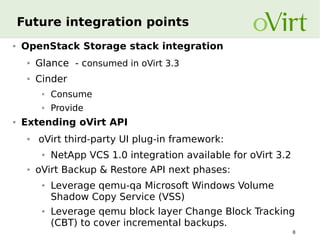

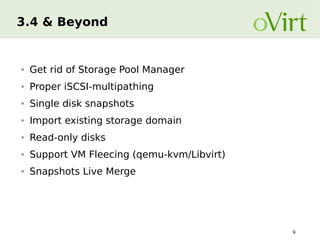

This presentation, given as a Red Hat session at KVM Forum, covers recent advances in oVirt storage and its future integration points. Topics include new storage features in oVirt 3.3, the direction of software-defined storage, storage offloading, and storage replication. It stresses the role of APIs in managing storage and integration with OpenStack, and outlines developments expected in oVirt 3.4 and later releases.