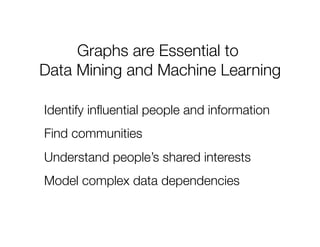

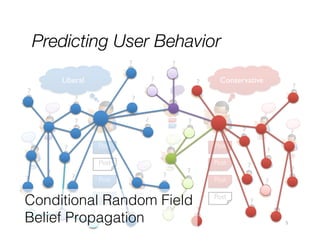

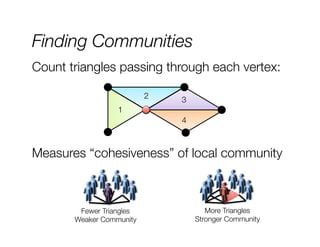

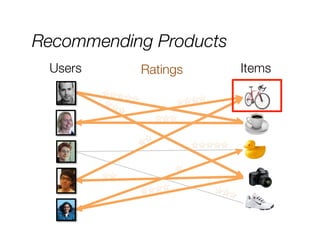

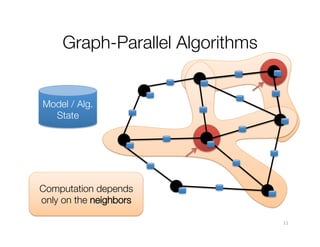

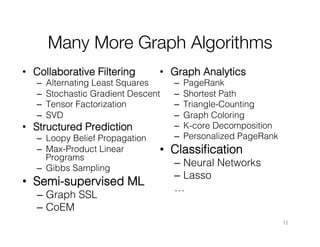

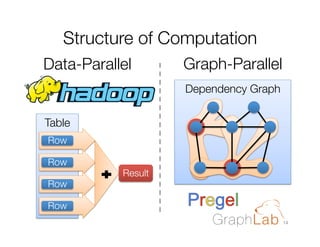

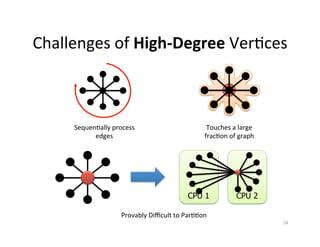

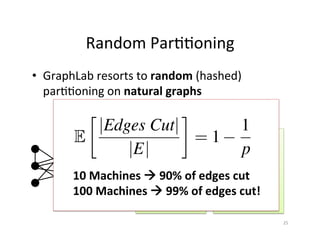

The document discusses machine learning techniques for graphs and graph-parallel computing. It describes how graphs can model real-world data with entities as vertices and relationships as edges. Common machine learning tasks on graphs include identifying influential entities, finding communities, modeling dependencies, and predicting user behavior. The document introduces the concept of graph-parallel programming models that allow algorithms to be expressed by having each vertex perform computations based on its local neighborhood. It presents examples of graph algorithms like PageRank, product recommendations, and identifying leaders that can be implemented in a graph-parallel manner. Finally, it discusses challenges of analyzing large real-world graphs and how systems like GraphLab address these challenges through techniques like vertex-cuts and asynchronous execution.

![Recommending Products](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-8-320.jpg)

Low-Rank Matrix Factorization: Netflix ratings matrix (Users x Movies) ≈ User Factors (U) x Movie Factors (M)

Figure: user-movie graph with a factor vector f(1), ..., f(5) on each vertex and ratings r13, r14, r24, r25 on the edges.

Iterate:

$$f[i] = \arg\min_{w \in \mathbb{R}^d} \sum_{j \in \mathrm{Nbrs}(i)} \left(r_{ij} - w^T f[j]\right)^2 + \|w\|_2^2$$
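The per-vertex update on this slide is a small regularized least-squares problem, so it has a closed-form solution: solve (Σ f[j] f[j]ᵀ + I) w = Σ r_ij f[j] over the neighbors j of i. The sketch below is one way to write that in plain Python. The names `solve` and `update_factor`, the `reg` parameter (set to 1 to match the unweighted norm term), and the toy ratings are all assumptions made for illustration, not part of the talk.

```python
def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[k]] for k, row in enumerate(A)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            factor = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= factor * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(M[r][c] * x[c] for c in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x


def update_factor(i, ratings, factors, reg=1.0):
    """Minimize sum_j (r_ij - w . f[j])^2 + reg * ||w||^2 over w for vertex i."""
    d = len(factors[i])
    A = [[reg if p == q else 0.0 for q in range(d)] for p in range(d)]
    rhs = [0.0] * d
    for j, r in ratings[i].items():
        fj = factors[j]
        for p in range(d):
            rhs[p] += r * fj[p]
            for q in range(d):
                A[p][q] += fj[p] * fj[q]
    return solve(A, rhs)


# Toy data (made up): users 1-2, movies 3-5, each rating stored in both directions.
ratings = {
    1: {3: 5.0, 4: 3.0},
    2: {4: 4.0, 5: 1.0},
    3: {1: 5.0},
    4: {1: 3.0, 2: 4.0},
    5: {2: 1.0},
}
factors = {v: [1.0, 0.1 * v] for v in ratings}
for _ in range(10):  # a few sweeps; every vertex reads only its neighbors
    for i in ratings:
        factors[i] = update_factor(i, ratings, factors)
```

Each call touches only the factors of the vertices adjacent to `i`, which is what makes the update fit the graph-parallel style.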

![Identifying Leaders](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-10-320.jpg)

$$R[i] = 0.15 + \sum_{j \in \mathrm{Nbrs}(i)} w_{ji} R[j]$$

Here R[i] is the rank of user i, and the sum is the weighted sum of the neighbors’ ranks.

- Everyone starts with equal ranks
- Update ranks in parallel
- Iterate until convergence
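The three steps on this slide (equal starting ranks, parallel updates, iterate until convergence) can be sketched as a synchronous loop. The function name `pagerank`, the tolerance, the toy graph, and the choice of weights w_ji = 0.85 / out-degree(j) are assumptions for illustration; the slide does not say how the weights are set.

```python
def pagerank(in_edges, base=0.15, tol=1e-9, max_iters=1000):
    """in_edges[i] maps each in-neighbor j of i to the weight w_ji."""
    ranks = {i: 1.0 for i in in_edges}  # everyone starts with equal ranks
    for _ in range(max_iters):
        # every new rank is computed from the previous ranks only,
        # so all vertices could be updated in parallel
        new = {
            i: base + sum(w * ranks[j] for j, w in nbrs.items())
            for i, nbrs in in_edges.items()
        }
        delta = max(abs(new[i] - ranks[i]) for i in ranks)
        ranks = new
        if delta < tol:  # iterate until convergence
            break
    return ranks


# Toy graph (made up): a <-> b, c -> a; every vertex has out-degree 1.
in_edges = {"a": {"b": 0.85, "c": 0.85}, "b": {"a": 0.85}, "c": {}}
ranks = pagerank(in_edges)
```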

![How should we program graph-parallel algorithms?](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-15-320.jpg)

“Think like a Vertex.” - Pregel [SIGMOD’10]

![The Graph-Parallel Abstraction](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-16-320.jpg)

- A user-defined Vertex-Program runs on each vertex
- Graph constrains interaction along edges
  - Using messages (e.g., Pregel [PODC’09, SIGMOD’10])
  - Through shared state (e.g., GraphLab [UAI’10, VLDB’12])
- Parallelism: run multiple vertex programs simultaneously
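To make the message-based variant concrete, here is a minimal sketch of a vertex program that exchanges messages along edges in synchronous rounds. It propagates the largest value in the graph to every vertex. This is not Pregel's API; the function name `propagate_max`, the data layout, and the toy graph are assumptions for illustration.

```python
def propagate_max(values, nbrs):
    """Each vertex repeatedly adopts the largest value it receives."""
    values = dict(values)
    active = set(values)
    supersteps = 0
    while active:
        # active vertices send their current value along their edges
        inbox = {v: [] for v in values}
        for v in active:
            for u in nbrs[v]:
                inbox[u].append(values[v])
        # a vertex that receives a larger value updates and stays active
        active = set()
        for v, msgs in inbox.items():
            if msgs and max(msgs) > values[v]:
                values[v] = max(msgs)
                active.add(v)
        supersteps += 1
    return values, supersteps


# Toy path graph (made up): 1 - 2 - 3 - 4
nbrs = {1: [2], 2: [1, 3], 3: [2, 4], 4: [3]}
result, steps = propagate_max({1: 3, 2: 6, 3: 2, 4: 1}, nbrs)
```

A vertex only ever sees what arrives over its own edges, which is the constraint the slide describes; the shared-state variant instead lets a vertex read its neighbors' data directly.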

![The GraphLab Vertex Program](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-17-320.jpg)

Vertex Programs directly access adjacent vertices and edges

    GraphLab_PageRank(i)
      // Compute sum over neighbors
      total = 0
      foreach (j in neighbors(i)):
        total = total + R[j] * wji

      // Update the PageRank
      R[i] = 0.15 + total

      // Trigger neighbors to run again
      if R[i] not converged then
        signal nbrsOf(i) to be recomputed

Figure: example graph with vertices 1 to 4; the term R[4] * w41 is the contribution of vertex 4 to vertex 1.

Signaled vertices are recomputed eventually.
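The signaling behavior in this vertex program can be sketched with a simple work queue: a vertex is recomputed only when something it depends on has changed. This is a single-threaded illustration, not GraphLab's scheduler. The name `run_signaled_pagerank`, the FIFO queue, the tolerance, and the choice to signal only the vertices that read R[i] (its out-neighbors) are assumptions.

```python
from collections import deque


def run_signaled_pagerank(in_edges, out_nbrs, base=0.15, tol=1e-6):
    """in_edges[i] maps in-neighbor j to w_ji; out_nbrs[i] lists readers of R[i]."""
    ranks = {v: 1.0 for v in in_edges}
    queue = deque(in_edges)   # initially every vertex is signaled
    queued = set(in_edges)
    while queue:
        i = queue.popleft()
        queued.discard(i)
        # compute the sum over neighbors by reading their ranks directly
        total = sum(ranks[j] * w for j, w in in_edges[i].items())
        old = ranks[i]
        ranks[i] = base + total
        # if R[i] has not converged, signal the vertices that depend on it
        if abs(ranks[i] - old) > tol:
            for k in out_nbrs[i]:
                if k not in queued:
                    queue.append(k)
                    queued.add(k)
    return ranks


# Toy graph (made up): a <-> b, c -> a, with w_ji = 0.85 / out-degree(j).
in_edges = {"a": {"b": 0.85, "c": 0.85}, "b": {"a": 0.85}, "c": {}}
out_nbrs = {"a": ["b"], "b": ["a"], "c": ["a"]}
signaled_ranks = run_signaled_pagerank(in_edges, out_nbrs)
```

Vertices whose inputs stop changing drop out of the queue, so work concentrates on the parts of the graph that are still moving.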

![A Common Pattern in Vertex Programs](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-27-320.jpg)

The vertex program splits into three phases: Gather Information About Neighborhood, Update Vertex, and Signal Neighbors & Modify Edge Data.

    GraphLab_PageRank(i)
      // Gather Information About Neighborhood
      // Compute sum over neighbors
      total = 0
      foreach (j in neighbors(i)):
        total = total + R[j] * wji

      // Update Vertex
      // Update the PageRank
      R[i] = 0.15 + total

      // Signal Neighbors & Modify Edge Data
      // Trigger neighbors to run again
      priority = |R[i] - oldR[i]|
      if R[i] not converged then
        signal neighbors(i) with priority
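The three phases named on this slide can be sketched as three small functions driven by a priority queue, so that vertices signaled with the largest change run first. The function names, the use of `heapq` with negated priorities, the tolerance, and the toy graph are assumptions for illustration; the 0.15 base term is taken from the rank equation on the earlier slide.

```python
import heapq


def gather(i, ranks, in_edges):
    """Gather information about the neighborhood: weighted sum of ranks."""
    return sum(ranks[j] * w for j, w in in_edges[i].items())


def apply_update(i, total, ranks, base=0.15):
    """Update the vertex and return the size of the change."""
    old = ranks[i]
    ranks[i] = base + total
    return abs(ranks[i] - old)


def scatter(i, priority, out_nbrs, heap, tol):
    """Signal dependent vertices, using the change as their priority."""
    if priority > tol:
        for k in out_nbrs[i]:
            heapq.heappush(heap, (-priority, k))  # negate: heapq is a min-heap


def run_prioritized(in_edges, out_nbrs, tol=1e-6):
    ranks = {v: 1.0 for v in in_edges}
    heap = [(0.0, v) for v in in_edges]
    heapq.heapify(heap)
    while heap:
        _, i = heapq.heappop(heap)
        change = apply_update(i, gather(i, ranks, in_edges), ranks)
        scatter(i, change, out_nbrs, heap, tol)
    return ranks


# Toy graph (made up): a <-> b, c -> a, with w_ji = 0.85 / out-degree(j).
in_edges = {"a": {"b": 0.85, "c": 0.85}, "b": {"a": 0.85}, "c": {}}
out_nbrs = {"a": ["b"], "b": ["a"], "c": ["a"]}
prioritized_ranks = run_prioritized(in_edges, out_nbrs)
```

A vertex may sit in the heap more than once; the extra visits are harmless because a recomputation that changes nothing signals nobody.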

![Minimizing Communication in PowerGraph](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-29-320.jpg)

- Communication is linear in the number of machines each vertex spans
- A vertex-cut minimizes the number of machines each vertex spans

Percolation theory suggests that power law graphs have good vertex cuts. [Albert et al. 2000]
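Since communication grows with the number of machines each vertex spans, one way to compare edge placements is to measure that span directly. The sketch below assigns each edge to a machine and reports the average span per vertex. The name `replication_factor`, the `place` callback, and the star-graph example are assumptions for illustration and say nothing about how PowerGraph itself places edges.

```python
def replication_factor(edges, num_machines, place):
    """Average number of machines spanned per vertex under an edge placement.

    place(u, v, num_machines) returns the machine that stores edge (u, v).
    """
    spans = {}
    for u, v in edges:
        machine = place(u, v, num_machines)
        spans.setdefault(u, set()).add(machine)
        spans.setdefault(v, set()).add(machine)
    return sum(len(machines) for machines in spans.values()) / len(spans)


# Toy star graph (made up): hub 0 connected to leaves 1..8.
star = [(0, leaf) for leaf in range(1, 9)]

# Spread the hub's edges over 4 machines: only the hub is replicated.
spread = replication_factor(star, 4, lambda u, v, p: v % p)

# Put every edge on machine 0: no replication, but no parallelism either.
together = replication_factor(star, 4, lambda u, v, p: 0)
```

In the spread placement the eight leaves each live on one machine and only the high-degree hub spans all four, which hints at why cutting a few high-degree vertices can be cheap on graphs with skewed degree distributions.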

![PageRank on Twitter Follower Graph](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-31-320.jpg)

Natural Graph with 40M Users, 1.4 Billion Links

Figure: bar chart of Runtime Per Iteration (axis from 0 to 200) for Hadoop, GraphLab, Twister, Piccolo, and PowerGraph.

PowerGraph: order-of-magnitude gain by exploiting properties of Natural Graphs

- Hadoop results from [Kang et al. ’11]
- Twister (in-memory MapReduce) [Ekanayake et al. ’10]

![Triangle Counting on Twitter](https://image.slidesharecdn.com/joeygonzalezgraphlabmlconf2013-131119180112-phpapp01/85/Joey-gonzalez-graph-lab-m-lconf-2013-34-320.jpg)

40M Users, 1.4 Billion Links

Counted: 34.8 Billion Triangles

- Hadoop [WWW’11]: 1536 Machines, 423 Minutes
- 64 Machines, 15 Seconds (1000 x Faster)

S. Suri and S. Vassilvitskii, “Counting triangles and the curse of the last reducer,” WWW’11
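For readers unfamiliar with the task, triangle counting can be phrased per edge: the triangles through an edge (u, v) are the common neighbors of u and v. The sketch below is a single-machine illustration of that idea, not the implementation benchmarked on the slide; the function name `count_triangles` and the toy graph are assumptions.

```python
def count_triangles(edges):
    """Count triangles in an undirected graph given as a list of (u, v) pairs."""
    nbrs = {}
    for u, v in edges:
        if u == v:
            continue  # ignore self-loops
        nbrs.setdefault(u, set()).add(v)
        nbrs.setdefault(v, set()).add(u)
    total = 0
    for u in nbrs:
        for v in nbrs[u]:
            if u < v:  # visit each undirected edge once
                total += len(nbrs[u] & nbrs[v])
    # every triangle is found once from each of its three edges
    return total // 3


# Toy graph (made up): one triangle 1-2-3 plus a pendant edge 3-4.
triangles = count_triangles([(1, 2), (2, 3), (1, 3), (3, 4)])
```

The work per edge depends only on the two endpoint neighborhoods, so the computation fits the same vertex-centric pattern as the earlier examples.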