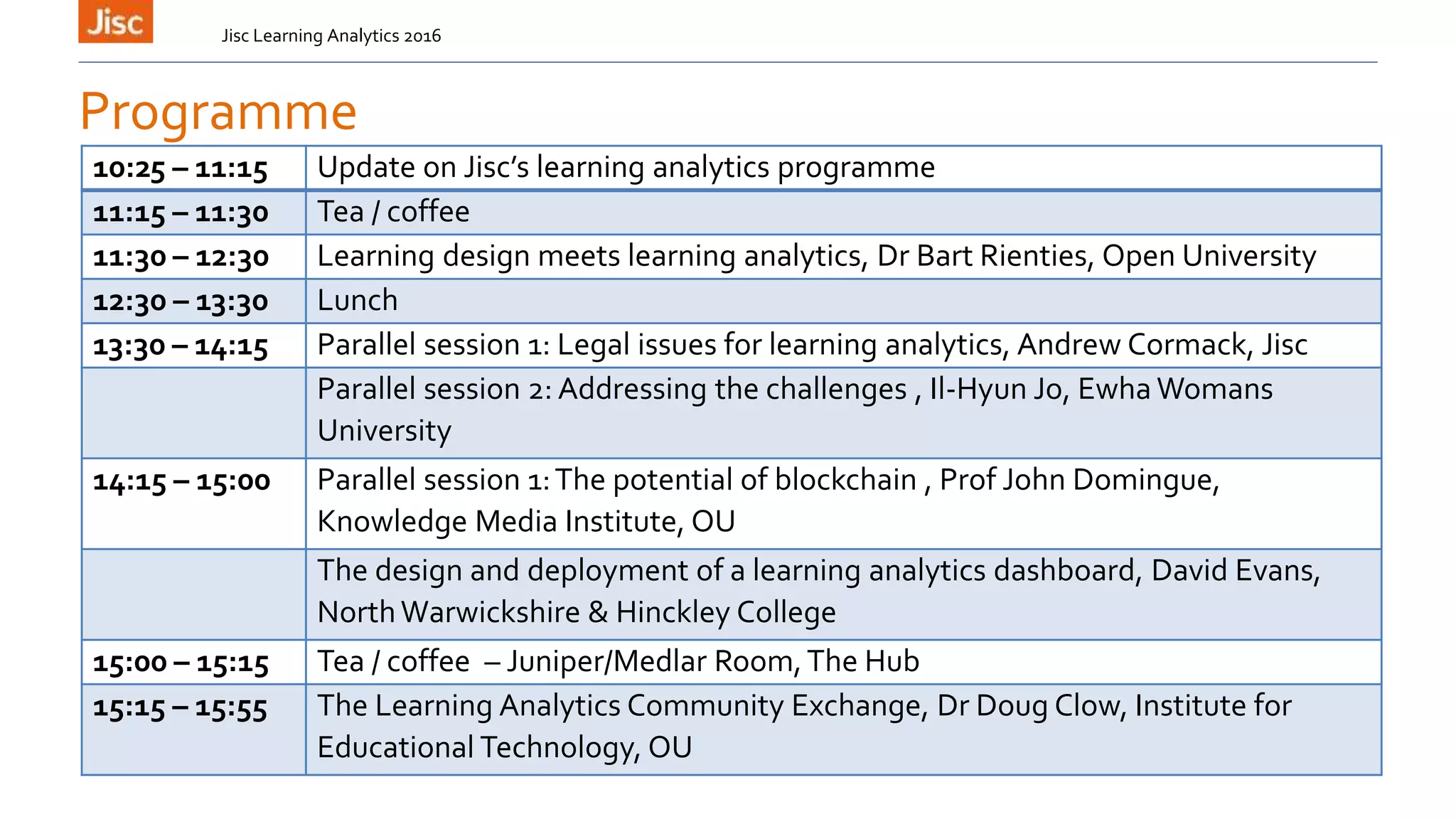

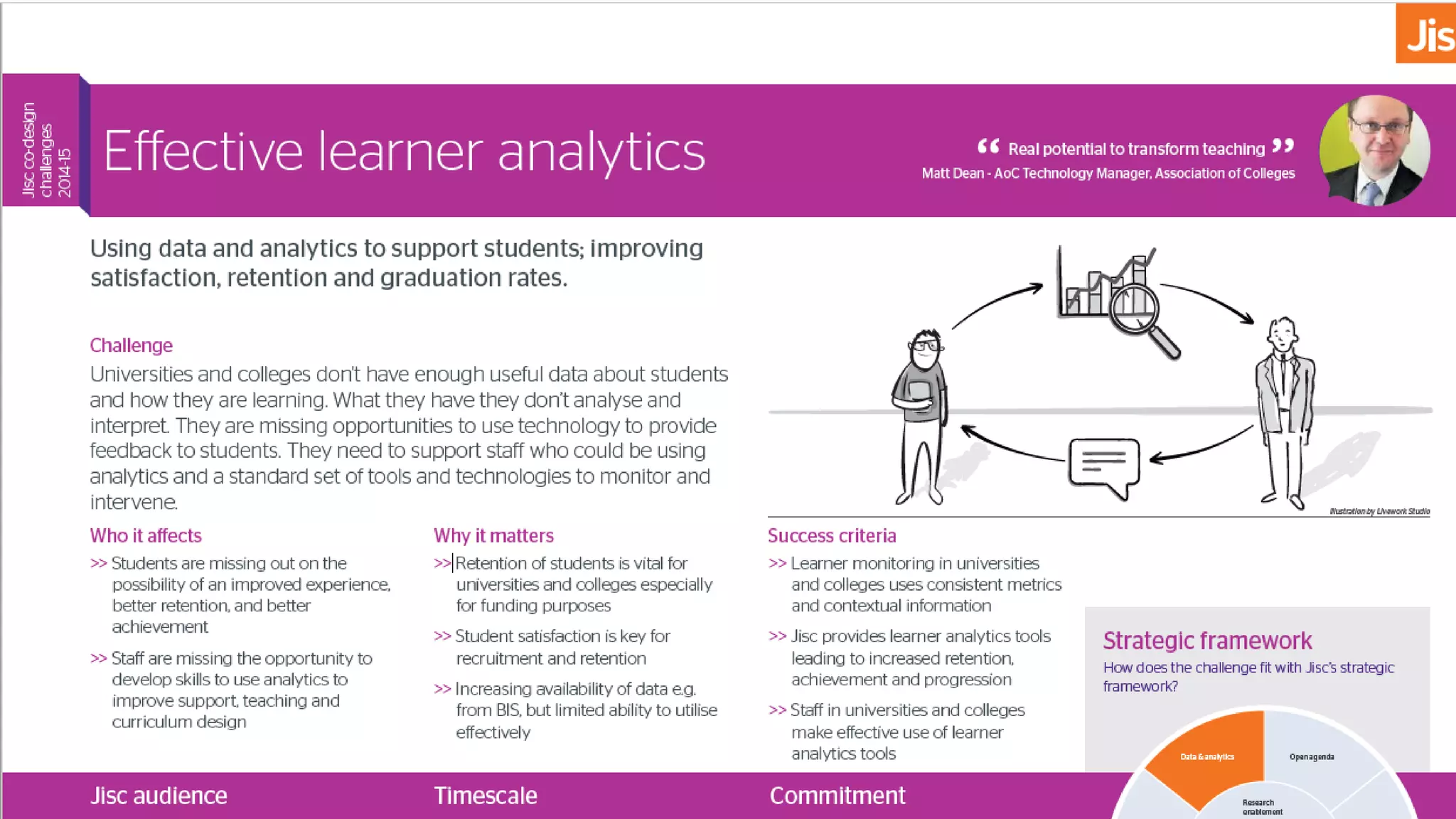

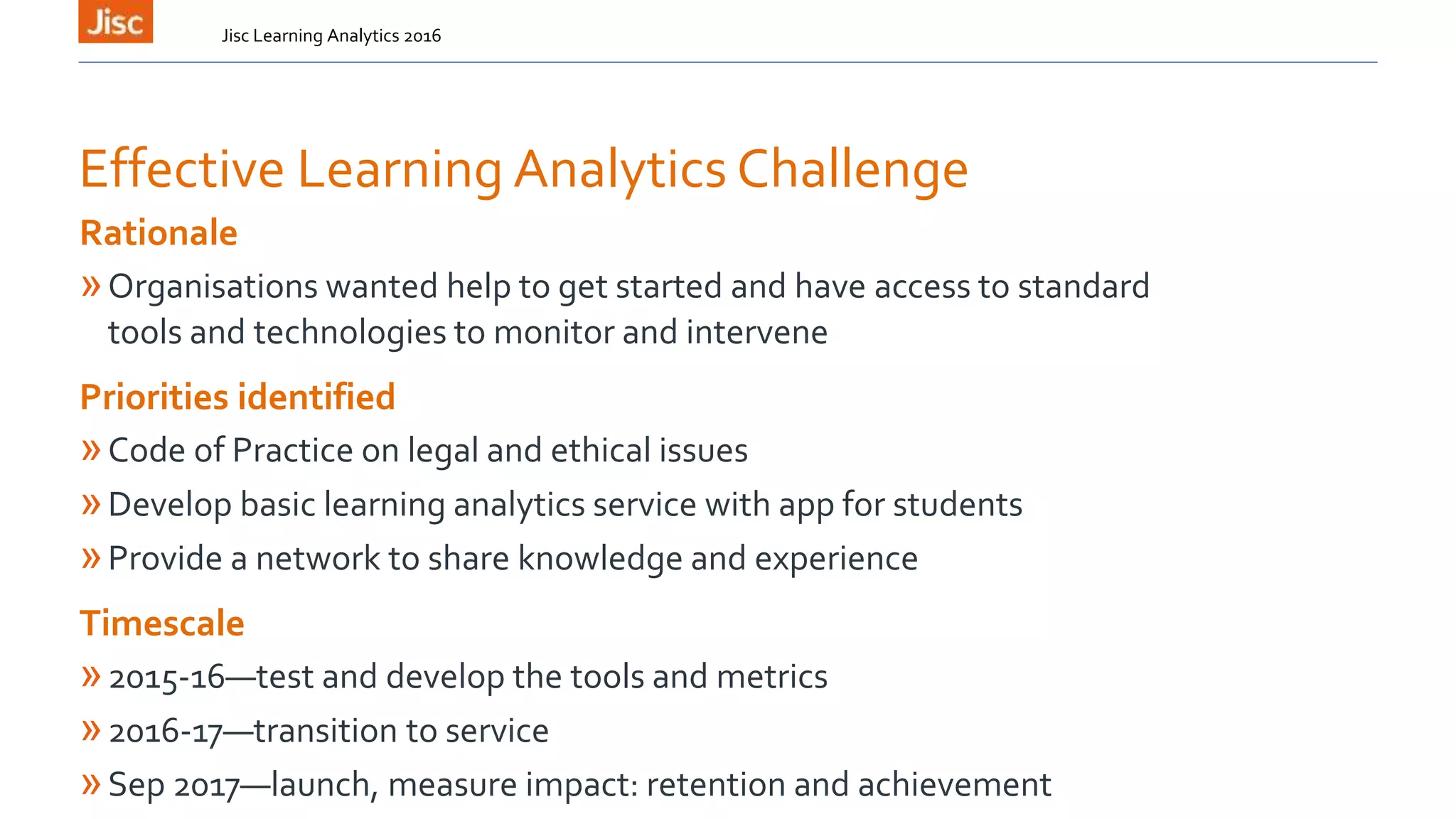

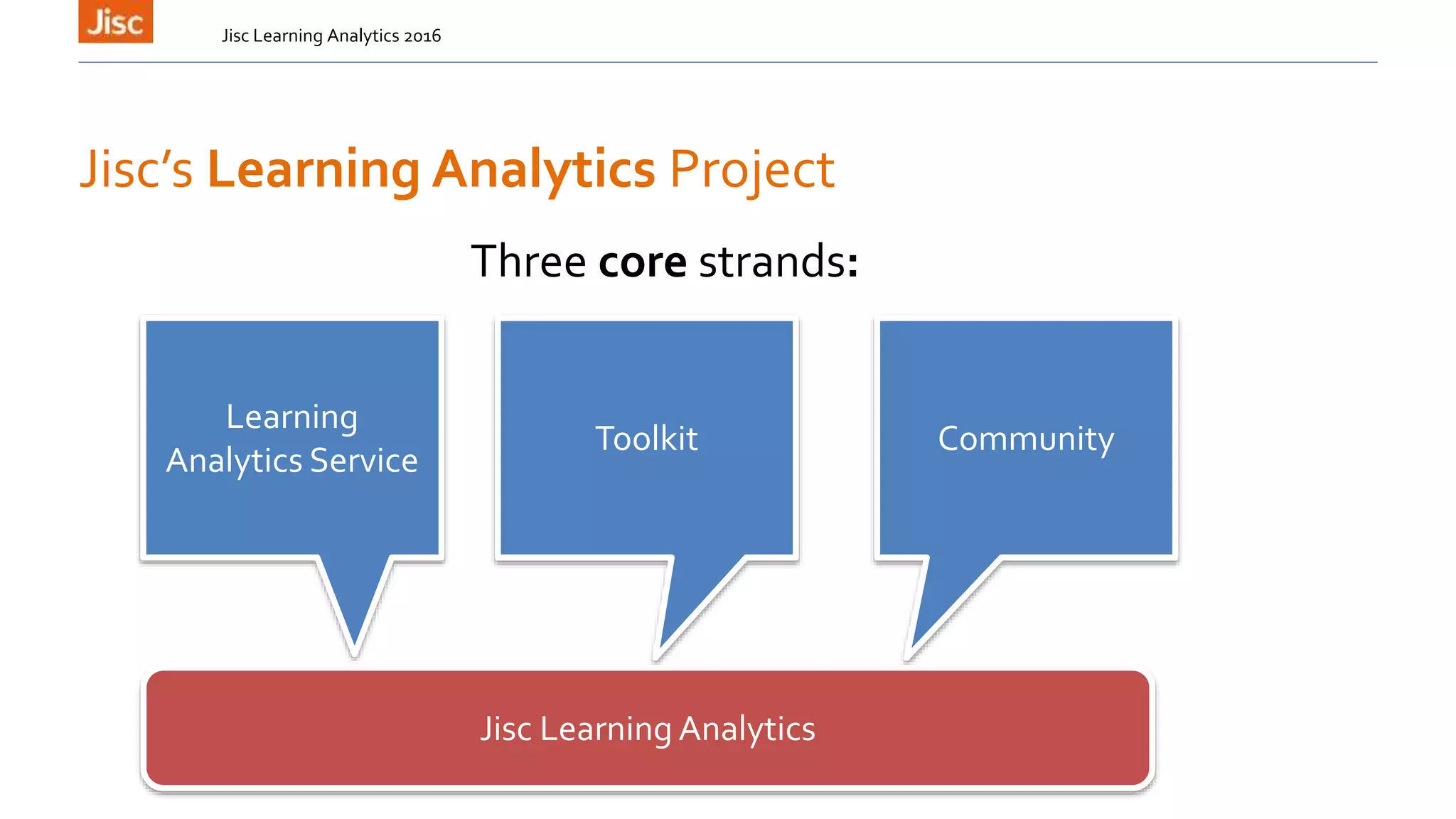

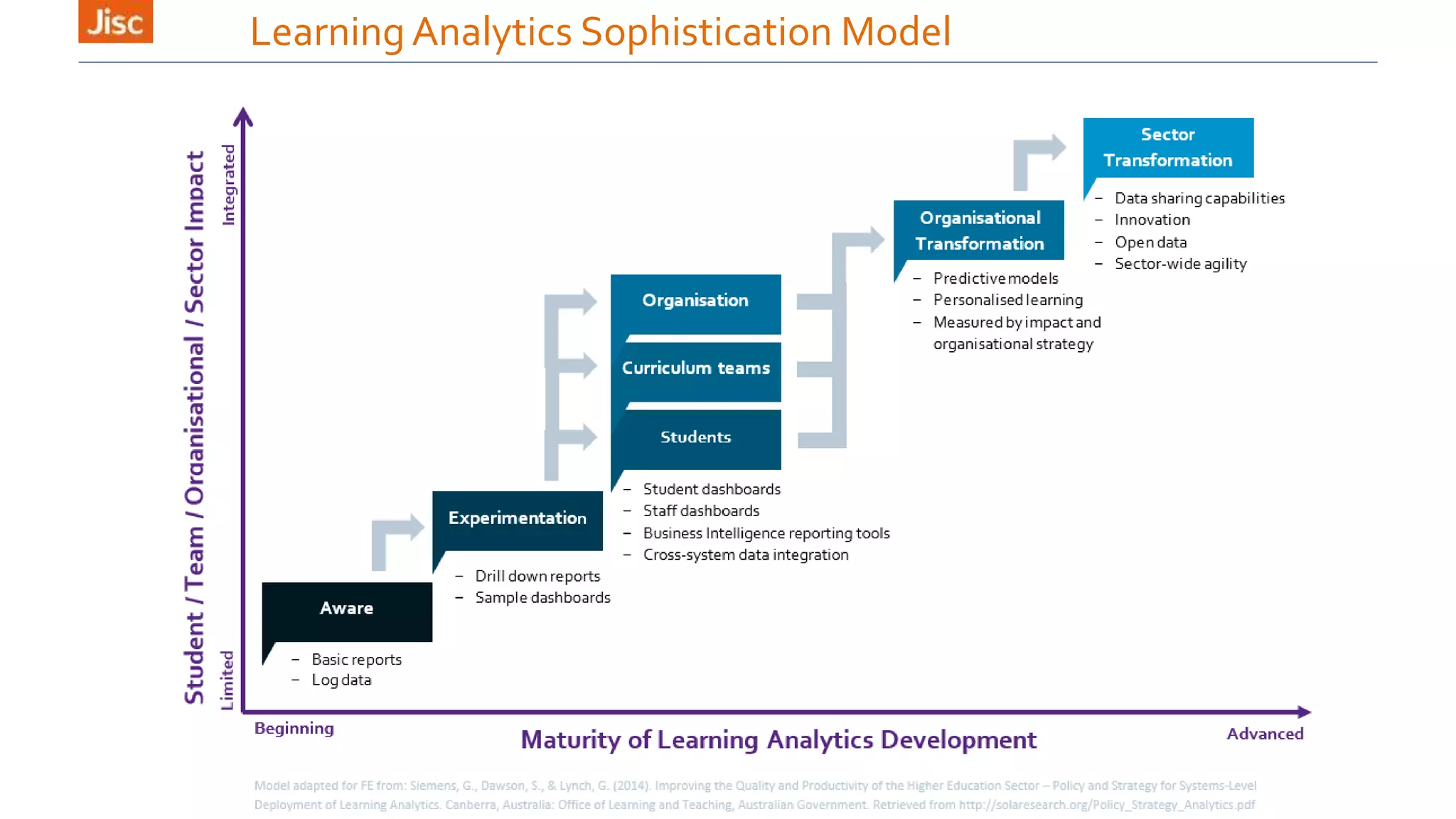

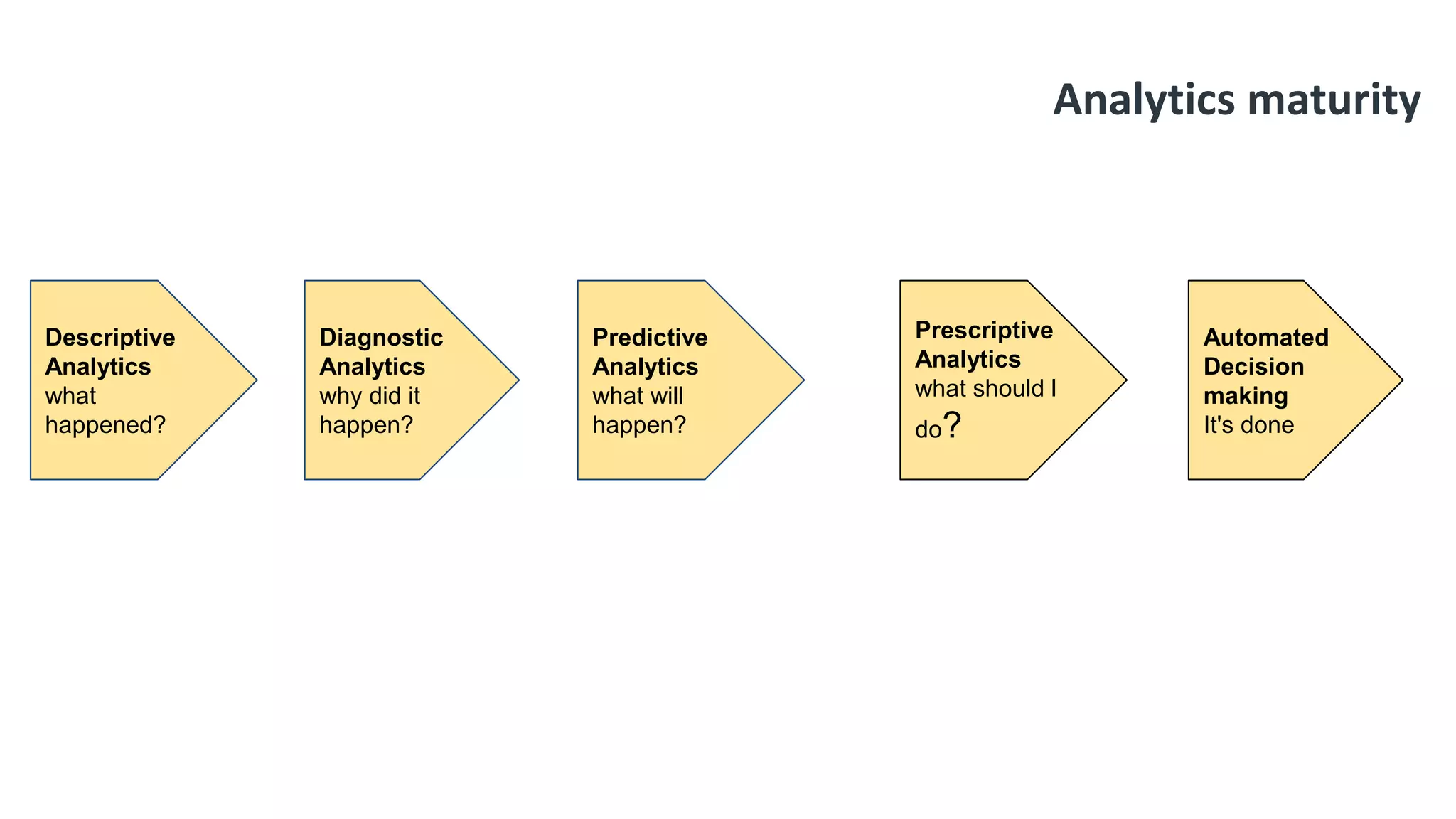

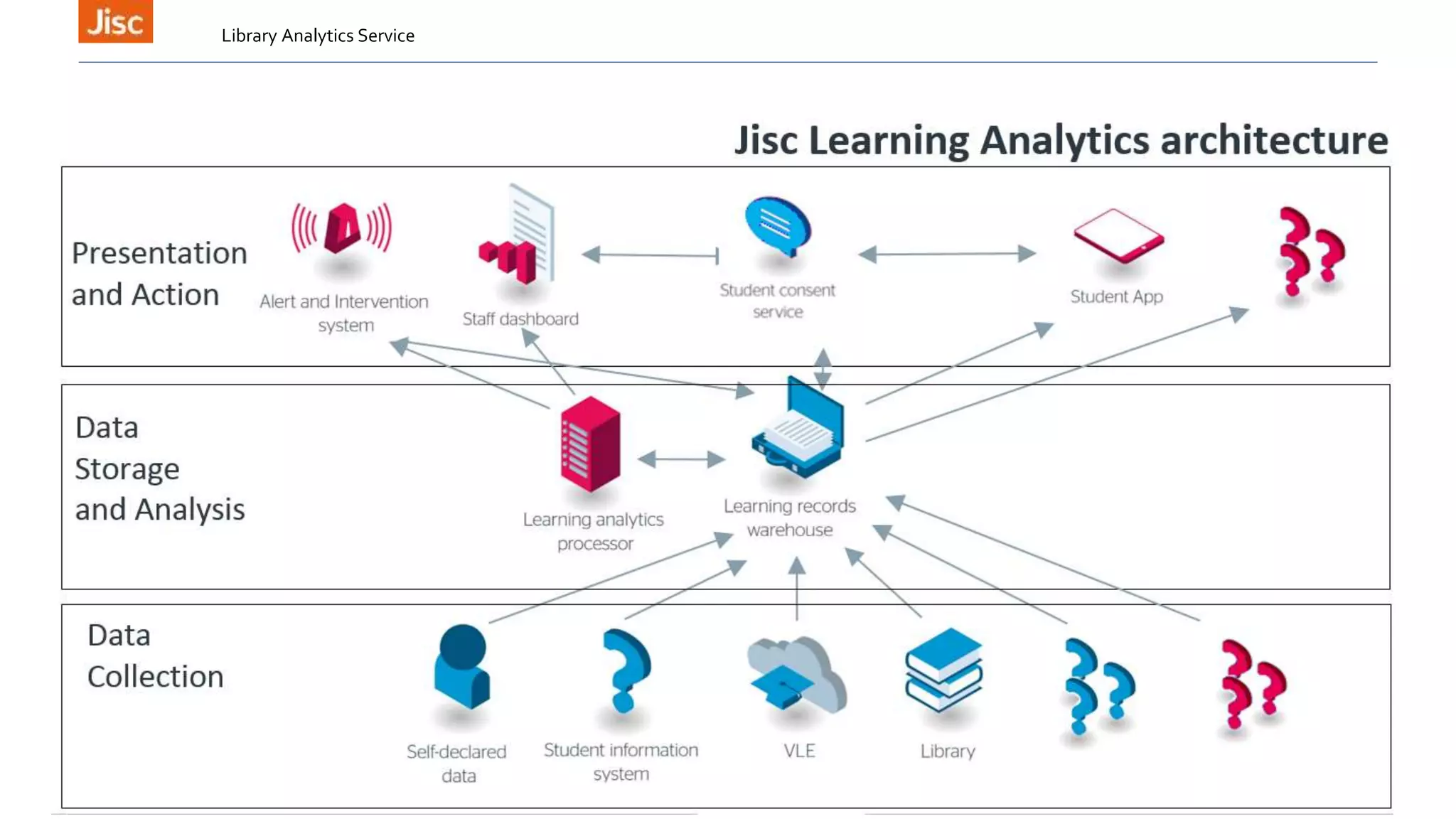

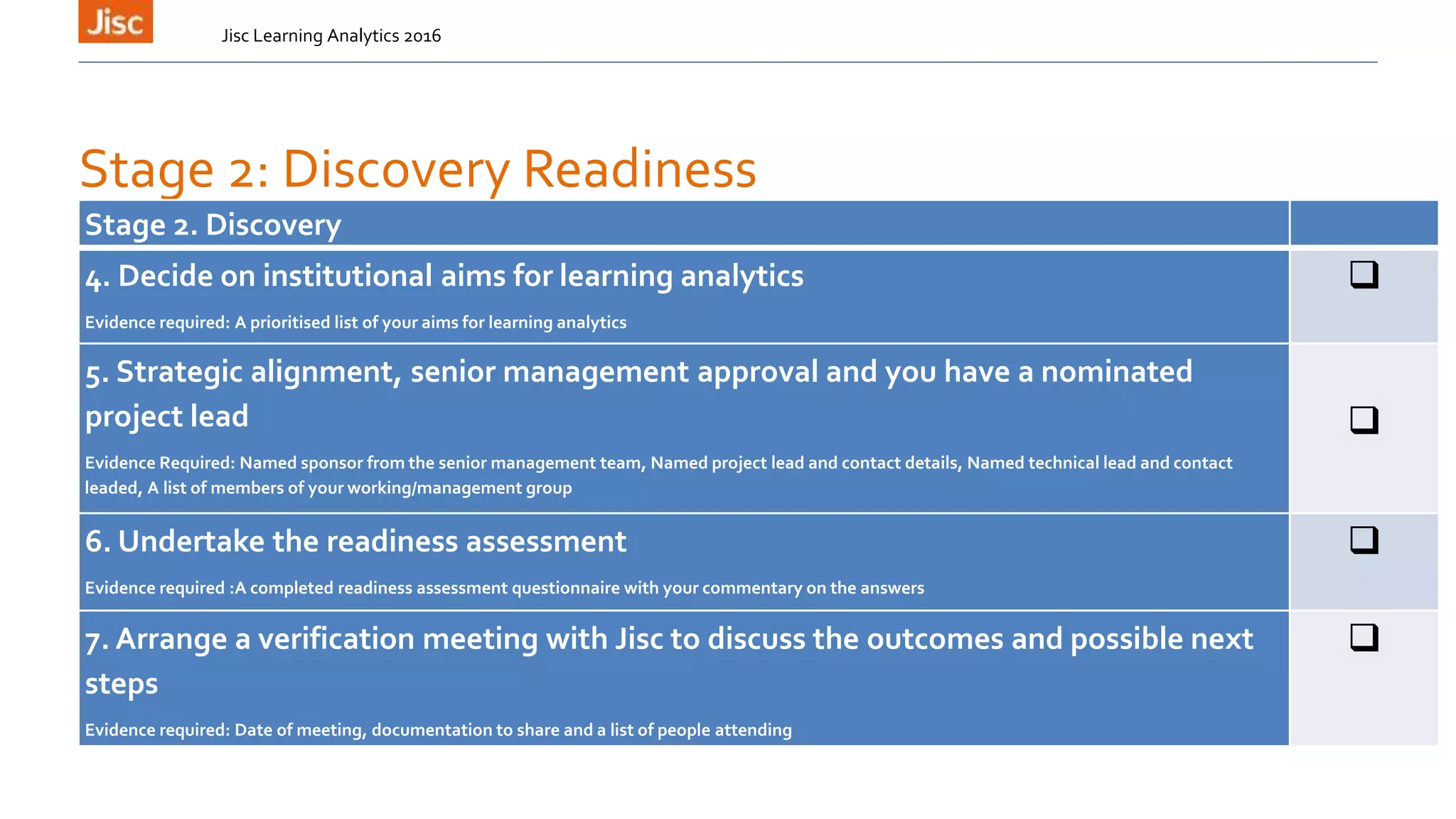

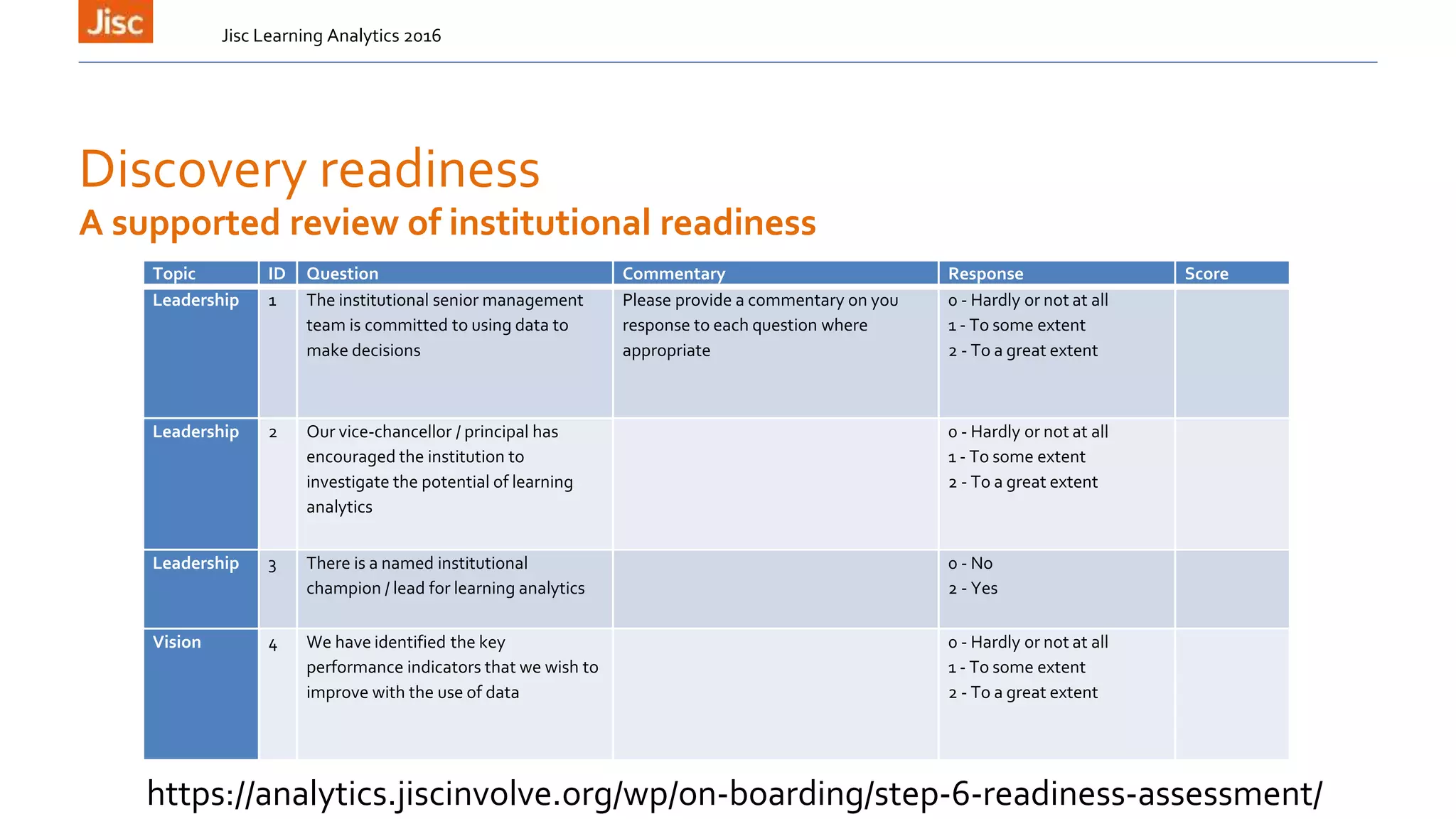

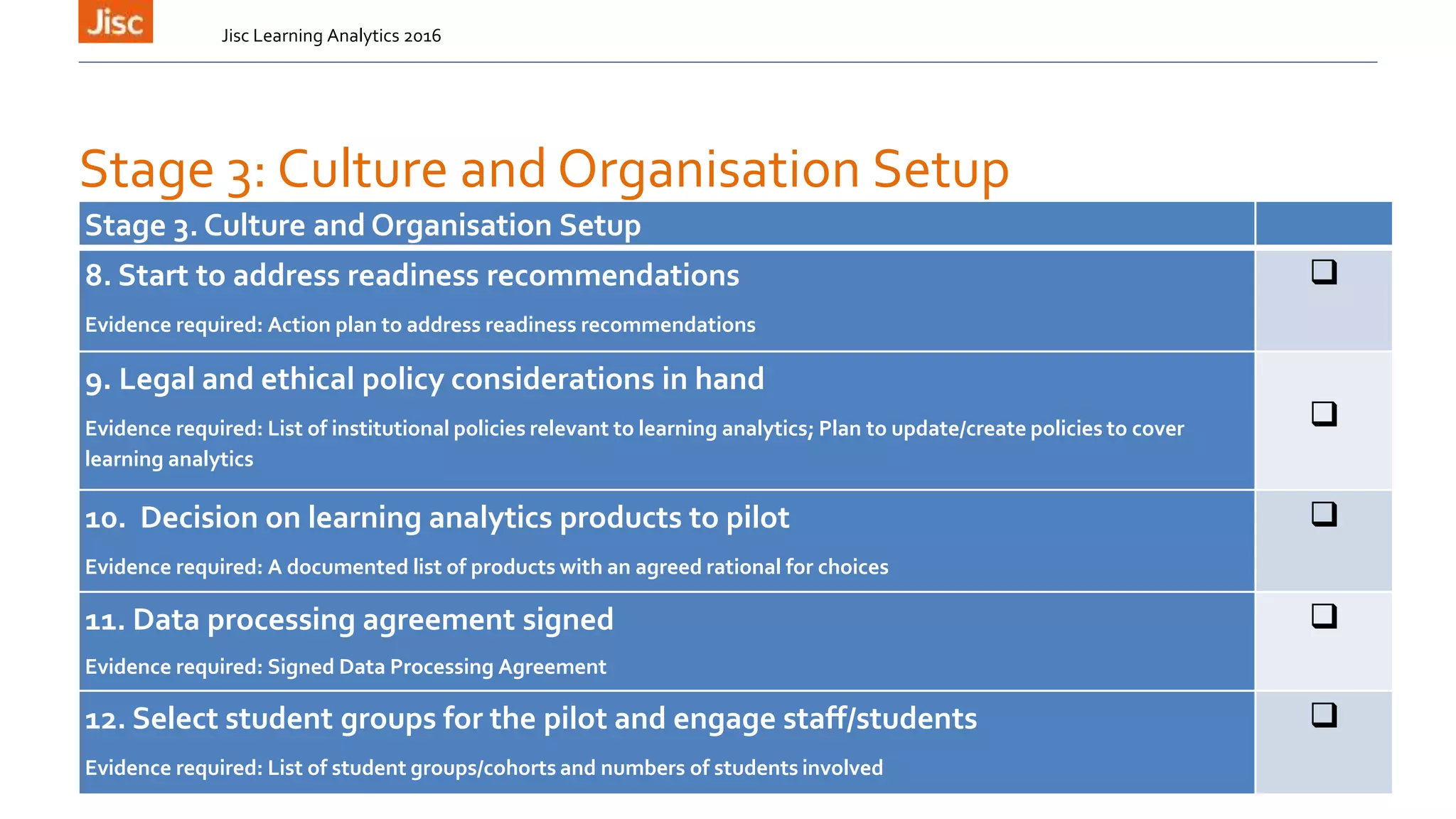

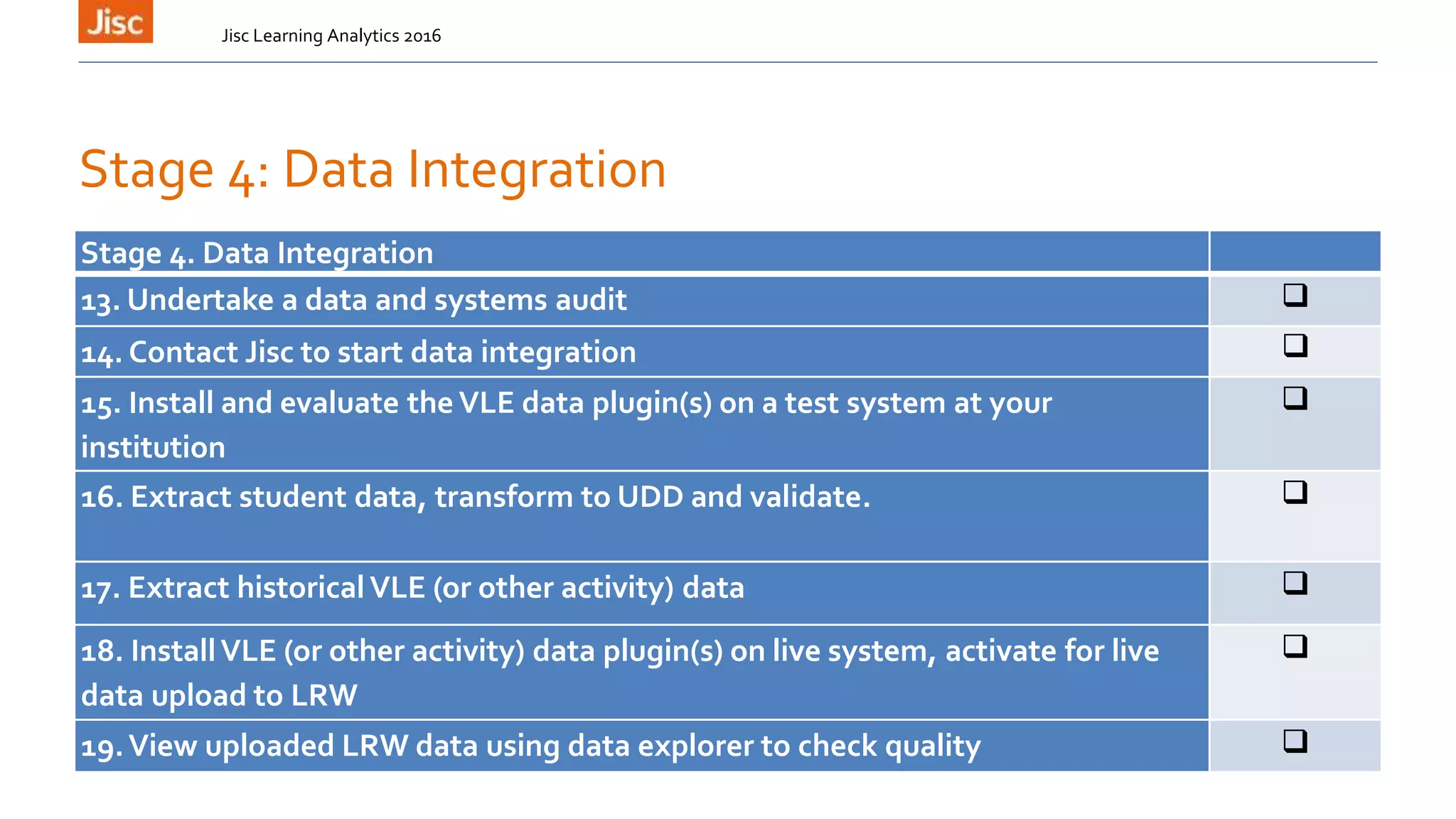

The document outlines the agenda for the 8th UK Learning Analytics Network Meeting at the Open University on November 2nd, 2016. The agenda includes updates on Jisc's learning analytics program, sessions on learning design and analytics, legal issues, and the Learning Analytics Community Exchange.